Charalampos Kalalas

Self-Supervised Learning at the Edge: The Cost of Labeling

Jul 09, 2025

Abstract:Contrastive learning (CL) has recently emerged as an alternative to traditional supervised machine learning solutions by enabling rich representations from unstructured and unlabeled data. However, CL and, more broadly, self-supervised learning (SSL) methods often demand a large amount of data and computational resources, posing challenges for deployment on resource-constrained edge devices. In this work, we explore the feasibility and efficiency of SSL techniques for edge-based learning, focusing on trade-offs between model performance and energy efficiency. In particular, we analyze how different SSL techniques adapt to limited computational, data, and energy budgets, evaluating their effectiveness in learning robust representations under resource-constrained settings. We also consider the energy costs involved in labeling data and assess how semi-supervised learning may help reduce the overall energy consumed to train CL models. Through extensive experiments, we demonstrate that tailored SSL strategies can achieve competitive performance while reducing resource consumption by up to 4X, underscoring their potential for energy-efficient learning at the edge.

Energy-Efficient Federated Learning for AIoT using Clustering Methods

May 14, 2025

Abstract:While substantial research has been devoted to optimizing model performance, convergence rates, and communication efficiency, the energy implications of federated learning (FL) within Artificial Intelligence of Things (AIoT) scenarios are often overlooked in the existing literature. This study examines the energy consumed during the FL process, focusing on three main energy-intensive processes: pre-processing, communication, and local learning, all contributing to the overall energy footprint. We rely on the observation that device/client selection is crucial for speeding up the convergence of model training in a distributed AIoT setting and propose two clustering-informed methods. These clustering solutions are designed to group AIoT devices with similar label distributions, resulting in clusters composed of nearly homogeneous devices. Hence, our methods alleviate the heterogeneity often encountered in real-world distributed learning applications. Through extensive numerical experimentation, we demonstrate that our clustering strategies typically achieve high convergence rates while maintaining low energy consumption when compared to other recent approaches available in the literature.
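The grouping idea in the abstract above can be illustrated with a minimal sketch. It assumes each device is summarized by its normalized label histogram and uses plain k-means as a stand-in; the abstract does not specify the two clustering methods, so this is not the authors' algorithm. The device names, helper functions, and toy labels are hypothetical.

```python
from collections import Counter


def label_distribution(labels, num_classes):
    """Normalized label histogram of one device (labels must be non-empty)."""
    counts = Counter(labels)
    return [counts.get(c, 0) / len(labels) for c in range(num_classes)]


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, iters=20):
    """Plain k-means with deterministic farthest-point initialization."""
    centroids = [points[0]]
    while len(centroids) < k:
        # Pick the point farthest from its nearest existing centroid.
        centroids.append(
            max(points, key=lambda p: min(sq_dist(p, c) for c in centroids))
        )
    assignment = [0] * len(points)
    for _ in range(iters):
        assignment = [
            min(range(k), key=lambda j: sq_dist(p, centroids[j])) for p in points
        ]
        for j in range(k):
            members = [p for p, a in zip(points, assignment) if a == j]
            if members:  # keep the old centroid if a cluster is empty
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
    return assignment


# Hypothetical toy data: class labels held locally by four devices.
device_labels = {
    "dev_a": [0, 0, 0, 1],
    "dev_b": [0, 0, 1, 0, 0],
    "dev_c": [2, 2, 2, 1],
    "dev_d": [2, 2, 2, 2, 1],
}
names = sorted(device_labels)
dists = [label_distribution(device_labels[n], num_classes=3) for n in names]
clusters = kmeans(dists, k=2)
```

Here `dev_a` and `dev_b` (mostly class 0) end up in one cluster and `dev_c` and `dev_d` (mostly class 2) in the other, so each cluster contains devices with similar label distributions.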

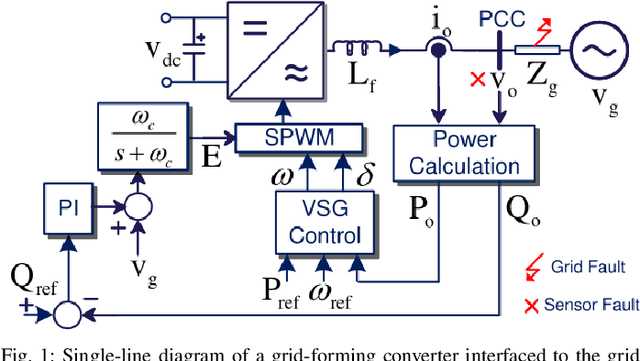

Optimizing a Digital Twin for Fault Diagnosis in Grid Connected Inverters -- A Bayesian Approach

Dec 07, 2022

Abstract:In this paper, a hyperparameter-tuning-based Bayesian optimization of digital twins is carried out to diagnose various faults in grid-connected inverters. As fault detection and diagnosis require very high precision, we channel our efforts towards an online optimization of the digital twins, which, in turn, allows a flexible implementation with a limited amount of data. As a result, the proposed framework not only becomes a practical solution for model versioning and deployment of digital twin designs with limited data, but also allows integration of deep learning tools to improve the hyperparameter tuning capabilities. For classification performance assessment, we consider different fault cases in virtual synchronous generator (VSG) controlled grid-forming converters and demonstrate the efficacy of our approach. Our research outcomes reveal the increased accuracy and fidelity levels achieved by our digital twin design, overcoming the shortcomings of traditional hyperparameter tuning methods.

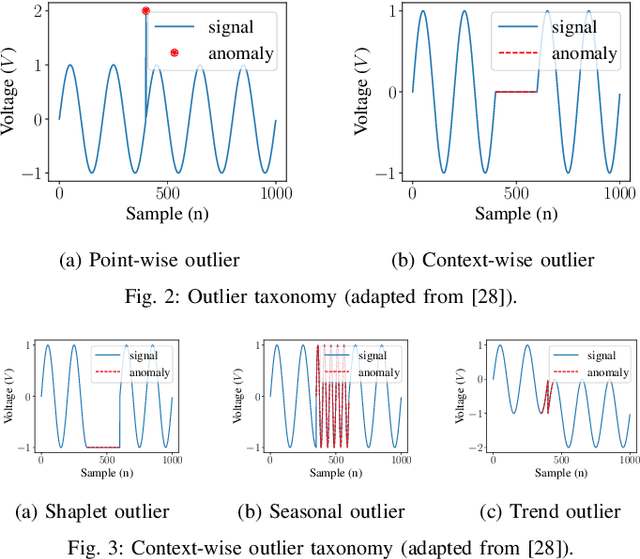

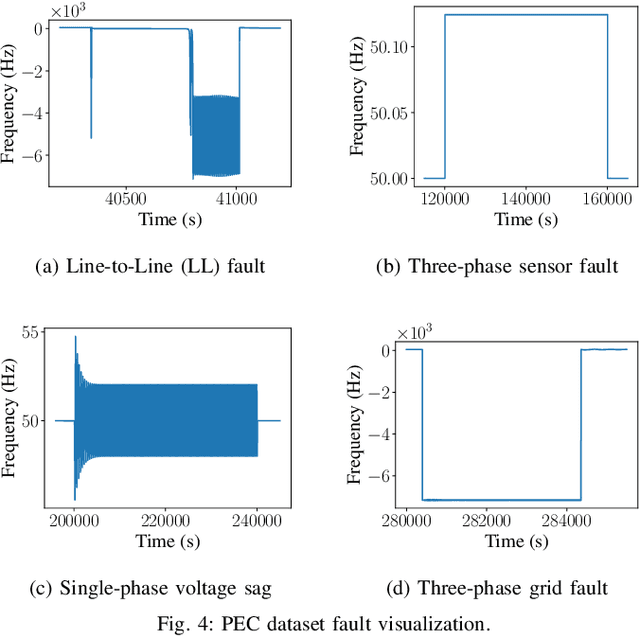

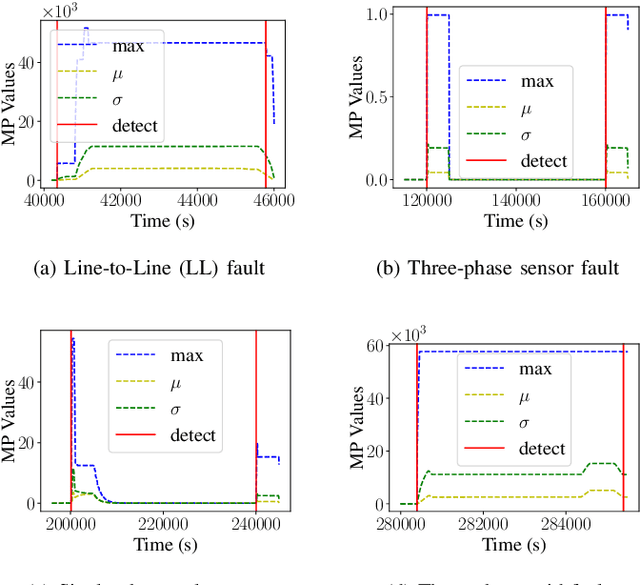

A Robust and Explainable Data-Driven Anomaly Detection Approach For Power Electronics

Sep 23, 2022

Abstract:Timely and accurate detection of anomalies in power electronics is becoming increasingly critical for maintaining complex production systems. Robust and explainable strategies help decrease system downtime and preempt or mitigate infrastructure cyberattacks. This work begins by explaining the types of uncertainty present in current datasets and machine learning algorithm outputs. Three techniques for combating these uncertainties are then introduced and analyzed. We further present two anomaly detection and classification approaches, namely the Matrix Profile algorithm and anomaly transformer, which are applied in the context of a power electronic converter dataset. Specifically, the Matrix Profile algorithm is shown to be well suited as a generalizable approach for detecting real-time anomalies in streaming time-series data. The STUMPY Python library implementation of the iterative Matrix Profile is used for the creation of the detector. A series of custom filters is created and added to the detector to tune its sensitivity, recall, and detection accuracy. Our numerical results show that, with simple parameter tuning, the detector provides high accuracy and performance in a variety of fault scenarios.
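To make the Matrix Profile idea concrete, the following is a minimal sketch of how a matrix profile flags an anomaly (a "discord"). It is a naive, batch, pure-Python computation written for illustration only; it is neither the STUMPY implementation nor the authors' detector, and it omits their custom filters. The function names, the exclusion-zone width, and the toy signal are assumptions.

```python
import math


def znorm(seq):
    """Z-normalize a subsequence; constant subsequences map to all zeros."""
    mean = sum(seq) / len(seq)
    std = math.sqrt(sum((x - mean) ** 2 for x in seq) / len(seq))
    if std == 0:
        return [0.0] * len(seq)
    return [(x - mean) / std for x in seq]


def matrix_profile(series, m):
    """Naive O(n^2 * m) matrix profile.

    Entry i is the z-normalized Euclidean distance from the subsequence
    starting at i to its nearest non-trivial neighbour.
    """
    n = len(series) - m + 1
    subs = [znorm(series[i:i + m]) for i in range(n)]
    excl = m // 2  # assumed exclusion zone to skip trivial self-matches
    profile = []
    for i in range(n):
        best = math.inf
        for j in range(n):
            if abs(i - j) <= excl:
                continue
            d = math.sqrt(sum((a - b) ** 2 for a, b in zip(subs[i], subs[j])))
            best = min(best, d)
        profile.append(best)
    return profile


# Hypothetical toy signal: a period-4 pattern with one injected spike.
series = [0, 1, 0, -1] * 8
series[17] = 5  # the anomaly
m = 4
profile = matrix_profile(series, m)

# The discord is the subsequence whose nearest neighbour is farthest away.
discord = max(range(len(profile)), key=lambda i: profile[i])
```

Subsequences that repeat elsewhere in the signal get a profile value near zero, while the windows overlapping the spike have no close match, so `discord` falls inside the windows that cover index 17. A streaming detector would update the profile incrementally as new samples arrive instead of recomputing it from scratch.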

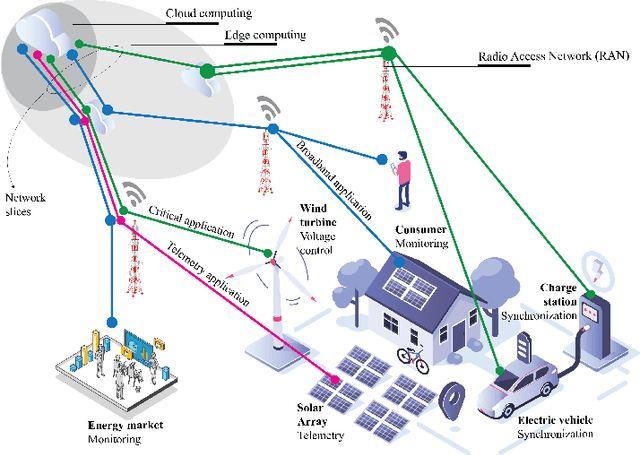

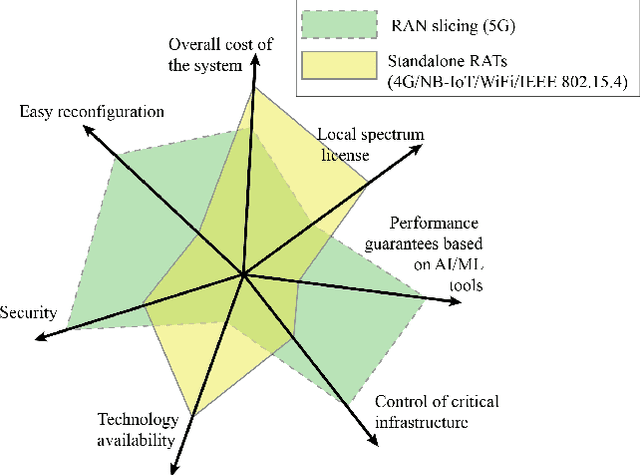

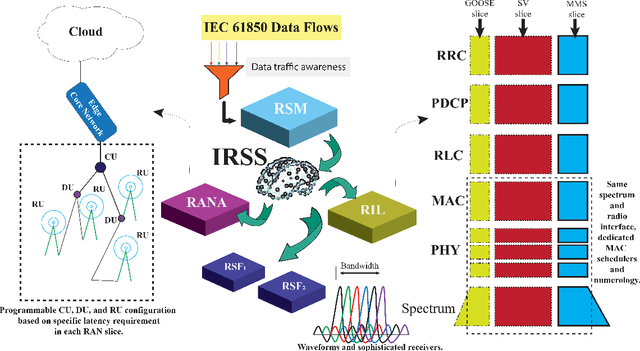

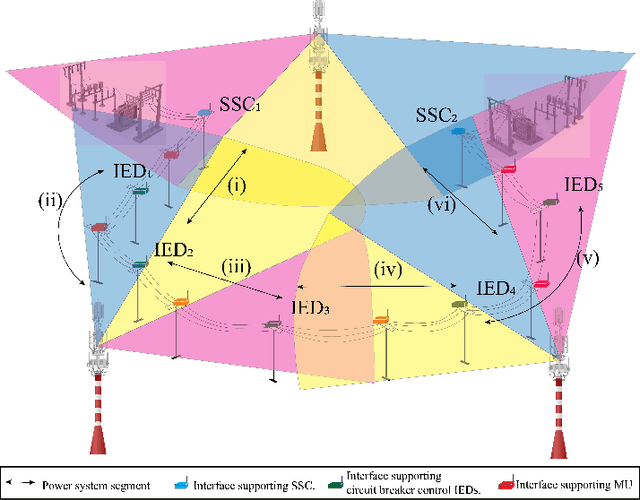

Boosting 5G on Smart Grid Communication: A Smart RAN Slicing Approach

Aug 30, 2022

Abstract:Fifth-generation (5G) and beyond systems are expected to accelerate the ongoing transformation of power systems towards the smart grid. However, the inherent heterogeneity in smart grid services and requirements poses significant challenges towards the definition of a unified network architecture. In this context, radio access network (RAN) slicing emerges as a key 5G enabler to ensure interoperable connectivity and service management in the smart grid. This article introduces a novel RAN slicing framework which leverages the potential of artificial intelligence (AI) to support IEC 61850 smart grid services. With the aid of deep reinforcement learning, efficient radio resource management for RAN slices is attained, while conforming to the stringent performance requirements of a smart grid self-healing use case. Our research outcomes advocate the adoption of emerging AI-native approaches for RAN slicing in beyond-5G systems, and lay the foundations for differentiated service provisioning in the smart grid.
