Changjae Lee

Large Language Models have Intrinsic Self-Correction Ability

Jun 21, 2024

Abstract: Large language models (LLMs) have attracted significant attention for their remarkable abilities in various natural language processing tasks, but they suffer from hallucinations that degrade performance. One promising way to improve LLM performance is to ask LLMs to revise their answers after generation, a technique known as self-correction. Of the two types of self-correction, intrinsic self-correction is considered a promising direction because it does not rely on external knowledge. However, recent works cast doubt on LLMs' ability to conduct intrinsic self-correction. In this paper, we present a novel perspective on the intrinsic self-correction capabilities of LLMs through theoretical analyses and empirical experiments. In addition, we identify two critical factors for successful self-correction: zero temperature and fair prompts. Leveraging these factors, we demonstrate that intrinsic self-correction ability is exhibited across multiple existing LLMs. Our findings offer insights into the fundamental theories underlying the self-correction behavior of LLMs and underscore the importance of unbiased prompts and zero-temperature settings in harnessing their full potential.

From Keyboard to Chatbot: An AI-powered Integration Platform with Large-Language Models for Teaching Computational Thinking for Young Children

May 01, 2024

Abstract: Teaching programming in early childhood (ages 4-9) to enhance computational thinking has gained popularity in the recent movement of computer science for all. However, current practices ignore some fundamental issues resulting from young children's developmental readiness, such as the sustained capability for keyboarding, the decomposition of complex tasks into small tasks, the need for intuitive mapping from abstract programming to tangible outcomes, and the limited amount of screen time exposure. To address these issues, we present a novel methodology with an AI-powered integration platform to effectively teach computational thinking to young children. The system features a hybrid pedagogy that supports both the top-down and bottom-up approaches to teaching computational thinking. Young children can describe their desired task in natural language, and the system responds with an easy-to-understand program consisting of sub-tasks decomposed to the right level. A tangible robot can immediately execute the decomposed program and demonstrate the program's outcomes to young children. The system is equipped with an intelligent chatbot that interacts with young children in natural language; children can speak to the chatbot to complete all the needed programming tasks, while the chatbot orchestrates the execution of the program on the robot. This completely eliminates the need for keyboards when young children program. By developing such a system, we aim to make the concept of computational thinking more accessible to young children, fostering a natural understanding of programming concepts without the need for explicit programming skills. Through the interactive experience provided by the robotic agent, our system seeks to engage children effectively, contributing to the field of educational technology for early childhood computer science education.

Human-Robot Interface to Operate Robotic Systems via Muscle Synergy-Based Kinodynamic Information Transfer

May 11, 2022

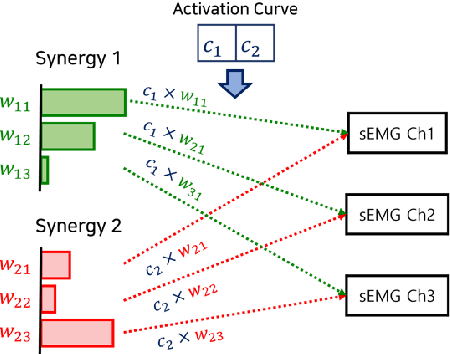

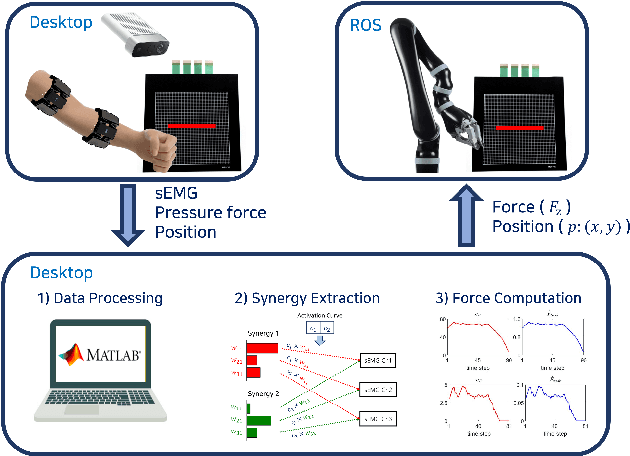

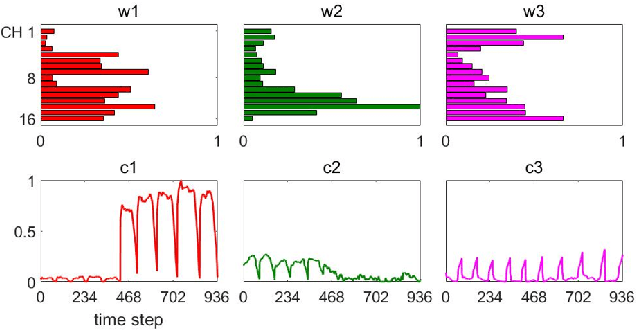

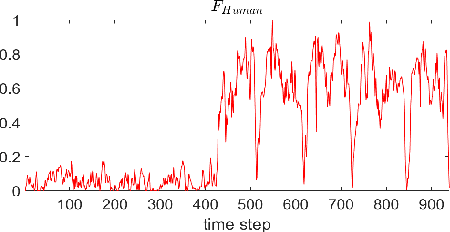

Abstract: When a human performs a specific task, the central nervous system is known to control modularized muscle groups, which are called muscle synergies. For the human-robot interface design problem, therefore, muscle synergies can be utilized to reduce the dimensionality of the control signal as well as the complexity of classifying human posture and motion. In this paper, we propose an approach to designing a human-robot interface that enables a human operator to transfer a kinodynamic control command to robotic systems. A key feature of the proposed approach is that the muscle synergy and the corresponding activation curve are employed to calculate the force generated by a tool at the robot end effector. To verify the proposed approach, the experimental test bed consisted of two armband-type surface electromyography sensors, an RGB-D camera, and a Kinova Gen2 robotic manipulator. The results showed that both force and position commands could be successfully transferred to the robotic manipulator via our muscle synergy-based kinodynamic interface.
