Casey A. Cole

Recognition of Smoking Gesture Using Smart Watch Technology

Mar 05, 2020

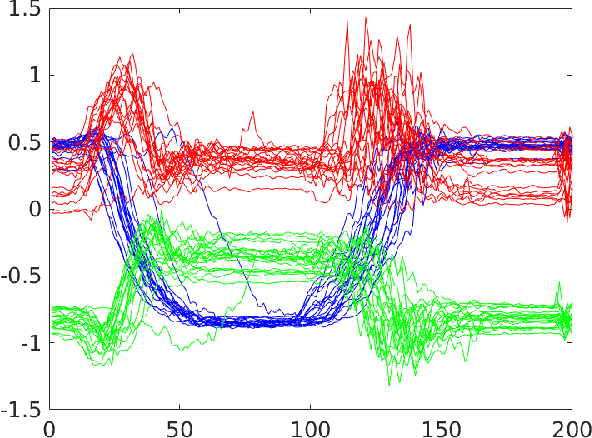

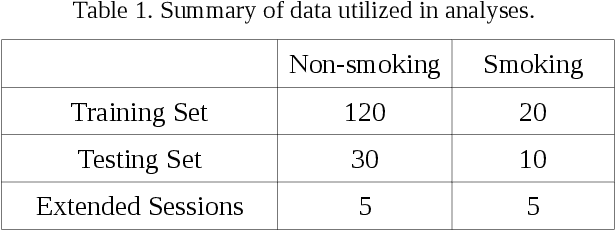

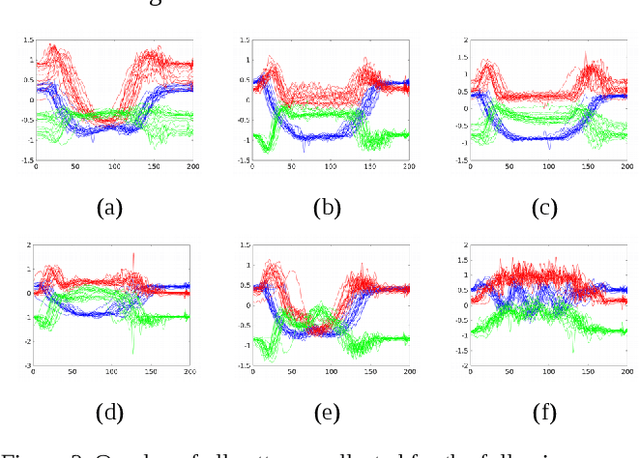

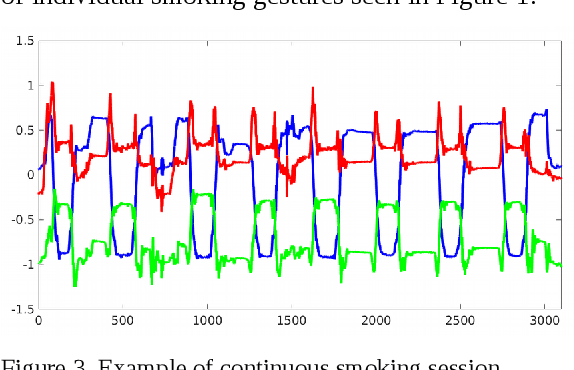

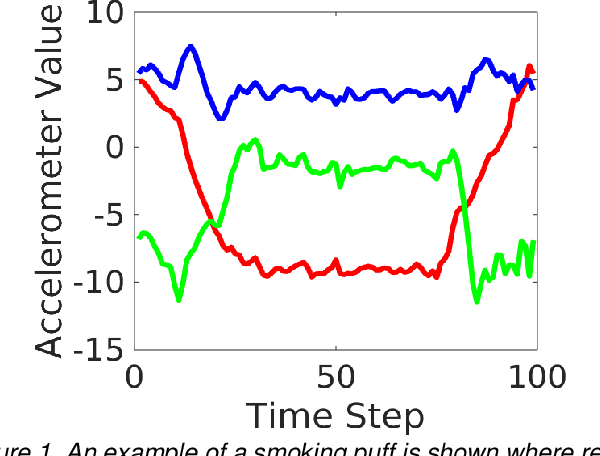

Abstract: Diseases resulting from prolonged smoking are the most common preventable cause of death in the world today. In this report, we investigate how well the accelerometer sensors in smart watches can identify smoking gestures. Early identification of smoking gestures can help initiate the appropriate intervention and prevent relapse. Using Artificial Neural Networks (ANNs), our experiments achieve success rates of 85% to 95% in identifying the smoking gesture among other, similar gestures. We found that the accelerometer's x-axis is the best means of identifying the smoking gesture, while the y- and z-axes help eliminate other gestures such as eating, drinking, and nose scratching. We trained the ANN on sensor data from the Apple Watch. When this ANN was applied to data from another participant collected on a Pebble Steel, it identified smoking with greater than 90% accuracy. Finally, we demonstrate the possibility of using smart watches to continuously monitor daily activities.
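To make the classifier's input more concrete, the sketch below splits a stream of (x, y, z) accelerometer readings into fixed-length windows and computes simple per-axis statistics that could be fed to an ANN. It is a minimal illustration under stated assumptions, not the feature pipeline used in the report: the window size, step, and choice of features (mean, standard deviation, minimum, maximum) are hypothetical, as are the helper names `sliding_windows`, `axis_features`, and `window_features`.

```python
import math
from typing import List, Sequence, Tuple

# One accelerometer reading as (x, y, z); the units are whatever the watch reports.
Sample = Tuple[float, float, float]


def sliding_windows(samples: Sequence[Sample], size: int, step: int) -> List[Sequence[Sample]]:
    """Split a stream into fixed-length, possibly overlapping windows (hypothetical helper)."""
    if size <= 0 or step <= 0:
        raise ValueError("size and step must be positive")
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, step)]


def axis_features(values: Sequence[float]) -> List[float]:
    """Mean, population standard deviation, minimum and maximum of one axis."""
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    return [mean, std, min(values), max(values)]


def window_features(window: Sequence[Sample]) -> List[float]:
    """Concatenate per-axis features in x, y, z order (12 values per window)."""
    features: List[float] = []
    for axis in range(3):
        features.extend(axis_features([s[axis] for s in window]))
    return features


if __name__ == "__main__":
    # Synthetic stream: x rises steadily, y stays at 0, z stays at 1 (illustrative data only).
    stream = [(0.1 * i, 0.0, 1.0) for i in range(10)]
    windows = sliding_windows(stream, size=4, step=2)
    print(len(windows))                      # 4
    print(len(window_features(windows[0])))  # 12
```

Keeping the axes separate, rather than collapsing them into a single magnitude, lets a downstream classifier weight the x-axis differently from the y- and z-axes, which fits the abstract's observation that the axes play different roles.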

State Transition Modeling of the Smoking Behavior using LSTM Recurrent Neural Networks

Jan 07, 2020

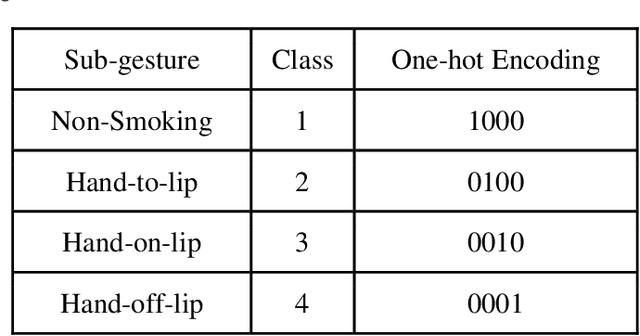

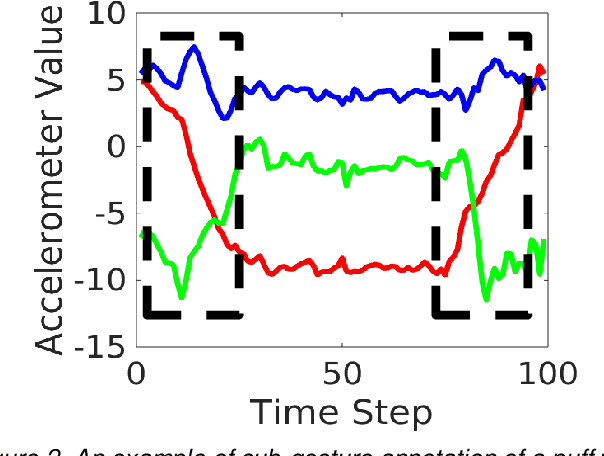

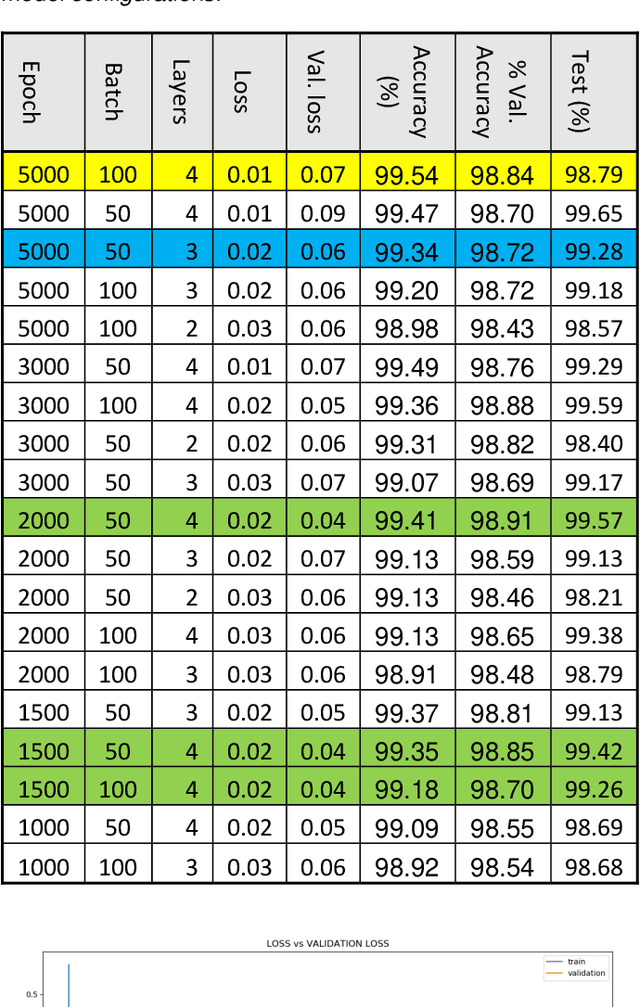

Abstract: Sensors have pervaded everyday life through applications including human activity monitoring, healthcare, and social networks. In this study, we focus on using smartwatch sensors to recognize smoking activity. More specifically, we reformulate previous work on smoking detection to include in-context recognition of smoking. Reformulating the smoking gesture as a state-transition model consisting of the mini-gestures hand-to-lip, hand-on-lip, and hand-off-lip improves detection rates to nearly 100% using conventional neural networks. In addition, we have begun using Long Short-Term Memory (LSTM) neural networks to enable in-context detection of gestures, with accuracy nearing 97%.
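The state-transition idea can be illustrated with a small finite-state recognizer that consumes one mini-gesture label per time window and reports where a complete hand-to-lip, hand-on-lip, hand-off-lip sequence ends. This is a hand-written sketch, not the neural or LSTM model described above: it assumes some upstream classifier already emits the three mini-gesture labels (plus any other label for unrelated motion), and the state names, transition table, and `detect_gestures` function are hypothetical.

```python
from typing import Dict, Iterable, List, Tuple

# Mini-gesture labels named in the abstract; an upstream classifier is assumed to emit them.
HAND_TO_LIP = "hand-to-lip"
HAND_ON_LIP = "hand-on-lip"
HAND_OFF_LIP = "hand-off-lip"

# Hypothetical states: how far into one smoking gesture the hand has progressed.
IDLE, RAISING, AT_LIP = "idle", "raising", "at-lip"

# (current state, observed label) -> next state. Repeated labels keep the state,
# since a mini-gesture usually spans several consecutive windows.
TRANSITIONS: Dict[Tuple[str, str], str] = {
    (IDLE, HAND_TO_LIP): RAISING,
    (RAISING, HAND_TO_LIP): RAISING,
    (RAISING, HAND_ON_LIP): AT_LIP,
    (AT_LIP, HAND_ON_LIP): AT_LIP,
}


def detect_gestures(labels: Iterable[str]) -> List[int]:
    """Return window indices where a full hand-to-lip -> hand-on-lip -> hand-off-lip sequence completes."""
    state = IDLE
    completed: List[int] = []
    for i, label in enumerate(labels):
        if state == AT_LIP and label == HAND_OFF_LIP:
            # Final transition: one smoking gesture recognized; start over.
            completed.append(i)
            state = IDLE
            continue
        next_state = TRANSITIONS.get((state, label))
        if next_state is None:
            # Out-of-order label: restart, letting this label begin a new gesture if it can.
            next_state = TRANSITIONS.get((IDLE, label), IDLE)
        state = next_state
    return completed


if __name__ == "__main__":
    labels = ["other", HAND_TO_LIP, HAND_TO_LIP, HAND_ON_LIP, HAND_ON_LIP,
              HAND_OFF_LIP, "other", HAND_TO_LIP, HAND_OFF_LIP]
    print(detect_gestures(labels))  # [5]
```

In this sketch the ordering constraint is written by hand, so a hand-to-lip followed directly by hand-off-lip (as at the end of the example) is rejected. An LSTM, by contrast, would have to learn such ordering from labeled sequences rather than from an explicit transition table.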
