Carlos Hernández

Benchmarking MOEAs for solving continuous multi-objective RL problems

May 19, 2025

Abstract:Multi-objective reinforcement learning (MORL) addresses the challenge of simultaneously optimizing multiple, often conflicting, rewards, moving beyond the single-reward focus of conventional reinforcement learning (RL). This approach is essential for applications where agents must balance trade-offs between diverse goals, such as speed, energy efficiency, or stability, as a series of sequential decisions. This paper investigates the applicability and limitations of multi-objective evolutionary algorithms (MOEAs) in solving complex MORL problems. We assess whether these algorithms can effectively address the unique challenges posed by MORL and how MORL instances can serve as benchmarks to evaluate and improve MOEA performance. In particular, we propose a framework to characterize the features influencing MORL instance complexity, select representative MORL problems from the literature, and benchmark a suite of MOEAs alongside single-objective EAs using scalarized MORL formulations. Additionally, we evaluate the utility of existing multi-objective quality indicators in MORL scenarios, such as hypervolume conducting a comparison of the algorithms supported by statistical analysis. Our findings provide insights into the interplay between MORL problem characteristics and algorithmic effectiveness, highlighting opportunities for advancing both MORL research and the design of evolutionary algorithms.

Avoiding and Escaping Depressions in Real-Time Heuristic Search

Jan 23, 2014

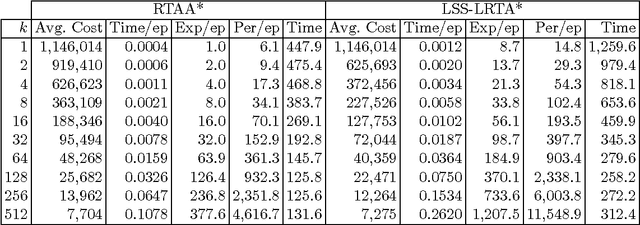

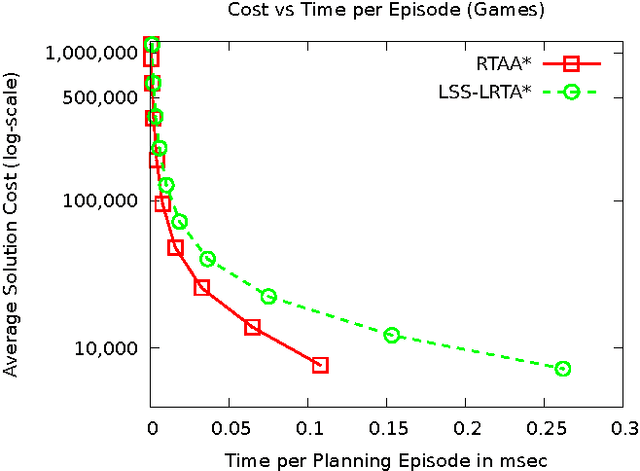

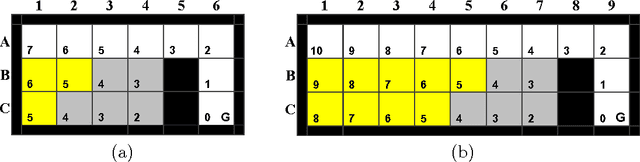

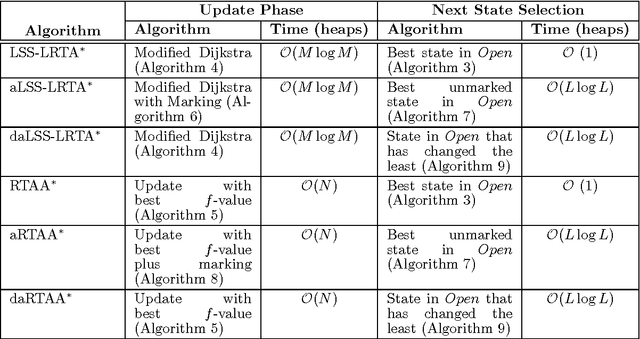

Abstract:Heuristics used for solving hard real-time search problems have regions with depressions. Such regions are bounded areas of the search space in which the heuristic function is inaccurate compared to the actual cost to reach a solution. Early real-time search algorithms, like LRTA*, easily become trapped in those regions since the heuristic values of their states may need to be updated multiple times, which results in costly solutions. State-of-the-art real-time search algorithms, like LSS-LRTA* or LRTA*(k), improve LRTA*s mechanism to update the heuristic, resulting in improved performance. Those algorithms, however, do not guide search towards avoiding depressed regions. This paper presents depression avoidance, a simple real-time search principle to guide search towards avoiding states that have been marked as part of a heuristic depression. We propose two ways in which depression avoidance can be implemented: mark-and-avoid and move-to-border. We implement these strategies on top of LSS-LRTA* and RTAA*, producing 4 new real-time heuristic search algorithms: aLSS-LRTA*, daLSS-LRTA*, aRTAA*, and daRTAA*. When the objective is to find a single solution by running the real-time search algorithm once, we show that daLSS-LRTA* and daRTAA* outperform their predecessors sometimes by one order of magnitude. Of the four new algorithms, daRTAA* produces the best solutions given a fixed deadline on the average time allowed per planning episode. We prove all our algorithms have good theoretical properties: in finite search spaces, they find a solution if one exists, and converge to an optimal after a number of trials.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge