C.-B. Schönlieb

Bang and the Artefacts are Gone! Rapid Artefact Removal and Tissue Segmentation in Haematoxylin and Eosin Stained Biopsies

Aug 25, 2023

Abstract: We present H&E Otsu thresholding, a scheme for rapidly detecting tissue in whole-slide images (WSIs) that eliminates a wide range of undesirable artefacts such as pen marks and scanning artefacts. Our method involves obtaining a bimodal representation of a low-magnification RGB overview image, which enables simple Otsu thresholding to separate tissue from background and artefacts. We demonstrate our method on WSIs prepared at a wide range of institutions and digitised with a variety of WSI scanners, each containing substantial artefacts that cause other methods to fail. The beauty of our approach lies in its simplicity: manipulating RGB colour space and using Otsu thresholding allows for the rapid removal of artefacts and segmentation of tissue.
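
To make the thresholding step concrete, here is a minimal NumPy sketch of Otsu's method applied to a single-channel view of an RGB overview image. The abstract does not say how the bimodal representation is built, so the `tissue_mask` helper and its inverted-mean-intensity channel are assumptions chosen only for illustration; that stand-in is not the authors' colour-space manipulation and would not by itself suppress pen marks.

```python
import numpy as np


def otsu_threshold(values, bins=256):
    """Return the cut that maximises the between-class variance (Otsu's method)."""
    hist, edges = np.histogram(np.ravel(values), bins=bins)
    centers = (edges[:-1] + edges[1:]) / 2.0
    w0 = np.cumsum(hist)                      # pixel count in bins 0..k
    w1 = w0[-1] - w0                          # pixel count in bins k+1..end
    s0 = np.cumsum(hist * centers)            # intensity mass in bins 0..k
    m0 = s0 / np.maximum(w0, 1)               # mean of the lower class
    m1 = (s0[-1] - s0) / np.maximum(w1, 1)    # mean of the upper class
    between = w0 * w1 * (m0 - m1) ** 2        # proportional to between-class variance
    # The upper edge of the best bin separates the two classes.
    return edges[1:][np.argmax(between)]


def tissue_mask(rgb):
    """Hypothetical helper: threshold a stand-in single-channel representation.

    ASSUMPTION: inverted mean intensity replaces the paper's (unspecified)
    bimodal representation, so bright background maps to values near 0.
    """
    channel = 1.0 - rgb.astype(np.float64).mean(axis=-1) / 255.0
    return channel > otsu_threshold(channel)
```

Called on an `(H, W, 3)` `uint8` array, `tissue_mask` returns a boolean array of shape `(H, W)` that is `True` where the stand-in channel falls above the Otsu cut.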

Bilevel parameter learning for higher-order total variation regularisation models

Aug 28, 2015

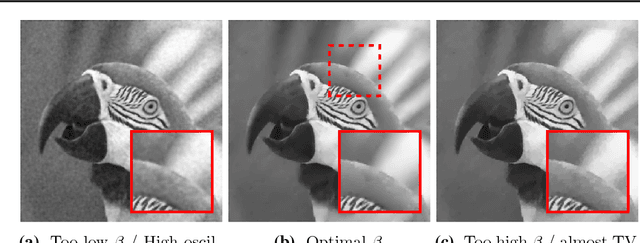

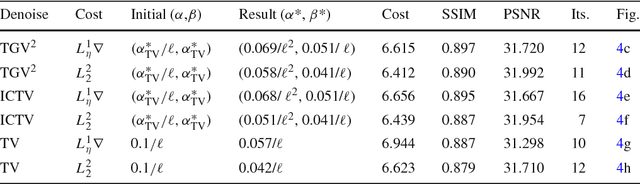

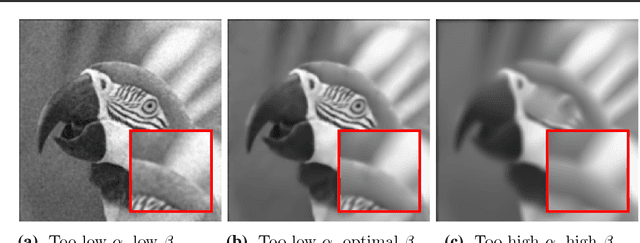

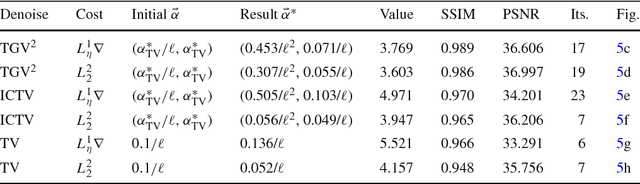

Abstract: We consider a bilevel optimisation approach for parameter learning in higher-order total variation image reconstruction models. Apart from the least squares cost functional, naturally used in bilevel learning, we propose and analyse an alternative cost, based on a Huber regularised TV-seminorm. Differentiability properties of the solution operator are verified and a first-order optimality system is derived. Based on the adjoint information, a quasi-Newton algorithm is proposed for the numerical solution of the bilevel problems. Numerical experiments are carried out to show the suitability of our approach and the improved performance of the new cost functional. Thanks to the bilevel optimisation framework, a detailed comparison between TGV$^2$ and ICTV is also carried out, showing the advantages and shortcomings of both regularisers, depending on the structure of the processed images and their noise level.
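
As a rough illustration of what a Huber regularised TV-seminorm looks like in discrete form, the sketch below smooths the gradient magnitude with the standard Huber function (quadratic below `1/gamma`, linear above). The forward-difference discretisation, the default `gamma`, and the function names are assumptions made for this example; the abstract does not state how the seminorm enters the cost functional.

```python
import numpy as np


def huber(norms, gamma):
    """Huber smoothing of |z|: quadratic for |z| < 1/gamma, linear otherwise."""
    return np.where(norms >= 1.0 / gamma,
                    norms - 0.5 / gamma,
                    0.5 * gamma * norms ** 2)


def huber_tv(u, gamma=100.0):
    """Discrete Huber-regularised TV of a 2-D image.

    ASSUMPTION: forward differences with a replicated last row and column.
    """
    dx = np.diff(u, axis=1, append=u[:, -1:])   # horizontal differences
    dy = np.diff(u, axis=0, append=u[-1:, :])   # vertical differences
    return float(huber(np.hypot(dx, dy), gamma).sum())
```

The two branches of `huber` agree at `1/gamma`, which is what makes the smoothed seminorm differentiable where the plain TV-seminorm is not.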
