Bulat Maksudov

Towards generating more interpretable counterfactuals via concept vectors: a preliminary study on chest X-rays

Jun 04, 2025Abstract:An essential step in deploying medical imaging models is ensuring alignment with clinical knowledge and interpretability. We focus on mapping clinical concepts into the latent space of generative models to identify Concept Activation Vectors (CAVs). Using a simple reconstruction autoencoder, we link user-defined concepts to image-level features without explicit label training. The extracted concepts are stable across datasets, enabling visual explanations that highlight clinically relevant features. By traversing latent space along concept directions, we produce counterfactuals that exaggerate or reduce specific clinical features. Preliminary results on chest X-rays show promise for large pathologies like cardiomegaly, while smaller pathologies remain challenging due to reconstruction limits. Although not outperforming baselines, this approach offers a path toward interpretable, concept-based explanations aligned with clinical knowledge.

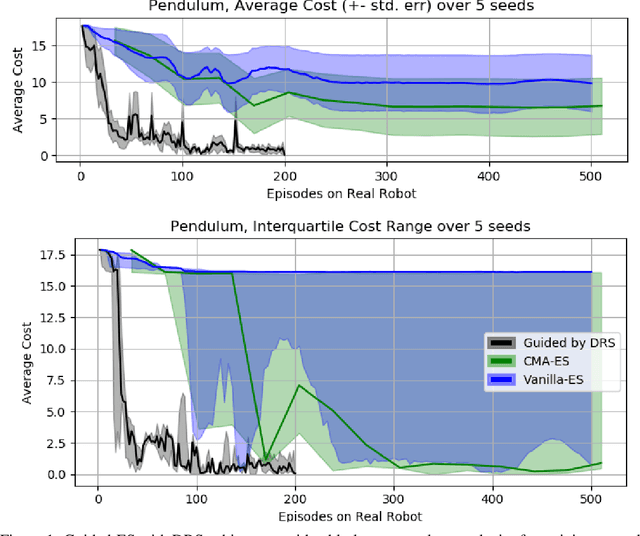

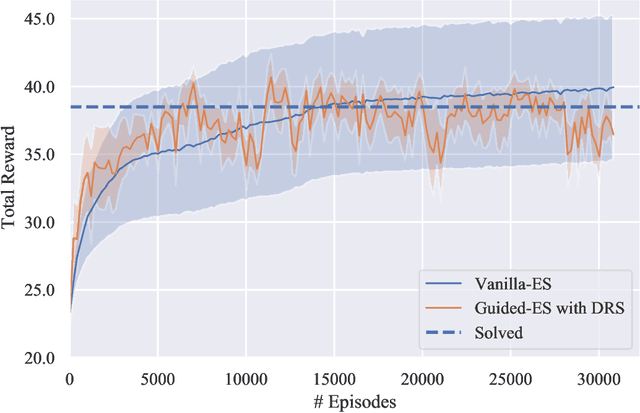

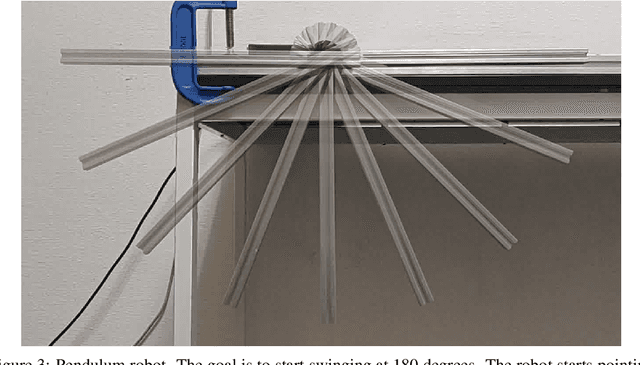

Guiding Evolutionary Strategies by Differentiable Robot Simulators

Oct 05, 2021

Abstract:In recent years, Evolutionary Strategies were actively explored in robotic tasks for policy search as they provide a simpler alternative to reinforcement learning algorithms. However, this class of algorithms is often claimed to be extremely sample-inefficient. On the other hand, there is a growing interest in Differentiable Robot Simulators (DRS) as they potentially can find successful policies with only a handful of trajectories. But the resulting gradient is not always useful for the first-order optimization. In this work, we demonstrate how DRS gradient can be used in conjunction with Evolutionary Strategies. Preliminary results suggest that this combination can reduce sample complexity of Evolutionary Strategies by 3x-5x times in both simulation and the real world.

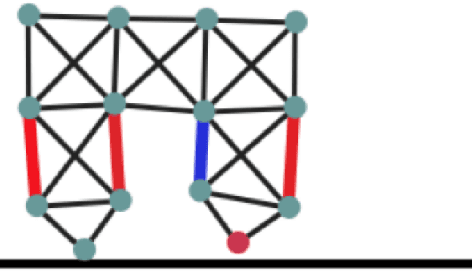

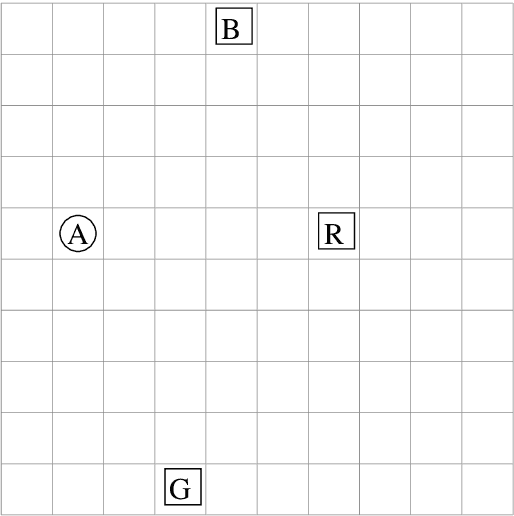

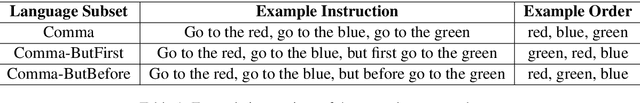

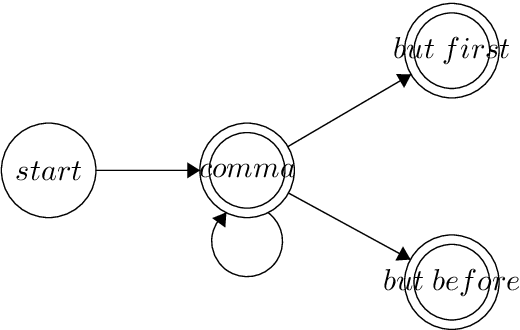

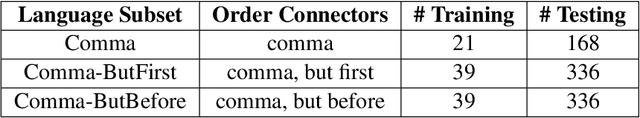

Task-Oriented Language Grounding for Language Input with Multiple Sub-Goals of Non-Linear Order

Oct 27, 2019

Abstract:In this work, we analyze the performance of general deep reinforcement learning algorithms for a task-oriented language grounding problem, where language input contains multiple sub-goals and their order of execution is non-linear. We generate a simple instructional language for the GridWorld environment, that is built around three language elements (order connectors) defining the order of execution: one linear - "comma" and two non-linear - "but first", "but before". We apply one of the deep reinforcement learning baselines - Double DQN with frame stacking and ablate several extensions such as Prioritized Experience Replay and Gated-Attention architecture. Our results show that the introduction of non-linear order connectors improves the success rate on instructions with a higher number of sub-goals in 2-3 times, but it still does not exceed 20%. Also, we observe that the usage of Gated-Attention provides no competitive advantage against concatenation in this setting. Source code and experiments' results are available at https://github.com/vkurenkov/language-grounding-multigoal

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge