Bob Coecke

Quantinuum

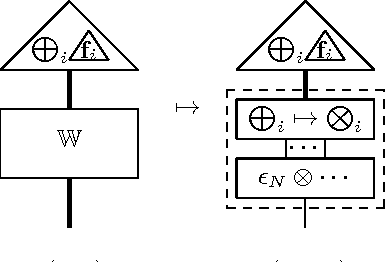

The Mathematics of Text Structure

Apr 06, 2019

Abstract:In previous work we gave a mathematical foundation, referred to as DisCoCat, for how words interact in a sentence in order to produce the meaning of that sentence. To do so, we exploited the perfect structural match of grammar and categories of meaning spaces. Here, we give a mathematical foundation, referred to as DisCoCirc, for how sentences interact in texts in order to produce the meaning of that text. We revisit DisCoCat: while in the latter all meanings are states (i.e. have no input), in DisCoCirc word meanings are types of which the state can evolve, and sentences are gates within a circuit which update the meaning of words. Like in DisCoCat, word meanings can live in a variety of spaces e.g. propositional, vectorial, or cognitive. The compositional structures are string diagrams representing information flows, and an entire text yields a single string diagram in which word meanings lift to the meaning of an entire text. While the developments in this paper are independent of a physical embodiment (cf. classical vs. quantum computing), both the compositional formalism and suggested meaning model are highly quantum-inspired, and implementation on a quantum computer would come with a range of benefits. We also praise Jim Lambek for his role in mathematical linguistics in general, and the development of the DisCo program more specifically.

Towards Compositional Distributional Discourse Analysis

Nov 08, 2018

Abstract:Categorical compositional distributional semantics provide a method to derive the meaning of a sentence from the meaning of its individual words: the grammatical reduction of a sentence automatically induces a linear map for composing the word vectors obtained from distributional semantics. In this paper, we extend this passage from word-to-sentence to sentence-to-discourse composition. To achieve this we introduce a notion of basic anaphoric discourses as a mid-level representation between natural language discourse, formalised in terms of basic discourse representation structures (DRS), and knowledge base queries over the Semantic Web, as described by basic graph patterns in the Resource Description Framework (RDF). This provides a high-level specification for compositional algorithms for question answering and anaphora resolution, and allows us to give a picture of natural language understanding as a process involving both statistical and logical resources.

* In Proceedings CAPNS 2018, arXiv:1811.02701
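
As a rough illustration of the word-to-sentence composition this abstract refers to, the sketch below contracts a transitive-verb tensor with subject and object vectors. The dimensions, the random values, the example words, and the helper name `sentence_meaning` are assumptions made for illustration; none of them come from the paper.

```python
import numpy as np

def sentence_meaning(subj, verb, obj):
    """Contract subject and object vectors with a transitive-verb tensor.

    Assumed layout: verb has shape (noun_dim, sentence_dim, noun_dim).
    The grammatical reduction of "subject verb object" then becomes a
    tensor contraction over the two noun indices.
    """
    return np.einsum("i,isk,k->s", subj, verb, obj)

# Hypothetical toy data: 4-dimensional noun space, 2-dimensional sentence space.
rng = np.random.default_rng(0)
noun_dim, sentence_dim = 4, 2
alice = rng.random(noun_dim)
bob = rng.random(noun_dim)
loves = rng.random((noun_dim, sentence_dim, noun_dim))

meaning = sentence_meaning(alice, loves, bob)
print(meaning.shape)
```

The result is a vector in the sentence space, and the map is linear in each word argument, which is what lets the grammatical structure dictate how meanings combine.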

Internal Wiring of Cartesian Verbs and Prepositions

Nov 08, 2018

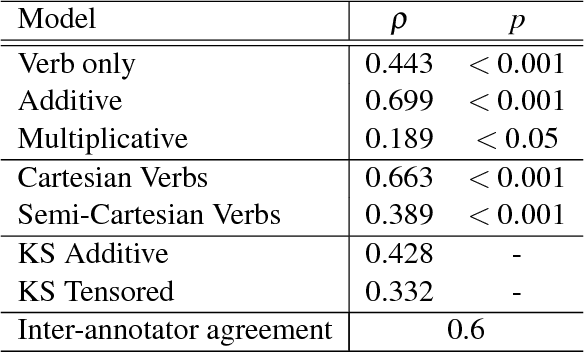

Abstract:Categorical compositional distributional semantics (CCDS) allows one to compute the meaning of phrases and sentences from the meaning of their constituent words. A type-structure carried over from the traditional categorial model of grammar à la Lambek becomes a 'wire-structure' that mediates the interaction of word meanings. However, CCDS has a much richer logical structure than plain categorical semantics in that certain words can also be given an 'internal wiring' that either provides their entire meaning or reduces the size of their meaning space. Previous examples of internal wiring include relative pronouns and intersective adjectives. Here we establish the same for a large class of well-behaved transitive verbs to which we refer as Cartesian verbs, and reduce the meaning space from a ternary tensor to a unary one. Some experimental evidence is also provided.

* In Proceedings CAPNS 2018, arXiv:1811.02701

Proceedings of the 2018 Workshop on Compositional Approaches in Physics, NLP, and Social Sciences

Nov 06, 2018

Abstract:The ability to compose parts to form a more complex whole, and to analyze a whole as a combination of elements, is desirable across disciplines. This workshop brings together researchers applying compositional approaches to physics, NLP, cognitive science, and game theory. Within NLP, a long-standing aim is to represent how words can combine to form phrases and sentences. Within the framework of distributional semantics, words are represented as vectors in vector spaces. The categorical model of Coecke et al. [2010], inspired by quantum protocols, has provided a convincing account of compositionality in vector space models of NLP. There is furthermore a history of vector space models in cognitive science. Theories of categorization such as those developed by Nosofsky [1986] and Smith et al. [1988] utilise notions of distance between feature vectors. More recently Gärdenfors [2004, 2014] has developed a model of concepts in which conceptual spaces provide geometric structures, and information is represented by points, vectors and regions in vector spaces. The same compositional approach has been applied to this formalism, giving conceptual spaces theory a richer model of compositionality than previously [Bolt et al., 2018]. Compositional approaches have also been applied in the study of strategic games and Nash equilibria. In contrast to classical game theory, where games are studied monolithically as one global object, compositional game theory works bottom-up by building large and complex games from smaller components. Such an approach is inherently difficult since the interaction between games has to be considered. Research into categorical compositional methods for this field has recently begun [Ghani et al., 2018]. Moreover, the interaction between the three disciplines of cognitive science, linguistics and game theory is a fertile ground for research. Game theory in cognitive science is a well-established area [Camerer, 2011]. Similarly, game theoretic approaches have been applied in linguistics [Jäger, 2008]. Lastly, the study of linguistics and cognitive science is intimately intertwined [Smolensky and Legendre, 2006, Jackendoff, 2007]. Physics supplies compositional approaches via vector spaces and categorical quantum theory, allowing the interplay between the three disciplines to be examined.

Interacting Conceptual Spaces I : Grammatical Composition of Concepts

Sep 29, 2017

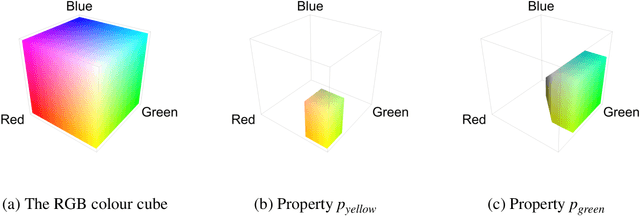

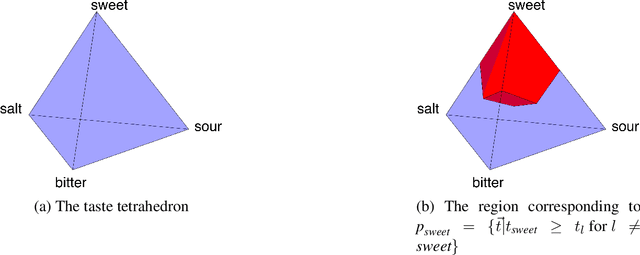

Abstract:The categorical compositional approach to meaning has been successfully applied in natural language processing, outperforming other models in mainstream empirical language processing tasks. We show how this approach can be generalized to conceptual space models of cognition. In order to do this, first we introduce the category of convex relations as a new setting for categorical compositional semantics, emphasizing the convex structure important to conceptual space applications. We then show how to construct conceptual spaces for various types such as nouns, adjectives and verbs. Finally we show by means of examples how concepts can be systematically combined to establish the meanings of composite phrases from the meanings of their constituent parts. This provides the mathematical underpinnings of a new compositional approach to cognition.

Compositional Distributional Cognition

Aug 12, 2016

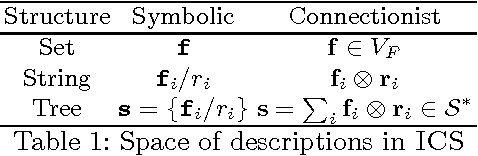

Abstract:We accommodate the Integrated Connectionist/Symbolic Architecture (ICS) of [32] within the categorical compositional semantics (CatCo) of [13], forming a model of categorical compositional cognition (CatCog). This resolves intrinsic problems with ICS such as the fact that representations inhabit an unbounded space and that sentences with differing tree structures cannot be directly compared. We do so in a way that makes the most of the grammatical structure available, in contrast to strategies like circular convolution. Using the CatCo model also allows us to make use of tools developed for CatCo such as the representation of ambiguity and logical reasoning via density matrices, structural meanings for words such as relative pronouns, and addressing over- and under-extension, all of which are present in cognitive processes. Moreover the CatCog framework is sufficiently flexible to allow for entirely different representations of meaning, such as conceptual spaces. Interestingly, since the CatCo model was largely inspired by categorical quantum mechanics, so is CatCog.

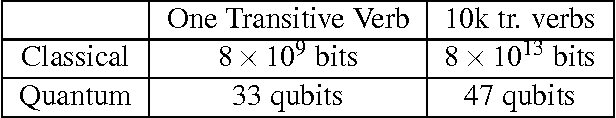

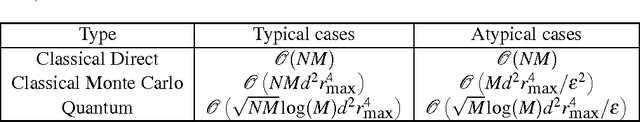

Quantum Algorithms for Compositional Natural Language Processing

Aug 04, 2016

Abstract:We propose a new application of quantum computing to the field of natural language processing. Ongoing work in this field attempts to incorporate grammatical structure into algorithms that compute meaning. In (Coecke, Sadrzadeh and Clark, 2010), the authors introduce such a model (the CSC model) based on tensor product composition. While this algorithm has many advantages, its implementation is hampered by the large classical computational resources that it requires. In this work we show how computational shortcomings of the CSC approach could be resolved using quantum computation (possibly in addition to existing techniques for dimension reduction). We address the value of quantum RAM (Giovannetti, 2008) for this model and extend an algorithm from Wiebe, Braun and Lloyd (2012) into a quantum algorithm to categorize sentences in CSC. Our new algorithm demonstrates a quadratic speedup over classical methods under certain conditions.

* In Proceedings SLPCS 2016, arXiv:1608.01018

Interacting Conceptual Spaces

Aug 04, 2016

Abstract:We propose applying the categorical compositional scheme of [6] to conceptual space models of cognition. In order to do this we introduce the category of convex relations as a new setting for categorical compositional semantics, emphasizing the convex structure important to conceptual space applications. We show how conceptual spaces for composite types such as adjectives and verbs can be constructed. We illustrate this new model on detailed examples.

* In Proceedings SLPCS 2016, arXiv:1608.01018

Dual Density Operators and Natural Language Meaning

Aug 04, 2016

Abstract:Density operators allow for representing ambiguity about a vector representation, both in quantum theory and in distributional natural language meaning. Formally equivalently, they allow for discarding part of the description of a composite system, where we consider the discarded part to be the context. We introduce dual density operators, which allow for two independent notions of context. We demonstrate the use of dual density operators within a grammatical-compositional distributional framework for natural language meaning. We show that dual density operators can be used to simultaneously represent: (i) ambiguity about word meanings (e.g. queen as a person vs. queen as a band), and (ii) lexical entailment (e.g. tiger -> mammal). We provide a proof-of-concept example.

* In Proceedings SLPCS 2016, arXiv:1608.01018
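
To make the two readings of ordinary density operators mentioned in this abstract concrete, here is a minimal sketch: one part mixes two word senses, the other discards half of a composite system. It does not implement the paper's dual density operators. The two-dimensional sense vectors, the equal weights, and the helper names `pure_state`, `mix` and `partial_trace_second` are all assumptions chosen for illustration.

```python
import numpy as np

def pure_state(v):
    """Return the density operator |v><v| of a normalised real vector."""
    v = np.asarray(v, dtype=float)
    v = v / np.linalg.norm(v)
    return np.outer(v, v)

def mix(states, weights):
    """Form a probabilistic mixture of density operators."""
    return sum(w * s for w, s in zip(weights, states))

def partial_trace_second(rho, dim_a, dim_b):
    """Discard the second subsystem of a density operator on A (x) B."""
    rho = rho.reshape(dim_a, dim_b, dim_a, dim_b)
    return np.einsum("ibjb->ij", rho)

# Ambiguity: "queen" as an equal mixture of two hypothetical sense vectors.
queen_person = pure_state([1.0, 0.0])
queen_band = pure_state([0.0, 1.0])
queen = mix([queen_person, queen_band], [0.5, 0.5])
purity = np.trace(queen @ queen)  # below 1 for a genuinely mixed state

# Discarding: tracing out half of a correlated pure state also yields a mixture.
pair = pure_state([1.0, 0.0, 0.0, 1.0])
reduced = partial_trace_second(pair, 2, 2)
```

In this toy setting `queen` and `reduced` are the same operator, which is one way to see why the abstract calls the two readings formally equivalent.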

From quantum foundations via natural language meaning to a theory of everything

Feb 22, 2016

Abstract:In this paper we argue for a paradigmatic shift from 'reductionism' to 'togetherness'. In particular, we show how interaction between systems in quantum theory naturally carries over to modelling how word meanings interact in natural language. Since meaning in natural language, depending on the subject domain, encompasses discussions within any scientific discipline, we obtain a template for theories such as social interaction, animal behaviour, and many others.
