Benjamin Naujoks

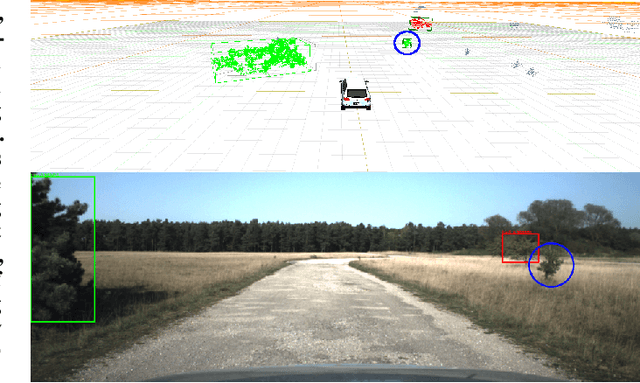

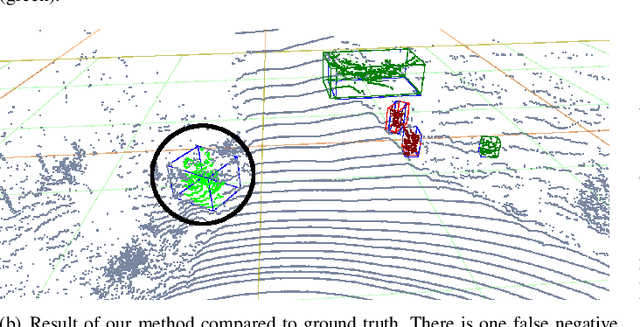

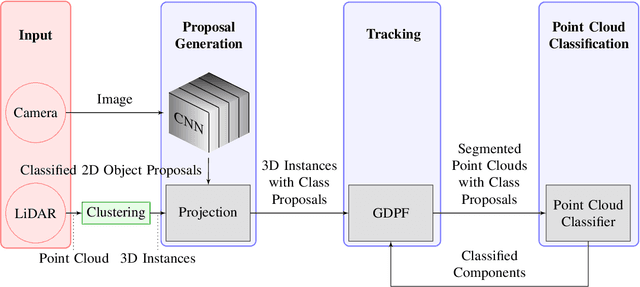

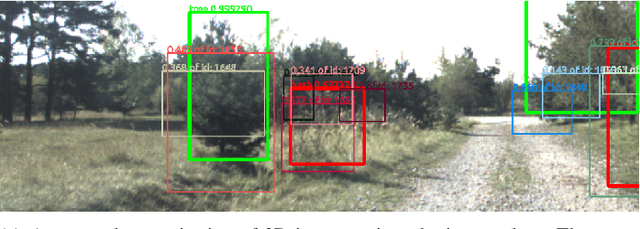

Combining Deep Learning and Model-Based Methods for Robust Real-Time Semantic Landmark Detection

Sep 02, 2019

Abstract: Compared to abstract features, significant objects, so-called landmarks, are a more natural means for vehicle localization and navigation, especially in challenging unstructured environments. The major challenge is to recognize landmarks under varying lighting conditions and in changing environments (e.g., growing vegetation) with only a few training samples available. We propose a new method which leverages Deep Learning as well as model-based methods to overcome the need for a large data set. Using RGB images and light detection and ranging (LiDAR) point clouds, our approach combines state-of-the-art classification results of Convolutional Neural Networks (CNNs) with robust model-based methods by taking prior knowledge of previous time steps into account. Evaluations on a challenging real-world scenario, with trees and bushes as landmarks, show promising results compared with purely learning-based state-of-the-art 3D detectors, while being significantly faster.

The Greedy Dirichlet Process Filter - An Online Clustering Multi-Target Tracker

Nov 14, 2018

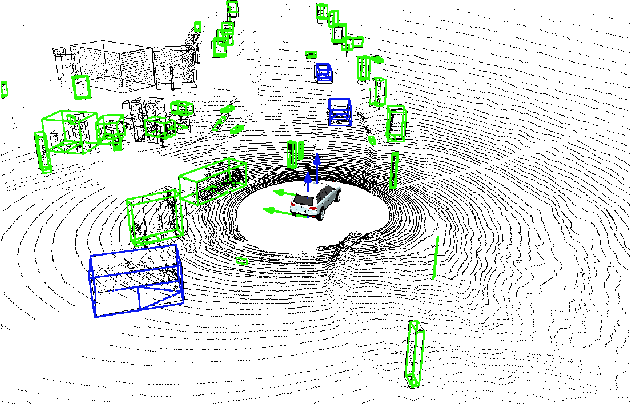

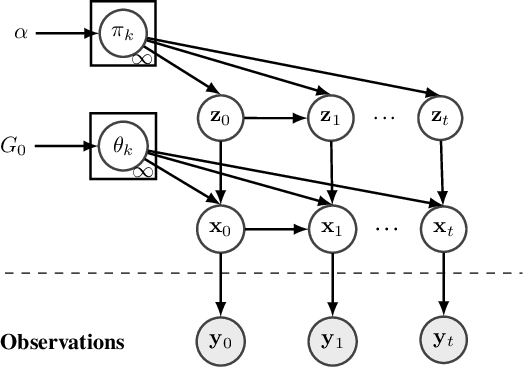

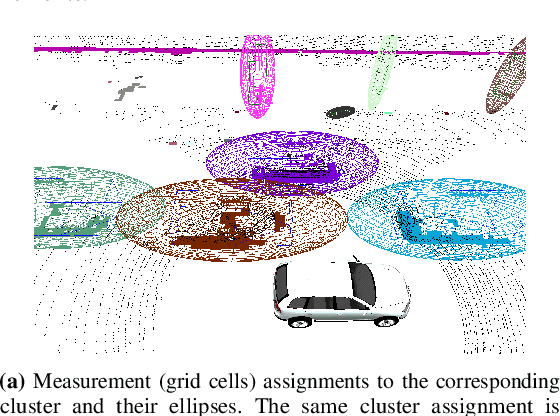

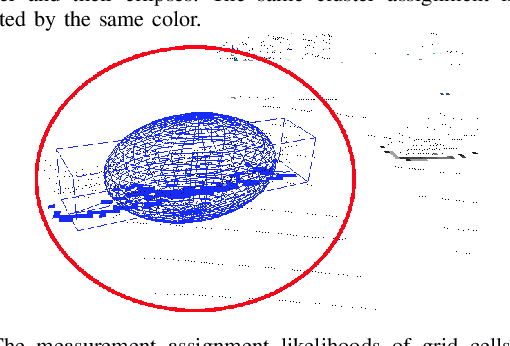

Abstract: Reliable collision avoidance is one of the main requirements for autonomous driving. Hence, it is important to correctly estimate the states of an unknown number of static and dynamic objects in real time. Here, data association is a major challenge for every multi-target tracker. We propose a novel multi-target tracker called the Greedy Dirichlet Process Filter (GDPF), based on the Dirichlet process, a non-parametric Bayesian model, and on the fast posterior computation algorithm Sequential Updating and Greedy Search (SUGS). By adding a temporal dependence, we obtain a real-time-capable tracking framework that needs no prior clustering or data association step. Real-world tests show that GDPF outperforms other multi-target trackers in terms of accuracy and stability.
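
To give a rough feel for the greedy sequential assignment idea the abstract mentions, the following is a minimal sketch of greedy clustering under a Dirichlet process mixture. It is not the authors' GDPF and omits the temporal dependence entirely. All names and parameters (`greedy_dp_cluster`, `alpha`, `sigma2`, `mu0`, `tau2`) are illustrative assumptions, and the model is deliberately simplified to 1-D Gaussian clusters with known observation variance and a fixed concentration parameter.

```python
import math


def log_normal_pdf(x, mean, var):
    """Log density of a 1-D normal distribution N(mean, var) at x."""
    return -0.5 * (math.log(2 * math.pi * var) + (x - mean) ** 2 / var)


def greedy_dp_cluster(points, alpha=1.0, sigma2=1.0, mu0=0.0, tau2=100.0):
    """Greedy one-pass clustering under a DP mixture (illustrative sketch).

    Assumptions (not taken from the paper): 1-D observations, known
    observation variance sigma2, conjugate prior N(mu0, tau2) on each
    cluster mean, fixed concentration parameter alpha.
    """
    counts, sums, labels = [], [], []
    for x in points:
        # Score of opening a new cluster: prior weight alpha times the
        # prior predictive density of x.
        best_k = len(counts)
        best_score = math.log(alpha) + log_normal_pdf(x, mu0, tau2 + sigma2)
        for k, (n, s) in enumerate(zip(counts, sums)):
            # Conjugate posterior of the cluster mean given its members.
            post_var = 1.0 / (1.0 / tau2 + n / sigma2)
            post_mean = post_var * (mu0 / tau2 + s / sigma2)
            # Prior weight n_k times the posterior predictive density of x.
            score = math.log(n) + log_normal_pdf(x, post_mean, post_var + sigma2)
            if score > best_score:
                best_k, best_score = k, score
        if best_k == len(counts):
            counts.append(0)
            sums.append(0.0)
        # Commit to the best cluster; the choice is never revisited.
        counts[best_k] += 1
        sums[best_k] += x
        labels.append(best_k)
    return labels


labels = greedy_dp_cluster([0.1, -0.2, 10.0, 10.3, 0.0])
```

Each point is committed to its highest-scoring cluster in a single pass, so the number of clusters grows with the data and no separate clustering or association step is run beforehand. The price of the greedy choice is that early assignments cannot be corrected later.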
