Bastian Kaspschak

New insights into four-boson renormalization group limit cycles

Mar 29, 2022

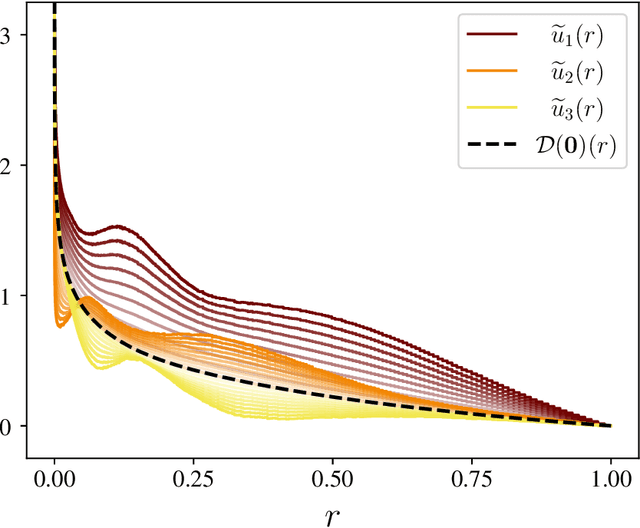

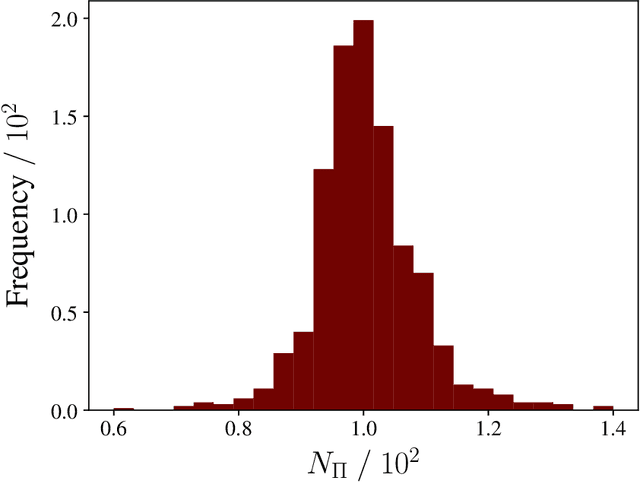

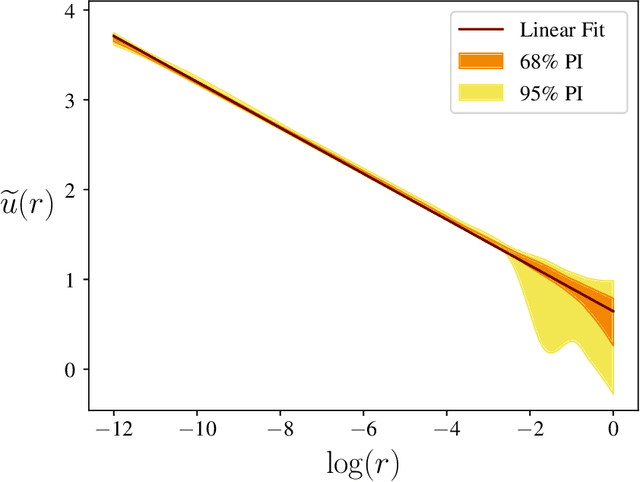

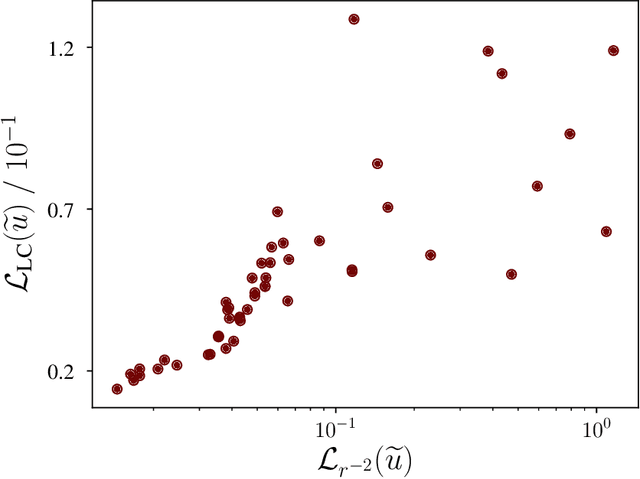

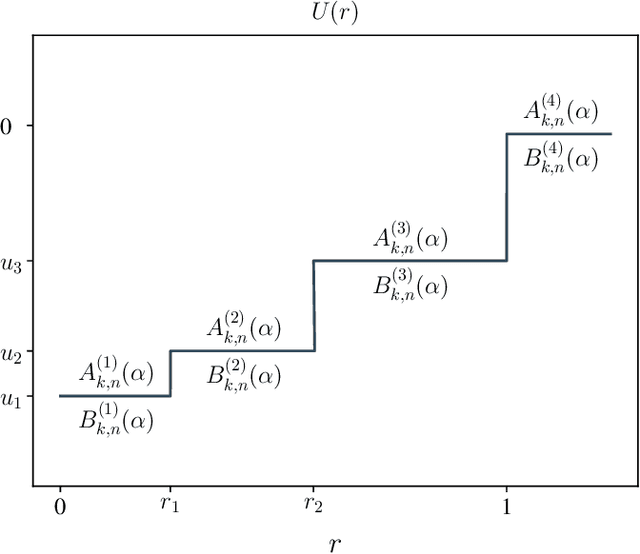

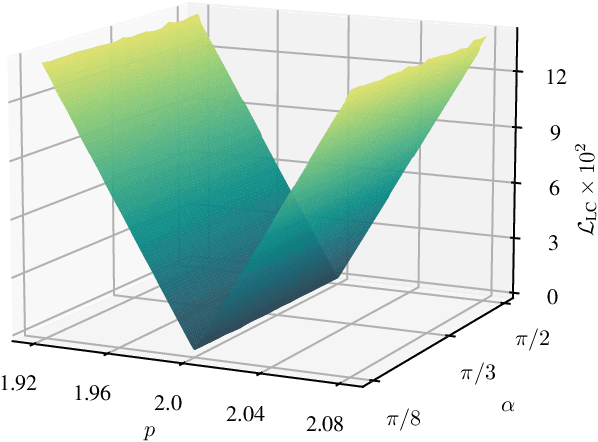

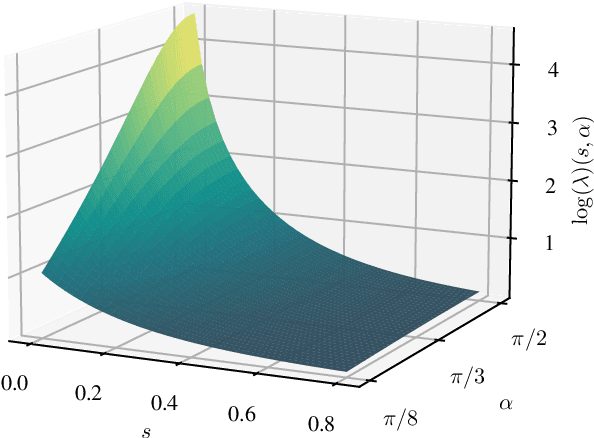

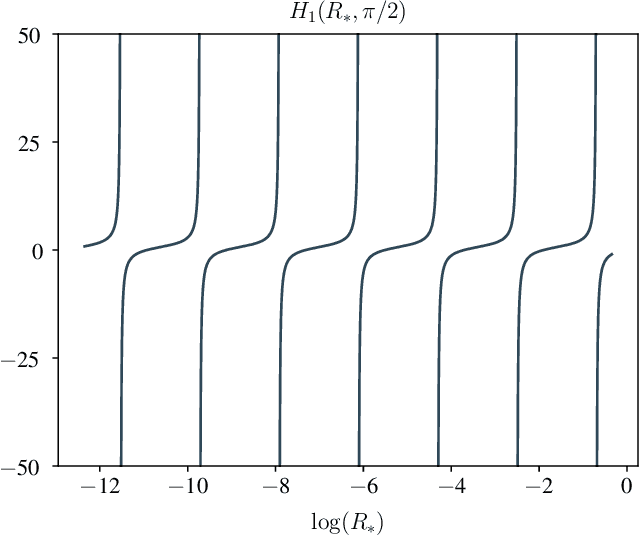

Abstract: Using machine learning techniques, we verify that the emergence of renormalization group limit cycles beyond the unitary limit is transferred from the three-boson subsystems to the whole four-boson system. Focusing on four identical bosons, we first generate populations of synthetic singular potentials within the latent space of a boosted ensemble of variational autoencoders. After introducing the limit cycle loss, which measures the deviation of a given renormalization group flow from limit cycle behavior, we minimize it by applying an elitist genetic algorithm to the generated populations. The fittest potentials are observed to accumulate around the inverse-square potential, which we prove to generate limit cycles for four bosons and which is already known to produce limit cycles in the three-boson system. This also indicates that a four-body term does not enter low-energy observables at leading order, since we do not observe any additional scale emerging.
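The elitist genetic algorithm mentioned in the abstract can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: `toy_loss` is a hypothetical stand-in (a simple quadratic) and not the paper's limit cycle loss, the two-dimensional "latent space" is arbitrary, and the selection, crossover, and mutation choices are generic rather than the author's. The point is only the elitist step: the best individuals are copied unchanged into the next generation, so the best loss can never get worse.

```python
import random

def toy_loss(z):
    # Hypothetical stand-in for a limit cycle loss: squared distance
    # of a latent vector z from an arbitrary target point.
    target = (0.5, -1.0)
    return sum((a - b) ** 2 for a, b in zip(z, target))

def elitist_ga(loss, dim=2, pop_size=40, n_elite=4, generations=60,
               sigma=0.3, seed=0):
    rng = random.Random(seed)
    # Random initial population of latent vectors.
    pop = [[rng.uniform(-3, 3) for _ in range(dim)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        pop.sort(key=loss)
        history.append(loss(pop[0]))
        # Elitism: the fittest individuals survive unchanged.
        elites = pop[:n_elite]
        children = []
        while len(children) < pop_size - n_elite:
            # Parents are drawn from the fitter half of the population.
            p1, p2 = rng.sample(pop[: pop_size // 2], 2)
            # Uniform crossover followed by Gaussian mutation.
            child = [rng.choice(pair) + rng.gauss(0.0, sigma)
                     for pair in zip(p1, p2)]
            children.append(child)
        pop = elites + children
    pop.sort(key=loss)
    history.append(loss(pop[0]))
    return pop[0], history

best, history = elitist_ga(toy_loss)
```

Because the elites are carried over, `history` is non-increasing by construction; in the setting described above, the individuals would be synthetic potentials decoded from the latent space rather than raw vectors.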

Three-body renormalization group limit cycles based on unsupervised feature learning

Nov 15, 2021

Abstract: Both the three-body system and the inverse-square potential carry a special significance in the study of renormalization group limit cycles. In this work, we pursue an exploratory approach and address the question of which two-body interactions lead to limit cycles in the three-body system at low energies, without imposing any restrictions upon the scattering length. For this, we train a boosted ensemble of variational autoencoders, which not only provide a severe dimensionality reduction but also allow us to generate further synthetic potentials. This is an important prerequisite for efficiently searching for limit cycles in the low-dimensional latent space. We perform this search by applying an elitist genetic algorithm, which minimizes a specially defined limit cycle loss, to a population of synthetic potentials. The resulting fittest individuals suggest that the inverse-square potential is the only two-body potential that minimizes this limit cycle loss independent of the hyperangle.
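As background on why the inverse-square potential is associated with limit cycles, the following is a standard textbook-style illustration and not the paper's derivation. It assumes a single-channel, zero-energy radial equation in units where $\hbar^2/(2\mu) = 1$; the coupling $c$ and the symbols $s$ and $\delta$ are introduced here for illustration only.

$$
-u''(r) - \frac{c}{r^2}\,u(r) = 0, \qquad u(r) = r^{\lambda} \;\Rightarrow\; \lambda(\lambda - 1) = -c, \qquad \lambda = \tfrac{1}{2} \pm \sqrt{\tfrac{1}{4} - c}.
$$

For $c > \tfrac{1}{4}$ the exponent becomes complex, $\lambda = \tfrac{1}{2} \pm i s$ with $s = \sqrt{c - \tfrac{1}{4}}$, and the real solutions are log-periodic:

$$
u(r) = \sqrt{r}\,\cos\!\big(s \ln r + \delta\big), \qquad u\!\big(e^{\pi/s}\, r\big) = -\,e^{\pi/(2s)}\,u(r).
$$

The nodes of $u$ therefore repeat under the discrete rescaling $r \to e^{\pi/s}\, r$, which is the discrete scale invariance characteristic of a limit cycle.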

A Neural Network Perturbation Theory Based on the Born Series

Sep 07, 2020

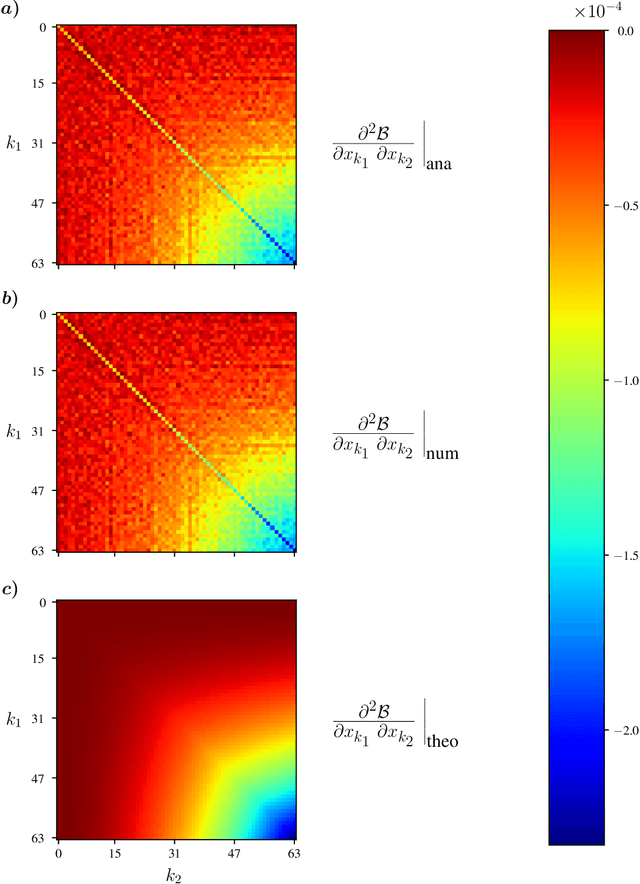

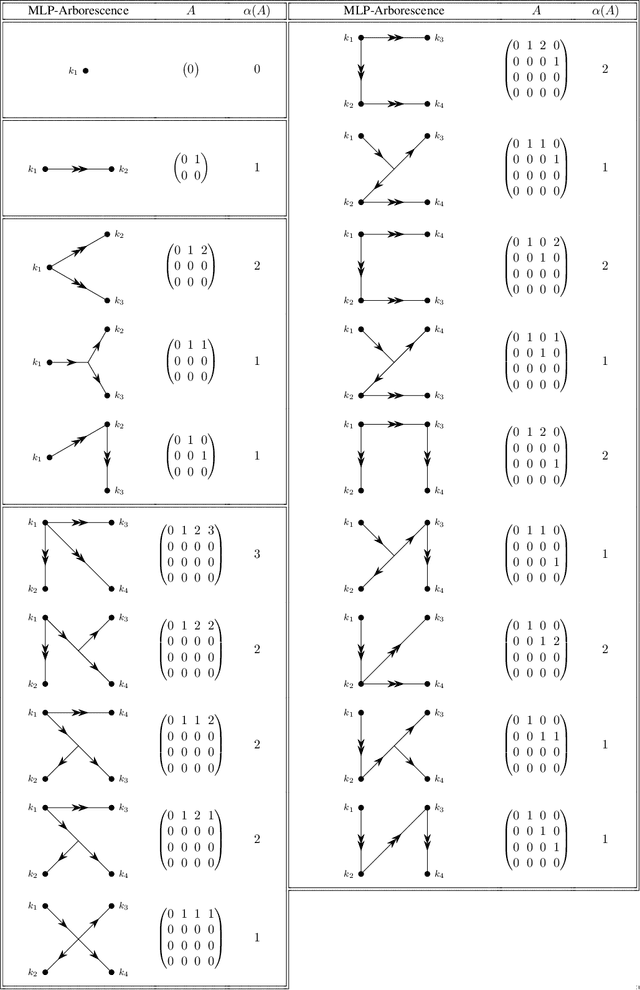

Abstract: Deep learning has become an attractive approach to various data-based problems of theoretical physics in the past decade. Its protagonists, the deep neural networks (DNNs), are capable of making accurate predictions for data of arbitrarily high complexity. A well-known issue most DNNs share is their lack of interpretability. In order to explain their behavior and extract physical laws they have discovered during training, a suitable interpretation method therefore has to be applied post hoc. Because two-body scattering is simple and ubiquitous in quantum physics, we present a rather general interpretation method in this context: We find a one-to-one correspondence between the $n^\text{th}$-order Born approximation and the $n^\text{th}$-order Taylor approximation of deep multilayer perceptrons (MLPs), which predict S-wave scattering lengths $a_0$ for discretized, attractive potentials of finite range. This defines a perturbation theory for MLPs, similarly to Born approximations defining a perturbation theory for $a_0$. In the case of shallow potentials, lower-order approximations, which can be argued to be local interpretations of the respective MLPs, reliably reproduce $a_0$. As deep MLPs are highly nested functions, the computation of higher-order partial derivatives, which is essential for a Taylor approximation, is a laborious task. By introducing quantities that we refer to as propagators and vertices and that depend on the MLP's weights and biases, we establish a graph-theoretical approach to partial derivatives and local interpretability. Similar to Feynman rules in quantum field theories, we find rules that systematically assign diagrams consisting of propagators and vertices to the corresponding order of the MLP perturbation theory.
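The physics side of this correspondence can be checked numerically in a simple case. The sketch below does not involve a neural network and is not taken from the paper; it assumes an attractive square well of range $R = 1$ in units where $2\mu/\hbar^2 = 1$, with dimensionless strength `g` (a name chosen here). For this potential the exact S-wave scattering length is $a_0 = 1 - \tan(\sqrt{g})/\sqrt{g}$, and its Taylor expansion in `g` reproduces the Born series order by order: the first Born term is $\int_0^\infty V(r)\, r^2\, dr = -g/3$ and the second-order term is $-2g^2/15$.

```python
import math

def exact_scattering_length(g):
    # Exact S-wave scattering length of an attractive square well
    # with range R = 1 and strength g = kappa^2 (units 2*mu/hbar^2 = 1).
    x = math.sqrt(g)
    return 1.0 - math.tan(x) / x

def square_well(g):
    # Attractive square-well potential V(r) = -g for r < 1, else 0.
    return lambda r: -g if r < 1.0 else 0.0

def born_first_order(potential, r_max=1.0, n=1000):
    # First Born approximation a_0 ~ integral of V(r) r^2 dr,
    # evaluated with the midpoint rule on a discretized potential.
    dr = r_max / n
    return sum(potential((i + 0.5) * dr) * ((i + 0.5) * dr) ** 2 * dr
               for i in range(n))

def born_second_order(g):
    # Closed-form expansion through second order in g for the square well.
    return -g / 3.0 - 2.0 * g ** 2 / 15.0

g = 0.1  # shallow potential
exact = exact_scattering_length(g)
first = born_first_order(square_well(g))
second = born_second_order(g)
print(exact, first, second)
```

For a shallow well such as `g = 0.1`, the first-order value already lies within a few percent of the exact result and the second-order value is closer still, which mirrors the statement that low-order approximations reproduce $a_0$ reliably for shallow potentials.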
