Aurobinda Routray

A novel multiclassSVM based framework to classify lithology from well logs: a real-world application

Dec 02, 2016

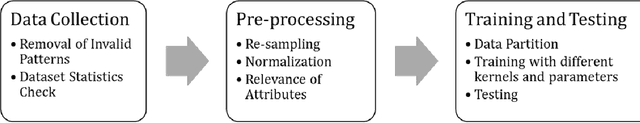

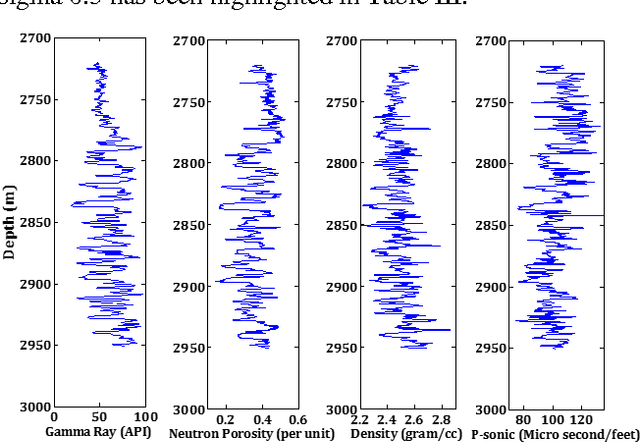

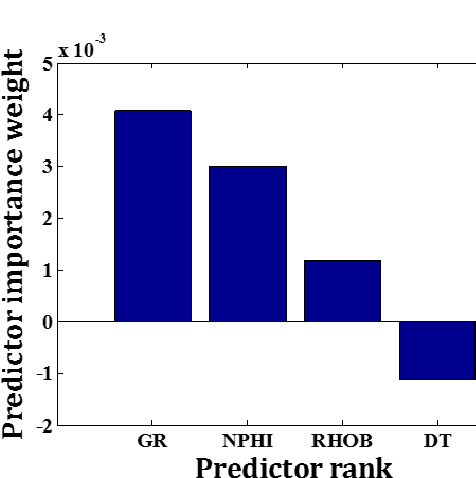

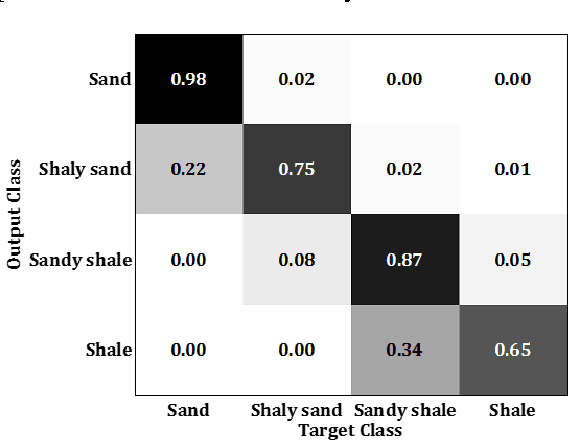

Abstract: Support vector machines (SVMs) are widely recognized as a useful tool for supervised classification in many research domains. An SVM is, in essence, a binary classifier. A multiclass problem is therefore divided into a series of binary problems, each solved by a binary classifier, and the results are combined using either the one-against-one or the one-against-all strategy. In this paper, lithology is classified with a multiclass SVM based framework that uses well logs as predictor variables. The lithology is divided into four classes (sand, shaly sand, sandy shale, and shale) based on the relative values of the sand and shale fractions, as suggested by an expert geologist. The available dataset, consisting of well logs (gamma ray, neutron porosity, density, and P-sonic) and class information from four closely spaced wells in an onshore hydrocarbon field, is divided into training and testing sets. The one-against-all strategy is used to combine the results of the multiple binary classifiers. The reported results show that the multiclass SVM achieves higher classification accuracy than the other classifiers tested. The selection of the kernel function and its associated parameters is also investigated. The results suggest that the proposed multiclass SVM framework can be used to solve further classification problems. In future research, seismic attributes could be introduced into the framework to classify lithology throughout a study area from seismic inputs.
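The one-against-all combination step described in this abstract can be illustrated with a minimal sketch. It assumes each class already has a trained "this class versus the rest" decision function. The toy linear scorers, their weights, and the single normalized input feature are hypothetical stand-ins chosen for illustration, not the paper's trained SVMs or data.

```python
from typing import Callable, Dict, Sequence

# A binary scorer maps a feature vector to a signed score; a larger value
# means "more likely to belong to this class than to the rest".
Scorer = Callable[[Sequence[float]], float]


def linear_scorer(weights: Sequence[float], bias: float) -> Scorer:
    """Toy linear decision function w.x + b (stand-in for a trained binary SVM)."""
    def score(x: Sequence[float]) -> float:
        return sum(w * xi for w, xi in zip(weights, x)) + bias
    return score


def one_against_all_predict(scorers: Dict[str, Scorer], x: Sequence[float]) -> str:
    """Return the class whose one-against-all scorer gives the highest score."""
    return max(scorers, key=lambda label: scorers[label](x))


# Hypothetical scorers on one made-up feature in [0, 1]
# (low values behave like sand, high values like shale).
TOY_SCORERS: Dict[str, Scorer] = {
    "sand": linear_scorer([-2.0], 1.0),
    "shaly sand": linear_scorer([-1.0], 0.7),
    "sandy shale": linear_scorer([1.0], -0.3),
    "shale": linear_scorer([2.0], -1.0),
}

if __name__ == "__main__":
    for value in (0.1, 0.4, 0.6, 0.9):
        print(value, one_against_all_predict(TOY_SCORERS, [value]))
```

In a real workflow the scorers would be kernel SVM decision functions fitted to the four well logs; only the argmax combination rule is the point of this sketch.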

A One class Classifier based Framework using SVDD : Application to an Imbalanced Geological Dataset

Dec 02, 2016

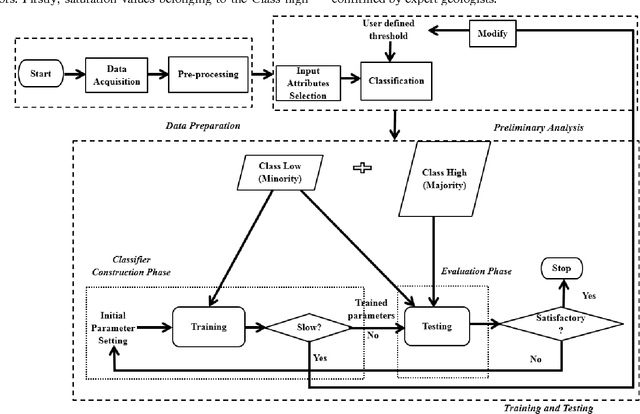

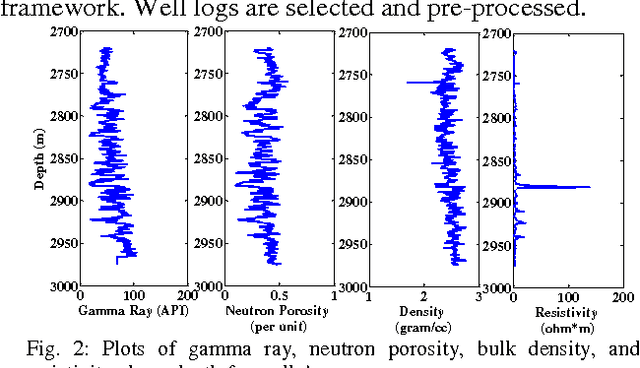

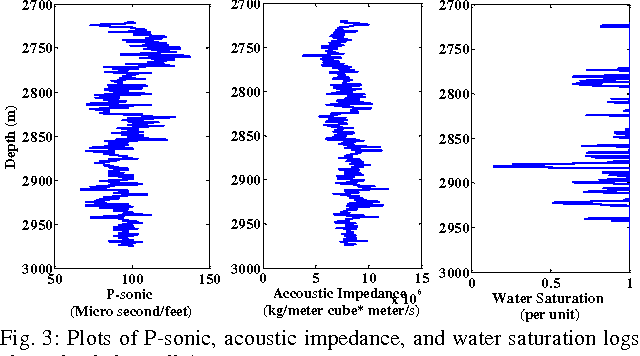

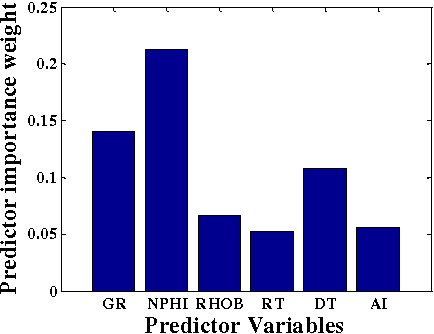

Abstract: Evaluation of a hydrocarbon reservoir requires classification of petrophysical properties from the available dataset. However, characterization of reservoir attributes is difficult because of the nonlinear and heterogeneous nature of subsurface physical properties. In this context, the present study proposes a generalized one-class classification framework based on Support Vector Data Description (SVDD) to classify a reservoir characteristic, water saturation, into two classes (Class high and Class low) from four logs (gamma ray, neutron porosity, bulk density, and P-sonic) using an imbalanced dataset. The proposed framework is compared with several supervised classification algorithms in terms of g-metric means and execution time. Experimental results show that the proposed framework outperforms the other classifiers on both measures. It is envisaged that the classification analysis performed in this study will be useful in further reservoir modeling.
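For readers unfamiliar with the metric, the sketch below assumes "g-metric mean" refers to the commonly used geometric mean of sensitivity and specificity for a two-class problem. The labels are made-up illustrative values, not data from the study.

```python
import math
from typing import Sequence


def g_mean(y_true: Sequence[int], y_pred: Sequence[int], positive: int = 1) -> float:
    """Geometric mean of sensitivity (recall on the positive class)
    and specificity (recall on the negative class)."""
    tp = fn = tn = fp = 0
    for t, p in zip(y_true, y_pred):
        if t == positive:
            if p == positive:
                tp += 1
            else:
                fn += 1
        else:
            if p == positive:
                fp += 1
            else:
                tn += 1
    # Guard against an empty class to avoid division by zero.
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    return math.sqrt(sensitivity * specificity)


if __name__ == "__main__":
    # Imbalanced toy labels: 2 positives, 8 negatives.
    truth = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
    always_negative = [0] * 10
    print(g_mean(truth, always_negative))
```

A classifier that always predicts the majority class scores 80% accuracy on these toy labels but a g-mean of zero, which is why the measure suits imbalanced datasets.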

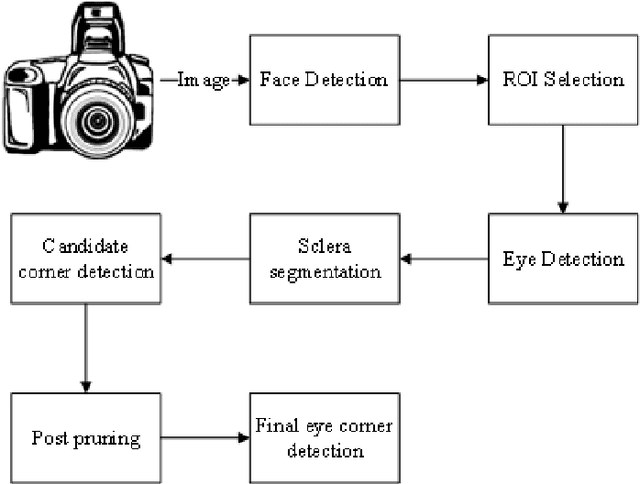

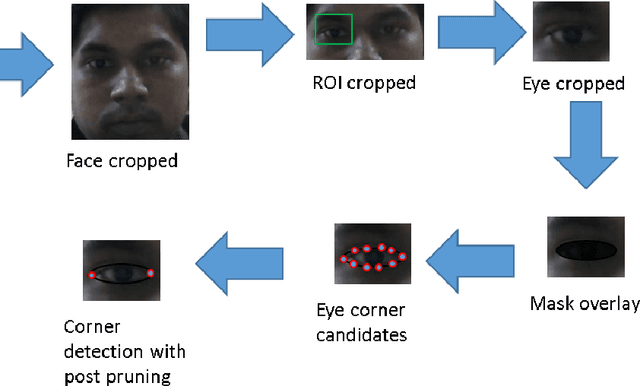

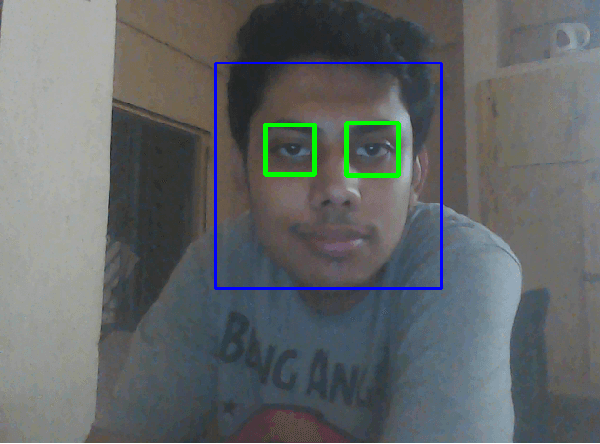

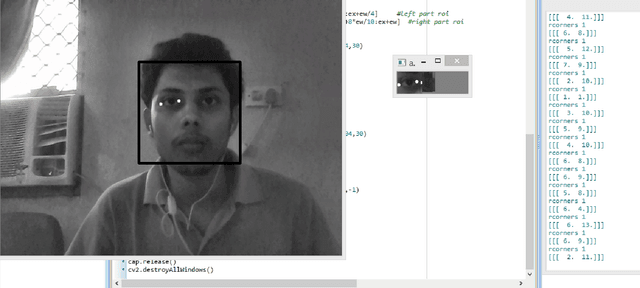

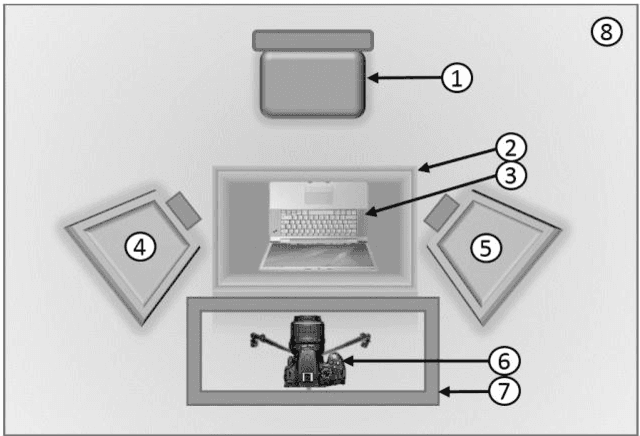

An Improved Algorithm for Eye Corner Detection

Nov 08, 2016

Abstract: In this paper, a modified algorithm for the detection of nasal and temporal eye corners is presented. The algorithm is a modification of the Santos and Proença method. In the first step, we detect the face and the eyes using classifiers based on Haar-like features. We then segment the sclera from the detected eye region and, from the segmented sclera, extract an approximate eyelid contour. Eye corner candidates are obtained using the Harris and Stephens corner detector. Finally, we introduce a post-pruning of the eye corner candidates to locate the eye corners. The algorithm has been tested on the Yale and JAFFE databases as well as on our own database.

Evaluation of Denoising Techniques for EOG signals based on SNR Estimation

Oct 27, 2016

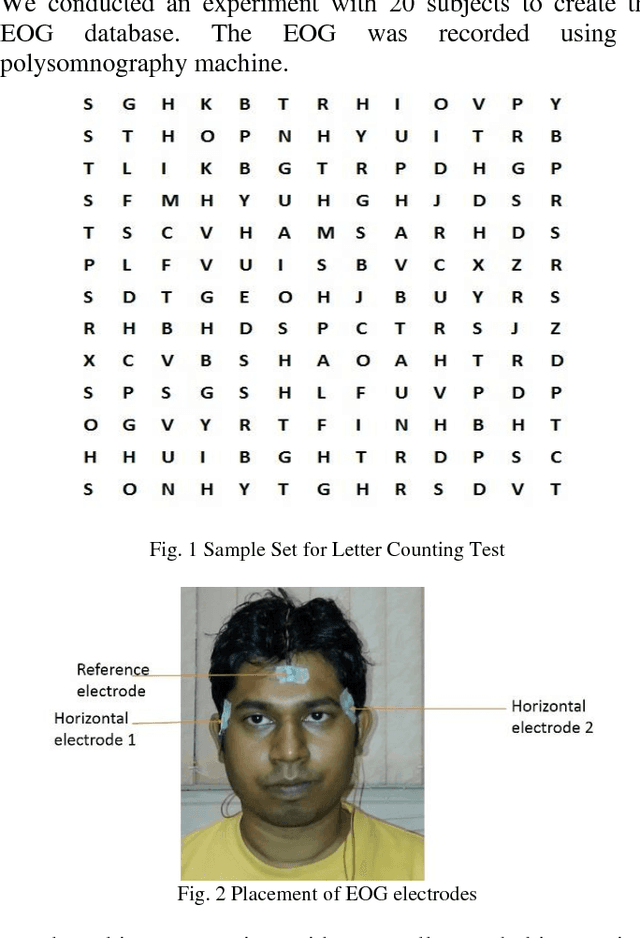

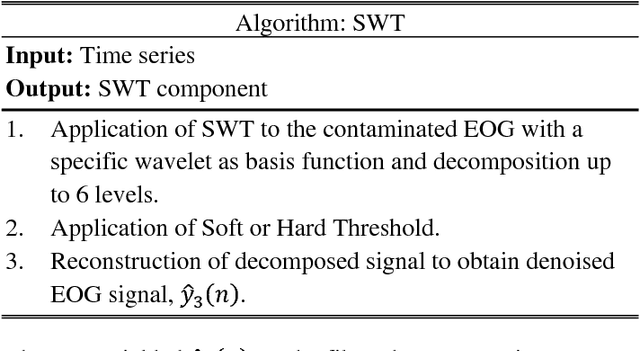

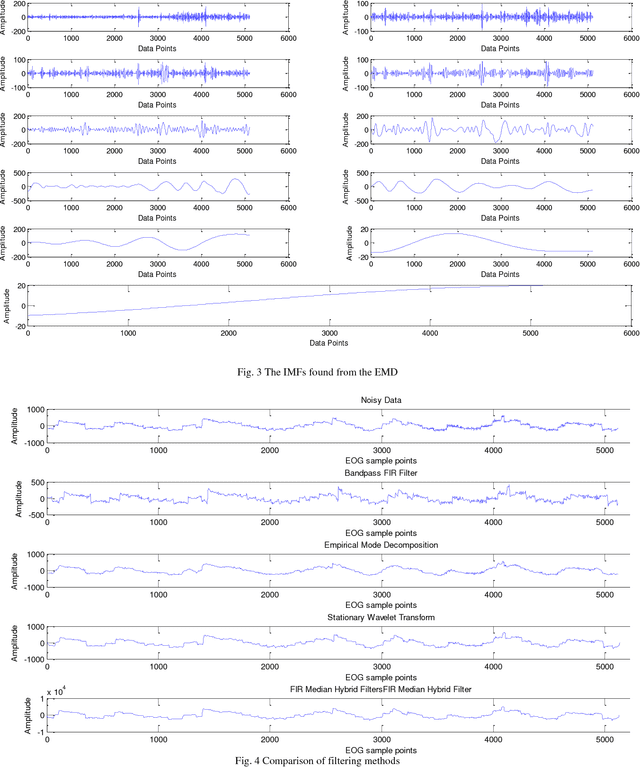

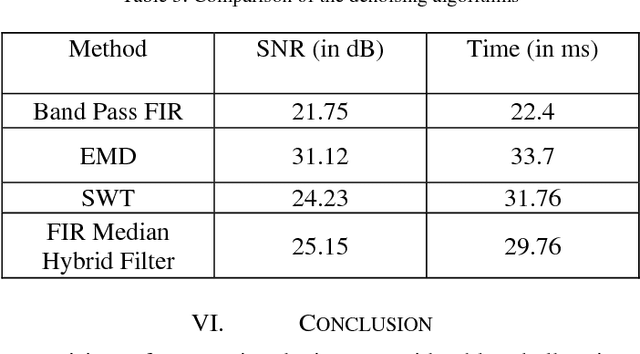

Abstract: This paper evaluates four algorithms for denoising raw Electrooculography (EOG) data based on the Signal to Noise Ratio (SNR). The SNR is computed using the eigenvalue method. The filtering algorithms are a) Finite Impulse Response (FIR) bandpass filters, b) Stationary Wavelet Transform, c) Empirical Mode Decomposition (EMD), and d) FIR Median Hybrid Filters. An EOG dataset has been prepared in which 20 subjects were asked to perform a letter cancellation test.
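As an illustration of the fourth filter type, here is a minimal sketch of a basic FIR median hybrid filter. It assumes the simplest form: the output is the median of a backward average, the current sample, and a forward average. The window length `k` and the edge handling are illustrative choices; the abstract does not specify the configuration used in the paper.

```python
from statistics import median
from typing import List, Sequence


def fir_median_hybrid(x: Sequence[float], k: int = 2) -> List[float]:
    """Basic FIR median hybrid filter.

    For each interior sample n, take the median of:
      - the mean of the k samples before n (backward FIR substructure),
      - the sample x[n] itself,
      - the mean of the k samples after n (forward FIR substructure).
    Samples closer than k to either end are left unchanged.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    data = list(x)
    y = list(data)
    for n in range(k, len(data) - k):
        backward = sum(data[n - k:n]) / k
        forward = sum(data[n + 1:n + k + 1]) / k
        y[n] = median([backward, data[n], forward])
    return y


if __name__ == "__main__":
    print(fir_median_hybrid([0, 0, 0, 10, 0, 0, 0]))  # isolated spike
    print(fir_median_hybrid([0, 0, 0, 1, 1, 1]))      # step edge
```

The two toy inputs show the property that makes this filter family attractive for eye-movement signals: an isolated spike is suppressed while a step edge, similar to a saccade, is preserved.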

SPECFACE - A Dataset of Human Faces Wearing Spectacles

Aug 11, 2016

Abstract: This paper presents a database of human faces for persons wearing spectacles. The database consists of images of faces having significant variations with respect to illumination, head pose, skin color, facial expressions and sizes, and nature of spectacles. The database contains data of 60 subjects. This database is expected to be a precious resource for the development and evaluation of algorithms for face detection, eye detection, head tracking, eye gaze tracking, etc., for subjects wearing spectacles. As such, this can be a valuable contribution to the computer vision community.

* 5 pages, 9 figures, 1 Table. arXiv admin note: text overlap with arXiv:1505.04055

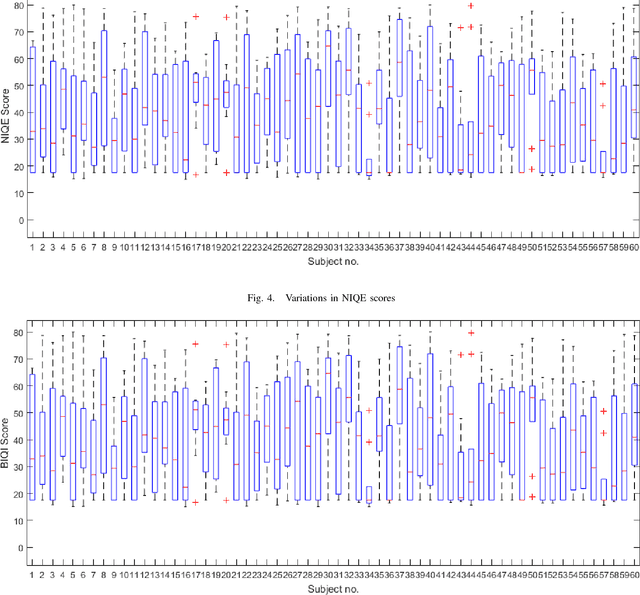

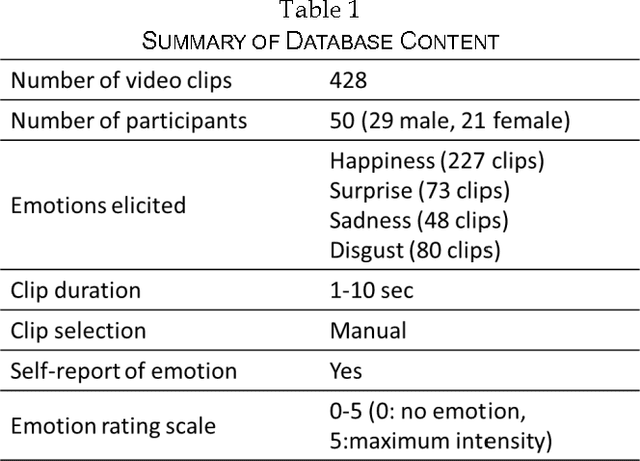

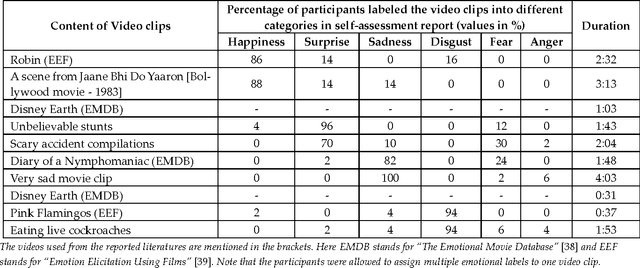

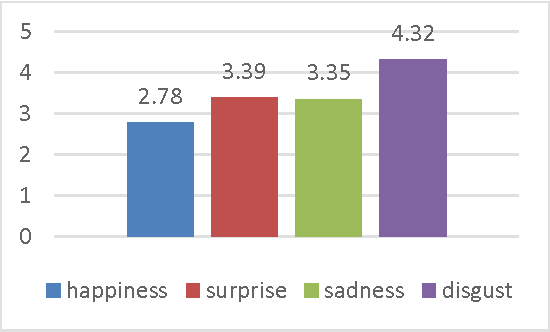

The Indian Spontaneous Expression Database for Emotion Recognition

Jun 16, 2016

Abstract: Automatic recognition of spontaneous facial expressions is a major challenge in the field of affective computing. Head rotation, face pose, illumination variation, occlusion, etc. are factors that increase the complexity of recognizing spontaneous expressions in practical applications. Effective recognition of expressions depends significantly on the quality of the database used. Most well-known facial expression databases consist of posed expressions. However, there is currently a huge demand for spontaneous expression databases for the practical implementation of facial expression recognition algorithms. In this paper, we propose and establish a new facial expression database containing spontaneous expressions of both male and female participants of Indian origin. The database consists of 428 segmented video clips of the spontaneous facial expressions of 50 participants. In our experiment, emotions were induced in the participants using emotional videos, and their self-ratings were collected simultaneously for each experienced emotion. Facial expression clips were annotated carefully by four trained decoders, and the annotations were further validated against the nature of the stimuli used and the self-reports of emotions. An extensive analysis was carried out on the database using several machine learning algorithms, and the results are provided for future reference. Such a spontaneous database will help in the development and validation of algorithms for recognition of spontaneous expressions.

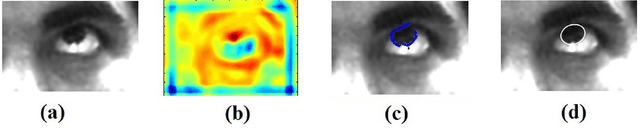

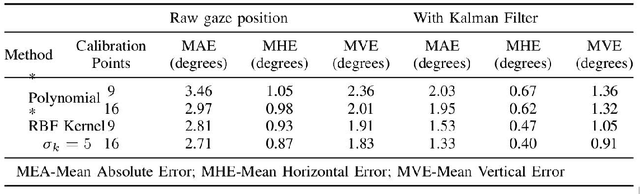

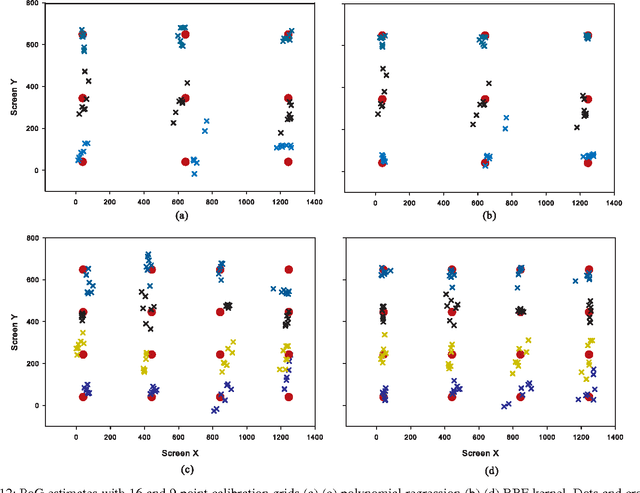

Fast and Accurate Algorithm for Eye Localization for Gaze Tracking in Low Resolution Images

May 17, 2016

Abstract: Iris centre localization in low-resolution visible images is a challenging problem in the computer vision community because of noise, shadows, occlusions, pose variations, eye blinks, etc. This paper proposes an efficient method for determining the iris centre in low-resolution images in the visible spectrum, so that even low-cost consumer-grade webcams can be used for gaze tracking without any additional hardware. A two-stage algorithm based on the geometrical characteristics of the eye is proposed for iris centre localization. In the first stage, a fast convolution based approach is used to obtain the coarse location of the iris centre (IC). In the second stage, the IC location is refined using boundary tracing and ellipse fitting. The algorithm has been evaluated on public databases such as BioID and Gi4E and is found to outperform the state-of-the-art methods.

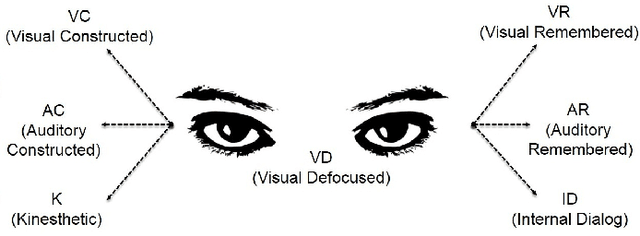

Real-time Eye Gaze Direction Classification Using Convolutional Neural Network

May 17, 2016

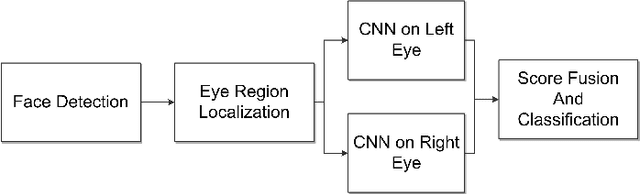

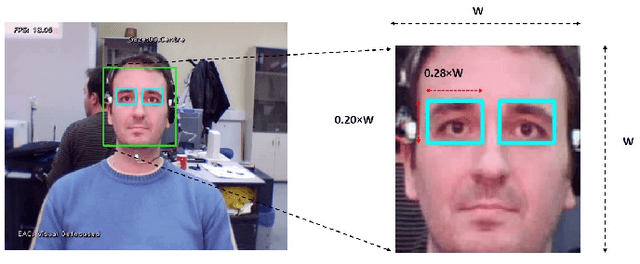

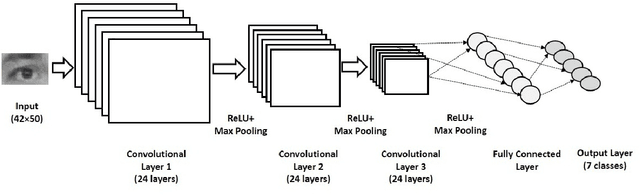

Abstract: Estimation of eye gaze direction is useful in various human-computer interaction tasks. Knowledge of gaze direction can give valuable information regarding the user's point of attention. Certain patterns of eye movements, known as eye accessing cues, are reported to be related to cognitive processes in the human brain. We propose a real-time framework for the classification of eye gaze direction and the estimation of eye accessing cues. In the first stage, the algorithm detects faces using a modified version of the Viola-Jones algorithm. A rough eye region is then obtained using geometric relations and facial landmarks. In the subsequent stage, a convolutional neural network classifies the eye gaze direction from this eye region. The proposed algorithm was tested on the Eye Chimera database and was found to outperform state-of-the-art methods. The computational complexity of the algorithm is very low in the testing phase. The algorithm achieved an average frame rate of 24 fps in a desktop environment.

A Score-level Fusion Method for Eye Movement Biometrics

Jan 13, 2016

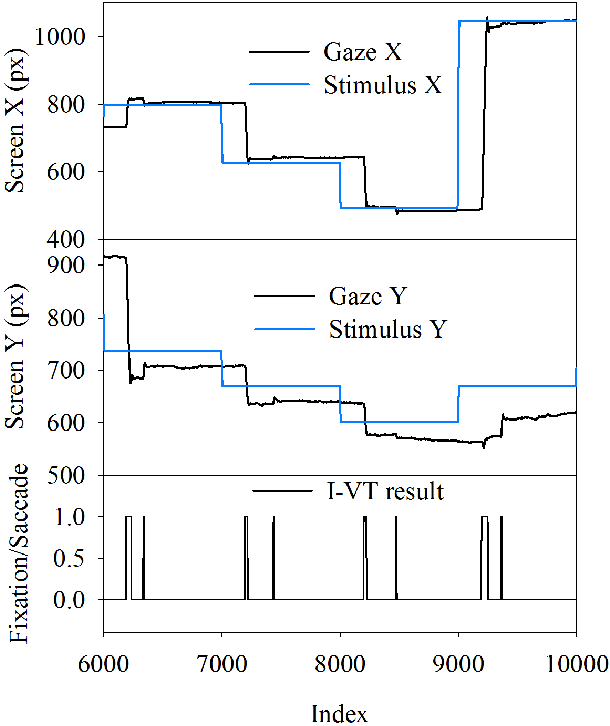

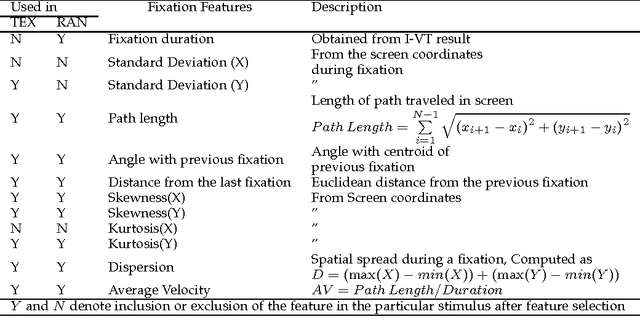

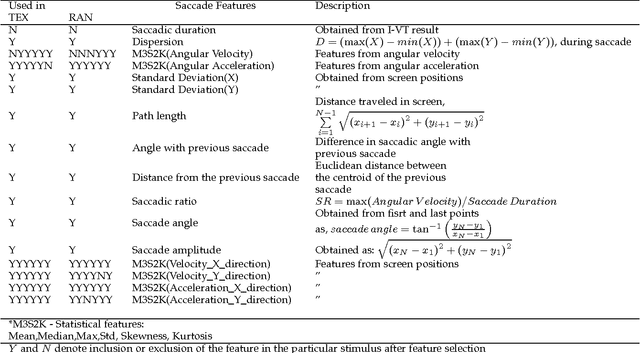

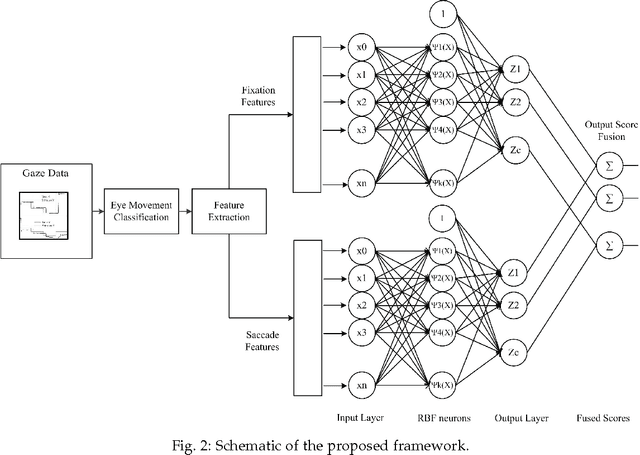

Abstract: This paper proposes a novel framework for the use of eye movement patterns in biometric applications. Eye movements contain abundant information about cognitive brain functions, neural pathways, etc. In the proposed method, eye movement data is classified into fixations and saccades. Features extracted from fixations and saccades are used by a Gaussian Radial Basis Function Network (GRBFN) based method for biometric authentication. A score fusion approach is adopted to classify the data in the output layer. In the evaluation stage, the algorithm has been tested using two types of stimuli: random dot following on a screen and text reading. The results indicate the strength of eye movement patterns as a biometric modality. The algorithm has been evaluated on the BioEye 2015 database and found to outperform all the other methods. Eye movements are generated by a complex oculomotor plant, which is very hard to spoof with mechanical replicas. Use of eye movement dynamics along with iris recognition technology may lead to a robust, counterfeit-resistant person identification system.
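The abstract does not state the exact fusion rule, so the following is only a generic sketch of score-level fusion: scores from a hypothetical fixation matcher and a hypothetical saccade matcher are min-max normalized and combined with a weighted sum. The function names, the weight `w_fixation`, and the example scores are all assumptions made for illustration.

```python
from typing import List, Sequence


def min_max_normalize(scores: Sequence[float]) -> List[float]:
    """Rescale scores to [0, 1] so different matchers become comparable."""
    lo, hi = min(scores), max(scores)
    if hi == lo:
        # All candidates scored equally; return a neutral value.
        return [0.5 for _ in scores]
    return [(s - lo) / (hi - lo) for s in scores]


def fuse_scores(
    fixation_scores: Sequence[float],
    saccade_scores: Sequence[float],
    w_fixation: float = 0.5,
) -> List[float]:
    """Weighted-sum fusion of two per-candidate score lists."""
    if len(fixation_scores) != len(saccade_scores):
        raise ValueError("both matchers must score the same candidates")
    f = min_max_normalize(fixation_scores)
    s = min_max_normalize(saccade_scores)
    return [w_fixation * a + (1.0 - w_fixation) * b for a, b in zip(f, s)]


if __name__ == "__main__":
    # Made-up scores for three enrolled identities.
    fused = fuse_scores([1.0, 3.0, 2.0], [30.0, 20.0, 10.0])
    best = max(range(len(fused)), key=fused.__getitem__)
    print(fused, "best candidate index:", best)
```

Normalizing before fusing keeps a matcher with a large numeric range from dominating the decision; other normalization and fusion rules are equally plausible.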

Well Tops Guided Prediction of Reservoir Properties using Modular Neural Network Concept A Case Study from Western Onshore, India

Sep 23, 2015

Abstract: This paper proposes a complete framework consisting of pre-processing, modeling, and post-processing stages to carry out well tops guided prediction of a reservoir property (sand fraction) from three seismic attributes (seismic impedance, instantaneous amplitude, and instantaneous frequency) using the concept of a modular artificial neural network (MANN). The data set used in this study, comprising three seismic attributes and well log data from eight wells, was acquired from a western onshore hydrocarbon field of India. First, the acquired data set is integrated and normalized. Well log analysis is then carried out, and the total depth range is segmented into three units (zones) separated by well tops. Second, three networks, one per zone, are trained on the combined data set of seven wells, and the trained networks are validated on the remaining test well. The target property of the test well is predicted using the three tuned networks corresponding to the three zones, and the estimated values from the three networks are concatenated to represent the predicted log along the complete depth range of the test well. The application of multiple simpler networks instead of a single one improves the prediction accuracy in terms of performance metrics such as correlation coefficient, root mean square error, absolute error mean, and program execution time.
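The accuracy metrics named in this abstract can be computed as in the sketch below, which assumes the standard definitions: Pearson correlation coefficient, root mean square error, and mean absolute error. The sample values are made up for illustration and are not results from the paper.

```python
import math
from typing import Sequence


def correlation_coefficient(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Pearson correlation between the actual and predicted logs."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    if var_t == 0 or var_p == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_t * var_p)


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root mean square error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mean_absolute_error(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean of the absolute prediction errors."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


if __name__ == "__main__":
    # Made-up sand-fraction values along a short depth interval.
    actual = [0.1, 0.4, 0.6, 0.9]
    predicted = [0.2, 0.4, 0.5, 0.9]
    print(correlation_coefficient(actual, predicted))
    print(rmse(actual, predicted))
    print(mean_absolute_error(actual, predicted))
```

In the modular setting, these metrics would be evaluated on the concatenated predictions from the three zone-specific networks over the full depth range of the test well.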
