Aurelio Uncini

On the Stability and Generalization of Learning with Kernel Activation Functions

Mar 28, 2019

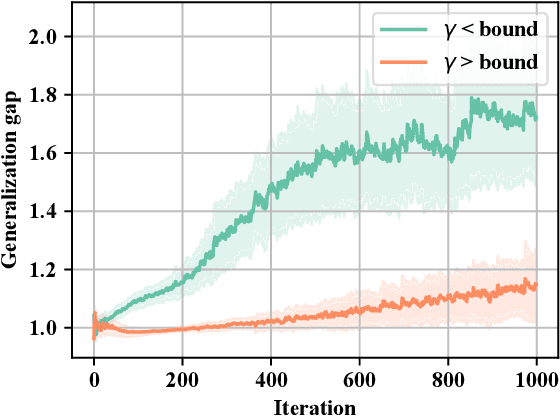

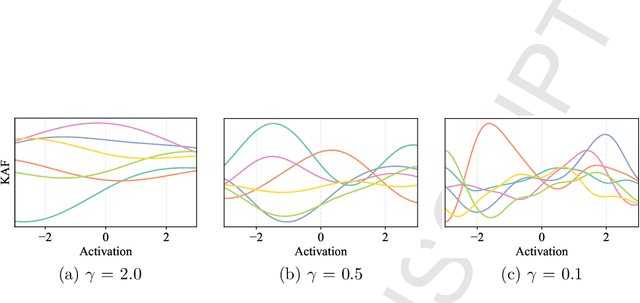

Abstract: In this brief we investigate the generalization properties of a recently proposed class of non-parametric activation functions, the kernel activation functions (KAFs). KAFs introduce additional parameters in the learning process in order to adapt nonlinearities individually on a per-neuron basis, exploiting a cheap kernel expansion of every activation value. While this increase in flexibility has been shown to provide significant improvements in practice, a theoretical proof of its generalization capability has not yet been addressed in the literature. Here, we leverage recent literature on the stability properties of non-convex models trained via stochastic gradient descent (SGD). By indirectly proving two key smoothness properties of the models under consideration, we prove that neural networks endowed with KAFs generalize well when trained with SGD for a finite number of steps. Interestingly, our analysis provides a guideline for selecting one of the hyper-parameters of the model, the bandwidth of the scalar Gaussian kernel. A short experimental evaluation validates the proof.

Widely Linear Kernels for Complex-Valued Kernel Activation Functions

Feb 06, 2019

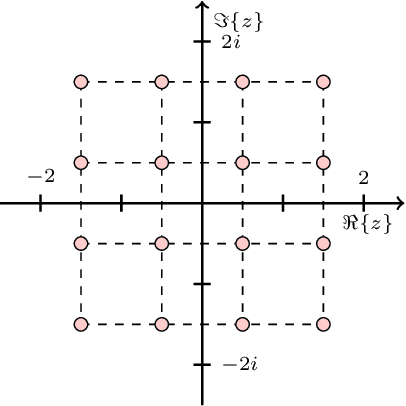

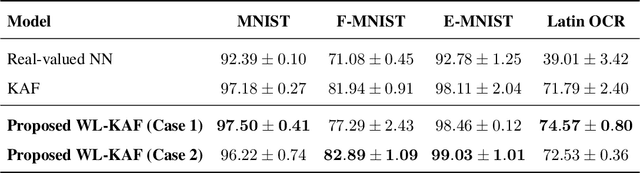

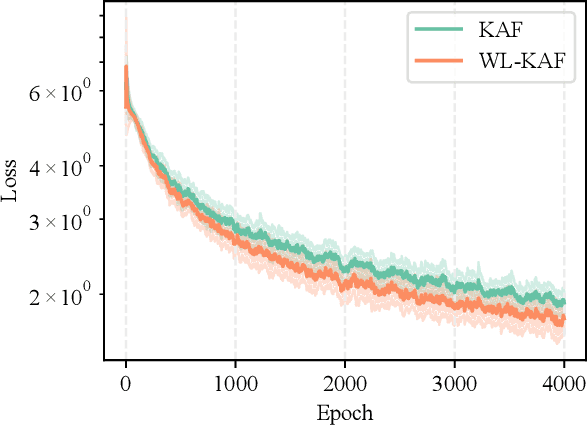

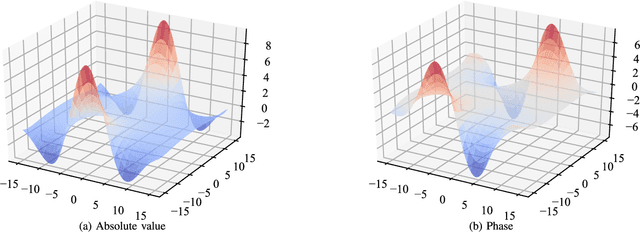

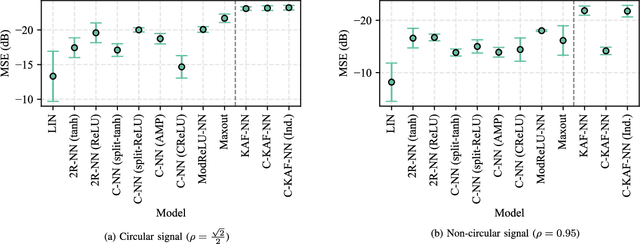

Abstract: Complex-valued neural networks (CVNNs) have been shown to be powerful nonlinear approximators when the input data can be properly modeled in the complex domain. One of the major challenges in scaling up CVNNs in practice is the design of complex activation functions. Recently, we proposed a novel framework for learning these activation functions neuron-wise in a data-dependent fashion, based on a cheap one-dimensional kernel expansion and the idea of kernel activation functions (KAFs). In this paper we argue that, despite its flexibility, this framework is still limited in the class of functions that can be modeled in the complex domain. We leverage the idea of widely linear complex kernels to extend the formulation, allowing for a richer expressiveness without an increase in the number of adaptable parameters. We test the resulting model on a set of complex-valued image classification benchmarks. Experimental results show that the resulting CVNNs can achieve higher accuracy while at the same time converging faster.

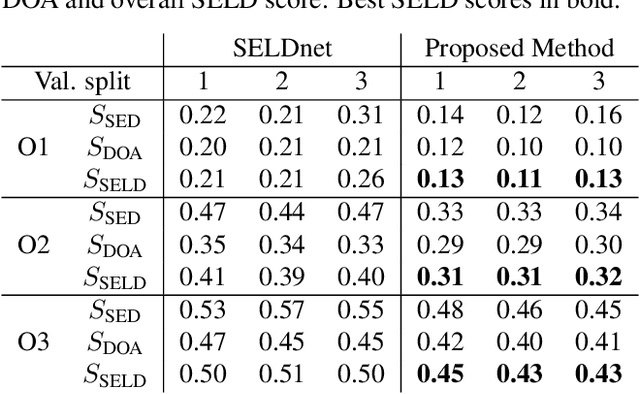

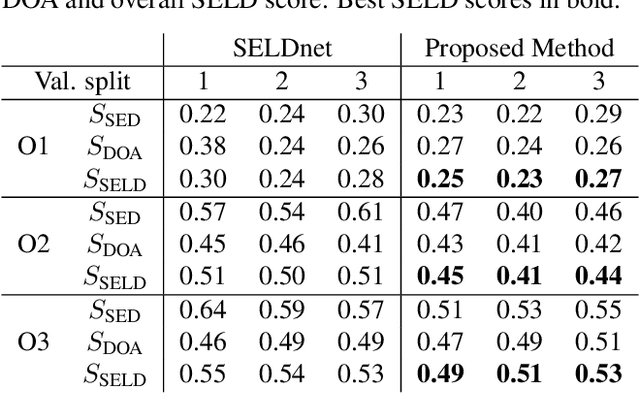

Quaternion Convolutional Neural Networks for Detection and Localization of 3D Sound Events

Dec 17, 2018

Abstract: Learning from data in the quaternion domain enables us to exploit internal dependencies of 4D signals and to treat them as a single entity. One class of data that is perfectly suited to quaternion-valued processing is 3D acoustic signals in their spherical harmonics decomposition. In this paper, we address the problem of localizing and detecting sound events in the spatial sound field by using quaternion-valued data processing. In particular, we consider the spherical harmonic components of the signals captured by a first-order ambisonic microphone and process them by using a quaternion convolutional neural network. Experimental results show that the proposed approach exploits the correlated nature of the ambisonic signals, thus improving accuracy in 3D sound event detection and localization.

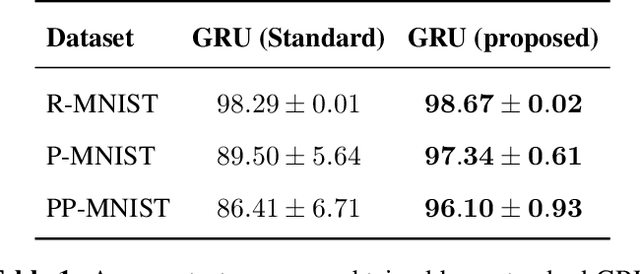

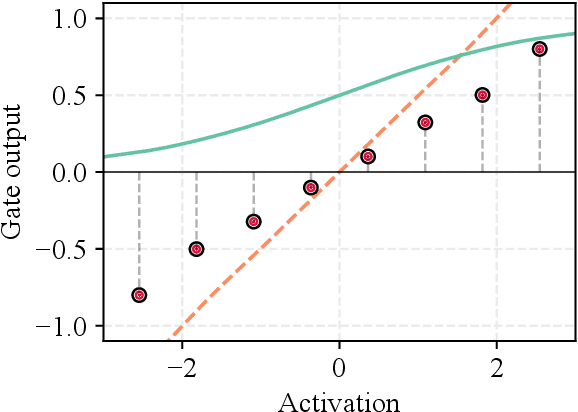

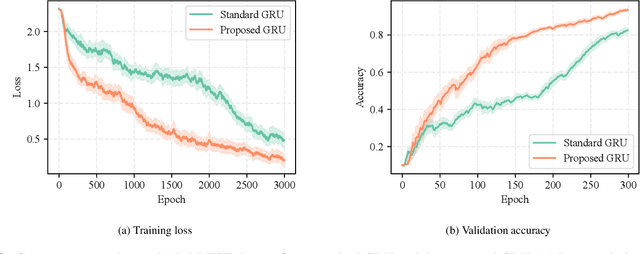

Recurrent Neural Networks with Flexible Gates using Kernel Activation Functions

Jul 11, 2018

Abstract: Gated recurrent neural networks have achieved remarkable results in the analysis of sequential data. Inside these networks, gates are used to control the flow of information, allowing the network to model even very long-term dependencies in the data. In this paper, we investigate whether the original gate equation (a linear projection followed by an element-wise sigmoid) can be improved. In particular, we design a more flexible architecture, with a small number of adaptable parameters, which is able to model a wider range of gating functions than the classical one. To this end, we replace the sigmoid function in the standard gate with a non-parametric formulation extending the recently proposed kernel activation function (KAF), with the addition of a residual skip-connection. A set of experiments on sequential variants of the MNIST dataset shows that the adoption of this novel gate improves accuracy with a negligible cost in terms of computational power and with a large speed-up in the number of training iterations.

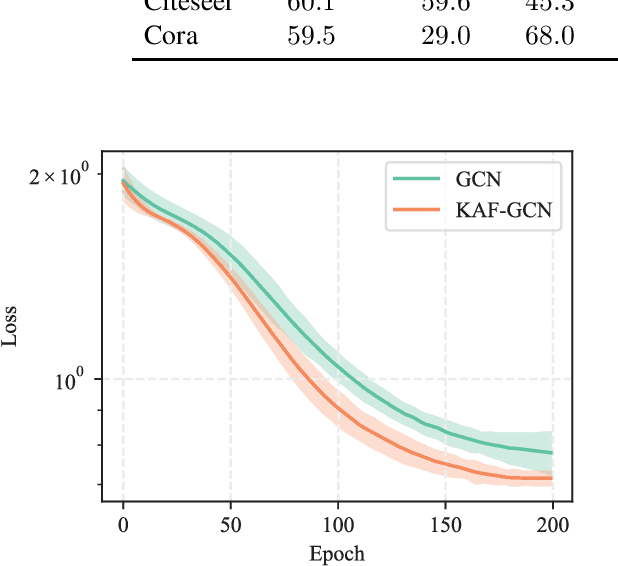

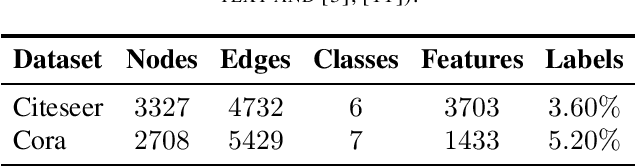

Improving Graph Convolutional Networks with Non-Parametric Activation Functions

Feb 26, 2018

Abstract: Graph neural networks (GNNs) are a class of neural networks that allow efficient inference on data associated with a graph structure, such as citation networks or knowledge graphs. While several variants of GNNs have been proposed, they only consider simple nonlinear activation functions in their layers, such as rectifiers or squashing functions. In this paper, we investigate the use of graph convolutional networks (GCNs) when combined with more complex activation functions that can adapt from the training data. More specifically, we extend the recently proposed kernel activation function, a non-parametric model which can be implemented easily, can be regularized with standard $\ell_p$-norm techniques, and is smooth over its entire domain. Our experimental evaluation shows that the proposed architecture can significantly improve over its baseline, while similar improvements cannot be obtained by simply increasing the depth or size of the original GCN.

Complex-valued Neural Networks with Non-parametric Activation Functions

Feb 22, 2018

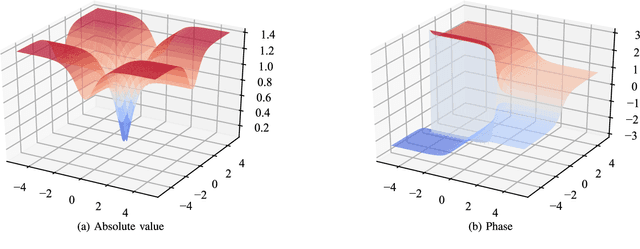

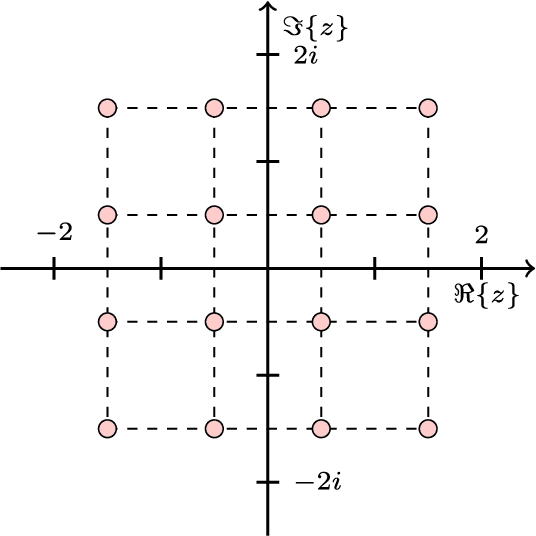

Abstract: Complex-valued neural networks (CVNNs) are a powerful modeling tool for domains where data can be naturally interpreted in terms of complex numbers. However, several analytical properties of the complex domain (e.g., holomorphicity) make the design of CVNNs a more challenging task than that of their real-valued counterparts. In this paper, we consider the problem of flexible activation functions (AFs) in the complex domain, i.e., AFs endowed with sufficient degrees of freedom to adapt their shape given the training data. While this problem has received considerable attention in the real case, very limited literature exists for CVNNs, where most activation functions are generally developed in a split fashion (i.e., by considering the real and imaginary parts of the activation separately) or with simple phase-amplitude techniques. Leveraging the recently proposed kernel activation functions (KAFs), and related advances in the design of complex-valued kernels, we propose the first fully complex, non-parametric activation function for CVNNs, which is based on a kernel expansion with a fixed dictionary that can be implemented efficiently on vectorized hardware. Several experiments on common use cases, including prediction and channel equalization, validate our proposal when compared to real-valued neural networks and CVNNs with fixed activation functions.

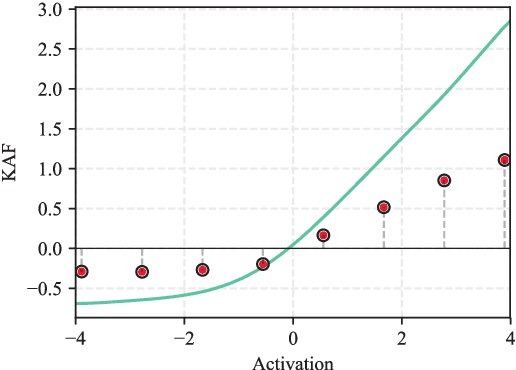

Kafnets: kernel-based non-parametric activation functions for neural networks

Nov 23, 2017

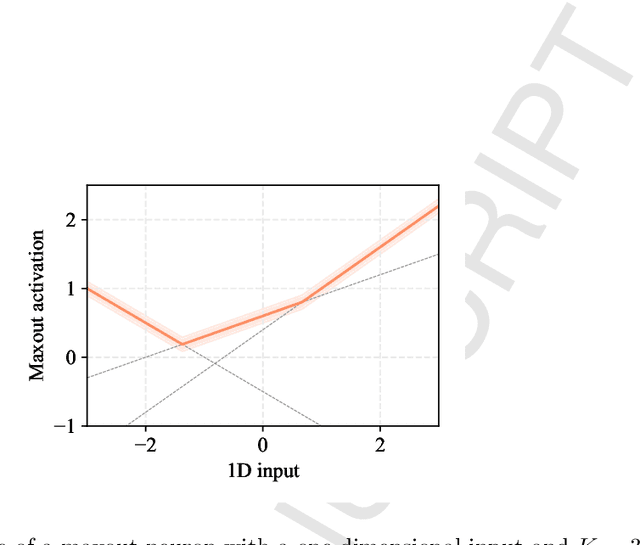

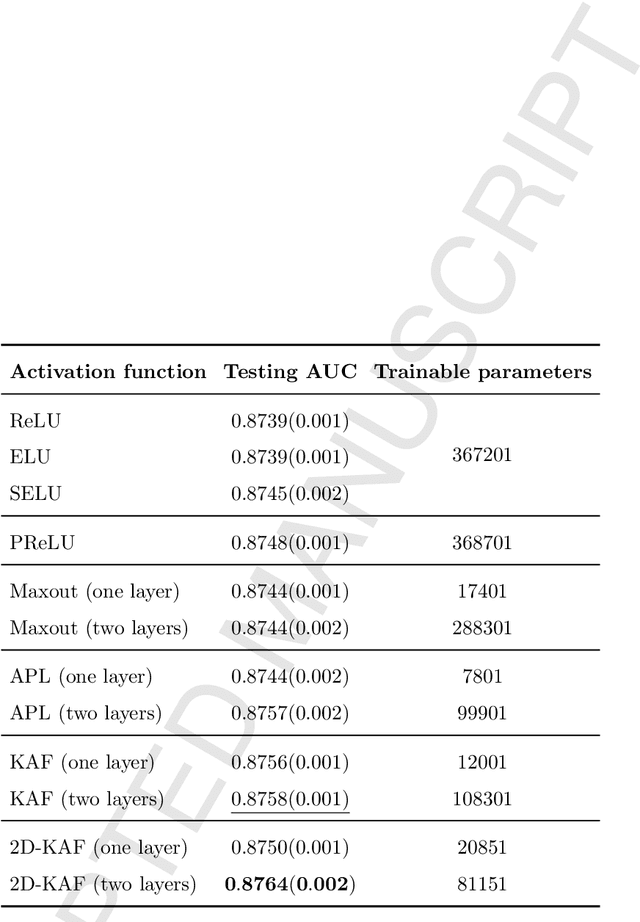

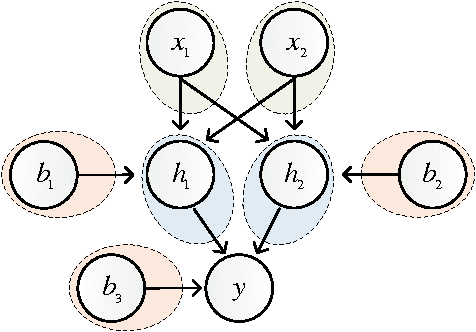

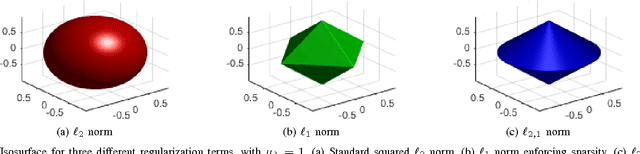

Abstract: Neural networks are generally built by interleaving (adaptable) linear layers with (fixed) nonlinear activation functions. To increase their flexibility, several authors have proposed methods for adapting the activation functions themselves, endowing them with varying degrees of flexibility. None of these approaches, however, has gained wide acceptance in practice, and research on this topic remains open. In this paper, we introduce a novel family of flexible activation functions that are based on an inexpensive kernel expansion at every neuron. Leveraging several properties of kernel-based models, we propose multiple variations for designing and initializing these kernel activation functions (KAFs), including a multidimensional scheme that nonlinearly combines information from different paths in the network. The resulting KAFs can approximate any mapping defined over a subset of the real line, either convex or nonconvex. Furthermore, they are smooth over their entire domain, linear in their parameters, and they can be regularized using any known scheme, including the use of $\ell_1$ penalties to enforce sparseness. To the best of our knowledge, no other known model satisfies all these properties simultaneously. In addition, we provide a relatively complete overview of alternative techniques for adapting the activation functions, which is currently lacking in the literature. A large set of experiments validates our proposal.
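
As a rough illustration of the kernel expansion this abstract describes, the sketch below evaluates one KAF as a weighted sum of kernels centred on a fixed set of dictionary points. The Gaussian kernel and the fixed dictionary are mentioned in other abstracts on this page; the function names, the five-point dictionary, and the bandwidth value `gamma` are assumptions chosen only for illustration and are not taken from the paper.

```python
import math


def gaussian_kernel(s, d, gamma):
    """Scalar Gaussian kernel between an activation s and a dictionary point d."""
    return math.exp(-gamma * (s - d) ** 2)


def kaf(s, alpha, dictionary, gamma):
    """Evaluate f(s) = sum_i alpha[i] * k(s, dictionary[i]).

    Only `alpha` would be adapted during training; the dictionary and the
    bandwidth `gamma` are kept fixed in this sketch (an assumption).
    """
    return sum(a * gaussian_kernel(s, d, gamma) for a, d in zip(alpha, dictionary))


# Hypothetical setup: five dictionary points on a uniform grid around zero.
dictionary = [-2.0, -1.0, 0.0, 1.0, 2.0]
gamma = 1.0
# One-hot mixing coefficients select the kernel centred at 0.
alpha = [0.0, 0.0, 1.0, 0.0, 0.0]

print(kaf(0.0, alpha, dictionary, gamma))  # prints 1.0, since exp(0) = 1
```

Because the output is a plain weighted sum, the function is linear in `alpha`, which is the property that lets standard penalties such as $\ell_1$ be applied directly to the mixing coefficients.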

Learning activation functions from data using cubic spline interpolation

May 11, 2017

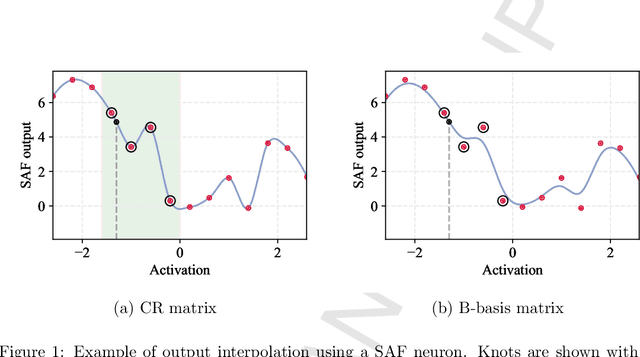

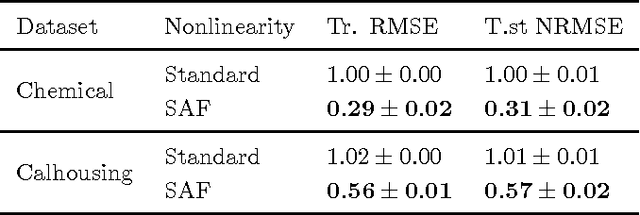

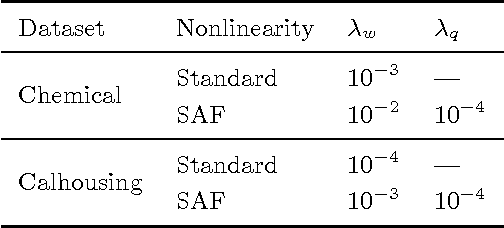

Abstract: Neural networks require a careful design in order to perform properly on a given task. In particular, selecting a good activation function (possibly in a data-dependent fashion) is a crucial step, which remains an open problem in the research community. Despite a large number of investigations, most current implementations simply select one fixed function from a small set of candidates, which is not adapted during training, and is shared among all neurons throughout the different layers. However, neither of these two assumptions can be assumed optimal in practice. In this paper, we present a principled way to have data-dependent adaptation of the activation functions, which is performed independently for each neuron. This is achieved by leveraging past and present advances on cubic spline interpolation, allowing for local adaptation of the functions around their regions of use. The resulting algorithm is relatively cheap to implement, and overfitting is counterbalanced by the inclusion of a novel damping criterion, which penalizes unwanted oscillations from a predefined shape. Experimental results validate the proposal over two well-known benchmarks.

Effective Blind Source Separation Based on the Adam Algorithm

Sep 26, 2016

Abstract: In this paper, we derive a modified InfoMax algorithm for the solution of Blind Signal Separation (BSS) problems by using advanced stochastic methods. The proposed approach is based on a novel stochastic optimization method known as the Adaptive Moment Estimation (Adam) algorithm. The proposed BSS solution can benefit from the excellent properties of the Adam approach. In order to derive the new learning rule, the Adam algorithm is introduced in the derivation of the cost function maximization in the standard InfoMax algorithm. The natural gradient adaptation is also considered. Finally, some experimental results show the effectiveness of the proposed approach.

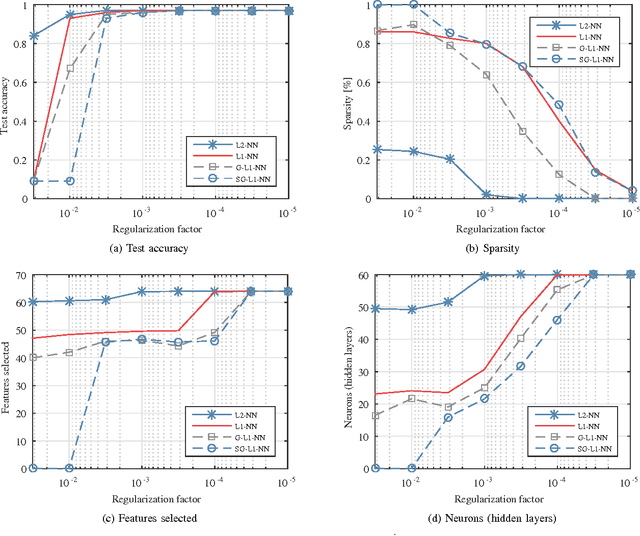

Group Sparse Regularization for Deep Neural Networks

Jul 02, 2016

Abstract: In this paper, we consider the joint task of simultaneously optimizing (i) the weights of a deep neural network, (ii) the number of neurons for each hidden layer, and (iii) the subset of active input features (i.e., feature selection). While these problems are generally dealt with separately, we present a simple regularized formulation that solves all three of them in parallel, using standard optimization routines. Specifically, we extend the group Lasso penalty (originated in the linear regression literature) in order to impose group-level sparsity on the network's connections, where each group is defined as the set of outgoing weights from a unit. Depending on the specific case, the weights can be related to an input variable, to a hidden neuron, or to a bias unit, thus performing simultaneously all the aforementioned tasks in order to obtain a compact network. We perform an extensive experimental evaluation, by comparing with classical weight decay and Lasso penalties. We show that a sparse version of the group Lasso penalty is able to achieve competitive performance, while at the same time resulting in extremely compact networks with a smaller number of input features. We evaluate both on a toy dataset for handwritten digit recognition, and on multiple realistic large-scale classification problems.
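
The grouping described in this abstract can be sketched as follows: each unit's outgoing weights form one group, and the penalty sums the Euclidean norms of the groups so that whole units can be driven to zero together. This is a minimal sketch under stated assumptions, not the paper's implementation: the function names are hypothetical, the square-root-of-group-size scaling is the common group Lasso convention and is assumed here, and the "sparse" variant is assumed to simply add an $\ell_1$ term with equal weight.

```python
import math


def group_lasso(weights):
    """Group Lasso penalty where weights[i] holds the outgoing weights of unit i.

    Each row is one group; groups are scaled by sqrt(group size), a common
    convention that is assumed here rather than taken from the abstract.
    """
    return sum(
        math.sqrt(len(row)) * math.sqrt(sum(w * w for w in row))
        for row in weights
    )


def sparse_group_lasso(weights):
    """Group Lasso plus an l1 term (assumed form of the 'sparse version')."""
    l1 = sum(abs(w) for row in weights for w in row)
    return group_lasso(weights) + l1


# Hypothetical layer with two units: the second unit is fully switched off,
# so its group contributes nothing to either penalty.
W = [[3.0, 4.0], [0.0, 0.0]]
print(group_lasso(W))
print(sparse_group_lasso(W))
```

In a training loop, a penalty of this kind would be scaled by a regularization coefficient and added to the loss; that coefficient is omitted here for brevity.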
