Astrid Rakow

Framing Relevance for Safety-Critical Autonomous Systems

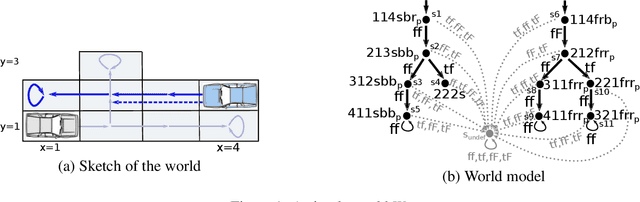

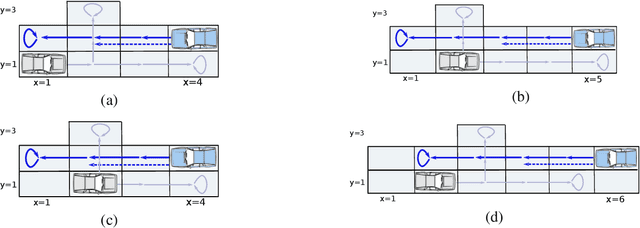

Jul 23, 2023

Abstract: We are in the process of building complex, highly autonomous systems that have built-in beliefs, perceive their environment, and exchange information. These systems construct their respective world views and, based on them, plan their future manoeuvres, i.e., they choose their actions to achieve their goals based on their predictions of the possible futures. Usually these systems face an overwhelming flood of information from a variety of sources, much of which is not relevant. The goal of our work is to develop a formal approach to determine what is relevant for a safety-critical autonomous system during its current mission, i.e., what information suffices to build an appropriate world view to accomplish its mission goals.

A Doxastic Characterisation of Autonomous Decisive Systems

Sep 28, 2022

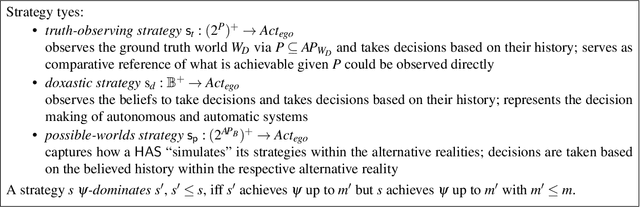

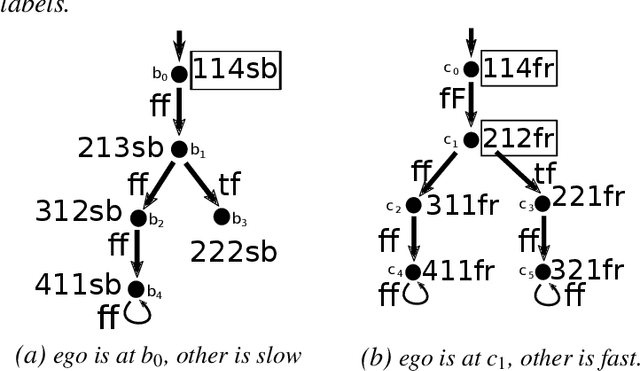

Abstract: A highly autonomous system (HAS) has to assess the situation it is in and derive beliefs, based on which it decides what to do next. The beliefs are based not solely on the observations the HAS has made so far, but also on general insights about the world in which the HAS operates. These insights have either been built into the HAS during design or are provided by trusted sources during its mission. Although its beliefs may be imprecise and flawed, the HAS has to extrapolate the possible futures in order to evaluate the consequences of its actions and then make its decisions autonomously. In this paper, we formalize an autonomous decisive system as a system that always chooses the actions it currently believes to be best. We show that it can be checked whether an autonomous decisive system can be built, given an application domain, the dynamically changing knowledge base, and a list of LTL mission goals. Moreover, we can synthesize a belief formation for an autonomous decisive system. For the formal characterization, we use a doxastic framework for safety-critical HASs in which the belief formation supports the HAS's extrapolation.

* In Proceedings FMAS2022 ASYDE2022, arXiv:2209.13181
