Ashkan Ebadi

Interpretable Link Prediction in AI-Driven Cancer Research: Uncovering Co-Authorship Patterns

Dec 19, 2025

Abstract:Artificial intelligence (AI) is transforming cancer diagnosis and treatment. The intricate nature of this disease necessitates the collaboration of diverse stakeholders with varied expertise to ensure the effectiveness of cancer research. Despite its importance, forming effective interdisciplinary research teams remains challenging. Understanding and predicting collaboration patterns can help researchers, organizations, and policymakers optimize resources and foster impactful research. We examined co-authorship networks as a proxy for collaboration within AI-driven cancer research. Using 7,738 publications (2000-2017) from Scopus, we constructed 36 overlapping co-authorship networks representing new, persistent, and discontinued collaborations. We engineered both attribute-based and structure-based features and built four machine learning classifiers. Model interpretability was assessed using Shapley Additive Explanations (SHAP). Random forest achieved the highest recall for all three types of examined collaborations. The discipline similarity score emerged as a crucial factor, positively affecting new and persistent patterns while negatively impacting discontinued collaborations. Additionally, high productivity and seniority were positively associated with discontinued links. Our findings can guide the formation of effective research teams, enhance interdisciplinary cooperation, and inform strategic policy decisions.
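
The abstract does not list which structure-based features were engineered. As a hedged illustration only, the sketch below computes four neighbourhood scores that are standard in the link-prediction literature (common neighbours, Jaccard coefficient, Adamic-Adar index, preferential attachment) for an author pair in a toy co-authorship graph. The function names and toy data are hypothetical, and the paper's actual feature set may differ.

```python
import math
from itertools import combinations


def build_coauthor_graph(papers):
    """Build an undirected co-authorship graph as {author: set_of_coauthors}."""
    graph = {}
    for authors in papers:
        unique = sorted(set(authors))
        for a in unique:
            graph.setdefault(a, set())
        # Every pair of authors on the same paper becomes an edge
        for a, b in combinations(unique, 2):
            graph[a].add(b)
            graph[b].add(a)
    return graph


def structural_features(graph, u, v):
    """Neighbourhood-based link-prediction scores for two distinct authors u and v."""
    nu, nv = graph.get(u, set()), graph.get(v, set())
    common = nu & nv
    union = nu | nv
    return {
        "common_neighbours": len(common),
        "jaccard": len(common) / len(union) if union else 0.0,
        # Adamic-Adar: shared co-authors with few collaborators weigh more.
        # A common neighbour of distinct u and v is linked to both, so its
        # degree is >= 2 and log(degree) > 0.
        "adamic_adar": sum(1.0 / math.log(len(graph[w])) for w in common),
        "preferential_attachment": len(nu) * len(nv),
    }


if __name__ == "__main__":
    # Hypothetical toy corpus: each inner list is one paper's author list
    papers = [["A", "B", "C"], ["B", "D"], ["C", "D"]]
    g = build_coauthor_graph(papers)
    print(structural_features(g, "A", "D"))
```

Scores like these are computed on an earlier network snapshot and used to predict whether the pair forms (or keeps, or drops) a link in a later one; attribute-based features such as the discipline similarity score would be appended alongside them.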

An Explainable Hybrid AI Framework for Enhanced Tuberculosis and Symptom Detection

Oct 21, 2025

Abstract:Tuberculosis remains a critical global health issue, particularly in resource-limited and remote areas. Early detection is vital for treatment, yet the lack of skilled radiologists underscores the need for artificial intelligence (AI)-driven screening tools. Developing reliable AI models is challenging due to the necessity for large, high-quality datasets, which are costly to obtain. To tackle this, we propose a teacher-student framework that enhances both disease and symptom detection on chest X-rays by integrating two supervised heads and a self-supervised head. Our model achieves an accuracy of 98.85% for distinguishing between COVID-19, tuberculosis, and normal cases, and a macro-F1 score of 90.09% for multilabel symptom detection, significantly outperforming baselines. The explainability assessments also show that the model bases its predictions on relevant anatomical features, demonstrating promise for deployment in clinical screening and triage settings.
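
The macro-F1 figure above is a multilabel metric. A minimal sketch of how it is typically computed, assuming each chest X-ray carries a 0/1 indicator per symptom: F1 is computed separately for each symptom label and then averaged with equal weight, so rare symptoms count as much as common ones. The function name and toy labels are hypothetical; this is not the authors' evaluation code.

```python
def macro_f1(y_true, y_pred):
    """Macro-averaged F1 for multilabel 0/1 indicator rows (one row per image)."""
    n_labels = len(y_true[0])
    per_label = []
    for j in range(n_labels):
        tp = sum(1 for t, p in zip(y_true, y_pred) if t[j] == 1 and p[j] == 1)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t[j] == 0 and p[j] == 1)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t[j] == 1 and p[j] == 0)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); a label never present nor predicted scores 0 here
        per_label.append(2 * tp / denom if denom else 0.0)
    # Unweighted mean: each symptom label counts equally regardless of frequency
    return sum(per_label) / n_labels


if __name__ == "__main__":
    # Hypothetical toy data: 3 images x 3 symptom labels
    y_true = [[1, 0, 1], [0, 1, 0], [1, 1, 0]]
    y_pred = [[1, 0, 0], [0, 1, 0], [1, 0, 0]]
    print(round(macro_f1(y_true, y_pred), 4))
```

How a label with no positives and no predictions is scored is a convention; this sketch assigns it 0, which lowers the average.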

Decoding Funded Research: Comparative Analysis of Topic Models and Uncovering the Effect of Gender and Geographic Location

Oct 21, 2025

Abstract:Optimizing national scientific investment requires a clear understanding of evolving research trends and the demographic and geographical forces shaping them, particularly in light of commitments to equity, diversity, and inclusion. This study addresses this need by analyzing 18 years (2005-2022) of research proposals funded by the Natural Sciences and Engineering Research Council of Canada (NSERC). We conducted a comprehensive comparative evaluation of three topic modelling approaches: Latent Dirichlet Allocation (LDA), Structural Topic Modelling (STM), and BERTopic. We also introduced a novel algorithm, named COFFEE, designed to enable robust covariate effect estimation for BERTopic. This advancement addresses a significant gap, as BERTopic lacks a native function for covariate analysis, unlike the probabilistic STM. Our findings highlight that while all models effectively delineate core scientific domains, BERTopic outperformed the other models by consistently identifying more granular, coherent, and emergent themes, such as the rapid expansion of artificial intelligence. Additionally, the covariate analysis, powered by COFFEE, confirmed distinct provincial research specializations and revealed consistent gender-based thematic patterns across various scientific disciplines. These insights offer a robust empirical foundation for funding organizations to formulate more equitable and impactful funding strategies, thereby enhancing the effectiveness of the scientific ecosystem.

Predicting Star Scientists in the Field of Artificial Intelligence: A Machine Learning Approach

Jul 18, 2024

Abstract:Star scientists are highly influential researchers who have made significant contributions to their field, gained widespread recognition, and often attracted substantial research funding. They are critical for the advancement of science and innovation, and they have a significant influence on the transfer of knowledge and technology to industry. Identifying potential star scientists before their performance becomes outstanding is important for recruitment, collaboration, networking, and research funding decisions. Using machine learning techniques, this study proposes a model to predict star scientists in the field of artificial intelligence while highlighting features related to their success. Our results confirm that rising stars follow different patterns from their non-rising-star counterparts in almost all early-career features. We also found that certain features, such as gender and ethnic diversity, play important roles in scientific collaboration and can significantly impact an author's career development and success. The most important features in predicting star scientists in the field of artificial intelligence were the number of articles, group discipline diversity, and weighted degree centrality. The proposed approach offers valuable insights for researchers, practitioners, and funding agencies interested in identifying and supporting talented researchers.
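
Weighted degree centrality is one of the three most important features named above. A minimal sketch, assuming edges in the co-authorship network are weighted by the number of papers two authors co-wrote (the abstract does not specify the weighting): an author's weighted degree is the sum of the weights of all edges incident to them. The function name and data are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def weighted_degree(papers):
    """Weighted degree (node strength) per author in a co-authorship network.

    Assumption: the weight of edge (a, b) is the number of papers a and b co-wrote.
    """
    edge_weight = defaultdict(int)
    strength = {}
    for authors in papers:
        unique = sorted(set(authors))  # sorted so each pair has one canonical key
        for a in unique:
            strength.setdefault(a, 0)  # sole authors still appear, with strength 0
        for a, b in combinations(unique, 2):
            edge_weight[(a, b)] += 1
    # Each edge contributes its weight to both endpoints
    for (a, b), w in edge_weight.items():
        strength[a] += w
        strength[b] += w
    return strength


if __name__ == "__main__":
    # Hypothetical early-career publication records
    papers = [["A", "B"], ["A", "B", "C"], ["C"]]
    print(weighted_degree(papers))
```

Unlike plain degree, which counts distinct co-authors, this measure rewards repeated collaboration with the same people.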

Empowering Tuberculosis Screening with Explainable Self-Supervised Deep Neural Networks

Jun 19, 2024

Abstract:Tuberculosis persists as a global health crisis, especially in resource-limited populations and remote regions, with more than 10 million individuals newly infected annually. It stands as a stark symbol of inequity in public health. Tuberculosis affects roughly a quarter of the global population, with eight countries accounting for two-thirds of all tuberculosis infections. Although a severe disease, tuberculosis is both curable and manageable; however, early detection and screening of at-risk populations are imperative. Chest X-ray is the predominant imaging technique used in tuberculosis screening, but X-ray screening requires skilled radiologists, a resource that is often scarce, particularly in remote regions with limited resources. Consequently, there is a pressing need for artificial intelligence (AI)-powered systems to support clinicians and healthcare providers in rapid screening. However, training a reliable AI model requires large-scale, high-quality data, which can be difficult and costly to acquire. Inspired by these challenges, in this work, we introduce an explainable self-supervised self-train learning network tailored for tuberculosis case screening. The network achieves an overall accuracy of 98.14% and demonstrates high recall and precision of 95.72% and 99.44%, respectively, in identifying tuberculosis cases, effectively capturing clinically significant features.

Proximity Matters: Analyzing the Role of Geographical Proximity in Shaping AI Research Collaborations

Jun 10, 2024

Abstract:The role of geographical proximity in facilitating inter-regional or inter-organizational collaborations has been studied thoroughly in recent years. However, the effect of geographical proximity on forming scientific collaborations at the individual level still needs to be addressed. Using publication data in the field of artificial intelligence from 2001 to 2019, this work studies the effect of geographical proximity on the likelihood of researchers forming future scientific collaborations. In addition, the interaction between geographical and network proximities is examined to determine whether network proximity can substitute for geographical proximity in encouraging long-distance scientific collaborations. Results from conventional and machine learning techniques suggest that geographical distance impedes scientific collaboration at the individual level despite the tremendous improvements in transportation and communication technologies over recent decades. Moreover, our findings show that the effect of network proximity on the likelihood of scientific collaboration increases with geographical distance, implying that network proximity can act as a substitute for geographical proximity.
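
A hedged sketch of the two ingredients this study relates: the geographical distance between two researchers, computed here with the haversine great-circle formula on affiliation coordinates, and an interaction term between distance and network proximity. Defining network proximity as the inverse shortest-path length and log-transforming the distance are assumptions made for illustration; the abstract does not state how either quantity was measured, and all names are hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def proximity_features(distance_km, path_length):
    """Hypothetical pair features: log-distance, network proximity, and their interaction.

    Assumption: network proximity = 1 / shortest-path length in the co-authorship
    network (math.inf for disconnected pairs gives 0.0).
    """
    log_dist = math.log1p(distance_km)
    net_prox = 1.0 / path_length if path_length > 0 else 0.0
    return {
        "log_distance": log_dist,
        "network_proximity": net_prox,
        "interaction": log_dist * net_prox,
    }


if __name__ == "__main__":
    # Hypothetical affiliation coordinates (degrees) for two researchers
    d = haversine_km(45.5017, -73.5673, 45.4215, -75.6972)
    print(round(d, 1), proximity_features(d, 2))
```

In a collaboration model such as a logistic regression on these features, a positive coefficient on the interaction term would mean the effect of network proximity grows with distance, which is the pattern the abstract reports.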

COVID-Net USPro: An Open-Source Explainable Few-Shot Deep Prototypical Network to Monitor and Detect COVID-19 Infection from Point-of-Care Ultrasound Images

Jan 04, 2023

Abstract:As Coronavirus Disease 2019 (COVID-19) continues to affect many aspects of life and global healthcare systems, the adoption of rapid and effective screening methods to prevent further spread of the virus and lessen the burden on healthcare providers is a necessity. As a cheap and widely accessible medical imaging modality, point-of-care ultrasound (POCUS) allows radiologists to identify symptoms and assess severity through visual inspection of chest ultrasound images. Combined with recent advancements in computer science, applications of deep learning techniques in medical image analysis have shown promising results, demonstrating that artificial intelligence-based solutions can accelerate the diagnosis of COVID-19 and lower the burden on healthcare professionals. However, the scarcity of large, well-annotated datasets poses a challenge in building effective deep neural networks for novel diseases and pandemics. Motivated by this, we present COVID-Net USPro, an explainable few-shot deep prototypical network that monitors and detects COVID-19 positive cases with high precision and recall from very few ultrasound images. COVID-Net USPro achieves 99.65% overall accuracy, 99.7% recall, and 99.67% precision for COVID-19 positive cases when trained with only 5 shots. The analytic pipeline and results were verified by our contributing clinician, who has extensive experience in POCUS interpretation, ensuring that the network makes decisions based on actual patterns.
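
The classification step of a generic prototypical network is short enough to sketch. Assuming embeddings have already been produced by a trained encoder (toy 2-D vectors stand in for them here), each class prototype is the mean of that class's K support embeddings (K = 5 in the 5-shot setting above), and a query is assigned to the class whose prototype is nearest in squared Euclidean distance, with a softmax over negative distances giving class probabilities. This illustrates the standard technique, not COVID-Net USPro's code; the function names and data are hypothetical.

```python
import math


def class_prototypes(support):
    """support: {label: [embedding, ...]}; each prototype is the mean support embedding."""
    protos = {}
    for label, vecs in support.items():
        dim = len(vecs[0])
        protos[label] = [sum(v[i] for v in vecs) / len(vecs) for i in range(dim)]
    return protos


def squared_euclidean(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def classify(query, protos):
    """Softmax over negative squared distances to each prototype; returns (label, probs)."""
    labels = list(protos)
    logits = [-squared_euclidean(query, protos[c]) for c in labels]
    m = max(logits)  # subtract the max logit for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    probs = {c: e / total for c, e in zip(labels, exps)}
    return max(probs, key=probs.get), probs


if __name__ == "__main__":
    # Hypothetical 2-shot support set of toy embeddings
    support = {
        "covid": [[1.0, 1.0], [3.0, 1.0]],
        "normal": [[-1.0, -1.0], [-3.0, -1.0]],
    }
    protos = class_prototypes(support)
    print(classify([1.5, 0.5], protos))
```

Because only the prototypes depend on the labelled examples, a new class can be added from a handful of images without retraining the classifier head, which is what makes this design attractive when annotated data are scarce.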

A Trustworthy Framework for Medical Image Analysis with Deep Learning

Dec 06, 2022

Abstract:Computer vision and machine learning are playing an increasingly important role in computer-assisted diagnosis; however, the application of deep learning to medical imaging has challenges in data availability and data imbalance, and it is especially important that models for medical imaging are built to be trustworthy. Therefore, we propose TRUDLMIA, a trustworthy deep learning framework for medical image analysis, which adopts a modular design, leverages self-supervised pre-training, and utilizes a novel surrogate loss function. Experimental evaluations indicate that models generated from the framework are both trustworthy and high-performing. It is anticipated that the framework will support researchers and clinicians in advancing the use of deep learning for dealing with public health crises including COVID-19.

On the evolution of research in hypersonics: application of natural language processing and machine learning

Aug 17, 2022

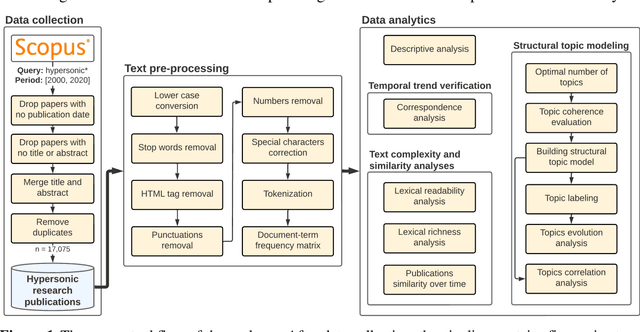

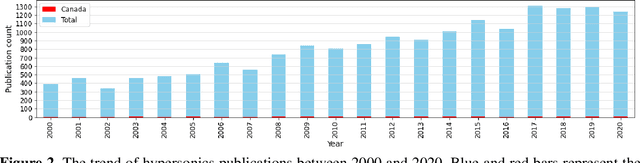

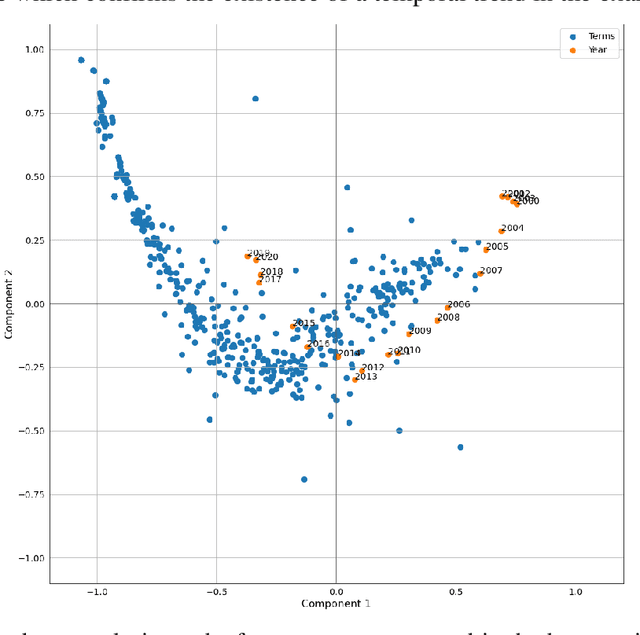

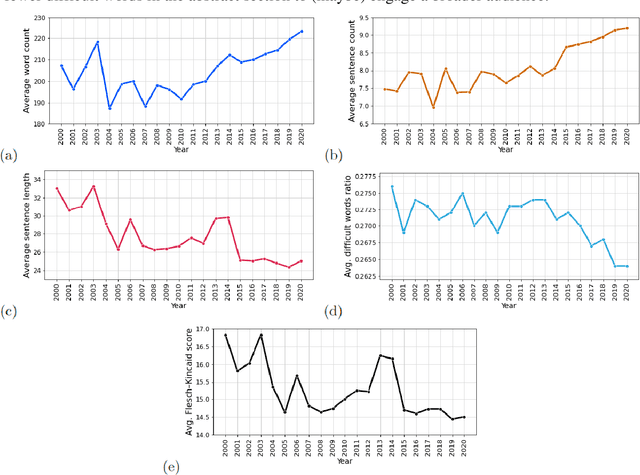

Abstract:Research and development in hypersonics have progressed significantly in recent years, with a growing number of military and commercial applications being demonstrated. Public and private organizations in several countries have been investing in hypersonics, aiming to overtake their competitors and to secure or improve strategic advantage and deterrence. For these organizations, being able to identify emerging technologies in a timely and reliable manner is paramount. Recent advances in information technology have made it possible to analyze large amounts of data, extract hidden patterns, and provide decision-makers with new insights. In this study, we focus on scientific publications about hypersonics from 2000 to 2020 and employ natural language processing and machine learning to characterize the research landscape by identifying 12 key latent research themes and analyzing their temporal evolution. Our publication similarity analysis revealed patterns indicative of cycles across two decades of research. The study offers a comprehensive analysis of the research field; moreover, because the research themes are extracted algorithmically, the analysis removes subjectivity from the exercise and enables consistent comparisons between topics and between time intervals.

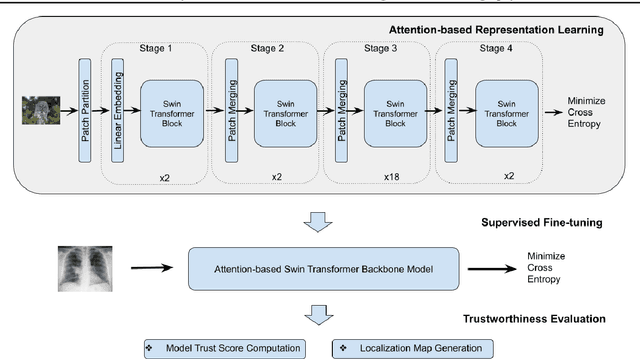

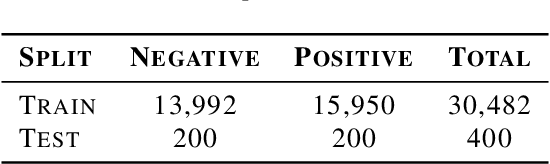

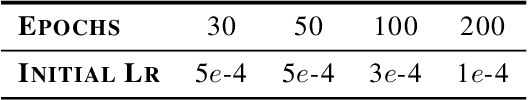

Towards Trustworthy Healthcare AI: Attention-Based Feature Learning for COVID-19 Screening With Chest Radiography

Jul 19, 2022

Abstract:Building trustworthy AI models is important, especially in regulated areas such as healthcare. In tackling COVID-19, previous work has used convolutional neural networks as the backbone architecture; these have been shown to be prone to over-caution and overconfidence in decision-making, rendering them less trustworthy, a crucial flaw in the context of medical imaging. In this study, we propose a feature learning approach using Vision Transformers, which use an attention-based mechanism, and examine the representation learning capability of Transformers as a new backbone architecture for medical imaging. Through the task of classifying COVID-19 chest radiographs, we investigate whether generalization capabilities benefit solely from Vision Transformers' architectural advances. Quantitative and qualitative evaluations of the models' trustworthiness are conducted through "trust score" computation and a visual explainability technique. We conclude that the attention-based feature learning approach is promising for building trustworthy deep learning models for healthcare.