Arghya Basak

Multi Scale Graph Wavenet for Wind Speed Forecasting

Oct 01, 2021

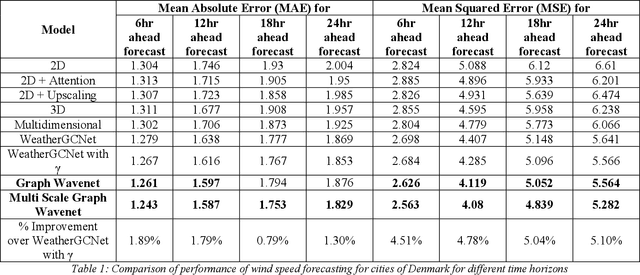

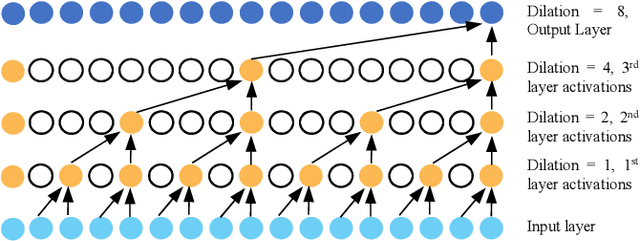

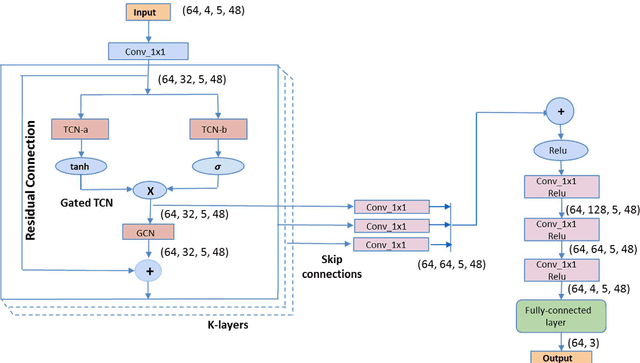

Abstract: Geometric deep learning has gained tremendous attention in both academia and industry due to its inherent capability of representing arbitrary structures. With the exponential increase in interest in renewable sources of energy, especially wind energy, accurate wind speed forecasting has become very important. In this paper, we propose a novel deep learning architecture, Multi Scale Graph Wavenet, for wind speed forecasting. It is based on a graph convolutional neural network and captures both spatial and temporal relationships in multivariate time series weather data. We took particular inspiration from dilated convolutions, skip connections and the inception network to capture temporal relationships, and from graph convolutional networks to capture spatial relationships in the data. We conducted experiments on real wind speed data measured in different cities in Denmark and compared our results with state-of-the-art baseline models. Our architecture outperformed the state-of-the-art methods on wind speed forecasting for multiple forecast horizons by 4-5%.
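The two building blocks named in the abstract can be illustrated with a minimal sketch. It is not the paper's architecture: the function names `dilated_causal_conv` and `graph_mix` are hypothetical, there are no learned weights, skip connections or inception branches, and the graph step is a plain row-normalised neighbourhood average with self-loops. It only shows how a dilated kernel widens the temporal receptive field and how an adjacency matrix mixes information between stations.

```python
def dilated_causal_conv(series, kernel, dilation):
    """y[t] = sum_k kernel[k] * series[t - k * dilation], zero-padded on the left."""
    out = []
    for t in range(len(series)):
        acc = 0.0
        for k, wk in enumerate(kernel):
            idx = t - k * dilation
            if idx >= 0:  # causal: only the current and past time steps contribute
                acc += wk * series[idx]
        out.append(acc)
    return out


def graph_mix(features, adjacency):
    """One propagation step: average each node's series with its neighbours'.

    features[i] is the time series of node i; adjacency[i][j] is the edge weight.
    A self-loop of weight 1 is added so each node keeps its own signal.
    """
    n_steps = len(features[0])
    mixed = []
    for i, row in enumerate(adjacency):
        weights = list(row)
        weights[i] += 1.0  # self-loop
        total = sum(weights)
        mixed.append([
            sum(wj * features[j][t] for j, wj in enumerate(weights)) / total
            for t in range(n_steps)
        ])
    return mixed


# Two hypothetical stations with five wind-speed readings each.
stations = [[1.0, 2.0, 3.0, 4.0, 5.0], [5.0, 4.0, 3.0, 2.0, 1.0]]
adjacency = [[0.0, 1.0], [1.0, 0.0]]

# "Multi scale" here simply means applying the same kernel at several dilations.
scales = [[dilated_causal_conv(s, [0.5, 0.5], d) for s in stations] for d in (1, 2)]
spatially_mixed = [graph_mix(scale, adjacency) for scale in scales]
```

With dilation 2 and a kernel of length 2, each output depends on the readings at `t` and `t - 2`, so stacking layers with growing dilation covers long histories with few parameters.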

Universal Adversarial Attack on Deep Learning Based Prognostics

Sep 15, 2021

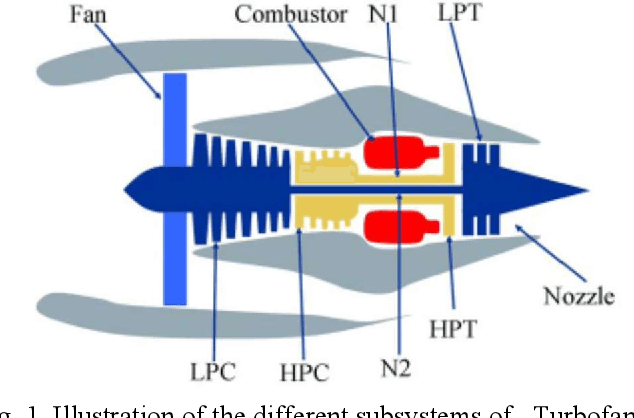

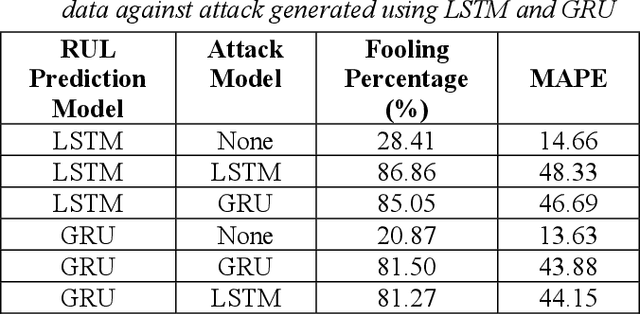

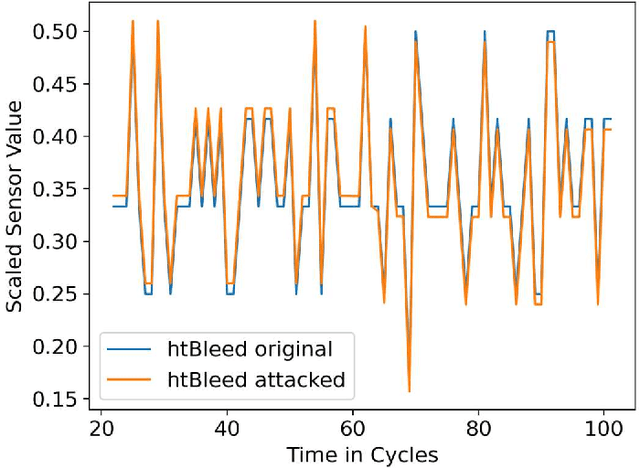

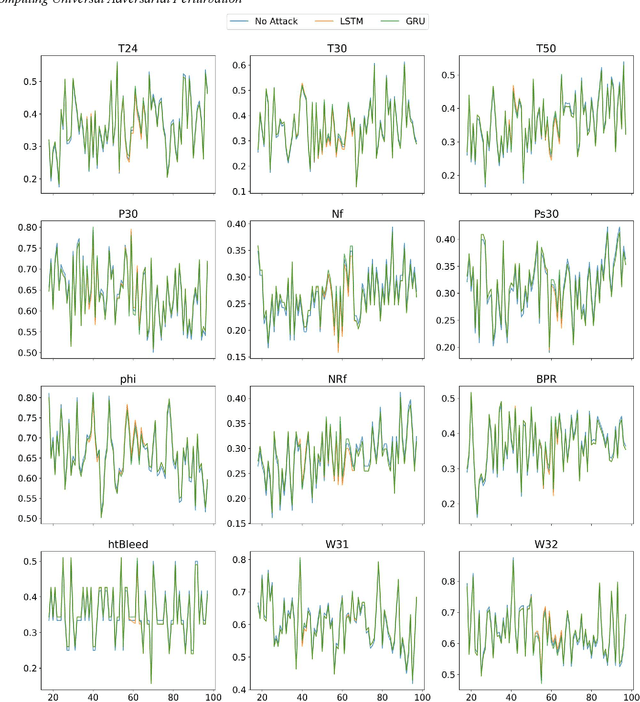

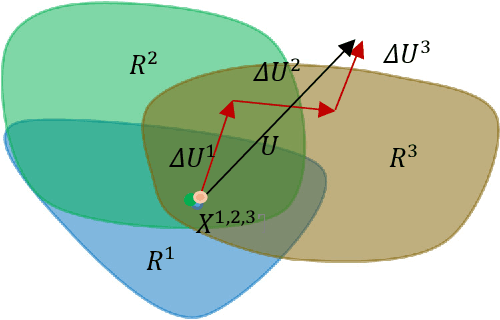

Abstract: Deep learning-based time series models are being extensively utilized in engineering and manufacturing industries for process control and optimization, asset monitoring, and diagnostic and predictive maintenance. These models have shown great improvement in the prediction of the remaining useful life (RUL) of industrial equipment but suffer from inherent vulnerability to adversarial attacks. These attacks can be easily exploited and can lead to catastrophic failure of critical industrial equipment. In general, a different adversarial perturbation is computed for each instance of the input data. This is, however, difficult for the attacker to achieve in real time due to the higher computational requirement and the lack of uninterrupted access to the input data. Hence, we present the concept of universal adversarial perturbation, a special imperceptible noise that fools regression-based RUL prediction models. Attackers can easily utilize universal adversarial perturbations for real-time attacks, since neither continuous access to input data nor repeated computation of adversarial perturbations is required. We evaluate the effect of universal adversarial attacks using the NASA turbofan engine dataset. We show that adding a universal adversarial perturbation to any instance of the input data increases the error in the output predicted by the model. To the best of our knowledge, we are the first to study the effect of universal adversarial perturbations on time series regression models. We further demonstrate the effect of varying the strength of perturbations on RUL prediction models and find that model accuracy decreases as the perturbation strength of the universal adversarial attack increases. We also show that universal adversarial perturbations can be transferred across different models.
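The idea of a single perturbation that is computed once and then added to every input can be sketched as follows. This is an assumption-laden illustration, not the algorithm from the paper: a small linear regressor stands in for the RUL model, and `universal_perturbation` is a hypothetical helper that runs sign-gradient ascent on the mean squared error over a handful of samples while keeping the perturbation inside an L-infinity ball of radius `eps`.

```python
def predict(w, b, x):
    """Linear stand-in for an RUL regressor (assumption, not the paper's model)."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def sign(v):
    return (v > 0) - (v < 0)


def shifted(x, delta):
    return [xi + di for xi, di in zip(x, delta)]


def mse(w, b, xs, ys, delta):
    errs = [(predict(w, b, shifted(x, delta)) - y) ** 2 for x, y in zip(xs, ys)]
    return sum(errs) / len(errs)


def universal_perturbation(w, b, xs, ys, eps, alpha, steps):
    """Find one delta that raises the mean squared error over all samples."""
    delta = [0.0] * len(w)
    for _ in range(steps):
        # For a linear model, d(MSE)/d(delta) = 2 * mean_residual * w.
        mean_res = sum(
            predict(w, b, shifted(x, delta)) - y for x, y in zip(xs, ys)
        ) / len(xs)
        grad = [2.0 * mean_res * wi for wi in w]
        # Ascent step, then projection back into the eps-ball.
        delta = [
            min(max(d + alpha * sign(g), -eps), eps) for d, g in zip(delta, grad)
        ]
    return delta


# Toy data: the perturbation is fitted on three samples only.
w, b = [0.5, -1.0], 10.0
xs = [[1.0, 2.0], [2.0, 1.0], [0.0, 3.0]]
ys = [8.0, 9.5, 7.0]
delta = universal_perturbation(w, b, xs, ys, eps=0.2, alpha=0.05, steps=10)

# The same delta is then applied to an input it was never fitted on.
x_new, y_new = [1.5, 1.5], 9.0
err_clean = abs(predict(w, b, x_new) - y_new)
err_attacked = abs(predict(w, b, shifted(x_new, delta)) - y_new)
```

Because `delta` does not depend on the input it is applied to, an attacker would need no gradient computation at attack time, which is the real-time advantage the abstract describes.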

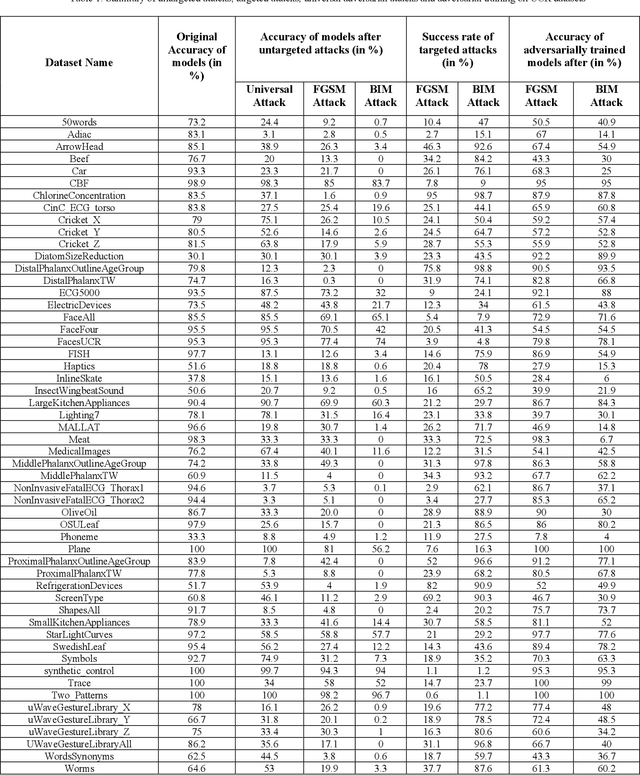

Untargeted, Targeted and Universal Adversarial Attacks and Defenses on Time Series

Jan 13, 2021

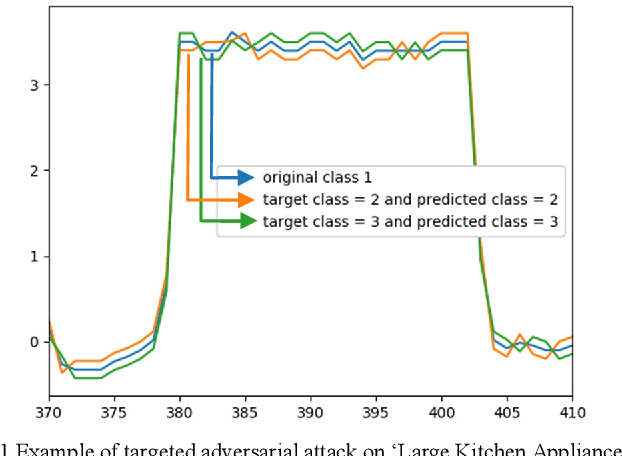

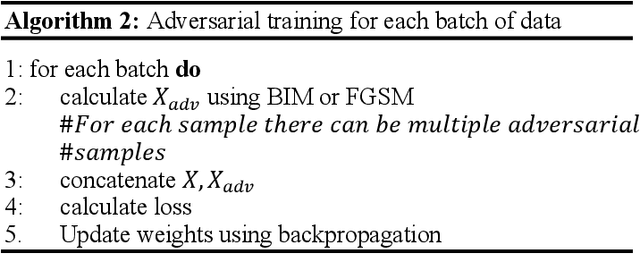

Abstract: Deep learning-based models are vulnerable to adversarial attacks. These attacks can be much more harmful when they are targeted, where an attacker tries not only to fool the deep learning model but also to misguide the model into predicting a specific class. Such targeted and untargeted attacks are specifically tailored to an individual sample and require the addition of an imperceptible noise to the sample. In contrast, a universal adversarial attack calculates a special imperceptible noise which can be added to any sample of the given dataset so that the deep learning model is forced to predict a wrong class. To the best of our knowledge, these targeted and universal attacks on time series data have not been studied in any previous work. In this work, we perform untargeted, targeted and universal adversarial attacks on UCR time series datasets. Our results show that deep learning-based time series classification models are vulnerable to these attacks. We also show that universal adversarial attacks generalize well, as they need only a fraction of the training data. We have also performed adversarial defense based on adversarial training. Our results show that models trained adversarially using the fast gradient sign method (FGSM), a single-step attack, are able to defend against FGSM as well as the basic iterative method (BIM), a popular iterative attack.
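The two attacks named at the end of the abstract differ only in how the gradient sign is applied: FGSM takes one step of size `eps`, while BIM takes several smaller steps of size `alpha` and clips the result back into the `eps`-ball after each one. The sketch below shows both in their untargeted form against a logistic-regression classifier, which is an assumption made here to keep the gradient analytic. The paper attacks deep time series classifiers, and all function and parameter names are illustrative.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def sign(v):
    return (v > 0) - (v < 0)


def logit(w, b, x):
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def predict(w, b, x):
    return int(sigmoid(logit(w, b, x)) >= 0.5)


def input_gradient(w, b, x, y):
    """Gradient of the binary cross-entropy loss with respect to the input x."""
    p = sigmoid(logit(w, b, x))
    return [(p - y) * wi for wi in w]


def fgsm(w, b, x, y, eps):
    """Single step: move every coordinate by eps in the loss-increasing direction."""
    g = input_gradient(w, b, x, y)
    return [xi + eps * sign(gi) for xi, gi in zip(x, g)]


def bim(w, b, x, y, eps, alpha, steps):
    """Iterated small steps, clipped to stay within eps of the original input."""
    x_adv = list(x)
    for _ in range(steps):
        g = input_gradient(w, b, x_adv, y)
        x_adv = [xa + alpha * sign(gi) for xa, gi in zip(x_adv, g)]
        x_adv = [min(max(xa, xi - eps), xi + eps) for xa, xi in zip(x_adv, x)]
    return x_adv


# Toy three-step "time series" that the clean model assigns to class 1.
w, b = [1.0, -2.0, 0.5], 0.0
x, y = [0.2, -0.1, 0.3], 1

x_fgsm = fgsm(w, b, x, y, eps=0.3)
x_bim = bim(w, b, x, y, eps=0.3, alpha=0.1, steps=5)
```

For a linear model like this one the gradient sign never changes, so BIM ends at the same corner of the `eps`-ball as FGSM. The two attacks diverge on deep models, where the gradient is recomputed at each intermediate point. Adversarial training, as mentioned in the abstract, would add examples such as `x_fgsm` with their true labels to the training set.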
