Anugyan Das

Compositional Neuro-Symbolic Reasoning

Apr 02, 2026

Abstract: We study structured abstraction-based reasoning for the Abstraction and Reasoning Corpus (ARC) and compare its generalization to test-time approaches. Purely neural architectures lack reliable combinatorial generalization, while strictly symbolic systems struggle with perceptual grounding. We therefore propose a neuro-symbolic architecture that extracts object-level structure from grids, uses neural priors to propose candidate transformations from a fixed domain-specific language (DSL) of atomic patterns, and filters hypotheses using cross-example consistency. Instantiated as a compositional reasoning framework based on unit patterns inspired by human visual abstraction, the system augments large language models (LLMs) with object representations and transformation proposals. On ARC-AGI-2, it improves base LLM performance from 16% to 24.4% on the public evaluation set, and to 30.8% when combined with ARC Lang Solver via a meta-classifier. These results demonstrate that separating perception, neural-guided transformation proposal, and symbolic consistency filtering improves generalization without task-specific finetuning or reinforcement learning, while reducing reliance on brute-force search and sampling-based test-time scaling. We open-source the ARC-AGI-2 Reasoner code (https://github.com/CoreThink-AI/arc-agi-2-reasoner).
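The cross-example consistency filter mentioned in the abstract can be illustrated with a minimal sketch. Everything here is an assumption made for illustration and is not taken from the released code: the four-operation toy DSL, the names `DSL`, `run`, and `consistent_programs`, and the use of plain enumeration in place of the neural proposal step that the paper describes.

```python
from itertools import product

# Grids are tuples of tuples of ints so they can be compared with ==.

def identity(g):
    return g

def flip_h(g):
    # Mirror each row left-to-right.
    return tuple(row[::-1] for row in g)

def flip_v(g):
    # Reverse the order of the rows.
    return tuple(g[::-1])

def transpose(g):
    # Swap rows and columns.
    return tuple(zip(*g))

# Hypothetical toy DSL of atomic transformations (not the paper's DSL).
DSL = {
    "identity": identity,
    "flip_h": flip_h,
    "flip_v": flip_v,
    "transpose": transpose,
}

def run(program, grid):
    """Apply a sequence of DSL operation names to a grid, left to right."""
    for name in program:
        grid = DSL[name](grid)
    return grid

def consistent_programs(examples, max_len=2):
    """Keep only the programs that map every input to its expected output.

    Candidates are enumerated exhaustively here; in the described system
    they would instead be proposed by a neural prior.
    """
    survivors = []
    for length in range(1, max_len + 1):
        for program in product(DSL, repeat=length):
            if all(run(program, x) == y for x, y in examples):
                survivors.append(program)
    return survivors

# Two training pairs that both show a left-right mirror.
example_a = (((1, 2), (1, 2)), ((2, 1), (2, 1)))  # ambiguous: rows are identical
example_b = (((1, 2), (3, 4)), ((2, 1), (4, 3)))  # disambiguates

after_one = consistent_programs([example_a])
after_two = consistent_programs([example_a, example_b])
print(len(after_one), len(after_two))
```

In this toy setting, the first pair alone admits several hypotheses because its rows are identical, and adding the second pair removes the ones that only fit by coincidence. That is the sense in which agreement across examples acts as a symbolic filter.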

Depression Status Estimation by Deep Learning based Hybrid Multi-Modal Fusion Model

Nov 30, 2020

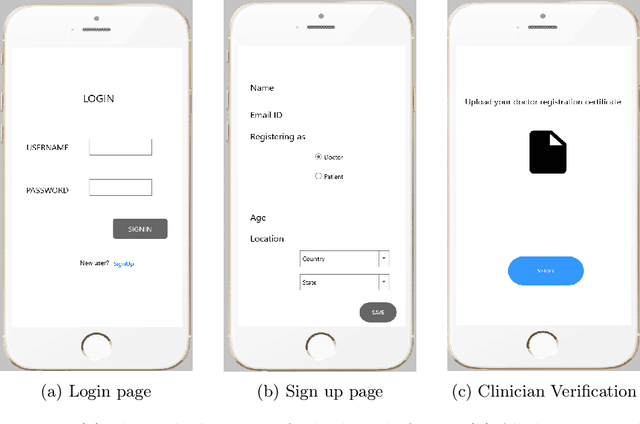

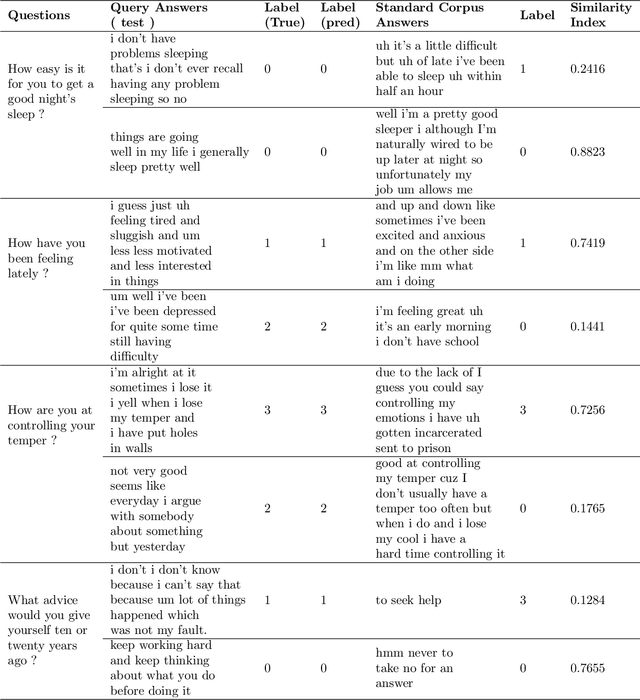

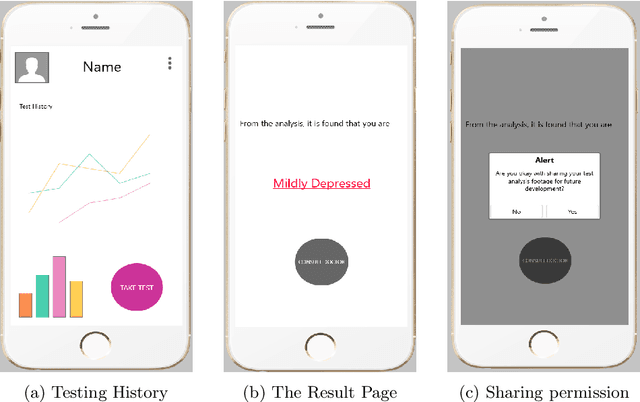

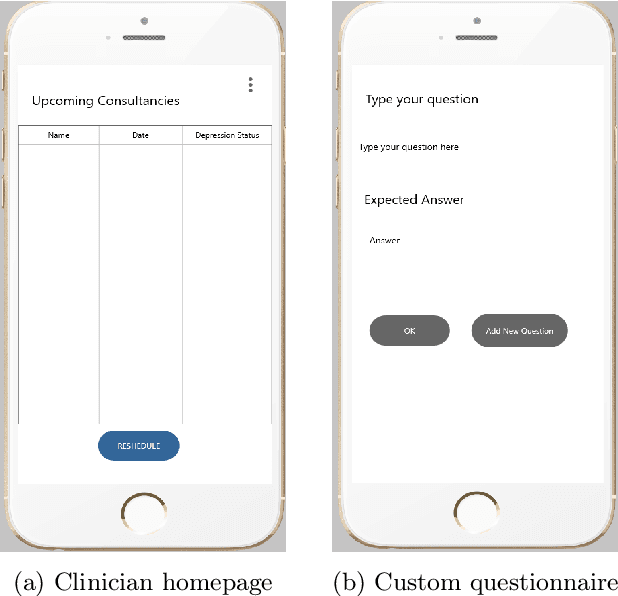

Abstract: Preliminary detection of mild depression could immensely help in the effective treatment of this common mental health disorder. Because of a lack of awareness and the many stigmas and misconceptions present in society, mental health status estimation has become a truly difficult task. Due to the immense variation in character-level traits from person to person, traditional deep learning methods fail to generalize in real-world settings. In our study, we aim to create a human-allied AI workflow that can efficiently adapt to specific users and perform effectively in real-world scenarios. We propose a hybrid deep learning approach that combines one-shot learning, classical supervised deep learning methods, and human-allied interactions for adaptation. To capture maximum information and support efficient diagnosis, video, audio, and text modalities are utilized. Our Hybrid Fusion model achieved an accuracy of 96.3% on the dataset and attained an AUC of 0.9682, which indicates its robustness in discriminating classes in complex real-world scenarios, helping ensure that cases of mild depression are not missed during diagnosis. The proposed method is deployed in a cloud-based smartphone application for robust testing. With user-specific adaptations and state-of-the-art methodologies, we present a state-of-the-art model with a user-friendly experience.
