Antonios Tzortzakakis

DosimeTron: Automating Personalized Monte Carlo Radiation Dosimetry in PET/CT with Agentic AI

Apr 07, 2026

Abstract:Purpose: To develop and evaluate DosimeTron, an agentic AI system for automated patient-specific Monte Carlo (MC) internal radiation dosimetry in PET/CT examinations. Materials and Methods: In this retrospective study, DosimeTron was evaluated on a publicly available PSMA-PET/CT dataset comprising 597 studies from 378 male patients acquired on three scanner models (18-F, n = 369; 68-Ga, n = 228). The system uses GPT-5.2 as its reasoning engine and 23 tools exposed via four Model Context Protocol servers, automating DICOM metadata extraction, image preprocessing, MC simulation, organ segmentation, and dosimetric reporting through natural-language interaction. Agentic performance was assessed using diverse prompt templates spanning single-turn instructions of varying specificity and multi-turn conversational exchanges, monitored via OpenTelemetry traces. Dosimetric accuracy was validated against OpenDose3D across 114 cases and 22 organs using Pearson's r, Lin's concordance correlation coefficient (CCC), and Bland-Altman analysis. Results: Across all prompt templates and all runs, no execution failures, pipeline errors, or hallucinated outputs were observed. Pearson's r ranged from 0.965 to 1.000 (median 0.997; all p < 0.001) and CCC from 0.963 to 1.000 (median 0.996). Mean absolute percentage difference was below 5% for 19 of 22 organs (median 2.5%). Total per-study processing time (SD) was 32.3 (6.0) minutes. Conclusion: DosimeTron autonomously executed complex dosimetry pipelines across diverse prompt configurations and achieved high dosimetric agreement with OpenDose3D at clinically acceptable processing times, demonstrating the feasibility of agentic AI for patient-specific Monte Carlo dosimetry in PET/CT.
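
The agreement statistics named in the abstract above (Pearson's r, Lin's CCC, Bland-Altman bias and limits of agreement, and mean absolute percentage difference) can be illustrated with a minimal sketch. This is not the authors' code: the function names are hypothetical, the population (1/n) variance form of the CCC and the 1.96 SD limits of agreement are common conventions assumed here, and any inputs are placeholders, not data from the study.

```python
import math
from statistics import mean, stdev


def pearson_r(x, y):
    """Pearson correlation between paired measurements x and y."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def lin_ccc(x, y):
    """Lin's concordance correlation coefficient (1/n variance form, assumed)."""
    n = len(x)
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    sxx = sum((a - mx) ** 2 for a in x) / n
    syy = sum((b - my) ** 2 for b in y) / n
    # Unlike Pearson's r, the denominator penalizes a shift between the means.
    return 2 * sxy / (sxx + syy + (mx - my) ** 2)


def bland_altman(x, y):
    """Return (bias, lower limit, upper limit) using bias +/- 1.96 SD."""
    diffs = [a - b for a, b in zip(x, y)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


def mean_abs_pct_diff(x, ref):
    """Mean absolute percentage difference of x relative to the reference."""
    return mean(abs(a - b) / abs(b) * 100 for a, b in zip(x, ref))
```

A constant offset between two methods leaves Pearson's r at 1 but lowers the CCC, which is why reporting both, as the abstract does, separates correlation from actual agreement.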

Anatomy-Aware Lymphoma Lesion Detection in Whole-Body PET/CT

Nov 10, 2025

Abstract:Early cancer detection is crucial for improving patient outcomes, and 18F FDG PET/CT imaging plays a vital role by combining metabolic and anatomical information. Accurate lesion detection remains challenging due to the need to identify multiple lesions of varying sizes. In this study, we investigate the effect of adding anatomy prior information to deep learning-based lesion detection models. In particular, we add organ segmentation masks from the TotalSegmentator tool as auxiliary inputs to provide anatomical context to nnDetection, which is the state-of-the-art for lesion detection, and Swin Transformer. The latter is trained in two stages that combine self-supervised pre-training and supervised fine-tuning. The method is tested in the AutoPET and Karolinska lymphoma datasets. The results indicate that the inclusion of anatomical priors substantially improves the detection performance within the nnDetection framework, while it has almost no impact on the performance of the vision transformer. Moreover, we observe that Swin Transformer does not offer clear advantages over conventional convolutional neural network (CNN) encoders used in nnDetection. These findings highlight the critical role of the anatomical context in cancer lesion detection, especially in CNN-based models.
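
One plausible way to supply organ segmentation masks as auxiliary inputs, as the abstract above describes, is to stack them with the PET and CT volumes as extra input channels. The sketch below assumes a one-hot encoding of an integer organ label map and a channel-first layout; the abstract does not state how the masks are encoded, and `stack_anatomy_prior` is a hypothetical helper, not part of nnDetection or TotalSegmentator.

```python
import numpy as np


def stack_anatomy_prior(pet, ct, organ_labels, num_organs):
    """Build a channel-first input: [PET, CT, one-hot organ masks].

    pet, ct       : float arrays of identical shape (D, H, W)
    organ_labels  : integer label map of the same shape, 0 = background
    num_organs    : number of organ classes (labels 1..num_organs), assumed
    """
    # One binary channel per organ label; background is left implicit.
    one_hot = np.stack(
        [organ_labels == k for k in range(1, num_organs + 1)]
    ).astype(np.float32)
    # Prepend the two image modalities as single channels.
    images = np.stack([pet, ct]).astype(np.float32)
    return np.concatenate([images, one_hot], axis=0)
```

With this layout, a detection network only needs its first layer widened from 2 to 2 + `num_organs` input channels, which keeps the comparison between models with and without the prior straightforward.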

Generative Aging of Brain Images with Diffeomorphic Registration

May 31, 2022

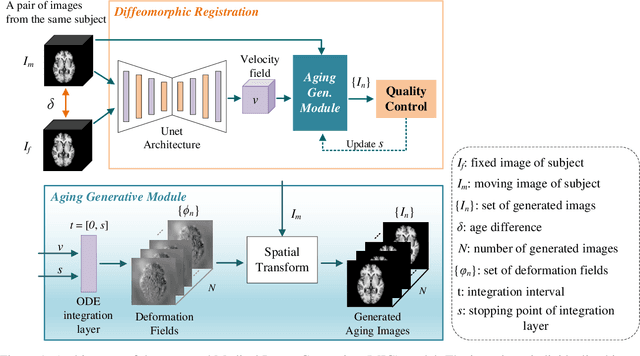

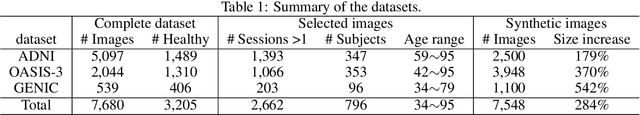

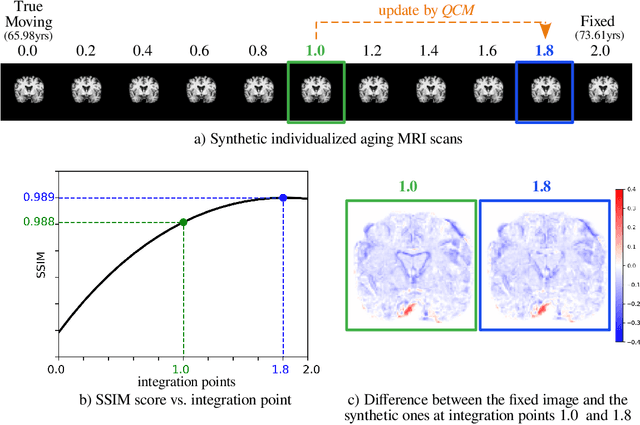

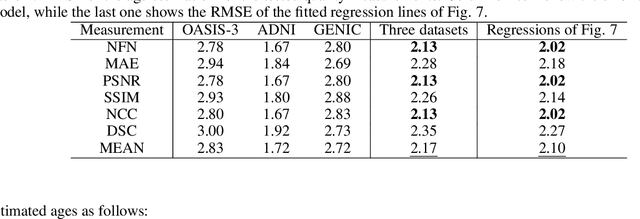

Abstract:Analyzing and predicting brain aging is essential for early prognosis and accurate diagnosis of cognitive diseases. Neuroimaging techniques such as Magnetic Resonance Imaging (MRI) provide a noninvasive means of observing the aging process within the brain. With longitudinal image data collection, data-intensive Artificial Intelligence (AI) algorithms have been used to examine brain aging. However, existing state-of-the-art algorithms tend to be restricted to group-level predictions and suffer from unrealistic predictions. This paper proposes a methodology for generating longitudinal MRI scans that capture subject-specific neurodegeneration and retain anatomical plausibility in aging. The proposed methodology is developed within the framework of diffeomorphic registration and relies on three key novel technological advances to generate subject-level anatomically plausible predictions: i) a computationally efficient and individualized generative framework based on registration; ii) an aging generative module based on biological linear aging progression; iii) a quality control module to adapt registration to the generation task. Our methodology was evaluated on 2662 T1-weighted (T1-w) MRI scans from 796 participants from three different cohorts. First, we applied 6 commonly used criteria to demonstrate the aging simulation ability of the proposed methodology. Second, we evaluated the quality of the synthetic images using quantitative measurements and qualitative assessment by a neuroradiologist. Overall, the experimental results show that the proposed method can produce anatomically plausible predictions that can be used to enhance longitudinal datasets, in turn enabling data-hungry AI-driven healthcare tools.
