Andrei Dan

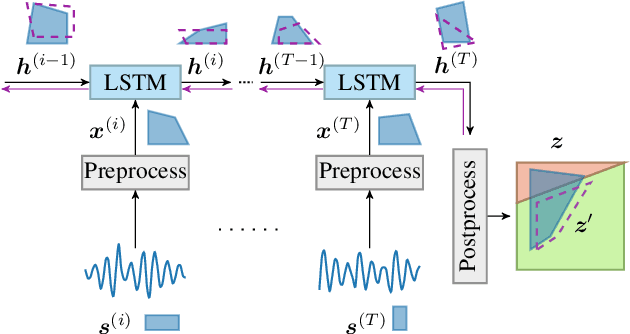

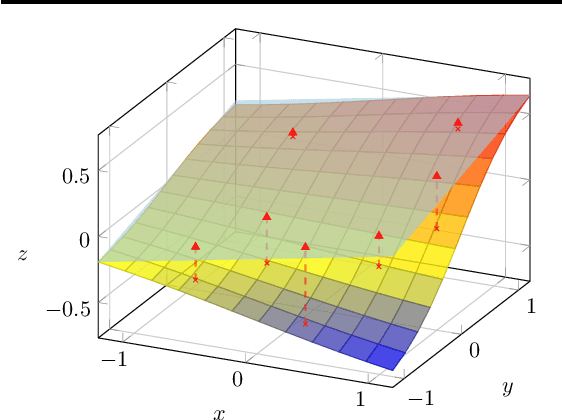

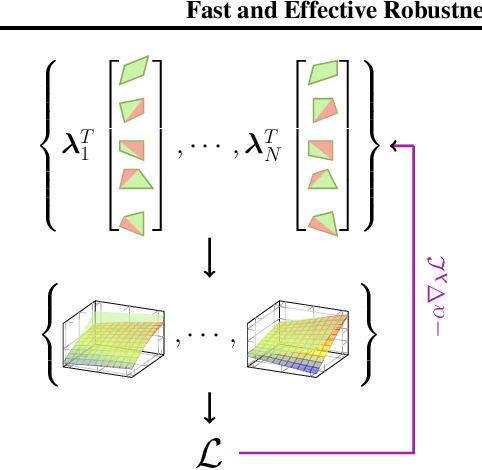

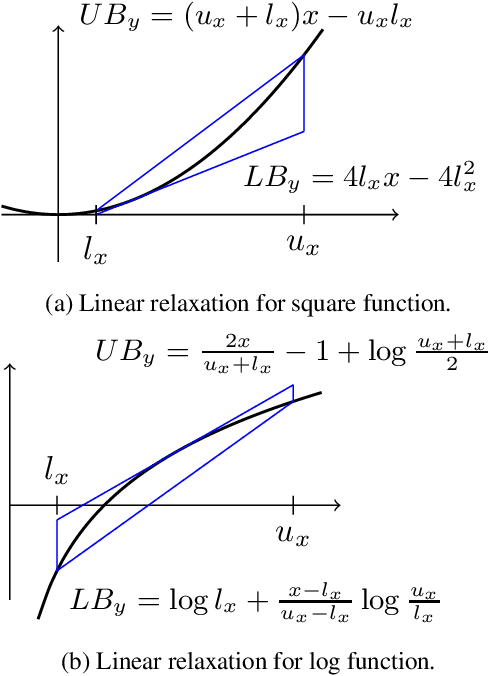

Fast and Effective Robustness Certification for Recurrent Neural Networks

May 27, 2020

Abstract:We present a precise and scalable verifier for recurrent neural networks, called R2. The verifier is based on two key ideas: (i) a method to compute tight linear convex relaxations of a recurrent update function via sampling and optimization, and (ii) a technique to optimize convex combinations of multiple bounds for each neuron instead of a single bound as previously done. Using R2, we present the first study of certifying a non-trivial use case of recurrent neural networks, namely speech classification. This required us to also develop custom convex relaxations for the general operations that make up speech preprocessing. Our evaluation across a number of recurrent architectures in computer vision and speech domains shows that these networks are out of reach for existing methods as these are an order of magnitude slower than R2, while R2 successfully verified robustness in many cases.

Synthesizing Unrestricted False Positive Adversarial Objects Using Generative Models

May 19, 2020

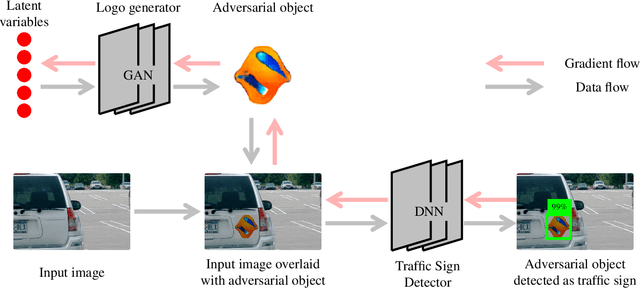

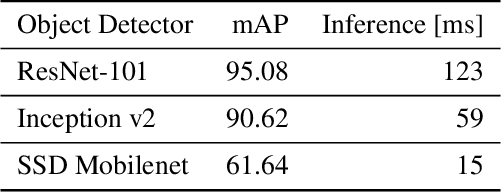

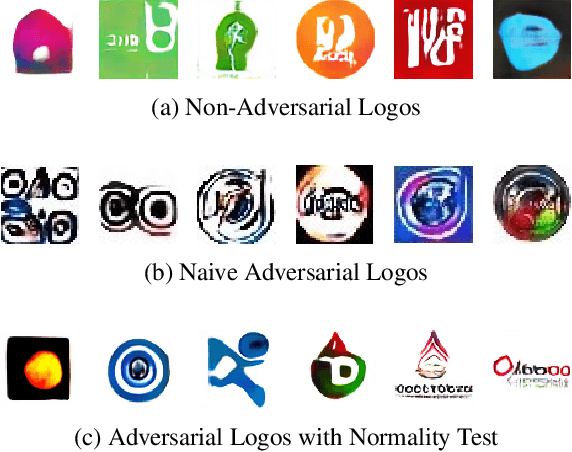

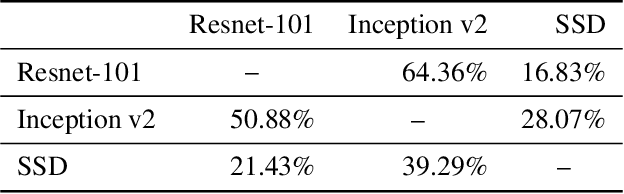

Abstract:Adversarial examples are data points misclassified by neural networks. Originally, adversarial examples were limited to adding small perturbations to a given image. Recent work introduced the generalized concept of unrestricted adversarial examples, without limits on the added perturbations. In this paper, we introduce a new category of attacks that create unrestricted adversarial examples for object detection. Our key idea is to generate adversarial objects that are unrelated to the classes identified by the target object detector. Different from previous attacks, we use off-the-shelf Generative Adversarial Networks (GAN), without requiring any further training or modification. Our method consists of searching over the latent normal space of the GAN for adversarial objects that are wrongly identified by the target object detector. We evaluate this method on the commonly used Faster R-CNN ResNet-101, Inception v2 and SSD Mobilenet v1 object detectors using logo generative iWGAN-LC and SNGAN trained on CIFAR-10. The empirical results show that the generated adversarial objects are indistinguishable from non-adversarial objects generated by the GANs, transferable between the object detectors and robust in the physical world. This is the first work to study unrestricted false positive adversarial examples for object detection.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge