Alexander Lavin

Technology Readiness Levels for Machine Learning Systems

Jan 11, 2021

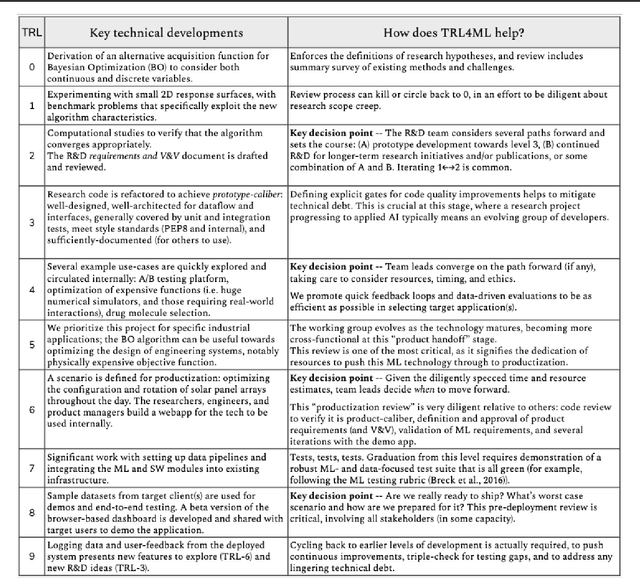

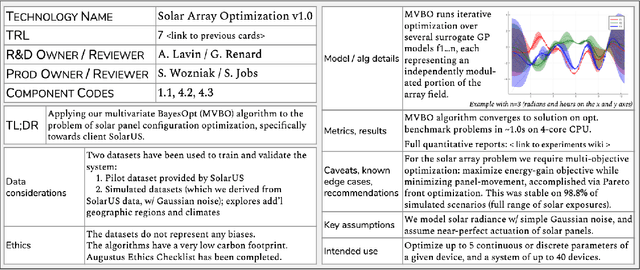

Abstract: The development and deployment of machine learning (ML) systems can be executed easily with modern tools, but the process is typically rushed and treated as a means to an end. This lack of diligence can lead to technical debt, scope creep and misaligned objectives, model misuse and failures, and expensive consequences. Engineering systems, on the other hand, follow well-defined processes and testing standards to streamline development for high-quality, reliable results. The extreme is spacecraft systems, where mission-critical measures and robustness are ingrained in the development process. Drawing on experience in both spacecraft engineering and ML (from research through product across domain areas), we have developed a proven systems engineering approach for machine learning development and deployment. Our "Machine Learning Technology Readiness Levels" (MLTRL) framework defines a principled process to ensure robust, reliable, and responsible systems while being streamlined for ML workflows, including key distinctions from traditional software engineering. Moreover, MLTRL defines a lingua franca for people across teams and organizations to work collaboratively on artificial intelligence and machine learning technologies. Here we describe the framework and elucidate it with several real-world use cases of developing ML methods from basic research through productization and deployment, in areas such as medical diagnostics, consumer computer vision, satellite imagery, and particle physics.

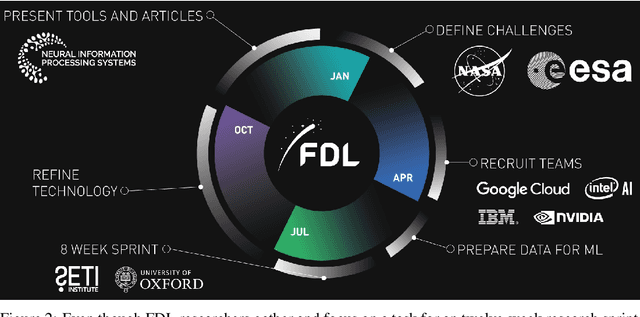

Learnings from Frontier Development Lab and SpaceML -- AI Accelerators for NASA and ESA

Nov 09, 2020

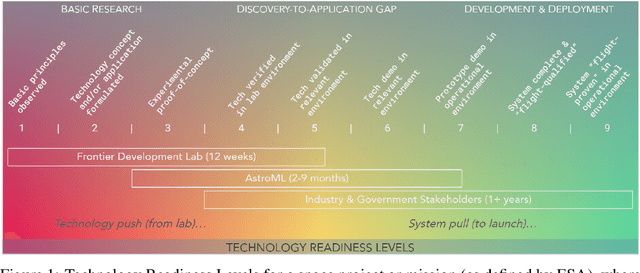

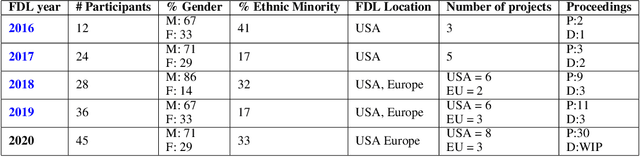

Abstract: Research with AI and ML technologies lives in a variety of settings with often asynchronous goals and timelines: academic labs and government organizations pursue open-ended research focusing on discoveries with long-term value, while research in industry is driven by commercial pursuits and hence focuses on short-term timelines and return on investment. The journey from research to product is often tacit or ad hoc, resulting in technology transition failures, further exacerbated when research and development is interorganizational and interdisciplinary. Moreover, much of the ability to produce results remains locked in the private repositories and know-how of the individual researcher, slowing the impact on future research by others and contributing to the ML community's challenges in reproducibility. With research organizations focused on an exploding array of fields, opportunities for the handover and maturation of interdisciplinary research diminish. With these tensions, we see an emerging need to measure the correctness, impact, and relevance of research during its development to enable better collaboration, improved reproducibility, faster progress, and more trusted outcomes. We perform a case study of the Frontier Development Lab (FDL), an AI accelerator under a public-private partnership from NASA and ESA. FDL research follows principled practices that are grounded in responsible development, conduct, and dissemination of AI research, enabling FDL to churn out successful interdisciplinary and interorganizational research projects, measured through NASA's Technology Readiness Levels. We also take a look at the SpaceML Open Source Research Program, which helps accelerate and transition FDL's research to deployable projects with widespread adoption amongst citizen scientists.

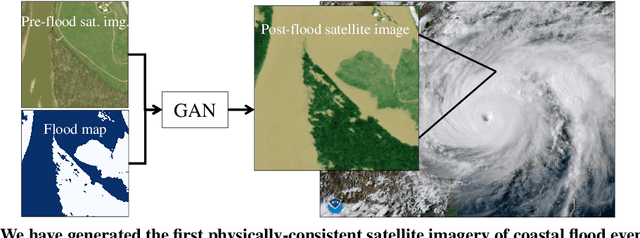

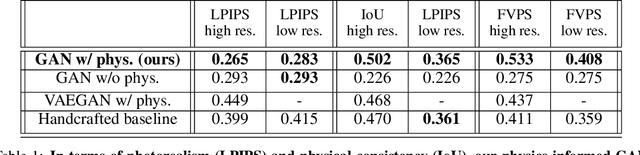

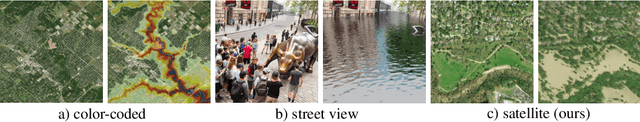

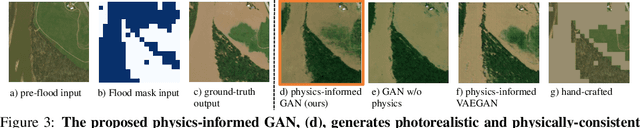

Physics-informed GANs for Coastal Flood Visualization

Oct 16, 2020

Abstract: As climate change increases the intensity of natural disasters, society needs better tools for adaptation. Floods, for example, are the most frequent natural disaster, but during hurricanes the area is largely covered by clouds and emergency managers must rely on nonintuitive flood visualizations for mission planning. To assist these emergency managers, we have created a deep learning pipeline that generates visual satellite images of current and future coastal flooding. We advanced a state-of-the-art GAN called pix2pixHD, such that it produces imagery that is physically consistent with the output of an expert-validated storm surge model (NOAA SLOSH). By evaluating the imagery relative to physics-based flood maps, we find that our proposed framework outperforms baseline models in both physical consistency and photorealism. While this work focused on the visualization of coastal floods, we envision the creation of a global visualization of how climate change will shape our Earth.

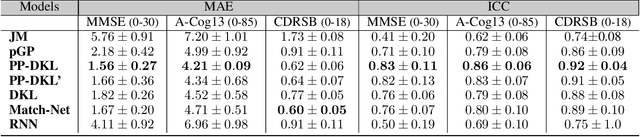

Neuro-symbolic Neurodegenerative Disease Modeling as Probabilistic Programmed Deep Kernels

Sep 16, 2020

Abstract: We present a probabilistic programmed deep kernel learning approach to personalized, predictive modeling of neurodegenerative diseases. Our analysis considers a spectrum of neural and symbolic machine learning approaches, which we assess for predictive performance and important medical AI properties such as interpretability, uncertainty reasoning, data-efficiency, and leveraging domain knowledge. Our Bayesian approach combines the flexibility of Gaussian processes with the structural power of neural networks to model biomarker progressions, without needing clinical labels for training. We run evaluations on the problem of Alzheimer's disease prediction, yielding results surpassing deep learning and with the practical advantages of Bayesian non-parametrics and probabilistic programming.

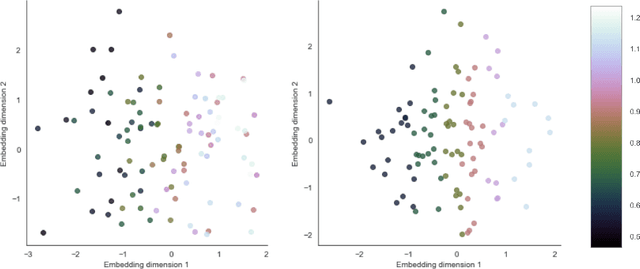

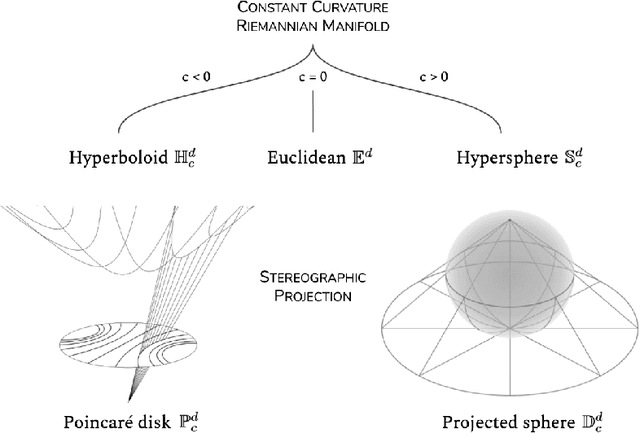

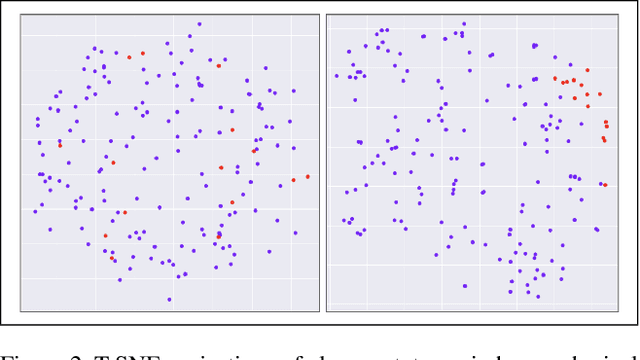

Manifolds for Unsupervised Visual Anomaly Detection

Jun 19, 2020

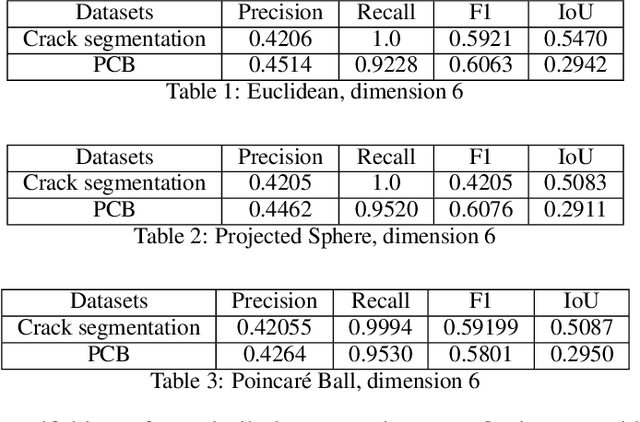

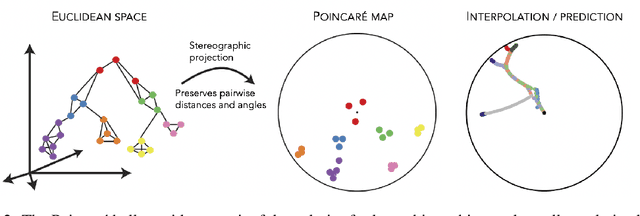

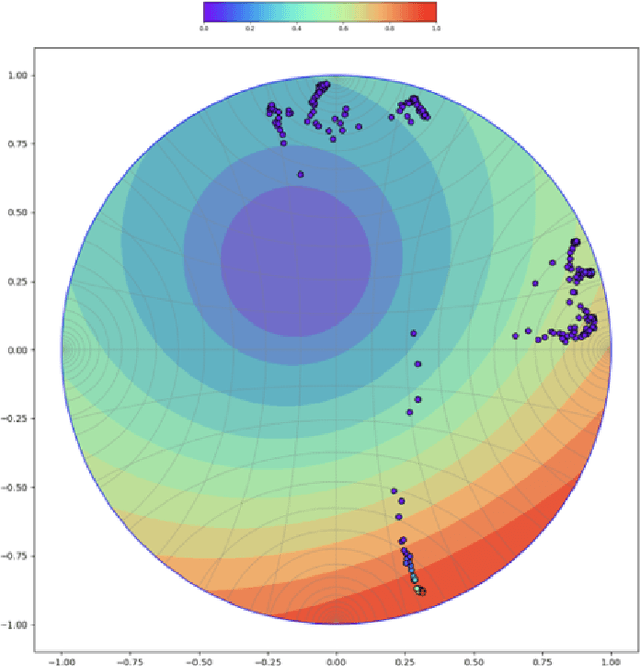

Abstract: Anomalies are by definition rare, thus labeled examples are very limited or nonexistent, and likely do not cover unforeseen scenarios. Unsupervised learning methods that don't necessarily encounter anomalies in training would be immensely useful. Generative vision models can be useful in this regard but do not sufficiently represent normal and abnormal data distributions. To this end, we propose constant curvature manifolds for embedding data distributions in unsupervised visual anomaly detection. Through theoretical and empirical explorations of manifold shapes, we develop a novel hyperspherical Variational Auto-Encoder (VAE) via stereographic projections with a gyroplane layer - a complete equivalent to the Poincaré VAE. This approach with manifold projections is beneficial in terms of model generalization and can yield more interpretable representations. We present state-of-the-art results on visual anomaly benchmarks in precision manufacturing and inspection, demonstrating real-world utility in industrial AI scenarios. We further demonstrate the approach on the challenging problem of histopathology: our unsupervised approach effectively detects cancerous brain tissue from noisy whole-slide images, learning a smooth, latent organization of tissue types that provides an interpretable decision tool for medical professionals.

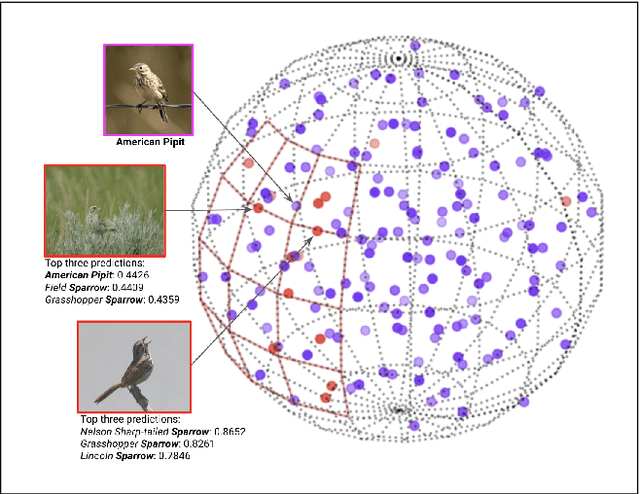

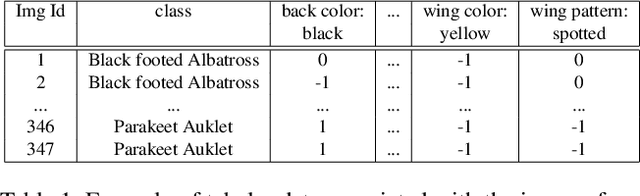

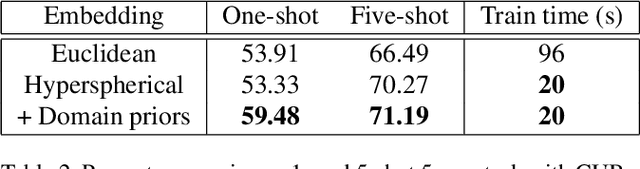

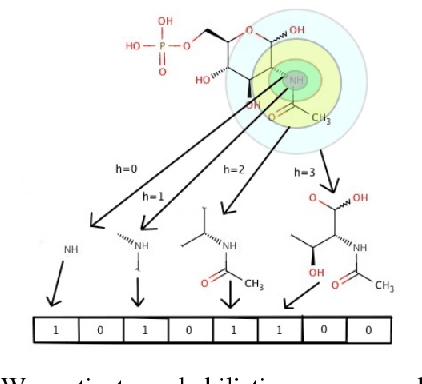

Fine-Grain Few-Shot Vision via Domain Knowledge as Hyperspherical Priors

May 23, 2020

Abstract: Prototypical networks have been shown to perform well at few-shot learning tasks in computer vision. Yet these networks struggle when classes are very similar to each other (fine-grain classification) and currently have no way of taking into account prior knowledge (through the use of tabular data). Using a spherical latent space to encode prototypes, we can achieve few-shot fine-grain classification by maximally separating the classes while incorporating domain knowledge as informative priors. We describe how to construct a hypersphere of prototypes that embed a priori domain information, and demonstrate the effectiveness of the approach on challenging benchmark datasets for fine-grain classification, with top results for one-shot classification and 5x speedups in training time.
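To make the idea of prototypes on a hypersphere concrete, here is a minimal sketch of nearest-prototype classification with unit-norm embeddings and cosine similarity. It is not the paper's method: the prototypes here are simply normalized means of support embeddings (the plain prototypical-network baseline), with no maximal separation and no domain-knowledge priors. The function names `normalize`, `prototype`, and `classify` and the toy 2-D embeddings are all hypothetical.

```python
import math


def normalize(vector):
    """Project a vector onto the unit hypersphere (L2 normalization)."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0.0:
        raise ValueError("cannot normalize the zero vector")
    return [x / norm for x in vector]


def prototype(support_embeddings):
    """Class prototype: mean of the support embeddings, pushed back onto the sphere."""
    count = len(support_embeddings)
    mean = [sum(column) / count for column in zip(*support_embeddings)]
    return normalize(mean)


def classify(query_embedding, prototypes):
    """Return the label whose prototype has the highest cosine similarity to the query."""
    query = normalize(query_embedding)

    def cosine(label):
        return sum(a * b for a, b in zip(query, prototypes[label]))

    return max(prototypes, key=cosine)


# Toy 2-D embeddings standing in for the output of an image encoder (assumed).
prototypes = {
    "class_a": prototype([[1.0, 0.0], [0.9, 0.1]]),
    "class_b": prototype([[0.0, 1.0], [0.1, 0.9]]),
}
predicted = classify([0.8, 0.2], prototypes)
```

In the approach the abstract describes, the prototypes would instead be placed on the hypersphere ahead of time using prior knowledge, and the encoder would be trained to map images toward them.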

Doubly Bayesian Optimization

Dec 13, 2018

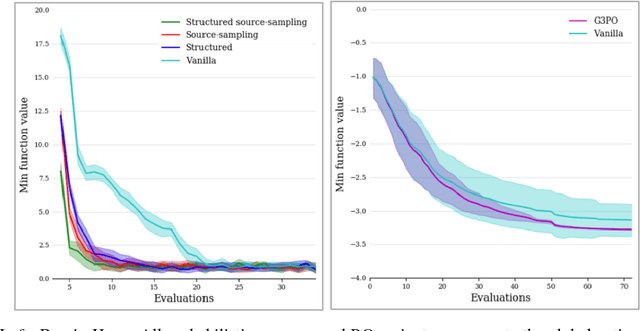

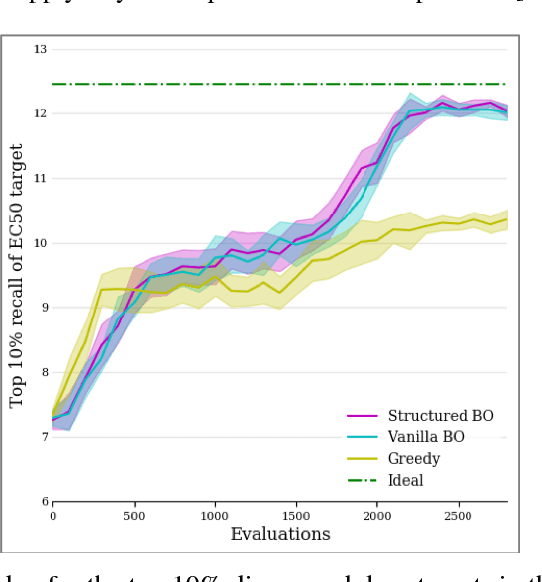

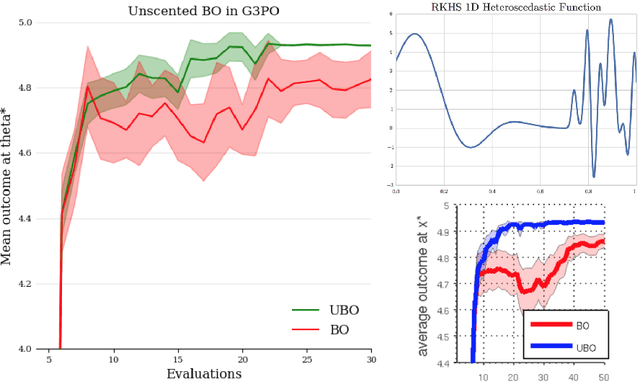

Abstract: Bayesian optimization (BO) is a powerful method for optimizing complex black-box functions that are costly to evaluate directly. Although useful out of the box, complexities arise when the domain exhibits non-smooth structure, noise, or greater than five dimensions. Extending BO for these issues is non-trivial, which is why we suggest casting BO methods into the probabilistic programming paradigm. These systems (PPS) enable users to encode model structure and naturally reason about uncertainties, which can be leveraged towards improved BO methods. Here we present a probabilistic domain-specific language where BO is native, showing the Bayesian approach to optimization is more naturally expressed in a PPS, and better equipped to address the above issues. We validate the approach on standard optimization benchmarks, while demonstrating the utility of programmable structure to address the inner-optimization problem of BO. Importantly, we also show that the framework enables the user to more readily use advanced techniques such as unscented BO and noisy expected improvement.
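For readers unfamiliar with the acquisition step, the following is a minimal sketch of the standard (noise-free) expected improvement criterion for maximization, assuming a Gaussian posterior with a given mean and standard deviation at each candidate point. It is an illustration only, not the paper's probabilistic-programming formulation or its noisy or unscented variants. The names `expected_improvement` and `pick_next`, the exploration offset `xi`, and the candidate tuples are hypothetical.

```python
import math


def normal_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z):
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mean, std, best, xi=0.0):
    """E[max(f - best - xi, 0)] for f ~ N(mean, std^2), when maximizing f."""
    gap = mean - best - xi
    if std <= 0.0:
        # No posterior uncertainty: the improvement is deterministic.
        return max(gap, 0.0)
    z = gap / std
    return gap * normal_cdf(z) + std * normal_pdf(z)


def pick_next(candidates, best):
    """Inner optimization over a finite candidate set of (x, mean, std) tuples."""
    return max(candidates, key=lambda c: expected_improvement(c[1], c[2], best))


# Hypothetical posterior summaries at three candidate inputs.
candidates = [(0.1, 0.2, 0.0), (0.5, 0.4, 0.3), (0.9, 1.0, 0.0)]
next_point = pick_next(candidates, best=0.5)
```

In practice the inner optimization runs over a continuous domain, not a fixed list; the finite candidate set here only keeps the sketch self-contained.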

Cortical Microcircuits from a Generative Vision Model

Aug 03, 2018

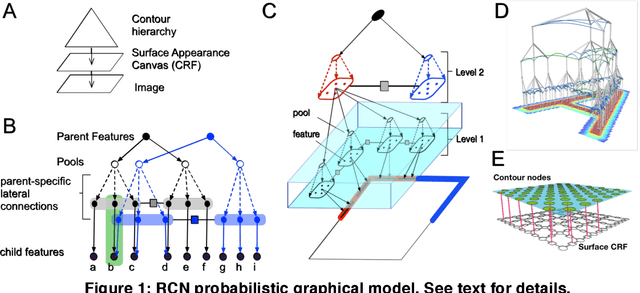

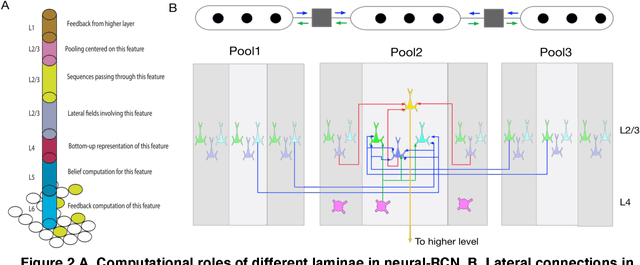

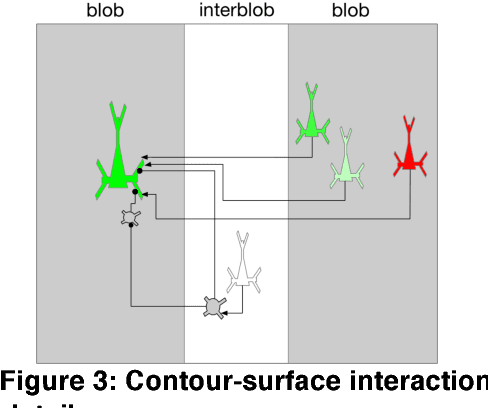

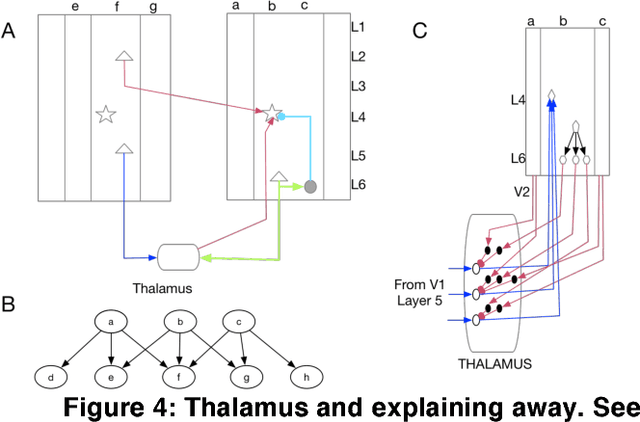

Abstract: Understanding the information processing roles of cortical circuits is an outstanding problem in neuroscience and artificial intelligence. The theoretical setting of Bayesian inference has been suggested as a framework for understanding cortical computation. Based on a recently published generative model for visual inference (George et al., 2017), we derive a family of anatomically instantiated and functional cortical circuit models. In contrast to simplistic models of Bayesian inference, the underlying generative model's representational choices are validated with real-world tasks that required efficient inference and strong generalization. The cortical circuit model is derived by systematically comparing the computational requirements of this model with known anatomical constraints. The derived model suggests precise functional roles for the feedforward, feedback and lateral connections observed in different laminae and columns, and assigns a computational role for the path through the thalamus.

Clustering Time-Series Energy Data from Smart Meters

Mar 24, 2016

Abstract: Investigations have been performed into using clustering methods in data mining time-series data from smart meters. The problem is to identify patterns and trends in energy usage profiles of commercial and industrial customers over 24-hour periods, and group similar profiles. We tested our method on energy usage data provided by several U.S. power utilities. The results show accurate grouping of accounts similar in their energy usage patterns, and potential for the method to be utilized in energy efficiency programs.
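As a rough illustration of grouping 24-hour usage profiles, the sketch below normalizes each profile by its daily total and runs a plain k-means loop. The abstract does not name the clustering algorithm used, so k-means, the normalization step, the deterministic initialization, and the toy profiles are all assumptions made for this example.

```python
def normalize_profile(profile):
    """Scale hourly readings to fractions of the daily total, so shape matters, not volume."""
    total = sum(profile)
    if total == 0:
        return [0.0] * len(profile)
    return [reading / total for reading in profile]


def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length profiles."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, iterations=20):
    """Plain k-means; the first k points seed the centroids (deterministic, assumed)."""
    centroids = [list(point) for point in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each profile joins its nearest centroid.
        labels = [
            min(range(k), key=lambda j: squared_distance(point, centroids[j]))
            for point in points
        ]
        # Update step: each centroid becomes the mean of its members.
        for j in range(k):
            members = [p for p, label in zip(points, labels) if label == j]
            if members:
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids


# Toy hourly profiles (24 readings each): daytime-heavy versus overnight-heavy usage.
day_shape = [1.0] * 8 + [5.0] * 10 + [1.0] * 6
night_shape = [5.0] * 6 + [1.0] * 12 + [5.0] * 6
raw_profiles = [
    day_shape,
    night_shape,
    [2.0 * r for r in day_shape],    # same shape as the first, twice the volume
    [3.0 * r for r in night_shape],  # same shape as the second, three times the volume
]
profiles = [normalize_profile(p) for p in raw_profiles]
labels, centroids = kmeans(profiles, k=2)
```

Normalizing by the daily total groups accounts by the shape of their usage pattern, so a large and a small customer with the same daily rhythm land in the same cluster.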
