Alessandro Zavoli

Reinforcement Learning for Low-Thrust Trajectory Design of Interplanetary Missions

Aug 19, 2020

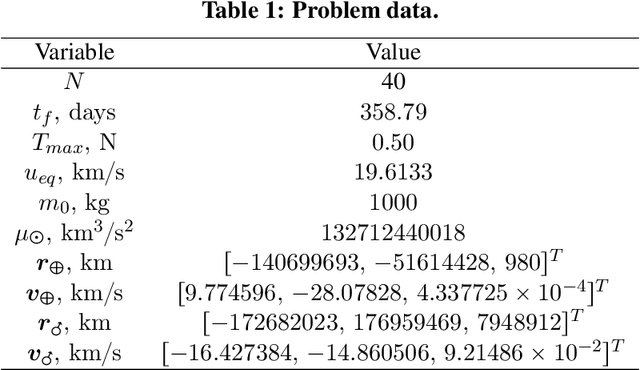

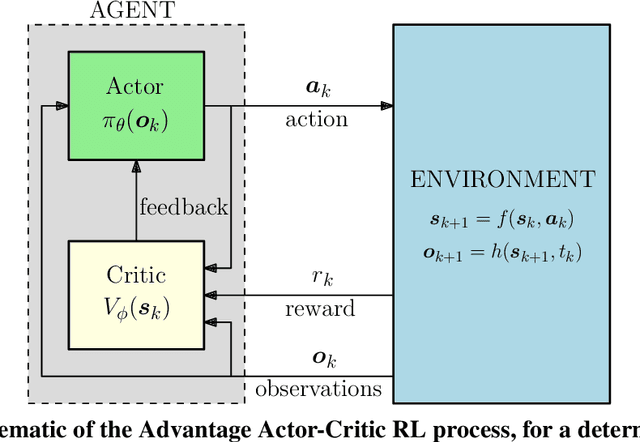

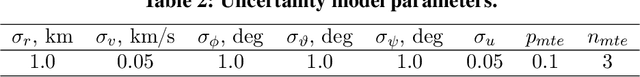

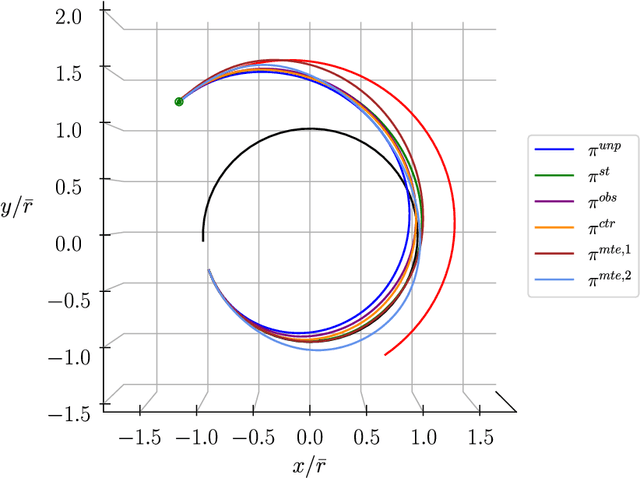

Abstract: This paper investigates the use of Reinforcement Learning for the robust design of low-thrust interplanetary trajectories in the presence of severe disturbances, modeled alternatively as Gaussian additive process noise, observation noise, control actuation errors on thrust magnitude and direction, and possibly multiple missed thrust events. The optimal control problem is recast as a time-discrete Markov Decision Process to comply with the standard formulation of reinforcement learning. An open-source implementation of the state-of-the-art algorithm Proximal Policy Optimization is adopted to carry out the training process of a deep neural network, used to map the spacecraft (observed) states to the optimal control policy. The resulting Guidance and Control Network provides both a robust nominal trajectory and the associated closed-loop guidance law. Numerical results are presented for a typical Earth-Mars mission. First, in order to validate the proposed approach, the solution found in a (deterministic) unperturbed scenario is compared with the optimal one provided by an indirect technique. Then, the robustness and optimality of the obtained closed-loop guidance laws are assessed by means of Monte Carlo campaigns performed in the considered uncertain scenarios. These preliminary results open up new horizons for the use of reinforcement learning in the robust design of interplanetary missions.
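The disturbance types listed in the abstract can be illustrated with a small sketch of one time-discrete transition. This is not the paper's model: the planar double-integrator dynamics, the function names `perturb_control` and `step`, and all parameter values are assumptions chosen only to show where actuation errors, missed thrust events, and additive process noise would enter a step of a Markov Decision Process.

```python
import math
import random


def perturb_control(magnitude, angle, rng, sigma_mag=0.05, sigma_dir=0.02, p_miss=0.1):
    """Return the 2D thrust vector actually applied for a commanded (magnitude, angle).

    Hypothetical disturbance model: with probability p_miss the thrust is lost
    entirely (missed thrust event); otherwise the magnitude gets a relative
    Gaussian error and the direction an additive Gaussian error.
    """
    if rng.random() < p_miss:
        return (0.0, 0.0)
    mag = magnitude * (1.0 + rng.gauss(0.0, sigma_mag))
    ang = angle + rng.gauss(0.0, sigma_dir)
    return (mag * math.cos(ang), mag * math.sin(ang))


def step(state, accel, rng, dt=1.0, sigma_state=0.0):
    """Advance a toy planar state (x, y, vx, vy) by one time step.

    Assumed dynamics: a double integrator (no gravity), integrated with a
    semi-implicit Euler update, plus additive Gaussian process noise.
    """
    x, y, vx, vy = state
    vx += accel[0] * dt
    vy += accel[1] * dt
    x += vx * dt
    y += vy * dt
    return tuple(s + rng.gauss(0.0, sigma_state) for s in (x, y, vx, vy))


if __name__ == "__main__":
    rng = random.Random(0)
    state = (0.0, 0.0, 1.0, 0.0)
    for _ in range(3):
        applied = perturb_control(0.1, math.pi / 4, rng)
        state = step(state, applied, rng, dt=1.0, sigma_state=0.01)
        print(state)
```

In a training setup, a policy would supply the commanded magnitude and angle at each step, and the perturbed transition would be what the learning algorithm observes.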

EOS: a Parallel, Self-Adaptive, Multi-Population Evolutionary Algorithm for Constrained Global Optimization

Jul 13, 2020

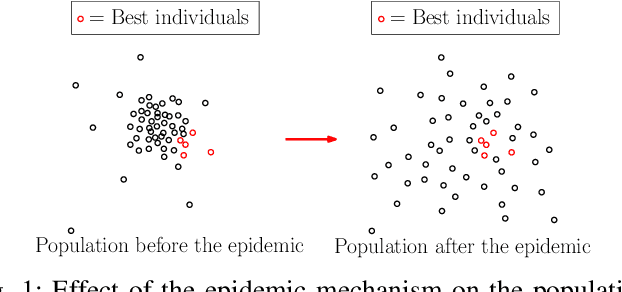

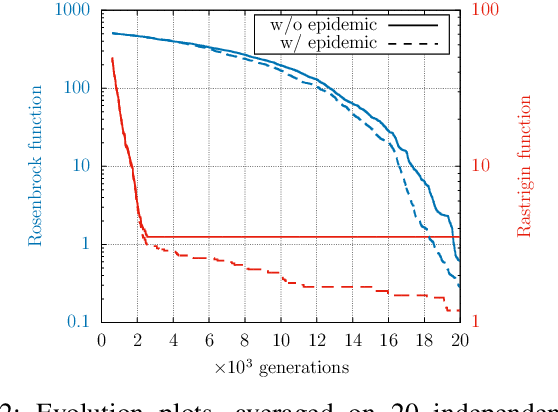

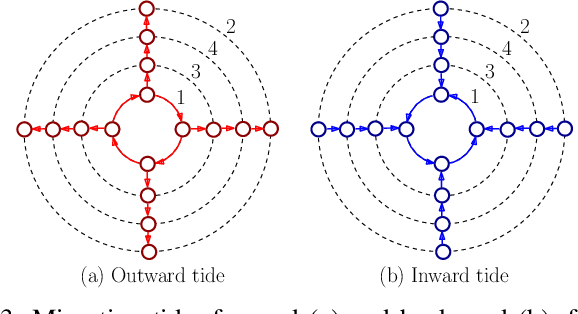

Abstract: This paper presents the main characteristics of the evolutionary optimization code named EOS, Evolutionary Optimization at Sapienza, and its successful application to challenging, real-world space trajectory optimization problems. EOS is a global optimization algorithm for constrained and unconstrained problems of real-valued variables. It implements a number of improvements to the well-known Differential Evolution (DE) algorithm, namely, a self-adaptation of the control parameters, an epidemic mechanism, a clustering technique, an $\varepsilon$-constrained method to deal with nonlinear constraints, and a synchronous island-model to handle multiple populations in parallel. The reported results show that EOS is capable of achieving increased performance compared to state-of-the-art single-population self-adaptive DE algorithms when applied to high-dimensional or highly-constrained space trajectory optimization problems.
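As a rough illustration of the $\varepsilon$-constrained idea mentioned in the abstract, the sketch below shows the commonly used $\varepsilon$-level comparison between two candidates. It is not taken from EOS: the function name `eps_better` and the convention that each candidate carries a single non-negative total constraint violation are assumptions made for the example.

```python
def eps_better(f_a, viol_a, f_b, viol_b, eps):
    """Return True if candidate a is preferred over b (minimization).

    Epsilon-level comparison: when both violations are within the tolerance
    eps, or when they are equal, the objective values decide; otherwise the
    candidate with the smaller constraint violation wins.
    """
    if (viol_a <= eps and viol_b <= eps) or viol_a == viol_b:
        return f_a < f_b
    return viol_a < viol_b


if __name__ == "__main__":
    # Both within tolerance: the lower objective wins despite a larger violation.
    print(eps_better(1.0, 0.05, 2.0, 0.0, eps=0.1))
    # Candidate a violates beyond tolerance: the less-violating b wins.
    print(eps_better(1.0, 0.5, 2.0, 0.0, eps=0.1))
```

In a DE selection step, a trial vector would replace its parent when this comparison favors it, with `eps` typically reduced toward zero over the generations so that the final population is feasible.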

Integrated Optimization of Ascent Trajectory and SRM Design of Multistage Launch Vehicles

Oct 08, 2019

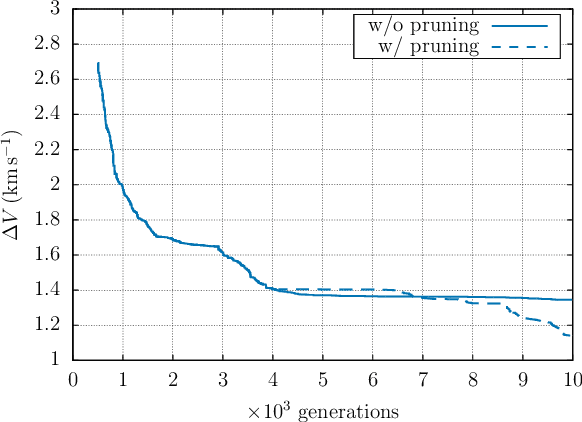

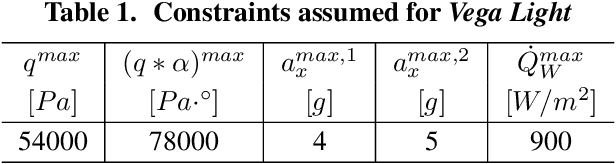

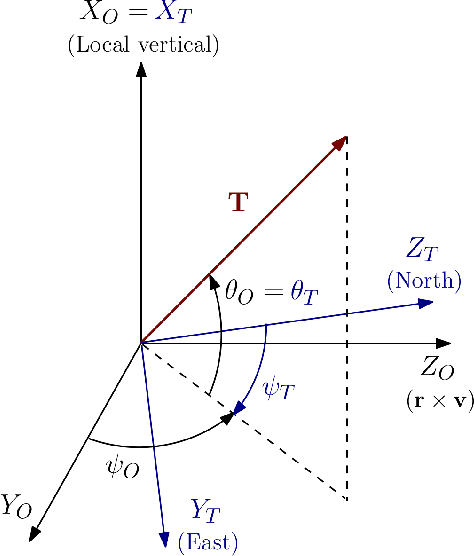

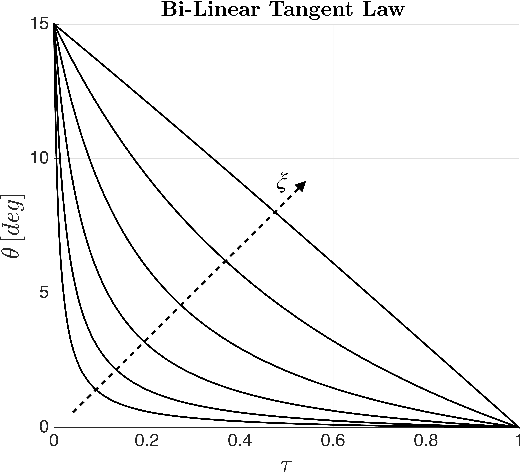

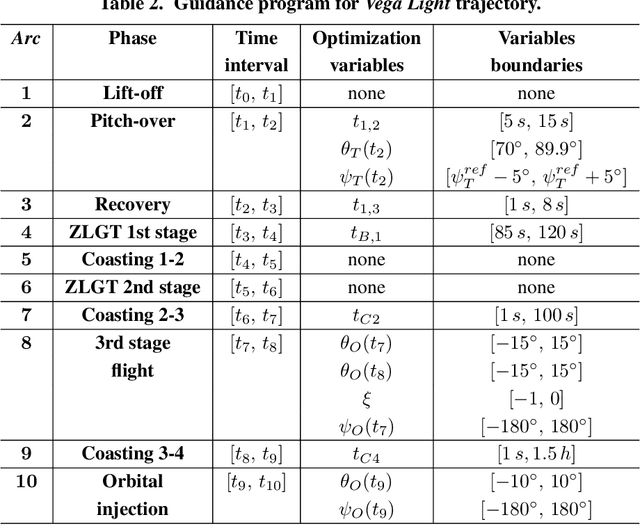

Abstract: This paper presents a methodology for the concurrent first-stage preliminary design and ascent trajectory optimization, with application to a Vega-derived Light Launch Vehicle. Reusing an existing upper stage (Zefiro 40) as the first stage requires a redesign of the propellant grain geometry to account for the changed operating conditions. An optimization code based on the parallel running of several Differential Evolution algorithms is used to find the optimal internal pressure law during Z40 operation, together with the optimal thrust direction and other relevant flight parameters of the entire ascent trajectory. The payload injected into a target orbit is maximized while respecting multiple design constraints, which involve either the solid rocket motor alone or the actual flight trajectory. Numerical results for injection into a Sun-synchronous orbit (SSO) are presented.

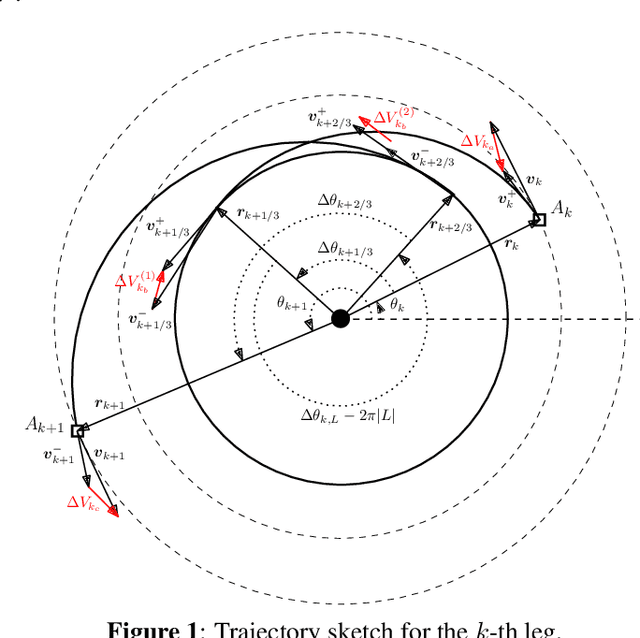

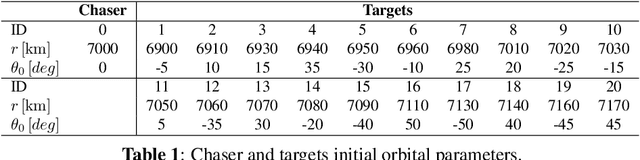

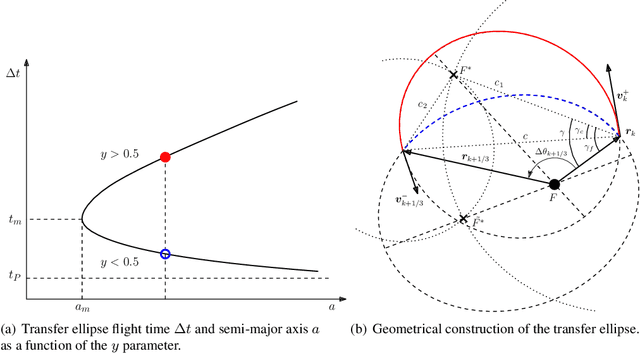

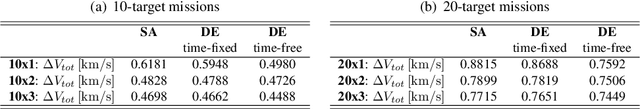

A Time-Dependent TSP Formulation for the Design of an Active Debris Removal Mission using Simulated Annealing

Sep 23, 2019

Abstract: This paper proposes a formulation of the Active Debris Removal (ADR) Mission Design problem as a modified Time-Dependent Traveling Salesman Problem (TDTSP). The TDTSP is a well-known combinatorial optimization problem, whose solution is the cheapest mono-cyclic tour connecting a number of non-stationary cities in a map. The problem is tackled with an optimization procedure based on Simulated Annealing, which efficiently exploits a natural encoding and a careful choice of mutation operators. The developed algorithm is used to simultaneously optimize the target sequence and the rendezvous epochs of an impulsive ADR mission. Numerical results are presented for sets comprising up to 20 targets.
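A minimal sketch of Simulated Annealing on a time-dependent tour may help make the idea concrete. It is not the paper's algorithm: the permutation encoding, the single swap mutation, the geometric cooling schedule, the fixed dwell time per leg, the open (non-cyclic) path, and the `toy_leg_cost` function are all assumptions chosen for brevity. In particular, the rendezvous epochs are fixed here rather than optimized.

```python
import math
import random


def tour_cost(tour, leg_cost, dwell=1.0):
    """Sum leg costs along an open path; each leg's cost depends on its departure time."""
    t, total = 0.0, 0.0
    for a, b in zip(tour, tour[1:]):
        total += leg_cost(a, b, t)
        t += dwell  # assumed: fixed time spent per leg
    return total


def toy_leg_cost(a, b, t):
    """Hypothetical time-dependent cost between targets a and b at time t (always positive)."""
    return abs(a - b) * (1.0 + 0.5 * math.sin(t + a))


def anneal(n, leg_cost, rng, iters=5000, t0=1.0, alpha=0.999):
    """Simulated Annealing over permutations of n targets using a swap mutation."""
    current = list(range(n))
    cur_cost = tour_cost(current, leg_cost)
    best, best_cost = current[:], cur_cost
    temp = t0
    for _ in range(iters):
        i, j = rng.sample(range(n), 2)
        cand = current[:]
        cand[i], cand[j] = cand[j], cand[i]
        c = tour_cost(cand, leg_cost)
        # Metropolis rule: always accept improvements, sometimes accept worse moves.
        if c < cur_cost or rng.random() < math.exp((cur_cost - c) / temp):
            current, cur_cost = cand, c
            if c < best_cost:
                best, best_cost = cand[:], c
        temp *= alpha  # geometric cooling
    return best, best_cost


if __name__ == "__main__":
    best, cost = anneal(8, toy_leg_cost, random.Random(0))
    print(best, cost)
```

Because the cost of each leg depends on when it is flown, swapping two targets changes the cost of every later leg, which is why the whole tour is re-evaluated after each mutation in this sketch.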
