Alejandro M. Aragón

A machine learning framework for uncovering stochastic nonlinear dynamics from noisy data

Apr 07, 2026

Abstract: Modeling real-world systems requires accounting for noise, whether it arises from unpredictable fluctuations in financial markets, irregular rhythms in biological systems, or environmental variability in ecosystems. While the behavior of such systems can often be described by stochastic differential equations, a central challenge is understanding how noise influences the inference of system parameters and dynamics from data. Traditional symbolic regression methods can uncover governing equations but typically ignore uncertainty. Conversely, Gaussian processes provide principled uncertainty quantification but offer little insight into the underlying dynamics. In this work, we bridge this gap with a hybrid symbolic regression and probabilistic machine learning framework that recovers the symbolic form of the governing equations while simultaneously inferring the uncertainty in the system parameters. The framework combines deep symbolic regression with Gaussian process-based maximum likelihood estimation to model the deterministic dynamics and the noise structure separately, without requiring prior assumptions about their functional forms. We verify the approach on numerical benchmarks, including harmonic, Duffing, and van der Pol oscillators, and validate it on an experimental system of coupled biological oscillators exhibiting synchronization, where the algorithm successfully identifies both the symbolic and the stochastic components. The framework is data-efficient, requiring as few as 100–1000 data points, and robust to noise, which demonstrates its broad potential in domains where uncertainty is intrinsic and where both the structure and the variability of dynamical systems must be understood.
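The two-stage split between deterministic dynamics and noise structure can be illustrated with a much simpler stand-in than the framework described above. The sketch below is not the authors' method: it assumes additive noise with a constant coefficient `sigma` acting on the velocity equation, assumes the drift is already known (as if symbolic regression had recovered it), and replaces the Gaussian process-based maximum likelihood step with the closed-form Gaussian maximum likelihood estimate for that special case. The names `simulate_sde`, `estimate_sigma`, and `harmonic` are hypothetical.

```python
import math
import random


def simulate_sde(drift, sigma, x0, v0, dt, n_steps, seed=0):
    """Euler–Maruyama integration of dx = v dt, dv = drift(x, v) dt + sigma dW.

    Assumption: noise enters only the velocity equation and is additive
    with a constant coefficient sigma.
    """
    rng = random.Random(seed)
    x, v = x0, v0
    xs, vs = [x], [v]
    for _ in range(n_steps):
        dw = rng.gauss(0.0, math.sqrt(dt))  # Brownian increment with variance dt
        # Both updates use the values of x and v from the start of the step.
        x, v = x + v * dt, v + drift(x, v) * dt + sigma * dw
        xs.append(x)
        vs.append(v)
    return xs, vs


def estimate_sigma(xs, vs, drift, dt):
    """Closed-form Gaussian MLE of a constant diffusion coefficient.

    Subtracting the (assumed known) drift from each velocity increment
    leaves sigma * dW, whose variance is sigma**2 * dt.
    """
    residuals = [
        (vs[i + 1] - vs[i]) - drift(xs[i], vs[i]) * dt
        for i in range(len(vs) - 1)
    ]
    return math.sqrt(sum(r * r for r in residuals) / (len(residuals) * dt))


# Hypothetical drift of an undamped harmonic oscillator (omega = 1),
# standing in for an equation already recovered by symbolic regression.
def harmonic(x, v):
    return -x


dt = 0.01
xs, vs = simulate_sde(harmonic, sigma=0.3, x0=1.0, v0=0.0, dt=dt, n_steps=1000)
sigma_hat = estimate_sigma(xs, vs, harmonic, dt)
print(f"true sigma = 0.3, estimated sigma = {sigma_hat:.3f}")
```

With the drift fixed, the noise level follows directly from the residual velocity increments. The abstract states that the framework makes no prior assumption about the functional form of the noise, whereas this sketch hard-codes a constant `sigma`, so it should be read only as an illustration of separating the deterministic and stochastic parts.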

Fighting the curse of dimensionality: A machine learning approach to finding global optima

Oct 28, 2021

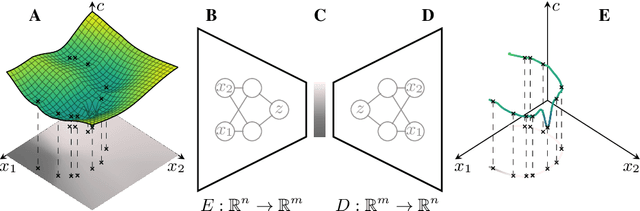

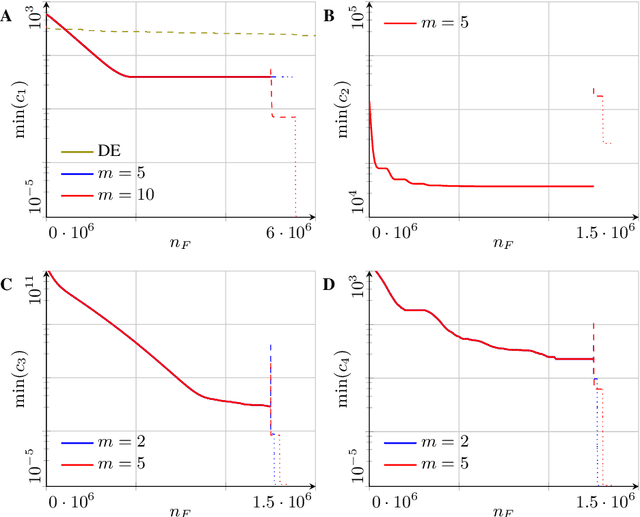

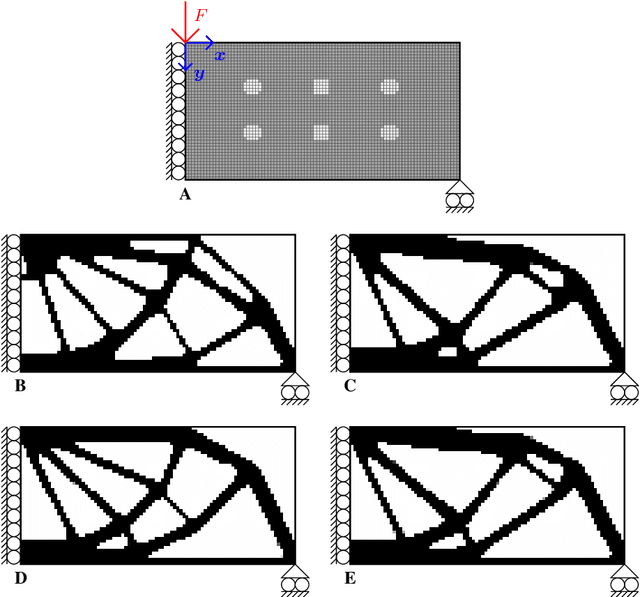

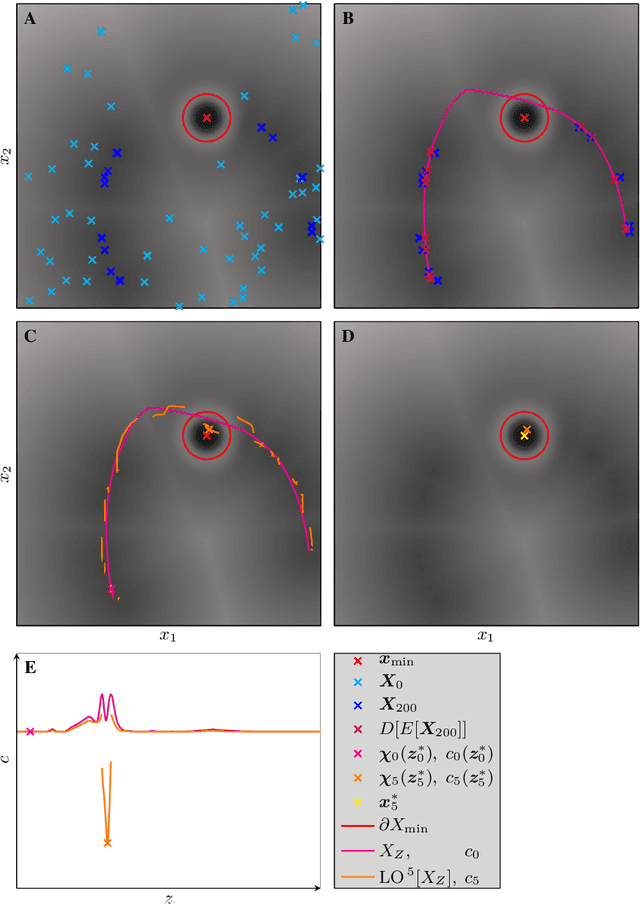

Abstract: Finding global optima in high-dimensional optimization problems is extremely challenging because the number of function evaluations required to sufficiently explore the design space grows exponentially with its dimensionality. Furthermore, non-convex cost functions render local gradient-based search techniques ineffective. To overcome these difficulties, we demonstrate the use of machine learning to find global minima: autoencoders are used to drastically reduce the dimensionality of the search space, and optima are found by surveying the lower-dimensional latent spaces. The methodology is tested on benchmark functions and on a structural optimization problem, where we show that, by exploiting the behavior of certain cost functions, the approach obtains the global optimum at best and, at worst, results superior to those of established optimization procedures.
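The exponential growth in evaluations, and why searching a low-dimensional latent space helps, can be seen in a toy setting. The sketch below assumes a fixed linear map `decode` in place of a trained autoencoder's decoder, uses the Rastrigin function as an example non-convex cost, and performs a plain grid search over the latent space; none of these choices come from the paper, and all names are hypothetical. A grid with k points per axis costs k**2 evaluations in a 2-D latent space but k**D in the full D-dimensional design space.

```python
import itertools
import math

D = 50  # dimension of the full design space (illustrative choice)


def decode(z):
    """Hypothetical stand-in for a trained decoder: a fixed linear map
    from a 2-D latent code z to a D-dimensional design vector."""
    z1, z2 = z
    return [z1 * math.cos(0.1 * i) + z2 * math.sin(0.1 * i) for i in range(D)]


def cost(x):
    """Rastrigin function: highly non-convex, global minimum 0 at x = 0."""
    return sum(xi * xi - 10.0 * math.cos(2.0 * math.pi * xi) + 10.0 for xi in x)


def latent_grid_search(k, lo=-1.0, hi=1.0):
    """Exhaustively evaluate the cost on a k x k grid over the latent space."""
    axis = [lo + (hi - lo) * j / (k - 1) for j in range(k)]
    best = min(itertools.product(axis, repeat=2), key=lambda z: cost(decode(z)))
    return best, cost(decode(best)), k ** 2


k = 21
best_z, best_cost, latent_evals = latent_grid_search(k)
full_grid_evals = k ** D  # same per-axis resolution over all D design variables
print(best_z, best_cost, latent_evals)
print(f"full-space grid would need {full_grid_evals:.2e} evaluations")
```

The search succeeds here only because the decoder was constructed so that its range contains the cost's global minimum; a learned decoder offers no such guarantee, which is consistent with the abstract's qualification that the results depend on exploiting the behavior of certain cost functions.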