Abdelhafid Zenati

Lightweight Spatiotemporal Highway Lane Detection via 3D-ResNet and PINet with ROI-Aware Attention

Apr 02, 2026

Abstract: This paper presents a lightweight, end-to-end highway lane detection architecture that jointly captures spatial and temporal information for robust performance in real-world driving scenarios. Building on the strengths of 3D convolutional neural networks and instance segmentation, we propose two models that integrate a 3D-ResNet encoder with a Point Instance Network (PINet) decoder. The first model enhances multi-scale feature representation using a Feature Pyramid Network (FPN) and Self-Attention mechanism to refine spatial dependencies. The second model introduces a Region of Interest (ROI) detection head to selectively focus on lane-relevant regions, thereby improving precision and reducing computational complexity. Experiments conducted on the TuSimple dataset (highway driving scenarios) demonstrate that the proposed second model achieves 93.40% accuracy while significantly reducing false negatives. Compared to existing 2D and 3D baselines, our approach achieves improved performance with fewer parameters and reduced latency. The architecture has been validated through offline training and real-time inference in the Autonomous Systems Laboratory at City, St George's University of London. These results suggest that the proposed models are well-suited for integration into Advanced Driver Assistance Systems (ADAS), with potential scalability toward full Lane Assist Systems (LAS).

Explainability in Deep Reinforcement Learning, a Review into Current Methods and Applications

Jul 13, 2022

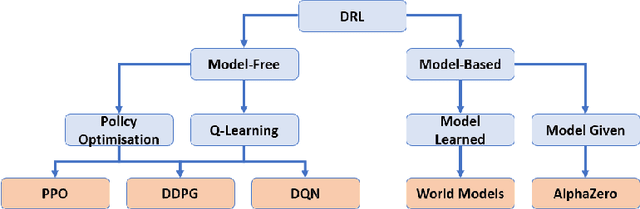

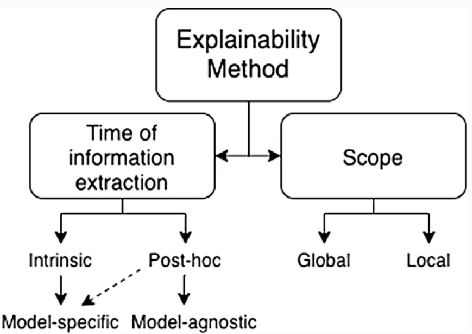

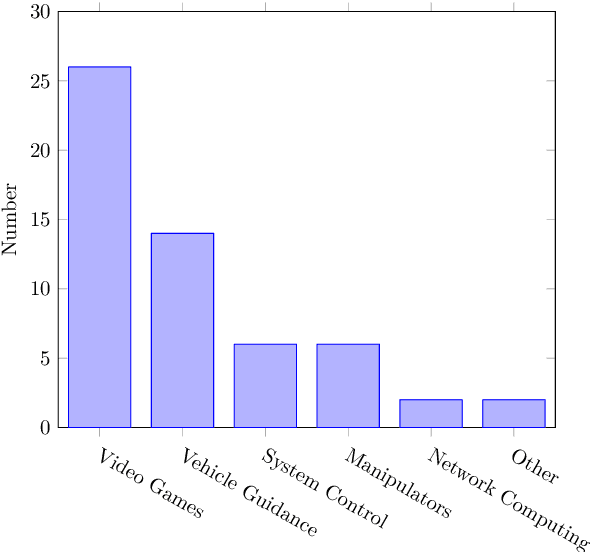

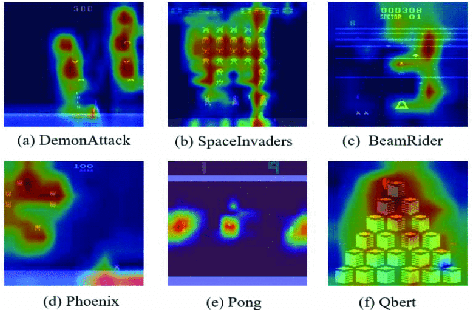

Abstract: The use of Deep Reinforcement Learning (DRL) schemes has increased dramatically since their first introduction in 2015. Although they are finding uses in many different applications, they still suffer from a lack of interpretability. This has bred a lack of understanding and trust in DRL solutions among researchers and the general public. To address this problem, the field of explainable artificial intelligence (XAI) has emerged. It comprises a variety of methods that seek to open the DRL black boxes, ranging from interpretable symbolic decision trees to numerical methods such as Shapley Values. This review examines which methods are being used and in which applications, in order to identify which models are best suited to each application and whether any method is being underutilised.
