Abdallah Shami

CorrFL: Correlation-Based Neural Network Architecture for Unavailability Concerns in a Heterogeneous IoT Environment

Jul 22, 2023

Abstract:The Federated Learning (FL) paradigm faces several challenges that limit its application in real-world environments. These challenges include the local models' architecture heterogeneity and the unavailability of distributed Internet of Things (IoT) nodes due to connectivity problems. These factors raise the question: "How can the available models fill the training gap of the unavailable models?" This question is referred to as the "Oblique Federated Learning" problem. This problem is encountered in the studied environment, which includes distributed IoT nodes responsible for predicting CO2 concentrations. This paper proposes the Correlation-based FL (CorrFL) approach, influenced by the representational learning field, to address this problem. CorrFL projects the various model weights to a common latent space to address the model heterogeneity. Its loss function minimizes the reconstruction loss when models are absent and maximizes the correlation between the generated models. The latter factor is critical because of the intersection of the feature spaces of the IoT devices. CorrFL is evaluated on a realistic use case involving the unavailability of one IoT device and heightened activity levels that reflect occupancy. The CorrFL models generated for the unavailable IoT device from the available ones trained on the new environment are compared against models trained on different use cases, referred to as the benchmark model. The evaluation criteria combine the mean absolute error (MAE) of predictions and the impact of the amount of exchanged data on the prediction performance improvement. Through a comprehensive experimental procedure, the CorrFL model outperformed the benchmark model in every criterion.

* 17 pages, 12 figures, IEEE Transactions on Network and Service Management

Modular Simulation Environment Towards OTN AI-based Solutions

Jun 19, 2023

Abstract:The current trend toward highly dynamic and virtualized networking infrastructure has made automated networking a critical requirement. Multiple solutions have been proposed to address this, including the most sought-after machine learning (ML)-based solutions. However, the main hurdle when developing Next Generation Networks is the availability of large datasets, especially for 5G and beyond and Optical Transport Networking (OTN) traffic. This need led researchers to look for viable simulation environments to generate the necessary volume with highly configurable real-life scenarios, which can be costly to set up and may require subscription-based products and even the purchase of dedicated hardware, depending on the supplier. We aim to address this issue by generating high-volume, high-fidelity datasets through a modular solution that adapts to the user's available resources. These datasets can be used to develop better versions of the aforementioned ML solutions, resulting in higher accuracy and adaptation to real-life networking traffic.

Intelligent O-RAN Traffic Steering for URLLC Through Deep Reinforcement Learning

Mar 03, 2023

Abstract:The goal of Next-Generation Networks is to improve upon the current networking paradigm, especially in providing higher data rates, near-real-time latencies, and near-perfect quality of service. However, existing radio access network (RAN) architectures lack sufficient flexibility and intelligence to meet those demands. Open RAN (O-RAN) is a promising paradigm for building a virtualized and intelligent RAN architecture. This paper presents a Machine Learning (ML)-based Traffic Steering (TS) scheme to predict network congestion and then proactively steer O-RAN traffic to avoid it and reduce the expected queuing delay. To achieve this, we propose an optimized setup focusing on safeguarding both latency and reliability to serve URLLC applications. The proposed solution consists of a two-tiered ML strategy based on Naive Bayes Classifier and deep Q-learning. Our solution is evaluated against traditional reactive TS approaches that are offered as xApps in O-RAN and shows an average of 15.81 percent decrease in queuing delay across all deployed SFCs.

Similarity-Based Predictive Maintenance Framework for Rotating Machinery

Dec 30, 2022

Abstract:Within smart manufacturing, data-driven techniques are commonly adopted for condition monitoring and fault diagnosis of rotating machinery. Classical approaches use supervised learning, where a classifier is trained on labeled data to predict or classify different operational states of the machine. However, in most industrial applications, labeled data is limited in terms of its size and type. Hence, it cannot serve the training purpose. In this paper, this problem is tackled by addressing the classification task as a similarity measure to a reference sample rather than a supervised classification task. Similarity-based approaches require a limited amount of labeled data and hence meet the requirements of real-world industrial applications. Accordingly, the paper introduces a similarity-based framework for predictive maintenance (PdM) of rotating machinery. For each operational state of the machine, a reference vibration signal is generated and labeled according to the machine's operational condition. Subsequently, statistical time analysis, fast Fourier transform (FFT), and short-time Fourier transform (STFT) are used to extract features from the captured vibration signals. For each feature type, three similarity metrics, namely structural similarity measure (SSM), cosine similarity, and Euclidean distance, are used to measure the similarity between test signals and reference signals in the feature space. Hence, nine settings in terms of feature type-similarity measure combinations are evaluated. Experimental results confirm the effectiveness of similarity-based approaches in achieving very high accuracy with moderate computational requirements compared to machine learning (ML)-based methods. Further, the results indicate that using FFT features with cosine similarity leads to better performance than the other settings.
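The FFT-plus-cosine-similarity setting described in this abstract can be illustrated with a minimal sketch. It is not the paper's implementation: the function names, the naive DFT (used only to avoid external dependencies), and the synthetic sine-wave "vibration" signals are all assumptions made for illustration.

```python
import cmath
import math


def dft_magnitude(signal):
    """Magnitude spectrum from a naive O(n^2) DFT (first half of the bins)."""
    n = len(signal)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * t / n)
                for t, x in enumerate(signal)))
        for k in range(n // 2)
    ]


def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def classify(test_signal, references):
    """Return the label of the reference whose spectrum is most similar."""
    feats = dft_magnitude(test_signal)
    return max(
        references,
        key=lambda label: cosine_similarity(feats, dft_magnitude(references[label])),
    )


def sine(freq_bin, n=64, phase=0.0):
    """Synthetic stand-in for a vibration signal (hypothetical data)."""
    return [math.sin(2 * math.pi * freq_bin * t / n + phase) for t in range(n)]


# One labeled reference signal per operational state (made-up states).
references = {
    "healthy": sine(4),
    "faulty": [a + 0.8 * b for a, b in zip(sine(4), sine(13))],
}

# A test signal with a different phase and amplitude than the faulty reference.
test_signal = [a + 0.7 * b for a, b in zip(sine(4, phase=0.5), sine(13, phase=1.1))]
predicted = classify(test_signal, references)
print(predicted)
```

Because the comparison is made on magnitude spectra, the phase offset of the test signal does not affect the match; only one labeled reference per state is needed.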

A Multi-Stage Automated Online Network Data Stream Analytics Framework for IIoT Systems

Oct 05, 2022

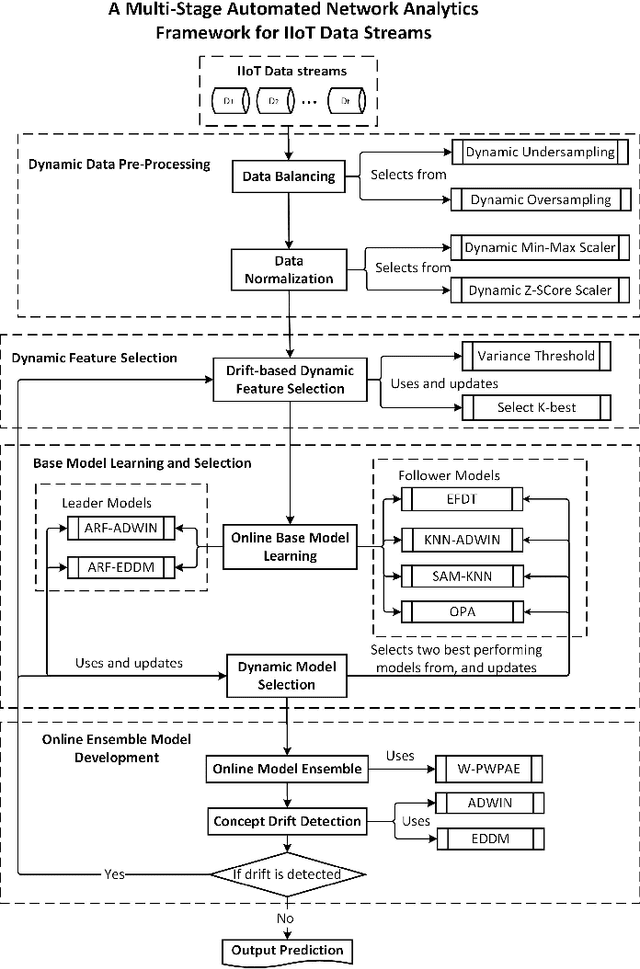

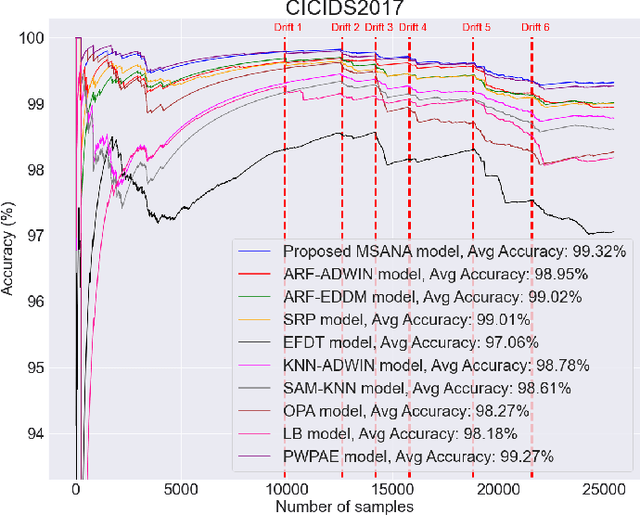

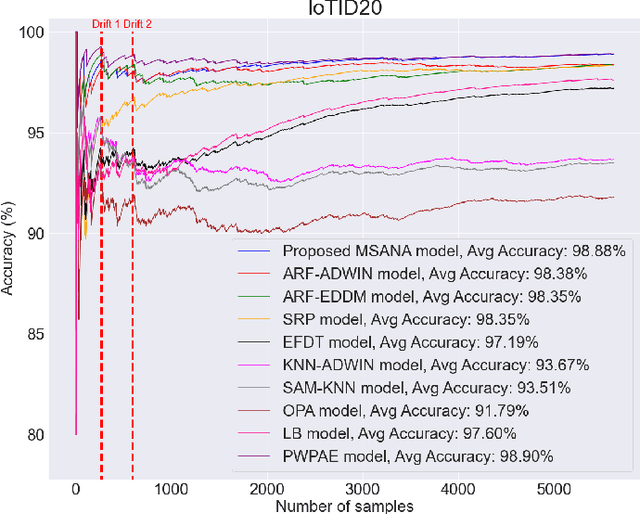

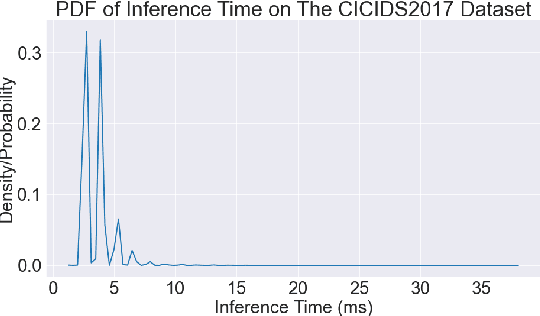

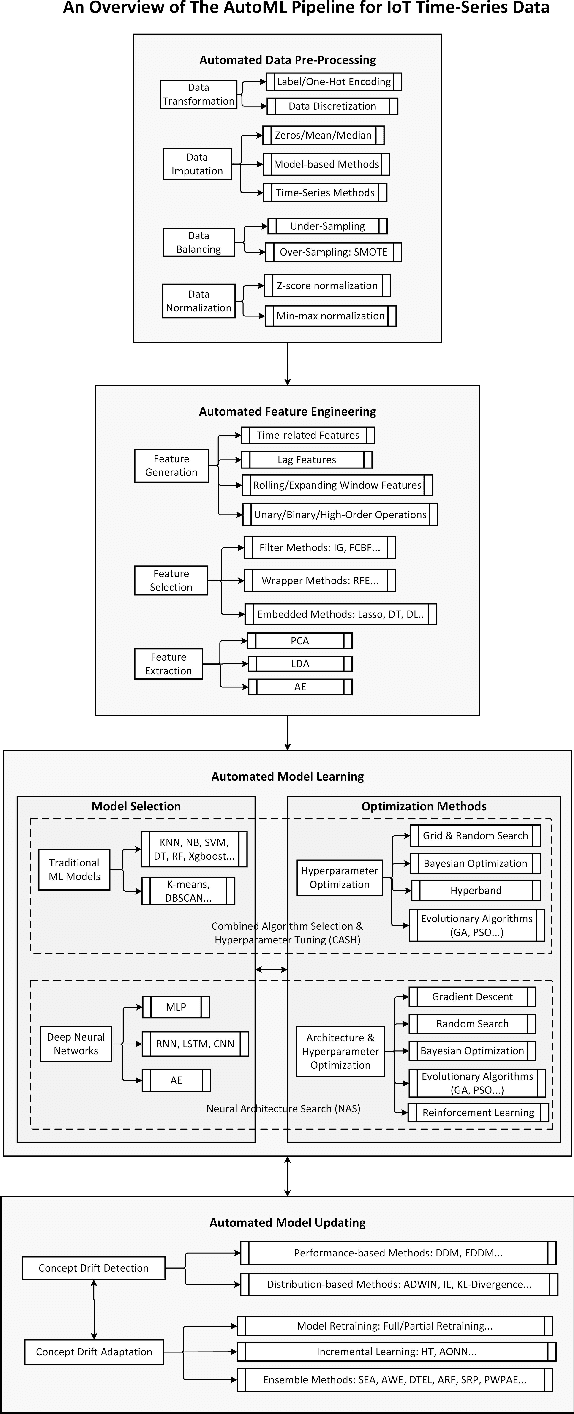

Abstract:Industry 5.0 aims at maximizing the collaboration between humans and machines. Machines are capable of automating repetitive jobs, while humans handle creative tasks. As a critical component of Industrial Internet of Things (IIoT) systems for service delivery, network data stream analytics often encounter concept drift issues due to dynamic IIoT environments, causing performance degradation and automation difficulties. In this paper, we propose a novel Multi-Stage Automated Network Analytics (MSANA) framework for concept drift adaptation in IIoT systems, consisting of dynamic data pre-processing, the proposed Drift-based Dynamic Feature Selection (DD-FS) method, dynamic model learning & selection, and the proposed Window-based Performance Weighted Probability Averaging Ensemble (W-PWPAE) model. It is a complete automated data stream analytics framework that enables automatic, effective, and efficient data analytics for IIoT systems in Industry 5.0. Experimental results on two public IoT datasets demonstrate that the proposed framework outperforms state-of-the-art methods for IIoT data stream analytics.
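The idea behind a window-based, performance-weighted probability averaging ensemble can be sketched as follows. The abstract does not give the weighting formula, so this sketch assumes each base model is weighted by its accuracy over a sliding window of recent samples; the class name, method names, and the weighting rule are hypothetical and may differ from W-PWPAE as published.

```python
from collections import deque


class WindowWeightedEnsemble:
    """Averages class probabilities, weighting models by recent accuracy.

    Assumption: weight = accuracy over the last `window` labeled samples.
    """

    def __init__(self, n_models, window=50):
        # One bounded history of 0/1 correctness flags per base model.
        self.history = [deque(maxlen=window) for _ in range(n_models)]

    def weights(self):
        acc = [sum(h) / len(h) if h else 1.0 for h in self.history]
        total = sum(acc)
        if total == 0:
            # All models wrong on the whole window: fall back to equal weights.
            return [1.0 / len(acc)] * len(acc)
        return [a / total for a in acc]

    def predict(self, probas):
        """probas[m][c] is model m's probability for class c."""
        w = self.weights()
        n_classes = len(probas[0])
        avg = [
            sum(w[m] * probas[m][c] for m in range(len(probas)))
            for c in range(n_classes)
        ]
        return max(range(n_classes), key=avg.__getitem__), avg

    def update(self, probas, true_label):
        """Record whether each model's top class matched the true label."""
        for h, p in zip(self.history, probas):
            top = max(range(len(p)), key=p.__getitem__)
            h.append(1 if top == true_label else 0)


ens = WindowWeightedEnsemble(n_models=2, window=3)
for _ in range(3):
    # Model 0 is right, model 1 is wrong on each labeled sample.
    ens.update([[0.9, 0.1], [0.2, 0.8]], true_label=0)

label, avg = ens.predict([[0.6, 0.4], [0.1, 0.9]])
print(label, avg)
```

With a bounded window, a model that degrades after a concept drift loses weight within a few samples, and it can regain weight once it adapts.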

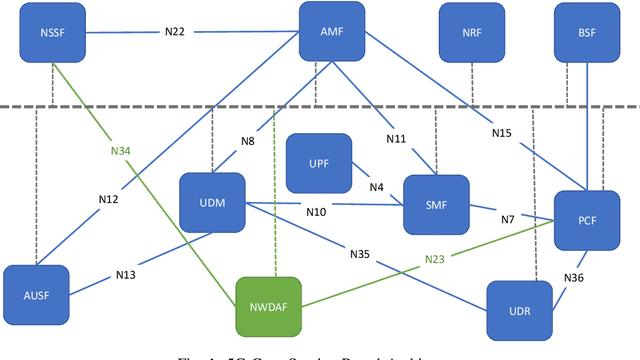

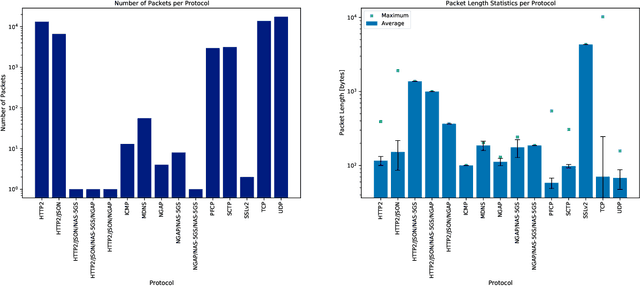

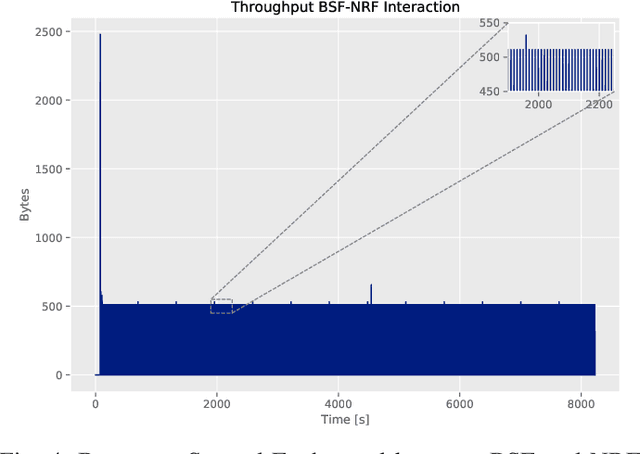

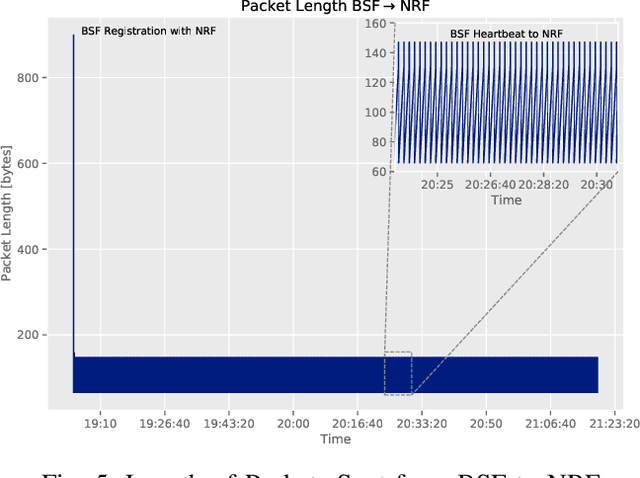

An NWDAF Approach to 5G Core Network Signaling Traffic: Analysis and Characterization

Sep 29, 2022

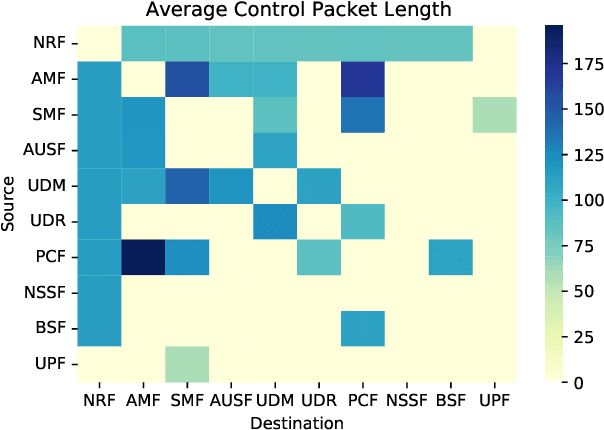

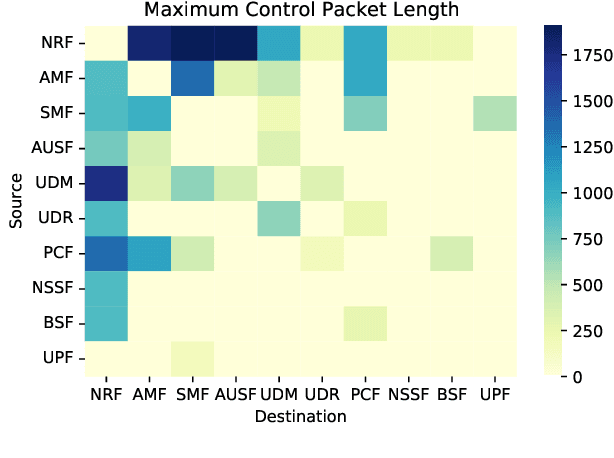

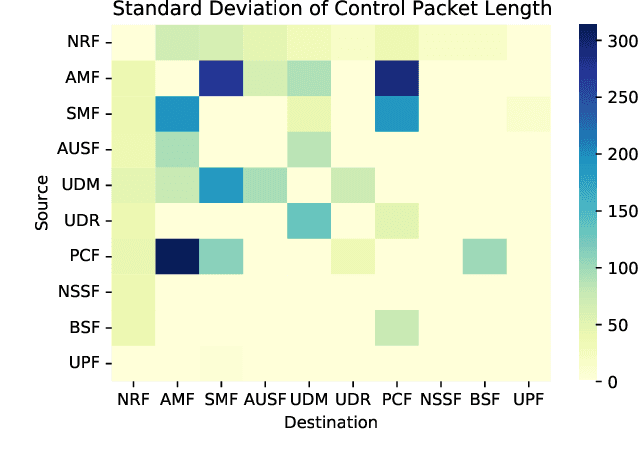

Abstract:Data-driven approaches and paradigms have become promising solutions for achieving efficient network performance through optimization. These approaches focus on state-of-the-art machine learning techniques that can address the needs of 5G networks and the networks of tomorrow, such as proactive load balancing. In contrast to model-based approaches, data-driven approaches do not need accurate models to tackle the target problem, and their associated architectures provide a flexibility of available system parameters that improves the feasibility of learning-based algorithms in mobile wireless networks. The work presented in this paper focuses on demonstrating a working system prototype of the 5G Core (5GC) network and the Network Data Analytics Function (NWDAF) used to bring the benefits of data-driven techniques to fruition. Analyses of the network-generated data explore core intra-network interactions through unsupervised learning and clustering, and these results are evaluated as insights for future opportunities and work.

IoT Data Analytics in Dynamic Environments: From An Automated Machine Learning Perspective

Sep 16, 2022

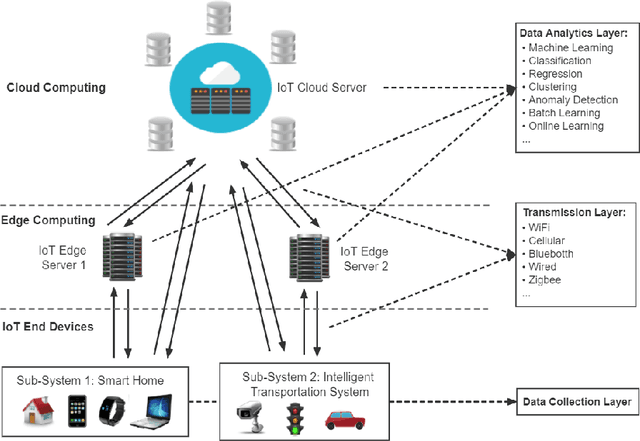

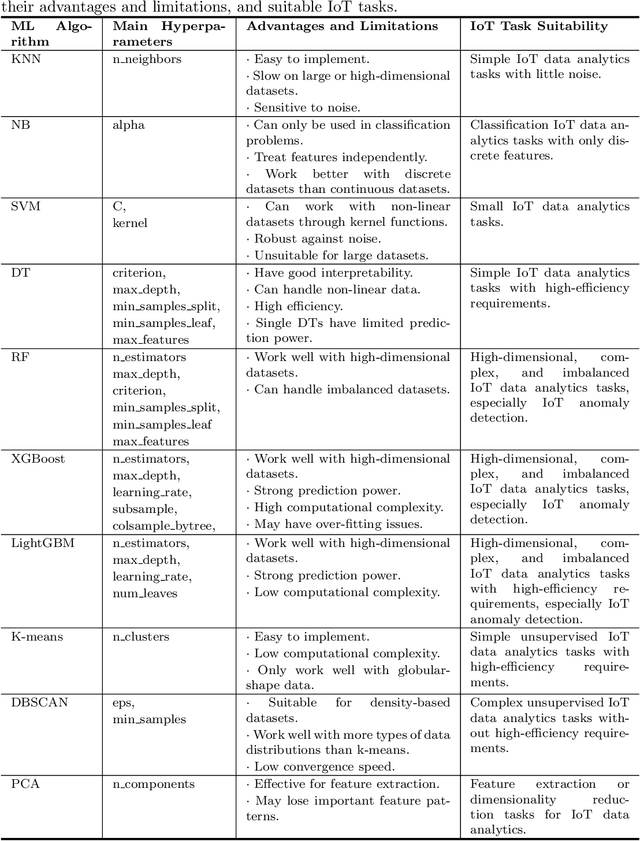

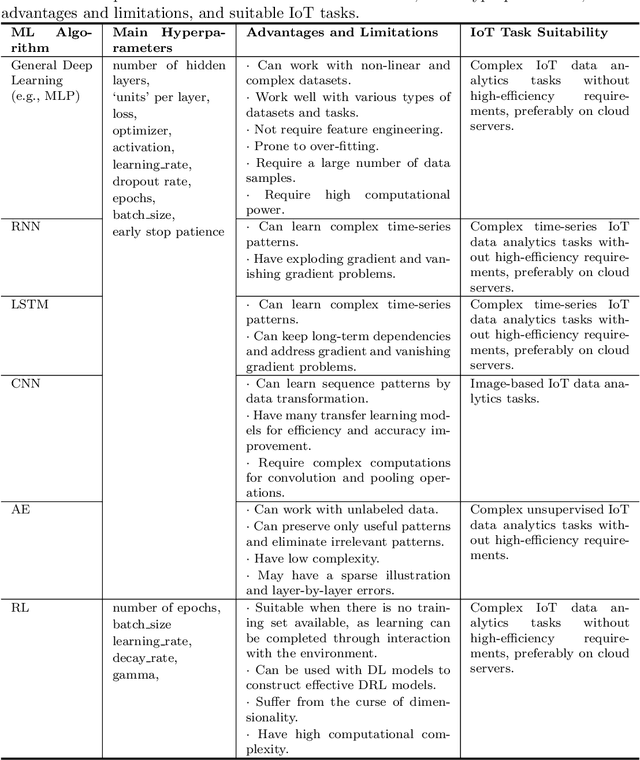

Abstract:With the wide spread of sensors and smart devices in recent years, the data generation speed of the Internet of Things (IoT) systems has increased dramatically. In IoT systems, massive volumes of data must be processed, transformed, and analyzed on a frequent basis to enable various IoT services and functionalities. Machine Learning (ML) approaches have shown their capacity for IoT data analytics. However, applying ML models to IoT data analytics tasks still faces many difficulties and challenges, specifically, effective model selection, design/tuning, and updating, which have brought massive demand for experienced data scientists. Additionally, the dynamic nature of IoT data may introduce concept drift issues, causing model performance degradation. To reduce human efforts, Automated Machine Learning (AutoML) has become a popular field that aims to automatically select, construct, tune, and update machine learning models to achieve the best performance on specified tasks. In this paper, we conduct a review of existing methods in the model selection, tuning, and updating procedures in the area of AutoML in order to identify and summarize the optimal solutions for every step of applying ML algorithms to IoT data analytics. To justify our findings and help industrial users and researchers better implement AutoML approaches, a case study of applying AutoML to IoT anomaly detection problems is conducted in this work. Lastly, we discuss and classify the challenges and research directions for this domain.

* Published in Engineering Applications of Artificial Intelligence (Elsevier, IF:7.8); code and an AutoML tutorial are available on GitHub: https://github.com/Western-OC2-Lab/AutoML-Implementation-for-Static-and-Dynamic-Data-Analytics

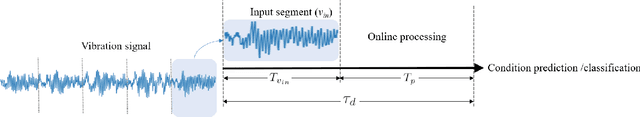

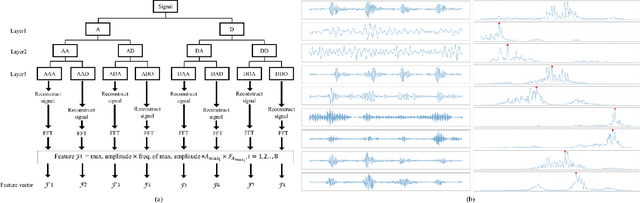

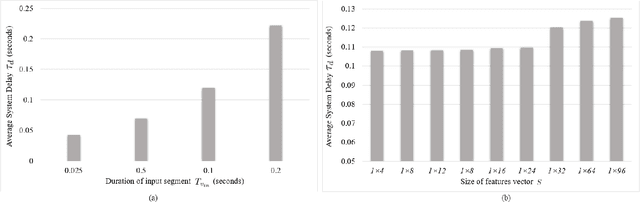

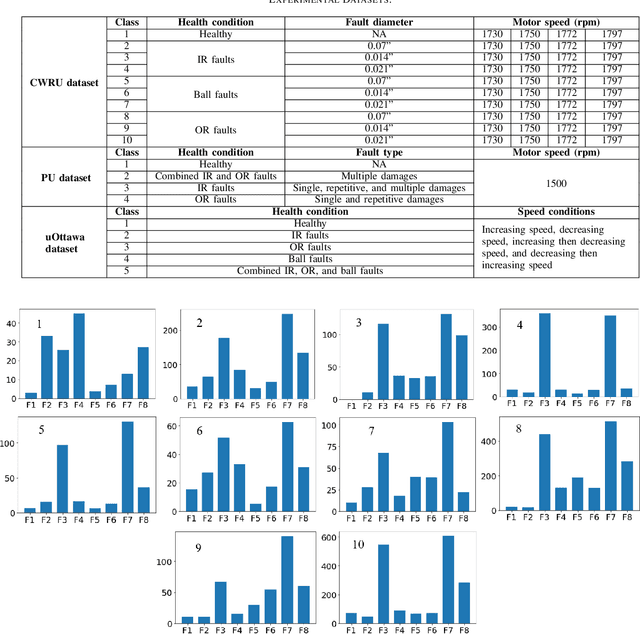

A Hybrid Method for Condition Monitoring and Fault Diagnosis of Rolling Bearings With Low System Delay

Aug 11, 2022

Abstract:Vibration-based condition monitoring techniques are commonly used to detect and diagnose failures of rolling bearings. Accuracy and delay in detecting and diagnosing different types of failures are the main performance measures in condition monitoring. Achieving high accuracy with low delay improves system reliability and prevents catastrophic equipment failure. Further, delay is crucial to remote condition monitoring and time-sensitive industrial applications. While most of the proposed methods focus on accuracy, little attention has been paid to addressing the delay introduced in the condition monitoring process. In this paper, we attempt to bridge this gap and propose a hybrid method for vibration-based condition monitoring and fault diagnosis of rolling bearings that outperforms previous methods in terms of accuracy and delay. Specifically, we address the overall delay in vibration-based condition monitoring systems and introduce the concept of system delay to assess it. Then, we present the proposed method for condition monitoring. It uses Wavelet Packet Transform (WPT) and Fourier analysis to decompose short-duration input segments of the vibration signal into elementary waveforms and obtain their spectral contents. Accordingly, energy concentration in the spectral components (caused by defect-induced transient vibrations) is utilized to extract a small number of features with high discriminative capabilities. Subsequently, a Bayesian optimization-based Random Forest (RF) algorithm is used to classify healthy and faulty operating conditions under varying motor speeds. The experimental results show that the proposed method can achieve high accuracy with low system delay.

LCCDE: A Decision-Based Ensemble Framework for Intrusion Detection in The Internet of Vehicles

Aug 05, 2022

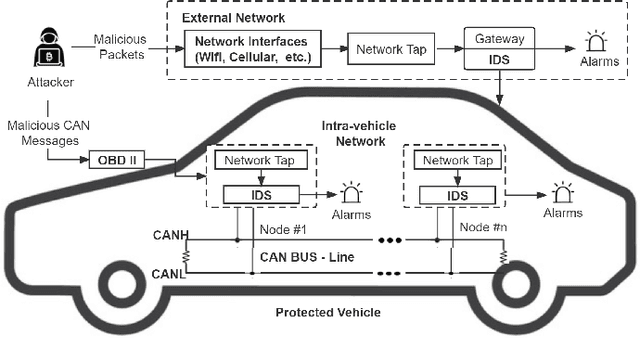

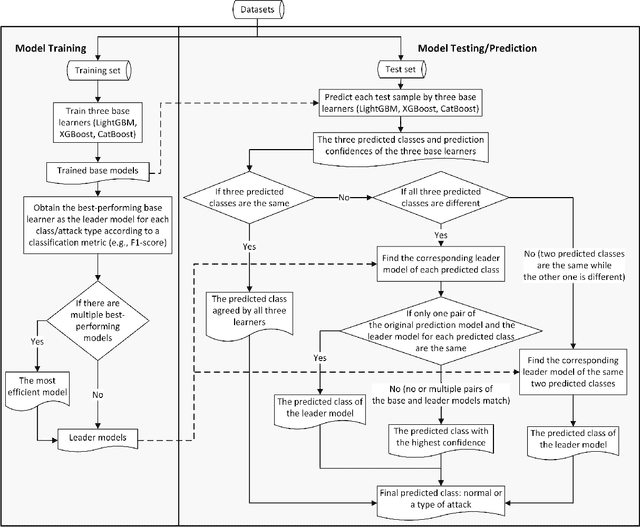

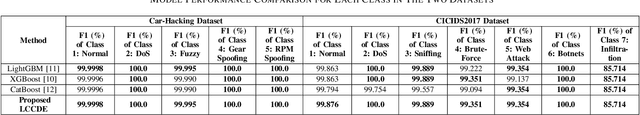

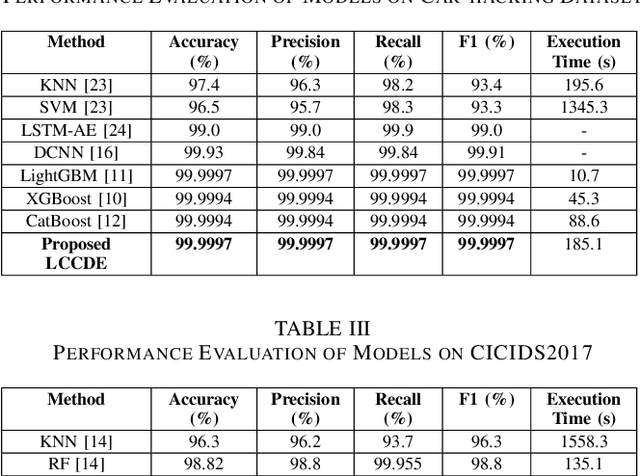

Abstract:Modern vehicles, including autonomous vehicles and connected vehicles, have adopted an increasing variety of functionalities through connections and communications with other vehicles, smart devices, and infrastructures. However, the growing connectivity of the Internet of Vehicles (IoV) also increases the vulnerabilities to network attacks. To protect IoV systems against cyber threats, Intrusion Detection Systems (IDSs) that can identify malicious cyber-attacks have been developed using Machine Learning (ML) approaches. To accurately detect various types of attacks in IoV networks, we propose a novel ensemble IDS framework named Leader Class and Confidence Decision Ensemble (LCCDE). It is constructed by determining the best-performing ML model among three advanced ML algorithms (XGBoost, LightGBM, and CatBoost) for every class or type of attack. The class leader models with their prediction confidence values are then utilized to make accurate decisions regarding the detection of various types of cyber-attacks. Experiments on two public IoV security datasets (Car-Hacking and CICIDS2017 datasets) demonstrate the effectiveness of the proposed LCCDE for intrusion detection on both intra-vehicle and external networks.
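The leader-class idea in this abstract can be sketched in a few lines. The abstract does not spell out the decision procedure, so the rule below is a simplified assumption: if all models agree, accept the shared label; otherwise trust only predictions made by the leader model of the predicted class and pick the most confident one. The function names, the per-class F1 scores, and the class labels are made up for illustration.

```python
def select_leaders(per_class_f1):
    """Map each class to the model with the best validation F1 for that class.

    per_class_f1: {model_name: {class_label: f1_score}}
    """
    classes = next(iter(per_class_f1.values())).keys()
    return {c: max(per_class_f1, key=lambda m: per_class_f1[m][c]) for c in classes}


def decide(predictions, leaders):
    """Combine per-model (label, confidence) predictions into one label.

    predictions: {model_name: (class_label, confidence)}
    """
    labels = {label for label, _ in predictions.values()}
    if len(labels) == 1:
        return labels.pop()  # unanimous: no arbitration needed
    # Keep only predictions made by the leader of the class being predicted.
    trusted = [
        (conf, label)
        for model, (label, conf) in predictions.items()
        if leaders.get(label) == model
    ]
    # Assumed fallback: if no leader vouches for its own class, use all models.
    pool = trusted or [(conf, label) for label, conf in predictions.values()]
    return max(pool)[1]


# Hypothetical validation scores, not results from the paper.
f1 = {
    "xgboost": {"normal": 0.99, "dos": 0.90},
    "lightgbm": {"normal": 0.97, "dos": 0.95},
    "catboost": {"normal": 0.98, "dos": 0.93},
}
leaders = select_leaders(f1)

predictions = {
    "xgboost": ("normal", 0.70),
    "lightgbm": ("dos", 0.85),
    "catboost": ("normal", 0.95),
}
decision = decide(predictions, leaders)
print(leaders, decision)
```

In this example a majority vote would return "normal", whereas the leader-based rule returns "dos" because the model that is strongest on that class predicts it with higher confidence than the "normal" leader does.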

Towards Supporting Intelligence in 5G/6G Core Networks: NWDAF Implementation and Initial Analysis

May 30, 2022

Abstract:Wireless networks, in the fifth-generation and beyond, must support diverse network applications which will support the numerous and demanding connections of today's and tomorrow's devices. Requirements such as high data rates, low latencies, and reliability are crucial considerations and artificial intelligence is incorporated to achieve these requirements for a large number of connected devices. Specifically, intelligent methods and frameworks for advanced analysis are employed by the 5G Core Network Data Analytics Function (NWDAF) to detect patterns and ascribe detailed action information to accommodate end users and improve network performance. To this end, the work presented in this paper incorporates a functional NWDAF into a 5G network developed using open source software. Furthermore, an analysis of the network data collected by the NWDAF and the valuable insights which can be drawn from it have been presented with detailed Network Function interactions. An example application of such insights used for intelligent network management is outlined. Finally, the expected limitations of 5G networks are discussed as motivation for the development of 6G networks.
