Aayush Kubba

Duration Aware Scheduling for ASR Serving Under Workload Drift

Mar 11, 2026

Abstract:Scheduling policies in large-scale Automatic Speech Recognition (ASR) serving pipelines play a key role in determining end-to-end (E2E) latency. Yet, widely used serving engines rely on first-come-first-served (FCFS) scheduling, which ignores variability in request duration and leads to head-of-line blocking under workload drift. We show that audio duration is an accurate proxy for job processing time in ASR models such as Whisper, and use this insight to enable duration-aware scheduling. We integrate two classical algorithms, Shortest Job First (SJF) and Highest Response Ratio Next (HRRN), into vLLM and evaluate them under realistic and drifted workloads. On LibriSpeech test-clean, compared to baseline, SJF reduces median E2E latency by up to 73% at high load, but increases 90th-percentile tail latency by up to 97% due to starvation of long requests. HRRN addresses this trade-off: it reduces median E2E latency by up to 28% while bounding tail-latency degradation to at most 24%. These gains persist under workload drift, with no throughput penalty and <0.1 ms scheduling overhead per request.
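The difference between the three policies named in the abstract can be illustrated with a small single-server simulation. This is a minimal sketch, not the paper's vLLM integration: the `Request` class, the `simulate` helper, and the `WORKLOAD` values are hypothetical, and service time is assumed to equal audio duration (the abstract only states that duration is a proxy for processing time). HRRN uses the classical response ratio, (waiting time + service time) / service time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    name: str
    arrival: float   # arrival time, in seconds
    duration: float  # audio duration, assumed here to equal service time


# Each policy returns a priority score; the queued request with the
# highest score is served next.
def fcfs(req: Request, now: float) -> float:
    return -req.arrival            # earliest arrival first


def sjf(req: Request, now: float) -> float:
    return -req.duration           # shortest job first


def hrrn(req: Request, now: float) -> float:
    wait = now - req.arrival
    return (wait + req.duration) / req.duration  # classical response ratio


def simulate(requests, policy):
    """Non-preemptive single-server simulation.

    Returns the service order and each request's end-to-end latency.
    """
    pending = sorted(requests, key=lambda r: r.arrival)
    queue, order, latency = [], [], {}
    now, i = 0.0, 0
    while i < len(pending) or queue:
        # Admit everything that has arrived by the current time.
        while i < len(pending) and pending[i].arrival <= now:
            queue.append(pending[i])
            i += 1
        if not queue:              # server idle: jump to the next arrival
            now = pending[i].arrival
            continue
        nxt = max(queue, key=lambda r: policy(r, now))
        queue.remove(nxt)
        now += nxt.duration
        order.append(nxt.name)
        latency[nxt.name] = now - nxt.arrival
    return order, latency


# Hypothetical workload: one long request (B) among shorter ones.
WORKLOAD = [
    Request("A", 0, 4),
    Request("B", 1, 8),
    Request("C", 2, 2),
    Request("D", 5, 2),
    Request("E", 6, 3),
]
```

On this toy workload, `simulate(WORKLOAD, sjf)` serves the long request `B` last, while `simulate(WORKLOAD, hrrn)` lets `B` overtake the later short requests once its waiting time has grown, which is the starvation trade-off the abstract describes.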

Improving Rare-Word Recognition of Whisper in Zero-Shot Settings

Feb 18, 2025

Abstract:Whisper, despite being trained on 680K hours of web-scaled audio data, faces difficulty in recognising rare words like domain-specific terms, with a solution being contextual biasing through prompting. To improve upon this method, in this paper, we propose a supervised learning strategy to fine-tune Whisper for contextual biasing instruction. We demonstrate that by using only 670 hours of Common Voice English set for fine-tuning, our model generalises to 11 diverse open-source English datasets, achieving a 45.6% improvement in recognition of rare words and 60.8% improvement in recognition of words unseen during fine-tuning over the baseline method. Surprisingly, our model's contextual biasing ability generalises even to languages unseen during fine-tuning.

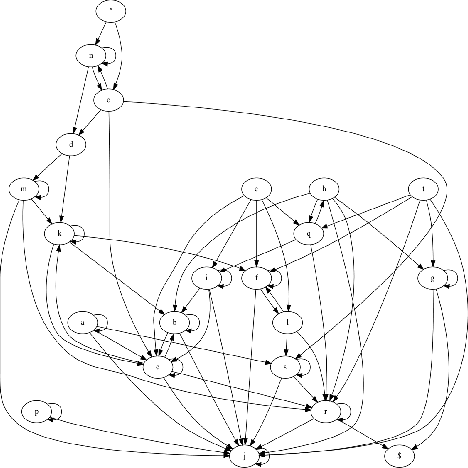

TSCAN : Dialog Structure discovery using SCAN

Jul 18, 2021

Abstract:Can we discover dialog structure by dividing utterances into labelled clusters? Can these labels be generated from the data? Typically, dialog structure discovery requires an ontology; however, by using unsupervised classification and self-labelling, we are able to intuit this structure without any labels or ontology. In this paper we apply SCAN (Semantic Clustering using Nearest Neighbors) to dialog data. We use BERT for the pretext task and an adaptation of SCAN for clustering and self-labelling. These clusters are used to identify transition probabilities and create the dialog structure. The self-labelling method used for SCAN makes these structures interpretable, as every cluster has a label. Because the approach is unsupervised, choosing evaluation metrics is a challenge; we use statistical measures as proxies for structure quality.
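The step from cluster labels to transition probabilities can be sketched as a simple count-based estimate. This is an illustrative assumption rather than the paper's stated procedure: the abstract does not say how transitions are estimated, and the `transition_probabilities` function and the label names below are hypothetical. Each dialog is taken to be a list of cluster labels, one per utterance, in order.

```python
from collections import Counter, defaultdict


def transition_probabilities(dialogs):
    """Estimate P(next label | current label) from labelled dialogs.

    `dialogs` is an iterable of label sequences, one sequence per dialog.
    Probabilities are relative frequencies of consecutive label pairs.
    """
    counts = defaultdict(Counter)
    for labels in dialogs:
        # Count each consecutive (current, next) label pair.
        for src, dst in zip(labels, labels[1:]):
            counts[src][dst] += 1
    return {
        src: {dst: n / sum(nexts.values()) for dst, n in nexts.items()}
        for src, nexts in counts.items()
    }


# Hypothetical cluster labels for two short dialogs.
DIALOGS = [
    ["greet", "ask", "answer", "bye"],
    ["greet", "ask", "ask", "answer"],
]
PROBS = transition_probabilities(DIALOGS)
```

Here `PROBS["ask"]` holds the estimated distribution over what follows an `ask` utterance; the resulting table can be read as a weighted graph over clusters, which is one way to represent a dialog structure.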
