"music": models, code, and papers

Modeling of the Latent Embedding of Music using Deep Neural Network

May 12, 2017

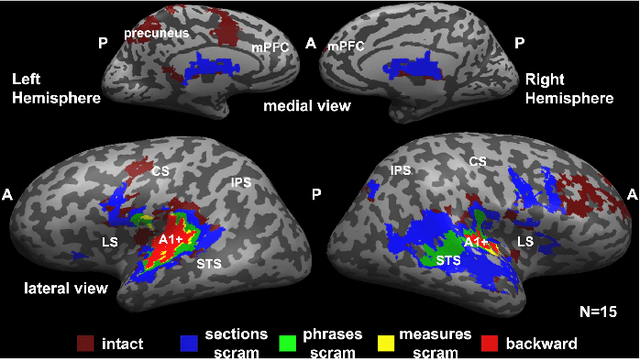

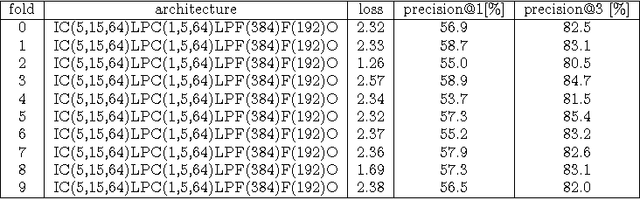

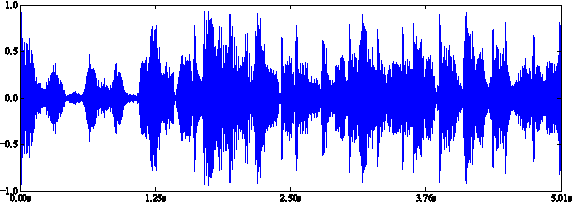

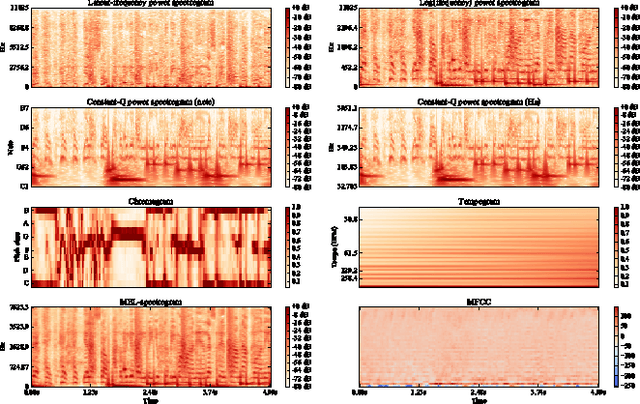

The volume and heterogeneity of digital music content are both huge, so it has become increasingly important and convenient to build recommendation or search systems that surface this content to users. Most recommendation models fall into two primary categories: collaborative filtering and content-based approaches. Collaborative filtering variants suffer from the well-known "cold start" and "long tail" problems: there is too little user interaction data to reveal user opinions or affinities for the content, and recommendations are skewed towards popular content. Content-based approaches are sometimes limited by the richness of the available content data, resulting in heavily biased and coarse recommendations. In recent years, deep neural networks have enjoyed great success in large-scale image and video recognition. In this paper, we propose and experiment with a deep convolutional neural network that imitates how the human brain processes hierarchical structures in auditory signals, such as music and speech, at various timescales. This approach can be used to discover latent factor models of music based upon acoustic hyper-images extracted from the raw audio waveforms. These latent embeddings can be used as features for subsequent models such as collaborative filtering, to build similarity metrics between songs, or to classify music according to training labels such as genre, mood, or sentiment.

Human Evaluation of Interpretability: The Case of AI-Generated Music Knowledge

Apr 15, 2020

Interpretability of machine learning models has gained more and more attention among researchers in the artificial intelligence (AI) and human-computer interaction (HCI) communities. Most existing work focuses on decision making, whereas we consider knowledge discovery. In particular, we focus on evaluating AI-discovered knowledge/rules in the arts and humanities. From a specific scenario, we present an experimental procedure to collect and assess human-generated verbal interpretations of AI-generated music theory/rules rendered as sophisticated symbolic/numeric objects. Our goal is to reveal both the possibilities and the challenges in such a process of decoding expressive messages from AI sources. We treat this as a first step towards 1) better design of AI representations that are human interpretable and 2) a general methodology to evaluate interpretability of AI-discovered knowledge representations.

Efficient Learning of Harmonic Priors for Pitch Detection in Polyphonic Music

May 19, 2017

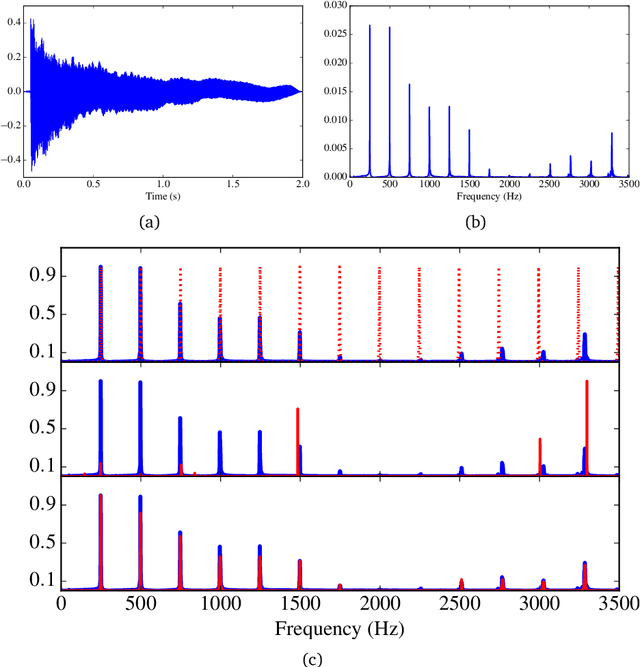

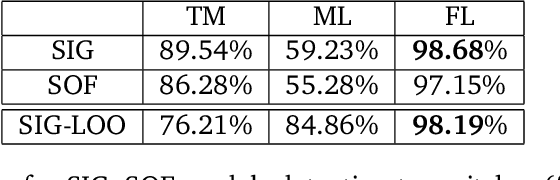

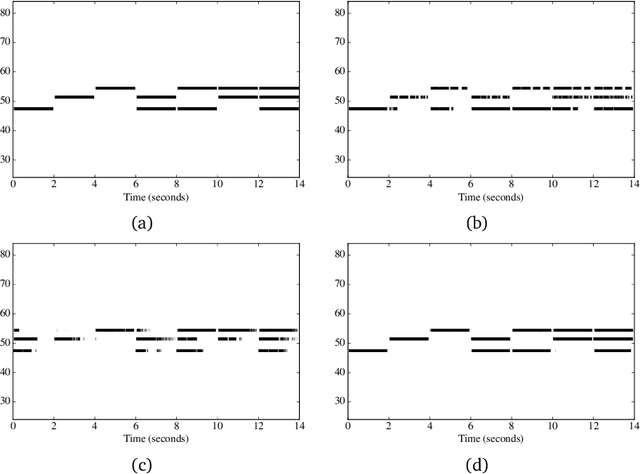

Automatic music transcription (AMT) aims to infer a latent symbolic representation of a piece of music (piano-roll), given a corresponding observed audio recording. Transcribing polyphonic music (when multiple notes are played simultaneously) is a challenging problem, due to highly structured overlapping between harmonics. We study whether the introduction of physically inspired Gaussian process (GP) priors into audio content analysis models improves the extraction of patterns required for AMT. Audio signals are described as a linear combination of sources. Each source is decomposed into the product of an amplitude envelope and a quasi-periodic component process. We introduce the Matérn spectral mixture (MSM) kernel for describing the frequency content of single notes. We consider two different regression approaches. In the sigmoid model, every pitch activation is independently non-linearly transformed. In the softmax model, several activation GPs are jointly non-linearly transformed, which introduces cross-correlation between activations. We use variational Bayes for approximate inference. We empirically evaluate how these models work in practice when transcribing polyphonic music. We demonstrate that, rather than encouraging dependency between activations, what is relevant for improving pitch detection is to learn priors that fit the frequency content of the sound events to detect.
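The difference between the sigmoid and softmax models can be illustrated with a minimal sketch. It is not the paper's implementation: the function names and the `latent` values are hypothetical, and plain arrays stand in for the latent activation GPs. It only shows that an element-wise sigmoid treats each pitch independently, whereas a softmax couples all pitches in a frame.

```python
import numpy as np

def sigmoid_activations(f):
    """Squash each latent value independently into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-f))

def softmax_activations(f):
    """Jointly normalise latent values across pitches (last axis) so they sum to 1."""
    shifted = f - f.max(axis=-1, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(shifted)
    return e / e.sum(axis=-1, keepdims=True)

# Hypothetical latent values for 3 pitches at 2 time frames (illustrative only).
latent = np.array([[2.0, -1.0, 0.5],
                   [0.0, 0.0, 3.0]])

independent = sigmoid_activations(latent)  # each entry depends only on its own input
coupled = softmax_activations(latent)      # each row sums to 1, so pitches compete
```

Raising one pitch's latent value leaves the other sigmoid outputs unchanged but lowers the other softmax outputs, which is the cross-correlation the abstract refers to.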

An Indoor Environment Sensing and Localization System via mmWave Phased Array

Jun 07, 2022

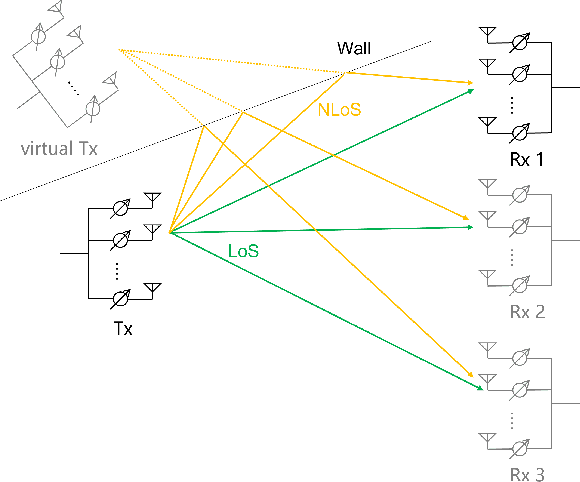

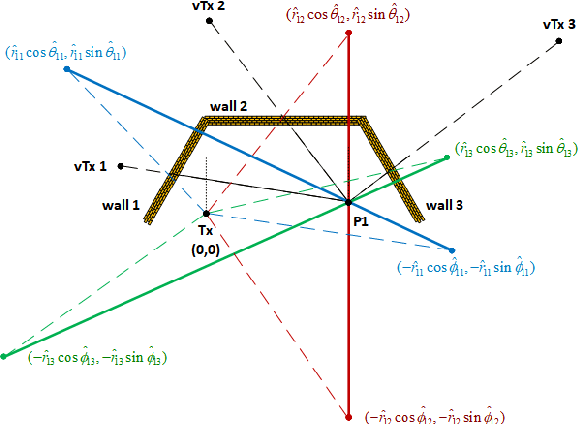

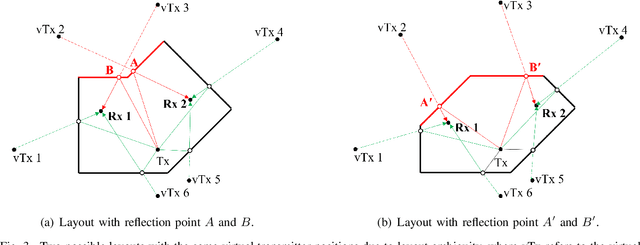

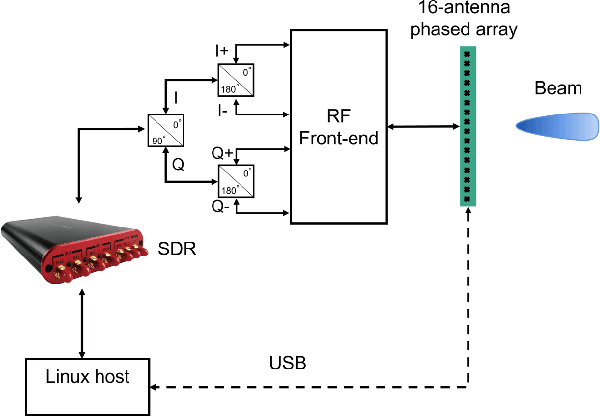

This paper presents mmReality, an indoor layout sensing and localization system operating in the 60 GHz millimeter wave (mmWave) band. The mmReality system consists of one transmitter and one mobile receiver, each with a phased array and a single radio frequency (RF) chain. To reconstruct the room layout, the pilot signal is delivered from the transmitter to the receiver via different pairs of transmission and receiving beams, so that the signals at all antenna elements can be resolved. Then, spatial smoothing and the two-dimensional multiple signal classification (MUSIC) algorithm are applied to detect the angles-of-arrival (AoAs) and angles-of-departure (AoDs) of the rays from the transmitter to the receiver. Moreover, multi-carrier ranging is adopted to measure the distance of each propagation path. By combining these geometrical parameters, the location of the receiver relative to the transmitter can be pinpointed, and both line-of-sight (LoS) and non-line-of-sight (NLoS) paths can be determined. The room layout can therefore be reconstructed by moving the receiver and repeating the above measurement at different locations in the room. Finally, we show that the reconstructed room layout can be used to locate a mobile device according to its AoA spectrum, even with a single access point.
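For readers unfamiliar with MUSIC, the core idea can be sketched in one dimension. This is a simplified illustration, not the mmReality pipeline: it assumes a uniform linear array with half-wavelength spacing, estimates only the angle of arrival, and omits spatial smoothing and the second (angle-of-departure) dimension. The names `steering_vector` and `music_spectrum` are hypothetical.

```python
import numpy as np

def steering_vector(theta_rad, n_antennas, spacing=0.5):
    """Array response of a uniform linear array (spacing in wavelengths, assumed 0.5)."""
    k = np.arange(n_antennas)
    return np.exp(2j * np.pi * spacing * k * np.sin(theta_rad))

def music_spectrum(snapshots, n_sources, grid_rad, spacing=0.5):
    """MUSIC pseudo-spectrum from an (n_antennas, n_snapshots) complex array."""
    n_antennas, n_snapshots = snapshots.shape
    # Sample covariance of the received signal.
    cov = snapshots @ snapshots.conj().T / n_snapshots
    # eigh returns eigenvalues in ascending order, so the first columns
    # of eigvecs span the noise subspace.
    _, eigvecs = np.linalg.eigh(cov)
    noise_subspace = eigvecs[:, : n_antennas - n_sources]
    spectrum = np.empty(len(grid_rad))
    for i, theta in enumerate(grid_rad):
        a = steering_vector(theta, n_antennas, spacing)
        projection = noise_subspace.conj().T @ a
        # Steering vectors of true sources are nearly orthogonal to the
        # noise subspace, which produces a sharp peak here.
        spectrum[i] = 1.0 / np.real(np.vdot(projection, projection))
    return spectrum
```

Given simulated snapshots from a single source, the estimated angle is the grid point where `music_spectrum` peaks, for example `grid_rad[np.argmax(spectrum)]`.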

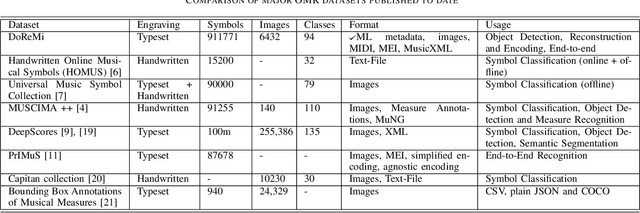

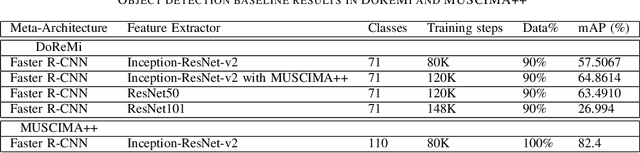

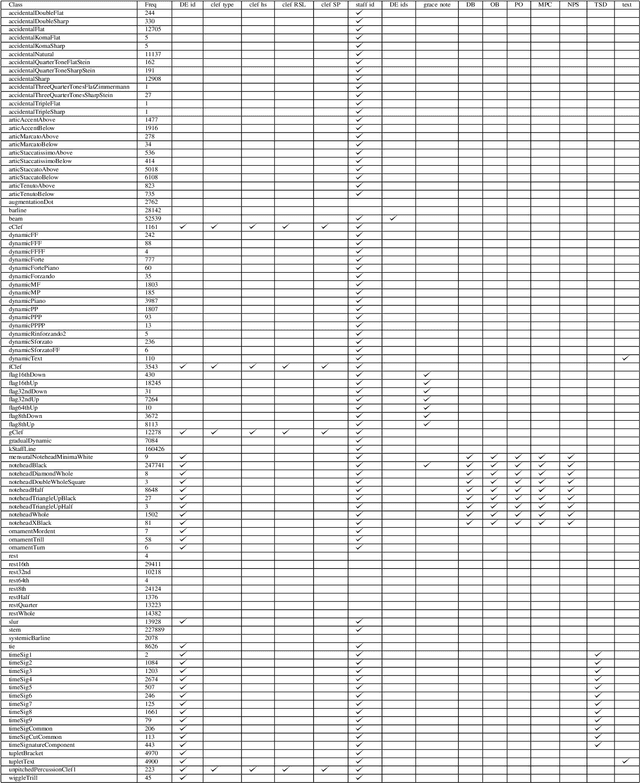

DoReMi: First glance at a universal OMR dataset

Jul 16, 2021

The main challenges of Optical Music Recognition (OMR) come from the nature of written music, its complexity and the difficulty of finding an appropriate data representation. This paper provides a first look at DoReMi, an OMR dataset that addresses these challenges, and a baseline object detection model to assess its utility. Researchers often approach OMR following a set of small stages, given that existing data often do not satisfy broader research. We examine the possibility of changing this tendency by presenting more metadata. Our approach complements existing research; hence DoReMi allows harmonisation with two existing datasets, DeepScores and MUSCIMA++. DoReMi was generated using music notation software and includes over 6400 printed sheet music images with accompanying metadata useful in OMR research. Our dataset provides OMR metadata, MIDI, MEI, MusicXML and PNG files, each aiding a different stage of OMR. We obtain 64% mean average precision (mAP) in object detection using half of the data. Further work includes iterating on the creation process to satisfy custom OMR models. While we do not claim to have solved the main challenges in OMR, this dataset opens a new course of discussion that would ultimately aid that goal.

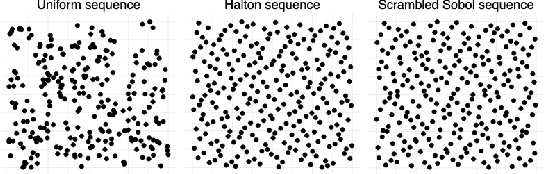

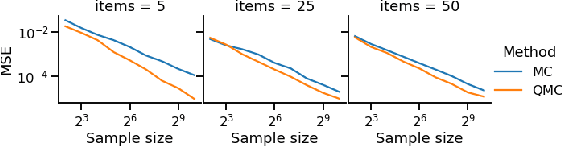

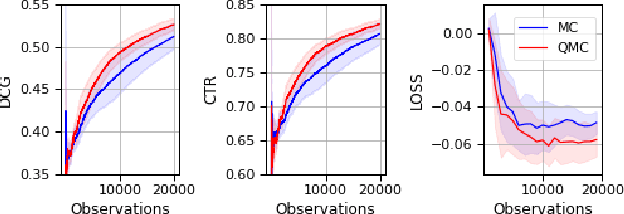

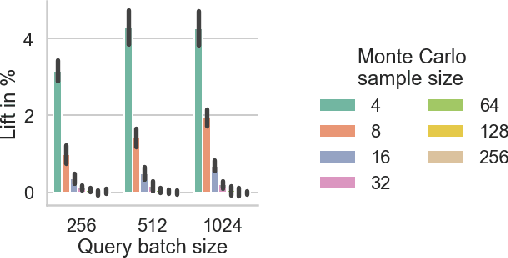

Low-variance estimation in the Plackett-Luce model via quasi-Monte Carlo sampling

May 12, 2022

The Plackett-Luce (PL) model is ubiquitous in learning-to-rank (LTR) because it provides a useful and intuitive probabilistic model for sampling ranked lists. Counterfactual offline evaluation and optimization of ranking metrics are pivotal for using LTR methods in production. When adopting the PL model as a ranking policy, both tasks require the computation of expectations with respect to the model. These are usually approximated via Monte-Carlo (MC) sampling, since the combinatorial scaling in the number of items to be ranked makes their analytical computation intractable. Despite recent advances in improving the computational efficiency of the sampling process via the Gumbel top-k trick, the MC estimates can suffer from high variance. We develop a novel approach to producing more sample-efficient estimators of expectations in the PL model by combining the Gumbel top-k trick with quasi-Monte Carlo (QMC) sampling, a well-established technique for variance reduction. We illustrate our findings both theoretically and empirically using real-world recommendation data from Amazon Music and the Yahoo learning-to-rank challenge.
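The Gumbel top-k trick mentioned above can be sketched as follows. This is a minimal illustration of plain Monte Carlo estimation only; the quasi-Monte Carlo variant proposed in the paper is not reproduced here. The function names, the `metric` callback, and the seed handling are assumptions made for the example.

```python
import numpy as np

def sample_pl_ranking(log_scores, k, rng):
    """Draw a top-k ranking from a Plackett-Luce model via the Gumbel top-k trick.

    Adding independent standard Gumbel noise to the log-scores and sorting in
    descending order yields the same distribution as sampling items one by one
    without replacement, each time proportionally to exp(log_score).
    """
    perturbed = log_scores + rng.gumbel(size=log_scores.shape)
    return np.argsort(-perturbed)[:k]

def mc_estimate(log_scores, k, metric, n_samples, seed=0):
    """Plain Monte Carlo estimate of E[metric(ranking)] under the PL model."""
    rng = np.random.default_rng(seed)
    values = [metric(sample_pl_ranking(log_scores, k, rng)) for _ in range(n_samples)]
    return float(np.mean(values))

# Hypothetical example: probability that item 0 is ranked first among 4 items.
log_scores = np.log(np.array([4.0, 3.0, 2.0, 1.0]))
p_first = mc_estimate(log_scores, k=2, metric=lambda r: float(r[0] == 0), n_samples=2000)
```

Under the PL model the exact value of this probability is 4 / (4 + 3 + 2 + 1) = 0.4, so `p_first` should land near 0.4, with the sampling noise that motivates variance reduction.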

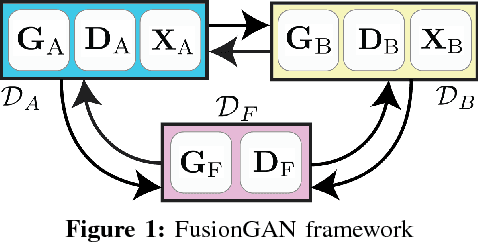

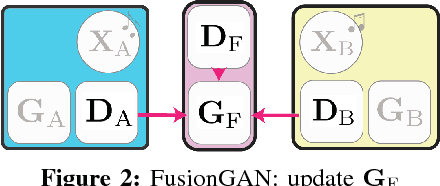

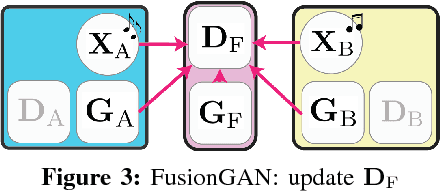

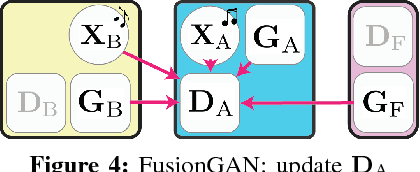

Learning to Fuse Music Genres with Generative Adversarial Dual Learning

Dec 05, 2017

FusionGAN is a novel genre fusion framework for music generation that integrates the strengths of generative adversarial networks and dual learning. In particular, the proposed method offers a dual learning extension that can effectively integrate the styles of the given domains. To efficiently quantify the difference among diverse domains and avoid the vanishing gradient issue, FusionGAN provides a Wasserstein based metric to approximate the distance between the target domain and the existing domains. Adopting the Wasserstein distance, a new domain is created by combining the patterns of the existing domains using adversarial learning. Experimental results on public music datasets demonstrated that our approach could effectively merge two genres.

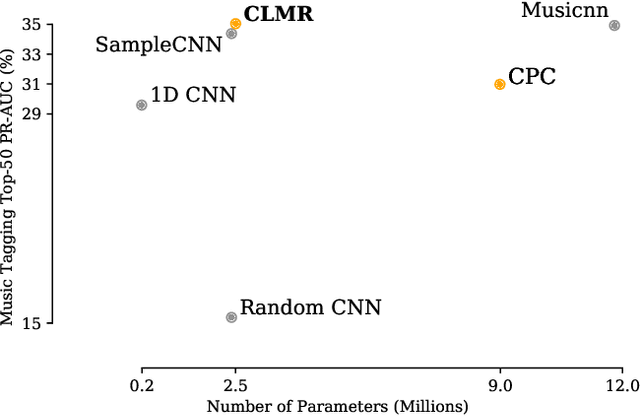

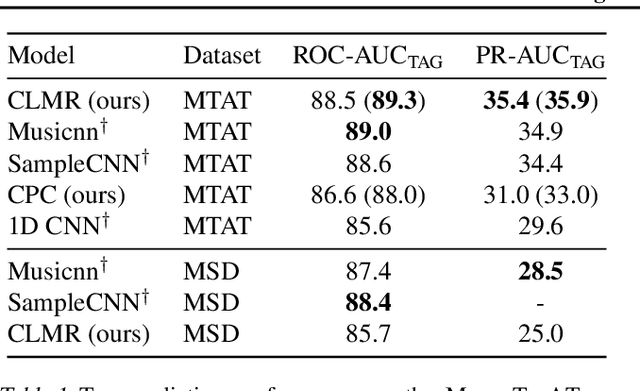

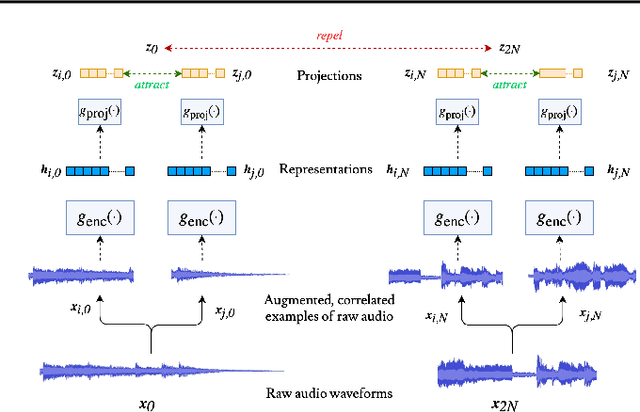

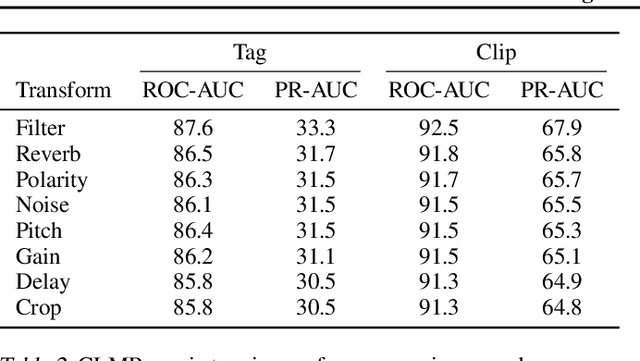

Contrastive Learning of Musical Representations

Mar 17, 2021

While supervised learning has enabled great advances in many areas of music, labeled music datasets remain especially hard, expensive and time-consuming to create. In this work, we introduce SimCLR to the music domain and contribute a large chain of audio data augmentations, to form a simple framework for self-supervised learning of raw waveforms of music: CLMR. This approach requires no manual labeling and no preprocessing of music to learn useful representations. We evaluate CLMR in the downstream task of music classification on the MagnaTagATune and Million Song datasets. A linear classifier fine-tuned on representations from a pre-trained CLMR model achieves an average precision of 35.4% on the MagnaTagATune dataset, superseding fully supervised models that currently achieve a score of 34.9%. Moreover, we show that CLMR's representations are transferable using out-of-domain datasets, indicating that they capture important musical knowledge. Lastly, we show that self-supervised pre-training allows us to learn efficiently on smaller labeled datasets: we still achieve a score of 33.1% despite using only 259 labeled songs during fine-tuning. To foster reproducibility and future research on self-supervised learning in music, we publicly release the pre-trained models and the source code of all experiments of this paper on GitHub.
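SimCLR-style training, which CLMR builds on, optimises a normalised temperature-scaled cross-entropy (NT-Xent) contrastive loss. The sketch below is a NumPy illustration of that loss under stated assumptions, not the CLMR code: `z_a` and `z_b` are hypothetical embeddings of two augmented views of the same batch of clips, and the temperature of 0.5 is an arbitrary choice.

```python
import numpy as np

def nt_xent_loss(z_a, z_b, temperature=0.5):
    """NT-Xent loss for two (n, d) arrays of paired embeddings."""
    n = z_a.shape[0]
    z = np.concatenate([z_a, z_b], axis=0)
    z = z / np.linalg.norm(z, axis=1, keepdims=True)  # cosine similarity via unit vectors
    sim = z @ z.T / temperature
    np.fill_diagonal(sim, -np.inf)                    # a sample is never its own negative
    # The positive for row i is the other view of the same clip.
    pos_idx = np.concatenate([np.arange(n, 2 * n), np.arange(0, n)])
    # Row-wise log-sum-exp, shifted by the row maximum for stability.
    row_max = sim.max(axis=1, keepdims=True)
    log_denominator = row_max[:, 0] + np.log(np.exp(sim - row_max).sum(axis=1))
    log_prob_pos = sim[np.arange(2 * n), pos_idx] - log_denominator
    return float(-log_prob_pos.mean())
```

The loss is low when each clip's two views are more similar to each other than to any other clip in the batch, which is what lets the encoder learn without labels.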

Studying the role of named entities for content preservation in text style transfer

Jun 20, 2022

Text style transfer techniques are gaining popularity in Natural Language Processing, finding various applications such as text detoxification, sentiment transfer, or formality transfer. However, the majority of existing approaches were tested on domains such as online communications on public platforms, music, or entertainment, yet none of them were applied to the domains typical of task-oriented production systems, such as arranging personal plans (e.g. booking flights or reserving a table in a restaurant). We fill this gap by studying formality transfer in this domain. We noted that texts in this domain are full of named entities, which are very important for preserving the original sense of the text. Indeed, if, for example, someone communicates the destination city of a flight, it must not be altered. Thus, we concentrate on the role of named entities in content preservation for formality text style transfer. We collect a new dataset for the evaluation of content similarity measures in text style transfer. It is taken from a corpus of task-oriented dialogues and contains many important entities related to realistic requests, which makes this dataset particularly useful for testing style transfer models before using them in production. In addition, we perform an error analysis of a pre-trained formality transfer model and introduce a simple technique that uses information about named entities to enhance the performance of baseline content similarity measures used in text style transfer.

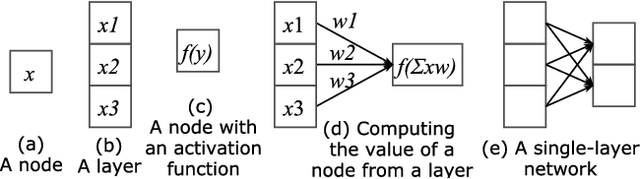

A Tutorial on Deep Learning for Music Information Retrieval

May 03, 2018

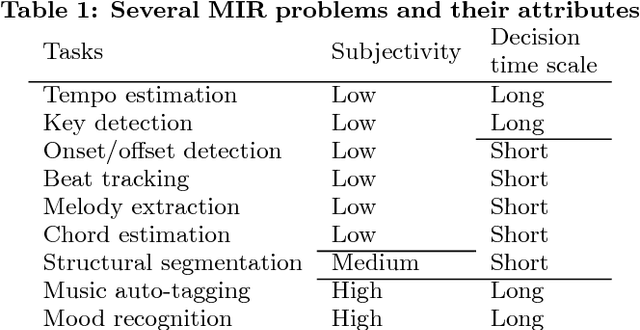

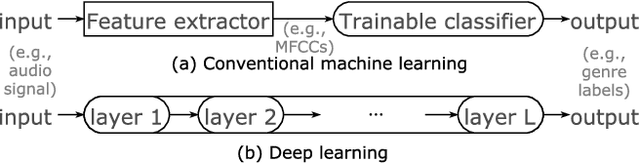

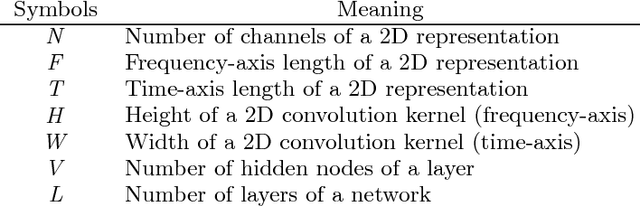

Following their success in Computer Vision and other areas, deep learning techniques have recently become widely adopted in Music Information Retrieval (MIR) research. However, the majority of works aim to adopt and assess methods that have been shown to be effective in other domains, while there is still a great need for more original research focusing primarily on music and utilising musical knowledge and insight. The goal of this paper is to boost the interest of beginners by providing a comprehensive tutorial and reducing the barriers to entry into deep learning for MIR. We lay out the basic principles and review prominent works in this hard-to-navigate field. We then outline the network structures that have been successful in MIR problems and facilitate the selection of building blocks for the problems at hand. Finally, guidelines for new tasks and some advanced topics in deep learning are discussed to stimulate new research in this fascinating field.
