"facial recognition": models, code, and papers

About Face: A Survey of Facial Recognition Evaluation

Feb 01, 2021

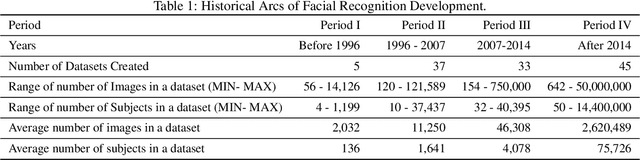

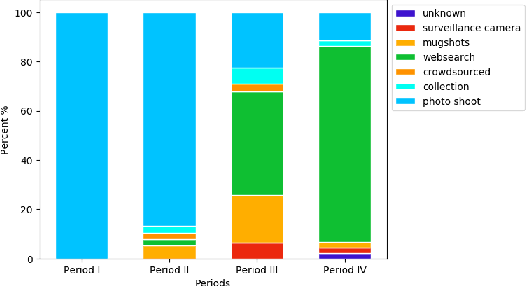

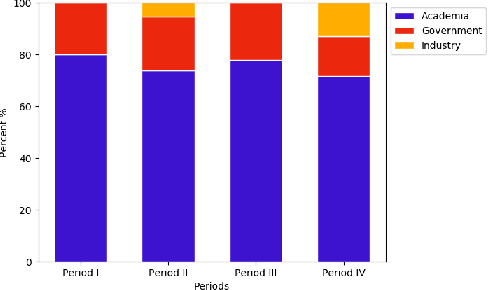

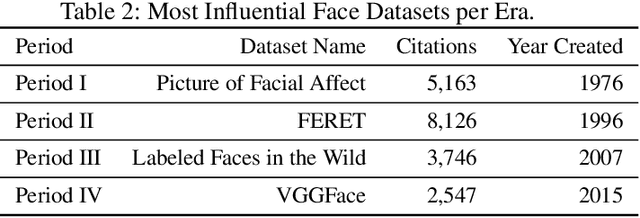

We survey over 100 face datasets constructed between 1976 and 2019, comprising 145 million images of over 17 million subjects from a range of sources, demographics and conditions. Our historical survey reveals that these datasets are contextually informed, shaped by changes in political motivations, technological capability and current norms. We discuss how such influences mask specific practices (some of which may actually be harmful or otherwise problematic) and make a case for the explicit communication of such details in order to establish a more grounded understanding of the technology's function in the real world.

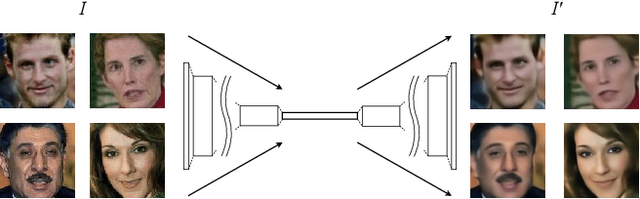

Adversarially Perturbed Wavelet-based Morphed Face Generation

Nov 03, 2021

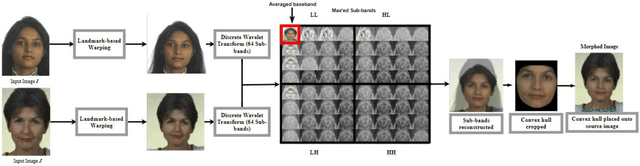

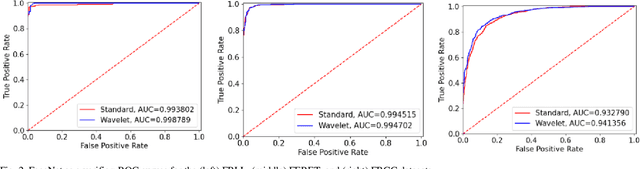

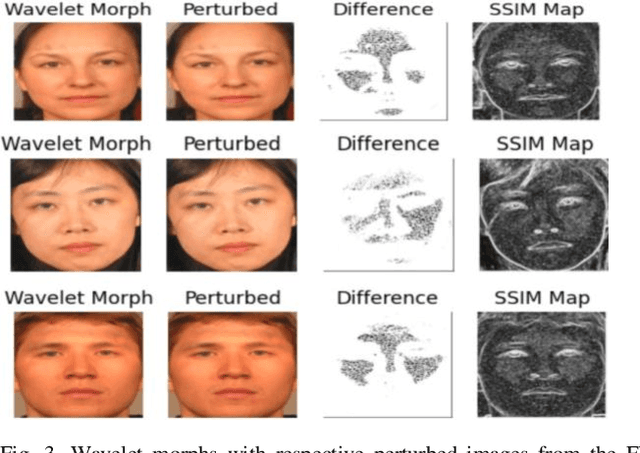

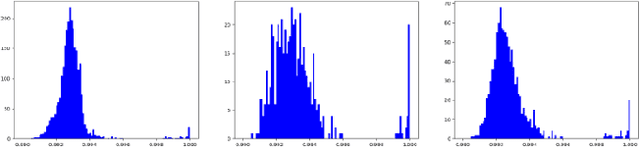

Morphing is the process of combining two or more subjects in an image in order to create a new identity which contains features of both individuals. Morphed images can fool Facial Recognition Systems (FRS) into falsely accepting multiple people, leading to failures in national security. As morphed image synthesis becomes easier, it is vital to expand the research community's available data to help combat this dilemma. In this paper, we explore a combination of two methods for morphed image generation: geometric transformation (warping and blending to create morphed images) and photometric perturbation. We leverage both methods to generate high-quality adversarially perturbed morphs from the FERET, FRGC, and FRLL datasets. The final images retain high similarity to both input subjects while resulting in minimal artifacts in the visual domain. Images are synthesized by fusing the wavelet sub-bands from the two look-alike subjects, and then adversarially perturbed to create highly convincing imagery to deceive both humans and deep morph detectors.

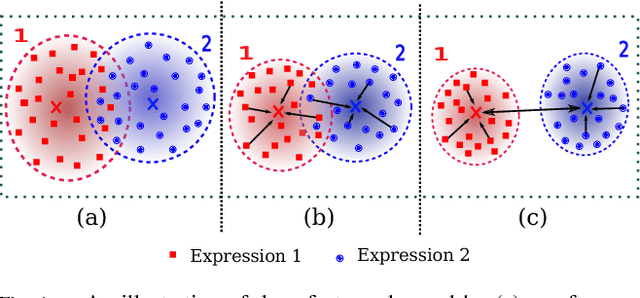

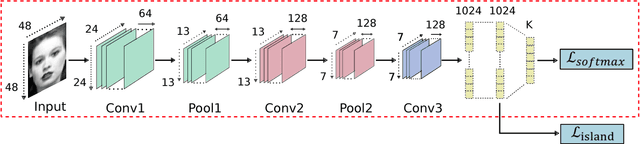

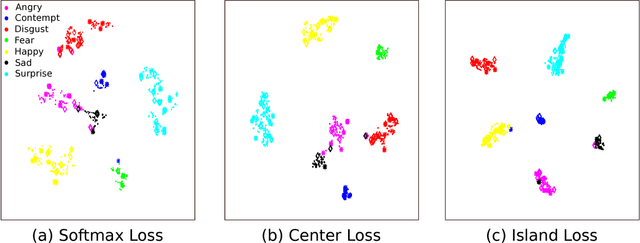

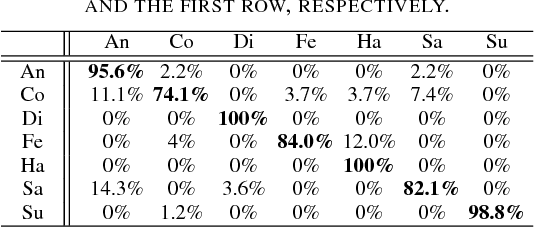

Island Loss for Learning Discriminative Features in Facial Expression Recognition

Oct 23, 2017

Over the past few years, Convolutional Neural Networks (CNNs) have shown promise on facial expression recognition. However, the performance degrades dramatically under real-world settings due to variations introduced by subtle facial appearance changes, head pose variations, illumination changes, and occlusions. In this paper, a novel island loss is proposed to enhance the discriminative power of the deeply learned features. Specifically, the IL is designed to reduce the intra-class variations while enlarging the inter-class differences simultaneously. Experimental results on four benchmark expression databases have demonstrated that the CNN with the proposed island loss (IL-CNN) outperforms the baseline CNN models with either traditional softmax loss or the center loss and achieves comparable or better performance compared with the state-of-the-art methods for facial expression recognition.
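The abstract does not spell out the loss, so the following is only a minimal sketch of the idea it describes: a center-loss term pulls features toward their class center (reducing intra-class variation), and an added penalty pushes class centers apart (enlarging inter-class differences). It assumes the penalty is the pairwise cosine similarity between class centers plus one; the function names and the weight `lam` are hypothetical and chosen for illustration.

```python
import math


def center_loss(features, labels, centers):
    """Half the summed squared distance from each feature to its class center."""
    total = 0.0
    for x, y in zip(features, labels):
        total += sum((xi - ci) ** 2 for xi, ci in zip(x, centers[y]))
    return 0.5 * total


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def island_loss(features, labels, centers, lam=10.0):
    """Center loss plus a penalty on pairwise center similarity.

    Assumption: the penalty for each ordered pair of distinct centers is
    cos(c_j, c_k) + 1, which is 0 when the centers point in opposite
    directions and 2 when they coincide in direction. `lam` is a
    hypothetical weighting hyperparameter.
    """
    penalty = 0.0
    for j, cj in enumerate(centers):
        for k, ck in enumerate(centers):
            if j != k:
                penalty += cosine(cj, ck) + 1.0
    return center_loss(features, labels, centers) + lam * penalty
```

In a real training setup this term would be computed on mini-batches of deep features and added to the softmax loss, with the centers updated alongside the network weights; the sketch only shows how the two terms combine.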

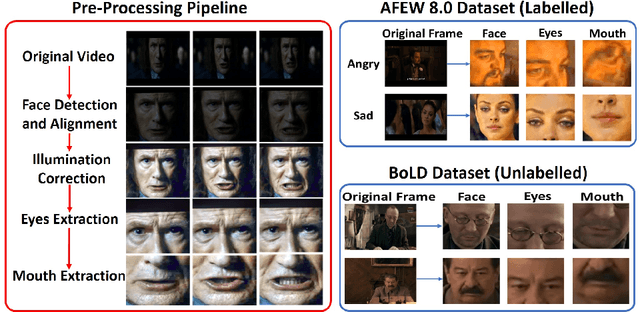

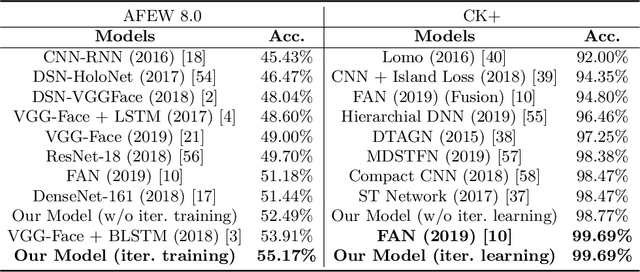

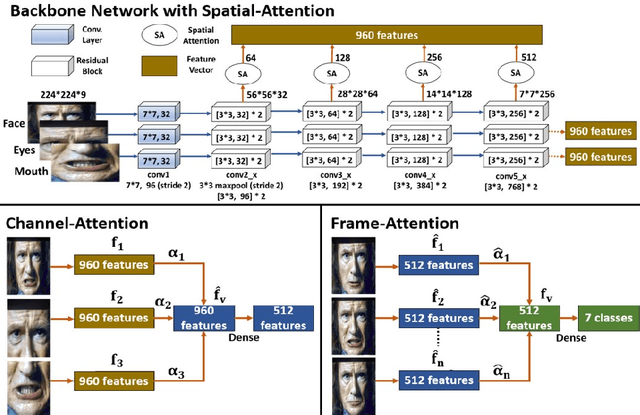

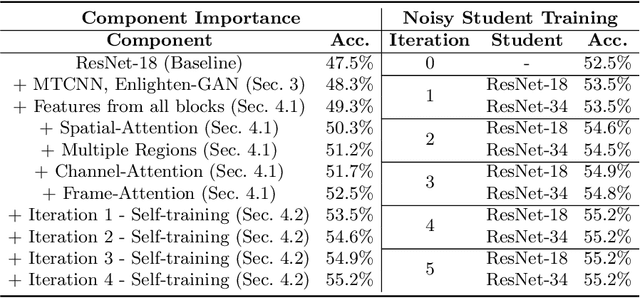

Noisy Student Training using Body Language Dataset Improves Facial Expression Recognition

Aug 06, 2020

Facial expression recognition from videos in the wild is a challenging task due to the lack of abundant labelled training data. Large DNN (deep neural network) architectures and ensemble methods have resulted in better performance, but their gains soon saturate due to data inadequacy. In this paper, we use a self-training method that utilizes a combination of a labelled dataset and an unlabelled dataset (Body Language Dataset - BoLD). Experimental analysis shows that training a noisy student network iteratively helps in achieving significantly better results. Additionally, our model isolates different regions of the face and processes them independently using a multi-level attention mechanism which further boosts the performance. Our results show that the proposed method achieves state-of-the-art performance on benchmark datasets CK+ and AFEW 8.0 when compared to other single models.

Facial Expression Recognition based on Multi-head Cross Attention Network

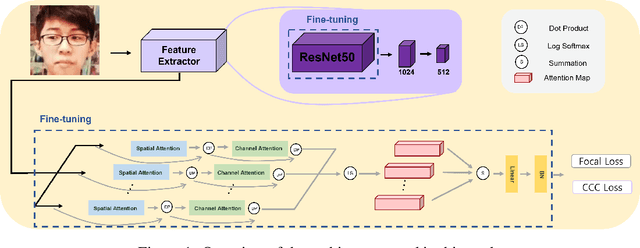

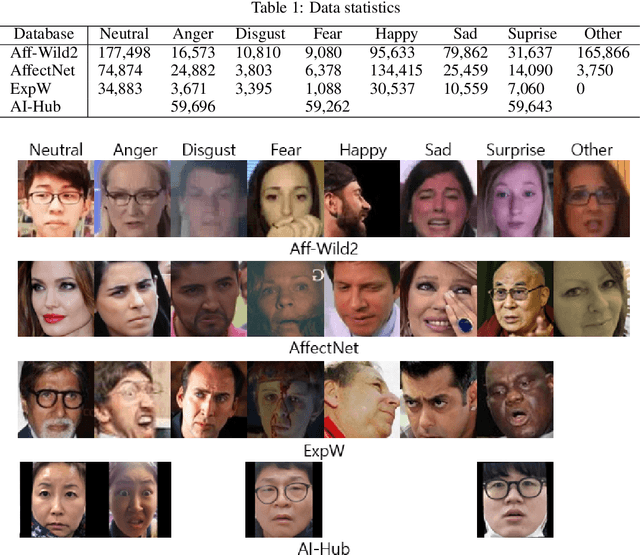

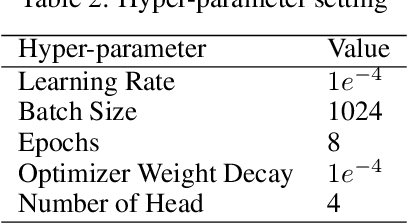

Mar 24, 2022

Facial expression analysis in the wild is essential for various interactive computing domains. In this paper, we propose an extended version of the DAN model to address the valence-arousal (VA) estimation and facial expression challenges introduced in ABAW 2022. Our method produced preliminary results of a mean concordance correlation coefficient (CCC) of 0.44 for the VA estimation task and an average F1 score of 0.33 for the expression classification task.
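For readers unfamiliar with the CCC metric reported above, here is a minimal sketch of the standard concordance correlation coefficient, with the mean over the valence and arousal dimensions. It is not the authors' evaluation code; the function names are hypothetical, and population (divide-by-n) variances are assumed.

```python
def ccc(pred, target):
    """Concordance correlation coefficient between two equal-length sequences.

    CCC = 2 * cov(pred, target) / (var(pred) + var(target) + (mean_p - mean_t)^2)
    Unlike Pearson correlation, it also penalizes shifts in mean and scale.
    """
    n = len(pred)
    mean_p = sum(pred) / n
    mean_t = sum(target) / n
    var_p = sum((p - mean_p) ** 2 for p in pred) / n
    var_t = sum((t - mean_t) ** 2 for t in target) / n
    cov = sum((p - mean_p) * (t - mean_t) for p, t in zip(pred, target)) / n
    return 2.0 * cov / (var_p + var_t + (mean_p - mean_t) ** 2)


def mean_va_ccc(val_pred, val_true, aro_pred, aro_true):
    """Average of the valence CCC and the arousal CCC."""
    return 0.5 * (ccc(val_pred, val_true) + ccc(aro_pred, aro_true))
```

A perfectly correlated prediction with a constant offset scores below 1, which is why CCC is a stricter target than plain correlation for continuous affect estimation.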

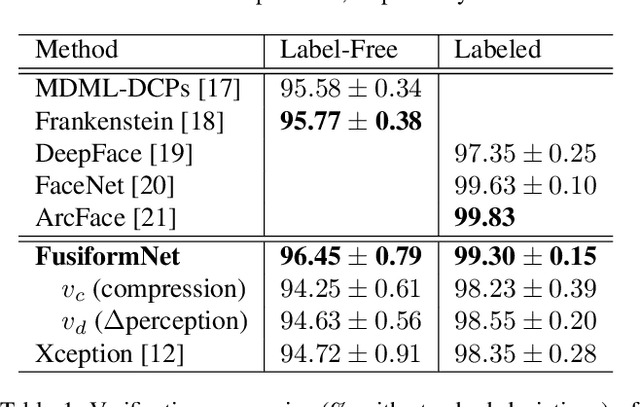

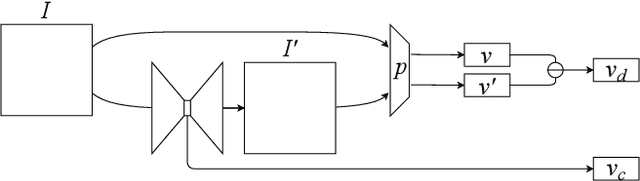

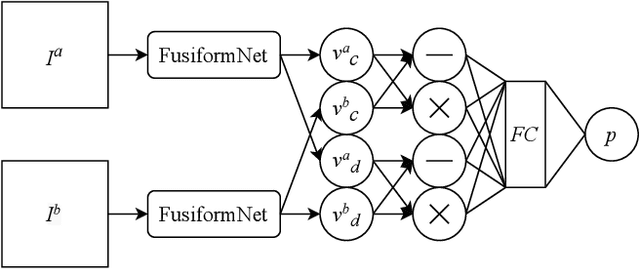

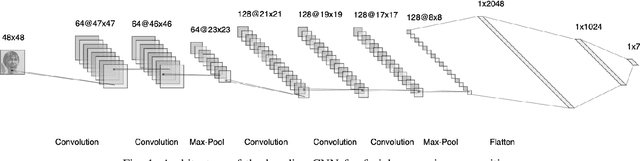

FusiformNet: Extracting Discriminative Facial Features on Different Levels

Nov 06, 2020

Over the last several years, research on facial recognition based on Deep Neural Networks has evolved with approaches like task-specific loss functions, image normalization and augmentation, network architectures, etc. However, there have been few approaches with attention to how human faces differ from person to person. Premising that inter-personal differences are found both generally and locally on the human face, I propose FusiformNet, a novel framework for feature extraction that leverages the nature of discriminative facial features. Tested on the Image-Unrestricted setting of the Labeled Faces in the Wild benchmark, this method achieved a state-of-the-art accuracy of 96.67% without labeled outside data, image augmentation, normalization, or special loss functions. Likewise, the method also performed on par with previous state-of-the-art methods when pre-trained on the CASIA-WebFace dataset. Considering its ability to extract both general and local facial features, the utility of FusiformNet may not be limited to facial recognition but may also extend to other DNN-based tasks.

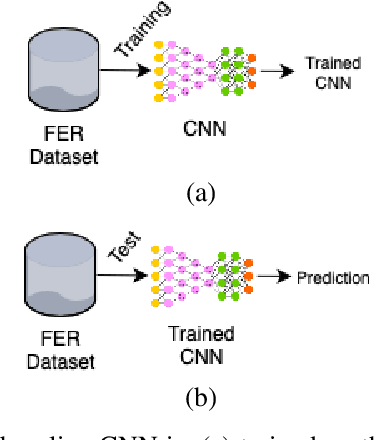

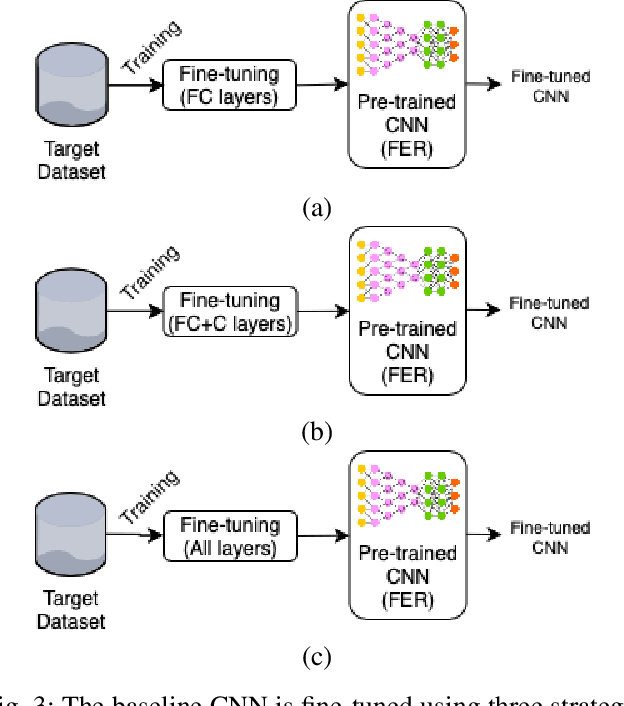

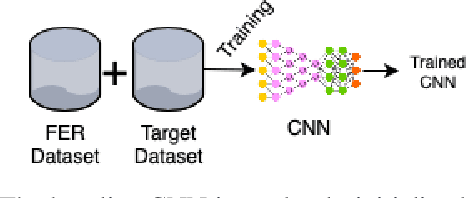

Memory Integrity of CNNs for Cross-Dataset Facial Expression Recognition

May 28, 2019

Facial expression recognition is a major problem in the domain of artificial intelligence. One of the best ways to solve this problem is the use of convolutional neural networks (CNNs). However, a large amount of data is required to properly train these networks, but most of the datasets available for facial expression recognition are relatively small. A common way to circumvent the lack of data is to use CNNs trained on large datasets of different domains and fine-tune the layers of such networks to the target domain. However, the fine-tuning process does not preserve memory integrity, as CNNs have a tendency to forget patterns they have learned. In this paper, we evaluate different strategies of fine-tuning a CNN with the aim of assessing the memory integrity of such strategies in a cross-dataset scenario. A CNN pre-trained on a source dataset is used as the baseline and four adaptation strategies have been evaluated: fine-tuning its fully connected layers; fine-tuning its last convolutional layer and its fully connected layers; retraining the CNN on a target dataset; and the fusion of the source and target datasets and retraining the CNN. Experimental results on four datasets have shown that the fusion of the source and the target datasets provides the best trade-off between accuracy and memory integrity.

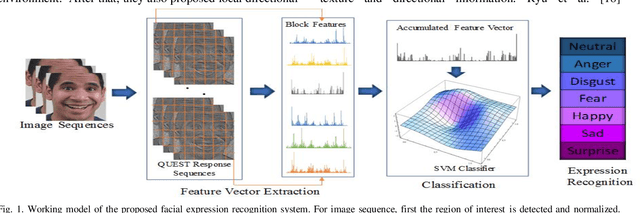

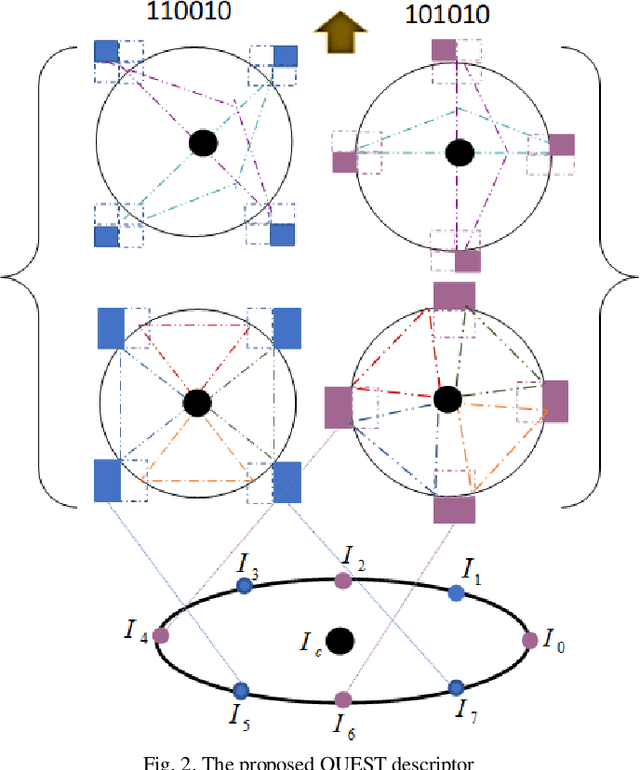

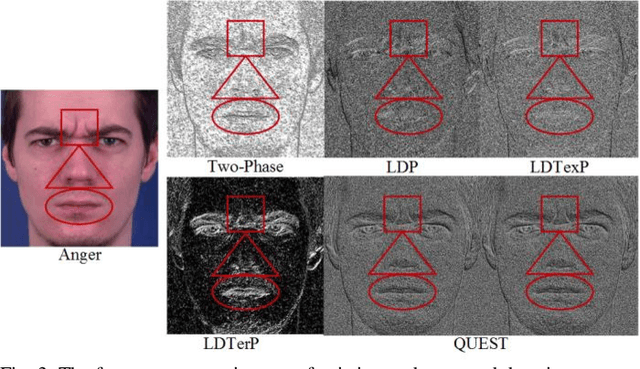

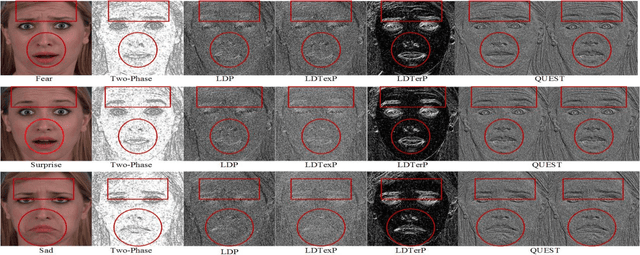

QUEST: Quadrilateral Senary bit Pattern for Facial Expression Recognition

Jul 24, 2018

Facial expression has a significant role in analyzing human cognitive state. Deriving an accurate facial appearance representation is a critical task for an automatic facial expression recognition application. This paper provides a new feature descriptor named Quadrilateral Senary bit Pattern (QUEST) for facial expression recognition. The QUEST pattern encodes the intensity changes by emphasizing the relationship between neighboring and reference pixels, dividing them into two quadrilaterals in a local neighborhood. Thus, the resultant gradient edges reveal the transitional variation information, which improves the classification rate by discriminating expression classes. Moreover, it also enhances the capability of the descriptor to deal with viewpoint variations and illumination changes. The trine relationship in a quadrilateral structure helps to extract the expressive edges and suppress noise elements, enhancing the robustness to noisy conditions. The QUEST pattern generates a six-bit compact code, which improves the efficiency of the FER system with more discriminability. The effectiveness of the proposed method is evaluated by conducting several experiments on four benchmark datasets: MMI, GEMEP-FERA, OULU-CASIA, and ISED. The experimental results show better performance of the proposed method as compared to existing state-of-the-art approaches.

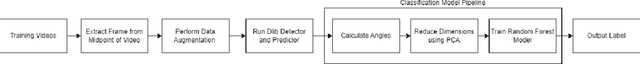

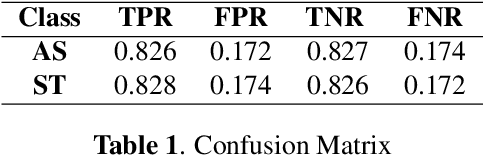

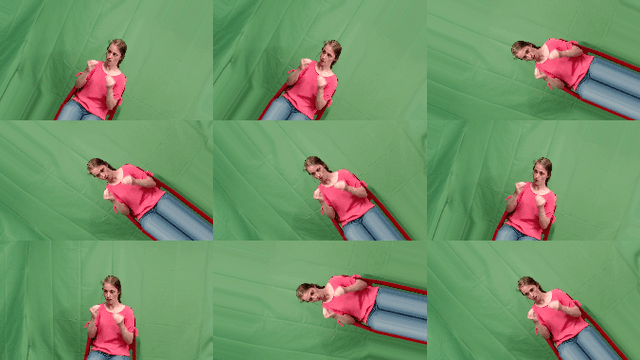

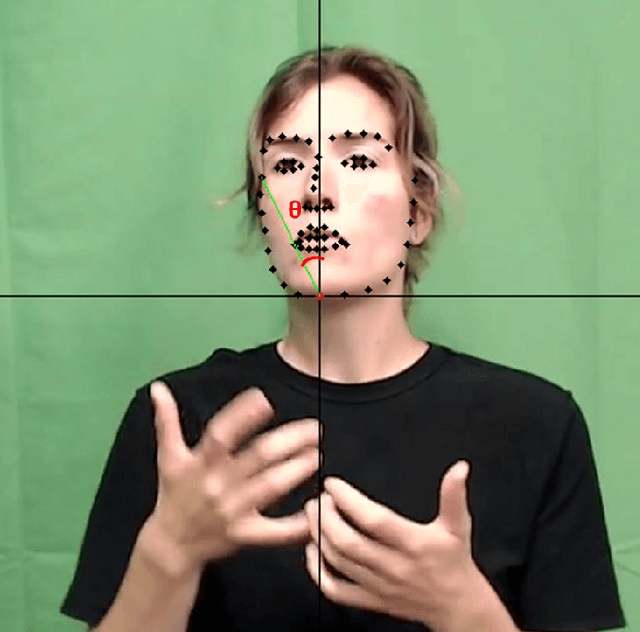

Implementing Facial Landmark Tracking for American Sign Language Recognition using Random Forest Classification Tree Model and Principal Component Analysis

Nov 23, 2022

The deaf and hard of hearing community relies on American Sign Language (ASL) as their primary mode of communication, but communication with others who do not know ASL can be difficult, especially during emergencies where no interpreter is available. As an effort to alleviate this problem, research in computer-vision-based real-time ASL interpreting models is ongoing. However, most of these models are hand shape (gesture) based and lack the integration of facial cues, which are crucial in ASL to convey tone and distinguish similar-looking signs. Thus, the integration of facial cues in computer-vision-based ASL interpreting models has the potential to improve performance and reliability. In this paper, we introduce a new facial expression-based classification model that can be used to improve ASL interpreting models. This model utilizes the relative angles of facial landmarks with principal component analysis and a Random Forest Classification tree model to classify frames taken from videos of ASL users signing a complete sentence. The model classifies the frames as statements or assertions. The model was able to achieve an accuracy of 82%.
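The abstract does not say how the relative angles are defined, so the sketch below only illustrates one plausible feature-extraction step: the angle of the vector from a chosen reference landmark to every other landmark. The function name and the choice of reference landmark are assumptions; the PCA and random forest stages that would consume these features are not shown.

```python
import math


def relative_angles(landmarks, ref_index=0):
    """Angles (radians) from a reference landmark to every other landmark.

    `landmarks` is a list of (x, y) pixel coordinates for one video frame.
    `ref_index` is a hypothetical choice of anchor point, for example the
    nose tip. The angles do not change if the face is translated or
    uniformly scaled within the frame.
    """
    ref_x, ref_y = landmarks[ref_index]
    return [
        math.atan2(y - ref_y, x - ref_x)
        for i, (x, y) in enumerate(landmarks)
        if i != ref_index
    ]
```

Each frame then becomes a fixed-length vector of angles, which is the kind of input a dimensionality-reduction step followed by a tree-based classifier can work with.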
