"Time": models, code, and papers

Powerset multi-class cross entropy loss for neural speaker diarization

Oct 19, 2023

Since its introduction in 2019, the whole end-to-end neural diarization (EEND) line of work has been addressing speaker diarization as a frame-wise multi-label classification problem with permutation-invariant training. Despite EEND showing great promise, a few recent works took a step back and studied the possible combination of (local) supervised EEND diarization with (global) unsupervised clustering. Yet, these hybrid contributions did not question the original multi-label formulation. We propose to switch from multi-label (where any two speakers can be active at the same time) to powerset multi-class classification (where dedicated classes are assigned to pairs of overlapping speakers). Through extensive experiments on 9 different benchmarks, we show that this formulation leads to significantly better performance (mostly on overlapping speech) and robustness to domain mismatch, while eliminating the detection threshold hyperparameter, critical for the multi-label formulation.
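The switch from multi-label to powerset multi-class targets can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the choice of 3 speakers, and the class ordering are assumptions made for illustration. Each frame's set of active speakers is mapped to a single class index, so a prediction would be read off with an argmax rather than a per-speaker threshold.

```python
from itertools import combinations

def build_powerset_classes(num_speakers, max_simultaneous):
    """List every speaker subset with at most max_simultaneous members.

    Subsets are ordered by size, so class 0 is always "no speaker".
    """
    classes = []
    for size in range(max_simultaneous + 1):
        for subset in combinations(range(num_speakers), size):
            classes.append(frozenset(subset))
    return classes

def multilabel_to_powerset(frame, classes):
    """Map a 0/1 activity vector for one frame to a single class index.

    Raises ValueError if more speakers overlap than the classes allow.
    """
    active = frozenset(i for i, value in enumerate(frame) if value)
    return classes.index(active)

def powerset_to_multilabel(class_index, classes, num_speakers):
    """Map a class index back to a 0/1 activity vector."""
    active = classes[class_index]
    return [1 if i in active else 0 for i in range(num_speakers)]

# Hypothetical setting: 3 speakers per chunk, at most 2 active at once.
classes = build_powerset_classes(num_speakers=3, max_simultaneous=2)
print(len(classes))  # 7: silence, 3 single speakers, 3 speaker pairs

index = multilabel_to_powerset([1, 0, 1], classes)
print(powerset_to_multilabel(index, classes, 3))  # [1, 0, 1]
```

With these classes, a standard multi-class cross entropy loss can be applied per frame, and no detection threshold is needed at inference time.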

Object-Aware Impedance Control for Human-Robot Collaborative Task with Online Object Parameter Estimation

Oct 19, 2023

Physical human-robot interactions (pHRIs) can improve robot autonomy and reduce physical demands on humans. In this paper, we consider a collaborative task with a considerably long object and no prior knowledge of the object's parameters. An integrated control framework with an online object parameter estimator and a Cartesian object-aware impedance controller is proposed to realize complicated scenarios. During the transportation task, the object parameters are estimated online while a robot and human lift an object. The perturbation motion is incorporated into the null space of the desired trajectory to enhance the estimator accuracy. An object-aware impedance controller is designed using the real-time estimation results to effectively transmit the intended human motion to the robot through the object. Experimental demonstrations of collaborative tasks, including object transportation and assembly tasks, are implemented to show the effectiveness of our proposed method.

Predict the Future from the Past? On the Temporal Data Distribution Shift in Financial Sentiment Classifications

Oct 19, 2023

Temporal data distribution shift is prevalent in financial text. How can a financial sentiment analysis system be trained in a volatile market environment so that it accurately infers sentiment and is robust to temporal data distribution shifts? In this paper, we conduct an empirical study on the financial sentiment analysis system under temporal data distribution shifts using a real-world financial social media dataset that spans three years. We find that the fine-tuned models suffer from general performance degradation in the presence of temporal distribution shifts. Furthermore, motivated by the unique temporal nature of financial text, we propose a novel method that combines out-of-distribution detection with time series modeling for temporal financial sentiment analysis. Experimental results show that the proposed method enhances the model's capability to adapt to evolving temporal shifts in a volatile financial market.

An Exploration of Task-decoupling on Two-stage Neural Post Filter for Real-time Personalized Acoustic Echo Cancellation

Oct 07, 2023

Deep learning based techniques have been popularly adopted in acoustic echo cancellation (AEC). Utilization of speaker representation has extended the frontier of AEC, thus attracting many researchers' interest in personalized acoustic echo cancellation (PAEC). Meanwhile, task-decoupling strategies are widely adopted in speech enhancement. To further explore the task-decoupling approach, we propose to use a two-stage task-decoupling post-filter (TDPF) in PAEC. Furthermore, a multi-scale local-global speaker representation is applied to improve speaker extraction in PAEC. Experimental results indicate that the task-decoupling model can yield better performance than a single joint network. The optimal approach is to decouple the echo cancellation from noise and interference speech suppression. Based on the task-decoupling sequence, optimal training strategies for the two-stage model are explored afterwards.

Optimizing Layerwise Polynomial Approximation for Efficient Private Inference on Fully Homomorphic Encryption: A Dynamic Programming Approach

Oct 16, 2023

Recent research has explored the implementation of privacy-preserving deep neural networks solely using fully homomorphic encryption. However, its practicality has been limited because of prolonged inference times. When using a pre-trained model without retraining, a major factor contributing to these prolonged inference times is the high-degree polynomial approximation of activation functions such as the ReLU function. The high-degree approximation consumes a substantial amount of homomorphic computational resources, resulting in slower inference. Unlike the previous works approximating activation functions uniformly and conservatively, this paper presents a layerwise degree optimization of activation functions to aggressively reduce the inference time while maintaining classification accuracy by taking into account the characteristics of each layer. Instead of the minimax approximation commonly used in state-of-the-art private inference models, we employ the weighted least squares approximation method with the input distributions of activation functions. Then, we obtain the layerwise optimized degrees for activation functions through the dynamic programming algorithm, considering how each layer's approximation error affects the classification accuracy of the deep neural network. Furthermore, we propose modulating the ciphertext moduli-chain layerwise to reduce the inference time. By these proposed layerwise optimization methods, we can reduce inference times for the ResNet-20 model and the ResNet-32 model by 3.44 times and 3.16 times, respectively, in comparison to the prior implementations employing uniform degree polynomials and a consistent ciphertext modulus.
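The idea of choosing a polynomial degree per layer with dynamic programming can be sketched as a small budgeted selection problem. This is a simplified illustration, not the paper's algorithm: it assumes each layer's effect on accuracy can be summarized as an integer "error unit" that adds up across layers, and the function name `choose_degrees` and all degrees, costs, and error values below are made-up placeholders.

```python
def choose_degrees(options, error_budget):
    """Pick one (degree, time_cost, error_units) option per layer.

    Minimizes total time subject to total error_units <= error_budget.
    Returns (total_time, [degree per layer]) or None if infeasible.
    """
    # best maps "error units used so far" to the cheapest (time, degrees).
    best = {0: (0.0, [])}
    for layer_options in options:
        next_best = {}
        for used, (time, degrees) in best.items():
            for degree, cost, error in layer_options:
                total_error = used + error
                if total_error > error_budget:
                    continue  # this choice would exceed the budget
                candidate = (time + cost, degrees + [degree])
                if total_error not in next_best or candidate[0] < next_best[total_error][0]:
                    next_best[total_error] = candidate
        best = next_best
    if not best:
        return None
    return min(best.values(), key=lambda entry: entry[0])

# Hypothetical two-layer network: higher degree is slower but more accurate.
options = [
    [(3, 1.0, 4), (7, 2.0, 2), (15, 4.0, 1)],  # layer 1
    [(3, 1.0, 3), (7, 2.0, 1), (15, 4.0, 0)],  # layer 2
]
print(choose_degrees(options, error_budget=4))  # (4.0, [7, 7])
```

In this toy instance a uniform degree of 15 would cost 8.0 time units, while the per-layer choice meets the same error budget at 4.0, which is the kind of saving a layerwise search aims for.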

Fast Parameter Inference on Pulsar Timing Arrays with Normalizing Flows

Oct 18, 2023

Pulsar timing arrays (PTAs) perform Bayesian posterior inference with expensive MCMC methods. Given a dataset of ~10-100 pulsars and O(10^3) timing residuals each, producing a posterior distribution for the stochastic gravitational wave background (SGWB) can take days to a week. The computational bottleneck arises because the likelihood evaluation required for MCMC is extremely costly when considering the dimensionality of the search space. Fortunately, generating simulated data is fast, so modern simulation-based inference techniques can be brought to bear on the problem. In this paper, we demonstrate how conditional normalizing flows trained on simulated data can be used for extremely fast and accurate estimation of the SGWB posteriors, reducing the sampling time from weeks to a matter of seconds.

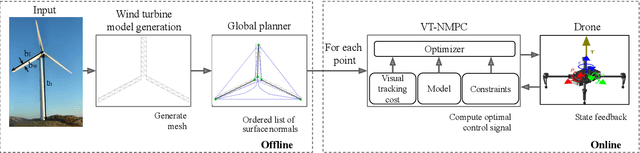

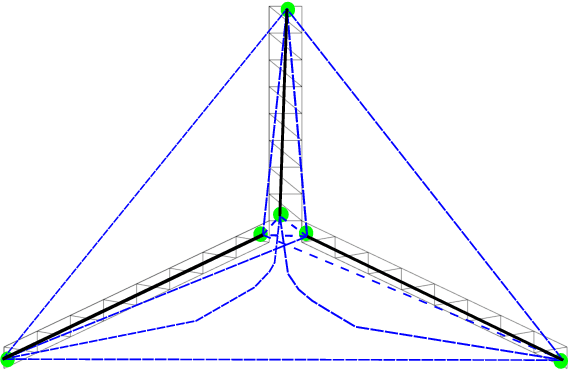

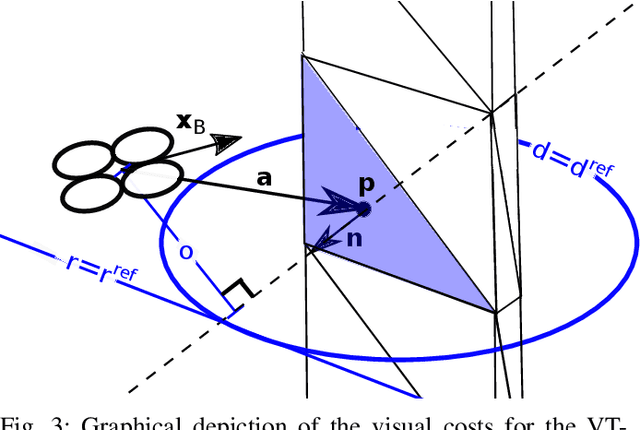

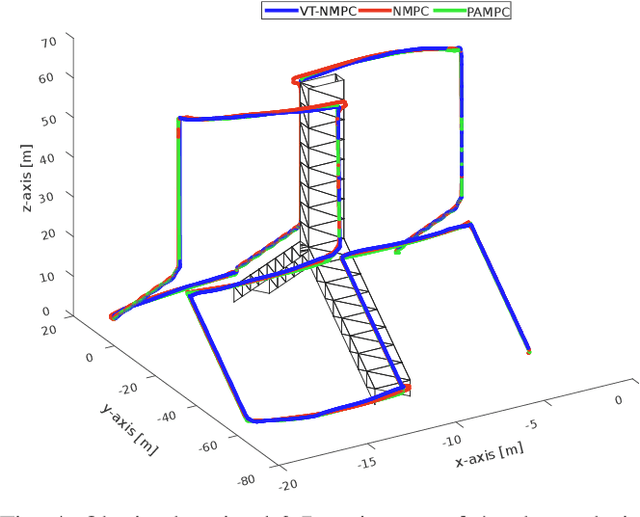

Visual Tracking Nonlinear Model Predictive Control Method for Autonomous Wind Turbine Inspection

Oct 21, 2023

Automated visual inspection of on- and offshore wind turbines using aerial robots provides several benefits, namely, a safe working environment by circumventing the need for workers to be suspended high above the ground, reduced inspection time, preventive maintenance, and access to hard-to-reach areas. A novel nonlinear model predictive control (NMPC) framework alongside a global wind turbine path planner is proposed to achieve distance-optimal coverage for wind turbine inspection. Unlike traditional MPC formulations, visual tracking NMPC (VT-NMPC) is designed to track an inspection surface, instead of a position and heading trajectory, thereby circumventing the need to provide an accurate predefined trajectory for the drone. An additional capability of the proposed VT-NMPC method is that, by incorporating inspection requirements as visual tracking costs to minimize, it naturally achieves the inspection task while respecting the physical constraints of the drone. Multiple simulation runs and real-world tests demonstrate the efficiency and efficacy of the proposed automated inspection framework, which outperforms the traditional MPC designs by providing full coverage of the target wind turbine blades, as well as its robustness to changing wind conditions. The implementation codes are open-sourced.

COVIDFakeExplainer: An Explainable Machine Learning based Web Application for Detecting COVID-19 Fake News

Oct 21, 2023

Fake news has emerged as a critical global issue, magnified by the COVID-19 pandemic, underscoring the need for effective preventive tools. Leveraging machine learning, including deep learning techniques, offers promise in combatting fake news. This paper goes further by establishing BERT as the superior model for fake news detection and demonstrating its utility as a tool to empower the general populace. We have implemented a browser extension, enhanced with explainability features, enabling real-time identification of fake news and delivering easily interpretable explanations. To achieve this, we have employed two publicly available datasets and created seven distinct data configurations to evaluate three prominent machine learning architectures. Our comprehensive experiments affirm BERT's exceptional accuracy in detecting COVID-19-related fake news. Furthermore, we have integrated an explainability component into the BERT model and deployed it as a service through Amazon's cloud API hosting (AWS). We have developed a browser extension that interfaces with the API, allowing users to select and transmit data from web pages, receiving an intelligible classification in return. This paper presents a practical end-to-end solution, highlighting the feasibility of constructing a holistic system for fake news detection, which can significantly benefit society.

Fast Diffusion GAN Model for Symbolic Music Generation Controlled by Emotions

Oct 21, 2023

Diffusion models have shown promising results for a wide range of generative tasks with continuous data, such as image and audio synthesis. However, little progress has been made on using diffusion models to generate discrete symbolic music because this new class of generative models is not well suited for discrete data, while its iterative sampling process is computationally expensive. In this work, we propose a diffusion model combined with a Generative Adversarial Network, aiming to (i) alleviate one of the remaining challenges in algorithmic music generation, which is the control of generation towards a target emotion, and (ii) mitigate the slow sampling drawback of diffusion models applied to symbolic music generation. We first used a trained Variational Autoencoder to obtain embeddings of a symbolic music dataset with emotion labels and then used those to train a diffusion model. Our results demonstrate the successful control of our diffusion model to generate symbolic music with a desired emotion. Our model achieves several orders of magnitude improvement in computational cost, requiring merely four time steps to denoise, while the number of steps required by current state-of-the-art diffusion models for symbolic music generation is on the order of thousands.

Beyond Accuracy: Evaluating Self-Consistency of Code Large Language Models with IdentityChain

Oct 21, 2023

Code Large Language Models (Code LLMs) are being increasingly employed in real-life applications, so evaluating them is critical. While the general accuracy of Code LLMs on individual tasks has been extensively evaluated, their self-consistency across different tasks is overlooked. Intuitively, a trustworthy model should be self-consistent when generating natural language specifications for its own code and generating code for its own specifications. Failure to preserve self-consistency reveals a lack of understanding of the shared semantics underlying natural language and programming language, and therefore undermines the trustworthiness of a model. In this paper, we first formally define the self-consistency of Code LLMs and then design a framework, IdentityChain, which effectively and efficiently evaluates the self-consistency and general accuracy of a model at the same time. We study eleven Code LLMs and show that they fail to preserve self-consistency, which is indeed a distinct aspect from general accuracy. Furthermore, we show that IdentityChain can be used as a model debugging tool to expose weaknesses of Code LLMs by demonstrating three major weaknesses that we identify in current models using IdentityChain. Our code is available at https://github.com/marcusm117/IdentityChain.
