"Time": models, code, and papers

Recursive and non-recursive filters for sequential smoothing and prediction with instantaneous phase and frequency estimation applications

Nov 14, 2023

A simple procedure for the design of recursive digital filters with an infinite impulse response (IIR) and non-recursive digital filters with a finite impulse response (FIR) is described. The fixed-lag smoothing filters are designed to track an approximately polynomial signal of specified degree without bias at steady state, while minimizing the gain of high-frequency (coloured) noise with a specified power spectral density. For the IIR variant, the procedure determines the optimal lag (i.e. the passband group delay) yielding a recursive low-complexity smoother of low order, with a specified bandwidth, and excellent passband phase linearity. The filters are applied to the problem of instantaneous frequency estimation, e.g. for Doppler-shift measurement, for a complex exponential with polynomial phase progression in additive white noise. For this classical problem, simulations show that the incorporation of a prediction filter (with a one-sample lead) reduces the incidence of (phase or frequency) angle unwrapping errors, particularly for signals with high rates of angle change, which are known to limit the performance of standard FIR estimators at low SNR. This improvement allows the instantaneous phase of low-frequency signals to be estimated, e.g. for time-delay measurement, and/or the instantaneous frequency of frequency-modulated signals, down to a lower SNR. In the absence of unwrapping errors, the error variance of the IIR estimators (with the optimal phase lag) reaches the FIR lower bound, at a significantly lower computational cost. Guidelines for configuring and tuning both FIR and IIR filters are provided.
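The phase-differencing idea behind instantaneous frequency estimation can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the paper's filter design: the function names, the noise-free chirp, and the plain 9-tap moving average (standing in for a designed FIR smoother) are all hypothetical choices made here.

```python
import cmath
import math


def instantaneous_frequency(samples, sample_rate):
    """Estimate instantaneous frequency (Hz) by differencing the phase of complex samples."""
    freqs = []
    for prev, curr in zip(samples, samples[1:]):
        # The angle of curr * conj(prev) is the phase increment, wrapped to (-pi, pi].
        dphi = cmath.phase(curr * prev.conjugate())
        freqs.append(dphi * sample_rate / (2.0 * math.pi))
    return freqs


def moving_average(values, length):
    """Plain FIR smoother: mean of the most recent `length` values (assumed stand-in)."""
    return [
        sum(values[i - length + 1:i + 1]) / length
        for i in range(length - 1, len(values))
    ]


# Hypothetical test signal: complex exponential with quadratic phase (a linear chirp).
fs = 1000.0    # sample rate in Hz
f0 = 50.0      # start frequency in Hz
rate = 20.0    # chirp rate in Hz per second
n = 200
signal = [
    cmath.exp(2j * math.pi * (f0 * (k / fs) + 0.5 * rate * (k / fs) ** 2))
    for k in range(n)
]

raw = instantaneous_frequency(signal, fs)   # one estimate per pair of adjacent samples
smooth = moving_average(raw, 9)             # smoothed estimate with a 4-sample lag
```

Because the frequency here is a degree-1 polynomial in time, the 9-tap average reproduces the raw estimate from four samples earlier exactly, which is a small instance of tracking a polynomial without bias at a fixed lag. With noise added, the wrapped phase increment is where unwrapping errors would appear.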

3DHR-Co: A Collaborative Test-time Refinement Framework for In-the-Wild 3D Human-Body Reconstruction Task

Oct 02, 2023

The field of 3D human-body reconstruction (abbreviated as 3DHR) that utilizes parametric pose and shape representations has witnessed significant advancements in recent years. However, the application of 3DHR techniques to real-world, diverse scenes, known as in-the-wild data, still faces limitations. The primary challenge is that accurate 3D human pose ground truth (GT) for in-the-wild scenes remains difficult to obtain due to various factors. Recent test-time refinement approaches for 3DHR leverage initial 2D off-the-shelf human keypoint information to compensate for the lack of 3D supervision on in-the-wild data. However, we observed that additional 2D supervision alone can cause overfitting in common 3DHR backbones, making the 3DHR test-time refinement task seem intractable. We answer this challenge by proposing a strategy that complements 3DHR test-time refinement work with a collaborative approach. Specifically, we first apply a pre-adaptation approach that combines various 3DHR models in a single framework to directly improve their initial outputs. This approach is then combined with test-time adaptation under specific settings that minimize overfitting to further boost 3DHR performance. The whole framework is termed 3DHR-Co, and experiments show that the proposed work can significantly improve the scores of common classic 3DHR backbones, reducing pose error by up to 34 mm and placing them among the top performers on the in-the-wild benchmark data. This achievement shows that our approach helps unveil the true potential of common classic 3DHR backbones. Based on these findings, we further investigate various settings of the proposed framework to better elaborate the capability of our collaborative approach in the 3DHR task.

An Improved Transformer-based Model for Detecting Phishing, Spam, and Ham: A Large Language Model Approach

Nov 12, 2023

Phishing and spam detection is a long-standing challenge that has been the subject of much academic research. Large Language Models (LLMs) have vast potential to transform society and provide new and innovative approaches to solve well-established challenges. Phishing and spam have caused financial hardship and lost time and resources for email users all over the world, and frequently serve as an entry point for ransomware threat actors. While detection approaches exist, especially heuristic-based approaches, LLMs offer the potential to venture into a new, unexplored area for understanding and solving this challenge. LLMs have rapidly altered the landscape for businesses, consumers, and academia, and demonstrate transformational potential for society. Based on this, applying these new and innovative approaches to email detection is a rational next step in academic research. In this work, we present IPSDM, our model based on fine-tuning the BERT family of models to specifically detect phishing and spam email. We demonstrate that our fine-tuned version, IPSDM, is able to better classify emails in both unbalanced and balanced datasets. This work serves as an important first step towards employing LLMs to improve the security of our information systems.

A GPU-Accelerated Moving-Horizon Algorithm for Training Deep Classification Trees on Large Datasets

Nov 12, 2023

Decision trees are essential yet NP-complete to train, prompting the widespread use of heuristic methods such as CART, which suffers from sub-optimal performance due to its greedy nature. Recently, breakthroughs in finding optimal decision trees have emerged; however, these methods still face significant computational costs and struggle with continuous features in large-scale datasets and deep trees. To address these limitations, we introduce a moving-horizon differential evolution algorithm for classification trees with continuous features (MH-DEOCT). Our approach consists of a discrete tree decoding method that eliminates duplicated searches between adjacent samples, a GPU-accelerated implementation that significantly reduces running time, and a moving-horizon strategy that iteratively trains shallow subtrees at each node to balance the vision and optimizer capability. Comprehensive studies on 68 UCI datasets demonstrate that our approach outperforms the heuristic method CART on training and testing accuracy by an average of 3.44% and 1.71%, respectively. Moreover, these numerical studies empirically demonstrate that MH-DEOCT achieves near-optimal performance (only 0.38% and 0.06% worse than the global optimal method on training and testing, respectively), while it offers remarkable scalability for deep trees (e.g., depth=8) and large-scale datasets (e.g., ten million samples).

Osteoporosis Prediction from Hand and Wrist X-rays using Image Segmentation and Self-Supervised Learning

Nov 12, 2023

Osteoporosis is a widespread and chronic metabolic bone disease that often remains undiagnosed and untreated due to limited access to bone mineral density (BMD) tests like dual-energy X-ray absorptiometry (DXA). In response to this challenge, current advancements are pivoting towards detecting osteoporosis by examining alternative indicators from peripheral bone areas, with the goal of increasing screening rates without added expense or time. In this paper, we present a method to predict osteoporosis using hand and wrist X-ray images, which are both widely accessible and affordable, though their link to DXA-based data is not thoroughly explored. First, our method segments the ulna, radius, and metacarpal bones using a foundational model for image segmentation. Then, we use a self-supervised learning approach to extract meaningful representations without the need for explicit labels, and go on to classify osteoporosis in a supervised manner. Our method is evaluated on a dataset of 192 individuals, cross-referencing their verified osteoporosis conditions against the standard DXA test. With a notable classification score (AUC=0.83), our model represents a pioneering effort in leveraging vision-based techniques for osteoporosis identification from peripheral skeletal sites.

Coexistence of OTFS Modulation With OFDM-based Communication Systems

Nov 12, 2023

This study examines the coexistence of orthogonal time-frequency space (OTFS) modulation with current fourth- and fifth-generation (4G/5G) wireless communication systems that primarily use orthogonal frequency-division multiplexing (OFDM) waveforms. We first derive the input-output relation (IOR) of OTFS when it coexists with an OFDM system while considering the impact of unequal lengths of the cyclic prefixes (CPs) in the OTFS signal. We show analytically that the inclusion of multiple CPs in the OTFS signal makes the effective sampled delay-Doppler (DD) domain channel response less sparse. We also show that the effective DD domain channel coefficients for OTFS in coexisting systems are influenced by the unequal lengths of the CPs. Subsequently, we propose an embedded pilot-aided channel estimation (CE) technique for OTFS in coexisting systems that leverages the derived IOR for accurate channel characterization. Using numerical results, we show that ignoring the impact of unequal lengths of the CPs during signal detection can degrade the bit error rate performance of OTFS in coexisting systems. We also show that the proposed CE technique for OTFS in coexisting systems outperforms the state-of-the-art threshold-based CE technique.

ReIDTracker Sea: the technical report of BoaTrack and SeaDronesSee-MOT challenge at MaCVi of WACV24

Nov 12, 2023

Multi-Object Tracking is one of the most important technologies in maritime computer vision. Our solution explores Multi-Object Tracking in maritime Unmanned Aerial Vehicle (UAV) and Unmanned Surface Vehicle (USV) usage scenarios. Most current Multi-Object Tracking algorithms require complex association strategies and association information (2D location and motion, 3D motion, 3D depth, 2D appearance) to achieve better performance, which makes the entire tracking system extremely complex and heavy. At the same time, most current Multi-Object Tracking algorithms still require video annotation data for training, which is costly to obtain. Our solution explores Multi-Object Tracking in a completely unsupervised way. The scheme accomplishes instance representation learning by using self-supervision on ImageNet. Then, by cooperating with high-quality detectors, the multi-target tracking task can be completed simply and efficiently. The scheme achieved top-3 performance on both the UAV-based Multi-Object Tracking with Reidentification and USV-based Multi-Object Tracking benchmarks, and the solution won multiple Multi-Object Tracking competitions, such as BDD100K MOT, MOTS, and Waymo 2D MOT.

ArcheType: A Novel Framework for Open-Source Column Type Annotation using Large Language Models

Nov 06, 2023

Existing deep-learning approaches to semantic column type annotation (CTA) have important shortcomings: they rely on semantic types which are fixed at training time; require a large number of training samples per type and incur large run-time inference costs; and their performance can degrade when evaluated on novel datasets, even when types remain constant. Large language models have exhibited strong zero-shot classification performance on a wide range of tasks and in this paper we explore their use for CTA. We introduce ArcheType, a simple, practical method for context sampling, prompt serialization, model querying, and label remapping, which enables large language models to solve CTA problems in a fully zero-shot manner. We ablate each component of our method separately, and establish that improvements to context sampling and label remapping provide the most consistent gains. ArcheType establishes a new state-of-the-art performance on zero-shot CTA benchmarks (including three new domain-specific benchmarks which we release along with this paper), and when used in conjunction with classical CTA techniques, it outperforms a SOTA DoDuo model on the fine-tuned SOTAB benchmark. Our code is available at https://github.com/penfever/ArcheType.
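To make the label remapping step concrete, here is a minimal sketch of one way a free-form model answer could be mapped back onto a fixed label set. It does not reproduce ArcheType's actual rules, which are not given in the abstract: the function name `remap_label`, the three-stage fallback (exact match, containment, fuzzy match), and the 0.6 similarity cutoff are assumptions made for illustration.

```python
import difflib


def remap_label(raw_answer, allowed_labels, fallback=None):
    """Map a free-form answer onto one of `allowed_labels`, or return `fallback`."""
    # Normalise: trim whitespace, surrounding quotes and full stops, and case.
    cleaned = raw_answer.strip().strip('."\'').lower()
    by_lower = {label.lower(): label for label in allowed_labels}

    # 1. Exact match after normalisation.
    if cleaned in by_lower:
        return by_lower[cleaned]

    # 2. Containment: the answer mentions a label somewhere in a longer sentence.
    contained = [label for key, label in by_lower.items() if key in cleaned]
    if contained:
        return max(contained, key=len)  # prefer the most specific (longest) label

    # 3. Fuzzy match to absorb small spelling differences (assumed cutoff of 0.6).
    close = difflib.get_close_matches(cleaned, list(by_lower), n=1, cutoff=0.6)
    return by_lower[close[0]] if close else fallback


# Hypothetical label set for a column type annotation task.
labels = ["country", "city", "person name"]
```

For example, `remap_label("The column contains person name values", labels)` returns `"person name"`, while an answer with no resemblance to any label returns the fallback, which a caller could use as a signal to re-query the model.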

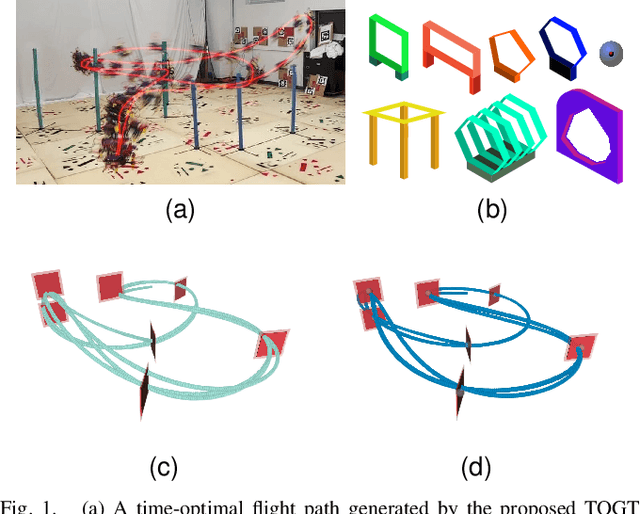

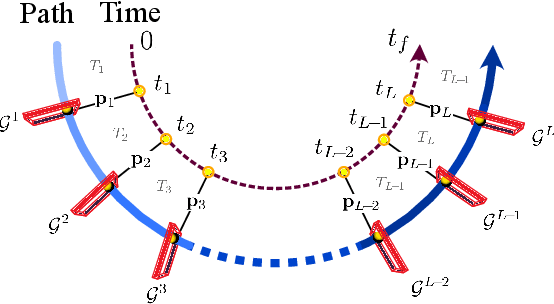

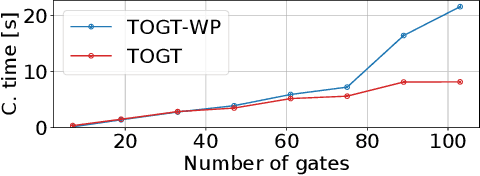

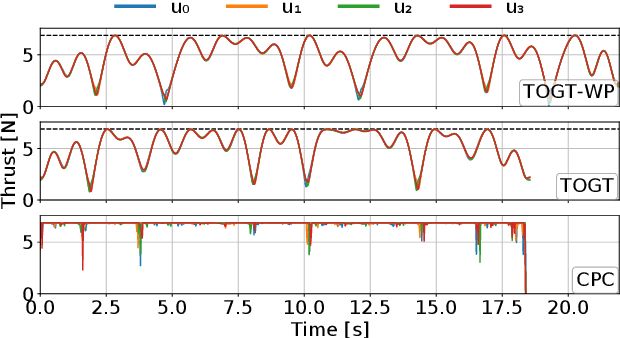

Time-Optimal Gate-Traversing Planner for Autonomous Drone Racing

Sep 16, 2023

In drone racing, the time-minimum trajectory is affected by the drone's capabilities, the layout of the race track, and the configurations of the gates (e.g., their shapes and sizes). However, previous studies neglect the configuration of the gates, simply rendering drone racing a waypoint-passing task. This formulation often leads to a conservative choice of paths through the gates, as the spatial potential of the gates is not fully utilized. To address this issue, we present a time-optimal planner that can faithfully model gate constraints with various configurations and thereby generate a more time-efficient trajectory while considering the single-rotor-thrust limits. Our approach is computationally efficient, taking only a few seconds to compute the full state and control trajectories of the drone through tracks with dozens of different gates. Extensive simulations and experiments confirm the effectiveness of the proposed methodology, showing that the lap time can be further reduced by taking into account the gates' configurations. We validate our planner in real-world flights and demonstrate a super-extreme flight trajectory through race tracks.

Game Solving with Online Fine-Tuning

Nov 13, 2023

Game solving is similar to, yet more difficult than, mastering a game. Solving a game typically means finding the game-theoretic value (the outcome given optimal play), and optionally a full strategy to follow in order to achieve that outcome. The AlphaZero algorithm has demonstrated super-human level play, and its powerful policy and value predictions have also served as heuristics in game solving. However, to solve a game and obtain a full strategy, a winning response must be found for all possible moves by the losing player. This includes very poor lines of play from the losing side, which the AlphaZero self-play process will not encounter. AlphaZero-based heuristics can be highly inaccurate when evaluating these out-of-distribution positions, which occur throughout the entire search. To address this issue, this paper investigates applying online fine-tuning while searching and proposes two methods to learn tailor-designed heuristics for game solving. Our experiments show that using online fine-tuning can solve a series of challenging 7x7 Killall-Go problems, using only 23.54% of computation time compared to the baseline without online fine-tuning. Results suggest that the savings scale with problem size. Our method can further be extended to any tree search algorithm for problem solving. Our code is available at https://rlg.iis.sinica.edu.tw/papers/neurips2023-online-fine-tuning-solver.
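The notion of a game-theoretic value can be illustrated on a toy game. The sketch below is not the paper's solver and uses no learned heuristics: it exhaustively solves a small subtraction game (an assumed example in which players alternately remove 1 to 3 stones and whoever takes the last stone wins), returning both the value and a move that achieves it.

```python
from functools import lru_cache

MOVES = (1, 2, 3)  # assumed rule: a player may remove 1, 2, or 3 stones per turn


@lru_cache(maxsize=None)
def solve(stones):
    """Return (value, move) for the player to move: value is +1 for a win, -1 for a loss."""
    if stones == 0:
        # No stones left: the previous player took the last stone, so the mover has lost.
        return -1, None
    best_value, best_move = -2, None
    for take in MOVES:
        if take > stones:
            break
        child_value, _ = solve(stones - take)
        value = -child_value  # the opponent's loss is the mover's win
        if value > best_value:
            best_value, best_move = value, take
        if best_value == 1:
            break  # a winning reply is proven; no need to search further
    return best_value, best_move
```

Here `solve(7)` returns `(1, 3)`: the player to move wins by taking three stones, leaving four. Note that proving the win requires checking every reply available to the losing side from the resulting position, which is the part of the search where, per the abstract, self-play-trained heuristics tend to be least reliable.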
