"Time": models, code, and papers

Scheduling Distributed Flexible Assembly Lines using Safe Reinforcement Learning with Soft Shielding

Nov 21, 2023

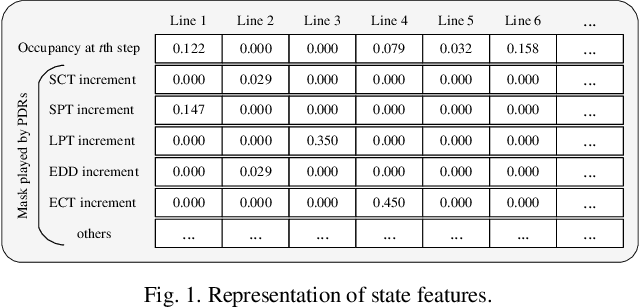

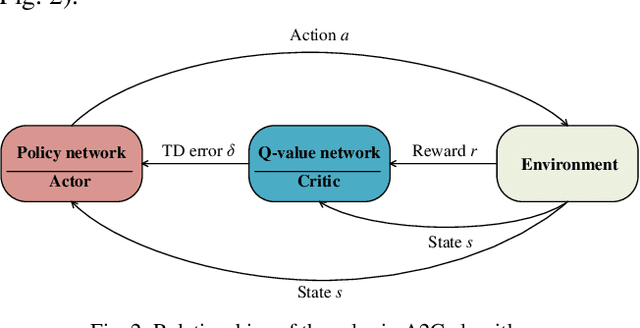

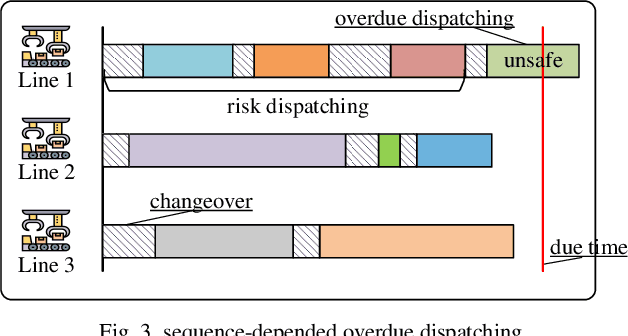

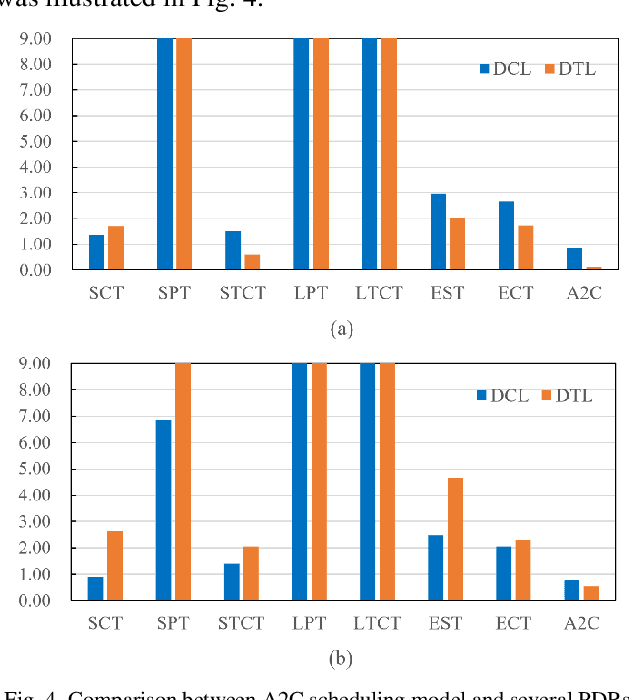

Highly automated assembly lines enable significant productivity gains in the manufacturing industry, particularly in mass production condition. Nonetheless, challenges persist in job scheduling for make-to-job and mass customization, necessitating further investigation to improve efficiency, reduce tardiness, promote safety and reliability. In this contribution, an advantage actor-critic based reinforcement learning method is proposed to address scheduling problems of distributed flexible assembly lines in a real-time manner. To enhance the performance, a more condensed environment representation approach is proposed, which is designed to work with the masks made by priority dispatching rules to generate fixed and advantageous action space. Moreover, a Monte-Carlo tree search based soft shielding component is developed to help address long-sequence dependent unsafe behaviors and monitor the risk of overdue scheduling. Finally, the proposed algorithm and its soft shielding component are validated in performance evaluation.

Quadcopter Trajectory Time Minimization and Robust Collision Avoidance via Optimal Time Allocation

Sep 15, 2023

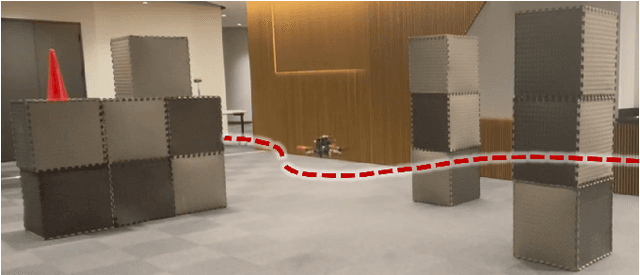

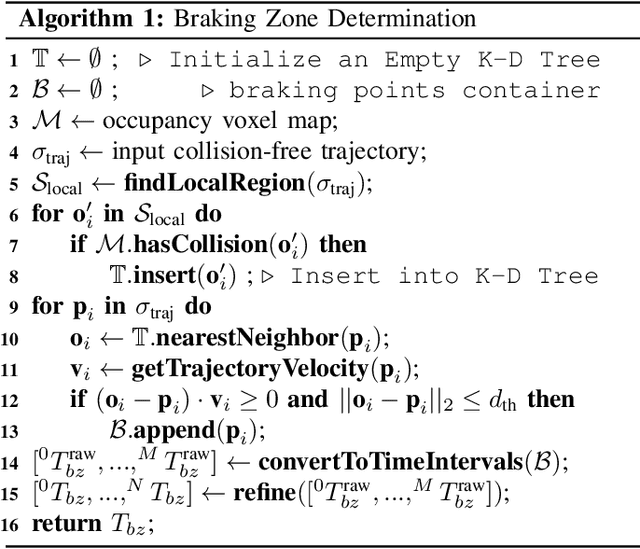

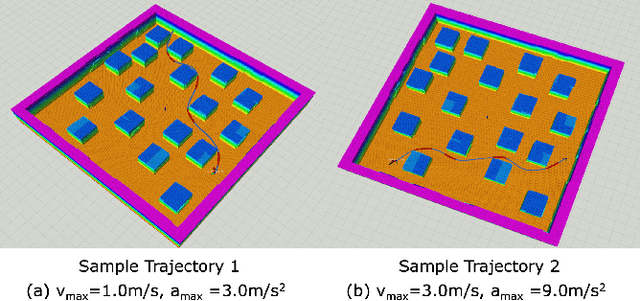

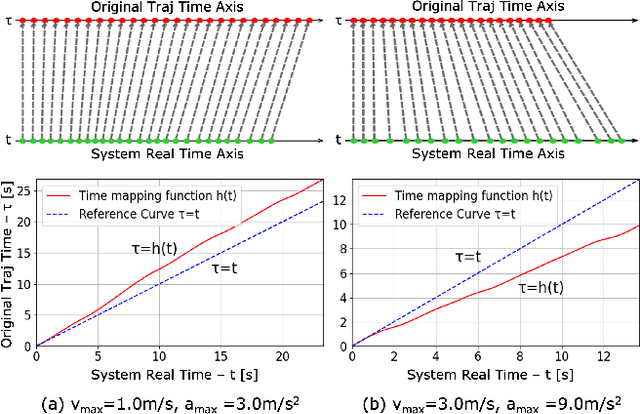

Autonomous navigation requires robots to generate trajectories for collision avoidance efficiently. Although plenty of previous works have proven successful in generating smooth and spatially collision-free trajectories, their solutions often suffer from suboptimal time efficiency and potential unsafety, particularly when accounting for uncertainties in robot perception and control. To address this issue, this paper presents the Robust Optimal Time Allocation (ROTA) framework. This framework is designed to optimize the time progress of the trajectories temporally, serving as a post-processing tool to enhance trajectory time efficiency and safety under uncertainties. In this study, we begin by formulating a non-convex optimization problem aimed at minimizing trajectory execution time while incorporating constraints on collision probability as the robot approaches obstacles. Subsequently, we introduce the concept of the trajectory braking zone and adopt the chance-constrained formulation for robust collision avoidance in the braking zones. Finally, the non-convex optimization problem is reformulated into a second-order cone programming problem to achieve real-time performance. Through simulations and physical flight experiments, we demonstrate that the proposed approach effectively reduces trajectory execution time while enabling robust collision avoidance in complex environments.

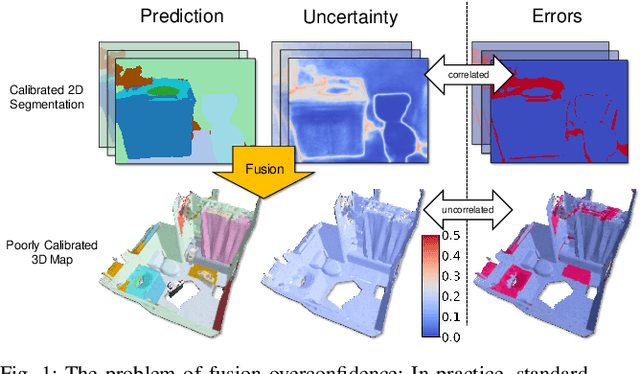

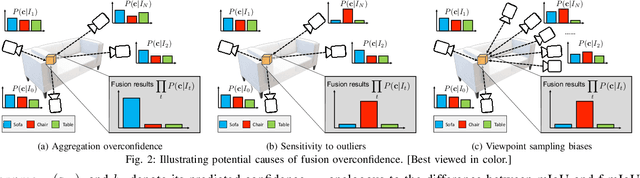

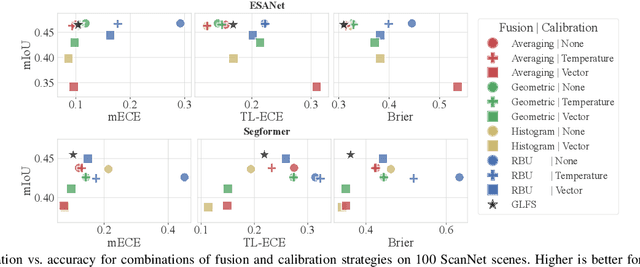

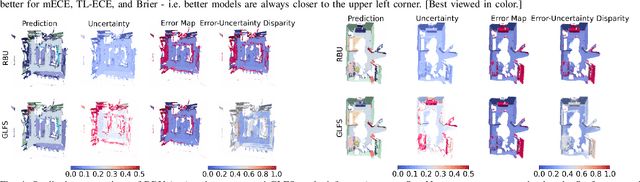

On the Overconfidence Problem in Semantic 3D Mapping

Nov 16, 2023

Semantic 3D mapping, the process of fusing depth and image segmentation information between multiple views to build 3D maps annotated with object classes in real-time, is a recent topic of interest. This paper highlights the fusion overconfidence problem, in which conventional mapping methods assign high confidence to the entire map even when they are incorrect, leading to miscalibrated outputs. Several methods to improve uncertainty calibration at different stages in the fusion pipeline are presented and compared on the ScanNet dataset. We show that the most widely used Bayesian fusion strategy is among the worst calibrated, and propose a learned pipeline that combines fusion and calibration, GLFS, which achieves simultaneously higher accuracy and 3D map calibration while retaining real-time capability. We further illustrate the importance of map calibration on a downstream task by showing that incorporating proper semantic fusion on a modular ObjectNav agent improves its success rates. Our code will be provided on Github for reproducibility upon acceptance.

Spike-time encoding of gas concentrations using neuromorphic analog sensory front-end

Oct 11, 2023Gas concentration detection is important for applications such as gas leakage monitoring. Metal Oxide (MOx) sensors show high sensitivities for specific gases, which makes them particularly useful for such monitoring applications. However, how to efficiently sample and further process the sensor responses remains an open question. Here we propose a simple analog circuit design inspired by the spiking output of the mammalian olfactory bulb and by event-based vision sensors. Our circuit encodes the gas concentration in the time difference between the pulses of two separate pathways. We show that in the setting of controlled airflow-embedded gas injections, the time difference between the two generated pulses varies inversely with gas concentration, which is in agreement with the spike timing difference between tufted cells and mitral cells of the mammalian olfactory bulb. Encoding concentration information in analog spike timings may pave the way for rapid and efficient gas detection, and ultimately lead to data- and power-efficient monitoring devices to be deployed in uncontrolled and turbulent environments.

USLR: an open-source tool for unbiased and smooth longitudinal registration of brain MR

Nov 14, 2023We present USLR, a computational framework for longitudinal registration of brain MRI scans to estimate nonlinear image trajectories that are smooth across time, unbiased to any timepoint, and robust to imaging artefacts. It operates on the Lie algebra parameterisation of spatial transforms (which is compatible with rigid transforms and stationary velocity fields for nonlinear deformation) and takes advantage of log-domain properties to solve the problem using Bayesian inference. USRL estimates rigid and nonlinear registrations that: (i) bring all timepoints to an unbiased subject-specific space; and (i) compute a smooth trajectory across the imaging time-series. We capitalise on learning-based registration algorithms and closed-form expressions for fast inference. A use-case Alzheimer's disease study is used to showcase the benefits of the pipeline in multiple fronts, such as time-consistent image segmentation to reduce intra-subject variability, subject-specific prediction or population analysis using tensor-based morphometry. We demonstrate that such approach improves upon cross-sectional methods in identifying group differences, which can be helpful in detecting more subtle atrophy levels or in reducing sample sizes in clinical trials. The code is publicly available in https://github.com/acasamitjana/uslr

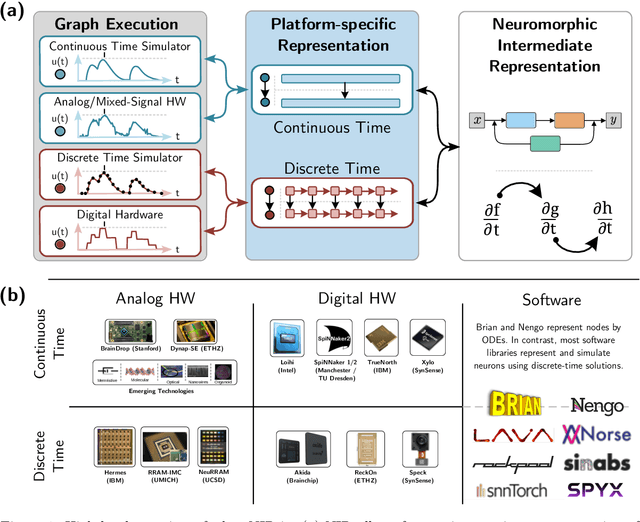

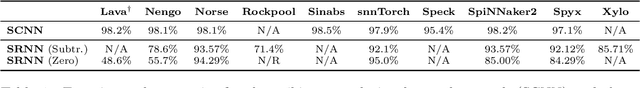

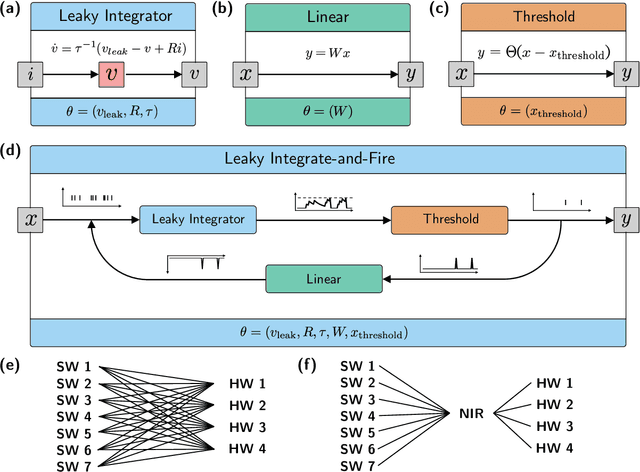

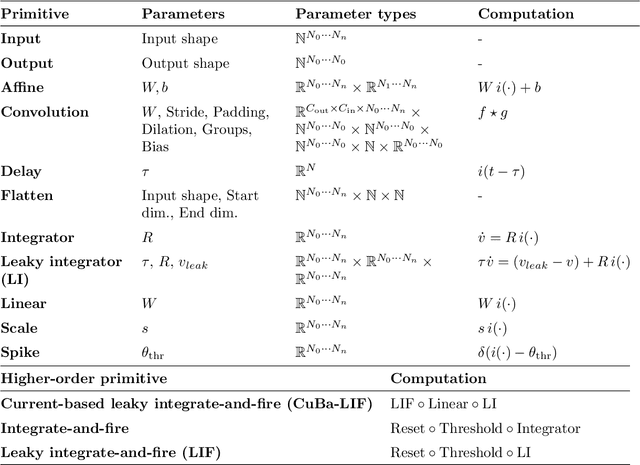

Neuromorphic Intermediate Representation: A Unified Instruction Set for Interoperable Brain-Inspired Computing

Nov 24, 2023

Spiking neural networks and neuromorphic hardware platforms that emulate neural dynamics are slowly gaining momentum and entering main-stream usage. Despite a well-established mathematical foundation for neural dynamics, the implementation details vary greatly across different platforms. Correspondingly, there are a plethora of software and hardware implementations with their own unique technology stacks. Consequently, neuromorphic systems typically diverge from the expected computational model, which challenges the reproducibility and reliability across platforms. Additionally, most neuromorphic hardware is limited by its access via a single software frameworks with a limited set of training procedures. Here, we establish a common reference-frame for computations in neuromorphic systems, dubbed the Neuromorphic Intermediate Representation (NIR). NIR defines a set of computational primitives as idealized continuous-time hybrid systems that can be composed into graphs and mapped to and from various neuromorphic technology stacks. By abstracting away assumptions around discretization and hardware constraints, NIR faithfully captures the fundamental computation, while simultaneously exposing the exact differences between the evaluated implementation and the idealized mathematical formalism. We reproduce three NIR graphs across 7 neuromorphic simulators and 4 hardware platforms, demonstrating support for an unprecedented number of neuromorphic systems. With NIR, we decouple the evolution of neuromorphic hardware and software, ultimately increasing the interoperability between platforms and improving accessibility to neuromorphic technologies. We believe that NIR is an important step towards the continued study of brain-inspired hardware and bottom-up approaches aimed at an improved understanding of the computational underpinnings of nervous systems.

Electric Vehicles coordination for grid balancing using multi-objective Harris Hawks Optimization

Nov 24, 2023The rise of renewables coincides with the shift towards Electrical Vehicles (EVs) posing technical and operational challenges for the energy balance of the local grid. Nowadays, the energy grid cannot deal with a spike in EVs usage leading to a need for more coordinated and grid aware EVs charging and discharging strategies. However, coordinating power flow from multiple EVs into the grid requires sophisticated algorithms and load-balancing strategies as the complexity increases with more control variables and EVs, necessitating large optimization and decision search spaces. In this paper, we propose an EVs fleet coordination model for the day ahead aiming to ensure a reliable energy supply and maintain a stable local grid, by utilizing EVs to store surplus energy and discharge it during periods of energy deficit. The optimization problem is addressed using Harris Hawks Optimization (HHO) considering criteria related to energy grid balancing, time usage preference, and the location of EV drivers. The EVs schedules, associated with the position of individuals from the population, are adjusted through exploration and exploitation operations, and their technical and operational feasibility is ensured, while the rabbit individual is updated with a non-dominated EV schedule selected per iteration using a roulette wheel algorithm. The solution is evaluated within the framework of an e-mobility service in Terni city. The results indicate that coordinated charging and discharging of EVs not only meet balancing service requirements but also align with user preferences with minimal deviations.

Trainwreck: A damaging adversarial attack on image classifiers

Nov 24, 2023Adversarial attacks are an important security concern for computer vision (CV), as they enable malicious attackers to reliably manipulate CV models. Existing attacks aim to elicit an output desired by the attacker, but keep the model fully intact on clean data. With CV models becoming increasingly valuable assets in applied practice, a new attack vector is emerging: disrupting the models as a form of economic sabotage. This paper opens up the exploration of damaging adversarial attacks (DAAs) that seek to damage the target model and maximize the total cost incurred by the damage. As a pioneer DAA, this paper proposes Trainwreck, a train-time attack that poisons the training data of image classifiers to degrade their performance. Trainwreck conflates the data of similar classes using stealthy ($\epsilon \leq 8/255$) class-pair universal perturbations computed using a surrogate model. Trainwreck is a black-box, transferable attack: it requires no knowledge of the target model's architecture, and a single poisoned dataset degrades the performance of any model trained on it. The experimental evaluation on CIFAR-10 and CIFAR-100 demonstrates that Trainwreck is indeed an effective attack across various model architectures including EfficientNetV2, ResNeXt-101, and a finetuned ViT-L-16. The strength of the attack can be customized by the poison rate parameter. Finally, data redundancy with file hashing and/or pixel difference are identified as a reliable defense technique against Trainwreck or similar DAAs. The code is available at https://github.com/JanZahalka/trainwreck.

Prototype of deployment of Federated Learning with IoT devices

Nov 24, 2023In the age of technology, data is an increasingly important resource. This importance is growing in the field of Artificial Intelligence (AI), where sub fields such as Machine Learning (ML) need more and more data to achieve better results. Internet of Things (IoT) is the connection of sensors and smart objects to collect and exchange data, in addition to achieving many other tasks. A huge amount of the resource desired, data, is stored in mobile devices, sensors and other Internet of Things (IoT) devices, but remains there due to data protection restrictions. At the same time these devices do not have enough data or computational capacity to train good models. Moreover, transmitting, storing and processing all this data on a centralised server is problematic. Federated Learning (FL) provides an innovative solution that allows devices to learn in a collaborative way. More importantly, it accomplishes this without violating data protection laws. FL is currently growing, and there are several solutions that implement it. This article presents a prototype of a FL solution where the IoT devices used were raspberry pi boards. The results compare the performance of a solution of this type with those obtained in traditional approaches. In addition, the FL solution performance was tested in a hostile environment. A convolutional neural network (CNN) and a image data set were used. The results show the feasibility and usability of these techniques, although in many cases they do not reach the performance of traditional approaches.

Grad-Shafranov equilibria via data-free physics informed neural networks

Nov 22, 2023

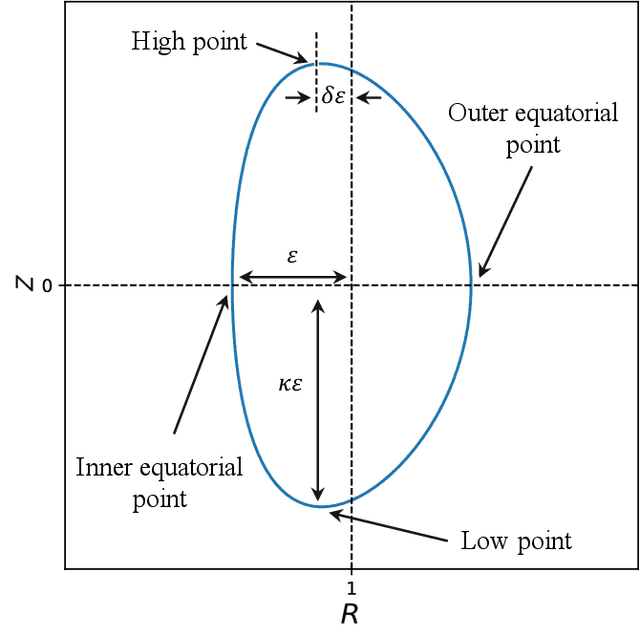

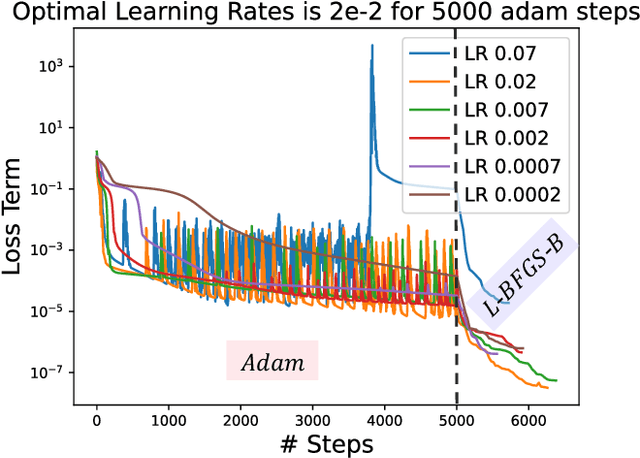

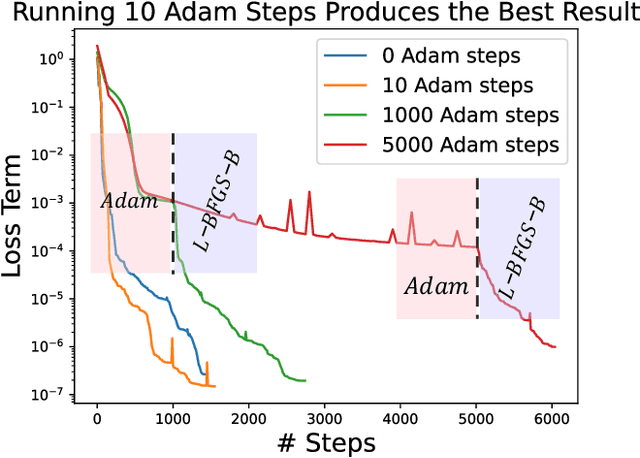

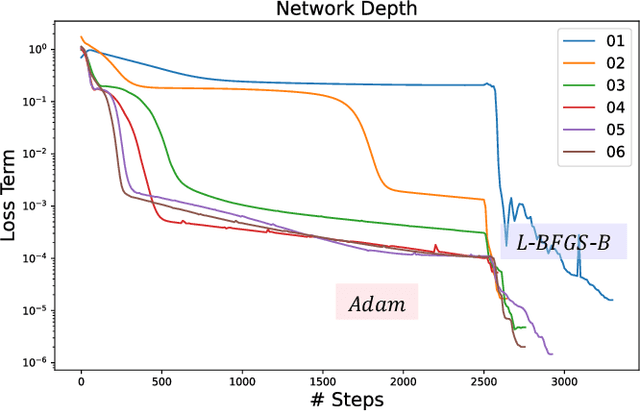

A large number of magnetohydrodynamic (MHD) equilibrium calculations are often required for uncertainty quantification, optimization, and real-time diagnostic information, making MHD equilibrium codes vital to the field of plasma physics. In this paper, we explore a method for solving the Grad-Shafranov equation by using Physics-Informed Neural Networks (PINNs). For PINNs, we optimize neural networks by directly minimizing the residual of the PDE as a loss function. We show that PINNs can accurately and effectively solve the Grad-Shafranov equation with several different boundary conditions. We also explore the parameter space by varying the size of the model, the learning rate, and boundary conditions to map various trade-offs such as between reconstruction error and computational speed. Additionally, we introduce a parameterized PINN framework, expanding the input space to include variables such as pressure, aspect ratio, elongation, and triangularity in order to handle a broader range of plasma scenarios within a single network. Parametrized PINNs could be used in future work to solve inverse problems such as shape optimization.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge