"Time": models, code, and papers

A Survey of Evaluation Metrics Used for NLG Systems

Oct 05, 2020

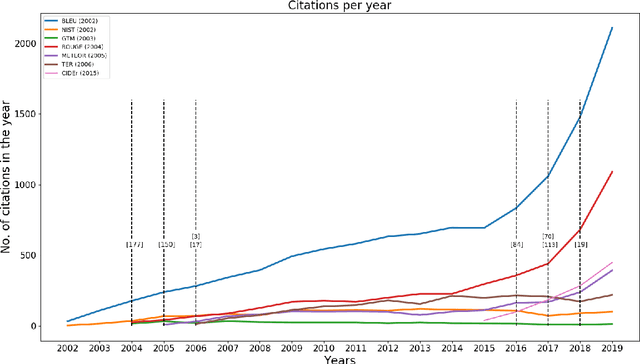

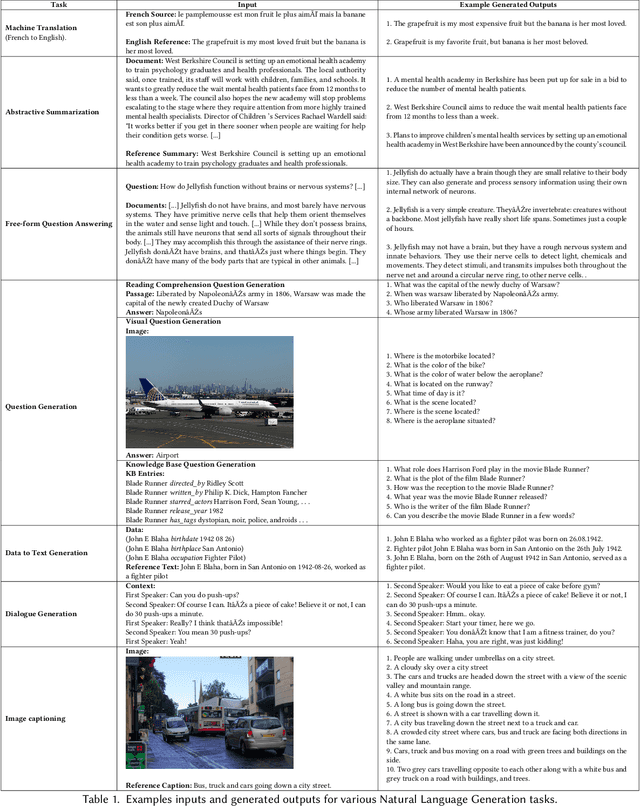

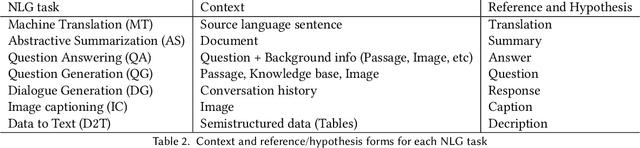

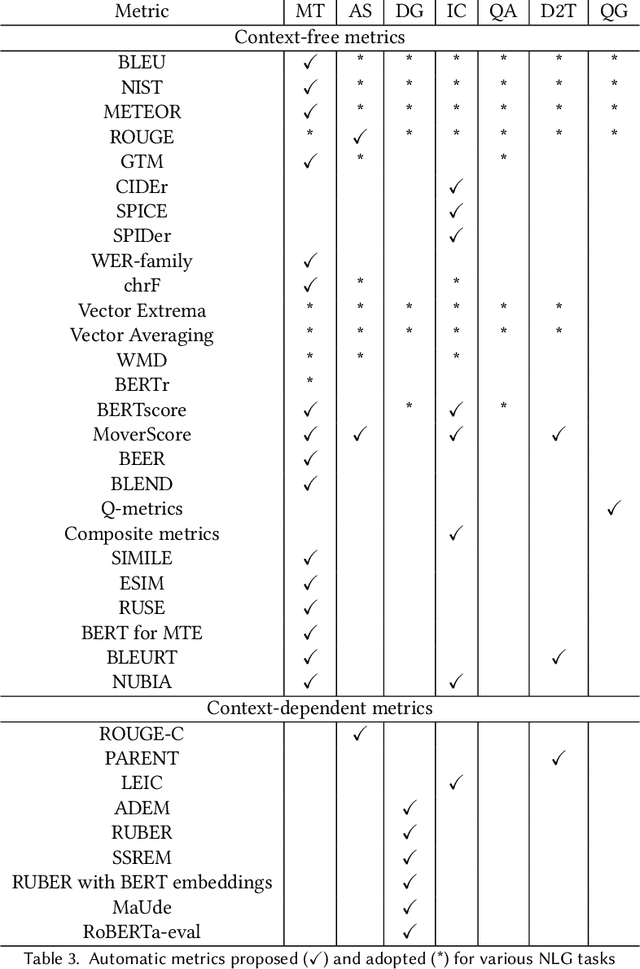

The success of Deep Learning has created a surge in interest in a wide range of Natural Language Generation (NLG) tasks. Deep Learning has not only pushed the state of the art in several existing NLG tasks but has also enabled researchers to explore various newer NLG tasks such as image captioning. Such rapid progress in NLG has necessitated the development of accurate automatic evaluation metrics that would allow us to track the progress in the field of NLG. However, unlike classification tasks, automatically evaluating NLG systems is in itself a huge challenge. Several works have shown that early heuristic-based metrics such as BLEU and ROUGE are inadequate for capturing the nuances in the different NLG tasks. The expanding number of NLG models and the shortcomings of the current metrics have led to a rapid surge in the number of evaluation metrics proposed since 2014. Moreover, various evaluation metrics have shifted from using pre-determined heuristic-based formulae to trained transformer models. This rapid change in a relatively short time has led to the need for a survey of the existing NLG metrics to help existing and new researchers quickly come up to speed with the developments that have happened in NLG evaluation in the last few years. Through this survey, we first wish to highlight the challenges and difficulties in automatically evaluating NLG systems. Then, we provide a coherent taxonomy of the evaluation metrics to organize the existing metrics and to better understand the developments in the field. We also describe the different metrics in detail and highlight their key contributions. Later, we discuss the main shortcomings identified in the existing metrics and describe the methodology used to evaluate evaluation metrics. Finally, we discuss our suggestions and recommendations on the next steps forward to improve the automatic evaluation metrics.

Dynamic Future Net: Diversified Human Motion Generation

Aug 25, 2020

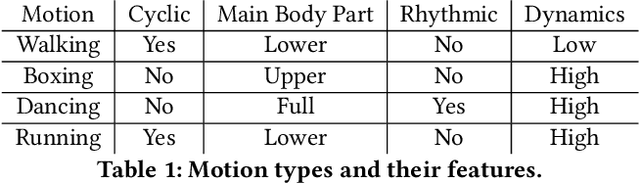

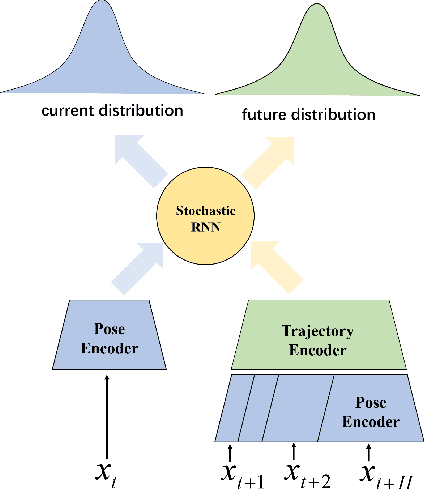

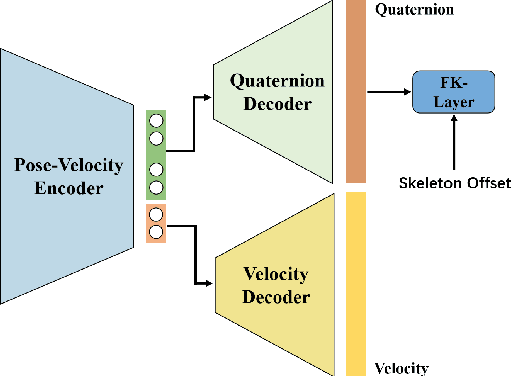

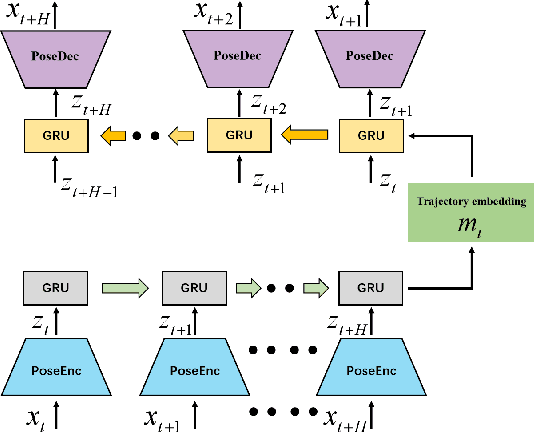

Human motion modelling is crucial in many areas such as computer graphics, vision and virtual reality. Acquiring high-quality skeletal motions is difficult due to the need for specialized equipment and laborious manual post-processing, which necessitates maximizing the use of existing data to synthesize new data. However, this is a challenge due to the intrinsic stochasticity of human motion dynamics, manifested in both the short and long terms. In the short term, there is strong randomness within a couple of frames, e.g. one frame followed by multiple possible frames leading to different motion styles; while in the long term, there are non-deterministic action transitions. In this paper, we present Dynamic Future Net, a new deep learning model where we explicitly focus on the aforementioned motion stochasticity by constructing a generative model with non-trivial modelling capacity in temporal stochasticity. Given limited amounts of data, our model can generate a large number of high-quality motions with arbitrary duration, and visually convincing variations in both space and time. We evaluate our model on a wide range of motions and compare it with the state-of-the-art methods. Both qualitative and quantitative results show the superiority of our method in terms of robustness, versatility and quality.

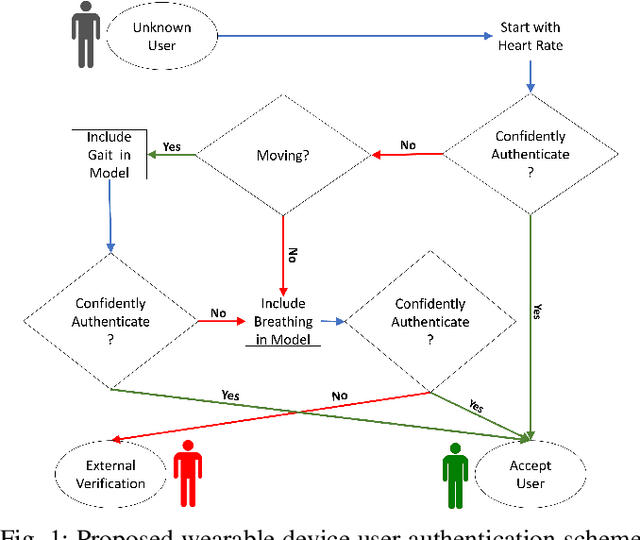

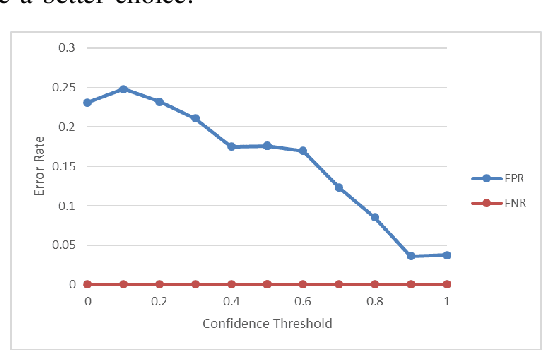

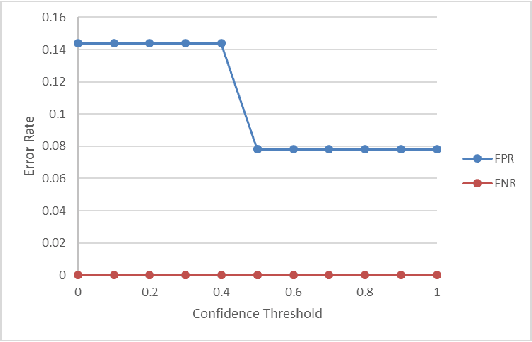

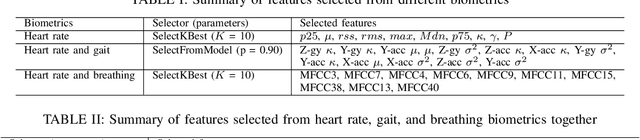

Continuous Authentication of Wearable Device Users from Heart Rate, Gait, and Breathing Data

Aug 25, 2020

The security of private information is becoming the bedrock of an increasingly digitized society. While users are flooded with passwords and PINs, these gold-standard explicit authentications are becoming less popular and valuable. Recent biometric-based authentication methods, such as facial or finger recognition, are getting popular due to their higher accuracy. However, these hard-biometric-based systems require dedicated devices with powerful sensors and authentication models, which are often limited in most market wearables. Still, market wearables are collecting various private information of a user and are becoming an integral part of life: accessing cars, bank accounts, etc. Therefore, the times demand a burden-free implicit authentication mechanism for wearables using the less-informative soft-biometric data that are easily obtainable from modern market wearables. In this work, we present a context-dependent soft-biometric-based authentication system for wearable devices using heart rate, gait, and breathing audio signals. From our detailed analysis using "leave-one-out" validation, we find that a lightweight $k$-Nearest Neighbor ($k$-NN) model with $k = 2$ can obtain an average accuracy of $0.93 \pm 0.06$, an $F_1$ score of $0.93 \pm 0.03$, and a false positive rate (FPR) below $0.08$ at a 50% level of confidence, which shows the promise of this work.
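The evaluation protocol named in the abstract above (a $k$-NN classifier with $k = 2$, scored by leave-one-out validation) can be sketched in a few lines. This is only an illustration under stated assumptions, not the authors' pipeline: the two-dimensional feature vectors, the labels, and the rule of breaking a 1-vs-1 vote in favour of the closest neighbour are all hypothetical choices, and the paper's "leave-one-out" may hold out subjects or sessions rather than single samples.

```python
import math
from collections import Counter


def knn_predict(train, query, k=2):
    """Predict a label for `query` from `train`, a list of (features, label) pairs."""
    # Keep the k training samples closest to the query (Euclidean distance).
    neighbours = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in neighbours)
    best = max(votes.values())
    # Assumed tie-break: among the top-voted labels, take the closest neighbour's.
    for _, label in neighbours:
        if votes[label] == best:
            return label


def leave_one_out_accuracy(samples, k=2):
    """Hold out each sample once, classify it from all the others, return accuracy."""
    correct = 0
    for i, (features, label) in enumerate(samples):
        train = samples[:i] + samples[i + 1:]
        if knn_predict(train, features, k) == label:
            correct += 1
    return correct / len(samples)


# Hypothetical toy data: (heart rate, gait feature) pairs for two classes.
toy_samples = [
    ((60.0, 1.0), "user"), ((62.0, 1.1), "user"), ((61.0, 0.9), "user"),
    ((90.0, 2.0), "other"), ((92.0, 2.1), "other"), ((91.0, 1.9), "other"),
]
print(leave_one_out_accuracy(toy_samples, k=2))
```

With an even $k$ such as 2, split votes are common, so the tie-breaking rule matters in practice; the one used here reduces to a 1-NN decision whenever the two neighbours disagree.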

Dynamics Generalization via Information Bottleneck in Deep Reinforcement Learning

Aug 03, 2020

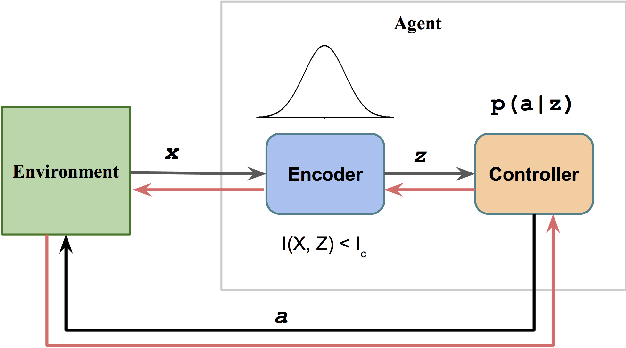

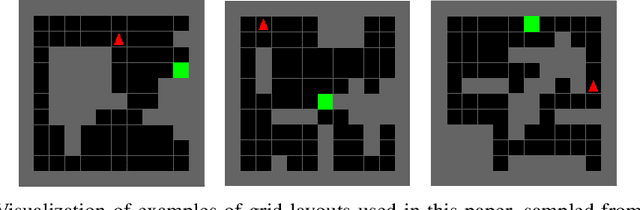

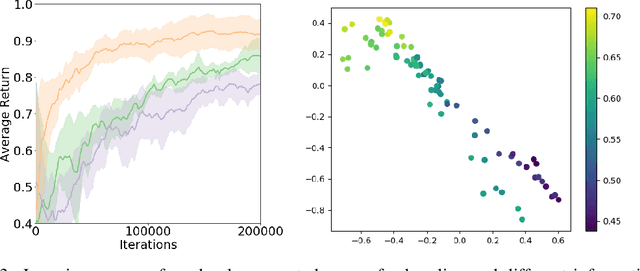

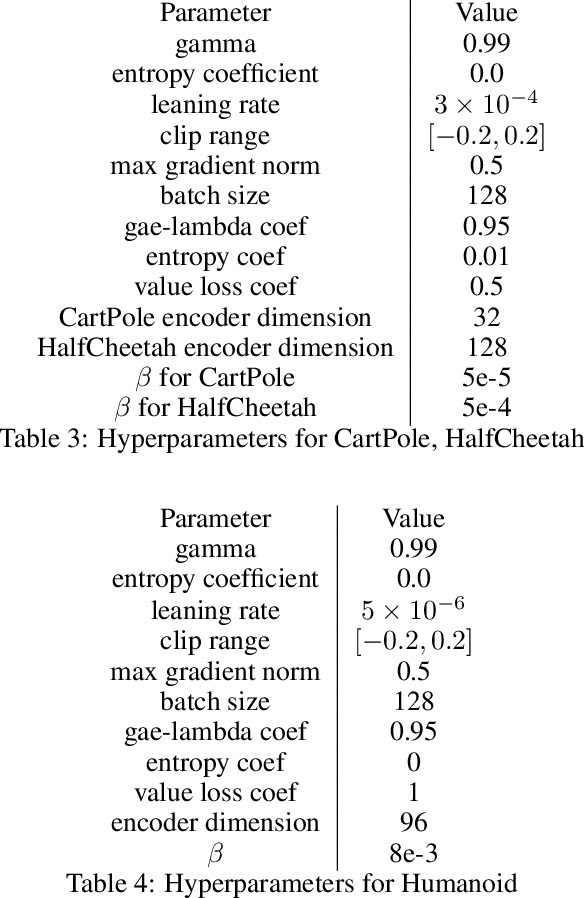

Despite the significant progress of deep reinforcement learning (RL) in solving sequential decision-making problems, RL agents often overfit to training environments and struggle to adapt to new, unseen environments. This prevents robust applications of RL in real-world situations, where system dynamics may deviate wildly from the training settings. In this work, our primary contribution is to propose an information-theoretic regularization objective and an annealing-based optimization method to achieve better generalization ability in RL agents. We demonstrate the extreme generalization benefits of our approach in different domains ranging from maze navigation to robotic tasks; for the first time, we show that agents can generalize to test parameters more than 10 standard deviations away from the training parameter distribution. This work provides a principled way to improve generalization in RL by gradually removing information that is redundant for task-solving; it opens doors for the systematic study of generalization from training to extremely different testing settings, focusing on the established connections between information theory and machine learning.

A Spherical Hidden Markov Model for Semantics-Rich Human Mobility Modeling

Oct 05, 2020

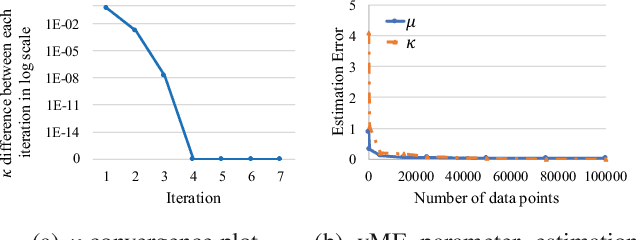

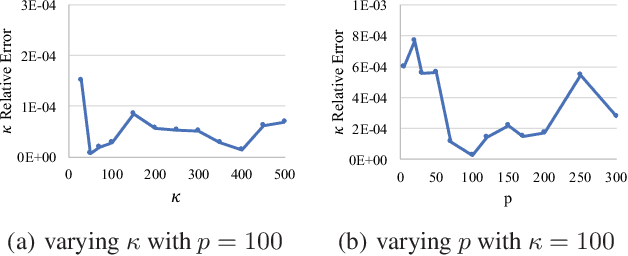

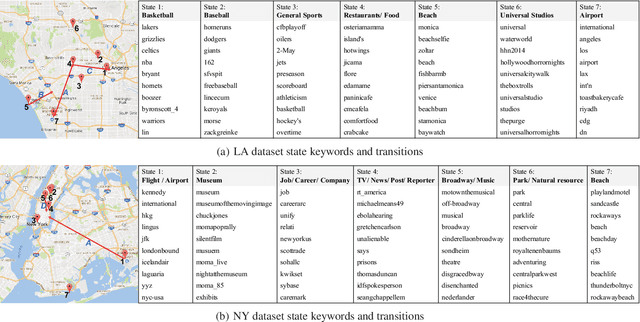

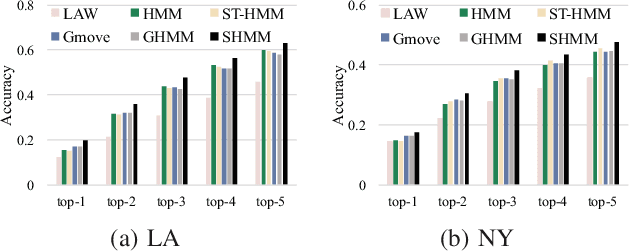

We study the problem of modeling human mobility from semantic trace data, wherein each GPS record in a trace is associated with a text message that describes the user's activity. Existing methods fall short in unveiling human movement regularities, because they either do not model the text data at all or suffer severely from text sparsity. We propose SHMM, a multi-modal spherical hidden Markov model for semantics-rich human mobility modeling. Under the hidden Markov assumption, SHMM models the generation process of a given trace by jointly considering the observed location, time, and text at each step of the trace. The distinguishing characteristic of SHMM is the text modeling part. We use fixed-size vector representations to encode the semantics of the text messages, and model the generation of the l2-normalized text embeddings on a unit sphere with the von Mises-Fisher (vMF) distribution. Compared with alternatives such as the multivariate Gaussian, our choice of the vMF distribution not only requires far fewer parameters, but also better leverages the discriminative power of text embeddings in a directional metric space. The parameter inference for the vMF distribution is non-trivial since it involves functional inversion of ratios of Bessel functions. We theoretically prove that: 1) the classical Expectation-Maximization algorithm can work with vMF distributions; and 2) while closed-form solutions are hard to obtain for the M-step, Newton's method is guaranteed to converge to the optimal solution with a quadratic convergence rate. We have performed extensive experiments on both synthetic and real-life data. The results on synthetic data verify our theoretical analysis, while the results on real-life data demonstrate that SHMM learns meaningful semantics-rich mobility models, outperforms state-of-the-art mobility models for next location prediction, and incurs lower training cost.
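To make the "functional inversion of ratios of Bessel functions" concrete, the sketch below estimates the vMF concentration parameter $\kappa$ with Newton's method. It is a simplified illustration, not the SHMM implementation: it assumes the 3-dimensional case, where the ratio $A_3(\kappa) = I_{3/2}(\kappa)/I_{1/2}(\kappa)$ reduces to $\coth\kappa - 1/\kappa$ and needs no Bessel routines, whereas text embeddings live in much higher dimensions. The function names, the starting point (a commonly used closed-form approximation), and the step-halving safeguard are assumptions made here. The maximum-likelihood condition is $A_p(\kappa) = \bar{r}$, where $\bar{r}$ is the length of the mean of the unit vectors, and the derivative used is $A_p'(\kappa) = 1 - A_p(\kappa)^2 - \frac{p-1}{\kappa} A_p(\kappa)$.

```python
import math


def mean_resultant_length_3d(kappa: float) -> float:
    """A_3(kappa) = coth(kappa) - 1/kappa, the Bessel ratio I_{3/2}/I_{1/2}."""
    return 1.0 / math.tanh(kappa) - 1.0 / kappa


def estimate_kappa_3d(r_bar: float, tol: float = 1e-10, max_iter: int = 50) -> float:
    """Solve A_3(kappa) = r_bar for kappa using Newton's method (3-D case only)."""
    if not 0.0 < r_bar < 1.0:
        raise ValueError("r_bar must lie strictly between 0 and 1")
    p = 3  # dimension of the embedding space; fixed in this sketch
    # Assumed starting point: a standard closed-form approximation to kappa.
    kappa = r_bar * (p - r_bar ** 2) / (1.0 - r_bar ** 2)
    for _ in range(max_iter):
        a = mean_resultant_length_3d(kappa)
        # Derivative of the Bessel ratio: A'(k) = 1 - A(k)^2 - (p - 1) / k * A(k).
        a_prime = 1.0 - a * a - (p - 1) / kappa * a
        kappa_new = kappa - (a - r_bar) / a_prime
        if kappa_new <= 0.0:
            # Safeguard (an assumption of this sketch): keep kappa positive.
            kappa_new = kappa / 2.0
        if abs(kappa_new - kappa) < tol:
            return kappa_new
        kappa = kappa_new
    return kappa


# Round trip: recover a known concentration from its mean resultant length.
r_bar = mean_resultant_length_3d(5.0)
print(estimate_kappa_3d(r_bar))
```

In higher dimensions the same iteration applies, with $A_p(\kappa)$ evaluated from modified Bessel functions (ideally exponentially scaled ones, to avoid overflow for large $\kappa$).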

Wound and episode level readmission risk or weeks to readmit: Why do patients get readmitted? How long does it take for a patient to get readmitted?

Oct 05, 2020

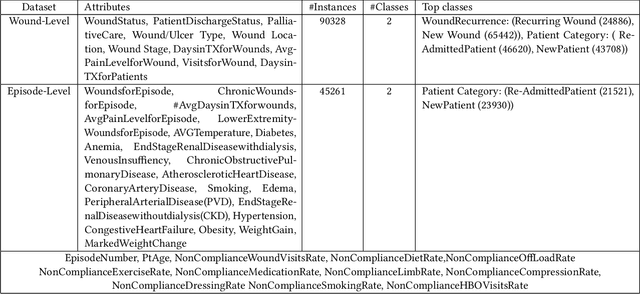

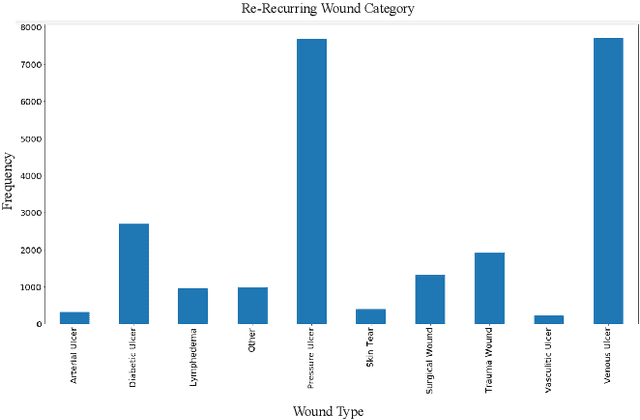

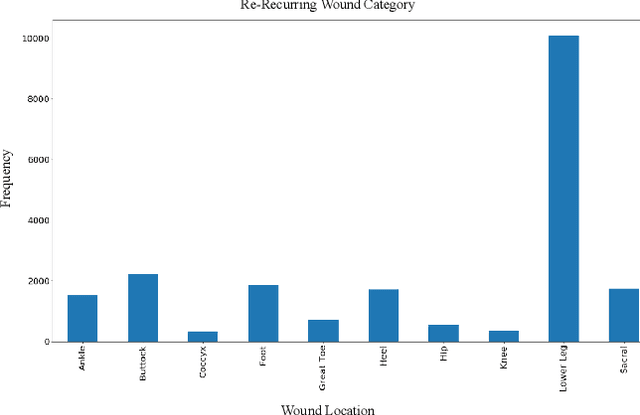

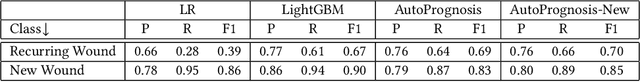

The Affordable Care Act of 2010 introduced the Readmission Reduction Program in 2012 to reduce avoidable re-admissions and control rising healthcare costs. Wound care impacts 15% of Medicare beneficiaries, making it one of the major contributors to Medicare health care costs. Health plans have been exploring proactive health care services that focus on preventing wound recurrences and re-admissions to control wound care costs. With the rising costs of the wound care industry, it has become of paramount importance to reduce wound recurrences and patient re-admissions. What factors are responsible for a wound recurring, ultimately leading to hospitalization or re-admission? Is there a way to identify patients at risk of re-admission before it occurs, using data-driven analysis? Patient re-admission risk management has become critical for patients suffering from chronic wounds such as diabetic ulcers, pressure ulcers, and vascular ulcers. Understanding the risk and the factors that cause patient readmission can help care providers and patients avoid wound recurrences. Our work focuses on identifying patients who are at high risk of re-admission and determining the time period within which a patient might get re-admitted. Frequent re-admissions add financial stress to the patient and the health plan and deteriorate the patient's quality of life. Having this information can allow a provider to set up preventive measures that can delay, if not prevent, patients' re-admission. On a combined wound- and episode-level data set of patients' wound care information, our extended autoprognosis achieves a recall of 92% and a precision of 92% for predicting a patient's re-admission risk. For the new patient class, precision and recall are as high as 91% and 98%, respectively. Our model is also able to predict the time from a patient's discharge to a re-admission event with an MAE of 2.3 weeks.

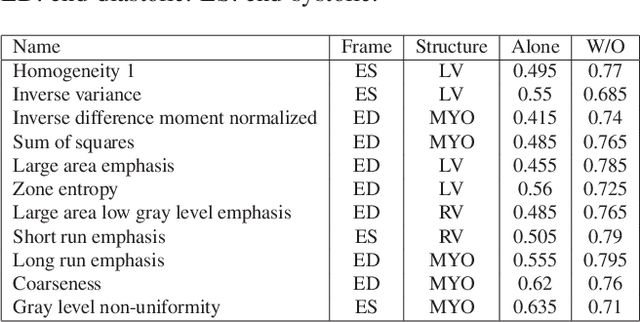

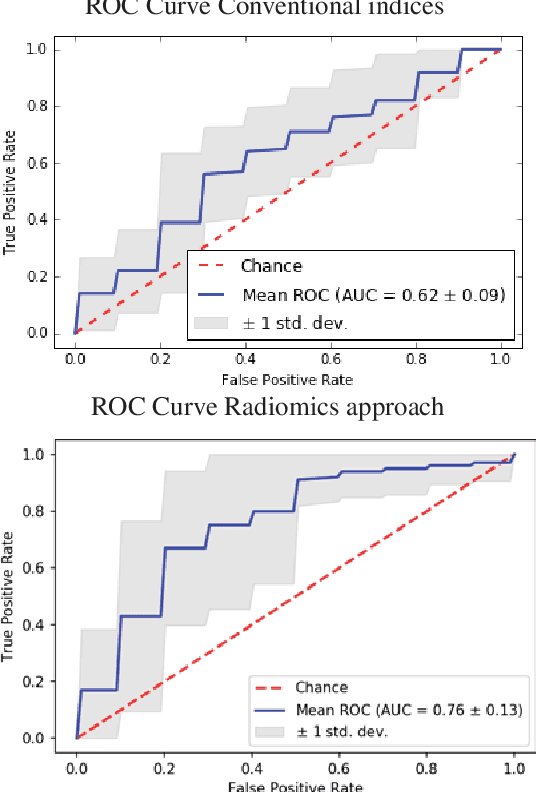

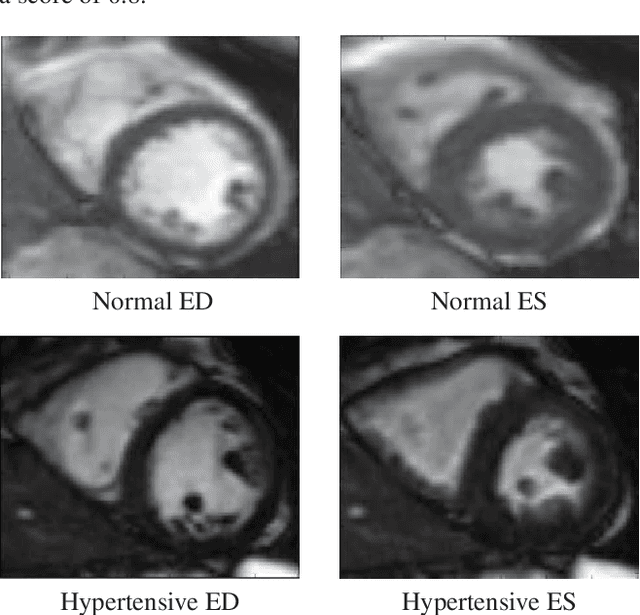

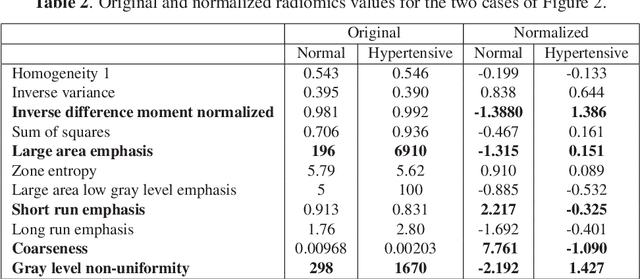

A radiomics approach to analyze cardiac alterations in hypertension

Jul 21, 2020

Hypertension is a medical condition that is well-established as a risk factor for many major diseases. For example, it can cause alterations in the cardiac structure and function over time that can lead to heart-related morbidity and mortality. However, at the subclinical stage, these changes are subtle and cannot be easily captured using conventional cardiovascular indices calculated from clinical cardiac imaging. In this paper, we describe a radiomics approach for identifying intermediate imaging phenotypes associated with hypertension. The method combines feature selection and machine learning techniques to identify the most subtle as well as complex structural and tissue changes in hypertensive subgroups as compared to healthy individuals. Validation based on a sample of asymptomatic hearts that includes both hypertensive and non-hypertensive cases demonstrates that the proposed radiomics model is capable of detecting intensity and textural changes well beyond the capabilities of conventional imaging phenotypes, indicating its potential for improved understanding of the longitudinal effects of hypertension on cardiovascular health and disease.

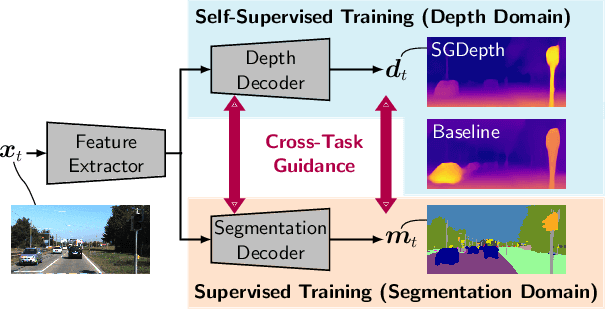

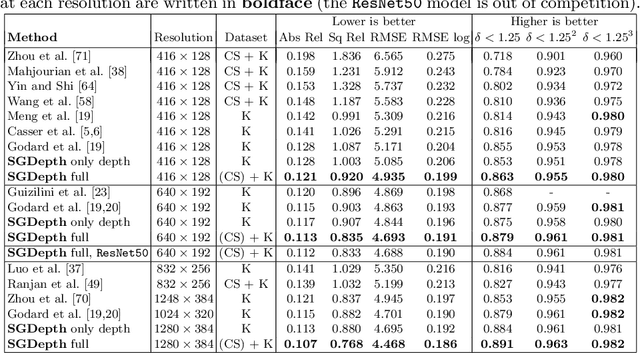

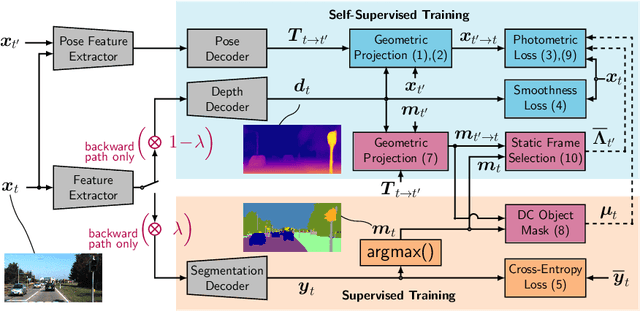

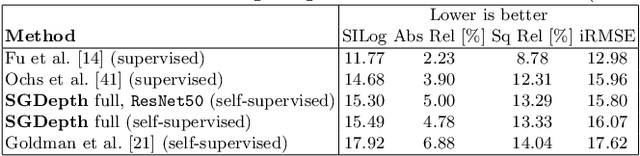

Self-Supervised Monocular Depth Estimation: Solving the Dynamic Object Problem by Semantic Guidance

Jul 21, 2020

Self-supervised monocular depth estimation presents a powerful method to obtain 3D scene information from single camera images, which is trainable on arbitrary image sequences without requiring depth labels, e.g., from a LiDAR sensor. In this work we present a new self-supervised semantically-guided depth estimation (SGDepth) method to deal with moving dynamic-class (DC) objects, such as moving cars and pedestrians, which violate the static-world assumptions typically made during training of such models. Specifically, we propose (i) mutually beneficial cross-domain training of (supervised) semantic segmentation and self-supervised depth estimation with task-specific network heads, (ii) a semantic masking scheme providing guidance to prevent moving DC objects from contaminating the photometric loss, and (iii) a detection method for frames with non-moving DC objects, from which the depth of DC objects can be learned. We demonstrate the performance of our method on several benchmarks, in particular on the Eigen split, where we exceed all baselines without test-time refinement.

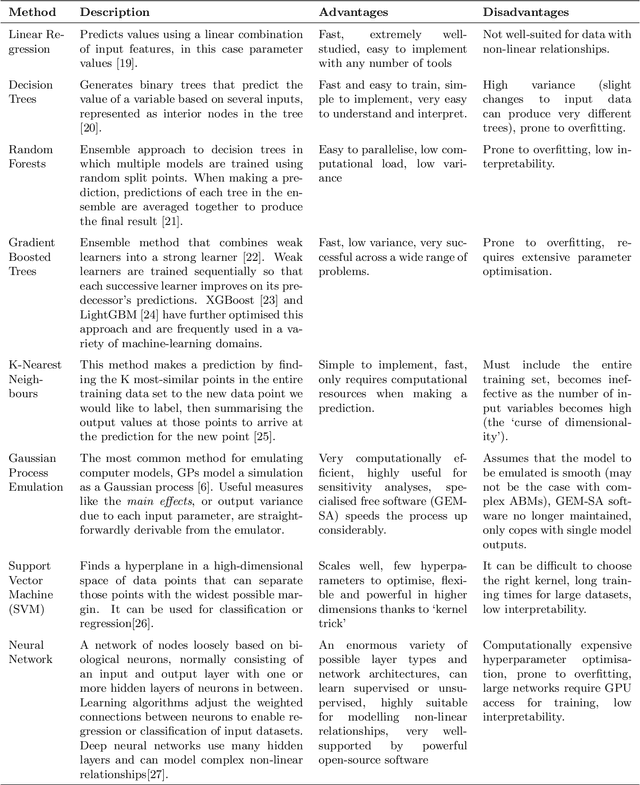

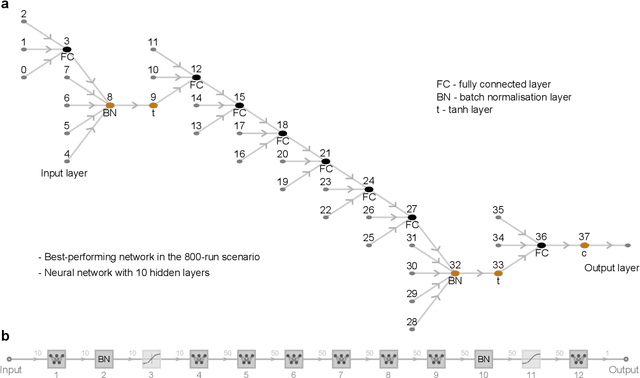

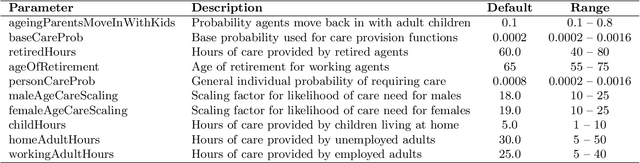

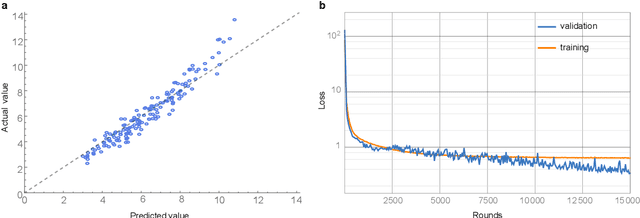

Using Machine Learning to Emulate Agent-Based Simulations

May 05, 2020

In this paper, we evaluate the performance of multiple machine-learning methods in the emulation of agent-based models (ABMs). ABMs are a popular methodology for modelling complex systems composed of multiple interacting processes. The analysis of ABM outputs is often not straightforward, as the relationships between input parameters can be non-linear or even chaotic, and each individual model run can require significant CPU time. Statistical emulation, in which a statistical model of the ABM is constructed to allow for more in-depth model analysis, has proven valuable for some applications. Here we compare multiple machine-learning methods for ABM emulation in order to determine the approaches best-suited to replicating the complex and non-linear behaviour of ABMs. Our results suggest that, in most scenarios, artificial neural networks (ANNs) and support vector machines outperform Gaussian process emulators, currently the most commonly used method for the emulation of complex computational models. ANNs produced the most accurate model replications in scenarios with high numbers of model runs, although training times for these emulators were considerably longer than for any other method. We propose that users of complex ABMs would benefit from using machine-learning methods for emulation, as this can facilitate more robust sensitivity analyses for their models as well as reducing CPU time consumption when calibrating and analysing the simulation.

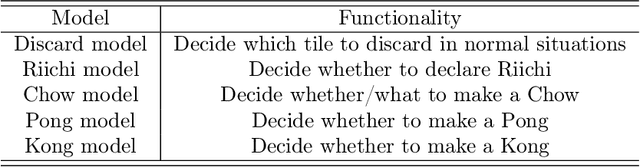

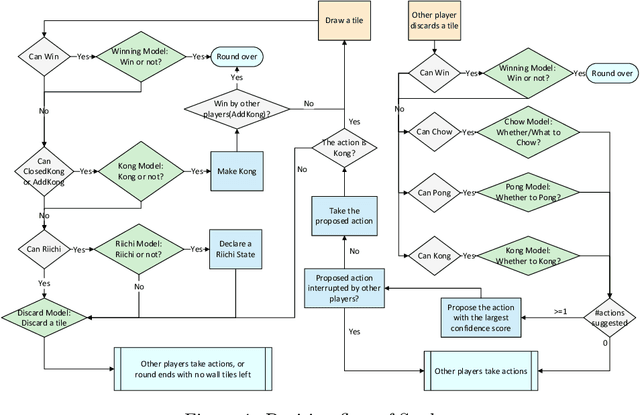

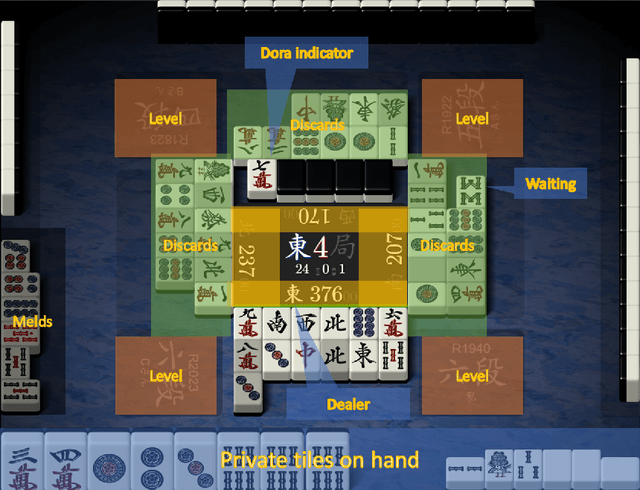

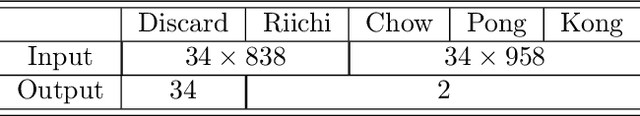

Suphx: Mastering Mahjong with Deep Reinforcement Learning

Mar 30, 2020

Artificial Intelligence (AI) has achieved great success in many domains, and game AI has been widely regarded as its beachhead since the dawn of AI. In recent years, studies on game AI have gradually evolved from relatively simple environments (e.g., perfect-information games such as Go, chess, shogi or two-player imperfect-information games such as heads-up Texas hold'em) to more complex ones (e.g., multi-player imperfect-information games such as multi-player Texas hold'em and StarCraft II). Mahjong is a popular multi-player imperfect-information game worldwide but very challenging for AI research due to its complex playing/scoring rules and rich hidden information. We design an AI for Mahjong, named Suphx, based on deep reinforcement learning with some newly introduced techniques including global reward prediction, oracle guiding, and run-time policy adaptation. Suphx has demonstrated stronger performance than most top human players in terms of stable rank and is rated above 99.99% of all the officially ranked human players on the Tenhou platform. This is the first time that a computer program has outperformed most top human players in Mahjong.
