"Time": models, code, and papers

A Change-Detection Based Thompson Sampling Framework for Non-Stationary Bandits

Sep 06, 2020

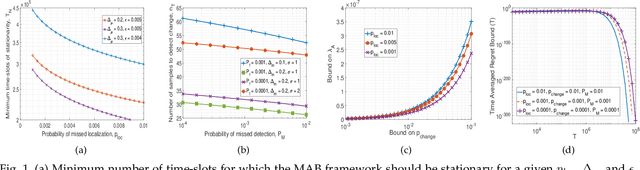

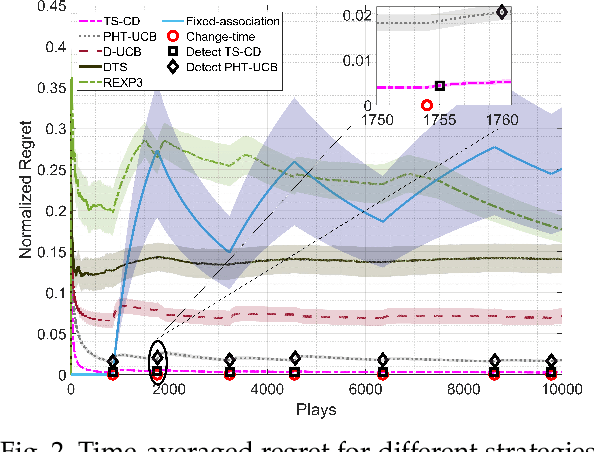

We consider a non-stationary two-armed bandit framework and propose a change-detection based Thompson sampling (TS) algorithm, named TS with change-detection (TS-CD), to keep track of the dynamic environment. The non-stationarity is modeled using a Poisson arrival process, which changes the mean of the rewards on each arrival. The proposed strategy compares the empirical mean of the recent rewards of an arm with the estimate of the mean of the rewards from its history. It detects a change when the empirical mean deviates from the mean estimate by a value larger than a threshold. Then, we characterize the lower bound on the duration of the time-window for which the bandit framework must remain stationary for TS-CD to successfully detect a change when it occurs. Consequently, our results highlight an upper bound on the parameter for the Poisson arrival process, for which the TS-CD achieves asymptotic regret optimality with high probability. Finally, we validate the efficacy of TS-CD by testing it for edge-control of radio access technique (RAT)-selection in a wireless network. Our results show that TS-CD not only outperforms the classical max-power RAT selection strategy but also other actively adaptive and passively adaptive bandit algorithms that are designed for non-stationary environments.
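The detection rule described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes Bernoulli rewards with a Beta posterior, and the names `ChangeDetectingArm` and `run_ts_cd`, the window size, the threshold value, and the choice to reset both arms after a detection are all assumptions made for the example.

```python
import random
from collections import deque


class ChangeDetectingArm:
    """Beta-Bernoulli arm that flags a change when recent rewards drift from history."""

    def __init__(self, window=20, threshold=0.4):
        self.window = window        # assumed length of the "recent rewards" window
        self.threshold = threshold  # assumed detection threshold
        self.reset()

    def reset(self):
        # History counts cover rewards that have already left the recent window.
        self.successes = 0
        self.failures = 0
        self.recent = deque()

    def sample(self, rng):
        # Thompson sample from the Beta posterior over every reward seen since reset.
        s = self.successes + sum(self.recent)
        f = self.failures + len(self.recent) - sum(self.recent)
        return rng.betavariate(1 + s, 1 + f)

    def update(self, reward):
        # Push the new reward; the oldest recent reward moves into the history.
        self.recent.append(reward)
        if len(self.recent) > self.window:
            old = self.recent.popleft()
            self.successes += old
            self.failures += 1 - old
        return self.change_detected()

    def change_detected(self):
        n_hist = self.successes + self.failures
        if len(self.recent) < self.window or n_hist < self.window:
            return False  # not enough data on either side to compare
        recent_mean = sum(self.recent) / len(self.recent)
        hist_mean = self.successes / n_hist
        return abs(recent_mean - hist_mean) > self.threshold


def run_ts_cd(means_before, means_after, change_at, horizon, seed=0):
    """Play a two-armed bandit whose means switch once; return detection times."""
    rng = random.Random(seed)
    arms = [ChangeDetectingArm(), ChangeDetectingArm()]
    detections = []
    for t in range(horizon):
        means = means_before if t < change_at else means_after
        choice = max(range(2), key=lambda i: arms[i].sample(rng))
        reward = 1 if rng.random() < means[choice] else 0
        if arms[choice].update(reward):
            detections.append(t)
            for arm in arms:  # assumption: forget everything after a detection
                arm.reset()
    return detections
```

A call such as `run_ts_cd((0.9, 0.1), (0.1, 0.9), change_at=500, horizon=1000)` simulates a single change. In the paper the changes arrive as a Poisson process, which this sketch does not model.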

How semantic and geometric information mutually reinforce each other in ToF object localization

Aug 27, 2020

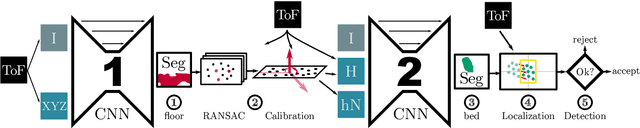

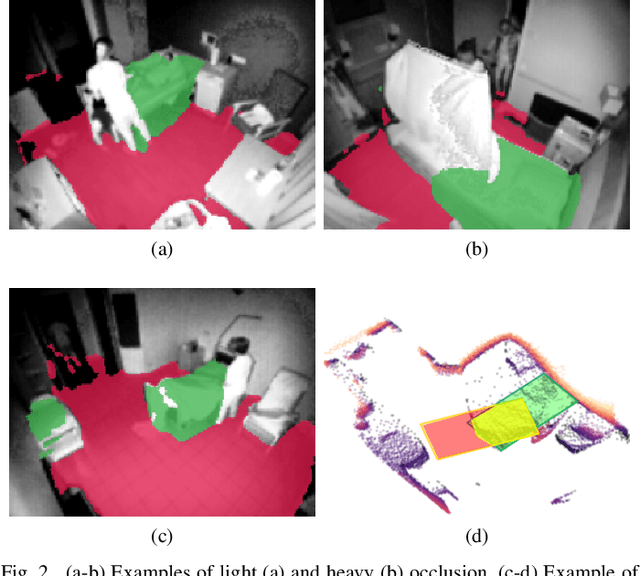

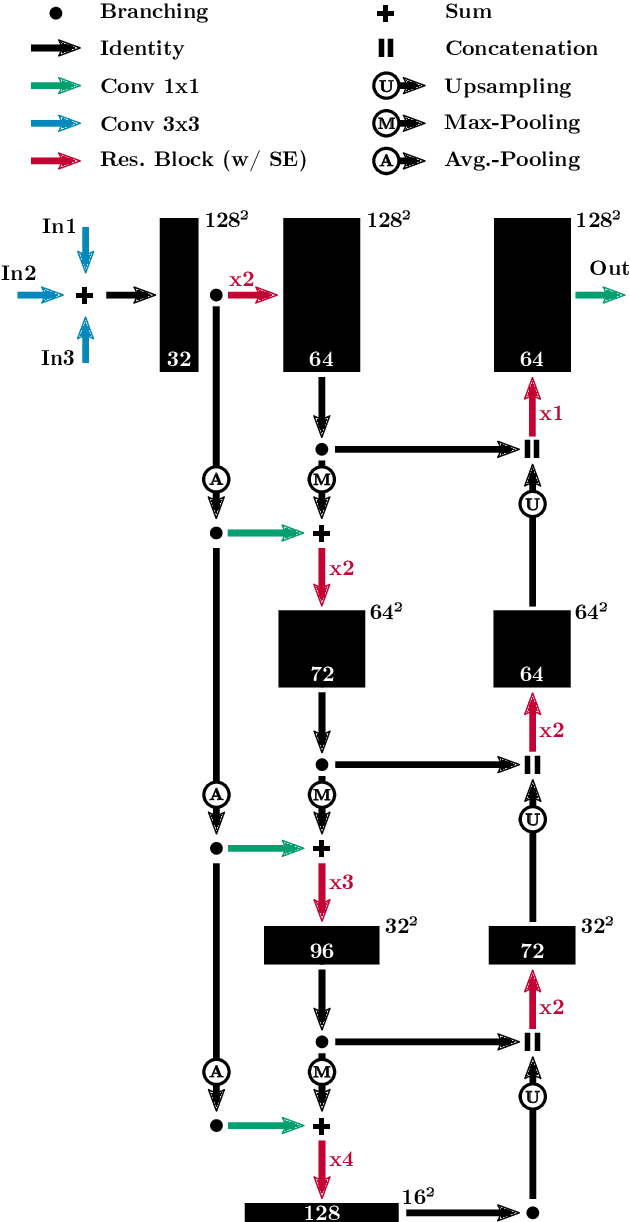

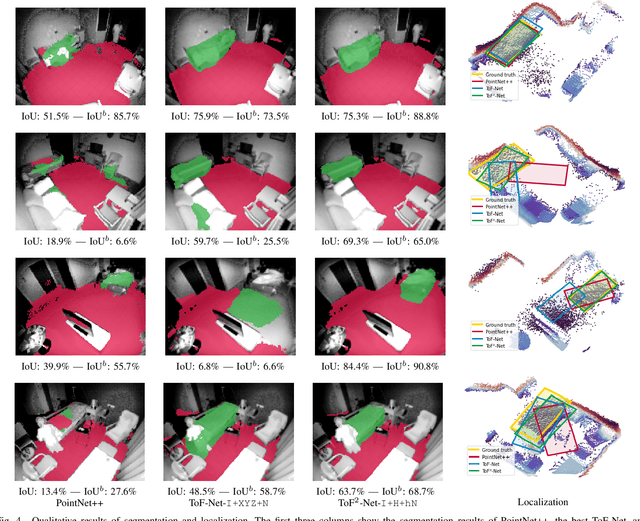

We propose a novel approach to localize a 3D object from the intensity and depth information images provided by a Time-of-Flight (ToF) sensor. Our method uses two CNNs. The first one uses raw depth and intensity images as input, to segment the floor pixels, from which the extrinsic parameters of the camera are estimated. The second CNN is in charge of segmenting the object-of-interest. As a main innovation, it exploits the calibration estimated from the prediction of the first CNN to represent the geometric depth information in a coordinate system that is attached to the ground, and is thus independent of the camera elevation. In practice, both the height of pixels with respect to the ground, and the orientation of normals to the point cloud are provided as input to the second CNN. Given the segmentation predicted by the second CNN, the object is localized based on point cloud alignment with a reference model. Our experiments demonstrate that our proposed two-step approach improves segmentation and localization accuracy by a significant margin, compared both to a conventional CNN architecture that ignores calibration and height maps and to PointNet++.
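The height-above-ground input mentioned in the abstract can be illustrated with a signed point-to-plane distance. This is a sketch under stated assumptions: the ground plane is taken as already estimated and given as `normal · p + offset = 0` in camera coordinates, and the function name `height_above_ground` is hypothetical.

```python
import math


def height_above_ground(points, normal, offset):
    """Signed distance of each 3D point to the plane normal . p + offset = 0."""
    norm = math.sqrt(sum(c * c for c in normal))
    unit = [c / norm for c in normal]  # normalize so the result is in metric units
    scaled_offset = offset / norm
    return [
        sum(u * p for u, p in zip(unit, point)) + scaled_offset
        for point in points
    ]
```

Because the height is measured from the ground plane rather than from the camera, the same object yields the same values regardless of how high the camera is mounted.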

Customer Support Ticket Escalation Prediction using Feature Engineering

Oct 10, 2020

Understanding and keeping the customer happy is a central tenet of requirements engineering. Strategies to gather, analyze, and negotiate requirements are complemented by efforts to manage customer input after products have been deployed. For the latter, support tickets are key in allowing customers to submit their issues, bug reports, and feature requests. If insufficient attention is given to support issues, however, their escalation to management becomes time-consuming and expensive, especially for large organizations managing hundreds of customers and thousands of support tickets. Our work provides a step towards simplifying the job of support analysts and managers, particularly in predicting the risk of escalating support tickets. In a field study at our large industrial partner, IBM, we used a design science research methodology to characterize the support process and data available to IBM analysts in managing escalations. We then implemented these features into a machine learning model to predict support ticket escalations. We trained and evaluated our machine learning model on over 2.5 million support tickets and 10,000 escalations, obtaining a recall of 87.36% and an 88.23% reduction in the workload for support analysts looking to identify support tickets at risk of escalation. Finally, in addition to these research evaluation activities, we compared the performance of our support ticket model with that of a model developed with no feature engineering; the support ticket model features outperformed the non-engineered model. The artifacts created in this research are designed to serve as a starting place for organizations interested in predicting support ticket escalations, and for future researchers to build on to advance research in escalation prediction.

* 19 pages, 9 figures, published in Springer Requirements Engineering Journal. arXiv admin note: substantial text overlap with arXiv:1901.01092

Predicting SLA Violations in Real Time using Online Machine Learning

Sep 04, 2015

Detecting faults and SLA violations in a timely manner is critical for telecom providers, in order to avoid loss in business, revenue and reputation. At the same time, predicting SLA violations for user services in telecom environments is difficult, due to time-varying user demands and infrastructure load conditions. In this paper, we propose a service-agnostic online learning approach, whereby the behavior of the system is learned on the fly, in order to predict client-side SLA violations. The approach uses device-level metrics, which are collected in a streaming fashion on the server side. Our results show that the approach can produce highly accurate predictions (>90% classification accuracy and <10% false alarm rate) in scenarios where SLA violations are predicted for a video-on-demand service under changing load patterns. The paper also highlights the limitations of traditional offline learning methods, which perform significantly worse in many of the considered scenarios.
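The abstract does not name a specific learner. As an illustration of learning "on the fly", the sketch below assumes online logistic regression trained by stochastic gradient descent and evaluated in predict-then-update order. The class `OnlineLogisticRegression`, the function `prequential_accuracy`, and the learning rate are assumptions made for the example.

```python
import math


class OnlineLogisticRegression:
    """Logistic regression updated one sample at a time (assumed learner)."""

    def __init__(self, n_features, learning_rate=0.1):
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict_proba(self, x):
        z = self.bias + sum(w * v for w, v in zip(self.weights, x))
        z = max(-30.0, min(30.0, z))  # clamp to keep exp() well-behaved
        return 1.0 / (1.0 + math.exp(-z))

    def learn_one(self, x, y):
        # Gradient of the log loss for a single (features, label) pair.
        error = self.predict_proba(x) - y
        self.weights = [
            w - self.learning_rate * error * v for w, v in zip(self.weights, x)
        ]
        self.bias -= self.learning_rate * error


def prequential_accuracy(model, stream):
    """Predict each sample first, then train on it; return the running accuracy."""
    correct = 0
    total = 0
    for x, y in stream:
        predicted = 1 if model.predict_proba(x) >= 0.5 else 0
        correct += int(predicted == y)
        total += 1
        model.learn_one(x, y)
    return correct / total if total else 0.0
```

Here `x` would stand for a vector of server-side device metrics and `y` for whether the client-side SLA was violated. Because the model is updated after every sample, it can follow changing load patterns without retraining from scratch.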

Real-time marker-less multi-person 3D pose estimation in RGB-Depth camera networks

Oct 17, 2017

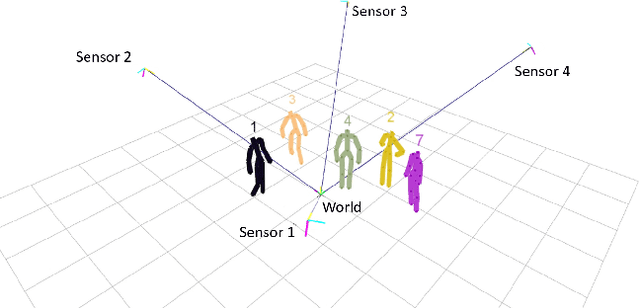

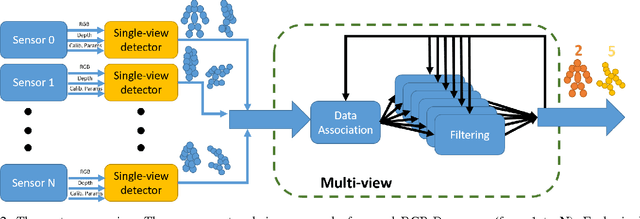

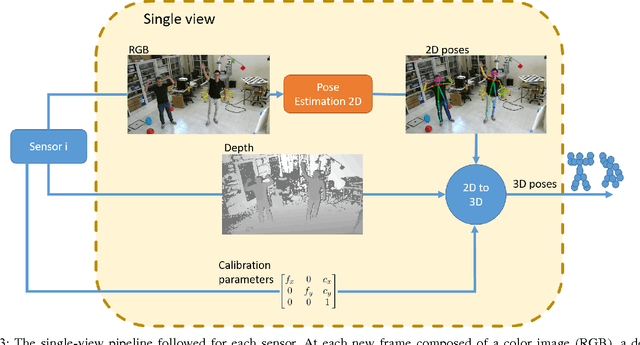

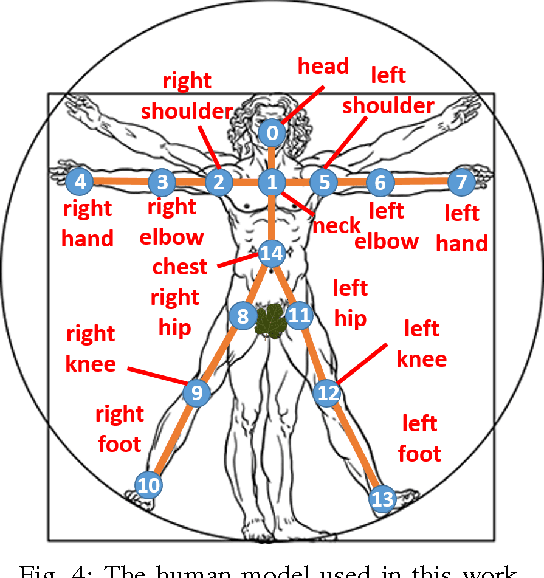

This paper proposes a novel system to estimate and track the 3D poses of multiple persons in calibrated RGB-Depth camera networks. The multi-view 3D pose of each person is computed by a central node which receives the single-view outcomes from each camera of the network. Each single-view outcome is computed by using a CNN for 2D pose estimation and extending the resulting skeletons to 3D by means of the sensor depth. The proposed system is marker-less, multi-person, independent of background and does not make any assumption about people's appearance or initial pose. The system provides real-time outcomes, making it well suited for applications requiring user interaction. Experimental results show the effectiveness of this work with respect to a baseline multi-view approach in different scenarios. To foster research and applications based on this work, we released the source code in OpenPTrack, an open source project for RGB-D people tracking.
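Extending a 2D skeleton to 3D "by means of the sensor depth" can be sketched as a pinhole back-projection. This is an illustration under assumptions, not the OpenPTrack code: the function name `lift_keypoints_to_3d`, the intrinsics `fx`, `fy`, `cx`, `cy`, and the convention that a depth of zero marks a missing measurement are all hypothetical.

```python
def lift_keypoints_to_3d(keypoints, depth, fx, fy, cx, cy):
    """Back-project (u, v) pixel keypoints into camera coordinates.

    depth is indexed as depth[v][u] and holds metric depth; joints that land
    on a non-positive depth value are returned as None.
    """
    joints = []
    for u, v in keypoints:
        z = depth[v][u]
        if z <= 0:
            joints.append(None)  # no valid depth reading at this pixel
            continue
        x = (u - cx) * z / fx
        y = (v - cy) * z / fy
        joints.append((x, y, z))
    return joints
```

Each camera would produce such single-view 3D joints, which a central node could then fuse across views using the network calibration.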

Towards High Performance, Portability, and Productivity: Lightweight Augmented Neural Networks for Performance Prediction

Mar 17, 2020

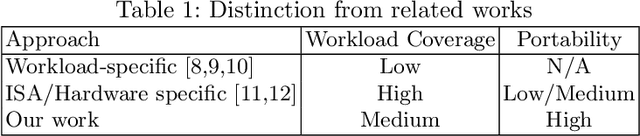

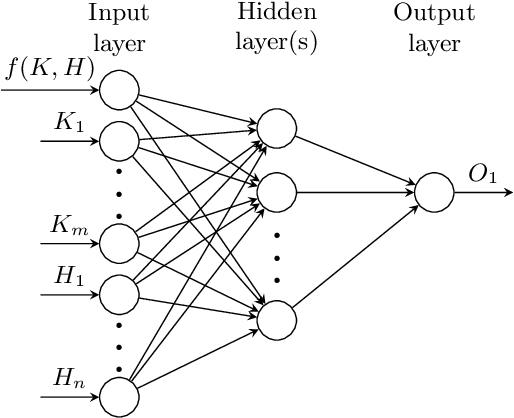

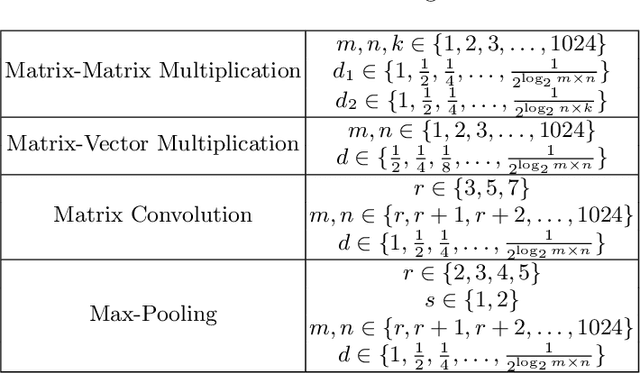

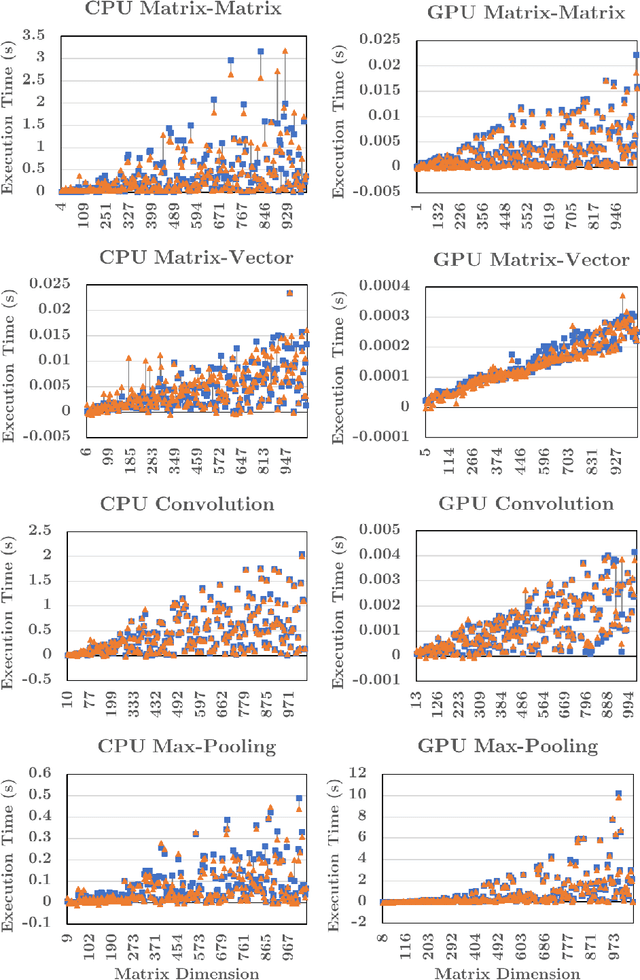

Writing high-performance code requires significant expertise in the programming language, compiler optimizations, and hardware. This often leads to poor productivity and portability and is inconvenient for a non-programmer domain-specialist such as a physicist. More desirable is a high-level language where the domain-specialist simply specifies the workload in terms of high-level operations (e.g., matrix-multiply(A, B)) and the compiler identifies the best implementation fully utilizing the heterogeneous platform. For creating a compiler that supports productivity, portability, and performance simultaneously, it is crucial to predict performance of various available implementations (variants) of the dominant operations (kernels) contained in the workload on various hardware to decide (a) which variant should be chosen for each kernel in the workload, and (b) on which hardware resource the variant should run. To enable the performance prediction, we propose lightweight augmented neural networks for arbitrary combinations of kernel-variant-hardware. A key innovation is utilizing mathematical complexity of the kernels as a feature to achieve higher accuracy. These models are compact, which reduces training time and enables fast inference at compile time and run time. Using models with fewer than 75 parameters, and only 250 training data instances, we are able to obtain a low MAPE of ~13%, significantly outperforming traditional feed-forward neural networks on 40 kernel-variant-hardware combinations. We further demonstrate that our variant selection approach can be used in Halide implementations to obtain up to 1.5x speedup over Halide's autoscheduler.
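Two ideas from the abstract are easy to make concrete: augmenting the input with the kernel's mathematical complexity, and scoring predictions by mean absolute percentage error (MAPE). The sketch below assumes a matrix-multiply kernel whose complexity term is `m * n * k`; the function names and the exact feature layout are illustrative assumptions, not the paper's design.

```python
def matmul_features(m, n, k):
    """Input sizes augmented with the operation count of an (m x k) by (k x n) product."""
    return [m, n, k, m * n * k]


def mape(actual, predicted):
    """Mean absolute percentage error, in percent; actual values must be non-zero."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    total = sum(abs((a - p) / a) for a, p in zip(actual, predicted))
    return 100.0 * total / len(actual)
```

For example, measured runtimes of 100 and 200 against predictions of 110 and 180 are each off by 10%, so the MAPE is 10%.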

Alternating Minimization Based Trajectory Generation for Quadrotor Aggressive Flight

Feb 25, 2020

Although much research has been conducted into trajectory planning for quadrotors, planning spatially and temporally optimal trajectories in real time is still challenging. In this paper, we propose a framework for generating large-scale piecewise polynomial trajectories for aggressive autonomous flights, with highlights on its superior computational efficiency and simultaneous spatial-temporal optimality. Exploiting the implicitly decoupled structure of the planning problem, we conduct alternating minimization between boundary conditions and time durations of trajectory pieces. In each minimization phase, we leverage the algebraic convenience of the sub-problem to escape poor local minima and achieve the lowest time consumption. Theoretical analysis for the global/local convergence rate of our proposed method is provided. Moreover, based on polynomial theory, an extremely fast feasibility check method is designed for various kinds of constraints. By incorporating the method into our alternating structure, a constrained minimization algorithm is constructed to optimize trajectories on the premise of feasibility. Benchmark evaluation shows that our algorithm outperforms state-of-the-art methods regarding efficiency, optimality, and scalability. Aggressive flight experiments in a limited space with dense obstacles are presented to demonstrate the performance of the proposed algorithm. We release our implementation as an open-source ROS package.

A Workload Adaptive Haptic Shared Control Scheme for Semi-Autonomous Driving

Mar 31, 2020

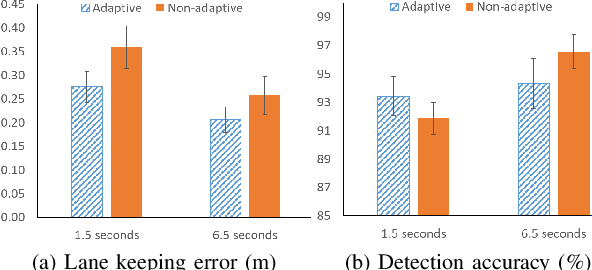

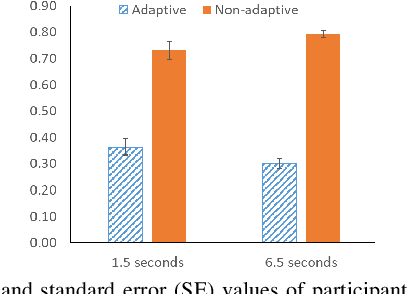

Haptic shared control is used to manage the control authority allocation between a human and an autonomous agent in semi-autonomous driving. Existing haptic shared control schemes, however, do not take full consideration of the human agent. To fill this research gap, this study presents a haptic shared control scheme that adapts to a human operator's workload, eyes on road and input torque in real-time. We conducted human-in-the-loop experiments with 24 participants. In the experiment, a human operator and an autonomy module for navigation shared the control of a simulated notional High Mobility Multipurpose Wheeled Vehicle (HMMWV) at a fixed speed. At the same time, the human operator performed a target detection task for surveillance. The autonomy could be either adaptive or non-adaptive to the above-mentioned human factors. Results indicate that the adaptive haptic control scheme resulted in significantly lower workload, higher trust in autonomy, better driving task performance and smaller control effort.

Structure and Automatic Segmentation of Dhrupad Vocal Bandish Audio

Aug 03, 2020

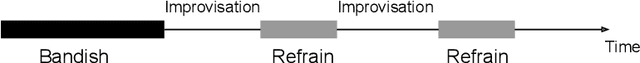

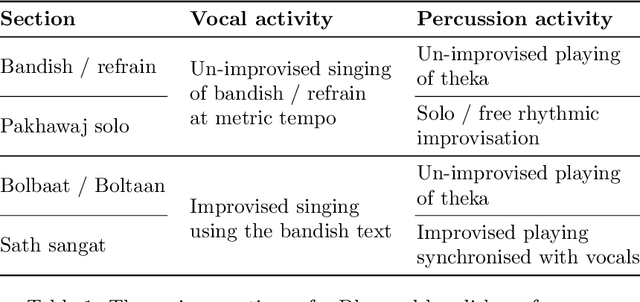

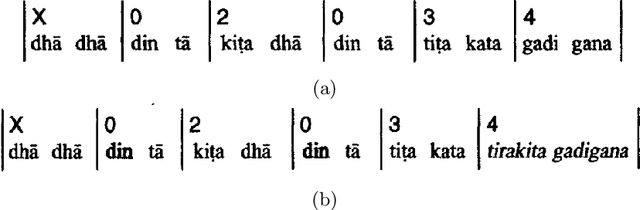

A Dhrupad vocal concert comprises a composition section that is interspersed with improvised episodes of increased rhythmic activity involving the interaction between the vocals and the percussion. Tracking the changing rhythmic density, in relation to the underlying metric tempo of the piece, thus facilitates the detection and labeling of the improvised sections in the concert structure. This work concerns the automatic detection of the musically relevant rhythmic densities as they change in time across the bandish (composition) performance. An annotated dataset of Dhrupad bandish concert sections is presented. We investigate a CNN-based system, trained to detect local tempo relationships, and follow it with temporal smoothing. We also employ audio source separation as a pre-processing step to the detection of the individual surface densities of the vocals and the percussion. This helps us obtain the complete musical description of the concert sections in terms of capturing the changing rhythmic interaction of the two performers.
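The abstract does not say which temporal smoothing is applied to the CNN output. One simple possibility, shown here purely as an assumption, is a sliding majority vote over frame-wise labels. The function name `smooth_labels`, the window length, and the use of integer tempo multiples as labels are hypothetical.

```python
from collections import Counter


def smooth_labels(labels, window=5):
    """Replace each frame label by the most common label in a centered window."""
    half = window // 2
    smoothed = []
    for i in range(len(labels)):
        # The window is truncated at the start and end of the sequence.
        neighborhood = labels[max(0, i - half): i + half + 1]
        smoothed.append(Counter(neighborhood).most_common(1)[0][0])
    return smoothed
```

A filter of this kind removes isolated misclassifications while keeping sustained changes in rhythmic density, which are the events that mark section boundaries.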

Pseudoinverse Graph Convolutional Networks: Fast Filters Tailored for Large Eigengaps of Dense Graphs and Hypergraphs

Aug 03, 2020

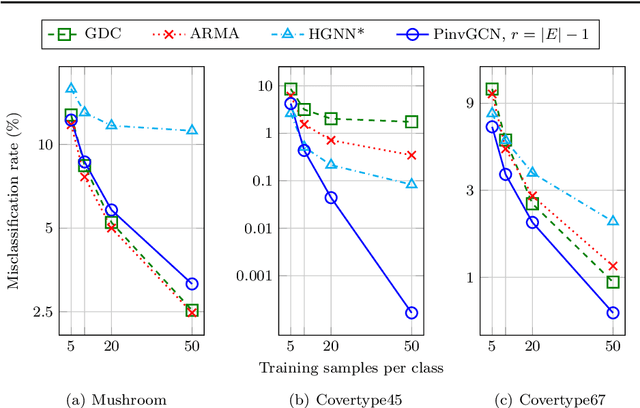

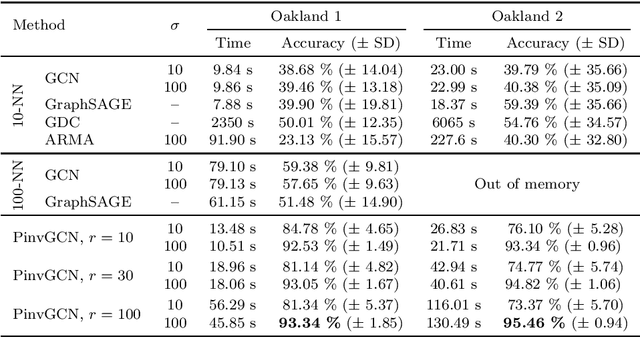

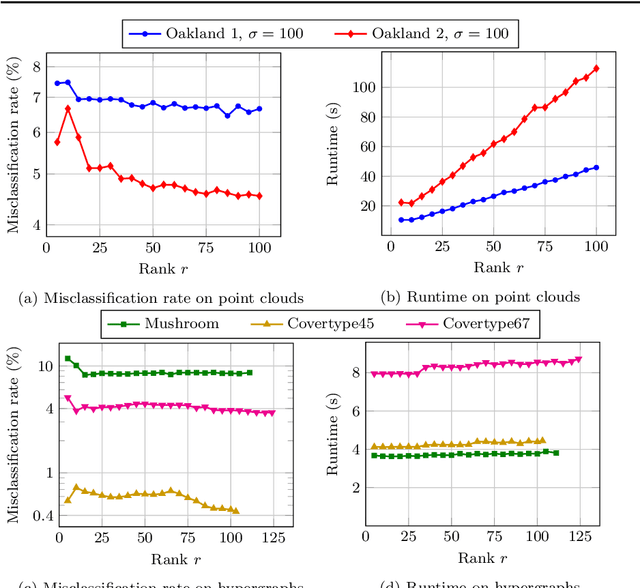

Graph Convolutional Networks (GCNs) have proven to be successful tools for semi-supervised classification on graph-based datasets. We propose a new GCN variant whose three-part filter space is targeted at dense graphs. Examples include Gaussian graphs for 3D point clouds with an increased focus on non-local information, as well as hypergraphs based on categorical data. These graphs differ from the common sparse benchmark graphs in terms of the spectral properties of their graph Laplacian. Most notably, we observe large eigengaps, which are unfavorable for popular existing GCN architectures. Our method overcomes these issues by utilizing the pseudoinverse of the Laplacian. Another key ingredient is a low-rank approximation of the convolutional matrix, ensuring computational efficiency and increasing accuracy at the same time. We outline how the necessary eigeninformation can be computed efficiently in each application and discuss the appropriate choice of the only metaparameter, the approximation rank. Finally, we showcase our method's performance regarding runtime and accuracy in various experiments with real-world datasets.
