"Time": models, code, and papers

LG-GAN: Label Guided Adversarial Network for Flexible Targeted Attack of Point Cloud-based Deep Networks

Nov 01, 2020

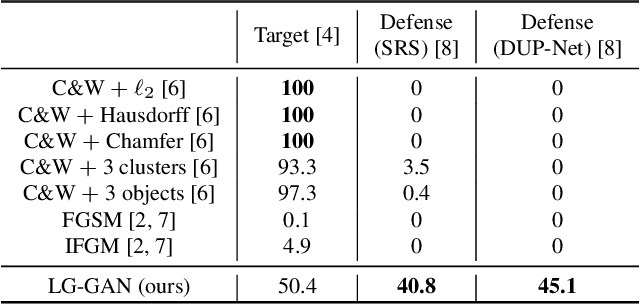

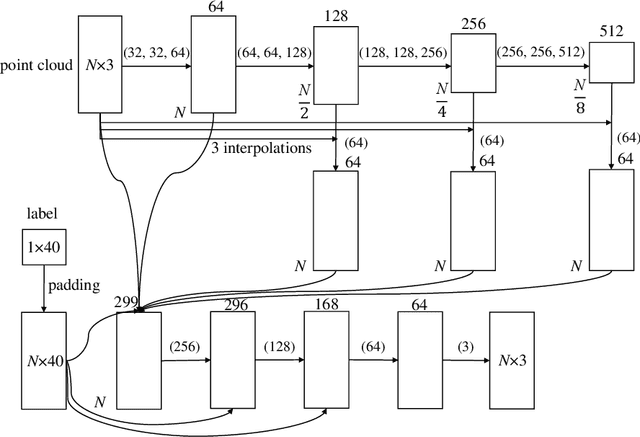

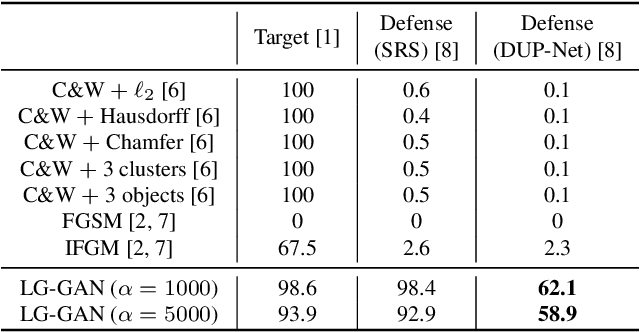

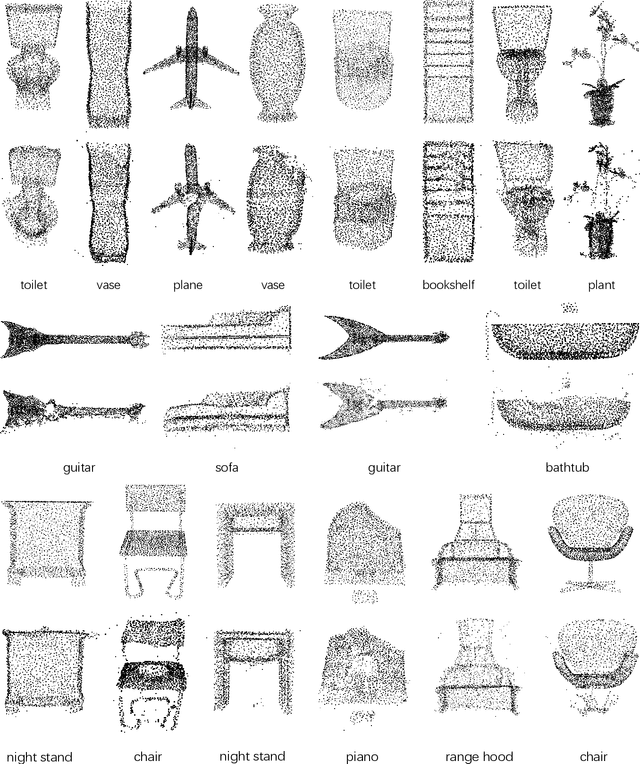

Deep neural networks have made tremendous progress in 3D point-cloud recognition. Recent works have shown that these 3D recognition networks are also vulnerable to adversarial samples produced by various attack methods, including the optimization-based 3D Carlini-Wagner attack, the gradient-based iterative fast gradient method, and skeleton-detach-based point dropping. However, careful analysis shows that these methods are either extremely slow because of their optimization/iterative scheme, or not flexible enough to support a targeted attack on a specific category. To overcome these shortcomings, this paper proposes a novel label guided adversarial network (LG-GAN) for real-time flexible targeted point cloud attack. To the best of our knowledge, this is the first generation-based 3D point cloud attack method. Given the original point clouds and the target attack label, LG-GAN learns how to deform the point clouds so that the recognition network is misled into predicting the specified label with only a single forward pass. In detail, LG-GAN first leverages a multi-branch adversarial network to extract hierarchical features of the input point clouds, then incorporates the specified label information into multiple intermediate features using the label encoder. Finally, the encoded features are fed into the coordinate reconstruction decoder to generate the target adversarial sample. By evaluating different point-cloud recognition models (e.g., PointNet, PointNet++ and DGCNN), we demonstrate that the proposed LG-GAN can support flexible targeted attack on the fly while guaranteeing good attack performance and higher efficiency simultaneously.

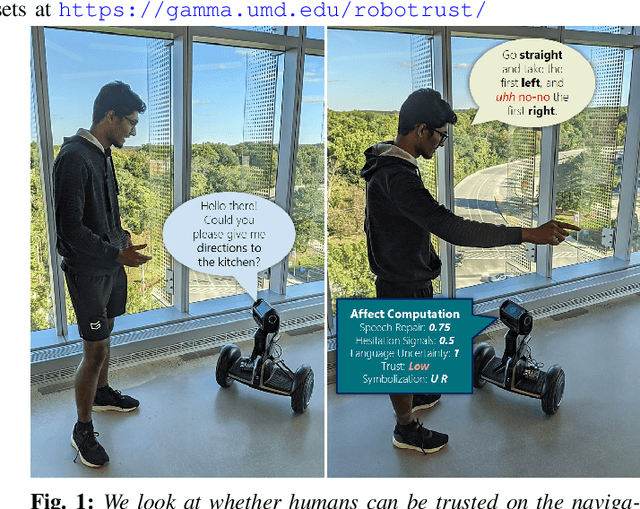

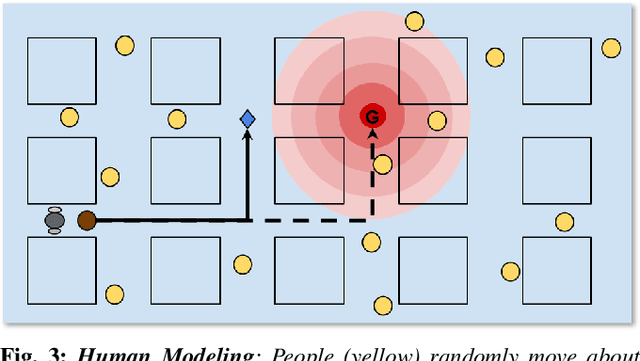

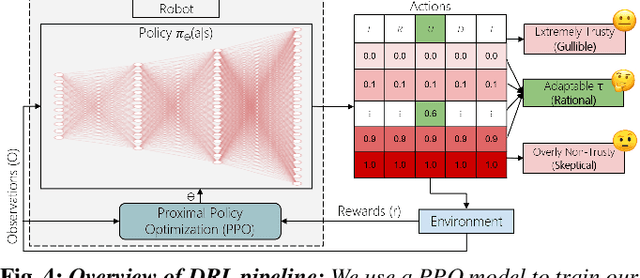

Can a Robot Trust You? A DRL-Based Approach to Trust-Driven Human-Guided Navigation

Nov 01, 2020

Humans are known to construct cognitive maps of their everyday surroundings using a variety of perceptual inputs. As such, when a human is asked for directions to a particular location, their wayfinding capability in converting this cognitive map into directional instructions is challenged. Owing to spatial anxiety, the language used in the spoken instructions can be vague and often unclear. To account for this unreliability in navigational guidance, we propose a novel Deep Reinforcement Learning (DRL) based trust-driven robot navigation algorithm that learns humans' trustworthiness to perform a language guided navigation task. Our approach seeks to answer the question of whether a robot can trust a human's navigational guidance. To this end, we train a policy that learns to navigate towards a goal location using only trustworthy human guidance, driven by its own robot trust metric. We quantify various affective features from language-based instructions and incorporate them into our policy's observation space in the form of a human trust metric. We combine both trust metrics in an optimal cognitive reasoning scheme that decides when and when not to trust the given guidance. Our results show that the learned policy can navigate the environment in an optimal, time-efficient manner as opposed to an explorative approach that performs the same task. We showcase the efficacy of our results both in simulation and in a real-world environment.

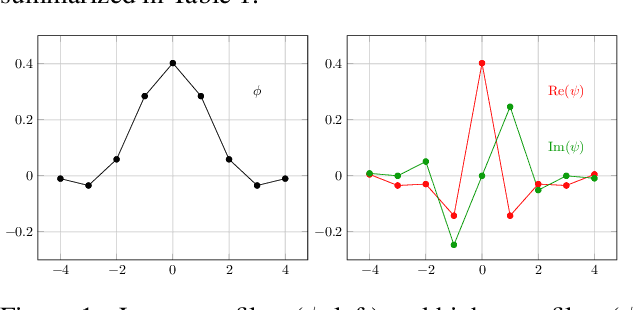

Combining Scatter Transform and Deep Neural Networks for Multilabel Electrocardiogram Signal Classification

Oct 15, 2020

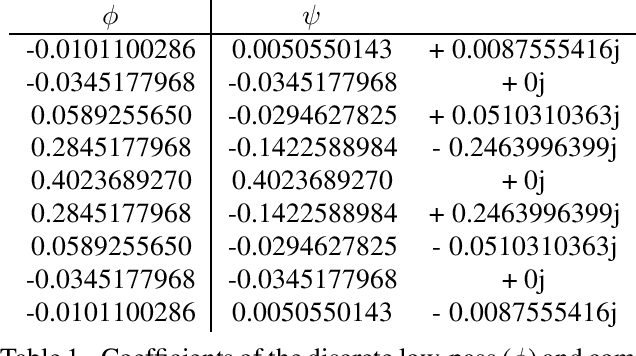

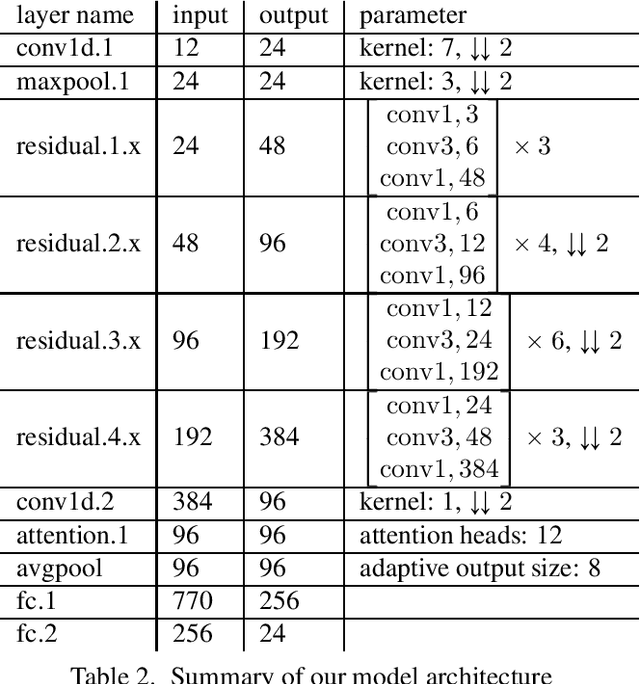

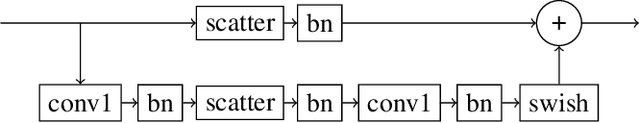

An essential part of the accurate classification of electrocardiogram (ECG) signals is the extraction of informative yet general features that are able to discriminate diseases. Cardiovascular abnormalities manifest themselves in features on different time scales: small-scale morphological features, such as missing P-waves, as well as rhythmical features apparent on heart rate scales. For this reason we incorporate a variant of the complex wavelet transform, called a scatter transform, in a deep residual neural network (ResNet). The former has the advantage of being derived from theory, making it well behaved under certain transformations of the input. The latter has proven useful in ECG classification, allowing feature extraction and classification to be learned in an end-to-end manner. Through the incorporation of trainable layers in between scatter transforms, the model gains the ability to combine information from different channels, yielding more informative features for the classification task and adapting them to the specific domain. For evaluation, we submitted our model in the official phase of the PhysioNet/Computing in Cardiology Challenge 2020. Our (Team Triage) approach achieved a challenge validation score of 0.640 and a full test score of 0.485, placing us 4th out of 41 in the official ranking.

Reinforcement Learning for Mitigating Intermittent Interference in Terahertz Communication Networks

Mar 10, 2020

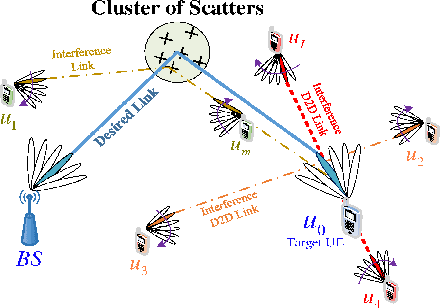

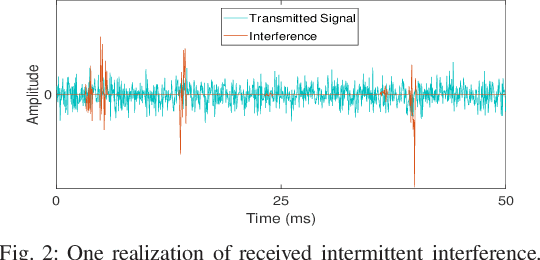

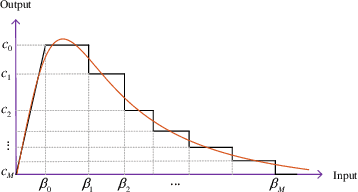

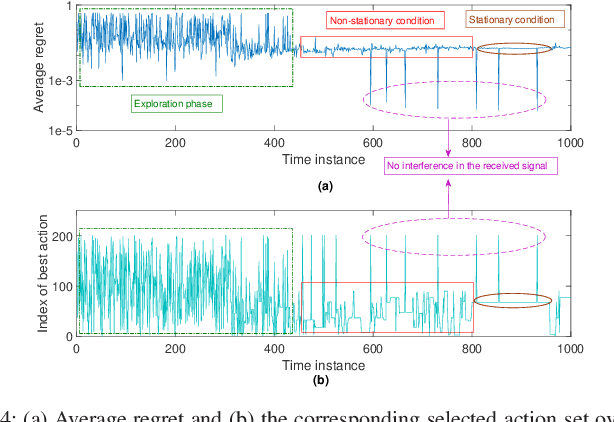

Emerging wireless services with extremely high data rate requirements, such as real-time extended reality applications, mandate novel solutions to further increase the capacity of future wireless networks. In this regard, leveraging the large available bandwidth at terahertz frequency bands is seen as a key enabler. To overcome the large propagation loss at these very high frequencies, transmissions must inevitably be carried over highly directional links. However, uncoordinated directional transmissions by a large number of users can cause substantial interference in terahertz networks. While such interference will be received over short random time intervals, the received power can be large. In this work, a new framework based on reinforcement learning is proposed that uses an adaptive multi-thresholding strategy to efficiently detect and mitigate the intermittent interference from directional links in the time domain. To find the optimal thresholds, the problem is formulated as a multidimensional multi-armed bandit system. Then, an algorithm is proposed that allows the receiver to learn the optimal thresholds with very low complexity. Another key advantage of the proposed approach is that it does not rely on any prior knowledge about the interference statistics, and hence, it is suitable for interference mitigation in dynamic scenarios. Simulation results confirm the superior bit-error-rate performance of the proposed method compared with two traditional time-domain interference mitigation approaches.
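As a rough illustration of learning a detection threshold with a bandit, the sketch below runs a plain epsilon-greedy multi-armed bandit over a small grid of candidate thresholds. It is not the algorithm from the paper: the one-dimensional grid, the class name `EpsilonGreedyBandit`, and the toy reward function `toy_reward` are all assumptions made for illustration, and a real receiver would derive its reward from observed link quality.

```python
import random


class EpsilonGreedyBandit:
    """Generic epsilon-greedy bandit; each arm is one candidate threshold (hypothetical setup)."""

    def __init__(self, arms, epsilon=0.1, seed=0):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.counts = [0] * len(self.arms)    # number of pulls per arm
        self.values = [0.0] * len(self.arms)  # running mean reward per arm
        self.rng = random.Random(seed)

    def select(self):
        """Explore with probability epsilon, otherwise pick the best arm so far."""
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(self.arms))
        return max(range(len(self.arms)), key=lambda i: self.values[i])

    def update(self, index, reward):
        """Incrementally update the mean reward of the pulled arm."""
        self.counts[index] += 1
        self.values[index] += (reward - self.values[index]) / self.counts[index]

    def best_arm(self):
        """Return the threshold with the highest estimated reward."""
        best = max(range(len(self.arms)), key=lambda i: self.values[i])
        return self.arms[best]


def toy_reward(threshold):
    """Hypothetical reward that peaks at a threshold of 0.5 (stand-in for link quality)."""
    return -(threshold - 0.5) ** 2


bandit = EpsilonGreedyBandit(arms=[0.1, 0.3, 0.5, 0.7, 0.9])
for _ in range(200):
    arm = bandit.select()
    bandit.update(arm, toy_reward(bandit.arms[arm]))
print(bandit.best_arm())
```

No prior statistics about the reward are supplied to the learner; it only sees the reward of the threshold it tried, which mirrors the abstract's point about not relying on prior knowledge of the interference.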

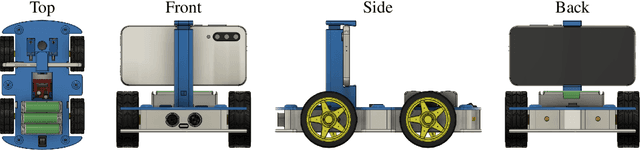

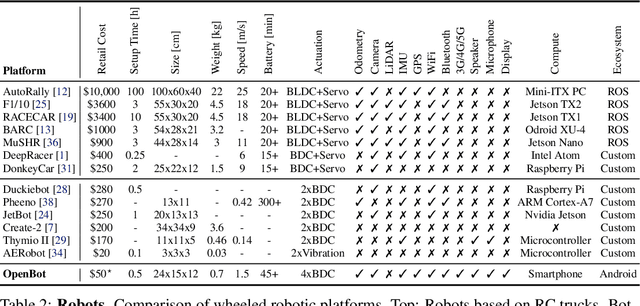

OpenBot: Turning Smartphones into Robots

Aug 24, 2020

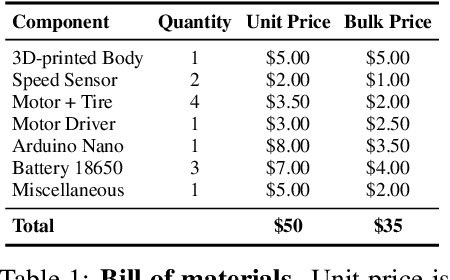

Current robots are either expensive or make significant compromises on sensory richness, computational power, and communication capabilities. We propose to leverage smartphones to equip robots with extensive sensor suites, powerful computational abilities, state-of-the-art communication channels, and access to a thriving software ecosystem. We design a small electric vehicle that costs $50 and serves as a robot body for standard Android smartphones. We develop a software stack that allows smartphones to use this body for mobile operation and demonstrate that the system is sufficiently powerful to support advanced robotics workloads such as person following and real-time autonomous navigation in unstructured environments. Controlled experiments demonstrate that the presented approach is robust across different smartphones and robot bodies. A video of our work is available at https://www.youtube.com/watch?v=qc8hFLyWDOM

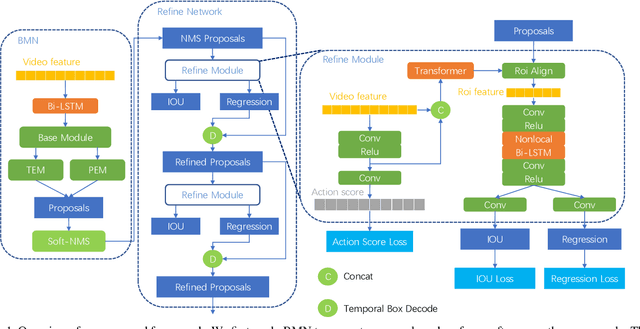

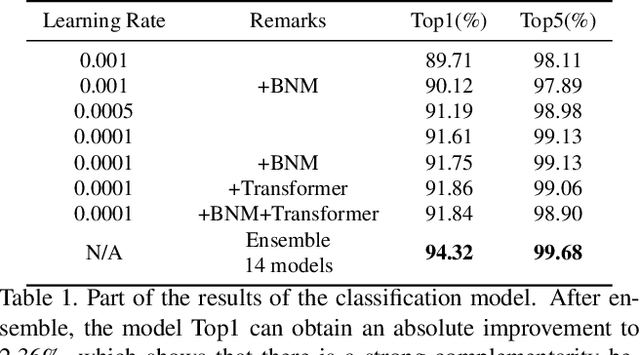

Temporal Fusion Network for Temporal Action Localization: Submission to ActivityNet Challenge 2020 (Task E)

Jun 13, 2020

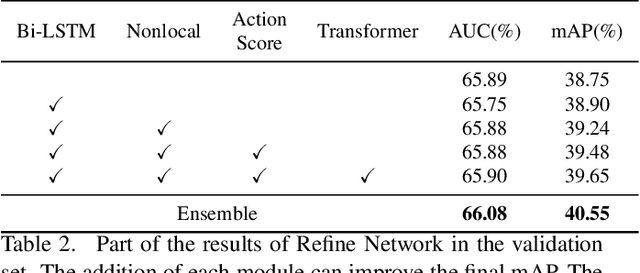

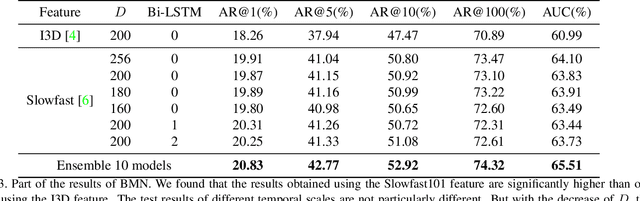

This technical report analyzes the temporal action localization method we used in the HACS competition, which is hosted in the ActivityNet Challenge 2020. The goal of the task is to locate the start time and end time of each action in an untrimmed video and to predict the action category. Firstly, we utilize video-level feature information to train multiple video-level action classification models. In this way, we obtain the category of the action in the video. Secondly, we focus on generating high-quality temporal proposals. For this purpose, we apply BMN to generate a large number of proposals to obtain high recall rates. We then refine these proposals by employing a cascade structure network called Refine Network, which can predict the position offset and a new IOU under the supervision of ground truth. To make the proposals more accurate, we use bidirectional LSTM, Nonlocal and Transformer to capture temporal relationships between the local features of each proposal and the global features of the video data. Finally, by fusing the results of multiple models, our method obtains 40.55% on the validation set and 40.53% on the test set in terms of mAP, and achieves Rank 1 in this challenge.
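The report refers to the IOU between a proposal and the ground truth. As a minimal sketch of how temporal IoU is commonly computed for (start, end) segments, the function below may help; the name `temporal_iou` and the tuple representation are assumptions for illustration and are not taken from the report.

```python
def temporal_iou(seg_a, seg_b):
    """Intersection over union of two (start, end) time segments, in the same time unit."""
    start_a, end_a = seg_a
    start_b, end_b = seg_b
    # Overlap length is zero when the segments do not intersect.
    intersection = max(0.0, min(end_a, end_b) - max(start_a, start_b))
    union = (end_a - start_a) + (end_b - start_b) - intersection
    return intersection / union if union > 0 else 0.0


# A proposal covering 0-10 s against a ground-truth action covering 5-15 s.
print(temporal_iou((0.0, 10.0), (5.0, 15.0)))
```

A refinement stage that shifts a proposal's start and end by predicted offsets would be scored with the same function before and after the shift.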

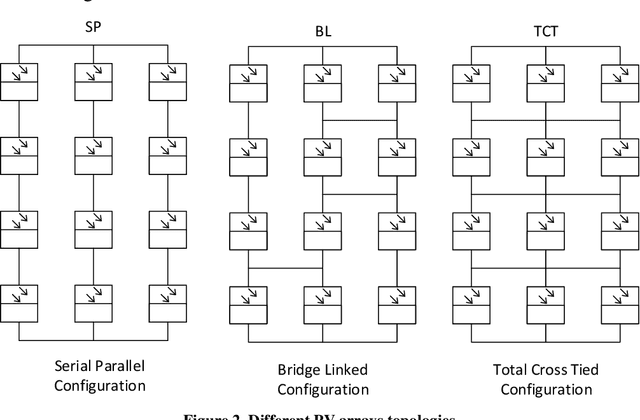

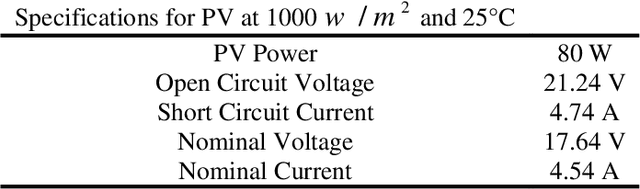

A proposed method to extract maximum possible power in the shortest time on solar PV arrays under partial shadings using metaheuristic algorithms

Mar 15, 2019

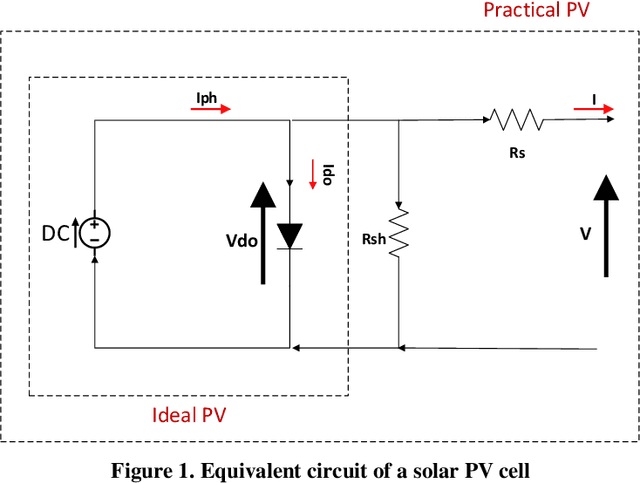

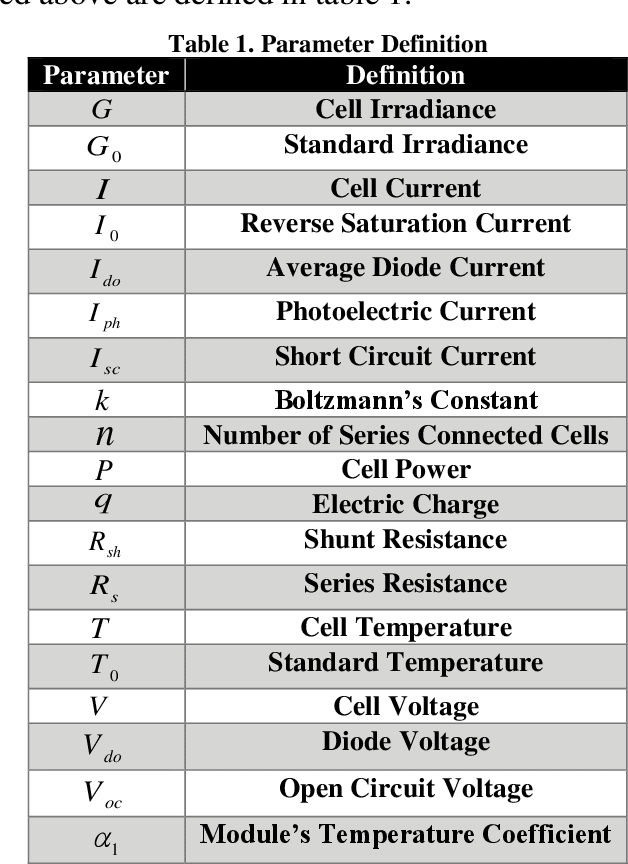

The increasing use of fossil fuels to produce energy is leading to environmental problems. Hence, human society has been moving towards the use of renewable energies, including solar energy. In recent years, one of the most popular methods of harvesting solar energy has been the use of photovoltaic (PV) arrays. Skyscrapers and different weather conditions cause shading on these PV arrays, which leads to less power generation. Various methods such as TCT and Sudoku patterns have been proposed to improve power generation for partially shaded PV arrays, but these methods have some problems, such as not generating maximum power and being designed for a specific dimension of PV arrays. Therefore, we propose a metaheuristic algorithm-based approach to extract the maximum possible power in the shortest possible time. In this paper, five algorithms that give good results in most search problems are chosen from different groups of metaheuristic algorithms. Also, four different standard shading patterns are used for more realistic analysis. Results show that the proposed method achieves better results in maximum power generation compared to the TCT arrangement (18.53%) and the Sudoku arrangement (4.93%). Also, the results show that GWO is the fastest metaheuristic algorithm to reach maximum output power in PV arrays under partial shading conditions. Thus, the authors believe that metaheuristic algorithms provide an efficient, reliable, and fast solution to the partial shading problem in PV arrays.

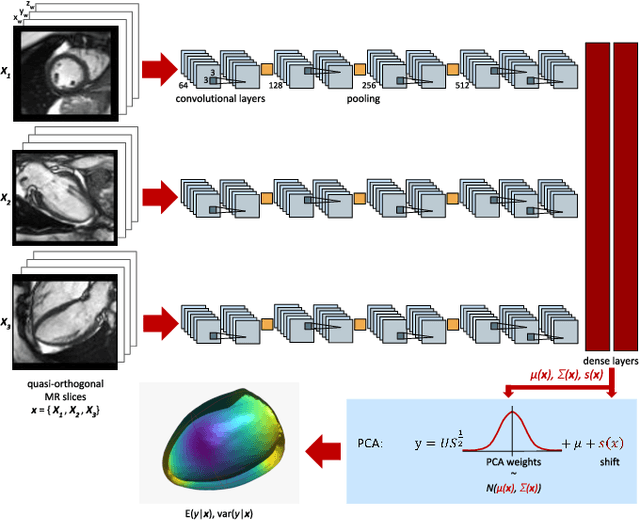

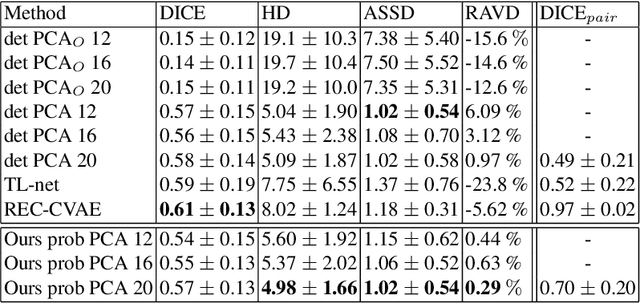

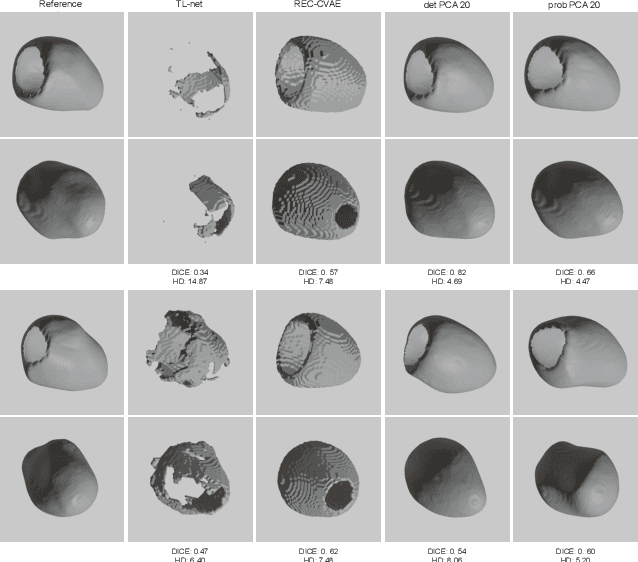

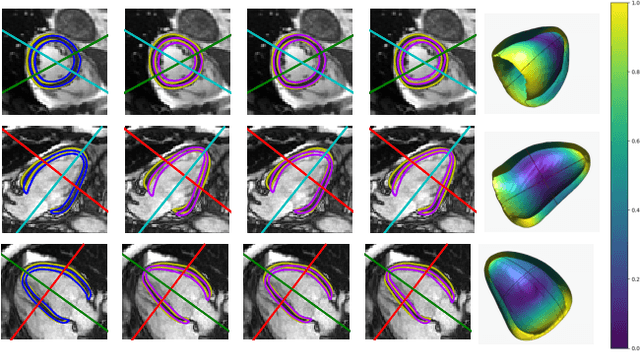

Probabilistic 3D surface reconstruction from sparse MRI information

Oct 05, 2020

Surface reconstruction from magnetic resonance (MR) imaging data is indispensable in medical image analysis and clinical research. A reliable and effective reconstruction tool should be fast in predicting accurate, well-localised, high-resolution models; evaluate prediction uncertainty; and work with as little input data as possible. Current deep learning state-of-the-art (SOTA) 3D reconstruction methods, however, often only produce shapes of limited variability positioned in a canonical position, or lack uncertainty evaluation. In this paper, we present a novel probabilistic deep learning approach for concurrent 3D surface reconstruction from sparse 2D MR image data and aleatoric uncertainty prediction. Our method is capable of reconstructing large surface meshes from three quasi-orthogonal MR imaging slices using limited training sets, whilst modelling the location of each mesh vertex through a Gaussian distribution. Prior shape information is encoded using a built-in linear principal component analysis (PCA) model. Extensive experiments on cardiac MR data show that our probabilistic approach successfully assesses prediction uncertainty while at the same time qualitatively and quantitatively outperforming SOTA methods in shape prediction. Compared to SOTA, we are capable of properly localising and orientating the prediction via the use of a spatially aware neural network.
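To make the idea of a Gaussian per mesh vertex concrete, the sketch below evaluates the negative log-likelihood of an observed 3D vertex position under an isotropic Gaussian with a predicted mean and variance. The isotropic form and the function name `gaussian_nll` are assumptions for illustration; the abstract does not state the exact parameterisation or loss used by the authors.

```python
import math


def gaussian_nll(target, mean, variance):
    """Negative log-likelihood of `target` under an isotropic Gaussian N(mean, variance * I)."""
    dim = len(target)
    squared_error = sum((t - m) ** 2 for t, m in zip(target, mean))
    # The log term penalises large predicted variance; the ratio penalises large error.
    return 0.5 * (dim * math.log(2.0 * math.pi * variance) + squared_error / variance)


# Same prediction error, two different predicted uncertainties (illustrative values).
observed = (1.0, 2.0, 3.0)
predicted = (1.5, 2.0, 3.0)
print(gaussian_nll(observed, predicted, variance=0.1))
print(gaussian_nll(observed, predicted, variance=1.0))
```

Minimising such a term over all vertices lets a model report a larger variance where its position estimate is less reliable, which is one common way to obtain aleatoric uncertainty.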

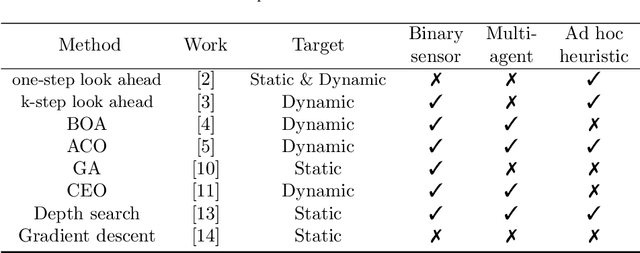

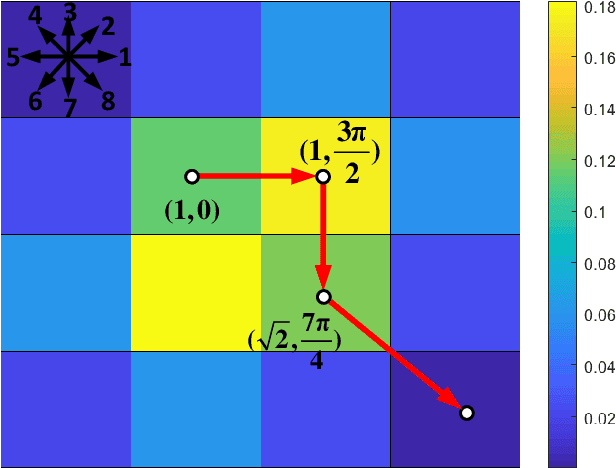

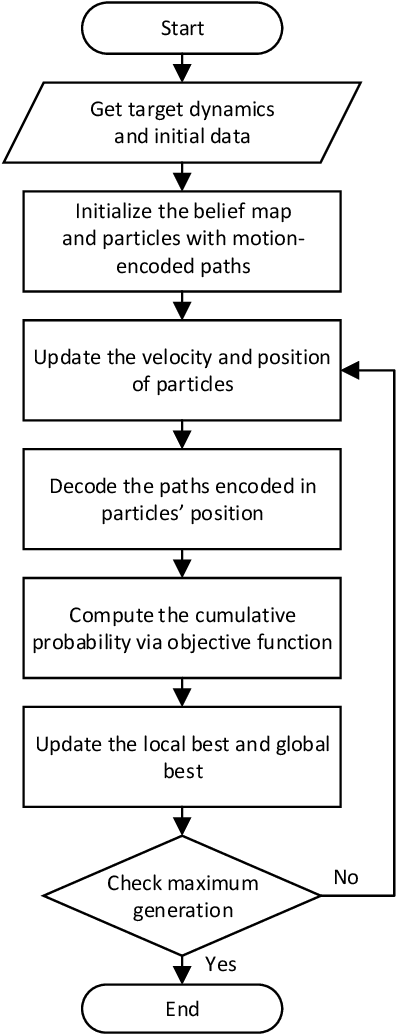

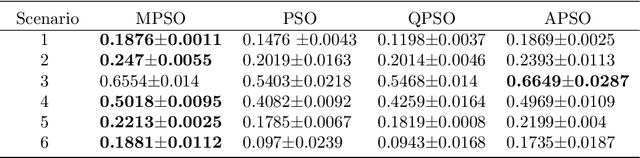

Motion-Encoded Particle Swarm Optimization for Moving Target Search Using UAVs

Oct 05, 2020

This paper presents a novel algorithm named the motion-encoded particle swarm optimization (MPSO) for finding a moving target with unmanned aerial vehicles (UAVs). From the Bayesian theory, the search problem can be converted to the optimization of a cost function that represents the probability of detecting the target. Here, the proposed MPSO is developed to solve that problem by encoding the search trajectory as a series of UAV motion paths evolving over the generation of particles in a PSO algorithm. This motion-encoded approach preserves important properties of the swarm, including the cognitive and social coherence, thus resulting in better solutions. Results from extensive simulations comparing against existing methods show that the proposed MPSO improves the detection performance by 24% and time performance by 4.71 times compared to the original PSO, and moreover, also outperforms other state-of-the-art metaheuristic optimization algorithms including the artificial bee colony (ABC), ant colony optimization (ACO), genetic algorithm (GA), differential evolution (DE), and tree-seed algorithm (TSA) in most search scenarios. Experiments have been conducted with real UAVs in searching for a dynamic target in different scenarios to demonstrate the merits of MPSO in a practical application.
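For readers unfamiliar with the baseline, the following is a minimal sketch of the original PSO update, with its cognitive (personal best) and social (global best) terms, minimising a toy cost function. It is not MPSO: the motion encoding of UAV paths is not reproduced, and the function name `pso_minimize`, the parameter values, and the sphere cost are assumptions for illustration.

```python
import random


def pso_minimize(cost, dim, bounds, n_particles=20, n_iters=100,
                 w=0.7, c1=1.5, c2=1.5, seed=0):
    """Plain PSO: returns the best position found and its cost."""
    rng = random.Random(seed)
    low, high = bounds
    pos = [[rng.uniform(low, high) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]               # personal best positions
    pbest_cost = [cost(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_cost[i])
    gbest, gbest_cost = pbest[g][:], pbest_cost[g]  # global best
    for _ in range(n_iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])   # cognitive term
                             + c2 * r2 * (gbest[d] - pos[i][d]))     # social term
                # Move and clamp the particle to the search bounds.
                pos[i][d] = min(high, max(low, pos[i][d] + vel[i][d]))
            c = cost(pos[i])
            if c < pbest_cost[i]:
                pbest[i], pbest_cost[i] = pos[i][:], c
                if c < gbest_cost:
                    gbest, gbest_cost = pos[i][:], c
    return gbest, gbest_cost


def sphere(x):
    """Toy cost with its minimum at the origin (hypothetical stand-in for a search cost)."""
    return sum(v * v for v in x)


best_position, best_cost = pso_minimize(sphere, dim=2, bounds=(-5.0, 5.0))
print(best_position, best_cost)
```

To maximise a detection probability rather than minimise a cost, one could pass the negated probability as `cost`.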

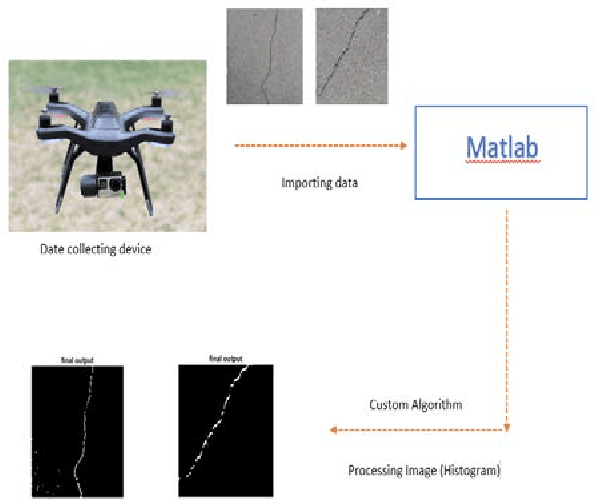

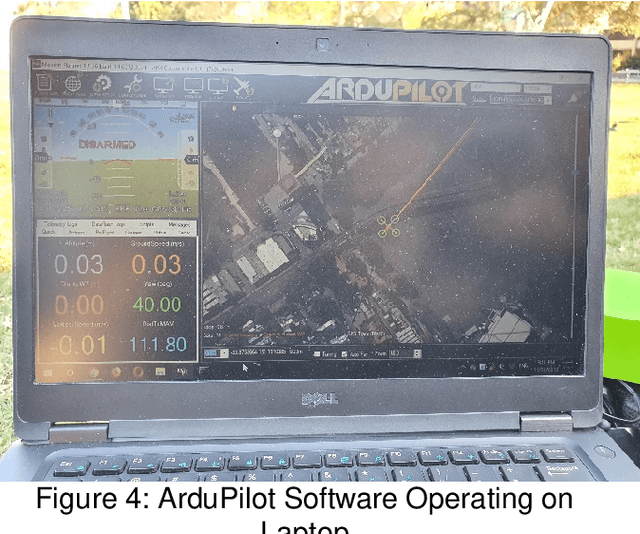

Built Infrastructure Monitoring and Inspection Using UAVs and Vision-based Algorithms

May 19, 2020

This study presents an inspection system that uses real-time controlled unmanned aerial vehicles (UAVs) to investigate structural surfaces. The system operates under favourable weather conditions to inspect a target structure, which is the Wentworth light rail base structure in this study. The system includes a drone, a GoPro HERO4 camera, a controller and a mobile phone. The drone takes off from the ground manually in the testing field to collect the data required for later analysis. The images are taken with the HERO4 camera and then transferred in real time to a remote processing unit, such as a ground control station, over a wireless connection established by a Wi-Fi router. An image processing method is proposed to detect defects or damage such as cracks. The method is based on intensity histogram algorithms that exploit the pixel group related to the crack, which is contained in the low-intensity interval. Experiments, simulations and comparisons have been conducted to evaluate the performance and validity of the proposed system.
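The idea of isolating crack pixels in the low-intensity interval of a histogram can be sketched as follows. This is a simplified illustration, not the method from the study: the rule of taking the darkest fraction of pixels, the `fraction` parameter, and the function names are assumptions, and the image is a small list of 8-bit grayscale rows.

```python
def intensity_histogram(image, levels=256):
    """Count how many pixels fall on each grayscale level."""
    hist = [0] * levels
    for row in image:
        for value in row:
            hist[value] += 1
    return hist


def low_intensity_threshold(hist, fraction=0.05):
    """Smallest level such that at least `fraction` of all pixels are at or below it."""
    total = sum(hist)
    cumulative = 0
    for level, count in enumerate(hist):
        cumulative += count
        if cumulative >= fraction * total:
            return level
    return len(hist) - 1


def crack_mask(image, threshold):
    """Mark pixels at or below the threshold as crack candidates."""
    return [[value <= threshold for value in row] for row in image]


# Toy 2x4 image: bright surface with a dark vertical streak in the second column.
image = [
    [200, 10, 210, 205],
    [198, 12, 202, 207],
]
threshold = low_intensity_threshold(intensity_histogram(image), fraction=0.25)
print(threshold, crack_mask(image, threshold))
```

On real imagery, shadows and stains also fall in the dark interval, so a mask like this would normally be followed by some shape or connectivity filtering.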
