"Time": models, code, and papers

A Centroid Loss for Weakly Supervised Semantic Segmentation in Quality Control and Inspection Application

Oct 26, 2020

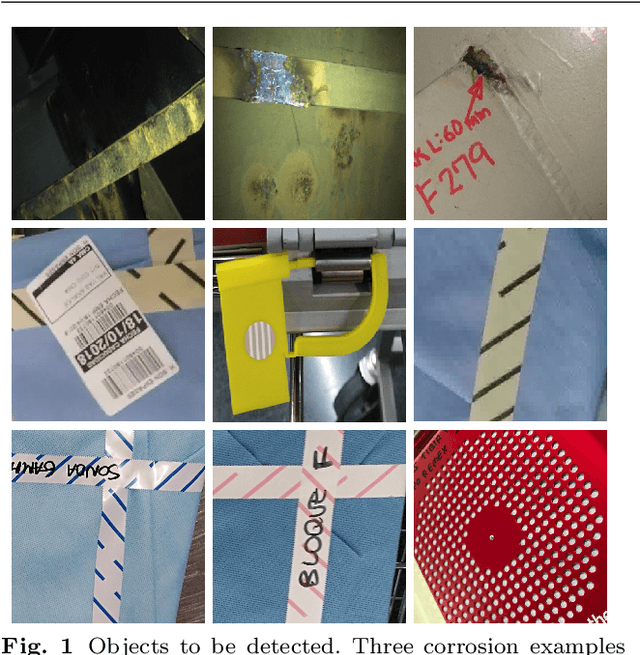

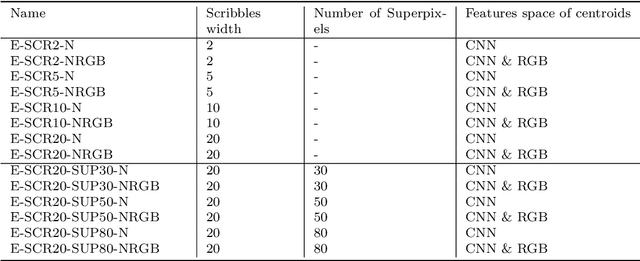

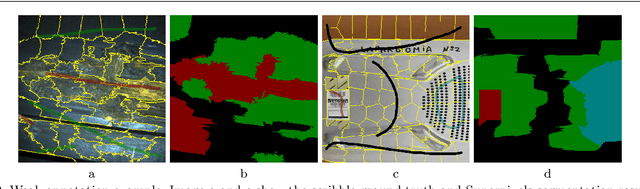

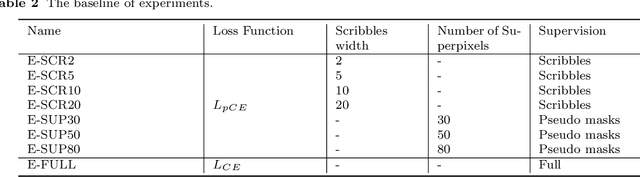

Process automation has enabled a level of accuracy and productivity that goes beyond human ability, and one critical area where automation is making a huge difference is machine vision. In this paper, a semantic segmentation solution is proposed for two scenarios. One is an inspection system intended for vessel corrosion detection, and the other is a detection system used to assist quality control of the surgery toolboxes prepared by the sterilization unit of a hospital. To reduce the time required to prepare pixel-level ground truth, this work focuses on the use of weakly supervised annotations (scribbles). Moreover, our solution integrates a clustering approach into a semantic segmentation network, thus reducing the negative effects caused by weakly supervised annotations. To evaluate the performance of our approach, two datasets are collected from the real world (vessel structures and hospital surgery toolboxes) for both training and validation. According to the results of the analysis, the proposed approach produces satisfactory performance on both datasets using weak annotations.

Multi-focus Image Fusion for Visual Sensor Networks

Oct 02, 2020

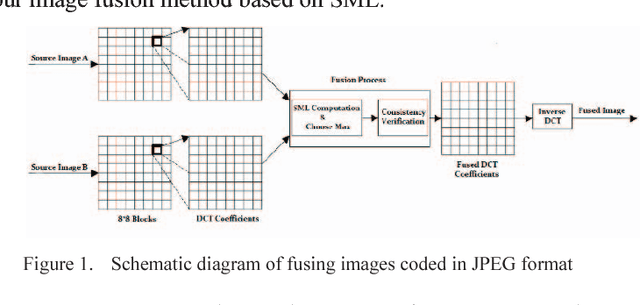

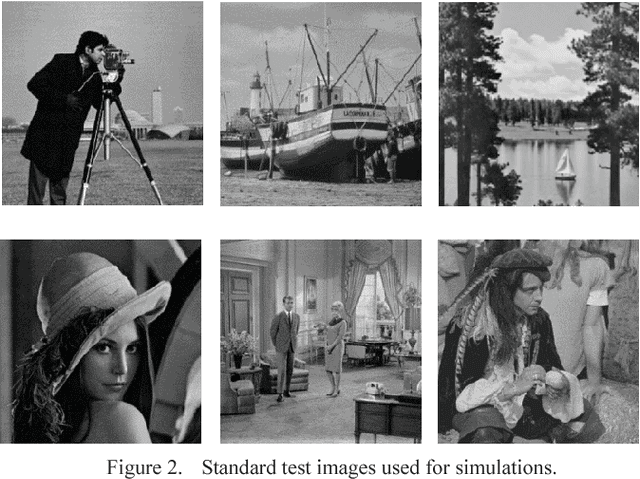

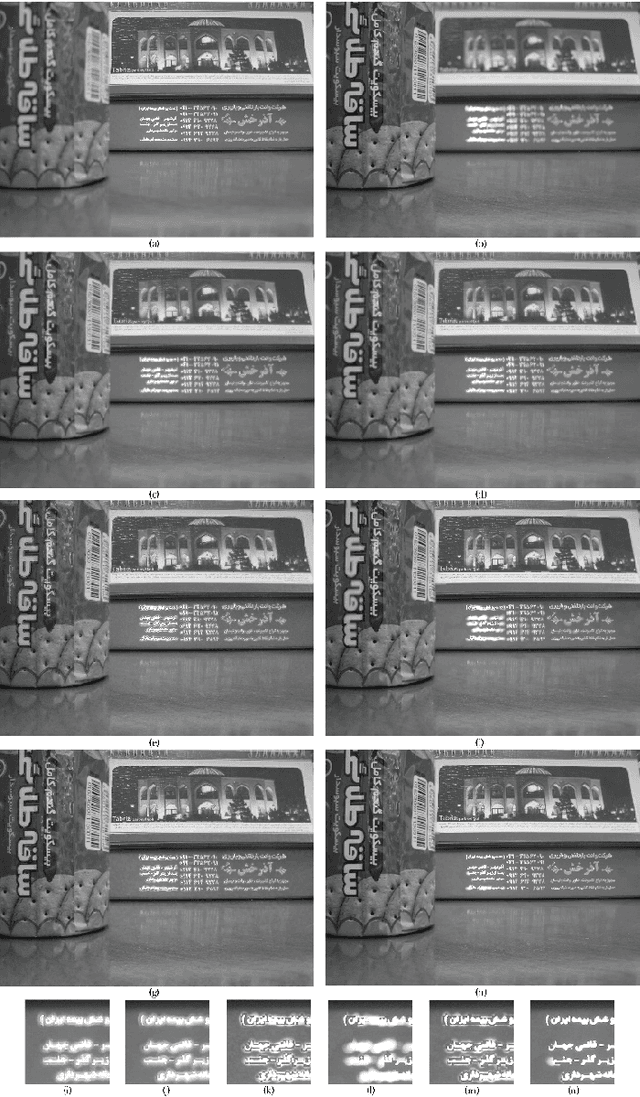

Image fusion in visual sensor networks (VSNs) aims to combine information from multiple images of the same scene to form a single, more informative image. Image fusion methods based on the discrete cosine transform (DCT) are less complex and save time when images and video are coded with DCT-based standards, which makes them more suitable for VSN applications. In this paper, an efficient algorithm for the fusion of multi-focus images in the DCT domain is proposed. The sum of modified Laplacian (SML) of corresponding blocks of the source images is used as a contrast criterion, and the blocks with the larger SML value are copied to the output image. Experimental results on several images show that the proposed algorithm improves both the subjective and the objective quality of the fused image relative to other DCT-based techniques.
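
The block-selection rule in this abstract can be sketched as follows. This is a minimal illustration, not the paper's implementation: the paper computes its criterion in the DCT domain, whereas this sketch computes the modified Laplacian directly on pixel values. The function names, the 8x8 block size, and the edge padding are assumptions made for the example.

```python
import numpy as np

def modified_laplacian(img):
    """Per-pixel modified Laplacian: |2I - left - right| + |2I - up - down|.

    Edge pixels reuse their own value for missing neighbours (assumption).
    """
    p = np.pad(np.asarray(img, dtype=float), 1, mode="edge")
    c = p[1:-1, 1:-1]
    horiz = np.abs(2 * c - p[1:-1, :-2] - p[1:-1, 2:])
    vert = np.abs(2 * c - p[:-2, 1:-1] - p[2:, 1:-1])
    return horiz + vert

def fuse_by_sml(a, b, block=8):
    """For each block, keep the source block with the larger sum of modified Laplacian."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    ml_a, ml_b = modified_laplacian(a), modified_laplacian(b)
    fused = np.empty_like(a)
    h, w = a.shape
    for r in range(0, h, block):
        for c in range(0, w, block):
            sl = (slice(r, r + block), slice(c, c + block))
            # A higher SML is taken as a sign that the block is in focus.
            fused[sl] = a[sl] if ml_a[sl].sum() >= ml_b[sl].sum() else b[sl]
    return fused
```

Given two inputs that are each sharp in a different half of the frame, the fused result takes each block from whichever source has more local contrast there.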

TPLinker: Single-stage Joint Extraction of Entities and Relations Through Token Pair Linking

Oct 26, 2020

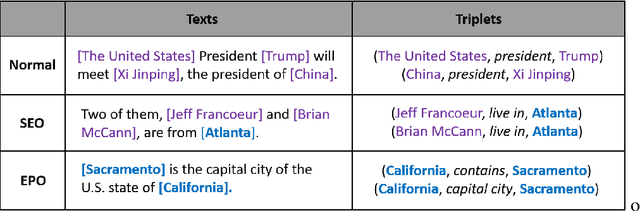

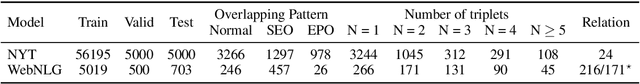

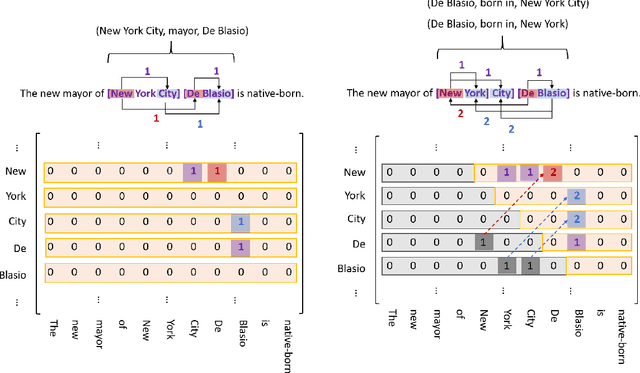

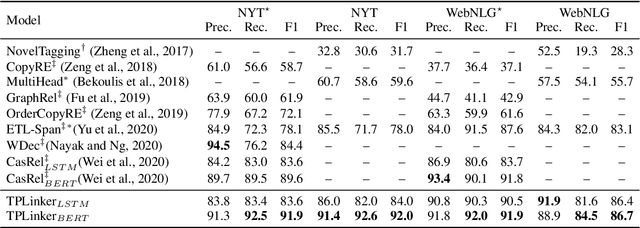

Extracting entities and relations from unstructured text has attracted increasing attention in recent years but remains challenging, due to the intrinsic difficulty of identifying overlapping relations with shared entities. Prior works show that joint learning can result in a noticeable performance gain. However, they usually involve sequential, interrelated steps and suffer from the problem of exposure bias: at training time, they predict conditioned on the ground truth, while at inference they have to extract from scratch. This discrepancy leads to error accumulation. To mitigate the issue, we propose in this paper a one-stage joint extraction model, namely TPLinker, which is capable of discovering overlapping relations sharing one or both entities while being immune to exposure bias. TPLinker formulates joint extraction as a token pair linking problem and introduces a novel handshaking tagging scheme that aligns the boundary tokens of entity pairs under each relation type. Experimental results show that TPLinker performs significantly better on overlapping and multiple relation extraction, and achieves state-of-the-art performance on two public datasets.
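
One way to picture token pair linking is as a tag assigned to every ordered pair of token positions. The sketch below is a simplified illustration under stated assumptions, not the TPLinker code: it assumes pairs are enumerated over the upper triangle (i <= j), that tag 1 marks a link from token i to token j, and that tag 2 marks a link in the reverse direction. The function names are hypothetical.

```python
def handshaking_pairs(n):
    """All token index pairs (i, j) with i <= j, in row-major order."""
    return [(i, j) for i in range(n) for j in range(i, n)]

def link_tag_sequence(n, links):
    """Tag each upper-triangular pair for one link type.

    links: set of (src, dst) token indices, e.g. (subject head, object head).
    Returns 1 where (i, j) is a link, 2 where only (j, i) is a link, else 0.
    """
    tags = []
    for i, j in handshaking_pairs(n):
        if (i, j) in links:
            tags.append(1)
        elif (j, i) in links:
            tags.append(2)
        else:
            tags.append(0)
    return tags
```

For a sentence of n tokens this yields n(n+1)/2 tags per link type, so all pairs are tagged in a single pass rather than in sequential, dependent steps.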

Accelerating Convergence of Replica Exchange Stochastic Gradient MCMC via Variance Reduction

Oct 02, 2020

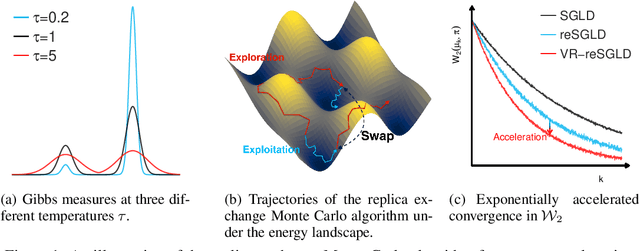

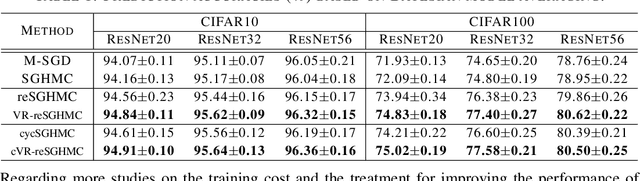

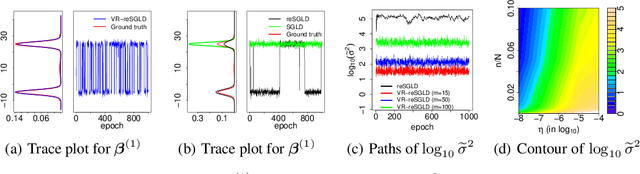

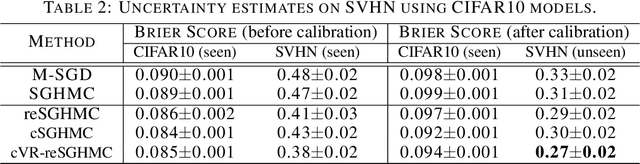

Replica exchange stochastic gradient Langevin dynamics (reSGLD) has shown promise in accelerating convergence in non-convex learning; however, an excessively large correction for avoiding biases from noisy energy estimators has limited the potential of the acceleration. To address this issue, we study variance reduction for noisy energy estimators, which promotes much more effective swaps. Theoretically, we provide a non-asymptotic analysis of the exponential acceleration for the underlying continuous-time Markov jump process; moreover, we consider a generalized Girsanov theorem, which includes the change of Poisson measure, to overcome the crude discretization based on Grönwall's inequality, and this yields a much tighter error in the 2-Wasserstein ($\mathcal{W}_2$) distance. Numerically, we conduct extensive experiments and obtain state-of-the-art results in optimization and uncertainty estimates for synthetic experiments and image data.
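
To see why a large correction suppresses swaps, consider the standard replica exchange acceptance rule between a low-temperature and a high-temperature chain. The sketch below is an assumption-laden illustration: the `correction` argument is a hypothetical stand-in for the bias correction mentioned in the abstract, whose exact form is not given here, and with `correction=0` the function reduces to the classical rule for exact energies.

```python
import math

def swap_probability(u_low, u_high, tau_low, tau_high, correction=0.0):
    """Acceptance probability for swapping two replicas.

    u_low, u_high: energies of the low- and high-temperature chains.
    tau_low < tau_high: the two temperatures.
    correction: hypothetical non-negative term subtracted from the energy gap
    to compensate for noisy energy estimates (assumption, not the paper's formula).
    """
    exponent = (1.0 / tau_low - 1.0 / tau_high) * (u_low - u_high - correction)
    return 1.0 if exponent >= 0 else math.exp(exponent)
```

With everything else fixed, a smaller correction gives a larger swap probability, which matches the abstract's motivation for reducing the variance of the energy estimators.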

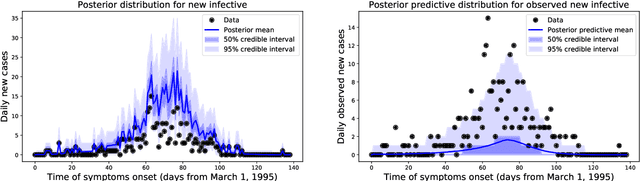

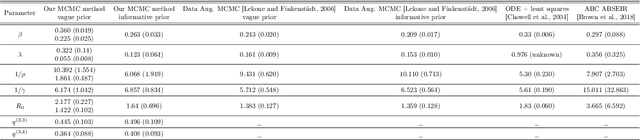

Inference in Stochastic Epidemic Models via Multinomial Approximations

Jun 24, 2020

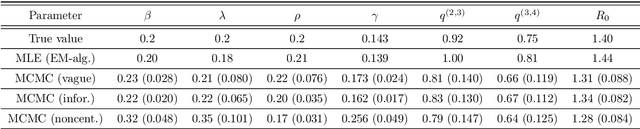

We introduce a new method for inference in stochastic epidemic models which uses recursive multinomial approximations to integrate over unobserved variables and thus circumvent likelihood intractability. The method is applicable to a class of discrete-time, finite-population compartmental models with partial, randomly under-reported or missing count observations. In contrast to state-of-the-art alternatives such as Approximate Bayesian Computation techniques, no forward simulation of the model is required and there are no tuning parameters. Evaluating the approximate marginal likelihood of model parameters is achieved through a computationally simple filtering recursion. The accuracy of the approximation is demonstrated through analysis of real and simulated data using a model of the 1995 Ebola outbreak in the Democratic Republic of Congo. We show how the method can be embedded within a Sequential Monte Carlo approach to estimating the time-varying reproduction number of COVID-19 in Wuhan, China, recently published by Kucharski et al. 2020.

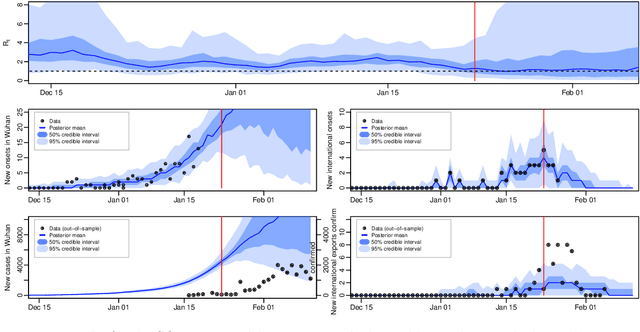

Real-Time Trajectory Replanning for MAVs using Uniform B-splines and a 3D Circular Buffer

Jul 24, 2017

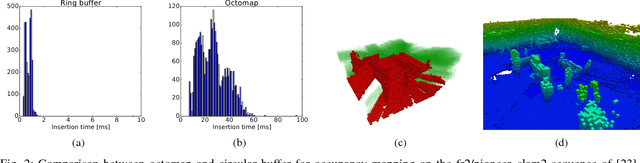

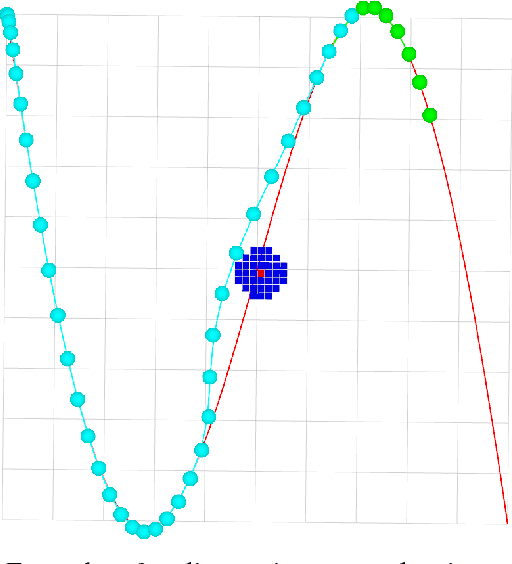

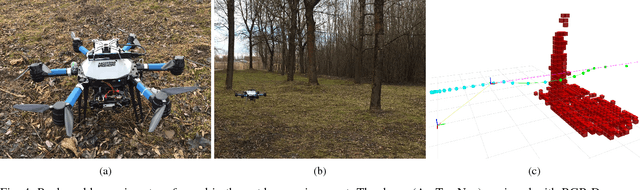

In this paper, we present a real-time approach to local trajectory replanning for micro aerial vehicles (MAVs). Current trajectory generation methods for multicopters achieve high success rates in cluttered environments, but assume that the environment is static and require prior knowledge of the map. In the presented study, we use the results of such planners and extend them with a local replanning algorithm that can handle unmodeled (possibly dynamic) obstacles while keeping the MAV close to the global trajectory. To ensure that the proposed approach is real-time capable, we maintain information about the environment around the MAV in an occupancy grid stored in a three-dimensional circular buffer, which moves together with the drone, and represent the trajectories using uniform B-splines. This representation ensures that the trajectory is sufficiently smooth and simultaneously allows for efficient optimization.
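
As a small illustration of the trajectory representation, the sketch below evaluates one segment of a uniform cubic B-spline from four consecutive control points. The cubic degree and the function name are assumptions made for the example; the abstract only states that uniform B-splines are used.

```python
import numpy as np

def cubic_bspline_point(ctrl, u):
    """Evaluate a uniform cubic B-spline segment.

    ctrl: array of shape (4, d) holding four consecutive control points.
    u: local parameter in [0, 1].
    """
    # Standard uniform cubic B-spline basis functions; they sum to 1 for any u.
    basis = np.array([
        (1 - u) ** 3,
        3 * u**3 - 6 * u**2 + 4,
        -3 * u**3 + 3 * u**2 + 3 * u + 1,
        u**3,
    ]) / 6.0
    return basis @ np.asarray(ctrl, dtype=float)
```

Because each point depends on only four control points, moving one control point changes the curve locally, which is part of what makes this representation convenient for fast local replanning.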

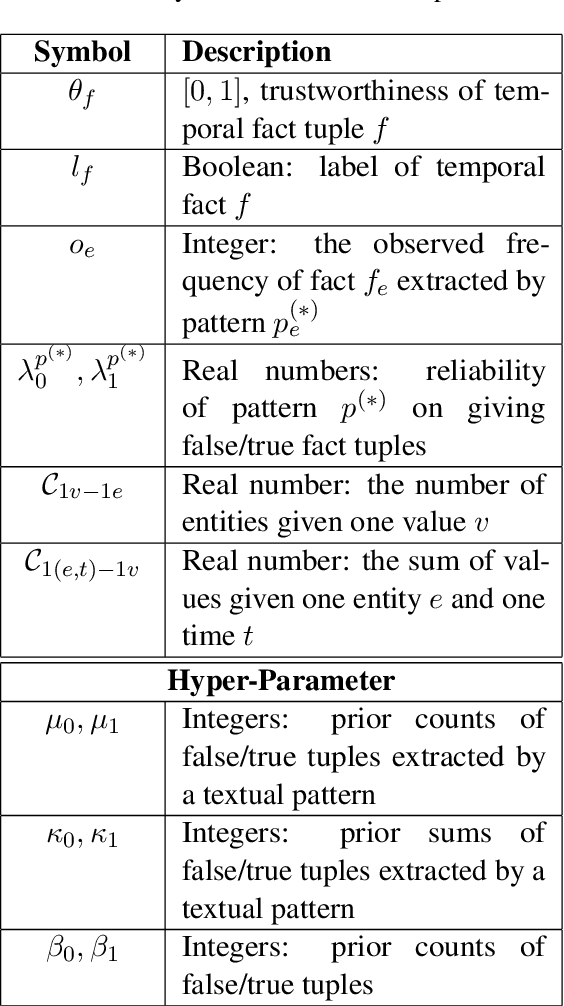

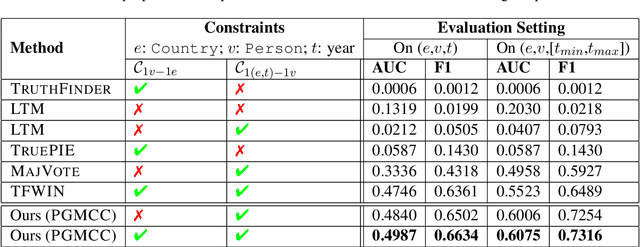

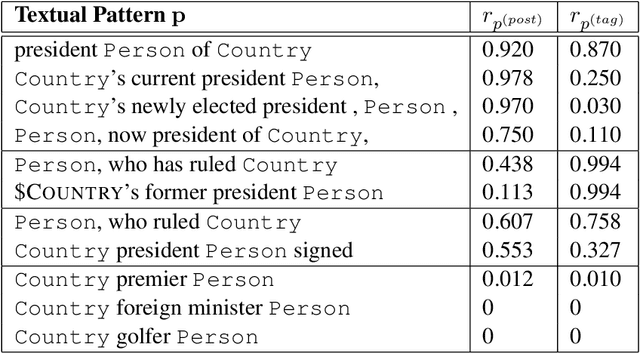

A Probabilistic Model with Commonsense Constraints for Pattern-based Temporal Fact Extraction

Jun 11, 2020

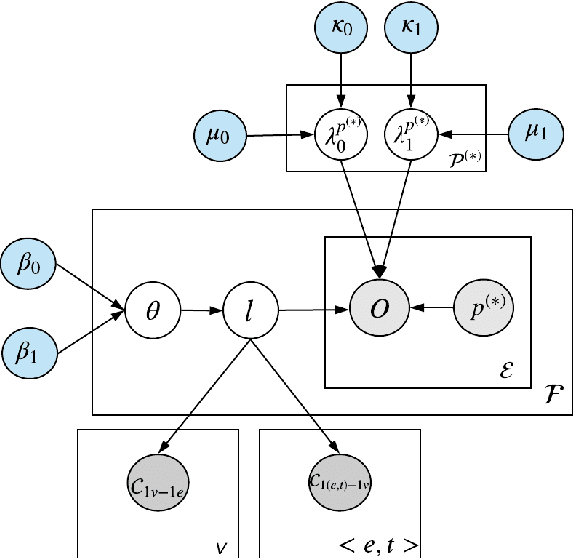

Textual patterns (e.g., Country's president Person) are specified and/or generated for extracting factual information from unstructured data. Pattern-based information extraction methods have been recognized for their efficiency and transferability. However, not every pattern is reliable: a major challenge is to derive the most complete and accurate facts from diverse and sometimes conflicting extractions. In this work, we propose a probabilistic graphical model which formulates fact extraction as a generative process. It automatically infers true facts and pattern reliability without any supervision. It has two novel designs specifically for temporal facts: (1) it models pattern reliability on two types of time signals, namely the temporal tag in the text and the text generation time; (2) it models commonsense constraints as observable variables. Experimental results demonstrate that our model significantly outperforms existing methods at extracting true temporal facts from news data.

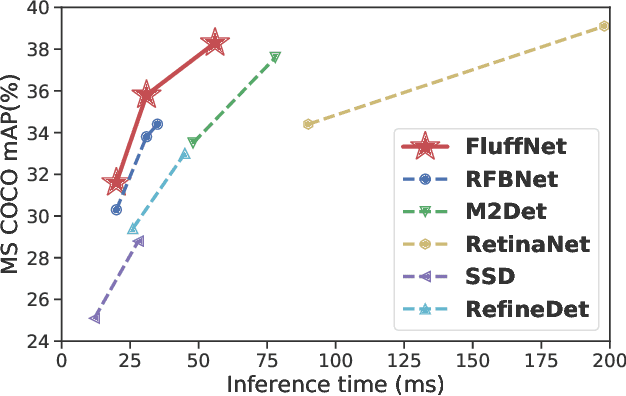

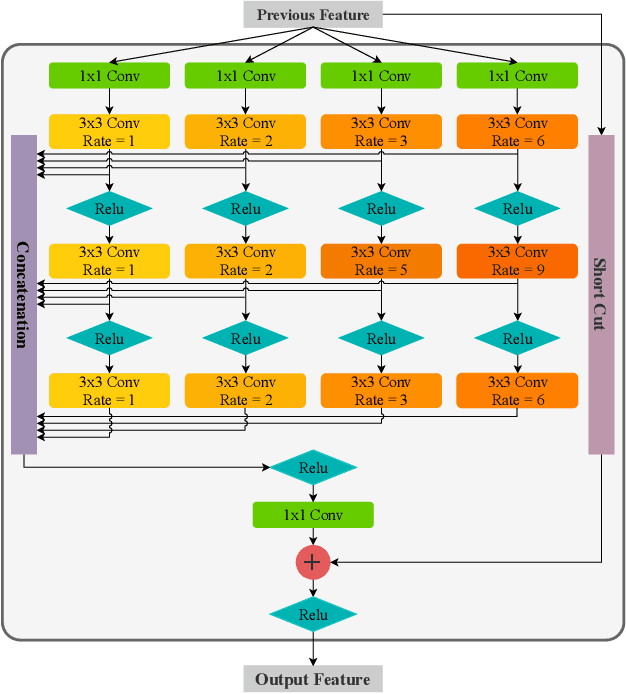

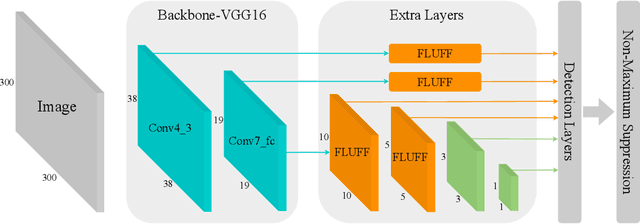

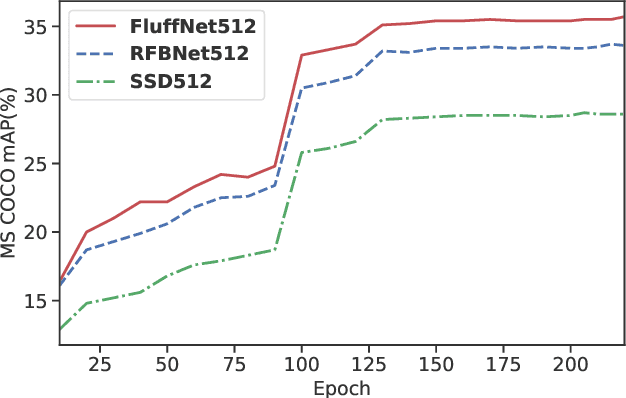

Fast Object Detection with Latticed Multi-Scale Feature Fusion

Nov 05, 2020

Scale variance is one of the crucial challenges in multi-scale object detection. Early approaches address this problem by exploiting image and feature pyramids, which yields suboptimal results, adds computational burden, and is constrained by inherent network structures. Pioneering works also propose multi-scale (i.e., multi-level and multi-branch) feature fusions to remedy the issue and have achieved encouraging progress. However, existing fusions still have certain limitations, such as feature scale inconsistency, ignorance of level-wise semantic transformation, and coarse granularity. In this work, we present a novel module, the Fluff block, to alleviate the drawbacks of current multi-scale fusion methods and facilitate multi-scale object detection. Specifically, Fluff leverages both multi-level and multi-branch schemes with dilated convolutions to achieve rapid, effective, and finer-grained feature fusions. Furthermore, we integrate Fluff into SSD as FluffNet, a powerful real-time single-stage detector for multi-scale object detection. Empirical results on MS COCO and PASCAL VOC demonstrate that FluffNet obtains remarkable efficiency with state-of-the-art accuracy. Additionally, we demonstrate the generality of the Fluff block by showing how to embed it into other widely used detectors as well.

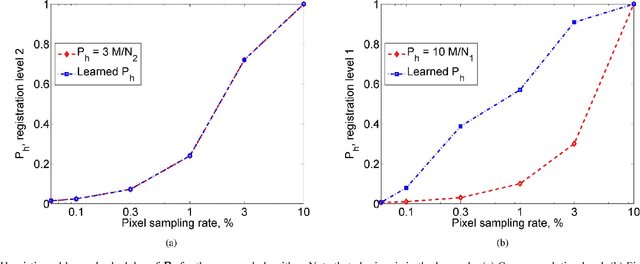

Uncertainty driven probabilistic voxel selection for image registration

Oct 02, 2020

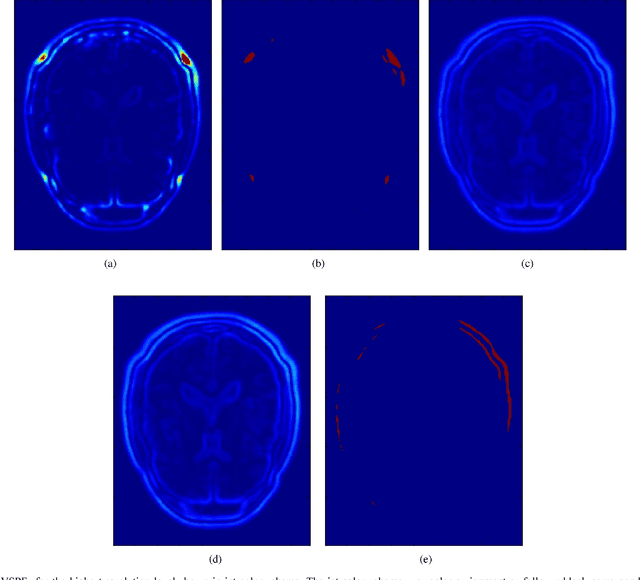

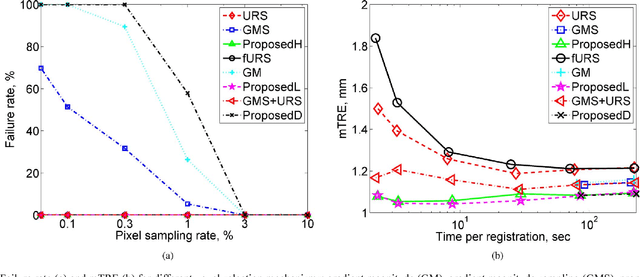

This paper presents a novel probabilistic voxel selection strategy for medical image registration in time-sensitive contexts, where the goal is aggressive voxel sampling (e.g. using less than 1% of the total number) while maintaining registration accuracy and low failure rate. We develop a Bayesian framework whereby, first, a voxel sampling probability field (VSPF) is built based on the uncertainty on the transformation parameters. We then describe a practical, multi-scale registration algorithm, where, at each optimization iteration, different voxel subsets are sampled based on the VSPF. The approach maximizes accuracy without committing to a particular fixed subset of voxels. The probabilistic sampling scheme developed is shown to manage the tradeoff between the robustness of traditional random voxel selection (by permitting more exploration) and the accuracy of fixed voxel selection (by permitting a greater proportion of informative voxels).
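
The per-iteration sampling step can be sketched as drawing a small voxel subset from a probability field. This is a minimal sketch under assumptions: how the VSPF is derived from transformation uncertainty is not specified in the abstract, so the field is simply taken as an input array, and the function name and the without-replacement choice are hypothetical.

```python
import numpy as np

def sample_voxels(prob_field, fraction, rng):
    """Draw a random voxel subset with probability proportional to prob_field.

    prob_field: non-negative array over the image grid (stand-in for a VSPF).
    fraction: share of voxels to draw, e.g. 0.01 for 1%.
    rng: a numpy.random.Generator.
    Returns a tuple of index arrays, one per image dimension.
    """
    p = np.asarray(prob_field, dtype=float).ravel()
    p = p / p.sum()
    k = max(1, int(round(fraction * p.size)))
    # Sampling without replacement needs at least k voxels with non-zero probability.
    flat = rng.choice(p.size, size=k, replace=False, p=p)
    return np.unravel_index(flat, np.shape(prob_field))
```

Calling this once per optimization iteration gives a different subset each time, so informative voxels are favoured without fixing a single subset in advance.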

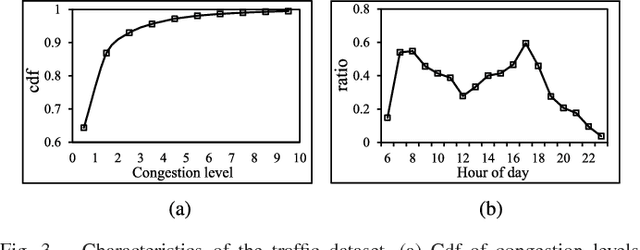

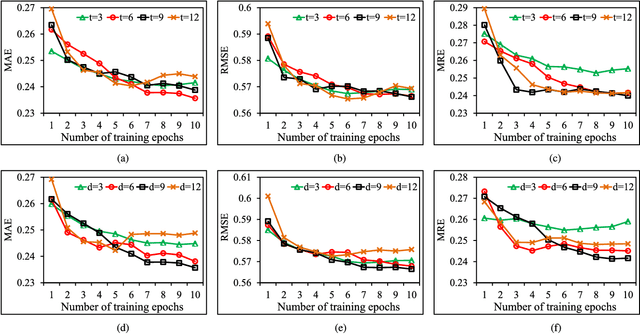

PCNN: Deep Convolutional Networks for Short-term Traffic Congestion Prediction

Mar 16, 2020

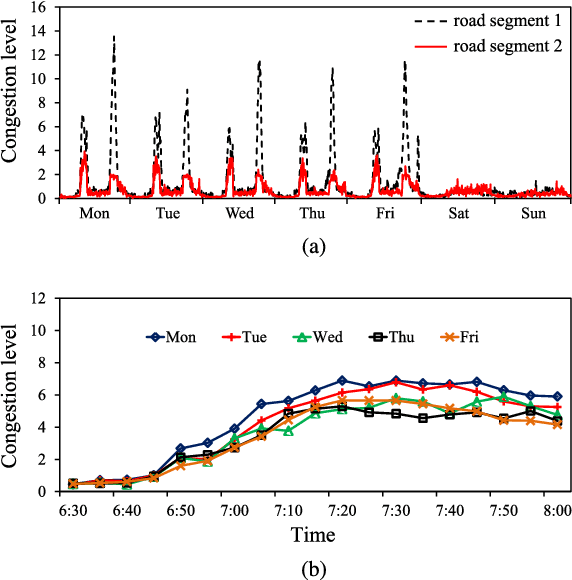

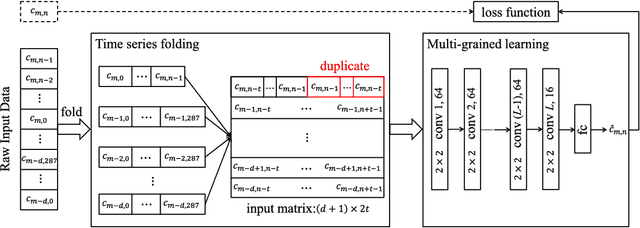

Traffic problems have seriously affected people's quality of life and urban development, and forecasting short-term traffic congestion is of great importance to both individuals and governments. However, understanding and modeling traffic conditions can be extremely difficult, and our observations from real traffic data reveal that (1) similar traffic congestion patterns exist in neighboring time slots and on consecutive workdays; (2) the levels of traffic congestion have clear multiscale properties. To capture these characteristics, we propose a novel method named PCNN, based on a deep Convolutional Neural Network, which models Periodic traffic data for short-term traffic congestion prediction. PCNN has two pivotal procedures: time series folding and multi-grained learning. It first temporally folds the time series and constructs a two-dimensional matrix as the network input, such that both the real-time traffic conditions and past traffic patterns are well considered; then, with a series of convolutions over the input matrix, it is able to model the local temporal dependency and multiscale traffic patterns. In particular, the global trend of congestion can be addressed at the macroscale, whereas more details and variations of the congestion can be captured at the microscale. Experimental results on a real-world urban traffic dataset confirm that folding time series data into a two-dimensional matrix is effective and that PCNN significantly outperforms the baselines on the task of short-term congestion prediction.
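
The folding step can be illustrated with a plain reshape. This is a sketch under assumptions, not the PCNN preprocessing: it assumes one row per period (for example one day), drops the oldest samples that do not fill a complete period, and uses a hypothetical function name.

```python
import numpy as np

def fold_series(series, period):
    """Fold a 1-D series into a (n_periods, period) matrix.

    Each row is one period, so a column holds the same time slot across
    consecutive periods. Leading samples that do not fill a period are dropped.
    """
    series = np.asarray(series)
    n_rows = series.size // period
    if n_rows == 0:
        raise ValueError("series is shorter than one period")
    return series[series.size - n_rows * period:].reshape(n_rows, period)
```

In the folded matrix, horizontal neighbours are adjacent time slots and vertical neighbours are the same slot on consecutive periods, so a 2-D convolution sees both kinds of pattern at once.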
