"Time": models, code, and papers

Animating Pictures with Eulerian Motion Fields

Nov 30, 2020

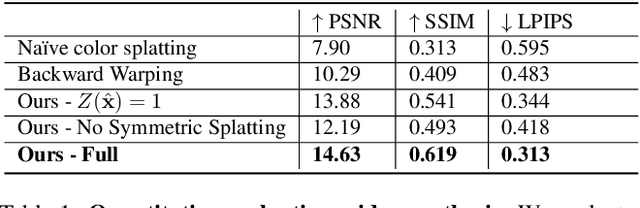

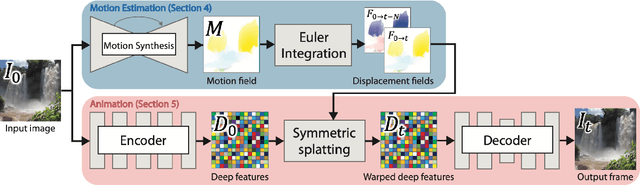

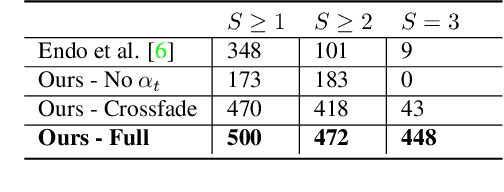

In this paper, we demonstrate a fully automatic method for converting a still image into a realistic animated looping video. We target scenes with continuous fluid motion, such as flowing water and billowing smoke. Our method relies on the observation that this type of natural motion can be convincingly reproduced from a static Eulerian motion description, i.e. a single, temporally constant flow field that defines the immediate motion of a particle at a given 2D location. We use an image-to-image translation network to encode motion priors of natural scenes collected from online videos, so that for a new photo, we can synthesize a corresponding motion field. The image is then animated using the generated motion through a deep warping technique: pixels are encoded as deep features, those features are warped via Eulerian motion, and the resulting warped feature maps are decoded as images. In order to produce continuous, seamlessly looping video textures, we propose a novel video looping technique that flows features both forward and backward in time and then blends the results. We demonstrate the effectiveness and robustness of our method by applying it to a large collection of examples including beaches, waterfalls, and flowing rivers.
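
As a rough illustration of the static Eulerian description above, the following sketch integrates a single, temporally constant flow field step by step to obtain each pixel's displacement after a number of frames, and blends a forward and a backward result for looping. It is a minimal NumPy sketch under stated assumptions, not the paper's implementation: the names `integrate_flow` and `blend_loop`, the (dx, dy) channel order, nearest-neighbour sampling, and the linear blending weights are all assumptions, and the deep feature encoding and decoding stages are omitted.

```python
import numpy as np


def integrate_flow(motion, steps):
    """Euler-integrate a static flow field.

    motion: (H, W, 2) array of per-pixel (dx, dy) velocities in pixels per frame
            (channel order is an assumption of this sketch).
    Returns the (H, W, 2) displacement of each pixel after `steps` frames.
    """
    h, w, _ = motion.shape
    ys, xs = np.mgrid[0:h, 0:w].astype(np.float64)
    disp = np.zeros_like(motion, dtype=np.float64)
    for _ in range(steps):
        # Where has each particle moved to so far? Sample the same, constant
        # flow field at that location (nearest neighbour, clamped at borders).
        px = np.clip(np.rint(xs + disp[..., 0]), 0, w - 1).astype(int)
        py = np.clip(np.rint(ys + disp[..., 1]), 0, h - 1).astype(int)
        disp += motion[py, px]
    return disp


def blend_loop(forward, backward, t, n):
    """Blend a forward-warped and a backward-warped result at frame t of n.

    Linear weights are an assumption; they make frame 0 equal the forward
    result and frame n equal the backward result, so the loop closes.
    """
    alpha = 1.0 - t / n
    return alpha * forward + (1.0 - alpha) * backward
```

With a uniform rightward flow of one pixel per frame, `integrate_flow(motion, 5)` yields a displacement of five pixels in x everywhere, which is a quick sanity check for the integration loop.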

Soft Robot Optimal Control Via Reduced Order Finite Element Models

Nov 04, 2020

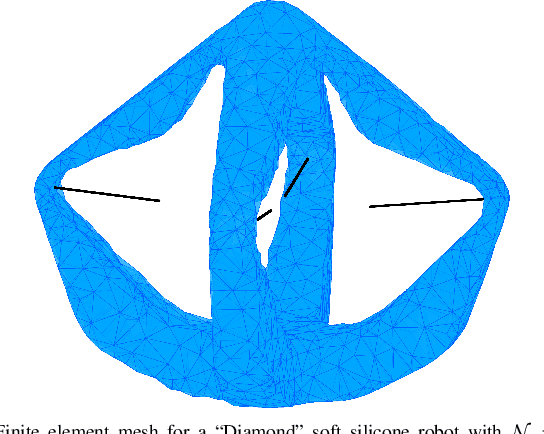

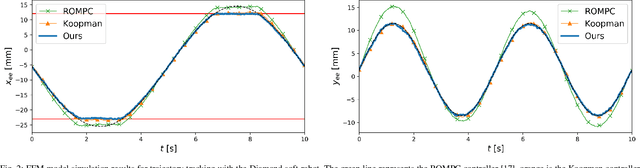

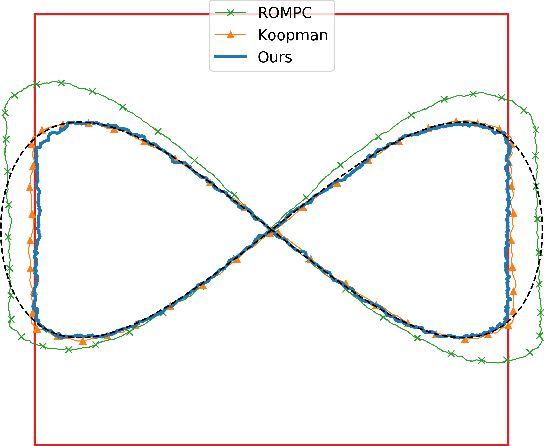

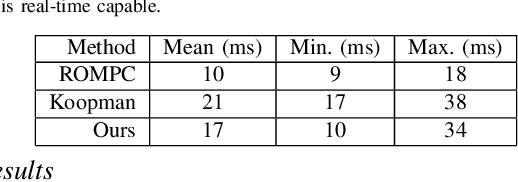

Finite element methods have been successfully used to develop physics-based models of soft robots that capture the nonlinear dynamic behavior induced by continuous deformation. These high-fidelity models are therefore ideal for designing controllers for complex dynamic tasks such as trajectory optimization and trajectory tracking. However, finite element models are also typically very high-dimensional, which makes real-time control challenging. In this work we propose an approach for finite element model-based control of soft robots that leverages model order reduction techniques to significantly increase computational efficiency. In particular, a constrained optimal control problem is formulated based on a nonlinear reduced order finite element model and is solved via sequential convex programming. This approach is demonstrated through simulation of a cable-driven soft robot for a constrained trajectory tracking task, where a 9768-dimensional finite element model is used for controller design.
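
The abstract does not say which model order reduction technique is used. The sketch below assumes proper orthogonal decomposition (POD) on state snapshots and, for simplicity, a linear model x' = A x + B u rather than the nonlinear finite element model of the paper. The function names `pod_basis` and `reduce_linear_model` are hypothetical.

```python
import numpy as np


def pod_basis(snapshots, r):
    """Return an orthonormal basis of rank r from a snapshot matrix.

    snapshots: (n_states, n_snapshots) array, one simulated state per column.
    The basis is formed by the r leading left singular vectors.
    """
    u, _, _ = np.linalg.svd(snapshots, full_matrices=False)
    return u[:, :r]


def reduce_linear_model(a, b, basis):
    """Galerkin-project x' = A x + B u onto the reduced coordinates z = V^T x."""
    return basis.T @ a @ basis, basis.T @ b
```

A controller can then be designed on the small matrices returned by `reduce_linear_model`, and the full state is approximated as `basis @ z`. How well this works depends on how much of the dynamics the chosen snapshots capture.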

Adaptive Workload Allocation for Multi-human Multi-robot Teams for Independent and Homogeneous Tasks

Jul 27, 2020

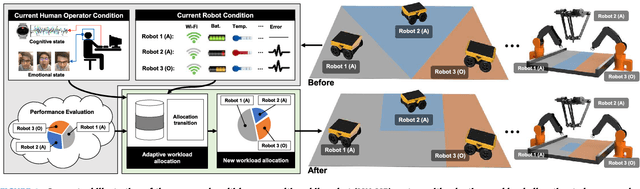

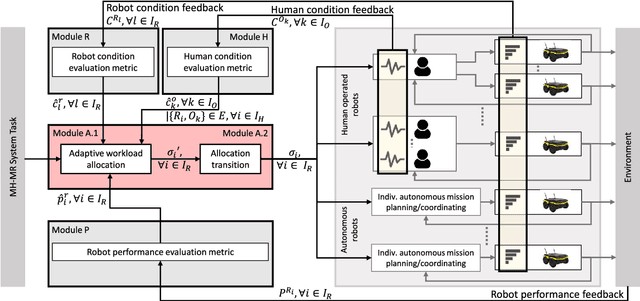

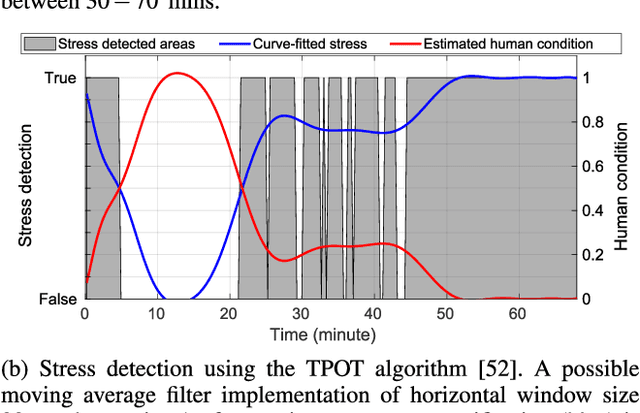

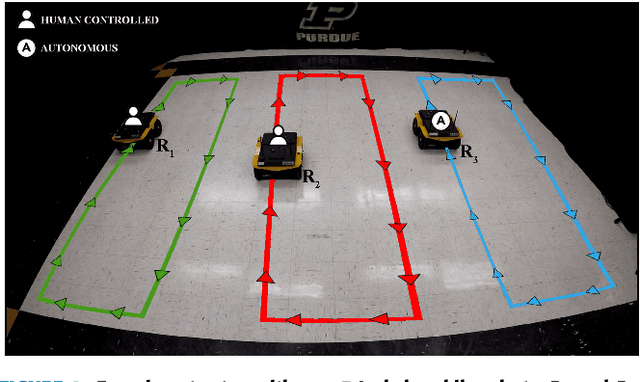

Multi-human multi-robot (MH-MR) systems can combine the advantages of robotic systems with those of having humans in the loop. Robotic systems contribute precision and the ability to perform repetitive tasks for long periods without tiring, while humans in the loop improve situational awareness and enhance decision-making. A system's ability to adapt the allocated workload to changing conditions and to the performance of each individual (human and robot) during the mission is vital to maintaining overall system performance. Previous work, including market-based and optimization approaches, has attempted to address the task/workload allocation problem with a focus on maximizing system output, but it disregards individual agent conditions, lacks real-time processing, and has mostly focused exclusively on multi-robot systems. Given the variety of possible team combinations (autonomous robots and human-operated robots, with any number of human operators operating any number of robots at a time) and the operational scale of MH-MR systems, developing a generalized framework for workload allocation has been particularly challenging. In this paper, we present such a framework for independent homogeneous missions, capable of adaptively allocating the system workload according to the health conditions and work performance of human-operated and autonomous robots in real time. The framework consists of removable modular function blocks, ensuring its applicability to different MH-MR scenarios. A new workload transition function block ensures a smooth transition so that workload changes do not adversely affect individual agents. The effectiveness and scalability of the system's workload adaptability are validated by experiments applying the proposed framework in an MH-MR patrolling scenario with changing human and robot conditions and failing robots.
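
The abstract gives no formulas for the allocation or transition blocks, so the following is only a toy sketch of the two ideas it names: allocating workload according to each agent's condition, and limiting how fast an agent's workload may change. The proportional rule, the per-step cap `max_step`, and both function names are assumptions, not the paper's framework.

```python
def allocate(total, scores):
    """Split `total` workload in proportion to per-agent scores.

    `scores` stands in for a combined health/performance measure
    (a hypothetical quantity for this sketch); a failed agent scores 0.
    """
    s = sum(scores)
    if s <= 0:
        raise ValueError("at least one agent must have a positive score")
    return [total * x / s for x in scores]


def smooth_transition(current, target, max_step):
    """Move each agent's workload toward its target by at most `max_step`."""
    return [c + max(-max_step, min(max_step, t - c))
            for c, t in zip(current, target)]
```

For example, `allocate(100, [1, 1, 2])` gives `[25.0, 25.0, 50.0]`, and calling `smooth_transition` repeatedly walks the current allocation toward a new target in bounded steps instead of jumping.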

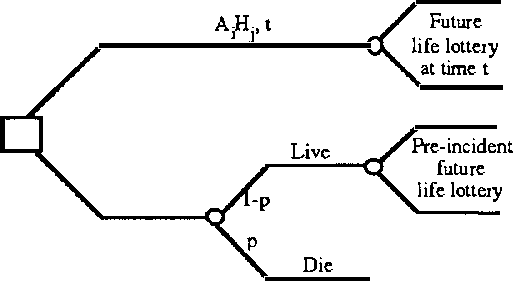

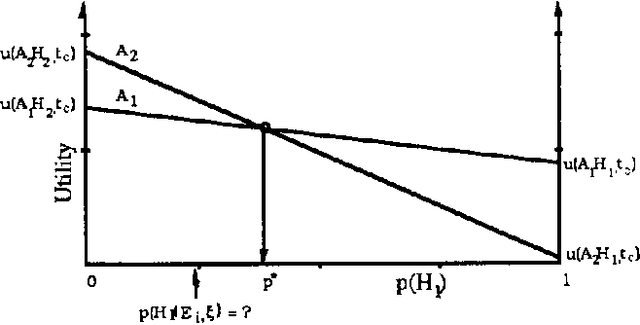

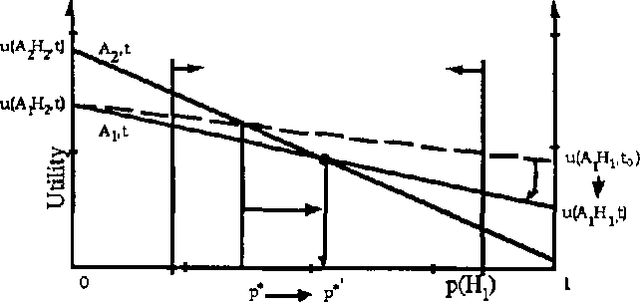

Time-Dependent Utility and Action Under Uncertainty

Mar 20, 2013

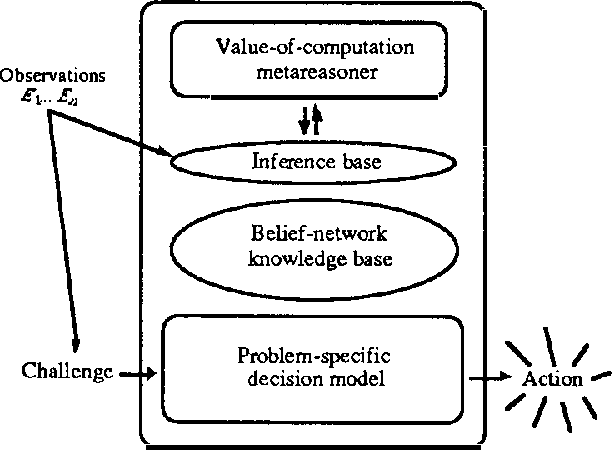

We discuss representing and reasoning with knowledge about the time-dependent utility of an agent's actions. Time-dependent utility plays a crucial role in the interaction between computation and action under bounded resources. We present a semantics for time-dependent utility and describe the use of time-dependent information in decision contexts. We illustrate our discussion with examples of time-pressured reasoning in Protos, a system constructed to explore the ideal control of inference by reasoners with limited abilities.

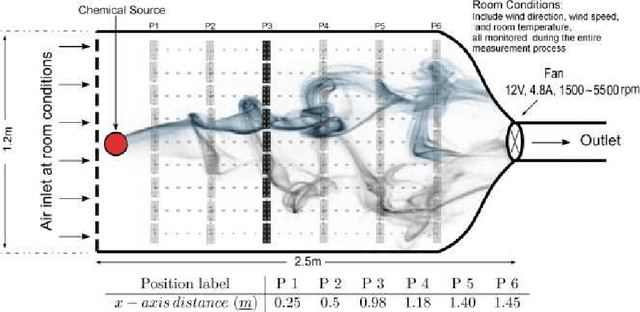

Machine olfaction using time scattering of sensor multiresolution graphs

Feb 13, 2016

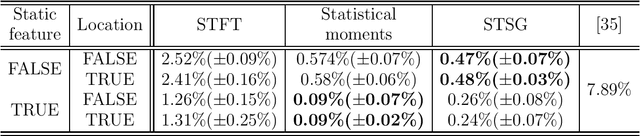

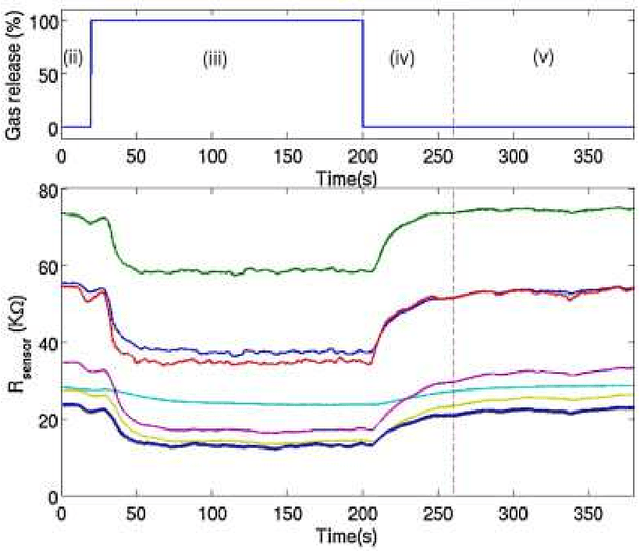

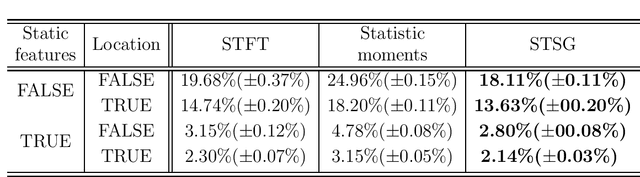

In this paper we construct a learning architecture for high dimensional time series sampled by sensor arrangements. Using a redundant wavelet decomposition on a graph constructed over the sensor locations, our algorithm is able to construct discriminative features that exploit the mutual information between the sensors. The algorithm then applies scattering networks to the time series graphs to create the feature space. We demonstrate our method on a machine olfaction problem, where one needs to classify the gas type and the location where it originates from data sampled by an array of sensors. Our experimental results clearly demonstrate that our method outperforms classical machine learning techniques used in previous studies.

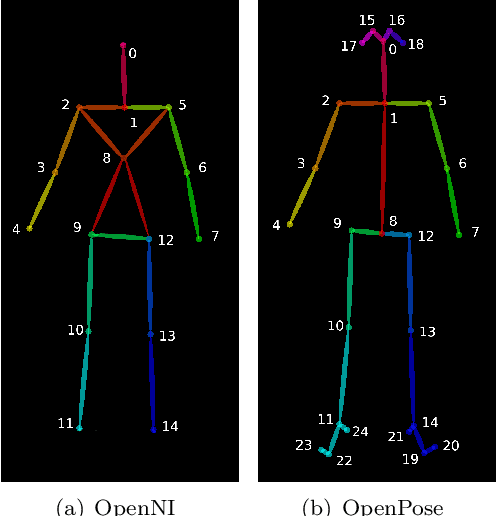

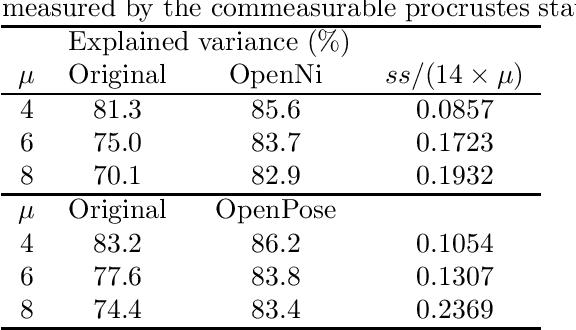

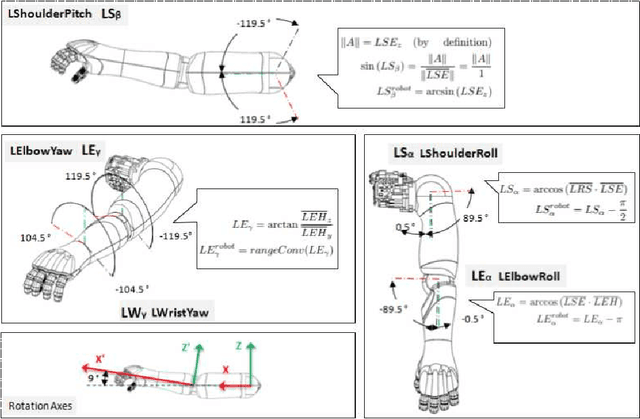

Quantitative analysis of robot gesticulation behavior

Oct 22, 2020

Social robot capabilities, such as talking gestures, are best produced using data-driven approaches to avoid being repetitive and to show trustworthiness. However, there is a lack of robust quantitative methods for comparing such approaches beyond visual evaluation. In this paper, a quantitative analysis is performed that compares two gesture generation approaches based on Generative Adversarial Networks. The aim is to measure characteristics such as fidelity to the original training data while also keeping track of the degree of originality of the produced gestures. Principal Coordinate Analysis and Procrustes statistics are computed, and a new Fréchet Gesture Distance is proposed by adapting the Fréchet Inception Distance to gestures. These three techniques are taken together to assess the fidelity/originality of the generated gestures.
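
For orientation, the Fréchet Inception Distance compares two sets of feature vectors by fitting a Gaussian to each and computing the Fréchet distance between them: the squared difference of the means plus Tr(Σa + Σb − 2(ΣaΣb)^(1/2)). The sketch below implements that general formula with NumPy. How the paper extracts features from gestures is not stated in the abstract, so the inputs here are simply assumed to be arrays of shape (n_samples, n_features), and the function name is hypothetical.

```python
import numpy as np


def frechet_distance(feats_a, feats_b):
    """Fréchet distance between Gaussians fitted to two feature sets."""
    mu_a, mu_b = feats_a.mean(axis=0), feats_b.mean(axis=0)
    cov_a = np.cov(feats_a, rowvar=False)
    cov_b = np.cov(feats_b, rowvar=False)
    # Tr((cov_a cov_b)^(1/2)) equals the sum of the square roots of the
    # eigenvalues of cov_a @ cov_b, which are real and non-negative for
    # covariance matrices; clip tiny negative values caused by rounding.
    eigvals = np.linalg.eigvals(cov_a @ cov_b)
    trace_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()
    diff = mu_a - mu_b
    return float(diff @ diff + np.trace(cov_a) + np.trace(cov_b)
                 - 2.0 * trace_sqrt)
```

The distance is zero when both sets have the same mean and covariance, and it grows with the squared shift between the means when the covariances match.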

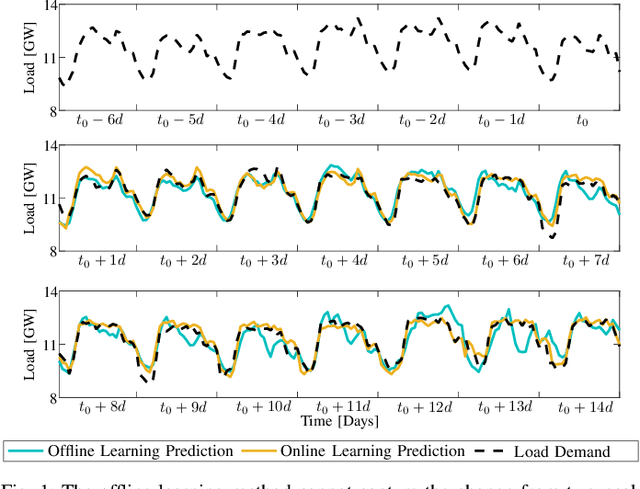

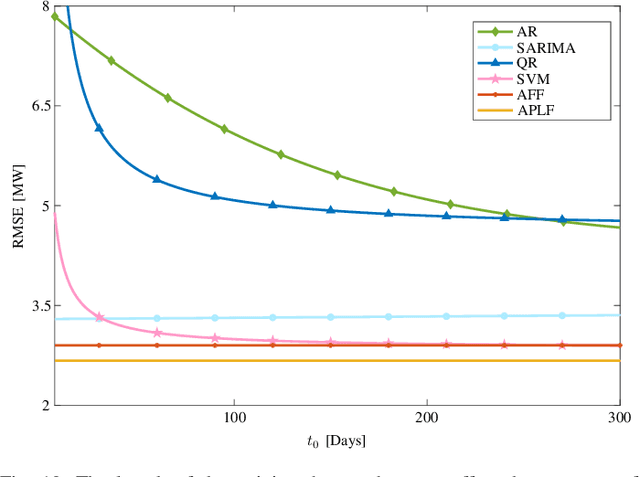

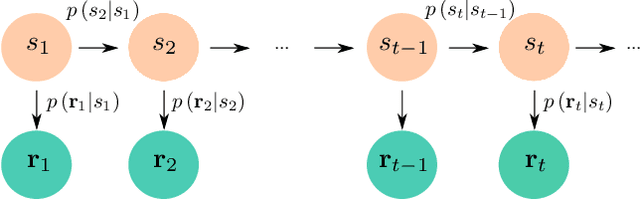

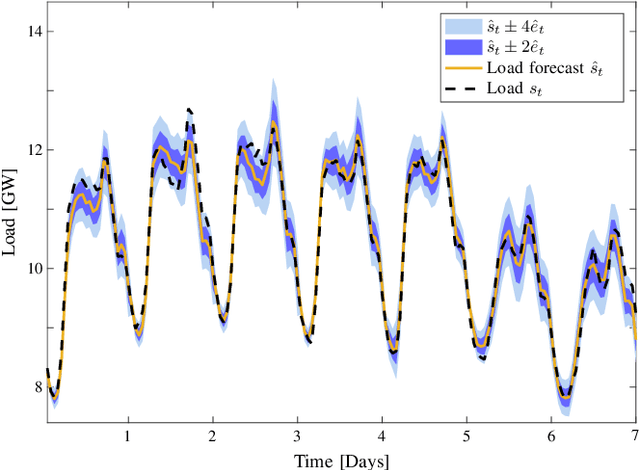

Probabilistic Load Forecasting Based on Adaptive Online Learning

Nov 30, 2020

Load forecasting is crucial for multiple energy management tasks such as scheduling generation capacity, planning supply and demand, and minimizing energy trade costs. Such relevance has increased even more in recent years due to the integration of renewable energies, electric cars, and microgrids. Conventional load forecasting techniques obtain single-value load forecasts by exploiting consumption patterns of past load demand. However, such techniques cannot assess intrinsic uncertainties in load demand, and cannot capture dynamic changes in consumption patterns. To address these problems, this paper presents a method for probabilistic load forecasting based on the adaptive online learning of hidden Markov models. We propose learning and forecasting techniques with theoretical guarantees, and experimentally assess their performance in multiple scenarios. In particular, we develop adaptive online learning techniques that update model parameters recursively, and sequential prediction techniques that obtain probabilistic forecasts using the most recent parameters. The performance of the method is evaluated using multiple datasets corresponding to regions that have different sizes and display assorted time-varying consumption patterns. The results show that the proposed method can significantly improve the performance of existing techniques for a wide range of scenarios.
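
To make the idea of recursive parameter updates and probabilistic forecasts concrete, here is a deliberately simplified sketch. It is not the paper's hidden Markov model: it tracks a single Gaussian whose mean and variance are updated one observation at a time with a forgetting factor, so older data gradually counts less. The class name, the `forgetting` parameter, and the Gaussian form are all assumptions of this illustration.

```python
class ForgettingGaussian:
    """Recursively estimated Gaussian with exponential forgetting."""

    def __init__(self, forgetting=0.98):
        self.lam = forgetting  # 1.0 means no forgetting
        self.weight = 0.0      # effective number of past observations
        self.mean = 0.0
        self.var = 0.0

    def update(self, y):
        """Fold one new load observation into the running estimates."""
        self.weight = self.lam * self.weight + 1.0
        gain = 1.0 / self.weight
        delta = y - self.mean
        self.mean += gain * delta
        self.var = (1.0 - gain) * (self.var + gain * delta ** 2)

    def forecast(self):
        """Return (mean, standard deviation) using the latest parameters."""
        return self.mean, self.var ** 0.5
```

Calling `update` after each new measurement and `forecast` before the next one gives a forecast with an uncertainty estimate rather than a single value. With `forgetting=1.0` the estimates reduce to the ordinary sample mean and population variance.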

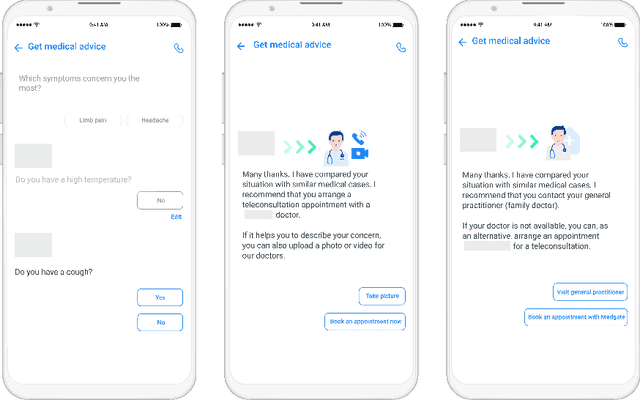

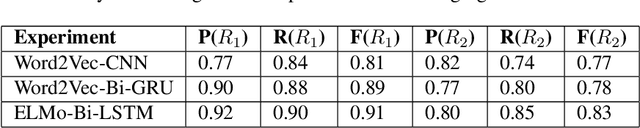

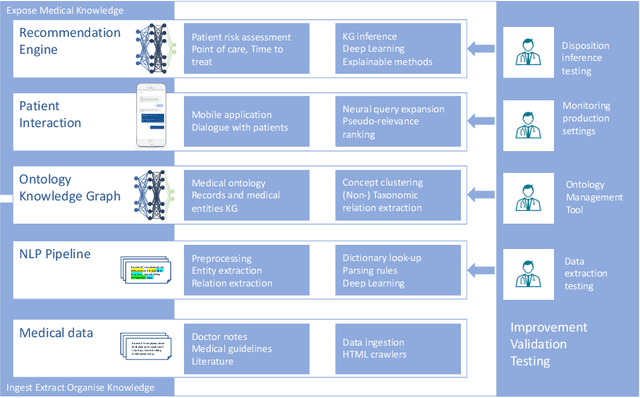

Artificial Intelligence Decision Support for Medical Triage

Nov 09, 2020

Applying state-of-the-art machine learning and natural language processing to approximately one million teleconsultation records, we developed a triage system, now certified and in use at the largest European telemedicine provider. The system evaluates care alternatives through interactions with patients via a mobile application. Reasoning on an initial set of provided symptoms, the triage application generates AI-powered, personalized questions to better characterize the problem and recommends the most appropriate point of care and time frame for a consultation. The underlying technology was developed to meet the needs for performance, transparency, user acceptance and ease of use, central aspects to the adoption of AI-based decision support systems. Providing such remote guidance at the beginning of the chain of care has significant potential for improving cost efficiency, patient experience and outcomes. Being remote, always available and highly scalable, this service is fundamental in high demand situations, such as the current COVID-19 outbreak.

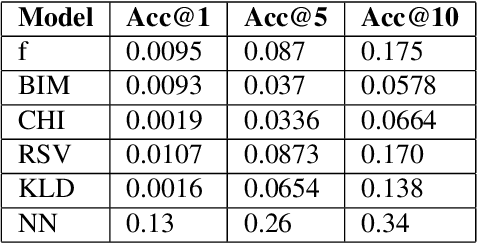

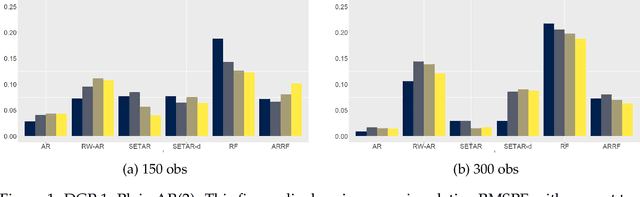

The Macroeconomy as a Random Forest

Jun 23, 2020

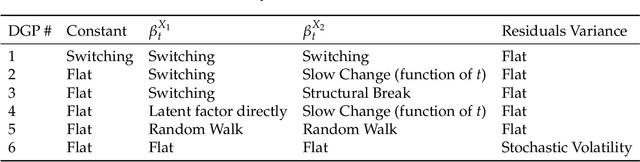

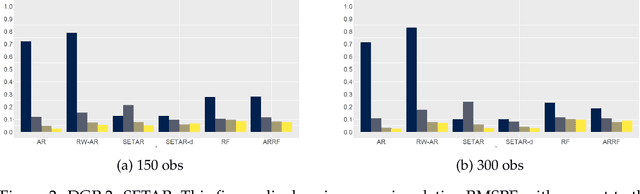

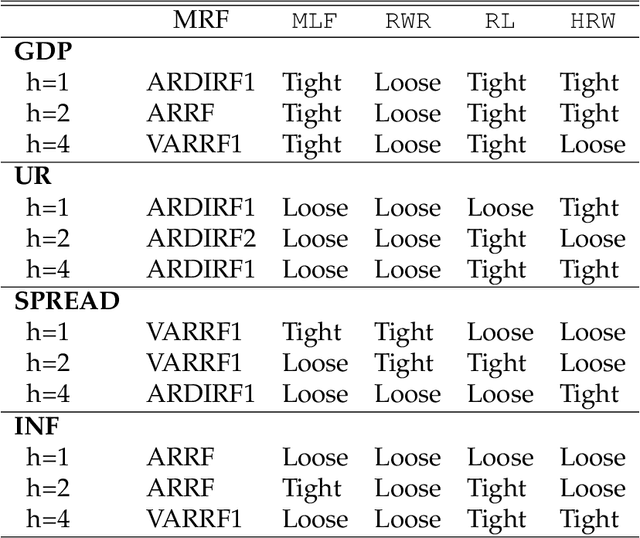

Over the last decades, an impressive number of non-linearities have been proposed to reconcile reduced-form macroeconomic models with the data. Many of them boil down to having linear regression coefficients evolve through time: threshold/switching/smooth-transition regression, structural breaks, and random walk time-varying parameters. While all of these schemes are reasonably plausible in isolation, I argue that they are much more in agreement with the data if they are combined. To this end, I propose Macroeconomic Random Forests, which adapts the canonical Machine Learning (ML) algorithm to the problem of flexibly modeling evolving parameters in a linear macro equation. The approach exhibits clear forecasting gains over a wide range of alternatives and successfully predicts the drastic 2008 rise in unemployment. The obtained generalized time-varying parameters (GTVPs) are shown to behave differently compared to random walk coefficients by adapting nicely to the problem at hand, whether it is regime-switching behavior or long-run structural change. By dividing the typical ML interpretation burden into looking at each TVP separately, I find that the resulting forecasts are, in fact, quite interpretable. An application to the US Phillips curve reveals it is probably not flattening the way you think.
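
One way to write the idea of evolving parameters in a linear macro equation is given below. The notation is an assumption of this note and is not taken from the abstract: y_t is the target, X_t the regressors of the linear equation, S_t a set of state variables, and F a function learned by the forest that maps the state to the coefficients.

```latex
y_t = X_t \beta_t + \varepsilon_t, \qquad \beta_t = \mathcal{F}(S_t)
```

Under this reading, threshold or switching regressions, structural breaks, and slowly drifting parameters correspond to different shapes of F, which is why a single flexible learner can combine them.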

NSL: Hybrid Interpretable Learning From Noisy Raw Data

Dec 09, 2020

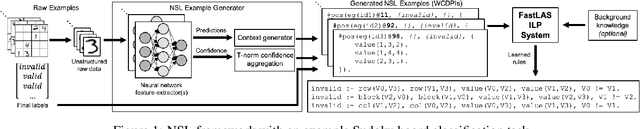

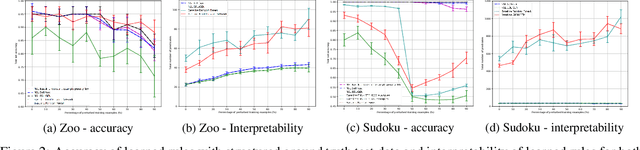

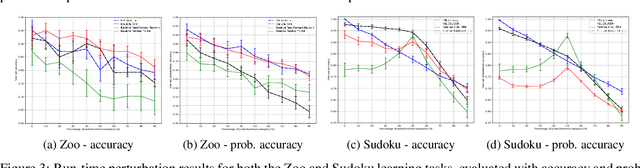

Inductive Logic Programming (ILP) systems learn generalised, interpretable rules in a data-efficient manner utilising existing background knowledge. However, current ILP systems require training examples to be specified in a structured logical format. Neural networks learn from unstructured data, although their learned models may be difficult to interpret and are vulnerable to data perturbations at run-time. This paper introduces a hybrid neural-symbolic learning framework, called NSL, that learns interpretable rules from labelled unstructured data. NSL combines pre-trained neural networks for feature extraction with FastLAS, a state-of-the-art ILP system for rule learning under the answer set semantics. Features extracted by the neural components define the structured context of labelled examples and the confidence of the neural predictions determines the level of noise of the examples. Using the scoring function of FastLAS, NSL searches for short, interpretable rules that generalise over such noisy examples. We evaluate our framework on propositional and first-order classification tasks using the MNIST dataset as raw data. Specifically, we demonstrate that NSL is able to learn robust rules from perturbed MNIST data and achieve comparable or superior accuracy when compared to neural network and random forest baselines whilst being more general and interpretable.
