"Time": models, code, and papers

Neural language models for text classification in evidence-based medicine

Dec 01, 2020

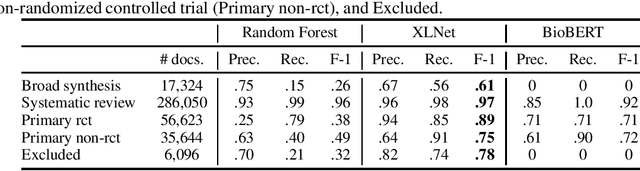

COVID-19 has posed a significant challenge to the whole of humanity, with a special burden on the medical community. Clinicians must stay continuously updated on symptoms, diagnoses, and the effectiveness of emergent treatments under a never-ending flood of scientific literature. In this context, the role of evidence-based medicine (EBM) in curating the most substantial evidence to support public health and clinical practice becomes essential, but it is being challenged as never before by the high volume of research articles published and pre-prints posted daily. Artificial intelligence can play a crucial role in this situation. In this article, we report the results of an applied research project to classify scientific articles to support Epistemonikos, one of the most active foundations worldwide conducting EBM. We test several methods, and the best one, based on the XLNet neural language model, improves the current approach by 93% on average F1-score, saving valuable time for physicians who volunteer to curate COVID-19 research articles manually.

A Meta-Learning Approach to the Optimal Power Flow Problem Under Topology Reconfigurations

Dec 21, 2020

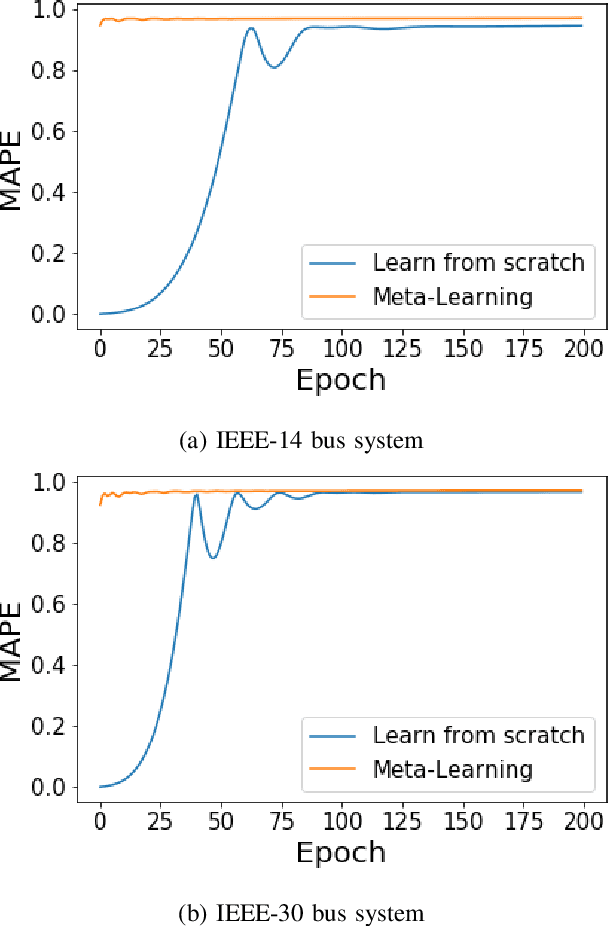

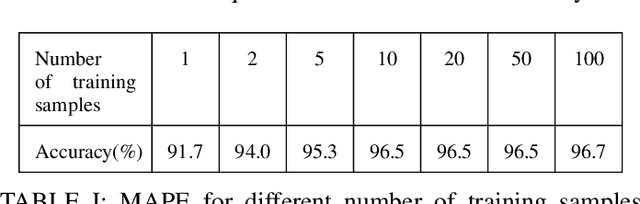

Recently, there has been a surge of interest in adopting deep neural networks (DNNs) for solving the optimal power flow (OPF) problem in power systems. Computing optimal generation dispatch decisions using a trained DNN takes significantly less time than using conventional optimization solvers. However, a major drawback of existing work is that the machine learning models are trained for a specific system topology. Hence, the DNN predictions are only useful as long as the system topology remains unchanged. Changes to the system topology (initiated by the system operator) would require retraining the DNN, which incurs significant training overhead and requires an extensive amount of training data (corresponding to the new system topology). To overcome this drawback, we propose a DNN-based OPF predictor that is trained using a meta-learning (MTL) approach. The key idea behind this approach is to find a common initialization vector that enables fast training for any system topology. The developed OPF predictor is validated through simulations using benchmark IEEE bus systems. The results show that the MTL approach achieves significant training speed-ups and requires only a few gradient steps with a few data samples to achieve high OPF prediction accuracy.

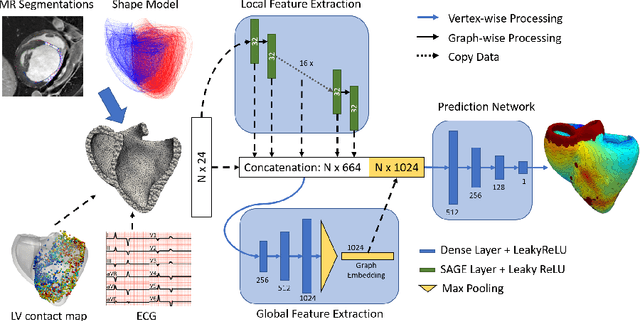

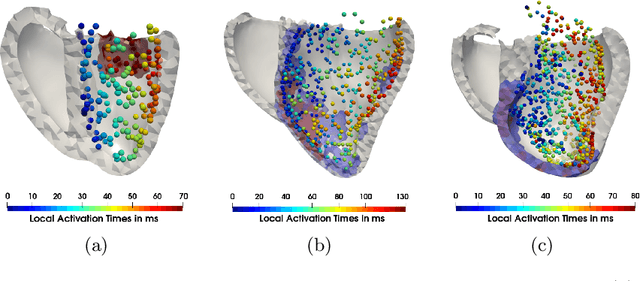

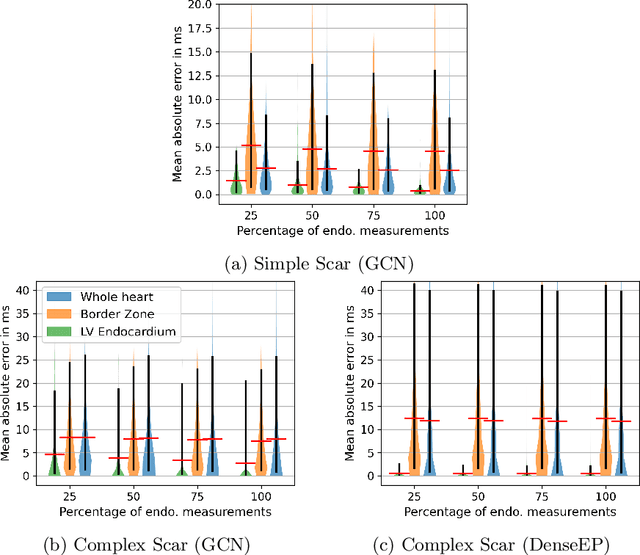

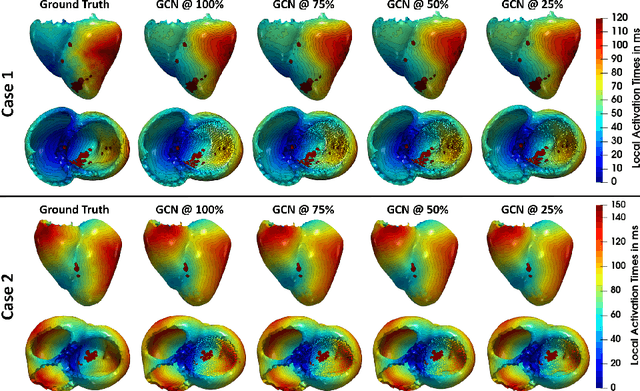

Graph convolutional regression of cardiac depolarization from sparse endocardial maps

Sep 28, 2020

Electroanatomic mapping, as routinely acquired in ablation therapy of ventricular tachycardia, is the gold standard method to identify the arrhythmogenic substrate. To reduce the acquisition time and still provide maps with high spatial resolution, we propose a novel deep learning method based on graph convolutional neural networks to estimate the depolarization time in the myocardium, given sparse catheter data on the left ventricular endocardium, ECG, and magnetic resonance images. The training set consists of data produced by a computational model of cardiac electrophysiology on a large cohort of synthetically generated geometries of ischemic hearts. The predicted depolarization pattern has good agreement with activation times computed by the cardiac electrophysiology model in a validation set of five swine heart geometries with complex scar and border zone morphologies. The mean absolute error is 8 ms on the entire myocardium when providing 50% of the endocardial ground truth in over 500 computed depolarization patterns. Furthermore, when considering a complete animal data set with high-density electroanatomic mapping data as reference, the neural network can accurately reproduce the endocardial depolarization pattern, even when a small percentage of measurements are provided as input features (mean absolute error of 7 ms with 50% of input samples). The results show that the proposed method, trained on synthetically generated data, may generalize to real data.

Segmentation and Analysis of a Sketched Truss Frame Using Morphological Image Processing Techniques

Sep 28, 2020

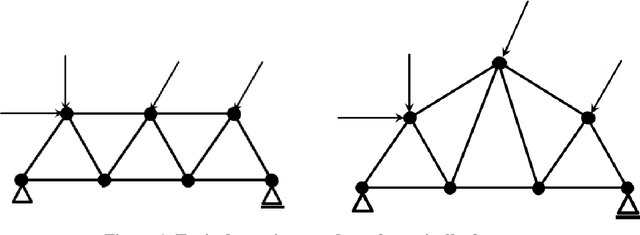

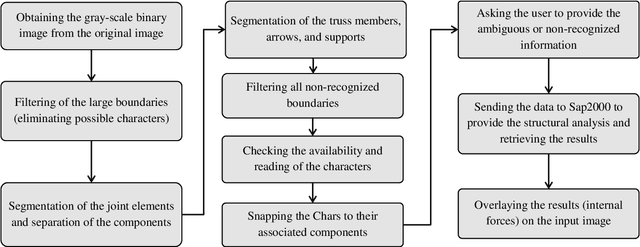

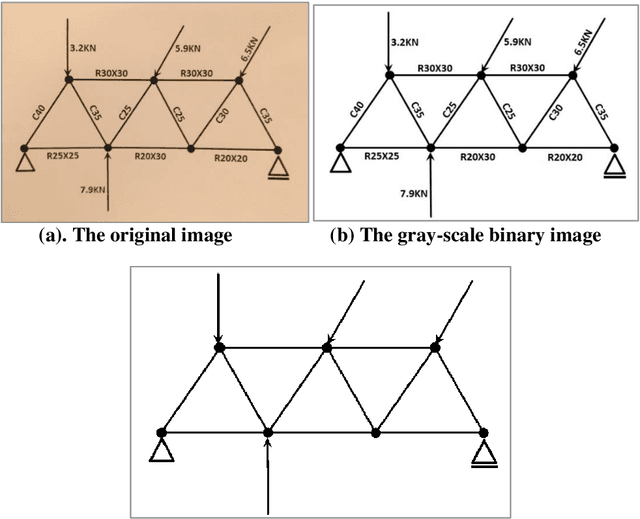

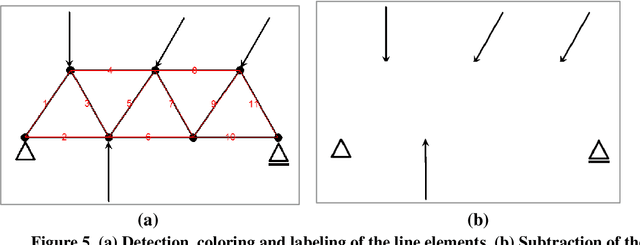

The development of computational tools to analyze and assess building capacities has had a major impact on civil engineering. Interaction with structural software packages is becoming easier, and modeling tools are becoming smarter by automating the user's role during their interaction with the software. One of the most difficult and time-consuming steps in structural modeling is defining the geometry of the structure to be analyzed. This paper is dedicated to the development of a methodology to automate the analysis of a hand-sketched or computer-generated truss frame drawn on a piece of paper. First, we focus on segmentation methodologies for hand-sketched truss components using morphological image processing techniques, and then we provide a real-time analysis of the truss. We visualize and overlay the results on the input image to facilitate public understanding of the truss geometry and internal forces. MATLAB is used as the programming language for image processing, and the truss is analyzed using the SAP2000 API, integrated with MATLAB, to provide a convenient structural analysis. This paper highlights the potential of automating structural analysis using image processing to quickly assess the efficiency of structural systems. Further development of this framework is likely to revolutionize the way that structures are modeled and analyzed.

Minimal Solutions for Panoramic Stitching Given Gravity Prior

Dec 01, 2020

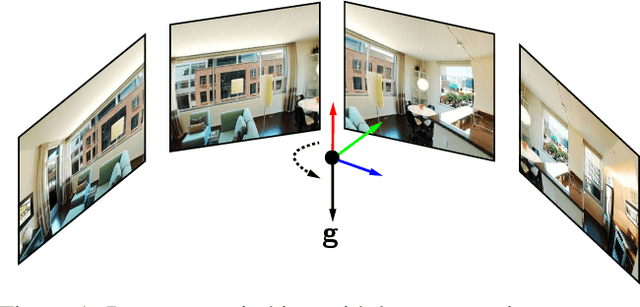

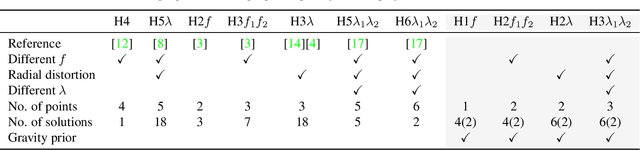

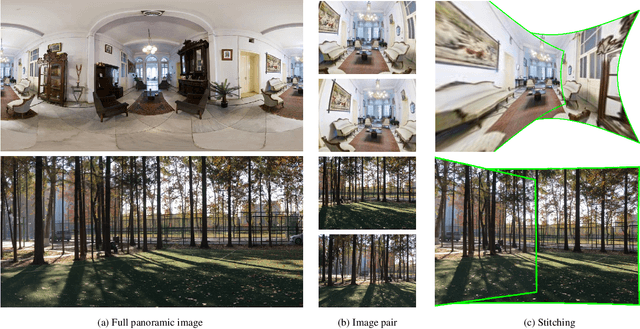

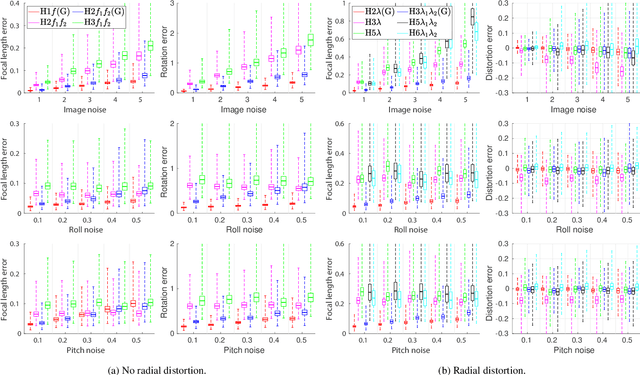

When capturing panoramas, people tend to align their cameras with the vertical axis, i.e., the direction of gravity. Moreover, modern devices, such as smartphones and tablets, are equipped with an IMU (Inertial Measurement Unit) that can measure the gravity vector accurately. Using this prior, the y-axes of the cameras can be aligned or assumed to be already aligned, reducing their relative orientation to 1-DOF (degree of freedom). Exploiting this assumption, we propose new minimal solutions to panoramic image stitching of images taken by cameras with coinciding optical centers, i.e., undergoing pure rotation. We consider four practical camera configurations, assuming unknown fixed or varying focal length with or without radial distortion. The solvers are tested both on synthetic scenes and on more than 500k real image pairs from the Sun360 dataset and from scenes captured by us using two smartphones equipped with IMUs. It is shown that they outperform the state of the art in terms of both accuracy and processing time.
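To make the 1-DOF claim concrete, here is a minimal sketch of how a pixel maps between two gravity-aligned views under pure rotation. It is not the paper's solver: the function names `rotation_about_y` and `map_pixel` are hypothetical, and the sketch assumes a known shared focal length `f`, a principal point at the image origin, and no radial distortion, whereas the paper treats the focal length (and optionally distortion) as unknown.

```python
import math

def rotation_about_y(theta):
    """Rotation by angle theta (radians) about the y-axis, the assumed gravity direction."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, 0.0, s],
            [0.0, 1.0, 0.0],
            [-s, 0.0, c]]

def map_pixel(u, v, theta, f):
    """Map pixel (u, v) through H = K R K^-1 with K = diag(f, f, 1) (assumed calibration)."""
    ray = (u / f, v / f, 1.0)                      # back-project: K^-1 [u, v, 1]^T
    R = rotation_about_y(theta)
    x, y, z = (sum(R[i][j] * ray[j] for j in range(3)) for i in range(3))
    return (f * x / z, f * y / z)                  # re-project with K and dehomogenize

# The only rotational unknown is theta; the y-axis is left fixed by construction.
u2, v2 = map_pixel(0.0, 0.0, 0.1, 500.0)
```

With the y-axes aligned, the whole relative orientation collapses to the single angle `theta`, which is why fewer point correspondences suffice than for a general 3-DOF rotation.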

Truly shift-invariant convolutional neural networks

Dec 01, 2020

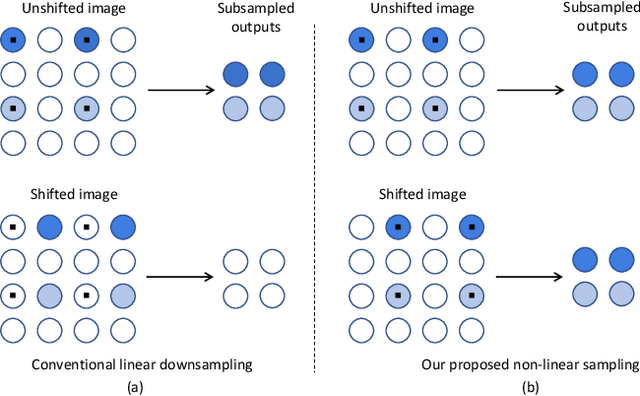

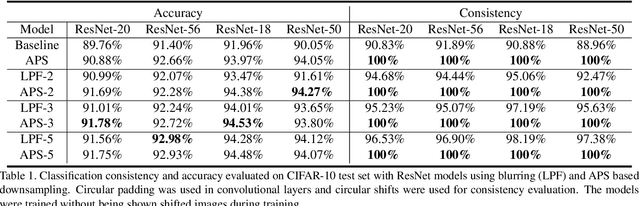

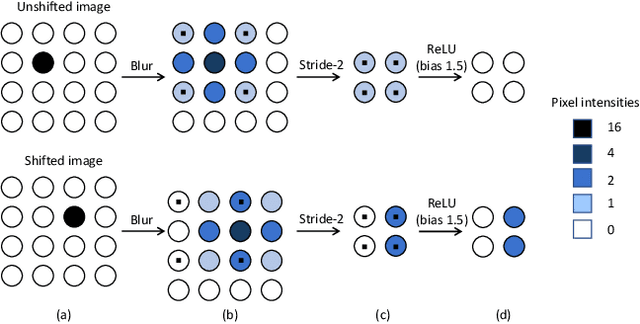

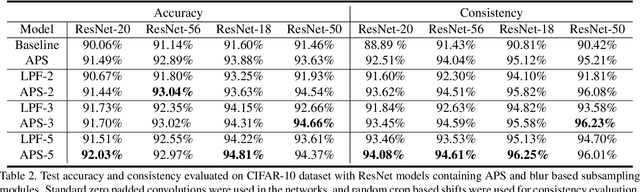

Thanks to the use of convolution and pooling layers, convolutional neural networks were for a long time thought to be shift-invariant. However, recent works have shown that the output of a CNN can change significantly with small shifts in input: a problem caused by the presence of downsampling (stride) layers. The existing solutions rely either on data augmentation or on anti-aliasing, both of which have limitations and neither of which enables perfect shift invariance. Additionally, the gains obtained from these methods do not extend to image patterns not seen during training. To address these challenges, we propose adaptive polyphase sampling (APS), a simple sub-sampling scheme that allows convolutional neural networks to achieve 100% consistency in classification performance under shifts, without any loss in accuracy. With APS the networks exhibit perfect consistency to shifts even before training, making it the first approach that makes convolutional neural networks truly shift invariant.
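The abstract does not spell out how APS picks its samples, so the following is only a toy 1-D sketch under a stated assumption: the sub-sampler keeps whichever stride-2 polyphase component has the larger energy, rather than always keeping the even-indexed one. The names `aps_downsample` and `circular_shift` are hypothetical, and circular shifts on an even-length signal are assumed.

```python
def aps_downsample(signal):
    """Stride-2 subsampling that keeps the polyphase component with the larger energy.

    Assumption: energy (squared l2 norm) is the selection criterion; even-length input.
    """
    even = signal[0::2]
    odd = signal[1::2]
    energy_even = sum(v * v for v in even)
    energy_odd = sum(v * v for v in odd)
    return even if energy_even >= energy_odd else odd

def circular_shift(signal, k):
    """Shift the signal to the right by k positions with wrap-around."""
    k %= len(signal)
    return signal[-k:] + signal[:-k] if k else list(signal)

x = [0.1, 2.0, -0.3, 1.5, 0.2, -1.0, 0.4, 0.7]
reference = sorted(aps_downsample(x))
# Every shifted copy yields the same set of retained samples (up to a circular shift).
consistent = all(sorted(aps_downsample(circular_shift(x, k))) == reference
                 for k in range(len(x)))
```

In contrast, plain `x[0::2]` retains a different set of samples whenever the input is shifted by an odd amount, which is the inconsistency the abstract attributes to stride layers.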

QUBO Formulations for Training Machine Learning Models

Aug 05, 2020

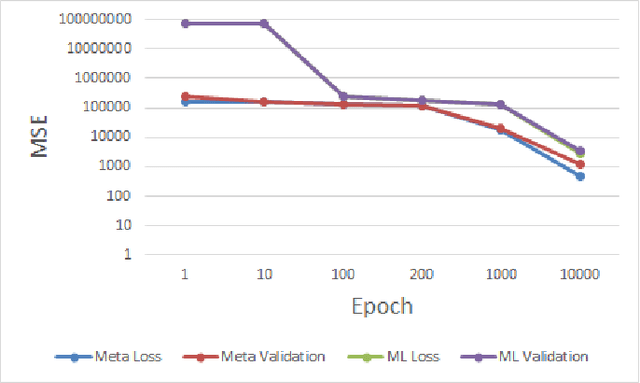

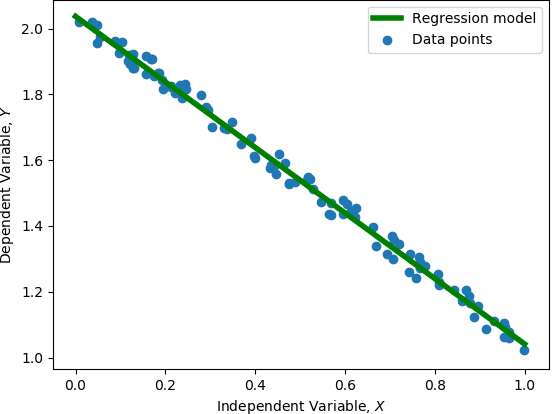

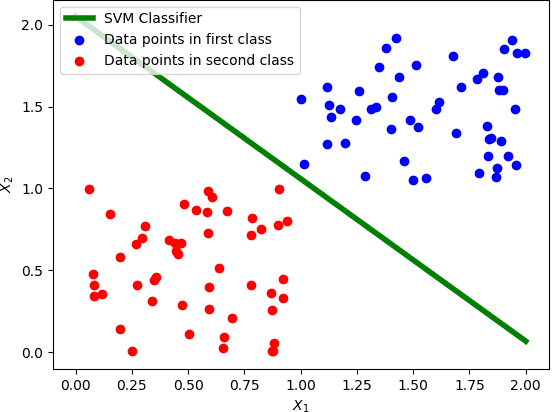

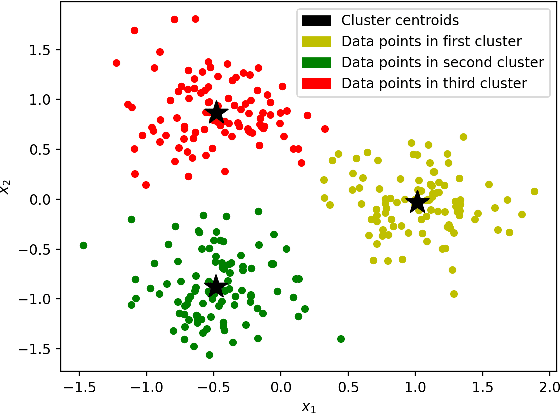

Training machine learning models on classical computers is usually a time- and compute-intensive process. With Moore's law coming to an end and ever-increasing demand for large-scale data analysis using machine learning, we must leverage non-conventional computing paradigms like quantum computing to train machine learning models efficiently. Adiabatic quantum computers like the D-Wave 2000Q can approximately solve NP-hard optimization problems, such as quadratic unconstrained binary optimization (QUBO), faster than classical computers. Since many machine learning problems are also NP-hard, we believe adiabatic quantum computers might be instrumental in training machine learning models efficiently in the post-Moore's-law era. In order to solve a problem on an adiabatic quantum computer, it must be formulated as a QUBO problem, which is a challenging task in itself. In this paper, we formulate the training problems of three machine learning models, namely linear regression, support vector machine (SVM) and equal-sized k-means clustering, as QUBO problems so that they can be trained on adiabatic quantum computers efficiently. We also analyze the time and space complexities of our formulations and compare them to the state-of-the-art classical algorithms for training these machine learning models. We show that the time and space complexities of our formulations are better than (in the case of SVM and equal-sized k-means clustering) or equivalent to (in the case of linear regression) those of their classical counterparts.
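As a rough illustration of what "formulating training as a QUBO" can look like, the sketch below encodes a single regression weight as a sum of fixed precision values gated by binary variables, expands the squared error, and folds the linear term into the diagonal (valid because z*z = z for binary z). The encoding, the helper names `regression_qubo` and `solve_qubo_brute_force`, and the toy data are assumptions for illustration, not the paper's exact formulation; the brute-force search stands in for an annealer.

```python
from itertools import product

def regression_qubo(xs, ys, precision):
    """Build Q so that z^T Q z equals sum_i (w*x_i - y_i)^2 minus a constant,
    where w = sum_k precision[k] * z[k] and each z[k] is 0 or 1."""
    sxx = sum(x * x for x in xs)
    sxy = sum(x * y for x, y in zip(xs, ys))
    n = len(precision)
    q = [[precision[k] * precision[l] * sxx for l in range(n)] for k in range(n)]
    for k in range(n):
        q[k][k] -= 2.0 * precision[k] * sxy   # linear term folded into the diagonal
    return q

def solve_qubo_brute_force(q):
    """Exhaustively minimize z^T Q z over binary vectors (only viable for tiny n)."""
    n = len(q)
    def energy(z):
        return sum(q[k][l] * z[k] * z[l] for k in range(n) for l in range(n))
    return min(product((0, 1), repeat=n), key=energy)

xs, ys = [1.0, 2.0, 3.0], [1.5, 3.0, 4.5]      # toy data lying on y = 1.5 * x
precision = [1.0, 0.5, 0.25]                   # assumed binary encoding of the weight
best_z = solve_qubo_brute_force(regression_qubo(xs, ys, precision))
weight = sum(p * z for p, z in zip(precision, best_z))
```

The achievable weights are limited to sums of the chosen precision values, so the encoding trades resolution against the number of binary variables.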

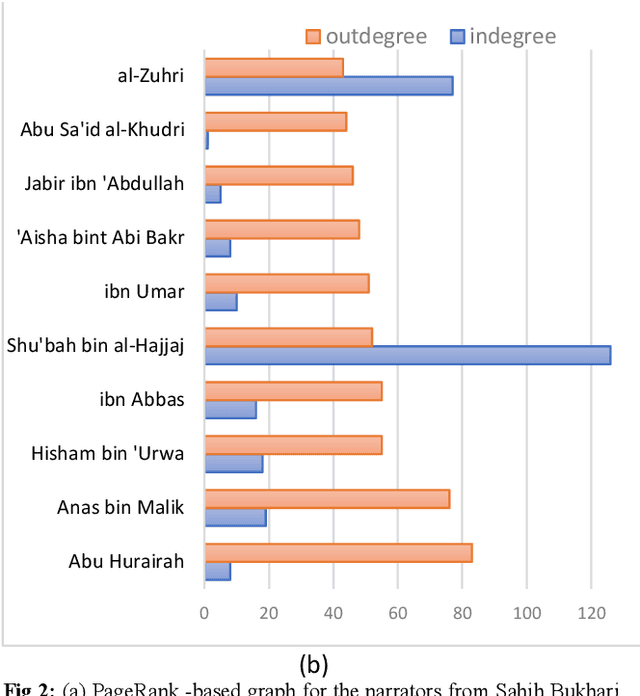

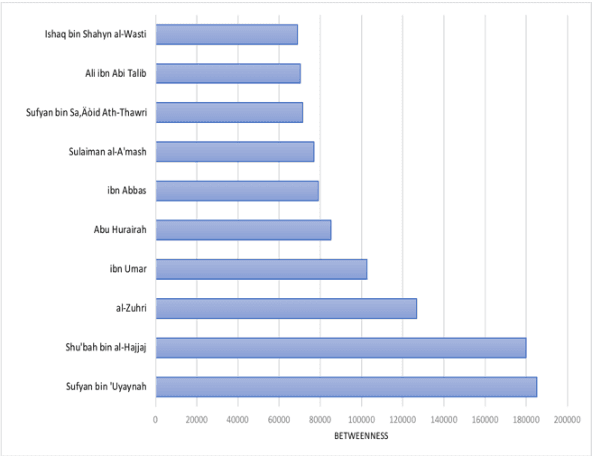

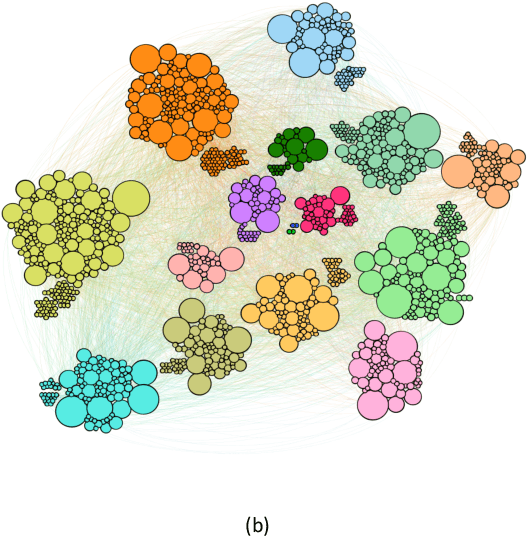

Social Network Analysis of Hadith Narrators from Sahih Bukhari

Feb 03, 2021

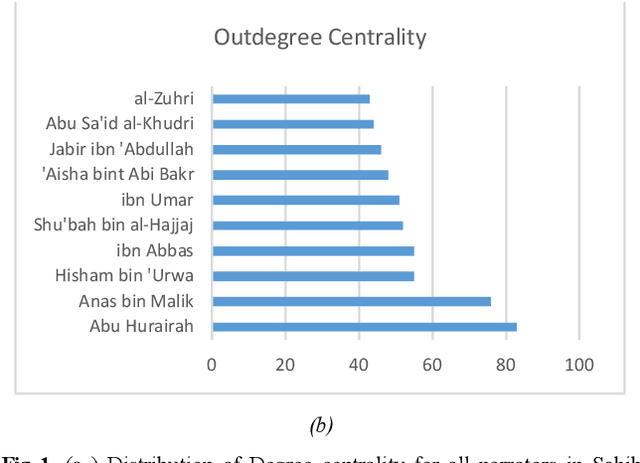

The ahadith, prophetic traditions for Muslims around the world, are narrations originating from the sayings and the deeds of Prophet Muhammad (pbuh). They are considered one of the fundamental sources of Islamic legislation along with the Quran. The list of persons involved in the narration of each hadith is carefully scrutinized by scholars studying the hadith, with respect to the narrators' reputation and the authenticity of the hadith. This is due to its legislative importance in Islamic principles. Many narrators contributed to the responsibility of preserving prophetic narrations over the centuries. To date, however, no systematic and comprehensive study based on social networks has been undertaken to understand the contribution of early hadith narrators and the propagation of hadith across generations. In this study, we represented the chains of narrators of the hadith collection from Sahih Bukhari as a social graph. Based on social network analysis (SNA) of this graph, we found that the network of narrators is a scale-free network. We identified a list of influential narrators from the companions, as well as narrators from the second and third generations, who contributed significantly to the propagation of the hadith collected in Sahih Bukhari. We discovered sixteen communities among the narrators of Sahih Bukhari. In each of these communities, there are other narrators who contributed significantly to the propagation of prophetic narrations. We also found that most narrators were centered in Makkah and Madinah in the era of the companions, and that the center of hadith narrators then gradually shifted towards Kufa, Baghdad and Central Asia over time. To the best of our knowledge, this is the first comprehensive and systematic study based on SNA that represents the narrators as a social graph to analyze their contribution to the preservation and propagation of hadith.
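A minimal sketch of the graph construction described above, under assumptions: each chain is taken as an ordered list from earliest to latest narrator, an edge points from transmitter to receiver, and out-degree is used as a simple stand-in for influence (the abstract does not say which measures were used). The labels `N1` to `N5` are hypothetical placeholders, not real narrators, and `build_graph` and `out_degree` are illustrative names.

```python
from collections import defaultdict

def build_graph(chains):
    """Turn narration chains into a directed graph: transmitter -> set of receivers."""
    edges = defaultdict(set)
    for chain in chains:
        for src, dst in zip(chain, chain[1:]):
            edges[src].add(dst)
    return edges

def out_degree(edges):
    """Number of distinct receivers per narrator (a simple influence proxy)."""
    nodes = set(edges) | {d for receivers in edges.values() for d in receivers}
    return {n: len(edges.get(n, ())) for n in nodes}

# Hypothetical toy chains; real data would come from the hadith collection itself.
chains = [["N1", "N2", "N4"],
          ["N1", "N3", "N4"],
          ["N1", "N2", "N5"]]
degrees = out_degree(build_graph(chains))
```

On a real corpus, the distribution of these degrees is what one would inspect for the heavy tail associated with a scale-free network.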

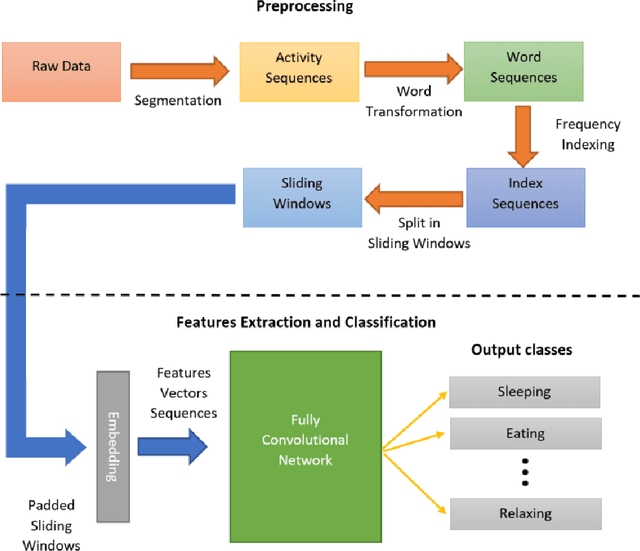

Fully Convolutional Network Bootstrapped by Word Encoding and Embedding for Activity Recognition in Smart Homes

Dec 01, 2020

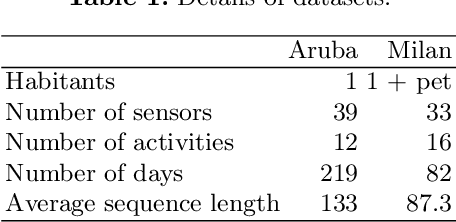

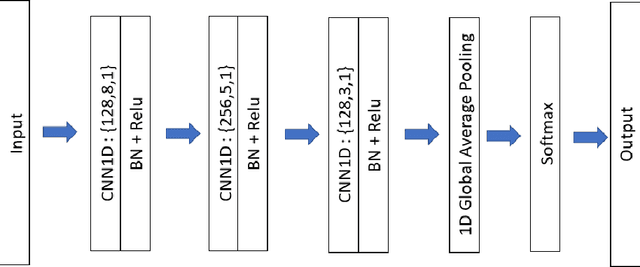

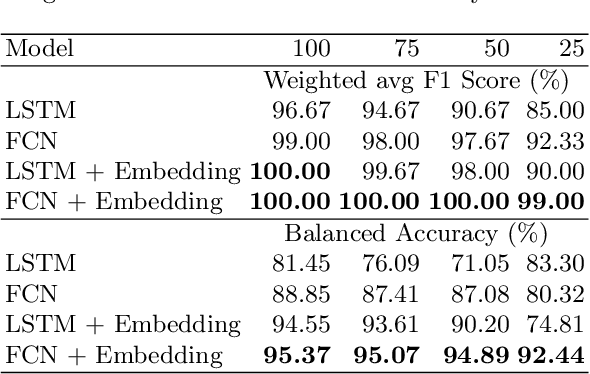

Activity recognition in smart homes is essential when we wish to propose automatic services for the inhabitants. However, it poses challenges in terms of variability in the environment, the sensorimotor system, and user habits. Therefore, end-to-end systems fail to automatically extract key features without extensive pre-processing. We propose to tackle feature extraction for activity recognition in smart homes by merging methods from the Natural Language Processing (NLP) and Time Series Classification (TSC) domains. We evaluate the performance of our method on two datasets from the Center for Advanced Studies in Adaptive Systems (CASAS). Moreover, we analyze the contribution of the NLP bag-of-words encoding with embedding, as well as the ability of the FCN algorithm to automatically extract features and classify. The method we propose shows good performance in offline activity classification. Our analysis also shows that FCN is a suitable algorithm for smart home activity recognition and highlights the advantages of automatic feature extraction.

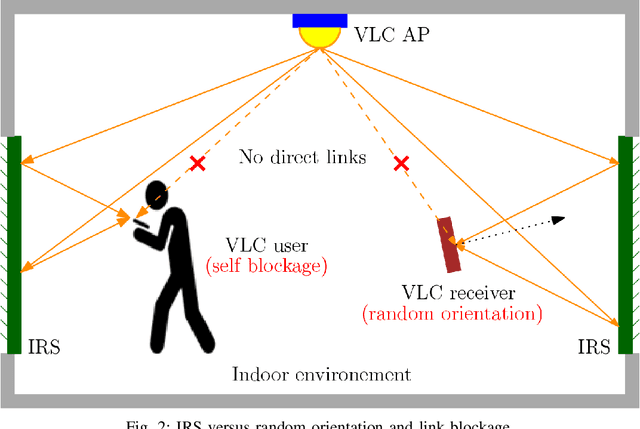

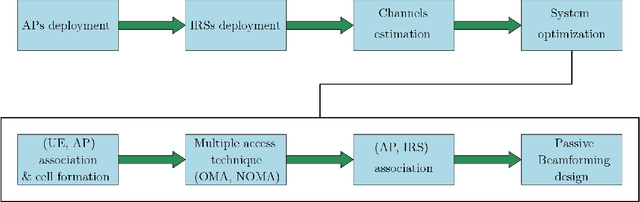

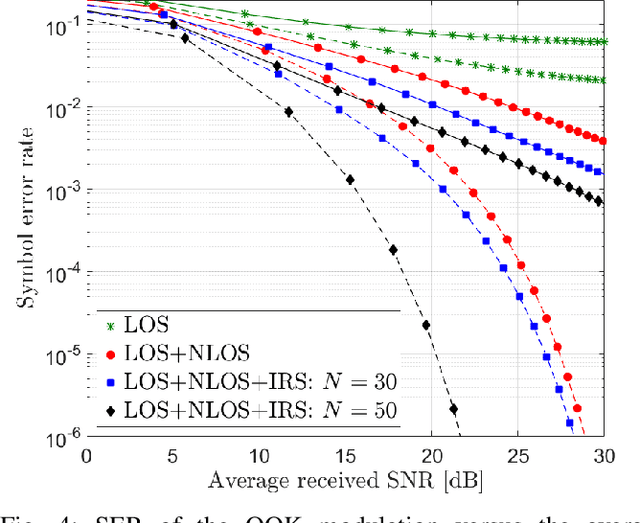

Integration of IRS in Indoor VLC Systems: Challenges, Potential and Promising Solutions

Jan 15, 2021

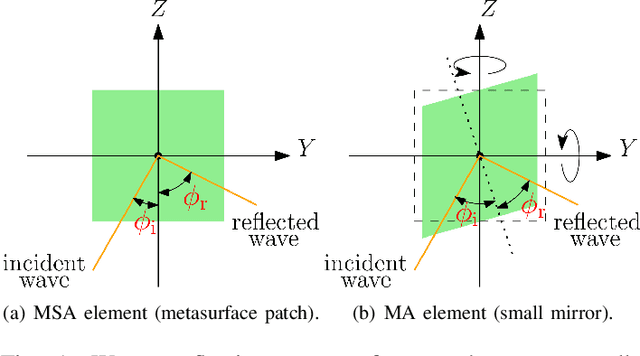

Visible light communication (VLC) is an optical wireless communication technology that is considered a promising solution for high-speed indoor connectivity. Unlike in conventional radio-frequency wireless systems, the VLC channel is not isotropic, meaning that the device orientation affects the channel gain significantly. In addition, due to the use of optical frequency bands, obstacles (e.g., walls, human bodies, furniture) may easily block the VLC links. One solution to overcome these issues is the integration of the intelligent reflective surface (IRS), a new and revolutionary technology that has the potential to significantly improve the performance of wireless networks. An IRS is capable of smartly reconfiguring the wireless propagation environment through a large number of low-cost passive reflecting elements integrated on a planar surface. In this paper, a framework for integrating IRS in indoor VLC systems is presented. We give an overview of IRS, including its advantages, different types and main applications in VLC systems, where we demonstrate the potential of IRS in overcoming the effects of random device orientation and link blockages. We discuss key factors pertaining to the design and integration of IRS in VLC systems, namely, the deployment of IRSs, the channel state information acquisition, the optimization of the IRS configuration and the real-time IRS control. We also lay out a number of promising research directions that center around the integration of IRS in indoor VLC systems.
