"Time": models, code, and papers

WaveFuse: A Unified Deep Framework for Image Fusion with Wavelet Transform

Jul 28, 2020

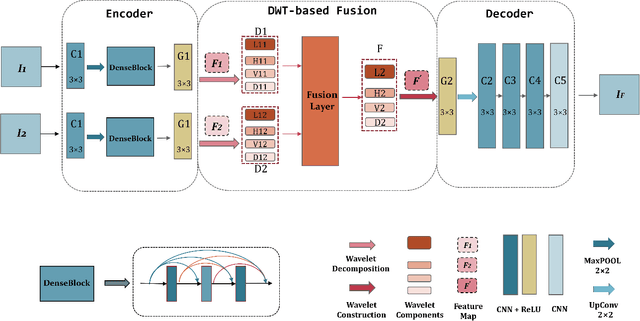

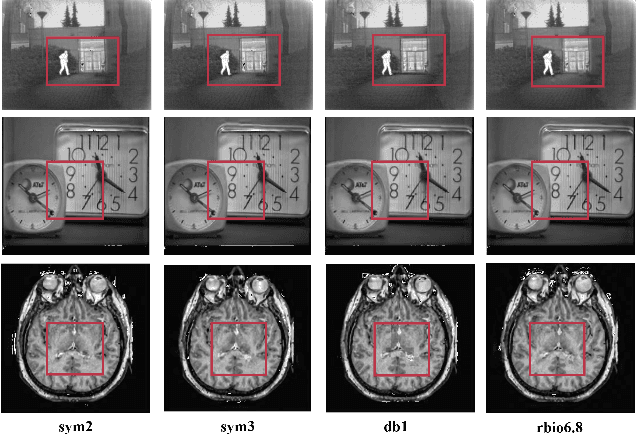

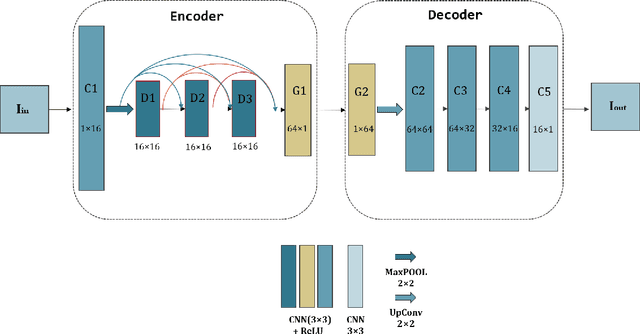

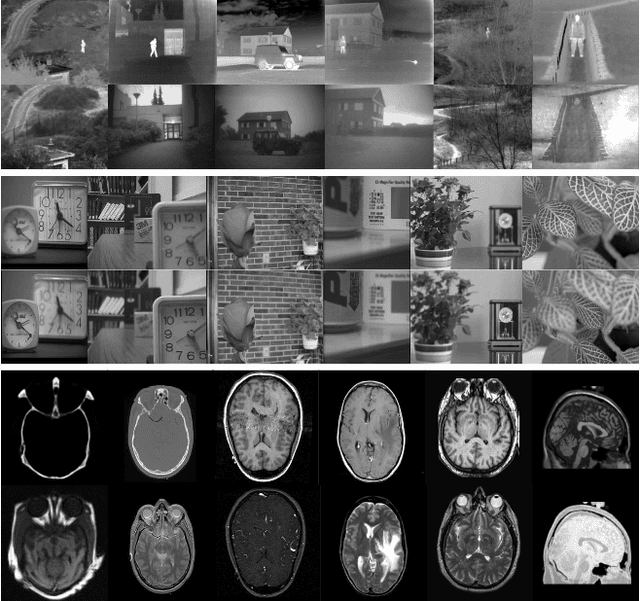

We propose an unsupervised image fusion architecture for multiple application scenarios based on combining deep learning with a multi-scale discrete wavelet transform based on regional energy. To the best of our knowledge, this is the first time this conventional image fusion method has been combined with deep learning. In the proposed method, the multi-scale discrete wavelet transform allows the useful information in the feature maps to be fully exploited. Compared with other state-of-the-art fusion methods, the proposed algorithm exhibits better fusion performance in both subjective and objective evaluations. Moreover, fusion performance comparable to that obtained by training on the COCO dataset can be achieved by training on a much smaller dataset of only a few hundred images chosen randomly from COCO. Hence, training time is shortened substantially, improving both the model's practicality and its training efficiency.
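The abstract does not give the fusion rule itself, so the following is only a minimal sketch of a regional-energy wavelet fusion step under stated assumptions: a single-level 2-D Haar transform applied directly to two grayscale arrays (rather than to learned feature maps), averaging of the approximation band, and a rule that keeps, at each position, the detail coefficient with the larger energy in a 3x3 neighborhood. All function names are hypothetical.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def haar_dwt2(x: Matrix) -> Tuple[Matrix, Matrix, Matrix, Matrix]:
    """One level of the orthonormal 2-D Haar DWT; x must have even height and width."""
    h, w = len(x), len(x[0])
    bands = [[[0.0] * (w // 2) for _ in range(h // 2)] for _ in range(4)]
    ll, lh, hl, hh = bands
    for i in range(0, h, 2):
        for j in range(0, w, 2):
            a, b = x[i][j], x[i][j + 1]
            c, d = x[i + 1][j], x[i + 1][j + 1]
            ll[i // 2][j // 2] = (a + b + c + d) / 2  # approximation
            lh[i // 2][j // 2] = (a - b + c - d) / 2  # differences along each row
            hl[i // 2][j // 2] = (a + b - c - d) / 2  # differences along each column
            hh[i // 2][j // 2] = (a - b - c + d) / 2  # diagonal detail
    return ll, lh, hl, hh


def haar_idwt2(ll: Matrix, lh: Matrix, hl: Matrix, hh: Matrix) -> Matrix:
    """Exact inverse of haar_dwt2."""
    h, w = len(ll), len(ll[0])
    x = [[0.0] * (2 * w) for _ in range(2 * h)]
    for i in range(h):
        for j in range(w):
            s, p, q, r = ll[i][j], lh[i][j], hl[i][j], hh[i][j]
            x[2 * i][2 * j] = (s + p + q + r) / 2
            x[2 * i][2 * j + 1] = (s - p + q - r) / 2
            x[2 * i + 1][2 * j] = (s + p - q - r) / 2
            x[2 * i + 1][2 * j + 1] = (s - p - q + r) / 2
    return x


def regional_energy(band: Matrix, i: int, j: int, radius: int = 1) -> float:
    """Sum of squared coefficients in a (2*radius+1)^2 window, clipped at the borders."""
    h, w = len(band), len(band[0])
    return sum(
        band[m][n] ** 2
        for m in range(max(0, i - radius), min(h, i + radius + 1))
        for n in range(max(0, j - radius), min(w, j + radius + 1))
    )


def fuse(img_a: Matrix, img_b: Matrix) -> Matrix:
    """Average the approximation band; keep the detail coefficient with larger regional energy."""
    bands_a, bands_b = haar_dwt2(img_a), haar_dwt2(img_b)
    h, w = len(bands_a[0]), len(bands_a[0][0])
    fused_ll = [[(bands_a[0][i][j] + bands_b[0][i][j]) / 2 for j in range(w)] for i in range(h)]
    fused_details = []
    for band_a, band_b in zip(bands_a[1:], bands_b[1:]):
        fused_details.append([
            [band_a[i][j] if regional_energy(band_a, i, j) >= regional_energy(band_b, i, j)
             else band_b[i][j] for j in range(w)]
            for i in range(h)
        ])
    return haar_idwt2(fused_ll, *fused_details)
```

Because the transform is orthonormal, `haar_idwt2(*haar_dwt2(x))` reproduces `x` up to rounding, so any difference in the fused output comes only from the fusion rule.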

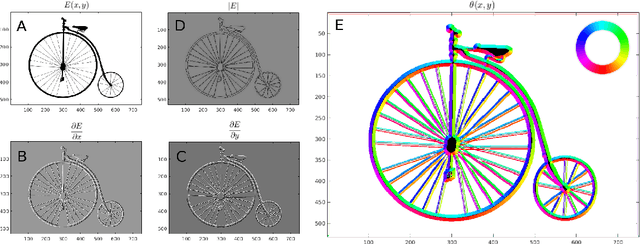

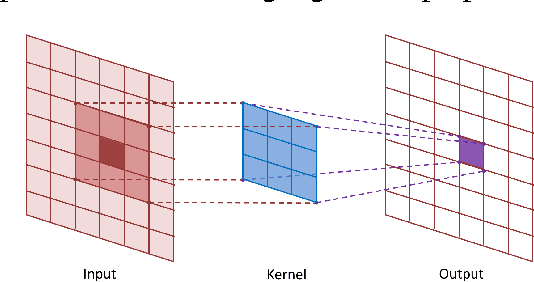

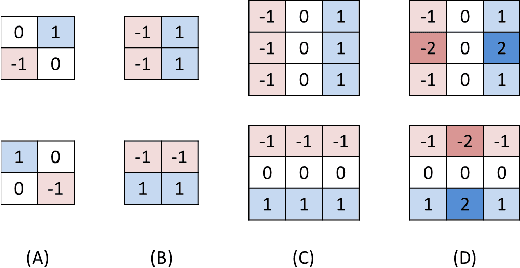

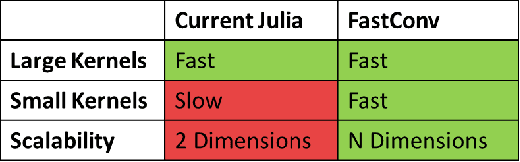

Accelerated Convolutions for Efficient Multi-Scale Time to Contact Computation in Julia

Dec 28, 2016

Convolutions have long been regarded as fundamental to applied mathematics, physics and engineering. Their mathematical elegance allows common tasks such as numerical differentiation to be computed efficiently on large data sets. Efficient computation of convolutions is critical to artificial intelligence in real-time applications such as machine vision, where convolutions must be computed continuously and efficiently on tens to hundreds of kilobytes of data per second. In this paper, we explore how convolutions are used in fundamental machine vision applications. We present an accelerated n-dimensional convolution package in the high-performance computing language Julia and demonstrate its efficacy in solving the time-to-contact problem for machine vision. Results are evaluated on synthetically generated videos and quantitatively assessed by their mean squared error from the ground truth. We achieve more than an order-of-magnitude decrease in compute time and allocated memory for comparable machine vision applications. All code is packaged and integrated into the official Julia Package Manager so that it can be reused in other applications.
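To make the link between convolution and numerical differentiation concrete, here is a direct 2-D "valid" convolution in plain Python. It is not the Julia package's API, which the abstract does not describe; the helper name `conv2d_valid` and the central-difference kernels `DX` and `DY` are assumptions for illustration. A direct loop like this costs on the order of (image size × kernel size) operations, the kind of cost that accelerated implementations try to reduce.

```python
from typing import List

Matrix = List[List[float]]


def conv2d_valid(image: Matrix, kernel: Matrix) -> Matrix:
    """Direct 2-D convolution (kernel flipped), returning only the 'valid' region."""
    ih, iw = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    out = [[0.0] * (iw - kw + 1) for _ in range(ih - kh + 1)]
    for i in range(ih - kh + 1):
        for j in range(iw - kw + 1):
            acc = 0.0
            for m in range(kh):
                for n in range(kw):
                    # True convolution pairs kernel[m][n] with the mirrored image offset.
                    acc += kernel[m][n] * image[i + kh - 1 - m][j + kw - 1 - n]
            out[i][j] = acc
    return out


# Hypothetical central-difference kernels: after the flip, each output is (f[k+1] - f[k-1]) / 2.
DX = [[0.5, 0.0, -0.5]]
DY = [[0.5], [0.0], [-0.5]]

if __name__ == "__main__":
    ramp = [[3.0 * x + 1.0 * y for x in range(6)] for y in range(4)]
    print(conv2d_valid(ramp, DX))  # every entry is the x-slope, 3.0
    print(conv2d_valid(ramp, DY))  # every entry is the y-slope, 1.0
```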

Coarse-grained and emergent distributed parameter systems from data

Nov 17, 2020

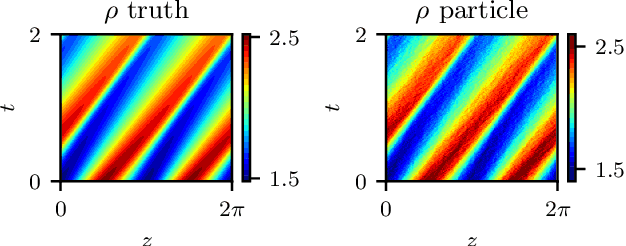

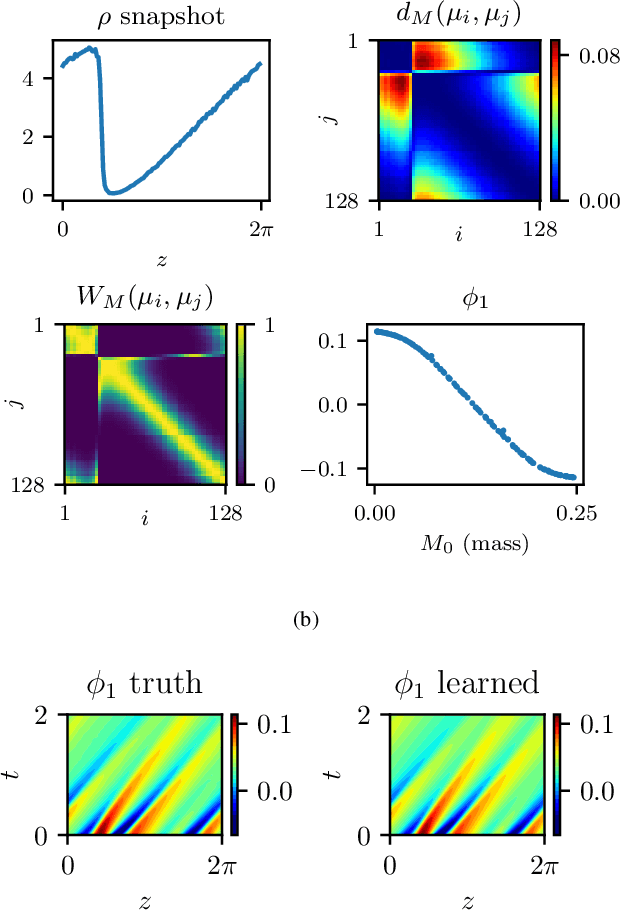

We explore the derivation of distributed parameter system evolution laws (in particular, partial differential operators and the associated partial differential equations, PDEs) from spatiotemporal data. This is, of course, a classical identification problem; our focus here is on the use of manifold learning techniques (in particular, variations of Diffusion Maps) in conjunction with neural network learning algorithms, which allow us to attempt this task when the dependent variables, and even the independent variables, of the PDE are not known a priori and must themselves be derived from the data. The similarity measure used in Diffusion Maps for detecting dependent coarse variables involves distances between local particle distribution observations; for detecting independent variables, we use distances between local short-time dynamics. We demonstrate each approach on an illustrative, well-established PDE example. Such variable-free, emergent-space identification algorithms connect naturally with equation-free multiscale computation tools.
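The core Diffusion Maps step can be sketched as follows, assuming plain Euclidean distances between feature vectors (the paper instead uses distances between local particle distributions or short-time dynamics) and a hand-picked kernel scale `eps`. The function builds a Gaussian kernel, works with the symmetric conjugate of the resulting Markov matrix, and recovers the leading non-trivial eigenvector by power iteration with deflation; `diffusion_coordinate` is a hypothetical name.

```python
import math
import random
from typing import List, Sequence, Tuple


def _normalize(v: List[float]) -> List[float]:
    norm = math.sqrt(sum(a * a for a in v))
    return [a / norm for a in v]


def diffusion_coordinate(points: Sequence[Sequence[float]], eps: float,
                         iters: int = 1000, seed: int = 0) -> Tuple[float, List[float]]:
    """Leading non-trivial Diffusion Maps eigenpair, found by deflated power iteration."""
    n = len(points)
    # Gaussian kernel on squared Euclidean distances (an assumed similarity measure).
    k = [[math.exp(-sum((a - b) ** 2 for a, b in zip(p, q)) / eps) for q in points]
         for p in points]
    d = [sum(row) for row in k]
    # S = D^{-1/2} K D^{-1/2} is symmetric and has the same eigenvalues as the Markov matrix D^{-1} K.
    s = [[k[i][j] / math.sqrt(d[i] * d[j]) for j in range(n)] for i in range(n)]
    # The trivial eigenvector of S (eigenvalue 1) is proportional to sqrt(d).
    top = _normalize([math.sqrt(di) for di in d])

    def deflate(v: List[float]) -> List[float]:
        proj = sum(a * b for a, b in zip(v, top))
        return [a - proj * b for a, b in zip(v, top)]

    rng = random.Random(seed)
    v = _normalize(deflate([rng.uniform(-1.0, 1.0) for _ in range(n)]))
    lam = 0.0
    for _ in range(iters):
        w = [sum(s[i][j] * v[j] for j in range(n)) for i in range(n)]
        lam = sum(a * b for a, b in zip(v, w))  # Rayleigh quotient of the unit vector v
        v = _normalize(deflate(w))
    # Convert back to a right eigenvector of the Markov matrix: psi = D^{-1/2} phi.
    psi = [a / math.sqrt(di) for a, di in zip(v, d)]
    return lam, psi
```

For points sampled along a curve, this coordinate typically varies smoothly along the curve (for a mirror-symmetric point set it comes out antisymmetric), which is the sense in which an unknown independent variable can be recovered from data alone.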

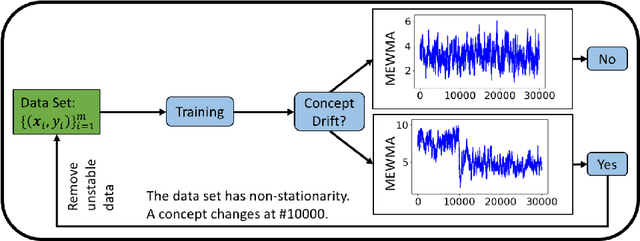

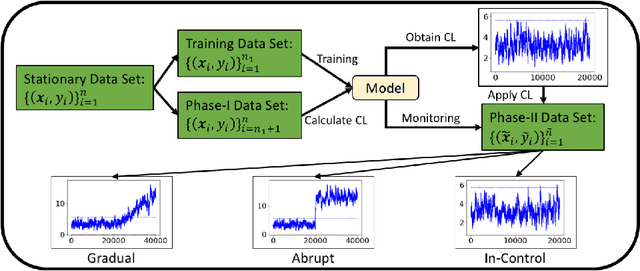

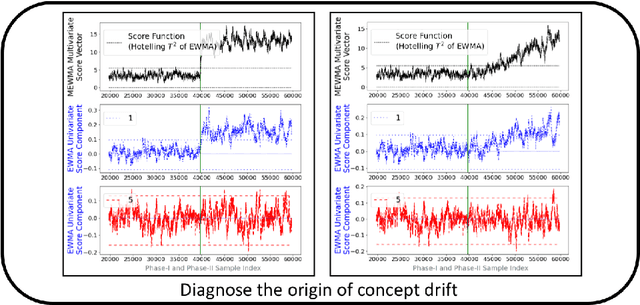

Concept Drift Monitoring and Diagnostics of Supervised Learning Models via Score Vectors

Dec 12, 2020

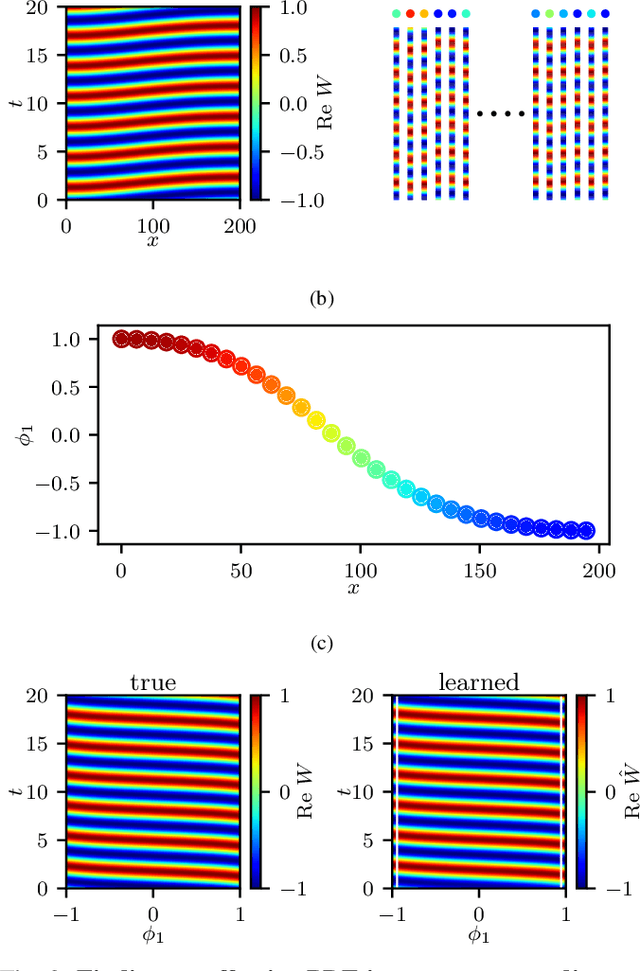

Supervised learning models are one of the most fundamental classes of models. From a probabilistic perspective, the training data to which a supervised model is fitted are usually assumed to follow a stationary distribution. However, this stationarity assumption is often violated by a phenomenon called concept drift: changes over time in the predictive relationship between covariates $\mathbf{X}$ and a response variable $Y$, which can render trained models suboptimal or obsolete. We develop a comprehensive and computationally efficient framework for detecting, monitoring, and diagnosing concept drift. Specifically, we monitor the Fisher score vector, defined as the gradient of the log-likelihood of the fitted model, using a form of multivariate exponentially weighted moving average (MEWMA), which detects general changes in the mean of a random vector. Despite the substantial performance advantages that we demonstrate over popular error-based methods, a score-based approach has not previously been considered for concept drift monitoring. Advantages of the proposed score-based framework include applicability to any parametric model, more powerful detection of changes (as shown in theory and experiments), and inherent diagnostic capabilities that help identify the nature of the changes.
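A minimal sketch of score-based monitoring follows, assuming a logistic regression model with known fitted coefficients, score vectors with zero mean while in control, an in-control covariance estimated from reference data, and the textbook asymptotic MEWMA covariance `lam / (2 - lam) * sigma`. The helper names and the simulated data stream are hypothetical; the paper's exact statistic and control limits are not reproduced.

```python
import math
import random
from typing import List, Sequence

Vector = List[float]


def logistic_score(beta: Sequence[float], x: Sequence[float], y: int) -> Vector:
    """Fisher score: gradient of the log-likelihood of one (x, y) pair under logistic regression."""
    p = 1.0 / (1.0 + math.exp(-sum(b * xi for b, xi in zip(beta, x))))
    return [(y - p) * xi for xi in x]


def simulate_scores(rng: random.Random, fitted: Sequence[float],
                    true_beta: Sequence[float], n: int) -> List[Vector]:
    """Hypothetical data stream: x ~ U(-2, 2), y ~ Bernoulli(sigmoid(true_beta . [1, x]))."""
    scores = []
    for _ in range(n):
        x = [1.0, rng.uniform(-2.0, 2.0)]
        p = 1.0 / (1.0 + math.exp(-(true_beta[0] + true_beta[1] * x[1])))
        y = 1 if rng.random() < p else 0
        scores.append(logistic_score(fitted, x, y))
    return scores


def covariance(vectors: Sequence[Vector]) -> List[Vector]:
    """Sample covariance of in-control score vectors (an estimate of the Fisher information)."""
    n, dim = len(vectors), len(vectors[0])
    mean = [sum(v[k] for v in vectors) / n for k in range(dim)]
    return [[sum((v[a] - mean[a]) * (v[b] - mean[b]) for v in vectors) / (n - 1)
             for b in range(dim)] for a in range(dim)]


def solve(a: List[Vector], rhs: Vector) -> Vector:
    """Solve a @ x = rhs by Gauss-Jordan elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [rhs[i]] for i, row in enumerate(a)]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[piv] = m[piv], m[c]
        for r in range(n):
            if r != c:
                f = m[r][c] / m[c][c]
                m[r] = [u - f * w for u, w in zip(m[r], m[c])]
    return [m[i][n] / m[i][i] for i in range(n)]


def mewma_statistics(scores: Sequence[Vector], sigma: List[Vector],
                     lam: float = 0.1) -> List[float]:
    """MEWMA of score vectors (in-control mean assumed zero) and its T^2 statistic."""
    sigma_z = [[lam / (2.0 - lam) * s for s in row] for row in sigma]  # asymptotic cov of z
    z = [0.0] * len(sigma)
    stats = []
    for s in scores:
        z = [lam * si + (1.0 - lam) * zi for si, zi in zip(s, z)]
        stats.append(sum(zi * wi for zi, wi in zip(z, solve(sigma_z, z))))
    return stats
```

In a toy run where the true slope flips sign after an in-control period, T² rises well above its in-control level. Because z has one component per model parameter, inspecting which components have moved is one way to see which part of the model has drifted.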

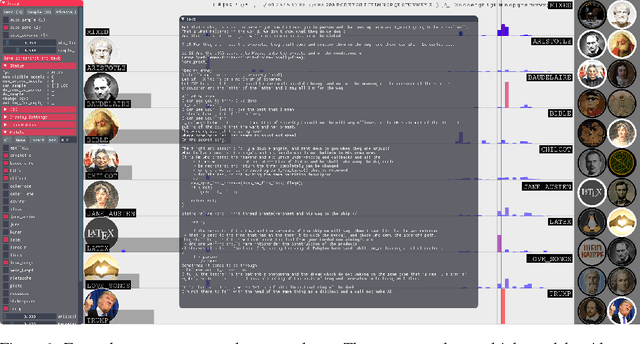

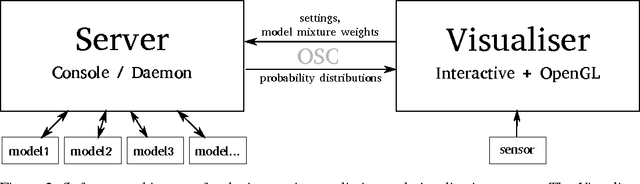

Real-time interactive sequence generation and control with Recurrent Neural Network ensembles

Feb 09, 2017

Recurrent Neural Networks (RNNs), particularly Long Short-Term Memory (LSTM) RNNs, are a popular and very successful method for learning and generating sequences. However, current generative RNN techniques do not allow real-time interactive control of the sequence generation process and are thus not well suited to live creative expression. We propose a method for real-time continuous control and 'steering' of sequence generation using an ensemble of RNNs whose mixture weights are altered dynamically. We demonstrate the method using character-based LSTM networks and a gestural interface that allows users to 'conduct' the generation of text.
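One way to realise mixture-weight steering is to form, at every step, a weighted average of the ensemble members' next-character distributions and sample from it, re-reading the weights from the controller each time. The sketch below assumes this linear mixture; the two toy models stand in for trained character-level LSTMs and, like all names here, are hypothetical.

```python
import random
from typing import Callable, Dict, Sequence

Dist = Dict[str, float]


def mix(dists: Sequence[Dist], weights: Sequence[float]) -> Dist:
    """Weighted mixture of next-character distributions; weights are renormalized to sum to 1."""
    total = sum(weights)
    if total <= 0:
        raise ValueError("at least one mixture weight must be positive")
    mixed: Dist = {}
    for dist, w in zip(dists, weights):
        for ch, p in dist.items():
            mixed[ch] = mixed.get(ch, 0.0) + (w / total) * p
    return mixed


def sample(dist: Dist, rng: random.Random) -> str:
    chars = list(dist)
    return rng.choices(chars, weights=[dist[c] for c in chars], k=1)[0]


# Hypothetical stand-ins for trained LSTMs: a real model would condition on its hidden state.
def model_vowels(prev: str) -> Dist:
    return {"a": 0.5, "e": 0.5}


def model_consonants(prev: str) -> Dist:
    return {"b": 0.5, "d": 0.5}


def generate(models: Sequence[Callable[[str], Dist]],
             weight_schedule: Callable[[int], Sequence[float]],
             length: int, seed: int = 0) -> str:
    rng = random.Random(seed)
    text = " "  # seed character for the first prediction
    for t in range(length):
        weights = weight_schedule(t)  # e.g. read live from a gestural controller
        text += sample(mix([m(text[-1]) for m in models], weights), rng)
    return text[1:]
```

Shifting the weights from `[1, 0]` to `[0, 1]` mid-generation switches the output from the first model's style to the second's, and intermediate weights blend the two.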

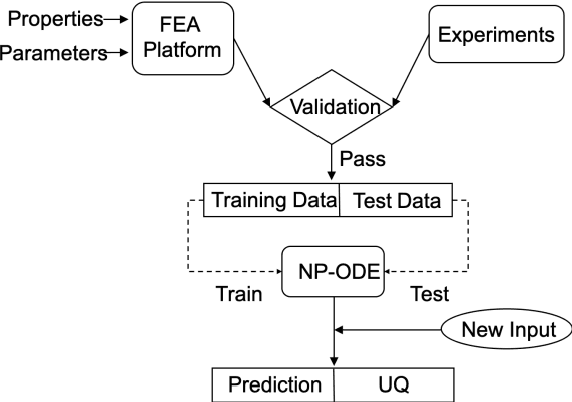

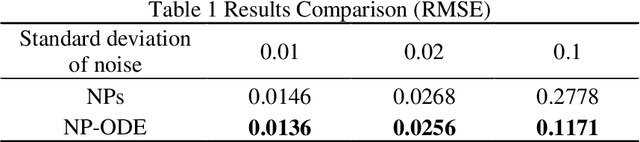

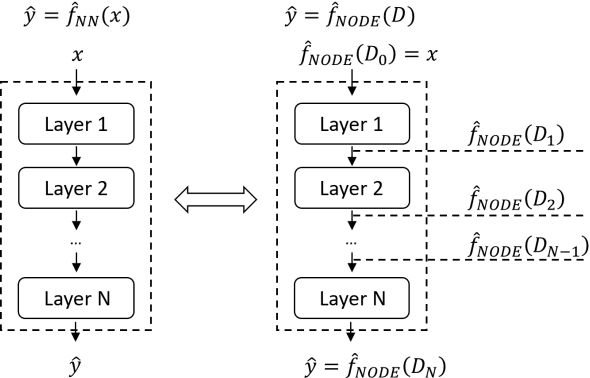

NP-ODE: Neural Process Aided Ordinary Differential Equations for Uncertainty Quantification of Finite Element Analysis

Dec 12, 2020

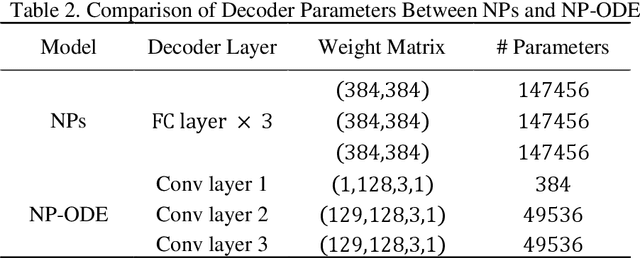

Finite element analysis (FEA) has been widely used to simulate complex and nonlinear systems. Despite its strength and accuracy, FEA has two main limitations: a) running high-fidelity FEA often incurs significant computational cost and takes a long time; b) FEA is a deterministic method and is therefore insufficient for uncertainty quantification (UQ) when modeling complex systems with various types of uncertainties. In this paper, a physics-informed, data-driven surrogate model named Neural Process Aided Ordinary Differential Equation (NP-ODE) is proposed to model FEA simulations and capture both input and output uncertainties. To validate the advantages of the proposed NP-ODE, we conduct experiments both on simulation data generated from a given ordinary differential equation and on data collected from a real FEA platform for tribocorrosion. The performance of the proposed NP-ODE is compared with that of several benchmark methods, and the results show that NP-ODE outperforms them: it achieves the smallest predictive error and generates the most reasonable confidence intervals, with the best coverage of the testing data points.
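The abstract reports interval coverage without defining it. A common definition, assumed here, is the fraction of test targets that fall inside their predicted intervals, reported alongside the mean interval width so that trivially wide intervals are not rewarded. The function name is hypothetical.

```python
from typing import Sequence, Tuple


def interval_metrics(y_true: Sequence[float], lower: Sequence[float],
                     upper: Sequence[float]) -> Tuple[float, float]:
    """Return (empirical coverage, mean interval width) of predictive intervals."""
    if not (len(y_true) == len(lower) == len(upper)) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    # Count test points whose true value lies inside [lower, upper].
    inside = sum(lo <= y <= hi for y, lo, hi in zip(y_true, lower, upper))
    width = sum(hi - lo for lo, hi in zip(lower, upper))
    return inside / len(y_true), width / len(y_true)
```

For a nominal 95% interval, coverage close to 0.95 with a small mean width is the desirable combination.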

Multi-Agent Online Optimization with Delays: Asynchronicity, Adaptivity, and Optimism

Dec 21, 2020

Online learning has been successfully applied to many problems in which data are revealed over time. In this paper, we provide a general framework for studying multi-agent online learning problems in the presence of delays and asynchronicities. Specifically, we propose and analyze a class of adaptive dual averaging schemes in which agents only need to accumulate the gradient feedback received from the whole system, without requiring any coordination between agents. In the single-agent case, the adaptivity of the proposed method allows us to extend a range of existing results to problems with potentially unbounded delays between playing an action and receiving the corresponding feedback. In the multi-agent case, the situation is significantly more complicated because agents may not have access to a global clock to use as a reference point; to overcome this, we focus on the information that is available for producing each prediction rather than on the actual delay associated with each piece of feedback. This allows us to derive adaptive learning strategies with optimal regret bounds at both the agent and network levels. Finally, we also analyze an "optimistic" variant of the proposed algorithm, which can exploit the predictability of slowly varying problems and achieves improved regret bounds.
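The single-agent core of a delay-tolerant dual averaging scheme can be sketched on a one-dimensional interval: the agent accumulates whatever gradient feedback has arrived, sets an AdaGrad-style step size from the squared norms of that feedback, and plays the minimizer of the accumulated linear term plus a quadratic regularizer. The delay model, the step-size formula and the function name are assumptions; the paper's multi-agent scheme and its optimistic variant are not reproduced.

```python
import math
from typing import Callable, List, Tuple


def delayed_dual_averaging(grad: Callable[[float], float], delay: Callable[[int], int],
                           rounds: int, radius: float = 1.0) -> List[float]:
    """Single-agent dual averaging on [-radius, radius] with delayed gradient feedback.

    Feedback for the action played at round t becomes available at round t + 1 + delay(t),
    so delay(t) == 0 recovers the standard undelayed setting.
    """
    pending: List[Tuple[int, float]] = []  # (arrival round, gradient)
    grad_sum = 0.0  # sum of all gradients received so far
    sq_sum = 0.0    # sum of their squares, used for the adaptive step size
    actions = []
    for t in range(rounds):
        # Collect every piece of feedback whose delay has elapsed.
        arrived = [g for (arrival, g) in pending if arrival <= t]
        pending = [(arrival, g) for (arrival, g) in pending if arrival > t]
        for g in arrived:
            grad_sum += g
            sq_sum += g * g
        eta = radius / math.sqrt(1.0 + sq_sum)
        # Minimize grad_sum * x + x^2 / (2 * eta) over the interval: clip -eta * grad_sum.
        x = max(-radius, min(radius, -eta * grad_sum))
        actions.append(x)
        pending.append((t + 1 + delay(t), grad(x)))
    return actions
```

In a toy run with a fixed delay of three rounds and the loss (x - 0.5)², the played action settles near 0.5: early oscillations caused by stale gradients inflate the accumulated squared norms, which shrinks the step size until the delayed updates become stable.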

From Points to Multi-Object 3D Reconstruction

Dec 21, 2020

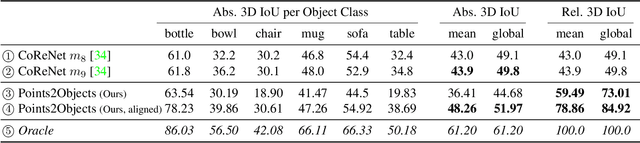

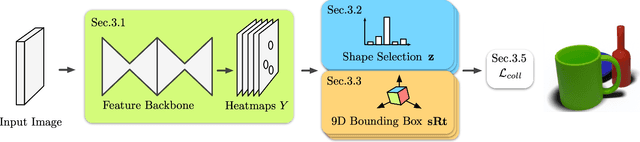

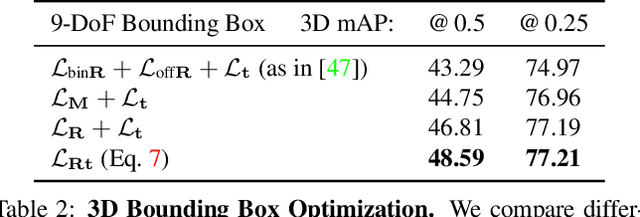

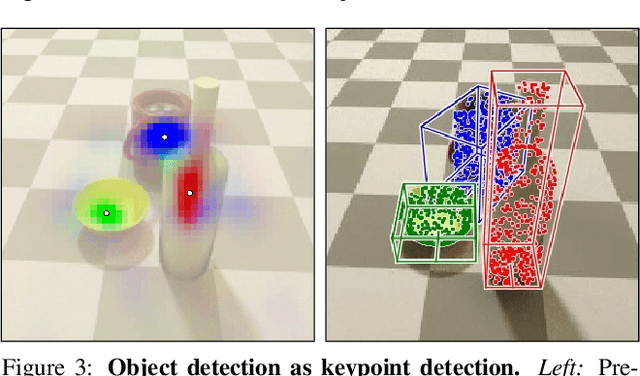

We propose a method to detect and reconstruct multiple 3D objects from a single RGB image. The key idea is to optimize for detection, alignment and shape jointly over all objects in the RGB image, while focusing on realistic and physically plausible reconstructions. To this end, we propose a keypoint detector that localizes objects as center points and directly predicts all object properties, including 9-DoF bounding boxes and 3D shapes, in a single forward pass. The proposed method formulates 3D shape reconstruction as a shape selection problem, i.e., it selects among exemplar shapes from a given database. This makes it agnostic to shape representations, which enables a lightweight reconstruction of realistic and visually pleasing shapes based on CAD models, while the training objective is formulated around point clouds and voxel representations. A collision loss promotes non-intersecting objects, further increasing the realism of the reconstruction. Given the RGB image, the presented approach performs lightweight reconstruction in a single stage; it is real-time capable, fully differentiable and end-to-end trainable. Our experiments compare multiple approaches for 9-DoF bounding box estimation, evaluate the novel shape-selection mechanism and compare against recent methods in terms of 3D bounding box estimation and 3D shape reconstruction quality.
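Shape selection can be illustrated by choosing, from a small exemplar database, the shape whose point cloud is closest to a predicted point cloud under the symmetric Chamfer distance. This scoring rule is an assumption (the abstract only says that the method selects among exemplar shapes and trains with point-cloud and voxel objectives), and the names are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float, float]


def chamfer(a: Sequence[Point], b: Sequence[Point]) -> float:
    """Symmetric Chamfer distance: mean squared nearest-neighbour distance in both directions."""
    def one_way(src: Sequence[Point], dst: Sequence[Point]) -> float:
        return sum(min(sum((p - q) ** 2 for p, q in zip(s, d)) for d in dst)
                   for s in src) / len(src)
    return one_way(a, b) + one_way(b, a)


def select_shape(predicted: Sequence[Point], database: Dict[str, List[Point]]) -> str:
    """Return the name of the exemplar whose point cloud is closest to the prediction."""
    return min(database, key=lambda name: chamfer(predicted, database[name]))
```

Because the output is always an existing exemplar, the reconstructed shape can be rendered from its original CAD model regardless of how the prediction itself is represented.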

Real-time Hand Tracking under Occlusion from an Egocentric RGB-D Sensor

Oct 05, 2017

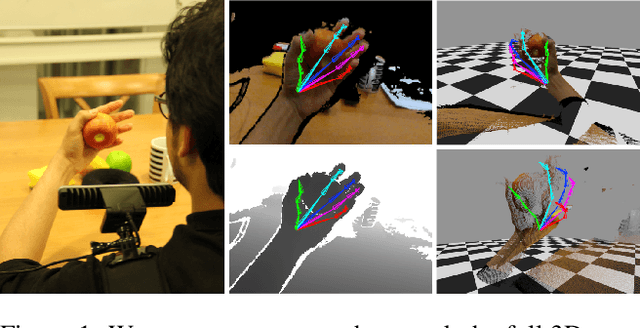

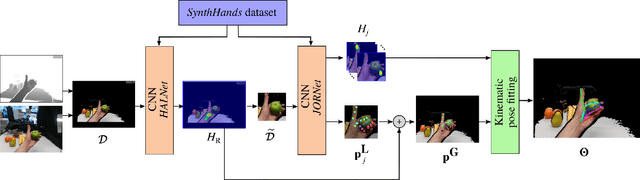

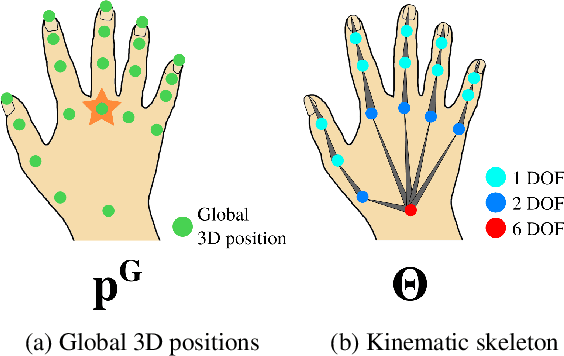

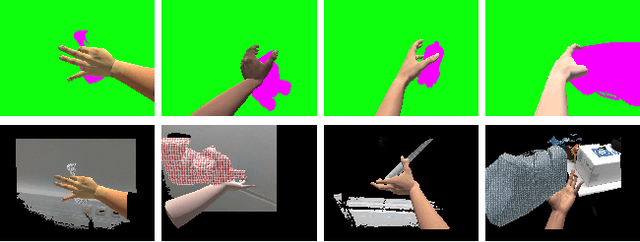

We present an approach for real-time, robust and accurate hand pose estimation from moving egocentric RGB-D cameras in cluttered real environments. Existing methods typically fail for hand-object interactions in cluttered scenes imaged from egocentric viewpoints, which are common in virtual or augmented reality applications. Our approach uses two successively applied Convolutional Neural Networks (CNNs) to localize the hand and regress 3D joint locations. Hand localization is achieved by using a CNN to estimate the 2D position of the hand center in the input, even in the presence of clutter and occlusions. The localized hand position, together with the corresponding input depth value, is used to generate a normalized cropped image that is fed into a second CNN to regress relative 3D hand joint locations in real time. For added accuracy, robustness and temporal stability, we refine the pose estimates using a kinematic pose tracking energy. To train the CNNs, we introduce a new photorealistic dataset that uses a merged reality approach to capture and synthesize large amounts of annotated data of natural hand interaction in cluttered scenes. Through quantitative and qualitative evaluation, we show that our method is robust to self-occlusion and to occlusions by objects, particularly from moving egocentric perspectives.
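The crop normalization step can be sketched under a pinhole camera model: the hand center (u, v) and its depth define a fixed-size metric cube whose pixel extent shrinks with distance, and depths inside the crop are rescaled relative to the center depth. The 250 mm cube size, the clipping to [-1, 1] and the function names are assumptions, not values from the paper.

```python
from typing import List, Tuple


def crop_box(u: float, v: float, depth_mm: float, focal_px: float,
             cube_mm: float = 250.0) -> Tuple[int, int, int, int]:
    """Pixel box (left, top, right, bottom) covering a cube of side cube_mm centred on the hand.

    Under a pinhole camera, an object of metric size S at depth Z spans f * S / Z pixels,
    so the crop shrinks as the hand moves away while its metric extent stays constant.
    """
    half = 0.5 * focal_px * cube_mm / depth_mm
    return (round(u - half), round(v - half), round(u + half), round(v + half))


def normalize_depth(patch: List[List[float]], center_depth_mm: float,
                    cube_mm: float = 250.0) -> List[List[float]]:
    """Map depths to [-1, 1] relative to the hand centre; values outside the cube are clipped."""
    half = cube_mm / 2.0
    return [[max(-1.0, min(1.0, (d - center_depth_mm) / half)) for d in row] for row in patch]
```

Resizing every crop to a fixed resolution afterwards gives the second network inputs of roughly constant metric scale, whatever the hand's distance from the camera.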

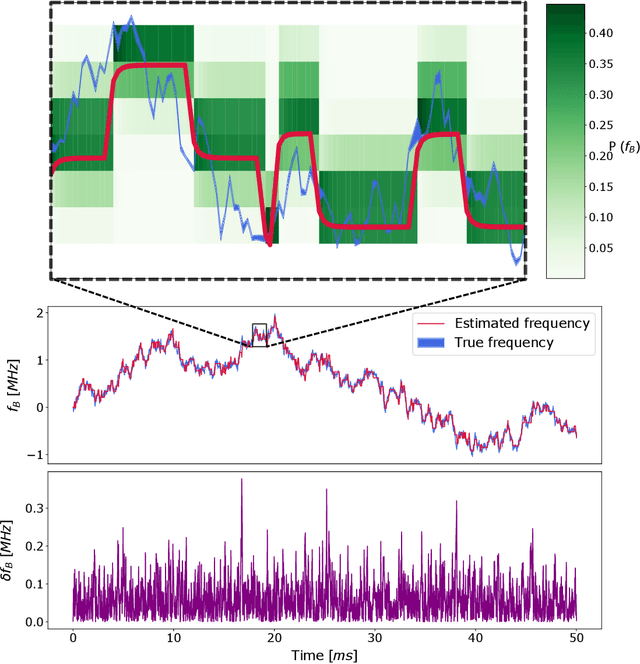

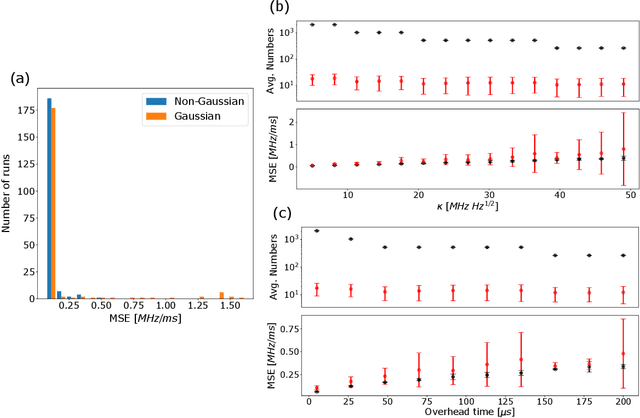

Resource-efficient adaptive Bayesian tracking of magnetic fields with a quantum sensor

Aug 20, 2020

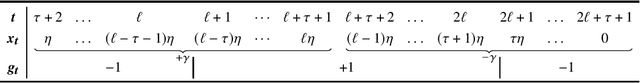

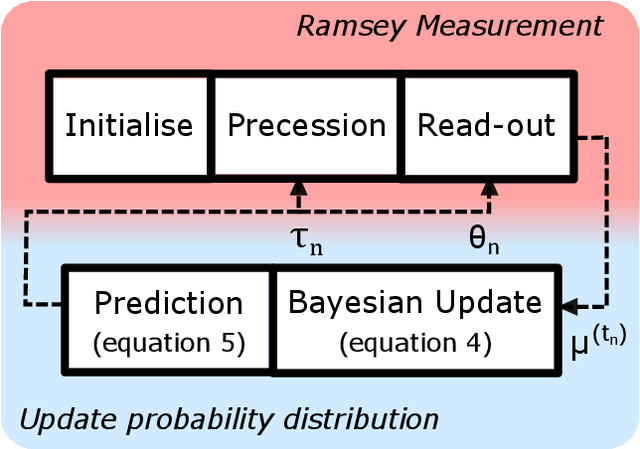

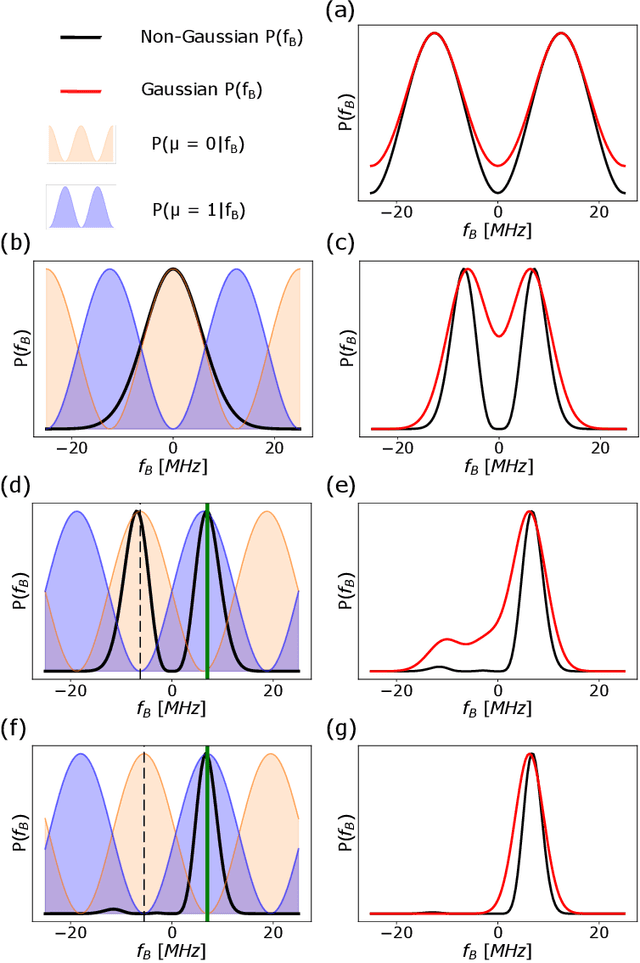

By addressing single electron spins through Ramsey experiments, nitrogen-vacancy centres can act as high-resolution magnetic field sensors. In applications where the magnetic field may change rapidly, total sensing time is crucial and must be minimised. Bayesian estimation and adaptive experiment optimisation protocols work by computing the probability distribution of the magnetic field from the measurement outcomes and by computing acquisition settings for the next measurement. These protocols can speed up the sensing process by reducing the number of measurements required. However, the computations that determine the settings of the next measurement must be performed quickly enough to allow real-time updates. This paper addresses the issue of computational speed by implementing an approximate Bayesian estimation technique, in which probability distributions are approximated by a superposition of Gaussian functions. Given that only three parameters are required to fully describe a Gaussian, we find that the magnetic field probability distribution can typically be described by fewer than ten numbers, reducing the number of operations by a factor of 20 compared to existing approaches and allowing faster processing.
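To show why a Gaussian superposition is so compact, the sketch below stores a field distribution as (weight, mean, standard deviation) triples and computes its density, mean and variance directly from them. It illustrates only the representation; the paper's Bayesian update and measurement-setting optimisation are not reproduced, and the class name is hypothetical.

```python
import math
from typing import List, Tuple

Component = Tuple[float, float, float]  # (weight, mean, standard deviation)


class GaussianMixture:
    """Field distribution stored as K Gaussians, i.e. 3K numbers in total."""

    def __init__(self, components: List[Component]):
        total = sum(w for w, _, _ in components)
        self.components = [(w / total, mu, sd) for w, mu, sd in components]

    def pdf(self, b: float) -> float:
        return sum(w * math.exp(-0.5 * ((b - mu) / sd) ** 2) / (sd * math.sqrt(2 * math.pi))
                   for w, mu, sd in self.components)

    def mean(self) -> float:
        return sum(w * mu for w, mu, _ in self.components)

    def variance(self) -> float:
        # Law of total variance: E[sd^2 + mu^2] - (E[mu])^2 over the components.
        m = self.mean()
        return sum(w * (sd ** 2 + mu ** 2) for w, mu, sd in self.components) - m ** 2

    def num_parameters(self) -> int:
        return 3 * len(self.components)
```

A bimodal distribution made of two components, for instance, is fully specified by six numbers, whereas a grid-based representation would need one value per grid point.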
