"Time": models, code, and papers

Gaussian Process Bandit Optimization of the Thermodynamic Variational Objective

Oct 31, 2020

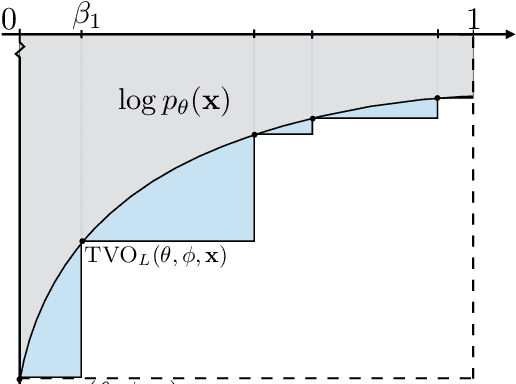

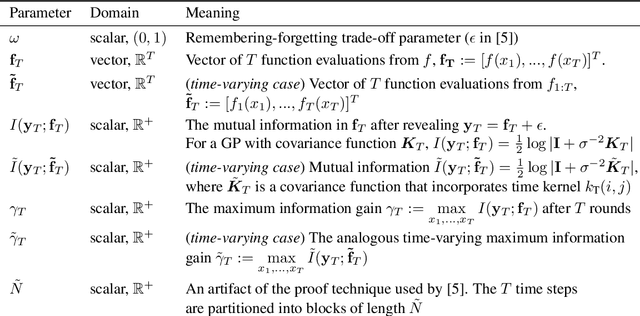

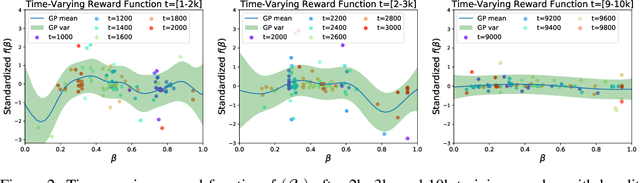

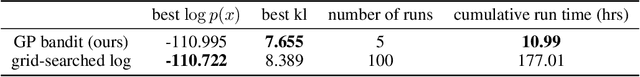

Achieving the full promise of the Thermodynamic Variational Objective (TVO), a recently proposed variational lower bound on the log evidence involving a one-dimensional Riemann integral approximation, requires choosing a "schedule" of sorted discretization points. This paper introduces a bespoke Gaussian process bandit optimization method for automatically choosing these points. Our approach not only automates their one-time selection, but also dynamically adapts their positions over the course of optimization, leading to improved model learning and inference. We provide theoretical guarantees that our bandit optimization converges to the regret-minimizing choice of integration points. Empirical validation of our algorithm is provided in terms of improved learning and inference in Variational Autoencoders and Sigmoid Belief Networks.
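To make the role of the "schedule" concrete, here is a minimal sketch of a left Riemann sum over sorted discretization points on [0, 1]. The integrand `toy_integrand` is a stand-in chosen for illustration, not the TVO integrand, and the function names are hypothetical. The sketch assumes a non-decreasing integrand, for which a left Riemann sum under-estimates the integral, so moving the points changes how tight the bound is.

```python
def left_riemann_sum(integrand, schedule):
    """Left Riemann sum of `integrand` over [0, 1] at the sorted points in `schedule`."""
    points = list(schedule)
    if points != sorted(points) or points[0] != 0.0 or points[-1] >= 1.0:
        raise ValueError("schedule must be sorted, start at 0, and stay below 1")
    edges = points + [1.0]  # close the last interval at the upper limit
    return sum(
        (edges[k + 1] - edges[k]) * integrand(edges[k])
        for k in range(len(points))
    )


def toy_integrand(beta):
    # Assumption: a simple non-decreasing function with exact integral 1/3.
    return beta ** 2


uniform_bound = left_riemann_sum(toy_integrand, [0.0, 0.25, 0.5, 0.75])
skewed_bound = left_riemann_sum(toy_integrand, [0.0, 0.4, 0.6, 0.8])
```

With the same number of points, the two schedules give different lower bounds on the exact value 1/3, which is the kind of gap an automatic schedule selection method would try to close.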

A Meta-Learning Approach to the Optimal Power Flow Problem Under Topology Reconfigurations

Dec 21, 2020

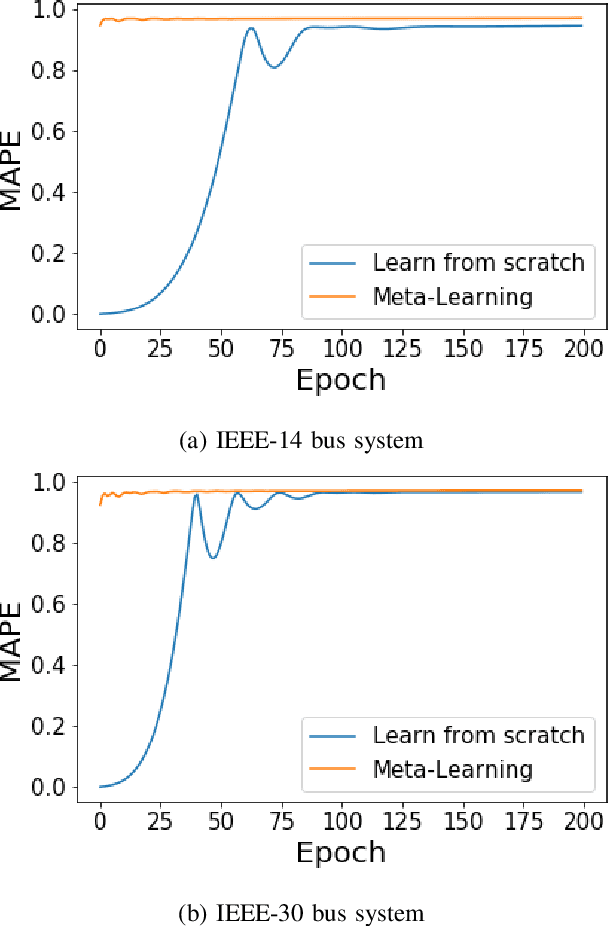

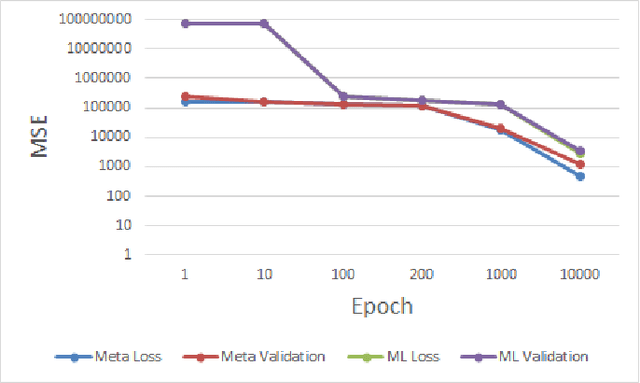

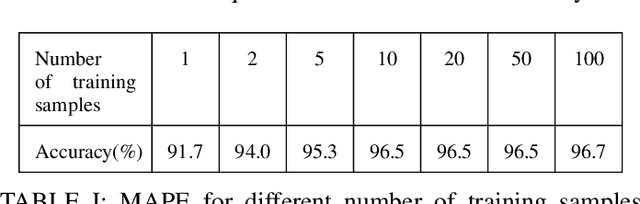

Recently, there has been a surge of interest in adopting deep neural networks (DNNs) for solving the optimal power flow (OPF) problem in power systems. Computing optimal generation dispatch decisions using a trained DNN takes significantly less time than using conventional optimization solvers. However, a major drawback of existing work is that the machine learning models are trained for a specific system topology. Hence, the DNN predictions are only useful as long as the system topology remains unchanged. Changes to the system topology (initiated by the system operator) would require retraining the DNN, which incurs significant training overhead and requires an extensive amount of training data (corresponding to the new system topology). To overcome this drawback, we propose a DNN-based OPF predictor that is trained using a meta-learning (MTL) approach. The key idea behind this approach is to find a common initialization vector that enables fast training for any system topology. The developed OPF predictor is validated through simulations using benchmark IEEE bus systems. The results show that the MTL approach achieves significant training speed-ups and requires only a few gradient steps with a few data samples to achieve high OPF prediction accuracy.
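The idea of a common initialization that adapts in a few gradient steps can be illustrated with a toy sketch. This is not the authors' method: it uses a Reptile-style first-order update, a scalar weight instead of a DNN, and quadratic task losses standing in for different topologies. All names and values are assumptions made for illustration.

```python
def adapt(weight, target, steps=5, lr=0.1):
    """Run a few gradient steps on the toy task loss (weight - target)**2."""
    for _ in range(steps):
        weight -= lr * 2.0 * (weight - target)
    return weight


def meta_train(task_targets, epochs=200, meta_lr=0.1):
    """Move a shared initialization toward each task's adapted weight in turn."""
    init = 0.0
    for _ in range(epochs):
        for target in task_targets:
            adapted = adapt(init, target)
            init += meta_lr * (adapted - init)  # Reptile-style first-order update
    return init


# Each target plays the role of one "topology" (assumption).
shared_init = meta_train([1.0, 2.0, 3.0])

# An unseen task: compare adapting from the shared init with adapting from zero.
new_task_target = 2.5
loss_from_shared = (adapt(shared_init, new_task_target) - new_task_target) ** 2
loss_from_zero = (adapt(0.0, new_task_target) - new_task_target) ** 2
```

After the same five gradient steps, the loss reached from the shared initialization is lower than the loss reached from an uninformed starting point, which is the effect the abstract describes at a much larger scale.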

Learning Sampling Distributions for Efficient High-Dimensional Motion Planning

Dec 01, 2020

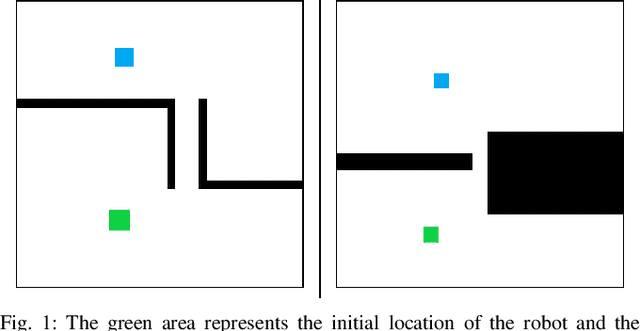

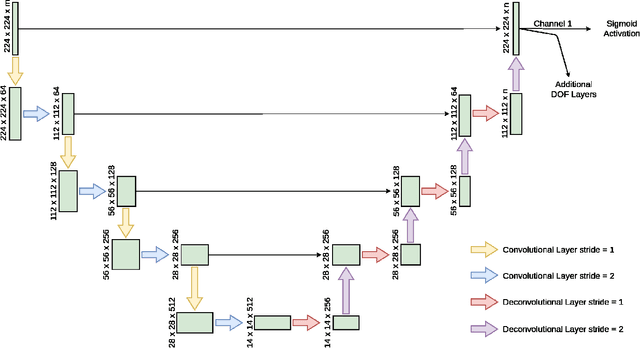

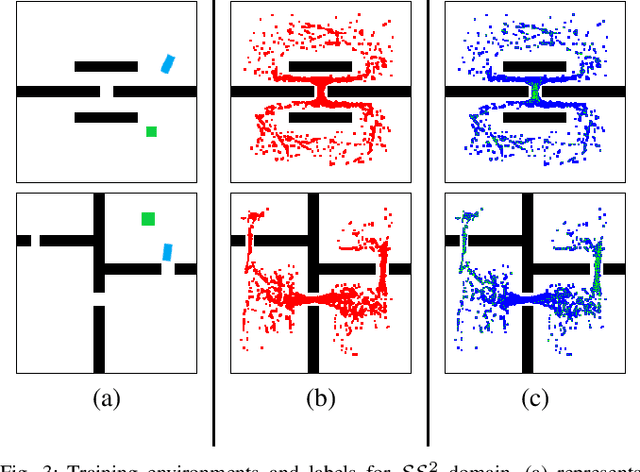

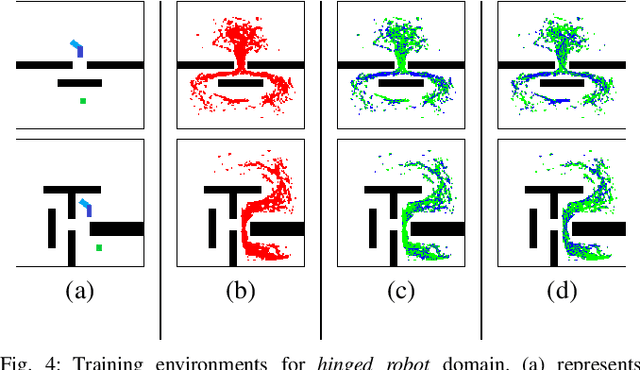

Robot motion planning involves computing a sequence of valid robot configurations that take the robot from its initial state to a goal state. Solving a motion planning problem optimally using analytical methods is proven to be PSPACE-hard. Sampling-based approaches have tried to approximate the optimal solution efficiently. Generally, sampling-based planners use uniform samplers to cover the entire state space. In this paper, we propose a deep-learning-based framework that identifies robot configurations in the environment that are important for solving the given motion planning problem. These states are used to bias the sampling distribution in order to reduce the planning time. Our approach works with a unified network and generates domain-dependent network parameters based on the environment and the robot. We evaluate our approach with the Learn and Link planner in three different settings. Results show significant improvement in motion planning times when compared with current sampling-based motion planners.
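As a rough illustration of biasing a sampler toward important configurations, the sketch below mixes a uniform sampler with samples drawn near a given list of configurations. In the paper those configurations come from a learned network; here `critical_configs` is simply supplied by hand, and the function name, `bias`, and `radius` parameters are hypothetical.

```python
import random


def sample_configuration(rng, bounds, critical_configs, bias=0.5, radius=0.1):
    """Draw one configuration, biased toward `critical_configs` with probability `bias`."""
    if critical_configs and rng.random() < bias:
        # Biased branch: perturb a randomly chosen important configuration.
        center = rng.choice(critical_configs)
        sample = [c + rng.uniform(-radius, radius) for c in center]
    else:
        # Fallback branch: uniform coverage of the whole state space.
        sample = [rng.uniform(lo, hi) for lo, hi in bounds]
    # Clamp so every sample stays inside the configuration bounds.
    return [min(max(v, lo), hi) for v, (lo, hi) in zip(sample, bounds)]


rng = random.Random(0)
bounds = [(0.0, 1.0), (0.0, 1.0)]
critical = [[0.2, 0.8], [0.7, 0.3]]  # assumption: hand-picked for the example
biased_samples = [sample_configuration(rng, bounds, critical, bias=1.0) for _ in range(100)]
uniform_samples = [sample_configuration(rng, bounds, critical, bias=0.0) for _ in range(100)]
```

Keeping a uniform branch is a common design choice in biased samplers, since it preserves coverage of regions that the bias misses.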

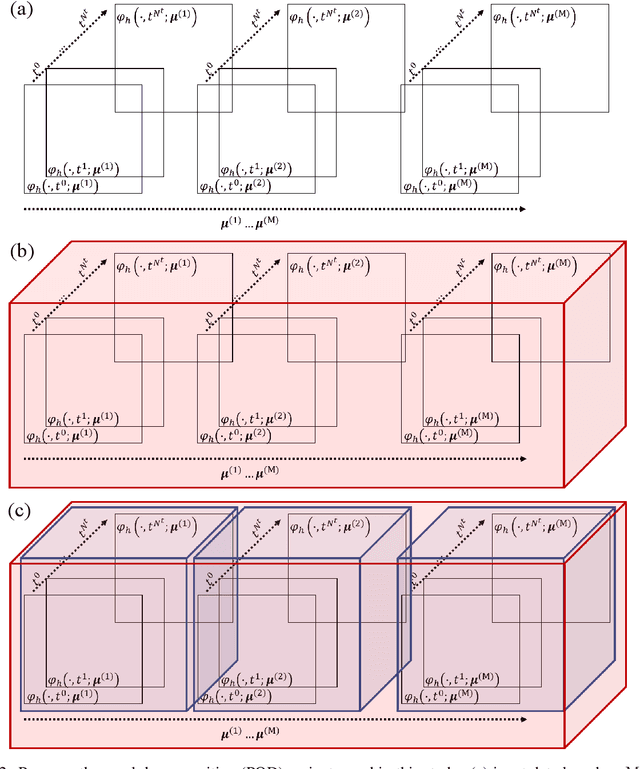

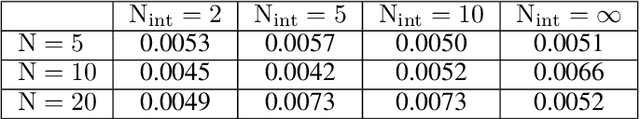

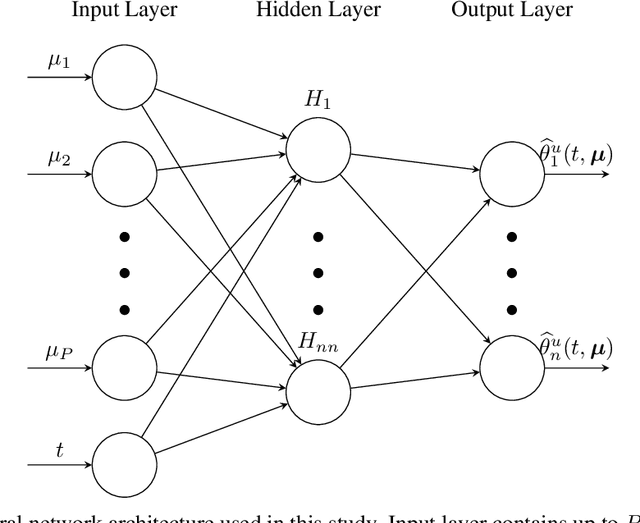

Non-intrusive reduced order modeling of poroelasticity of heterogeneous media based on a discontinuous Galerkin approximation

Jan 28, 2021

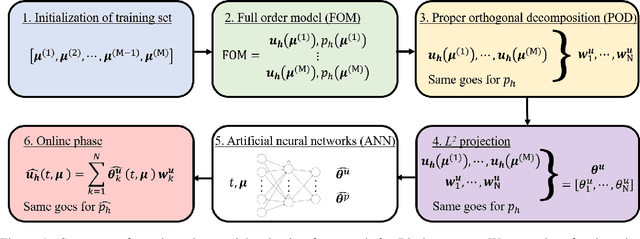

We present a non-intrusive model reduction framework for linear poroelasticity problems in heterogeneous porous media using proper orthogonal decomposition (POD) and neural networks, based on the usual offline-online paradigm. As the conductivity of porous media can be highly heterogeneous and span several orders of magnitude, we utilize the interior penalty discontinuous Galerkin (DG) method as a full order solver to handle discontinuity and ensure local mass conservation during the offline stage. We then use POD as a data compression tool and compare the nested POD technique, in which time and uncertain parameter domains are compressed consecutively, to the classical POD method in which all domains are compressed simultaneously. The neural networks are finally trained to map the set of uncertain parameters, which could correspond to material properties, boundary conditions, or geometric characteristics, to the collection of coefficients calculated from an $L^2$ projection over the reduced basis. We then perform a non-intrusive evaluation of the neural networks to obtain coefficients corresponding to new values of the uncertain parameters during the online stage. We show that our framework provides reasonable approximations of the DG solution, but it is significantly faster. Moreover, the reduced order framework can capture sharp discontinuities of both displacement and pressure fields resulting from the heterogeneity in the media conductivity, which is generally challenging for intrusive reduced order methods. The sources of error are presented, showing that the nested POD technique is computationally advantageous and still provides comparable accuracy to the classical POD method. We also explore the effect of different choices of the hyperparameters of the neural network on the framework performance.

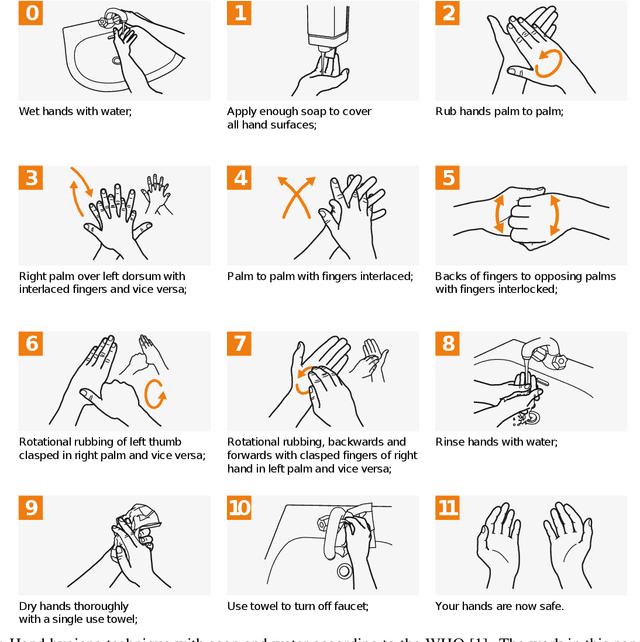

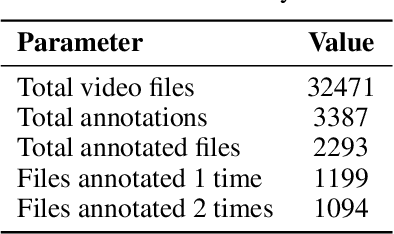

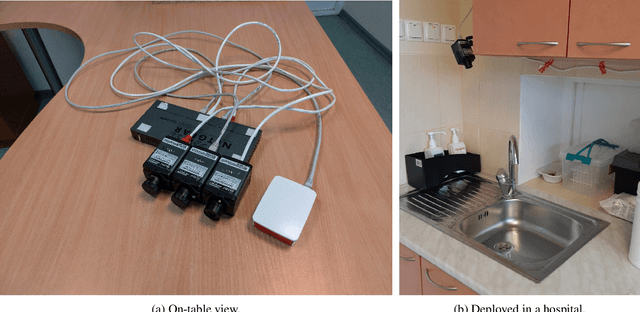

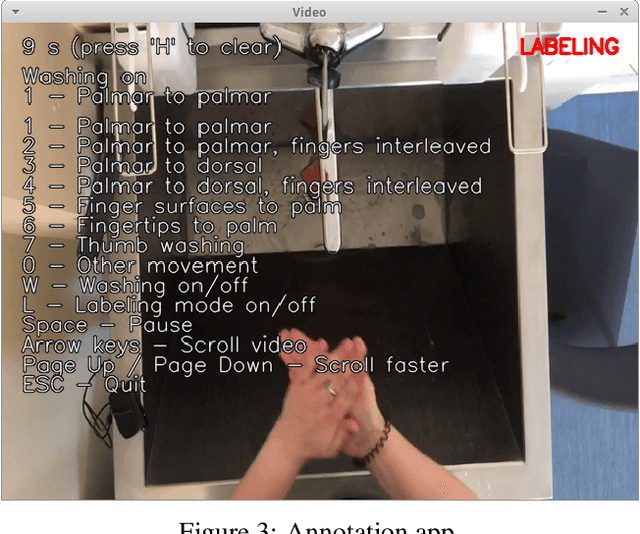

Automated Quality Assessment of Hand Washing Using Deep Learning

Dec 01, 2020

Washing hands is one of the most important ways to prevent infectious diseases, including COVID-19. Unfortunately, medical staff do not always follow the World Health Organization (WHO) hand washing guidelines in their everyday work. To help address this, we present neural networks for automatically recognizing the different washing movements defined by the WHO. We train the neural network on a part of a large (2000+ videos) real-world labeled dataset with the different washing movements. The preliminary results show that, using pre-trained neural network models such as MobileNetV2 and Xception for the task, it is possible to achieve >64% accuracy in recognizing the different washing movements. We also describe the collection and the structure of the above open-access dataset created as part of this work. Finally, we describe how the neural network can be used to construct a mobile phone application for automatic quality control and real-time feedback for medical professionals.

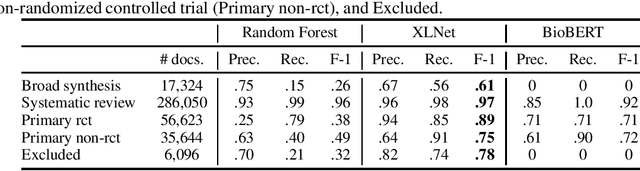

Neural language models for text classification in evidence-based medicine

Dec 01, 2020

COVID-19 has brought about a significant challenge to the whole of humanity, with a special burden on the medical community. Clinicians must keep continuously updated about symptoms, diagnoses, and the effectiveness of emergent treatments under a never-ending flood of scientific literature. In this context, the role of evidence-based medicine (EBM) in curating the most substantial evidence to support public health and clinical practice becomes essential, but it is being challenged as never before by the high volume of research articles published and pre-prints posted daily. Artificial Intelligence can have a crucial role in this situation. In this article, we report the results of an applied research project to classify scientific articles to support Epistemonikos, one of the most active foundations worldwide conducting EBM. We test several methods, and the best one, based on the XLNet neural language model, improves the current approach by 93% on average F1-score, saving valuable time for physicians who volunteer to curate COVID-19 research articles manually.

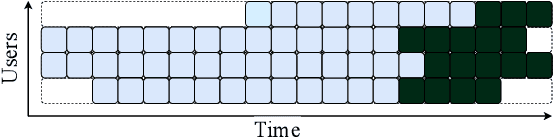

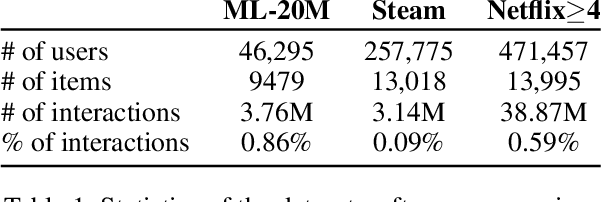

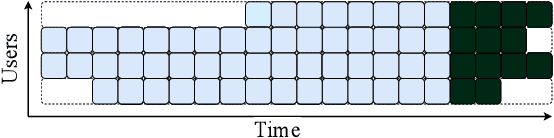

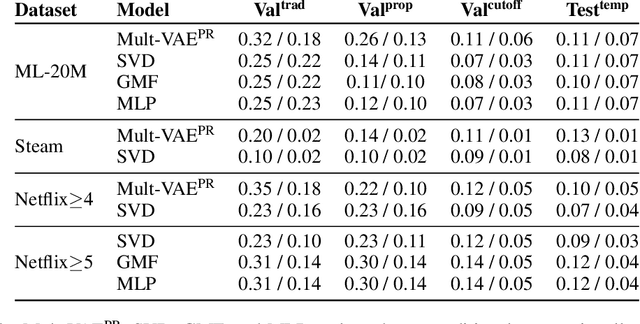

Modeling Online Behavior in Recommender Systems: The Importance of Temporal Context

Sep 19, 2020

Simulating online recommender system performance is notoriously difficult and the discrepancy between the online and offline behaviors is typically not accounted for in offline evaluations. Recommender systems research tends to evaluate model performance on randomly sampled targets, yet the same systems are later used to predict user behavior sequentially from a fixed point in time. This disparity permits weaknesses to go unnoticed until the model is deployed in a production setting. We first demonstrate how omitting temporal context when evaluating recommender system performance leads to false confidence. To overcome this, we propose an offline evaluation protocol modeling the real-life use-case that simultaneously accounts for temporal context. Next, we propose a training procedure to further embed the temporal context in existing models: we introduce it in a multi-objective approach to traditionally time-unaware recommender systems. We confirm the advantage of adding a temporal objective via the proposed evaluation protocol. Finally, we validate that the Pareto Fronts obtained with the added objective dominate those produced by state-of-the-art models that are only optimized for accuracy on three real-world publicly available datasets. The results show that including our temporal objective can improve recall@20 by up to 20%.
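The difference between evaluating on randomly sampled targets and predicting from a fixed point in time can be sketched as two data splits. This is a minimal illustration, not the paper's evaluation protocol; the `(user, item, timestamp)` tuple layout, the function names, and the example data are assumptions.

```python
import random


def random_split(interactions, test_fraction, seed=0):
    """Sample test targets at random, ignoring when each interaction happened."""
    shuffled = list(interactions)
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def temporal_split(interactions, cutoff):
    """Train on everything before `cutoff`, test on everything from `cutoff` on."""
    train = [row for row in interactions if row[2] < cutoff]
    test = [row for row in interactions if row[2] >= cutoff]
    return train, test


# Hypothetical (user, item, timestamp) interactions.
interactions = [
    ("u1", "a", 1), ("u1", "b", 5),
    ("u2", "a", 2), ("u2", "c", 7),
    ("u3", "b", 3), ("u3", "c", 8),
]
train, test = temporal_split(interactions, cutoff=5)
random_train, random_test = random_split(interactions, test_fraction=0.5)
```

With the temporal split, no training interaction is later than any test interaction, so the model never sees information from the future. The random split offers no such guarantee.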

On Multi-Session Website Fingerprinting over TLS Handshake

Sep 19, 2020

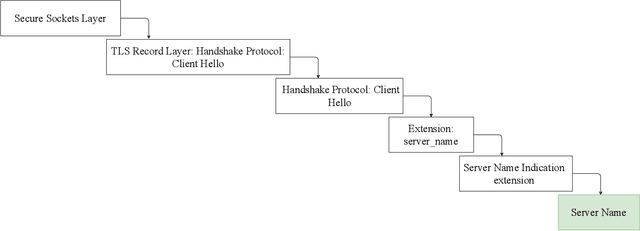

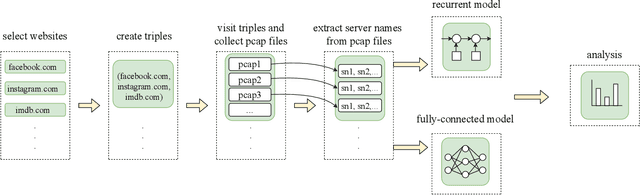

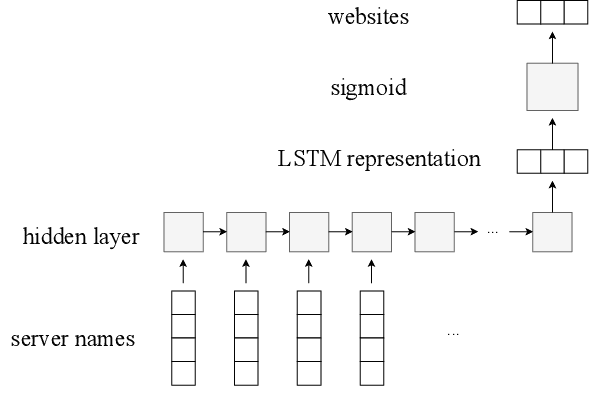

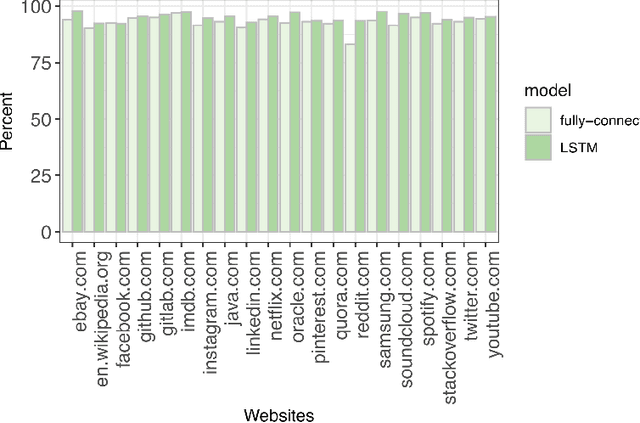

Analyzing users' Internet traffic data and activities affects users' experiences in different ways, from maintaining the quality of service on the Internet and providing users with high-quality recommendation systems to anomaly detection and secure connection. Considering that the Internet is a complex network, we cannot separate the packets belonging to each activity. Therefore, we need a model that can identify all the activities an Internet user performs in a given period of time. In this paper, we propose a deep learning approach to generate a multi-label classifier that can predict the websites visited by a user in a certain period. This model works by extracting the server names appearing in chronological order in the TLSv1.2 and TLSv1.3 Client Hello packets. We compare the results on the test data with a simple fully-connected neural network developed for the same purpose to show that using the time-sequential information improves the performance. For further evaluation, we test the model on a human-made dataset and a modified dataset to check the model's accuracy under different circumstances. Our proposed model achieves an accuracy of 95% on the test dataset and above 90% on both the modified dataset and the human-made dataset.
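The input and output encoding of such a multi-label classifier can be sketched as follows. The sketch assumes the server names have already been extracted from the Client Hello packets; it does not parse TLS traffic, and the host names, website labels, and helper names are all hypothetical.

```python
def build_vocab(server_names):
    """Assign an integer id to each server name, reserving 0 for unknown names."""
    vocab = {"<unk>": 0}
    for name in server_names:
        vocab.setdefault(name, len(vocab))
    return vocab


def encode_sequence(server_names, vocab):
    """Map server names to ids while preserving their chronological order."""
    return [vocab.get(name, vocab["<unk>"]) for name in server_names]


def multi_hot(visited_sites, all_sites):
    """Encode the set of visited websites as a 0/1 vector over `all_sites`."""
    return [1 if site in visited_sites else 0 for site in all_sites]


# Hypothetical server names observed in one time window, in order of appearance.
window = ["static.cdn.test", "www.news.test", "static.cdn.test", "api.video.test"]
vocab = build_vocab(window)
sequence_ids = encode_sequence(window, vocab)

# Hypothetical label space and ground truth for the same window.
site_labels = ["news", "video", "mail"]
target = multi_hot({"news", "video"}, site_labels)
```

A sequence model would consume `sequence_ids` and be trained to predict `target`, with one independent output per website, since several sites can be visited in the same window.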

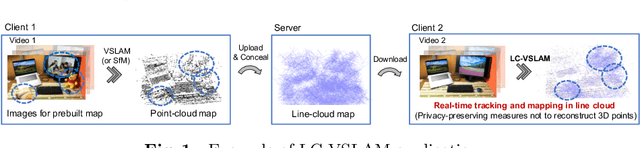

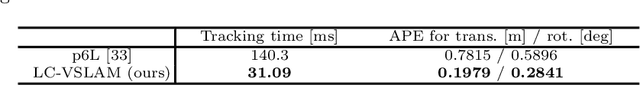

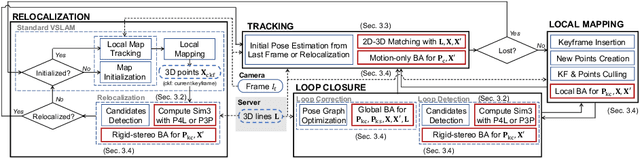

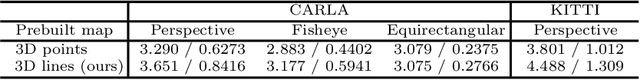

Privacy Preserving Visual SLAM

Jul 20, 2020

This study proposes a privacy-preserving Visual SLAM framework for estimating camera poses and performing bundle adjustment with mixed line and point clouds in real time. Previous studies have proposed localization methods to estimate a camera pose using a line-cloud map for a single image or a reconstructed point cloud. By converting a point cloud to a line cloud, these methods protect scene privacy against inversion attacks, which reconstruct the scene images from the point cloud. However, they are not directly applicable to a video sequence because they do not address computational efficiency. This is a critical issue to solve for estimating camera poses and performing bundle adjustment with mixed line and point clouds in real time. Moreover, there has been no study on a method to optimize a line-cloud map of a server with a point cloud reconstructed from a client video, because observation points on the image coordinates are not available, in order to prevent the inversion attacks, namely the reversibility of the 3D lines. The experimental results with synthetic and real data show that our Visual SLAM framework achieves the intended privacy-preserving formation and real-time performance using a line-cloud map.

DoubleFusion: Real-time Capture of Human Performances with Inner Body Shapes from a Single Depth Sensor

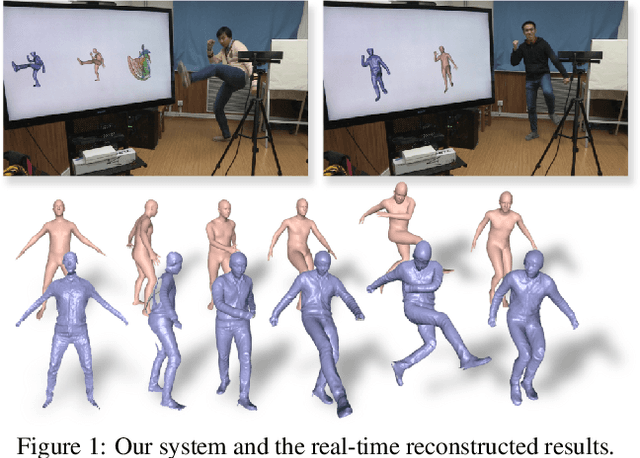

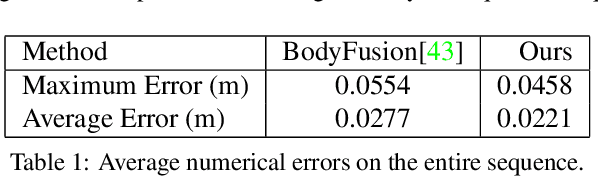

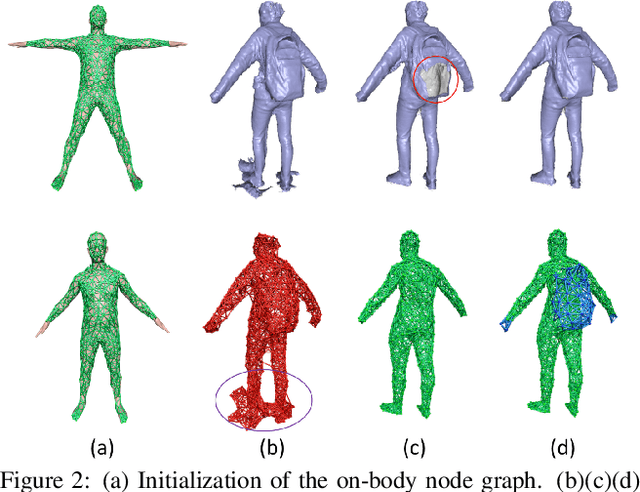

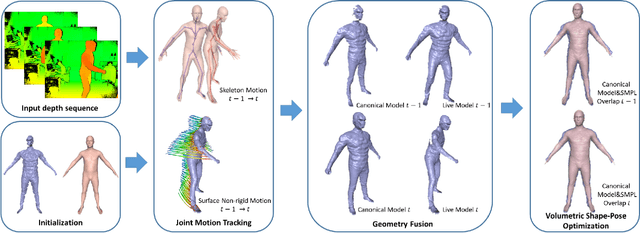

Apr 17, 2018

We propose DoubleFusion, a new real-time system that combines volumetric dynamic reconstruction with data-driven template fitting to simultaneously reconstruct detailed geometry, non-rigid motion and the inner human body shape from a single depth camera. One of the key contributions of this method is a double layer representation consisting of a complete parametric body shape inside, and a gradually fused outer surface layer. A pre-defined node graph on the body surface parameterizes the non-rigid deformations near the body, and a free-form dynamically changing graph parameterizes the outer surface layer far from the body, which allows more general reconstruction. We further propose a joint motion tracking method based on the double layer representation to enable robust and fast motion tracking performance. Moreover, the inner body shape is optimized online and forced to fit inside the outer surface layer. Overall, our method enables increasingly denoised, detailed and complete surface reconstructions, fast motion tracking performance and plausible inner body shape reconstruction in real time. In particular, experiments show improved fast motion tracking and loop closure performance in more challenging scenarios.
