"Time": models, code, and papers

Effect of Architectures and Training Methods on the Performance of Learned Video Frame Prediction

Aug 13, 2020

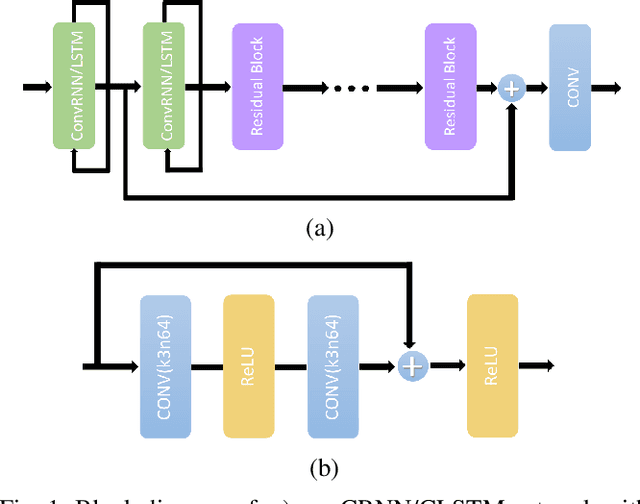

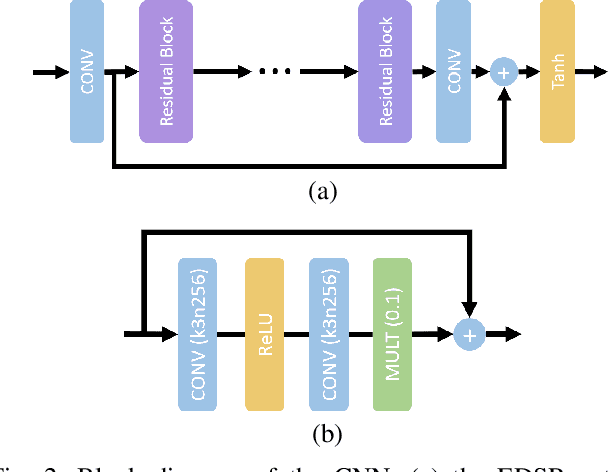

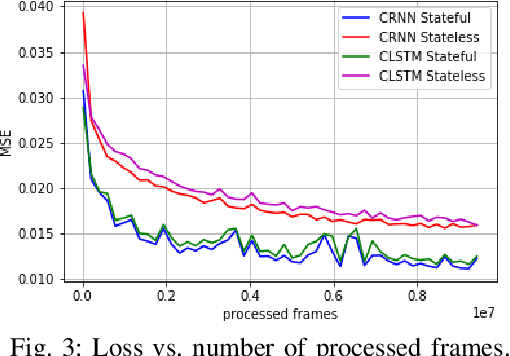

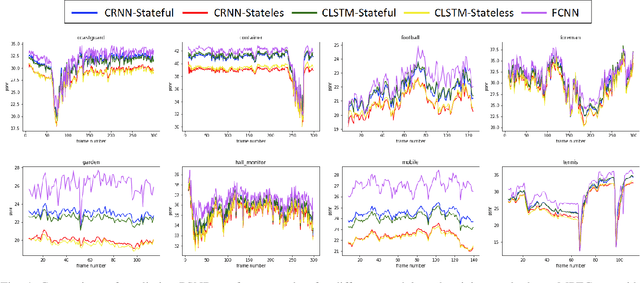

We analyze the performance of feedforward vs. recurrent neural network (RNN) architectures and associated training methods for learned frame prediction. To this end, we trained a residual fully convolutional neural network (FCNN), a convolutional RNN (CRNN), and a convolutional long short-term memory (CLSTM) network for next frame prediction using the mean square loss. We performed both stateless and stateful training for recurrent networks. Experimental results show that the residual FCNN architecture performs the best in terms of peak signal-to-noise ratio (PSNR) at the expense of higher training and test (inference) computational complexity. The CRNN can be trained stably and very efficiently using the stateful truncated backpropagation through time procedure, and it requires an order of magnitude less inference runtime to achieve near real-time frame prediction with an acceptable performance.
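The abstract ranks the networks by PSNR. As a minimal sketch of how that metric is commonly computed, assuming 8-bit frames flattened into plain lists (the function name `psnr` and the `max_value` parameter are illustrative, not taken from the paper):

```python
import math


def psnr(reference, predicted, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized frames.

    Frames are flat sequences of pixel values; max_value is the assumed
    peak pixel value (255 for 8-bit images).
    """
    if len(reference) != len(predicted) or not reference:
        raise ValueError("frames must be non-empty and the same size")
    # Mean squared error between the true frame and the predicted frame.
    mse = sum((r - p) ** 2 for r, p in zip(reference, predicted)) / len(reference)
    if mse == 0:
        return math.inf  # identical frames
    return 10.0 * math.log10(max_value ** 2 / mse)
```

Because PSNR decreases monotonically as the mean squared error grows, a network trained with the mean square loss is optimizing PSNR indirectly.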

Commission Fee is not Enough: A Hierarchical Reinforced Framework for Portfolio Management

Dec 23, 2020

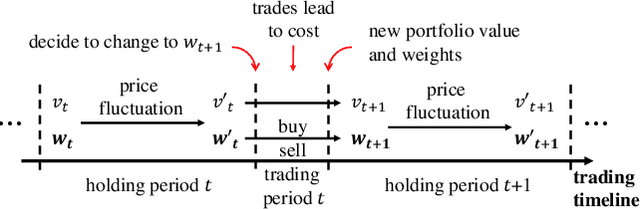

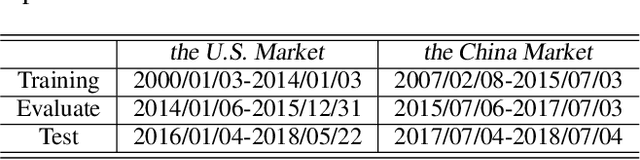

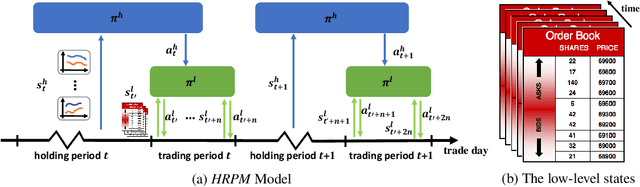

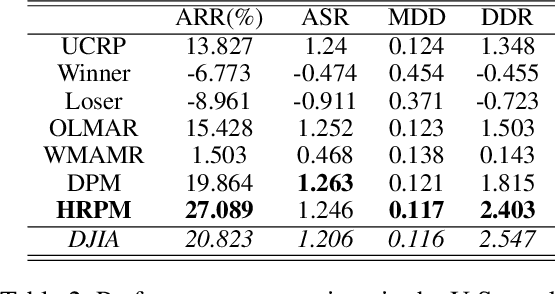

Portfolio management via reinforcement learning is at the forefront of fintech research, which explores how to optimally reallocate a fund into different financial assets over the long term by trial and error. Existing methods are impractical since they usually assume each reallocation can be finished immediately and thus ignore price slippage as part of the trading cost. To address these issues, we propose a hierarchical reinforced stock trading system for portfolio management (HRPM). Concretely, we decompose the trading process into a hierarchy of portfolio management over trade execution and train the corresponding policies. The high-level policy gives portfolio weights at a lower frequency to maximize the long-term profit and invokes the low-level policy to sell or buy the corresponding shares within a short time window at a higher frequency to minimize the trading cost. We train the two levels of policies via a pre-training scheme and an iterative training scheme for data efficiency. Extensive experimental results in the U.S. market and the China market demonstrate that HRPM achieves significant improvement against many state-of-the-art approaches.

Beyond I.I.D.: Three Levels of Generalization for Question Answering on Knowledge Bases

Dec 13, 2020

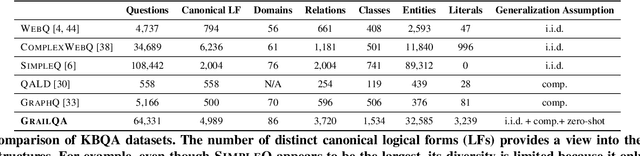

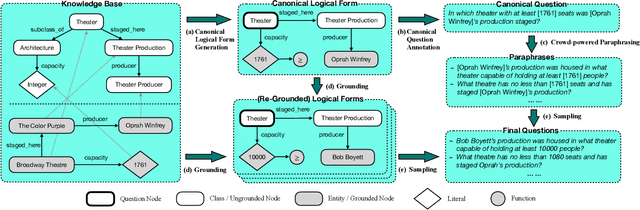

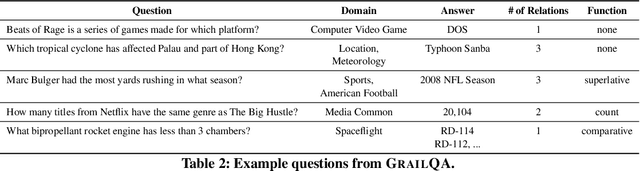

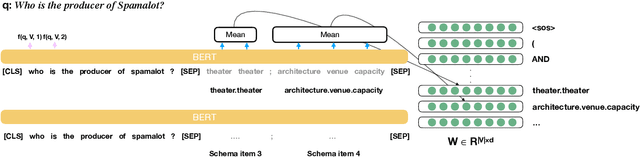

Existing studies on question answering on knowledge bases (KBQA) mainly operate with the standard i.i.d. assumption, i.e., the training distribution over questions is the same as the test distribution. However, i.i.d. may be neither reasonably achievable nor desirable on large-scale KBs because 1) the true user distribution is hard to capture and 2) randomly sampling training examples from the enormous space would be highly data-inefficient. Instead, we suggest that KBQA models should have three levels of built-in generalization: i.i.d., compositional, and zero-shot. To facilitate the development of KBQA models with stronger generalization, we construct and release a new large-scale, high-quality dataset with 64,331 questions, GrailQA, and provide evaluation settings for all three levels of generalization. In addition, we propose a novel BERT-based KBQA model. The combination of our dataset and model enables us to thoroughly examine and demonstrate, for the first time, the key role of pre-trained contextual embeddings like BERT in the generalization of KBQA.

Quantum Mathematics in Artificial Intelligence

Feb 01, 2021

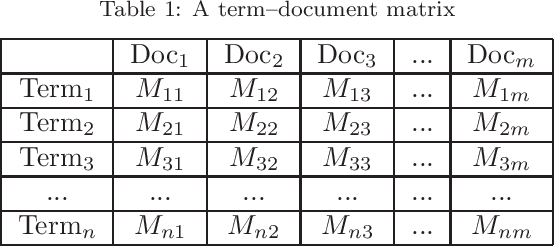

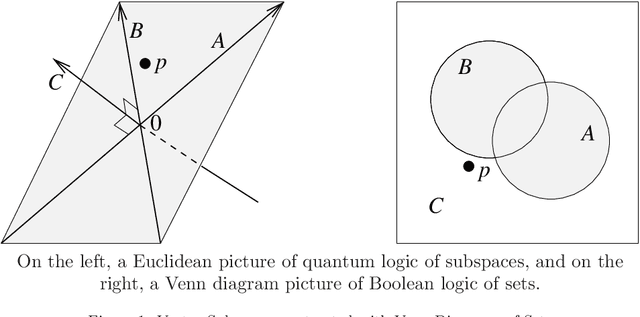

In the decade since 2010, successes in artificial intelligence have been at the forefront of computer science and technology, and vector space models have solidified a position at the forefront of artificial intelligence. At the same time, quantum computers have become much more powerful, and announcements of major advances are frequently in the news. The mathematical techniques underlying both these areas have more in common than is sometimes realized. Vector spaces took a position at the axiomatic heart of quantum mechanics in the 1930s, and this adoption was a key motivation for the derivation of logic and probability from the linear geometry of vector spaces. Quantum interactions between particles are modelled using the tensor product, which is also used to express objects and operations in artificial neural networks. This paper describes some of these common mathematical areas, including examples of how they are used in artificial intelligence (AI), particularly in automated reasoning and natural language processing (NLP). Techniques discussed include vector spaces, scalar products, subspaces and implication, orthogonal projection and negation, dual vectors, density matrices, positive operators, and tensor products. Application areas include information retrieval, categorization and implication, modelling word-senses and disambiguation, inference in knowledge bases, and semantic composition. Some of these approaches can potentially be implemented on quantum hardware. Many of the practical steps in this implementation are in early stages, and some are already realized. Explaining some of the common mathematical tools can help researchers in both AI and quantum computing further exploit these overlaps, recognizing and exploring new directions along the way.
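Among the techniques the abstract lists, orthogonal projection and negation are easy to illustrate. The following is a minimal sketch, assuming toy vectors stored as plain lists; the helper names `dot`, `project`, and `negate` are hypothetical, and the formula is the standard projection onto the orthogonal complement rather than anything specific to the paper:

```python
def dot(u, v):
    """Scalar (inner) product of two vectors of equal length."""
    return sum(x * y for x, y in zip(u, v))


def project(a, b):
    """Orthogonal projection of a onto the line spanned by b."""
    bb = dot(b, b)
    if bb == 0:
        raise ValueError("cannot project onto the zero vector")
    scale = dot(a, b) / bb
    return [scale * x for x in b]


def negate(a, b):
    """'a NOT b': remove from a its component along b.

    The result is orthogonal to b, so it has zero similarity with the
    unwanted direction under the scalar product.
    """
    return [x - y for x, y in zip(a, project(a, b))]


# Toy example: strip the second axis out of a query vector.
query = negate([1.0, 1.0], [0.0, 2.0])  # [1.0, 0.0]
```

In an information retrieval setting, `a` could be a query vector and `b` the vector of an unwanted word sense; documents aligned only with `b` then score zero against the negated query.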

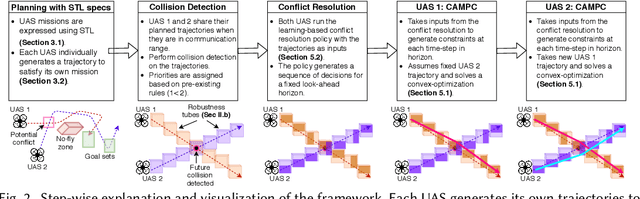

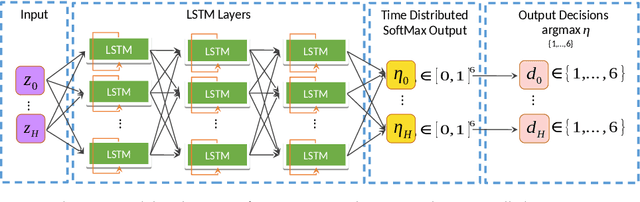

Learning-'N-Flying: A Learning-based, Decentralized Mission Aware UAS Collision Avoidance Scheme

Jan 25, 2021

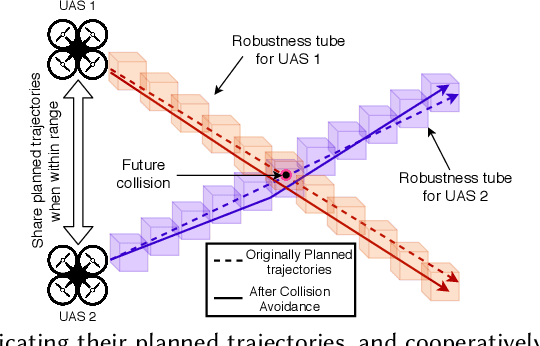

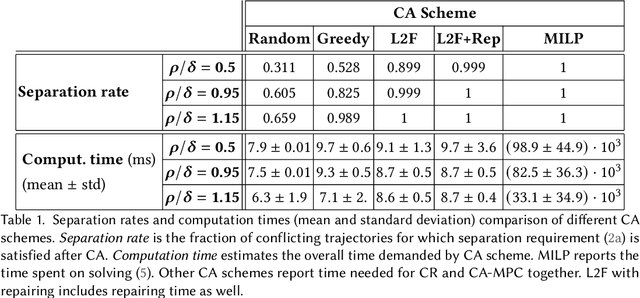

Urban Air Mobility, the scenario where hundreds of manned and Unmanned Aircraft Systems (UAS) carry out a wide variety of missions (e.g. moving humans and goods within the city), is gaining acceptance as a transportation solution of the future. One of the key requirements for this to happen is safely managing the air traffic in these urban airspaces. Due to the expected density of the airspace, this requires fast autonomous solutions that can be deployed online. We propose Learning-'N-Flying (LNF), a multi-UAS Collision Avoidance (CA) framework. It is decentralized, works on-the-fly, and allows autonomous UAS managed by different operators to safely carry out complex missions, represented using Signal Temporal Logic, in a shared airspace. We initially formulate the problem of predictive collision avoidance for two UAS as a mixed-integer linear program, and show that it is intractable to solve online. Instead, we first develop Learning-to-Fly (L2F) by combining: a) learning-based decision-making, and b) decentralized convex optimization-based control. LNF extends L2F to cases where there are more than two UAS on a collision path. Through extensive simulations, we show that our method can run online (computation time on the order of milliseconds), and under certain assumptions has failure rates of less than 1% in the worst case, improving to near 0% in more relaxed operations. We show the applicability of our scheme to a wide variety of settings through multiple case studies.

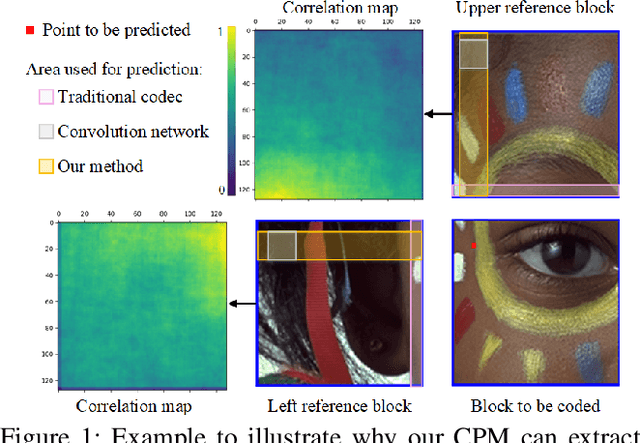

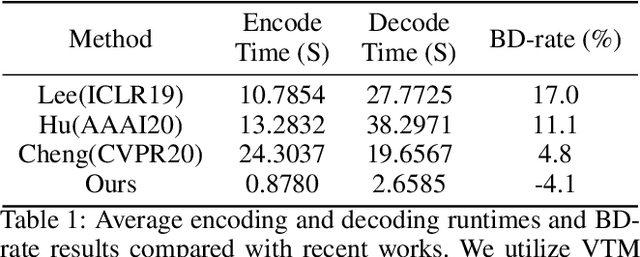

Learned Block-based Hybrid Image Compression

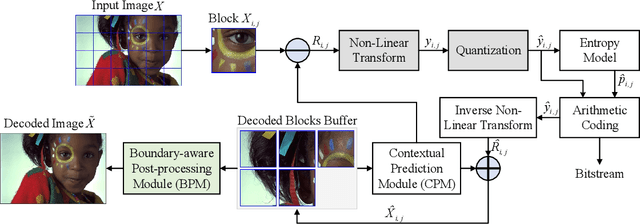

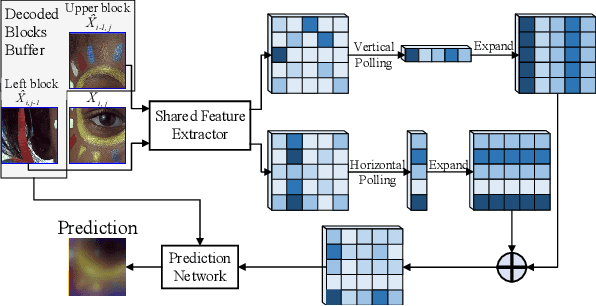

Jan 18, 2021

Recent works on learned image compression perform encoding and decoding processes in a full-resolution manner, resulting in two problems when deployed for practical applications. First, parallel acceleration of the autoregressive entropy model cannot be achieved due to serial decoding. Second, full-resolution inference often causes the out-of-memory (OOM) problem with limited GPU resources, especially for high-resolution images. Block partition is a good design choice to handle the above issues, but it brings about new challenges in reducing the redundancy between blocks and eliminating block effects. To tackle the above challenges, this paper provides a learned block-based hybrid image compression (LBHIC) framework. Specifically, we introduce explicit intra prediction into a learned image compression framework to utilize the relation among adjacent blocks. Going beyond the context modeling by linear weighting of neighbor pixels used in traditional codecs, we propose a contextual prediction module (CPM) that better captures long-range correlations by using strip pooling to extract the most relevant information in the neighboring latent space, thus achieving effective information prediction. Moreover, to alleviate blocking artifacts, we further propose a boundary-aware postprocessing module (BPM) with the edge importance taken into account. Extensive experiments demonstrate that the proposed LBHIC codec outperforms VVC, with a bit-rate saving of 4.1%, and reduces the decoding time by approximately 86.7% compared with that of state-of-the-art learned image compression methods.

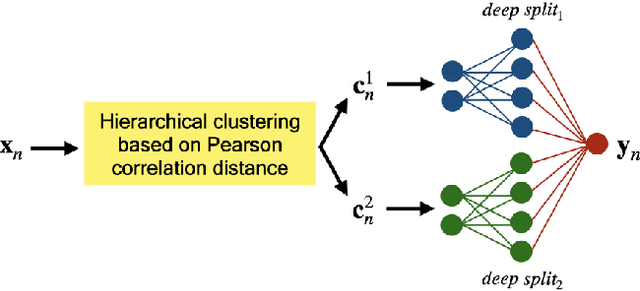

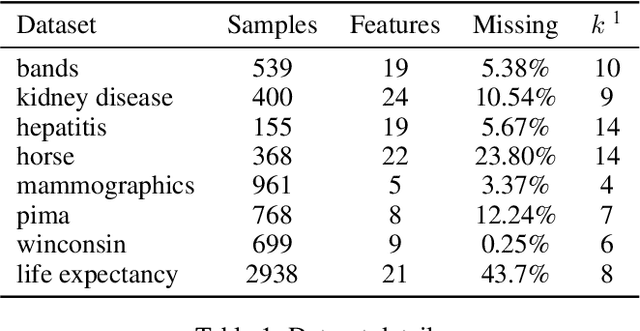

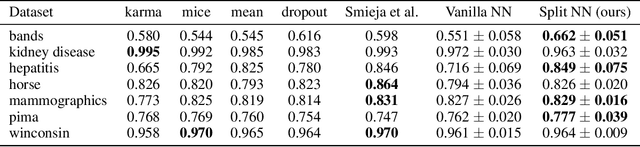

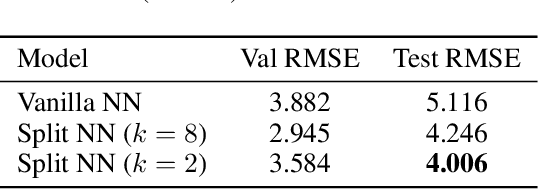

Robustness to Missing Features using Hierarchical Clustering with Split Neural Networks

Nov 19, 2020

The problem of missing data has been persistent for a long time and poses a major obstacle in machine learning and statistical data analysis. Past works in this field have tried using various data imputation techniques to fill in the missing data, or training neural networks (NNs) with the missing data. In this work, we propose a simple yet effective approach that clusters similar input features together using hierarchical clustering and then trains proportionately split neural networks with a joint loss. We evaluate this approach on a series of benchmark datasets and show promising improvements even with simple imputation techniques. We attribute this to learning through clusters of similar features in our model architecture. The source code is available at https://github.com/usarawgi911/Robustness-to-Missing-Features

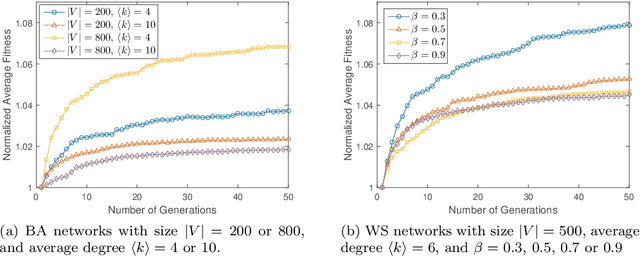

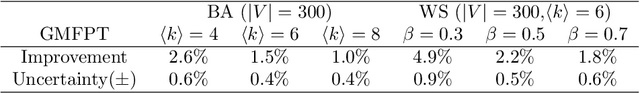

Selection of Random Walkers that Optimizes the Global Mean First-Passage Time for Search in Complex Networks

Dec 12, 2018

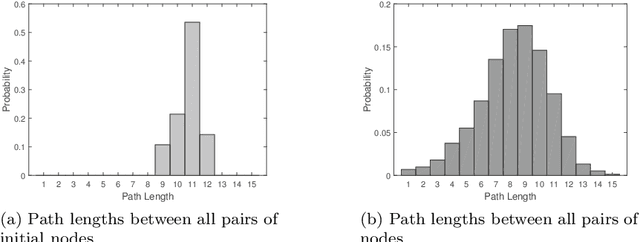

We design a method to optimize the global mean first-passage time (GMFPT) of multiple random walkers searching in complex networks for a general target, without specifying the property of the target node. According to the Laplace-transformed formula of the GMFPT, we can equivalently minimize the overlap between the probability distributions of sites visited by the random walkers. We employ a mutation-only genetic algorithm to solve this optimization problem using a population of walkers with different starting positions and a corresponding mutation matrix to modify them. The numerical experiments on two kinds of random networks (WS and BA) show satisfactory results in selecting the origins for the walkers to achieve minimum overlap. Our method thus provides guidance for setting up the search process by multiple random walkers on complex networks.
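To make the first-passage quantity concrete, here is a minimal single-walker sketch, assuming an unweighted, connected graph given as an adjacency dictionary. It is not the paper's multi-walker method or its genetic algorithm; the function names and the simple iterative solver are illustrative assumptions. It uses the standard recurrence T(i) = 1 + mean of T(j) over the neighbours j of i, with T(target) = 0.

```python
def mean_first_passage_times(adjacency, target, tol=1e-12, max_iter=100_000):
    """Expected number of steps for an unbiased random walker to first
    reach `target`, starting from each node of a connected graph."""
    times = {node: 0.0 for node in adjacency}
    for _ in range(max_iter):
        largest_change = 0.0
        for node, neighbours in adjacency.items():
            if node == target:
                continue  # the target is absorbing: T(target) stays 0
            # One step is taken, then the walker continues from a
            # uniformly chosen neighbour.
            updated = 1.0 + sum(times[n] for n in neighbours) / len(neighbours)
            largest_change = max(largest_change, abs(updated - times[node]))
            times[node] = updated
        if largest_change < tol:
            break
    return times


def target_averaged_mfpt(adjacency, start):
    """Mean first-passage time from `start`, averaged over all other
    nodes taken as the target (no assumption about which node it is)."""
    others = [node for node in adjacency if node != start]
    total = sum(mean_first_passage_times(adjacency, t)[start] for t in others)
    return total / len(others)


# Path graph 0 - 1 - 2: reaching node 2 from node 0 takes 4 steps on average.
path = {0: [1], 1: [0, 2], 2: [1]}
```

Averaging over every possible target mirrors the abstract's setting of searching for a general target; choosing good starting positions for several walkers is the optimization the paper addresses and is not attempted here.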

* 5 pages, 2 figures, accepted at ICCS'17

Machine learning based automated identification of thunderstorms from anemometric records using shapelet transform

Jan 10, 2021

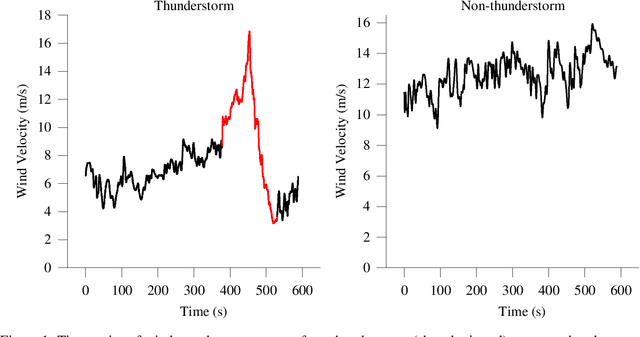

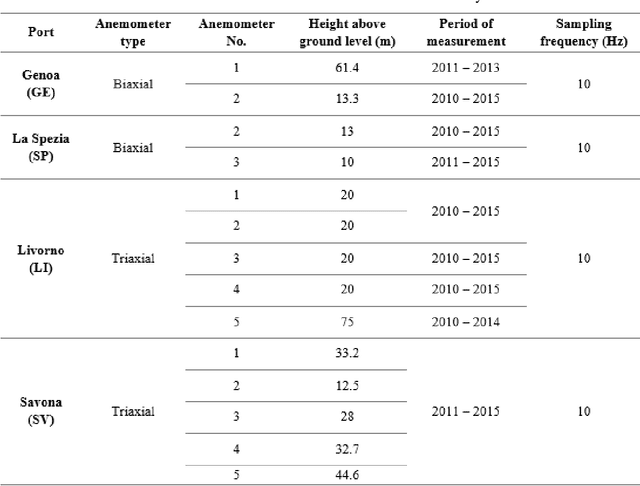

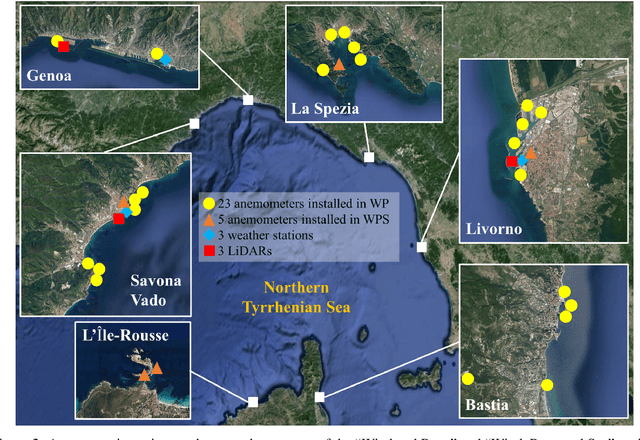

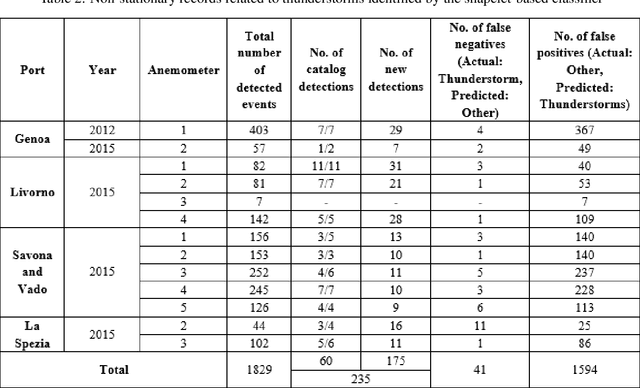

Detection of thunderstorms is important to the wind hazard community to better understand extreme wind field characteristics and associated wind-induced load effects on structures. This paper contributes to this effort by proposing a new course of research that uses machine learning techniques, independent of wind-statistics-based parameters, to autonomously identify and separate thunderstorms from large databases containing high-frequency sampled continuous wind speed measurements. In this context, the use of the shapelet transform is proposed to identify key individual attributes distinctive to extreme wind events based on similarity of shape of their time series. This novel shape-based representation, when combined with machine learning algorithms, yields a practical event detection procedure that requires minimal domain expertise. In this paper, the shapelet transform along with a Random Forest classifier is employed for the identification of thunderstorms from 1 year of data from 14 ultrasonic anemometers that are a part of an extensive in situ wind monitoring network in the Northern Mediterranean ports. A collective total of 235 non-stationary records associated with thunderstorms were identified using this method. The results enlarge the pool of thunderstorm data, enabling a more comprehensive understanding of a wide variety of thunderstorms that have not been previously detected using conventional gust factor-based methods.
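The core of a shapelet transform is the distance between a short pattern and its best-matching window in a longer series. The sketch below illustrates that idea under simplifying assumptions: plain Euclidean distance with no normalization (practical implementations often z-normalize each window), toy values, and hypothetical function names. It is not the paper's pipeline.

```python
import math


def shapelet_distance(series, shapelet):
    """Smallest Euclidean distance between `shapelet` and any window of
    the same length slid along `series`."""
    m = len(shapelet)
    if m == 0 or m > len(series):
        raise ValueError("shapelet must be non-empty and no longer than the series")
    best = math.inf
    for start in range(len(series) - m + 1):
        window = series[start:start + m]
        dist = math.sqrt(sum((w - s) ** 2 for w, s in zip(window, shapelet)))
        best = min(best, dist)
    return best


def shapelet_transform(series_list, shapelets):
    """Map each time series to a feature vector of distances, one per shapelet."""
    return [[shapelet_distance(series, sh) for sh in shapelets]
            for series in series_list]


# A series containing a sharp ramp-up and decay matches the peak-shaped shapelet exactly.
features = shapelet_transform([[0, 0, 5, 9, 5, 0], [1, 1, 1, 1, 1, 1]], [[5, 9, 5]])
```

The resulting distance vectors are ordinary tabular features, which is why they can be passed to a generic classifier such as the Random Forest mentioned in the abstract.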

Design of Experiments for Verifying Biomolecular Networks

Nov 25, 2020

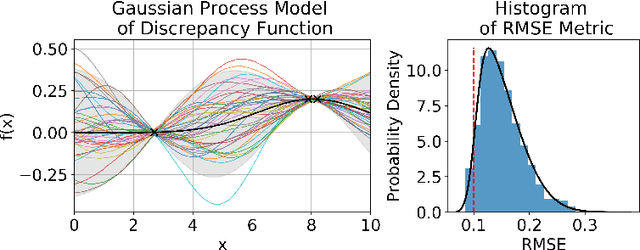

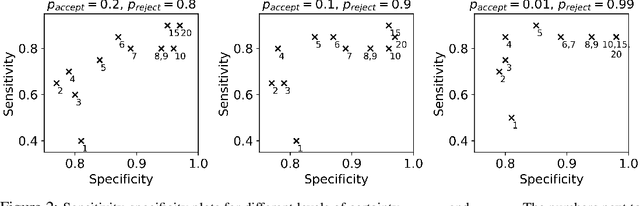

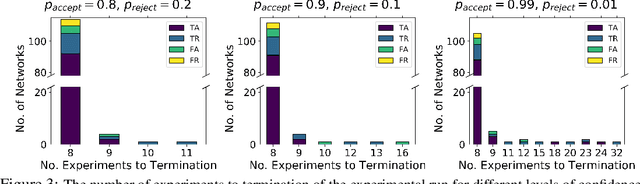

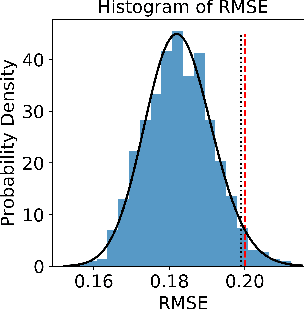

There is a growing trend in molecular and synthetic biology of using mechanistic (non-machine-learning) models to design biomolecular networks. Once designed, these networks need to be validated by experimental results to ensure the theoretical network correctly models the true system. However, these experiments can be expensive and time-consuming. We propose a design of experiments approach for validating these networks efficiently. Gaussian processes are used to construct a probabilistic model of the discrepancy between experimental results and the designed response, and a Bayesian optimization strategy is then used to select the next sample points. We compare different design criteria and develop a stopping criterion based on a metric that quantifies this discrepancy over the whole surface, and its uncertainty. We test our strategy on simulated data from computer models of biochemical processes.
