"Time": models, code, and papers

MPC-Based Hierarchical Task Space Control of Underactuated and Constrained Robots for Execution of Multiple Tasks

Sep 13, 2020

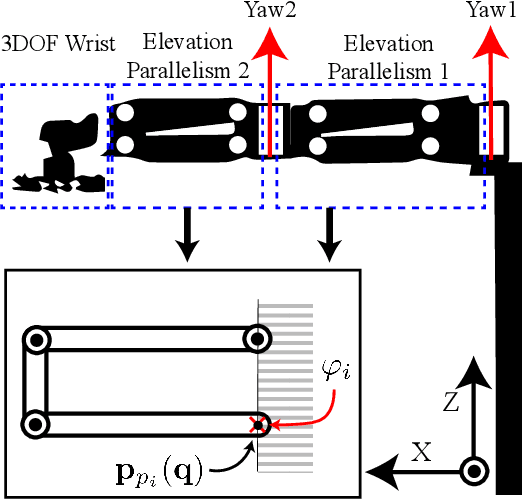

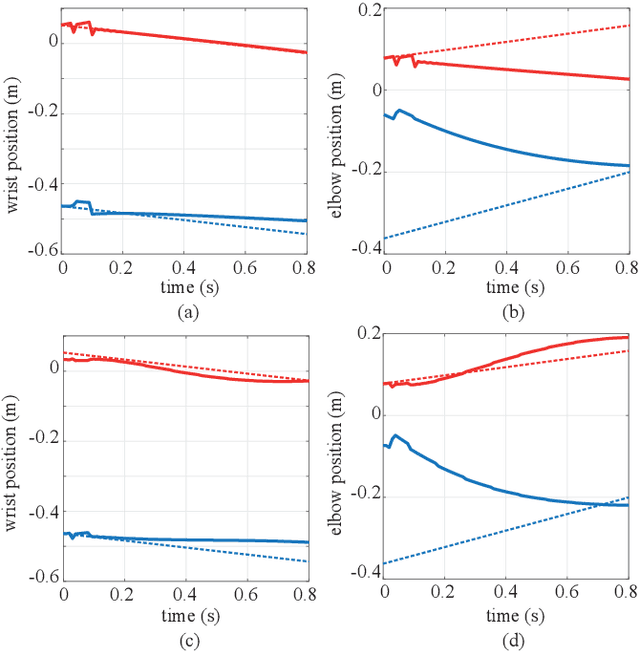

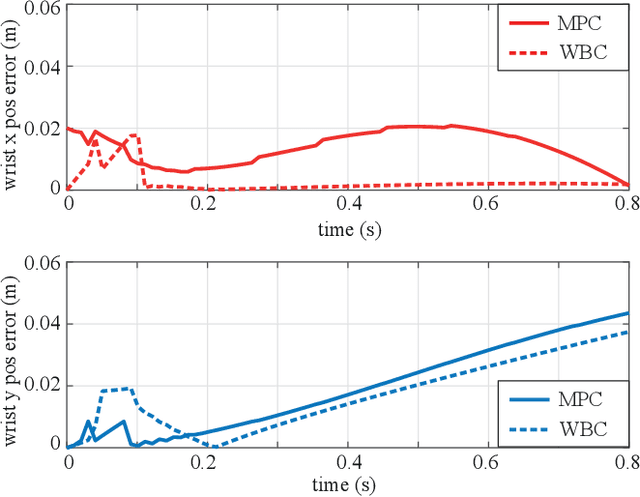

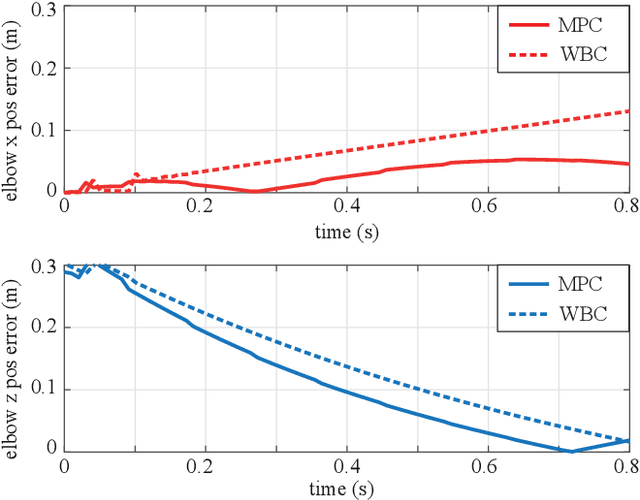

This paper proposes an MPC-based controller to efficiently execute multiple hierarchical tasks for underactuated and constrained robotic systems. Existing task-space controllers or whole-body controllers solve instantaneous optimization problems given task trajectories and the robot plant dynamics. However, the task-space control method we propose here relies on the prediction of future state trajectories and the corresponding costs-to-go terms over a finite time-horizon for computing control commands. We employ acceleration energy error as the performance index for the optimization problem and extend it over the finite-time horizon of our MPC. Our approach employs quadratically constrained quadratic programming, which includes quadratic constraints to handle multiple hierarchical tasks, and is computationally more efficient than nonlinear MPC-based approaches that rely on nonlinear programming. We validate our approach using numerical simulations of a new type of robot manipulator system, which contains underactuated and constrained mechanical structures.

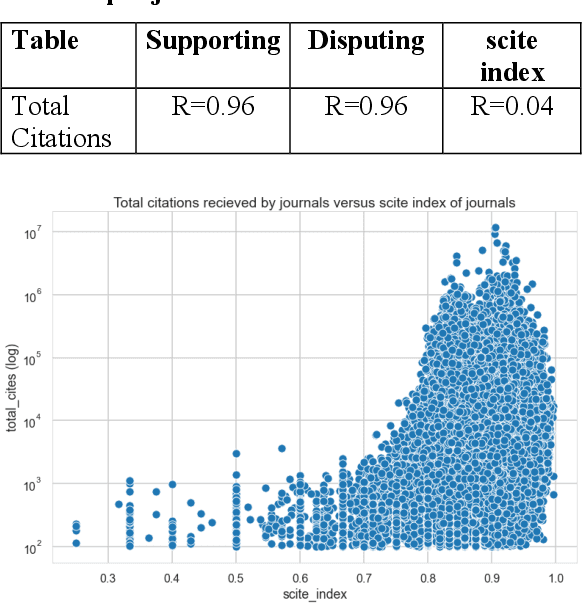

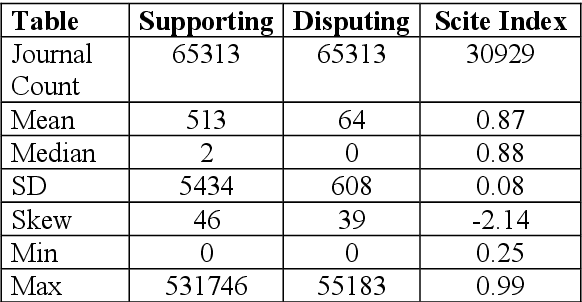

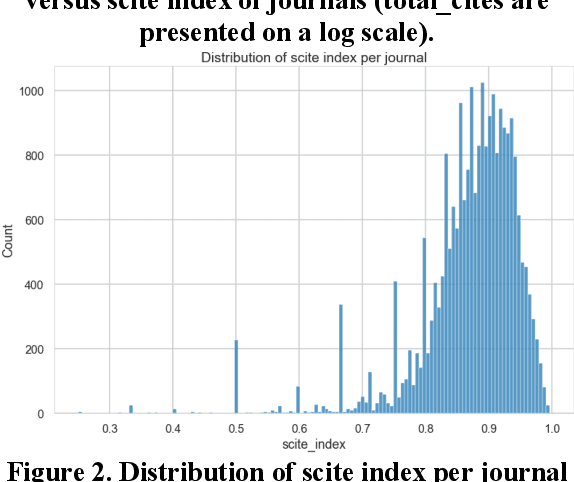

How are journals cited? characterizing journal citations by type of citation

Feb 22, 2021

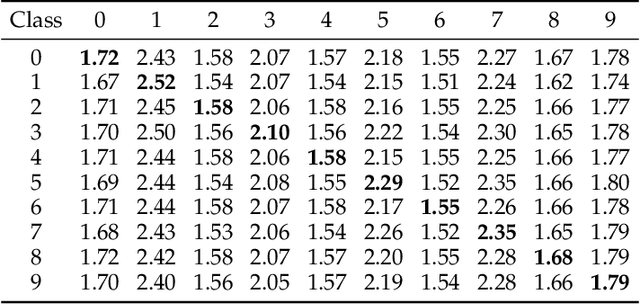

Evaluation of journals for quality is one of the dominant themes of bibliometrics, since journals are the primary venue for vetting and distributing scholarship. There are many criticisms of quantifying journal impact with bibliometrics, including disciplinary differences among journals, what source materials are used, time windows for the inclusion of works to measure, and skewness of citation distributions (Lariviere & Sugimoto, 2019). However, despite various attempts to remediate these in newly proposed indicators such as SJR, SNIP, and Eigenfactor (Walters, 2017), indicators still remain based on citation counts and fail to acknowledge the critical difference that the type of citation makes, whether it supports or disputes a work, when quantifying journal impact. While various programs have been suggested to apply and encompass citation content analysis within bibliometrics projects, citation content analysis had not been done at the scale needed to supplement quantitative journal citation analysis until the scite citation index was produced. Using this citation index, which contains citation types based on citation function (supporting, disputing, or mentioning), we present initial results on the statistical characterization of citations to journals based on citation function. We also present initial results of characterizing the ratio of supports and disputes received by a journal as a potential indicator of quality and show two interesting results: the ratio of supports and disputes does not correlate with total citations, and the distribution of this ratio is not skewed, showing a normal distribution. We conclude with a proposal for future research using citation analysis qualified by citation function as well as the implications of performing bibliometrics tasks such as research evaluation and information retrieval using citation function.

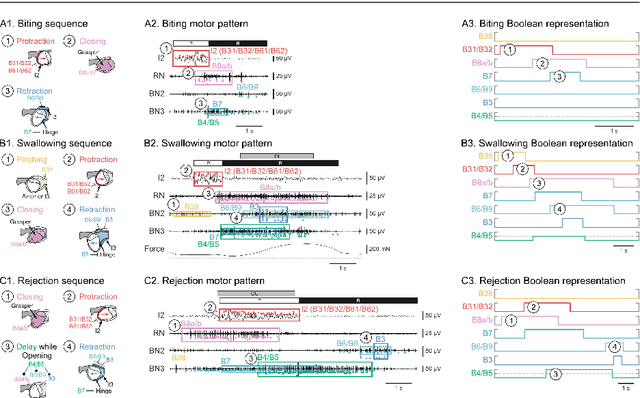

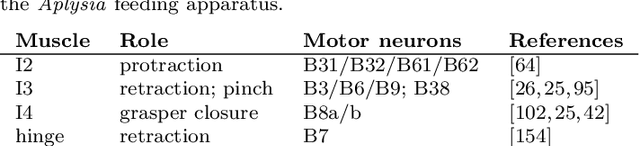

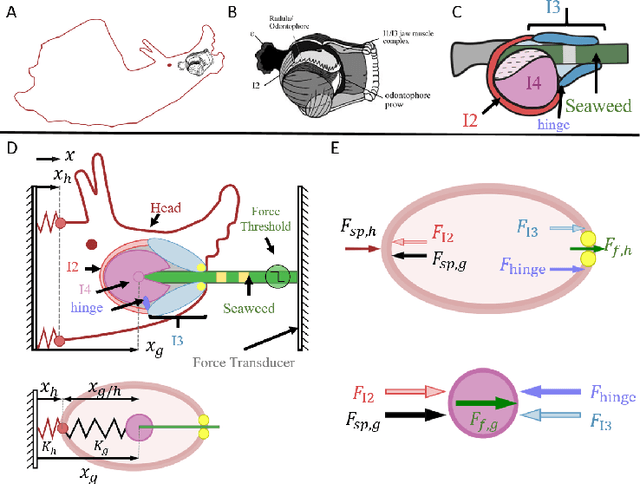

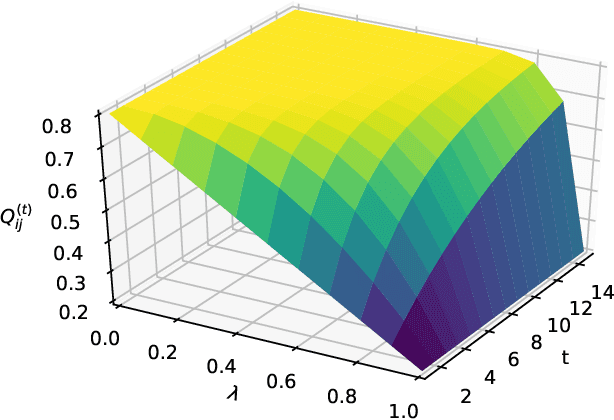

Control for Multifunctionality: Bioinspired Control Based on Feeding in Aplysia californica

Aug 11, 2020

Animals exhibit remarkable feats of behavioral flexibility and multifunctional control that remain challenging for robotic systems. The neural and morphological basis of multifunctionality in animals can provide a source of bio-inspiration for robotic controllers. However, many existing approaches to modeling biological neural networks rely on computationally expensive models and tend to focus solely on the nervous system, often neglecting the biomechanics of the periphery. As a consequence, while these models are excellent tools for neuroscience, they fail to predict functional behavior in real time, which is a critical capability for robotic control. To meet the need for real-time multifunctional control, we have developed a hybrid Boolean model framework capable of modeling neural bursting activity and simple biomechanics at speeds faster than real time. Using this approach, we present a multifunctional model of Aplysia californica feeding that qualitatively reproduces three key feeding behaviors (biting, swallowing, and rejection), demonstrates behavioral switching in response to external sensory cues, and incorporates both known neural connectivity and a simple bioinspired mechanical model of the feeding apparatus. We demonstrate that the model can be used for formulating testable hypotheses and discuss the implications of this approach for robotic control and neuroscience.

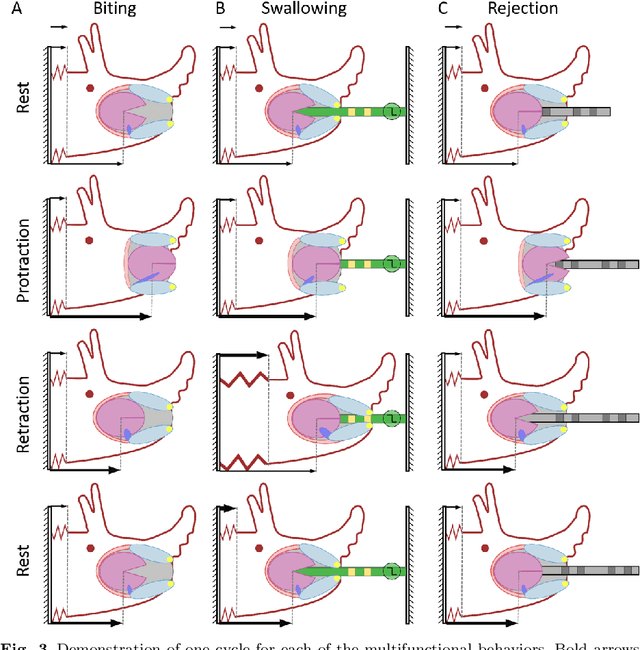

On a Bernoulli Autoregression Framework for Link Discovery and Prediction

Jul 23, 2020

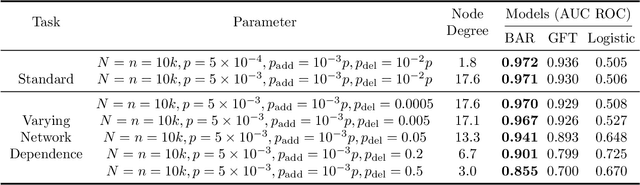

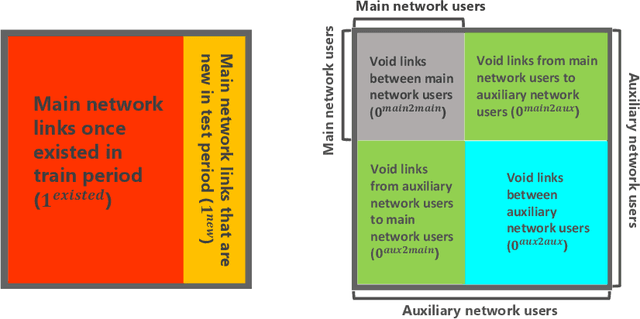

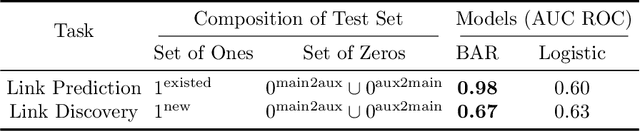

We present a dynamic prediction framework for binary sequences that is based on a Bernoulli generalization of the auto-regressive process. Our approach lends itself easily to variants of the standard link prediction problem for a sequence of time-dependent networks. Focusing on this dynamic network link prediction/recommendation task, we propose a novel problem that exploits additional information via a much larger sequence of auxiliary networks and has important real-world relevance. To allow discovery of links that do not exist in the available data, our model estimation framework introduces a regularization term that presents a trade-off between the conventional link prediction and this discovery task. In contrast to existing work, our stochastic-gradient-based estimation approach is highly efficient and can scale to networks with millions of nodes. We show extensive empirical results on actual product-usage-based time-dependent networks and also present results on a Reddit-based data set of time-dependent sentiment sequences.
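To make the idea of an autoregressive model for binary sequences concrete, here is a minimal sketch. It assumes a logistic link, so that the probability of a 1 at time t is a sigmoid of a weighted sum of the previous `order` values, and fits the weights by plain stochastic gradient descent on the log-loss. The link function, the function names `fit_bernoulli_ar` and `predict_next`, and all hyperparameters are illustrative assumptions; the abstract does not give the paper's exact parameterisation, and the regularization term for link discovery is not reproduced here.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def fit_bernoulli_ar(x, order=1, lr=0.1, epochs=200, seed=0):
    """Fit P(x_t = 1 | past) = sigmoid(b + w . lags) by SGD (assumed logistic link)."""
    x = np.asarray(x, dtype=float)
    rng = np.random.default_rng(seed)
    w = np.zeros(order)
    b = 0.0
    steps = np.arange(order, x.size)
    for _ in range(epochs):
        # Visit the time steps in a fresh random order each epoch.
        for t in rng.permutation(steps):
            lags = x[t - order:t][::-1]           # most recent value first
            err = sigmoid(b + w @ lags) - x[t]    # log-loss gradient w.r.t. the logit
            w -= lr * err * lags
            b -= lr * err
    return w, b

def predict_next(x, w, b):
    """Probability that the next element of the binary sequence x is 1."""
    lags = np.asarray(x, dtype=float)[-w.size:][::-1]
    return float(sigmoid(b + w @ lags))

# Toy usage on a strictly alternating sequence (hypothetical data).
w, b = fit_bernoulli_ar([0, 1] * 20, order=1)
p_after_zero = predict_next([0, 1, 0], w, b)
p_after_one = predict_next([1, 0, 1], w, b)
```

In a link-prediction setting, each candidate edge would contribute one such binary sequence over time; how the parameters are shared across edges is a modelling choice the abstract leaves open.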

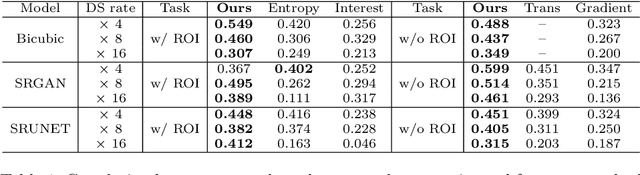

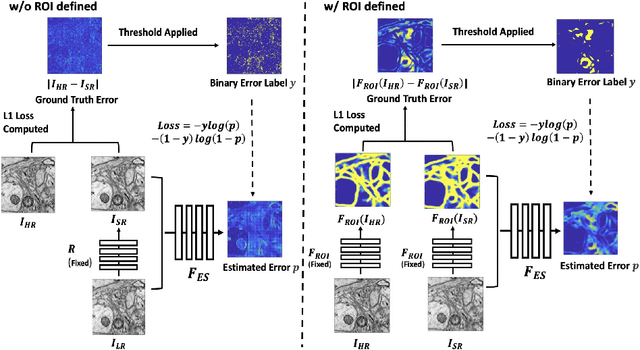

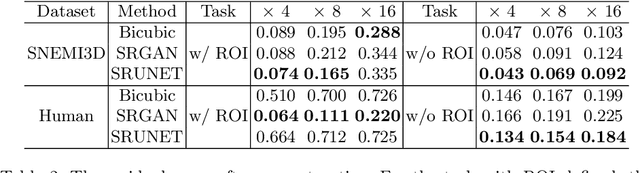

Learning Guided Electron Microscopy with Active Acquisition

Jan 07, 2021

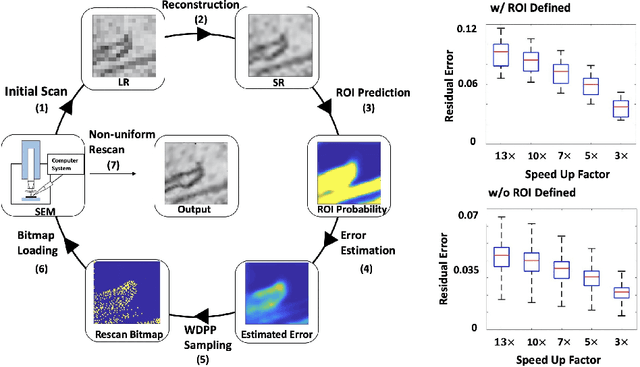

Single-beam scanning electron microscopes (SEM) are widely used to acquire massive data sets for biomedical study, material analysis, and fabrication inspection. Datasets are typically acquired with uniform acquisition: applying the electron beam with the same power and duration to all image pixels, even if there is great variety in the pixels' importance for eventual use. Many SEMs are now able to move the beam to any pixel in the field of view without delay, enabling them, in principle, to invest their time budget more effectively with non-uniform imaging. In this paper, we show how to use deep learning to accelerate and optimize single-beam SEM acquisition of images. Our algorithm rapidly collects an information-lossy image (e.g. low resolution) and then applies a novel learning method to identify a small subset of pixels to be collected at higher resolution based on a trade-off between the saliency and spatial diversity. We demonstrate the efficacy of this novel technique for active acquisition by speeding up the task of collecting connectomic datasets for neurobiology by up to an order of magnitude.

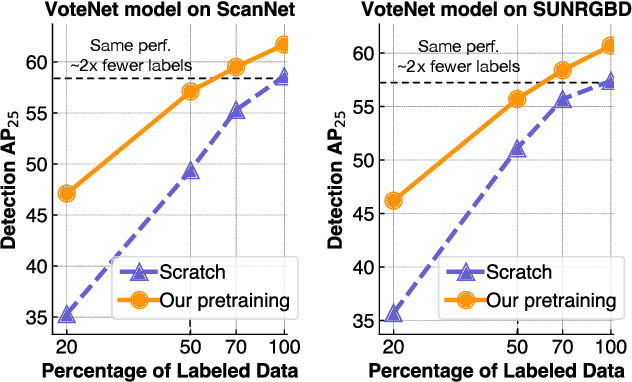

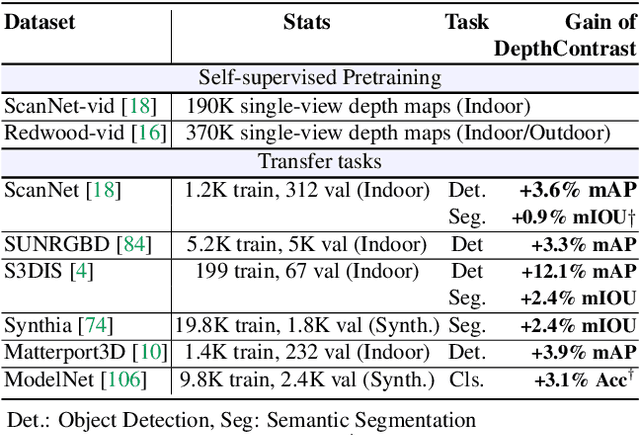

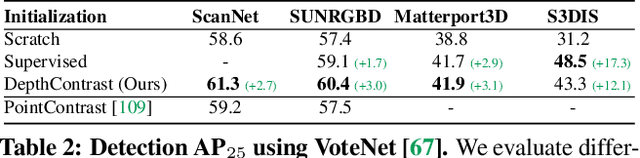

Self-Supervised Pretraining of 3D Features on any Point-Cloud

Jan 07, 2021

Pretraining on large labeled datasets is a prerequisite to achieve good performance in many computer vision tasks like 2D object recognition, video classification, etc. However, pretraining is not widely used for 3D recognition tasks where state-of-the-art methods train models from scratch. A primary reason is the lack of large annotated datasets because 3D data is both difficult to acquire and time-consuming to label. We present a simple self-supervised pretraining method that can work with any 3D data - single or multiview, indoor or outdoor, acquired by varied sensors, without 3D registration. We pretrain standard point cloud and voxel based model architectures, and show that joint pretraining further improves performance. We evaluate our models on 9 benchmarks for object detection, semantic segmentation, and object classification, where they achieve state-of-the-art results and can outperform supervised pretraining. We set a new state-of-the-art for object detection on ScanNet (69.0% mAP) and SUNRGBD (63.5% mAP). Our pretrained models are label efficient and improve performance for classes with few examples.

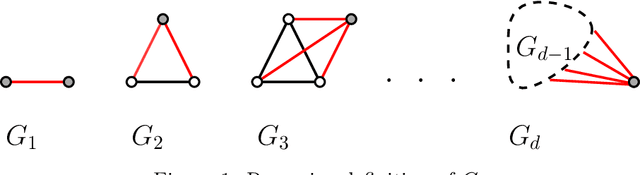

Recurrent Submodular Welfare and Matroid Blocking Bandits

Feb 16, 2021

A recent line of research focuses on the study of the stochastic multi-armed bandits problem (MAB), in the case where temporal correlations of specific structure are imposed between the player's actions and the reward distributions of the arms (Kleinberg and Immorlica [FOCS18], Basu et al. [NeurIPS19]). As opposed to the standard MAB setting, where the optimal solution in hindsight can be trivially characterized, these correlations lead to (sub-)optimal solutions that exhibit interesting dynamical patterns -- a phenomenon that yields new challenges both from an algorithmic as well as a learning perspective. In this work, we extend the above direction to a combinatorial bandit setting and study a variant of stochastic MAB, where arms are subject to matroid constraints and each arm becomes unavailable (blocked) for a fixed number of rounds after each play. A natural common generalization of the state-of-the-art for blocking bandits, and that for matroid bandits, yields a $(1-\frac{1}{e})$-approximation for partition matroids, yet it only guarantees a $\frac{1}{2}$-approximation for general matroids. In this paper we develop new algorithmic ideas that allow us to obtain a polynomial-time $(1 - \frac{1}{e})$-approximation algorithm (asymptotically and in expectation) for any matroid, and thus to control the $(1-\frac{1}{e})$-approximate regret. A key ingredient is the technique of correlated (interleaved) scheduling. Along the way, we discover an interesting connection to a variant of Submodular Welfare Maximization, for which we provide (asymptotically) matching upper and lower approximability bounds.

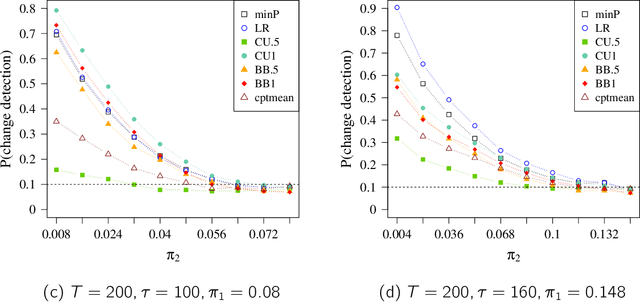

Exact Tests for Offline Changepoint Detection in Multichannel Binary and Count Data with Application to Networks

Aug 20, 2020

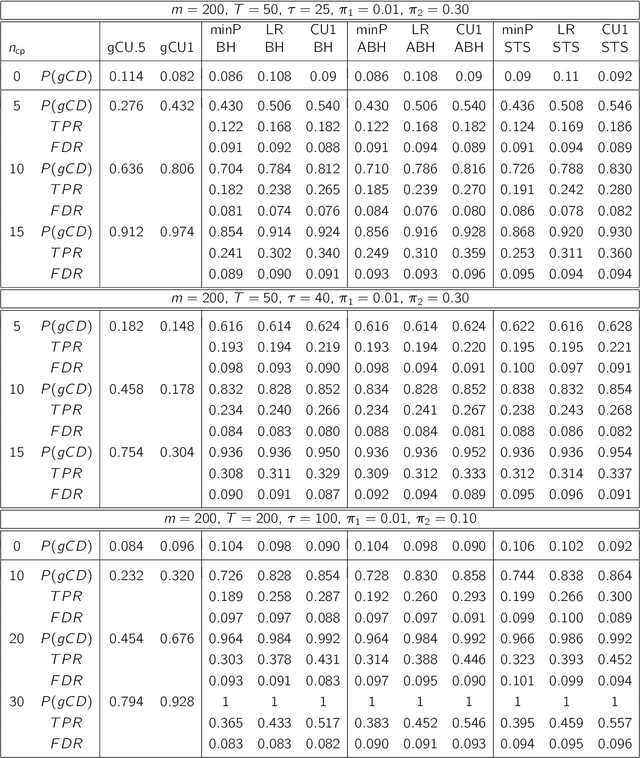

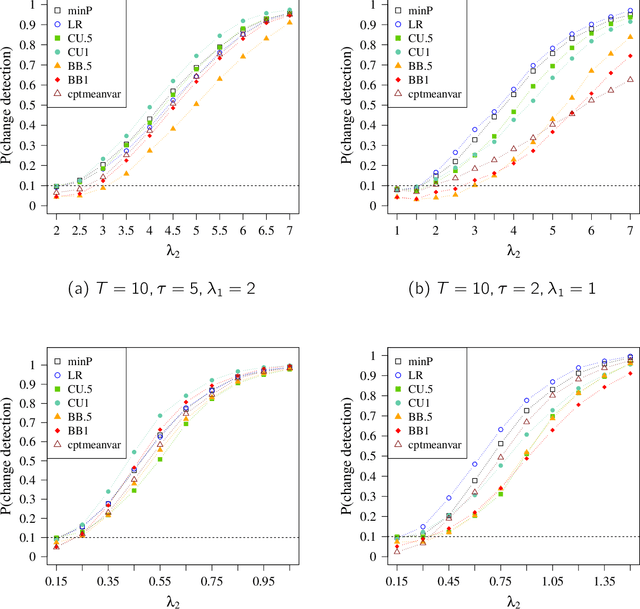

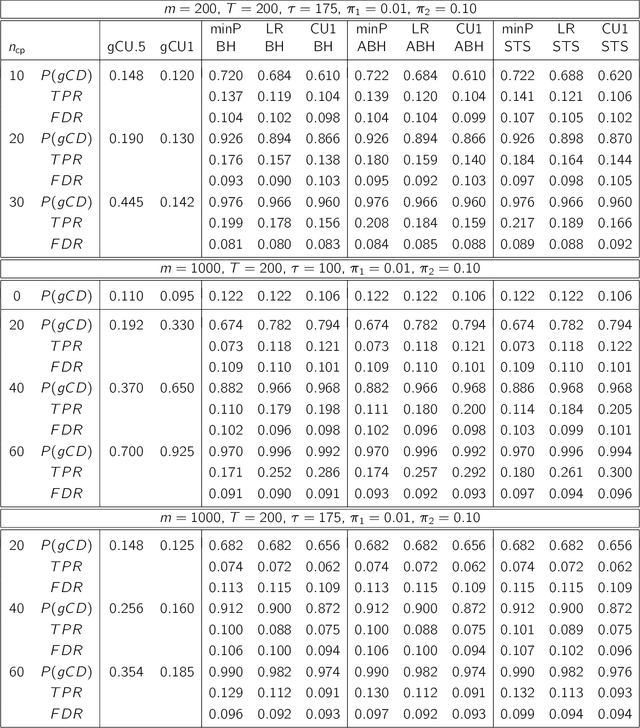

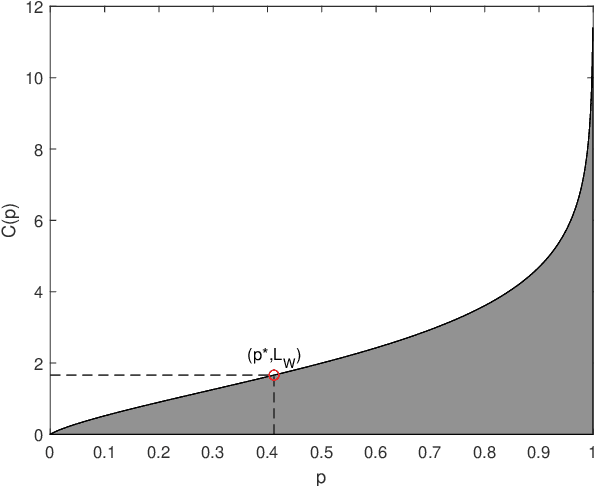

We consider offline detection of a single changepoint in binary and count time-series. We compare exact tests based on the cumulative sum (CUSUM) and the likelihood ratio (LR) statistics, and a new proposal that combines exact two-sample conditional tests with multiplicity correction, against standard asymptotic tests based on the Brownian bridge approximation to the CUSUM statistic. We see empirically that the exact tests are much more powerful in situations where normal approximations driving asymptotic tests are not trustworthy: (i) small sample settings; (ii) sparse parametric settings; (iii) time-series with changepoint near the boundary. We also consider a multichannel version of the problem, where channels can have different changepoints. Controlling the False Discovery Rate (FDR), we simultaneously detect changes in multiple channels. This "local" approach is shown to be more advantageous than multivariate global testing approaches when the number of channels with changepoints is much smaller than the total number of channels. As a natural application, we consider network-valued time-series and use our approach with (a) edges as binary channels and (b) node-degrees or other local subgraph statistics as count channels. The local testing approach is seen to be much more informative than global network changepoint algorithms.
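The two ingredients named above, a CUSUM statistic per channel and FDR control across channels, can be sketched as follows. This is an illustration under stated assumptions, not the paper's procedure: the p-value comes from a Monte Carlo permutation test, which only approximates the exact conditional null distribution, and the FDR step uses the standard Benjamini-Hochberg rule, which the abstract does not name. The function names are hypothetical.

```python
import numpy as np

def cusum_statistic(x):
    """Return (max |CUSUM| / sqrt(n), estimated changepoint) for a 1-D series."""
    x = np.asarray(x, dtype=float)
    n = x.size
    # Partial sums of the mean-centred series; the last one is always 0, so drop it.
    s = np.cumsum(x - x.mean())[:-1]
    k = int(np.argmax(np.abs(s)))
    return float(np.abs(s[k]) / np.sqrt(n)), k + 1

def permutation_pvalue(x, n_perm=2000, seed=0):
    """Monte Carlo permutation p-value for the CUSUM statistic (assumed stand-in)."""
    x = np.asarray(x, dtype=float)
    rng = np.random.default_rng(seed)
    observed, _ = cusum_statistic(x)
    hits = 0
    for _ in range(n_perm):
        if cusum_statistic(rng.permutation(x))[0] >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)

def benjamini_hochberg(pvalues, alpha=0.05):
    """Boolean mask of rejected hypotheses under the Benjamini-Hochberg rule."""
    p = np.asarray(pvalues, dtype=float)
    m = p.size
    order = np.argsort(p)
    below = p[order] <= alpha * np.arange(1, m + 1) / m
    reject = np.zeros(m, dtype=bool)
    if below.any():
        cutoff = int(np.max(np.nonzero(below)[0]))
        reject[order[:cutoff + 1]] = True   # reject everything up to the largest passing rank
    return reject

# Toy binary channel with a change halfway through (hypothetical data).
channel = [0] * 20 + [1] * 20
stat, changepoint = cusum_statistic(channel)
pval = permutation_pvalue(channel)
```

For a multichannel problem, one would compute a p-value per channel (for example, per edge of a network) and pass the whole vector to `benjamini_hochberg` to decide which channels show a change.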

Soft Compression for Lossless Image Coding

Dec 11, 2020

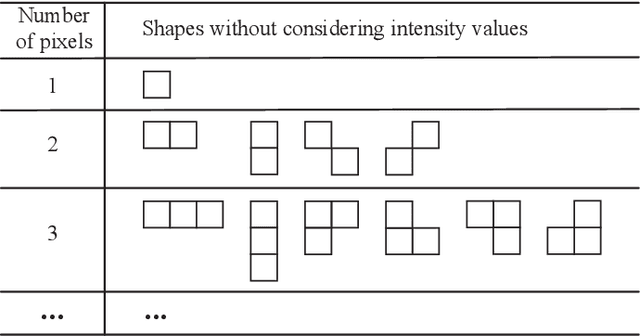

Soft compression is a lossless image compression method that aims to eliminate coding redundancy and spatial redundancy simultaneously by using the locations and shapes in a codebook to encode an image, from the perspective of information theory and statistical distribution. In this paper, we propose a new concept, the compressible indicator function of an image, which gives a threshold on the average number of bits required to represent a location and can be used to reveal the performance of soft compression. We investigate and analyze soft compression for binary, gray, and multi-component images using specific algorithms and the compressible indicator value. Applying soft compression is expected to greatly reduce the bandwidth and storage space needed to transmit and store images of the same kind.

EEG-Based User Reaction Time Estimation Using Riemannian Geometry Features

Apr 27, 2017

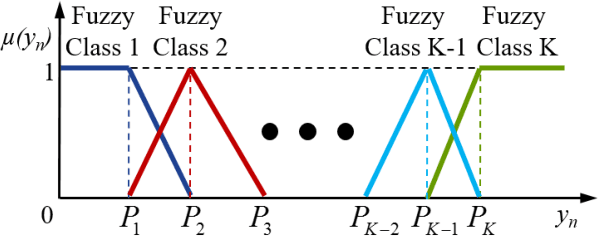

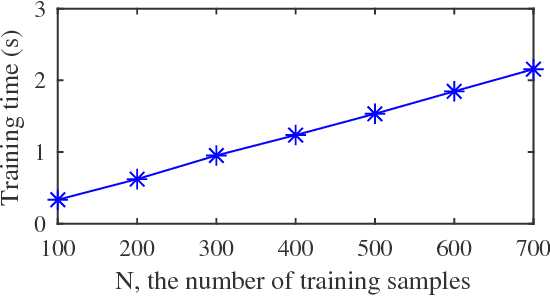

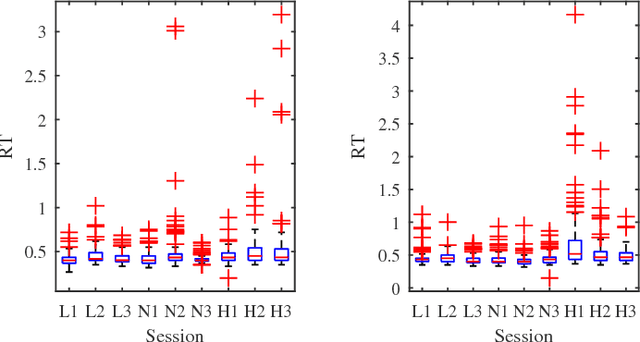

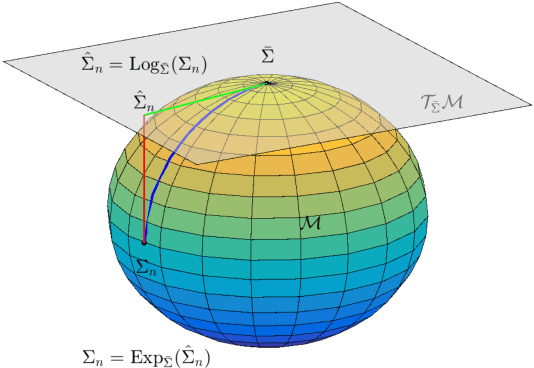

Riemannian geometry has been successfully used in many brain-computer interface (BCI) classification problems and demonstrated superior performance. In this paper, for the first time, it is applied to BCI regression problems, an important category of BCI applications. More specifically, we propose a new feature extraction approach for Electroencephalogram (EEG) based BCI regression problems: a spatial filter is first used to increase the signal quality of the EEG trials and also to reduce the dimensionality of the covariance matrices, and then Riemannian tangent space features are extracted. We validate the performance of the proposed approach in reaction time estimation from EEG signals measured in a large-scale sustained-attention psychomotor vigilance task, and show that compared with the traditional powerband features, the tangent space features can reduce the root mean square estimation error by 4.30-8.30%, and increase the estimation correlation coefficient by 6.59-11.13%.
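As a rough illustration of the tangent space step, the sketch below maps a set of symmetric positive-definite covariance matrices to feature vectors using the standard construction: whiten each matrix by a reference matrix, take the matrix logarithm, and vectorise the upper triangle with off-diagonal entries scaled by sqrt(2). Two simplifications are assumptions of this sketch: the reference defaults to the arithmetic mean rather than a Riemannian mean, and the spatial filtering step described in the abstract is omitted. The function names are hypothetical.

```python
import numpy as np

def _spd_apply(mat, fn):
    """Apply a scalar function to the eigenvalues of a symmetric positive-definite matrix."""
    w, v = np.linalg.eigh(mat)
    return (v * fn(w)) @ v.T

def tangent_space_features(covs, ref=None):
    """Map SPD matrices of shape (n_trials, n, n) to vectors of length n * (n + 1) / 2."""
    covs = np.asarray(covs, dtype=float)
    if ref is None:
        ref = covs.mean(axis=0)   # assumption: arithmetic mean as the reference point
    ref_inv_sqrt = _spd_apply(ref, lambda w: 1.0 / np.sqrt(w))
    n = covs.shape[1]
    rows, cols = np.triu_indices(n)
    # sqrt(2) on off-diagonal entries keeps the vector norm equal to the Frobenius norm.
    weights = np.where(rows == cols, 1.0, np.sqrt(2.0))
    feats = []
    for c in covs:
        log_whitened = _spd_apply(ref_inv_sqrt @ c @ ref_inv_sqrt, np.log)
        feats.append(weights * log_whitened[rows, cols])
    return np.array(feats)

# Toy usage: one 2x2 "covariance" with an explicit identity reference.
example = tangent_space_features([np.diag([np.e, 1.0])], ref=np.eye(2))
```

The resulting vectors live in an ordinary Euclidean space, so they can be passed to any standard regressor for a target such as reaction time.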
