"Time": models, code, and papers

Safety Synthesis Sans Specification

Nov 27, 2020

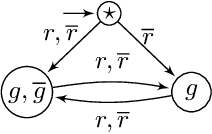

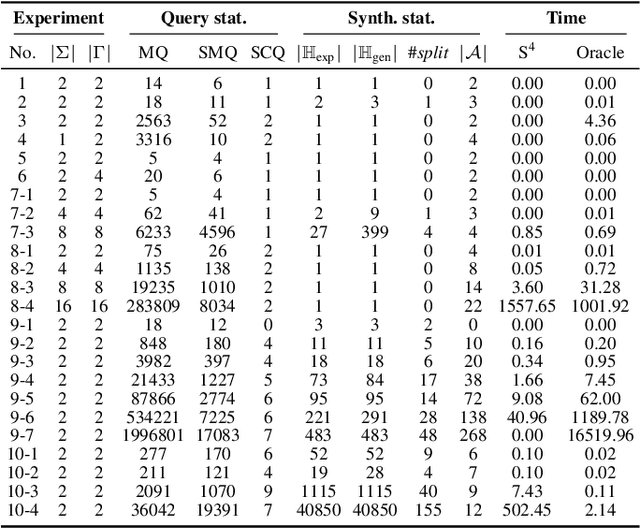

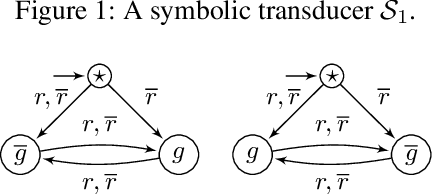

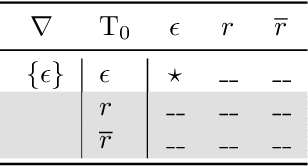

We define the problem of learning a transducer $S$ from a target language $U$ containing possibly conflicting transducers, using membership queries and conjecture queries. The requirement is that the language of $S$ be a subset of $U$. We argue that this is a natural question in many situations in hardware and software verification. We devise a learning algorithm for this problem and show that its time and query complexity is polynomial with respect to the rank of the target language, its incompatibility measure, and the maximal length of a given counterexample. We report on experiments conducted with a prototype implementation.

Non-Parametric Adaptive Network Pruning

Jan 20, 2021

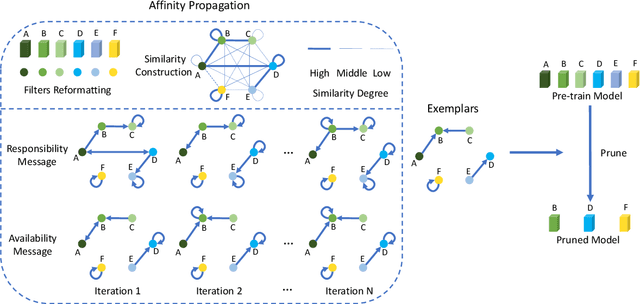

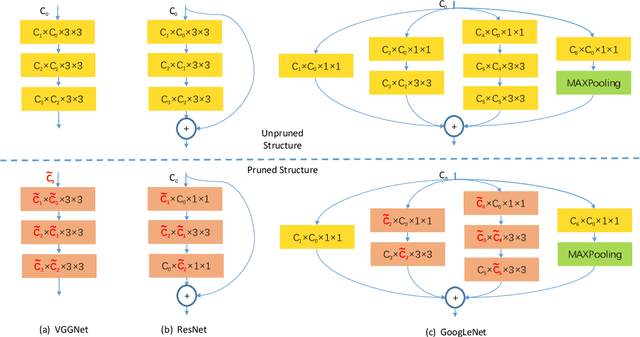

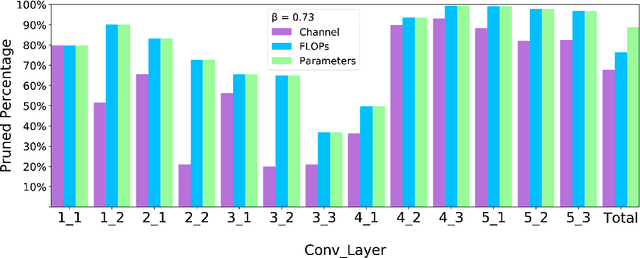

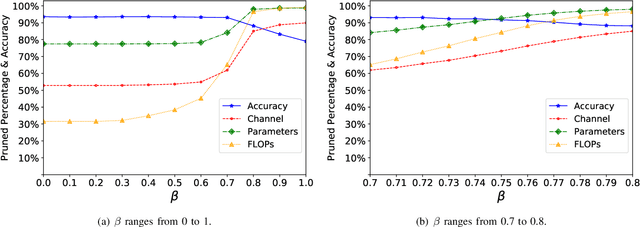

Popular network pruning algorithms reduce redundant information by optimizing hand-crafted parametric models, which may lead to suboptimal performance and long filter-selection times. We introduce non-parametric modeling to simplify the algorithm design, resulting in an automatic and efficient pruning approach called EPruner. Inspired by the face recognition community, we apply the message-passing algorithm Affinity Propagation to the weight matrices to obtain an adaptive number of exemplars, which then act as the preserved filters. EPruner removes the dependency on the training data when determining the "important" filters and allows a CPU implementation that runs in seconds, an order of magnitude faster than GPU-based SOTAs. Moreover, we show that the weights of the exemplars provide a better initialization for fine-tuning. On VGGNet-16, EPruner achieves a 76.34% FLOPs reduction by removing 88.80% of the parameters, with a 0.06% accuracy improvement on CIFAR-10. On ResNet-152, EPruner achieves a 65.12% FLOPs reduction by removing 64.18% of the parameters, with only a 0.71% top-5 accuracy loss on ILSVRC-2012. Code is available at https://github.com/lmbxmu/EPruner.
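To make the exemplar idea concrete, here is a minimal pure-Python sketch of Affinity Propagation applied to a toy set of flattened filters. It is not the EPruner code: the negative squared Euclidean similarity, the median preference, the damping value, the iteration count, and the names `filter_similarity` and `affinity_propagation` are all assumptions made for illustration.

```python
from statistics import median


def filter_similarity(filters):
    """Negative squared Euclidean distance between flattened filters (assumed metric)."""
    n = len(filters)
    sim = [[-sum((a - b) ** 2 for a, b in zip(filters[i], filters[k]))
            for k in range(n)] for i in range(n)]
    # The diagonal holds the "preference"; the median is a common default (assumption).
    pref = median(sim[i][k] for i in range(n) for k in range(n) if i != k)
    for k in range(n):
        sim[k][k] = pref
    return sim


def affinity_propagation(sim, damping=0.9, iters=300):
    """Return the indices chosen as exemplars for a square similarity matrix."""
    n = len(sim)
    resp = [[0.0] * n for _ in range(n)]   # responsibilities r(i, k)
    avail = [[0.0] * n for _ in range(n)]  # availabilities a(i, k)
    for _ in range(iters):
        # r(i, k) = s(i, k) - max over k' != k of (a(i, k') + s(i, k'))
        for i in range(n):
            scores = [avail[i][k] + sim[i][k] for k in range(n)]
            for k in range(n):
                best_other = max(scores[j] for j in range(n) if j != k)
                new = sim[i][k] - best_other
                resp[i][k] = damping * resp[i][k] + (1 - damping) * new
        # a(i, k) = min(0, r(k, k) + sum of positive r(i', k) for i' not in {i, k})
        # a(k, k) = sum of positive r(i', k) for i' != k
        for k in range(n):
            pos = [max(0.0, resp[i][k]) for i in range(n)]
            total = sum(pos) - pos[k]
            for i in range(n):
                if i == k:
                    new = total
                else:
                    new = min(0.0, resp[k][k] + total - pos[i])
                avail[i][k] = damping * avail[i][k] + (1 - damping) * new
    return [k for k in range(n) if resp[k][k] + avail[k][k] > 0]


# Toy "layer" with two obvious groups of near-duplicate filters.
filters = [[1.0, 0.0], [1.1, 0.0], [0.9, 0.0],
           [0.0, 5.0], [0.0, 5.1], [0.0, 4.9]]
exemplars = affinity_propagation(filter_similarity(filters))
print("filters kept as exemplars:", exemplars)
```

The number of exemplars is not passed in; it falls out of the message passing, which is the sense in which the number of preserved filters is adaptive.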

Understanding Higher-order Structures in Evolving Graphs: A Simplicial Complex based Kernel Estimation Approach

Feb 06, 2021

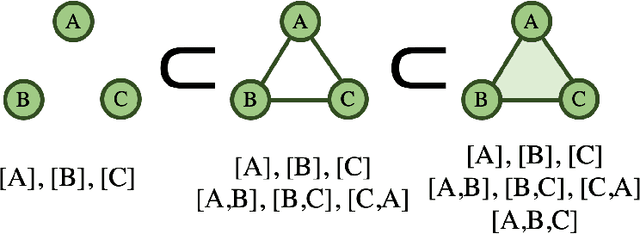

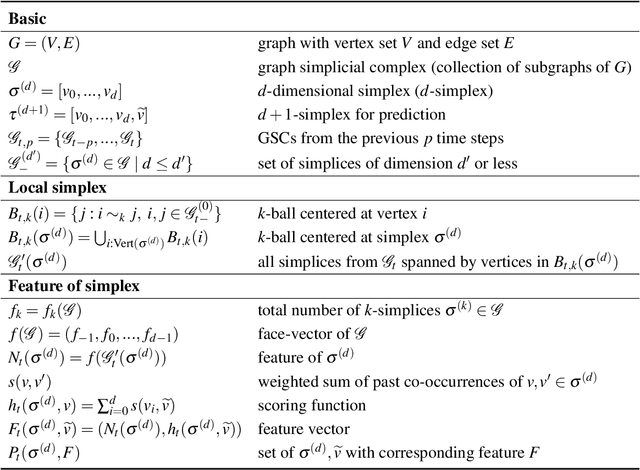

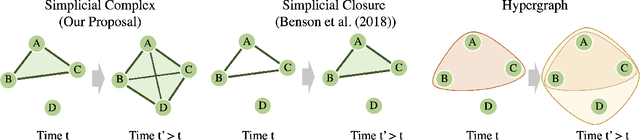

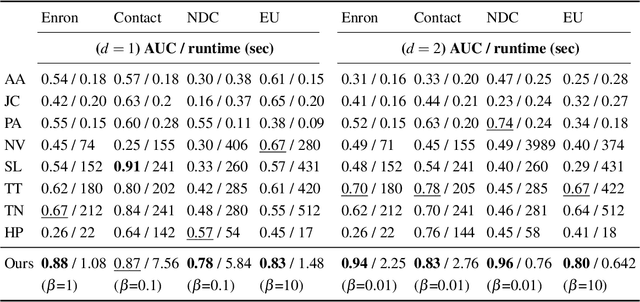

Dynamic graphs are rife with higher-order interactions, such as co-authorship relationships and protein-protein interactions in biological networks, that naturally arise between more than two nodes at once. In spite of the ubiquitous presence of such higher-order interactions, limited attention has been paid to the higher-order counterpart of the popular pairwise link prediction problem. Existing higher-order structure prediction methods are mostly based on heuristic feature extraction procedures, which work well in practice but lack theoretical guarantees. Such heuristics are primarily focused on predicting links in a static snapshot of the graph. Moreover, these heuristic-based methods fail to effectively utilize and benefit from the knowledge of latent substructures already present within the higher-order structures. In this paper, we overcome these obstacles by capturing higher-order interactions succinctly as *simplices*, modeling their neighborhood by face-vectors, and developing a nonparametric kernel estimator for simplices that views the evolving graph from the perspective of a time process (i.e., a sequence of graph snapshots). Our method substantially outperforms several baseline higher-order prediction methods. As a theoretical achievement, we prove the consistency and asymptotic normality in terms of the Wasserstein distance of our estimator using Stein's method.
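As a small illustration of the vocabulary, the sketch below enumerates the faces of a simplex and counts faces by dimension (the standard f-vector of a simplicial complex). The paper's own face-vector construction may differ; the function names and the toy snapshot are assumptions.

```python
from itertools import combinations


def faces(simplex):
    """All non-empty faces of a simplex, each as a sorted tuple of nodes."""
    nodes = sorted(simplex)
    return [face
            for size in range(1, len(nodes) + 1)
            for face in combinations(nodes, size)]


def f_vector(simplices):
    """counts[d] = number of distinct d-dimensional faces generated by `simplices`."""
    closed = {face for s in simplices for face in faces(s)}
    counts = [0] * max(len(face) for face in closed)
    for face in closed:
        counts[len(face) - 1] += 1  # a face with d + 1 nodes has dimension d
    return counts


# One graph snapshot: a three-author paper (a 2-simplex) and a two-author paper.
snapshot = [("a", "b", "c"), ("c", "d")]
print(f_vector(snapshot))  # [4, 4, 1]: 4 nodes, 4 edges, 1 triangle
```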

Real-Time Hand Shape Classification

Feb 11, 2014

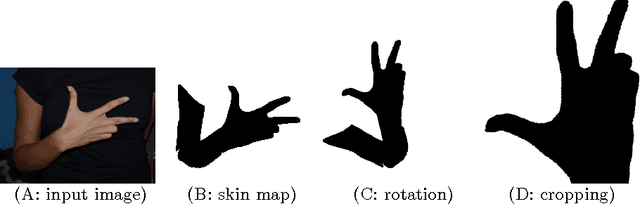

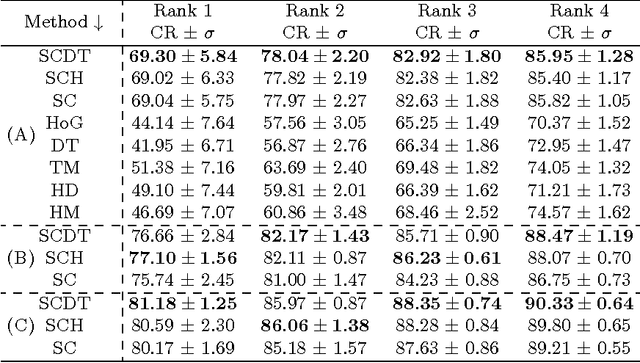

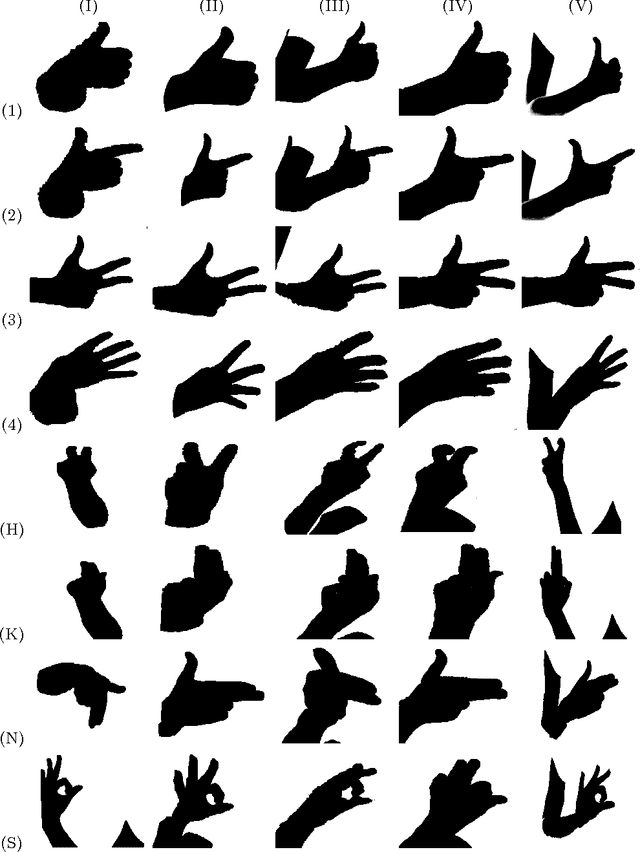

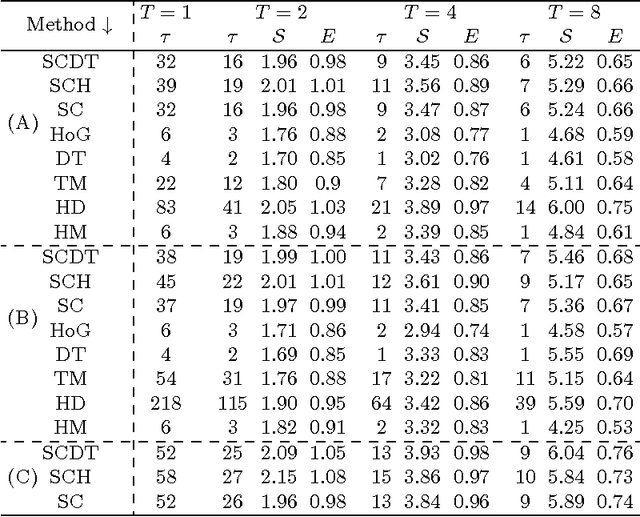

The problem of hand shape classification is challenging since a hand is characterized by a large number of degrees of freedom. Numerous shape descriptors have been proposed and applied over the years to estimate and classify hand poses in reasonable time. In this paper we discuss our parallel framework for real-time hand shape classification. We show how the number of gallery images influences the classification accuracy and execution time of the parallel algorithm. We present the speedup and efficiency analyses that demonstrate the efficacy of the parallel implementation. Notably, different methods can be used at each step of our parallel framework. Here, we combine the shape contexts with the appearance-based techniques to enhance the robustness of the algorithm and to increase the classification score. An extensive experimental study shows the superiority of the proposed approach over existing state-of-the-art methods.
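For readers unfamiliar with the two metrics, speedup and efficiency are usually defined as below. The timings are made-up placeholders, not results from the paper.

```python
def speedup(t_serial, t_parallel):
    """How many times faster the parallel run is than the serial run."""
    return t_serial / t_parallel


def efficiency(t_serial, t_parallel, workers):
    """Speedup per worker; 1.0 would mean perfect scaling."""
    return speedup(t_serial, t_parallel) / workers


# Hypothetical timings: 8.0 s serially, 2.5 s on 4 workers.
s = speedup(8.0, 2.5)
e = efficiency(8.0, 2.5, workers=4)
print(f"speedup = {s:.2f}, efficiency = {e:.2f}")
```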

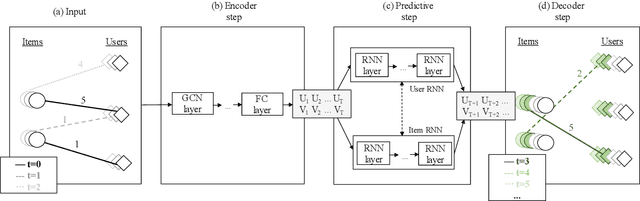

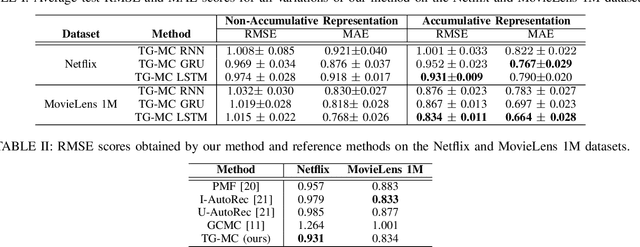

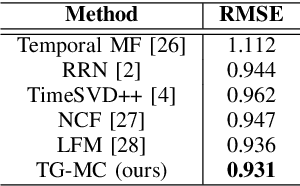

Temporal Collaborative Filtering with Graph Convolutional Neural Networks

Oct 13, 2020

Temporal collaborative filtering (TCF) methods aim at modelling non-static aspects behind recommender systems, such as the dynamics in users' preferences and social trends around items. State-of-the-art TCF methods employ recurrent neural networks (RNNs) to model such aspects. These methods deploy matrix-factorization-based (MF-based) approaches to learn the user and item representations. Recently, graph-neural-network-based (GNN-based) approaches have shown improved performance in providing accurate recommendations over traditional MF-based approaches in non-temporal CF settings. Motivated by this, we propose a novel TCF method that leverages GNNs to learn user and item representations, and RNNs to model their temporal dynamics. A challenge with this method lies in the increased data sparsity, which negatively impacts obtaining meaningful quality representations with GNNs. To overcome this challenge, we train a GNN model at each time step using a set of observed interactions accumulated time-wise. Comprehensive experiments on real-world data show the improved performance obtained by our method over several state-of-the-art temporal and non-temporal CF models.
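The time-wise accumulation mentioned above can be pictured as building, for each time step, the set of all user-item interactions observed up to and including that step. The sketch below shows only this data preparation, under an assumed `(user, item, step)` tuple format; the GNN and RNN components are out of scope here.

```python
from collections import defaultdict


def cumulative_snapshots(interactions, num_steps):
    """Return one edge set per step; snapshot t holds every edge with step <= t."""
    by_step = defaultdict(set)
    for user, item, step in interactions:
        by_step[step].add((user, item))
    snapshots, seen = [], set()
    for t in range(num_steps):
        seen |= by_step.get(t, set())
        snapshots.append(frozenset(seen))  # freeze so later steps cannot mutate it
    return snapshots


# Hypothetical interaction log.
log = [("u1", "i1", 0), ("u2", "i1", 1), ("u1", "i2", 2)]
snaps = cumulative_snapshots(log, num_steps=3)
print([len(s) for s in snaps])  # [1, 2, 3]
```

Each later snapshot is denser than a per-step slice would be, which is how accumulation counters the sparsity problem described in the abstract.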

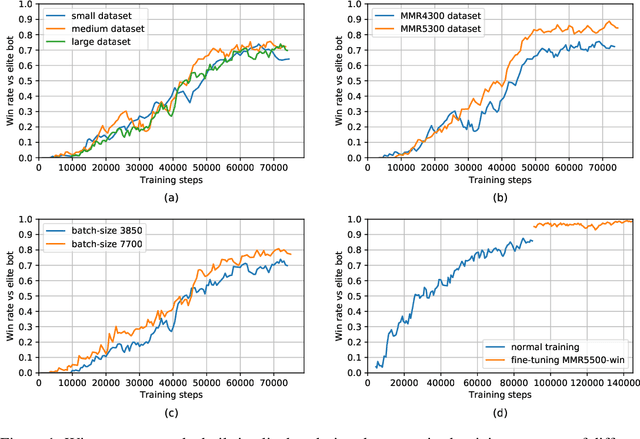

SCC: an efficient deep reinforcement learning agent mastering the game of StarCraft II

Dec 24, 2020

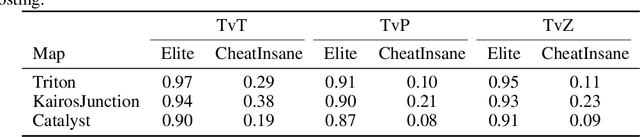

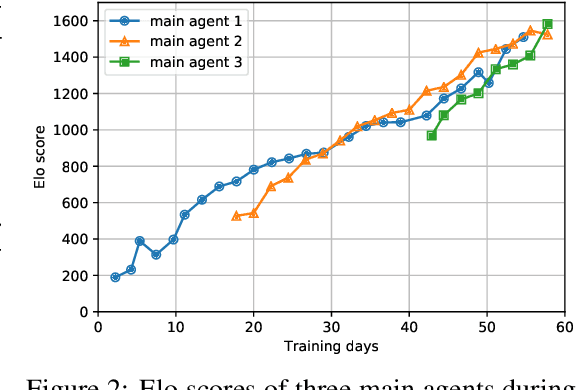

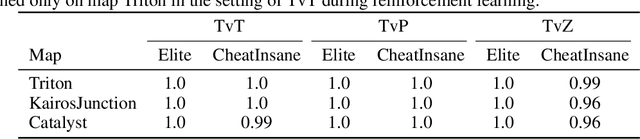

AlphaStar, the AI that reaches GrandMaster level in StarCraft II, is a remarkable milestone demonstrating what deep reinforcement learning can achieve in complex Real-Time Strategy (RTS) games. However, the complexities of the game, algorithms and systems, and especially the tremendous amount of computation needed are big obstacles for the community to conduct further research in this direction. We propose a deep reinforcement learning agent, StarCraft Commander (SCC). With an order of magnitude less computation, it demonstrates top human performance, defeating GrandMaster players in test matches and top professional players in a live event. Moreover, it shows strong robustness to various human strategies and discovers novel strategies unseen in human play. In this paper, we share the key insights and optimizations on efficient imitation learning and reinforcement learning for the full game of StarCraft II.

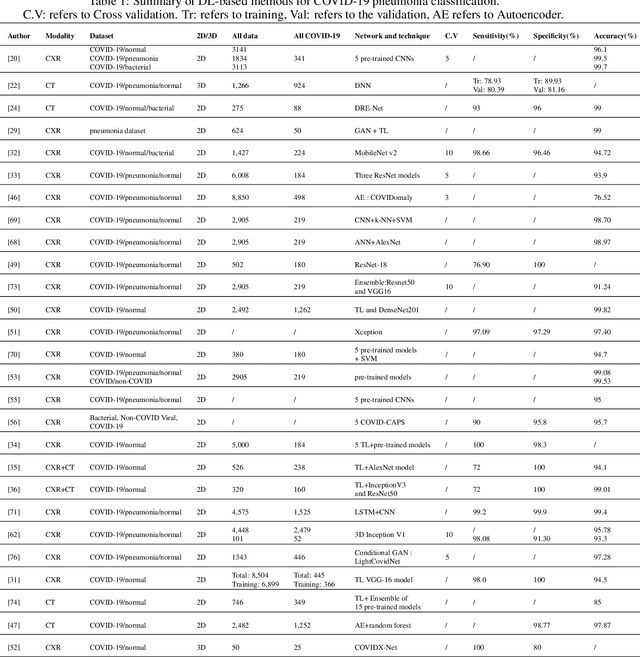

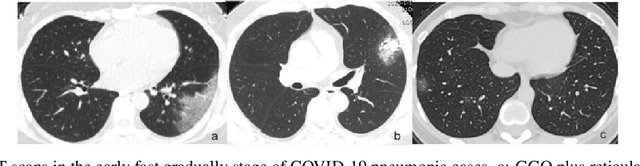

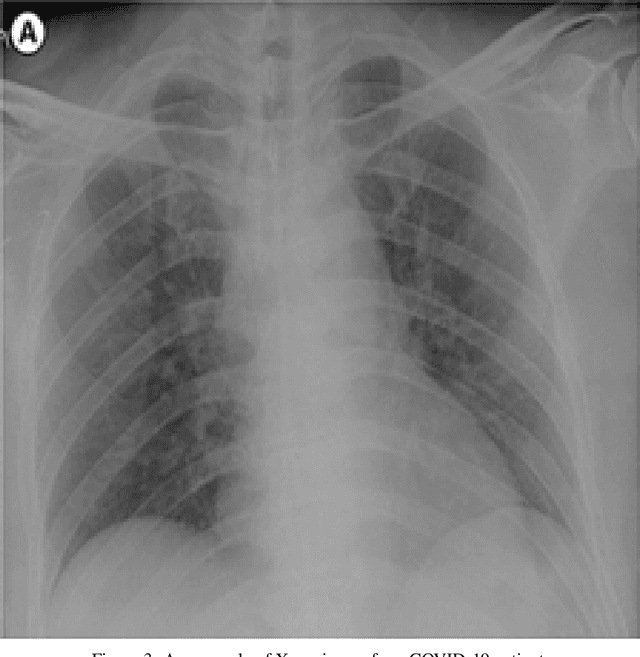

Deep Neural Networks for COVID-19 Detection and Diagnosis using Images and Acoustic-based Techniques: A Recent Review

Dec 10, 2020

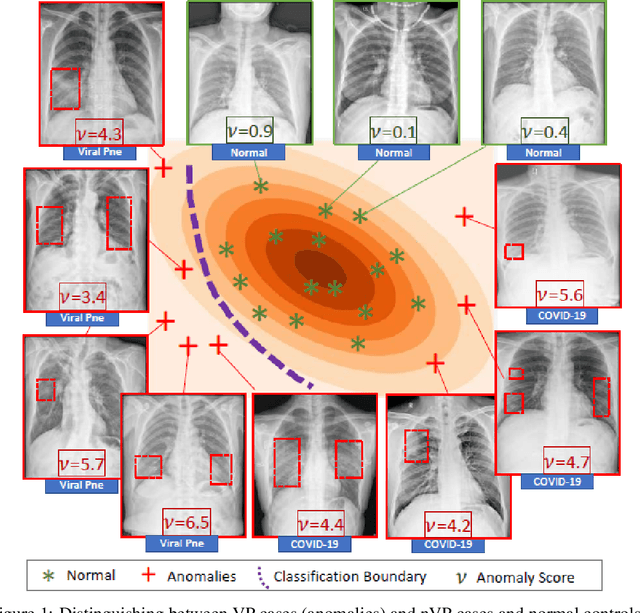

The new coronavirus disease (COVID-19) was declared a pandemic in March 2020 by the World Health Organization. It is an emerging viral infection with respiratory tropism that can develop into atypical pneumonia. Experts emphasize the importance of early detection of those who have the COVID-19 virus. In this way, patients can be isolated from other people and the spread of the virus can be prevented. For this reason, it has become an area of interest to develop early diagnosis and detection methods to ensure a rapid treatment process and prevent the virus from spreading. Since the standard testing system is time-consuming and not available to everyone, alternative early-screening techniques have become an urgent need. In this study, the approaches used in the detection of COVID-19 based on deep learning (DL) algorithms, which have been popular in recent years, are comprehensively discussed. The advantages and disadvantages of different approaches used in the literature are examined in detail. Computed Tomography of the chest and X-ray images give a rich representation of the patient's lungs that is less time-consuming to obtain and allows efficient viral pneumonia detection using DL algorithms. The first step is the pre-processing of these images to remove noise. Next, deep features are extracted using multiple types of deep models (pre-trained models, generative models, generic neural networks, etc.). Finally, the classification is performed using the obtained features to decide whether the patient is infected with the coronavirus or has another lung disease. In this study, we also give a brief review of the latest applications of cough analysis for early screening of COVID-19, and of human mobility estimation to limit its spread.

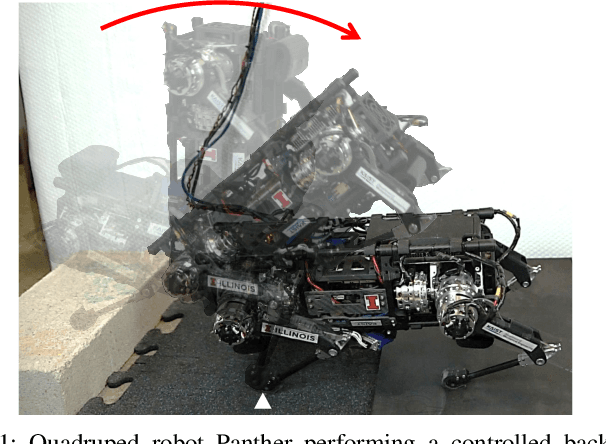

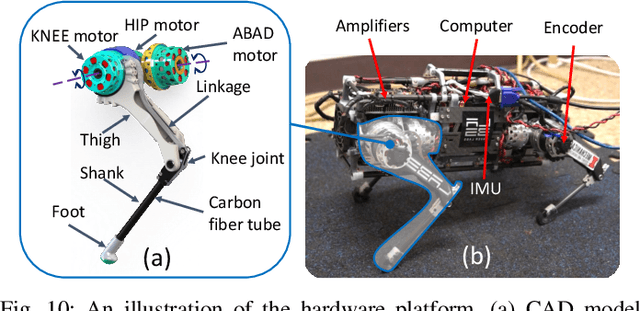

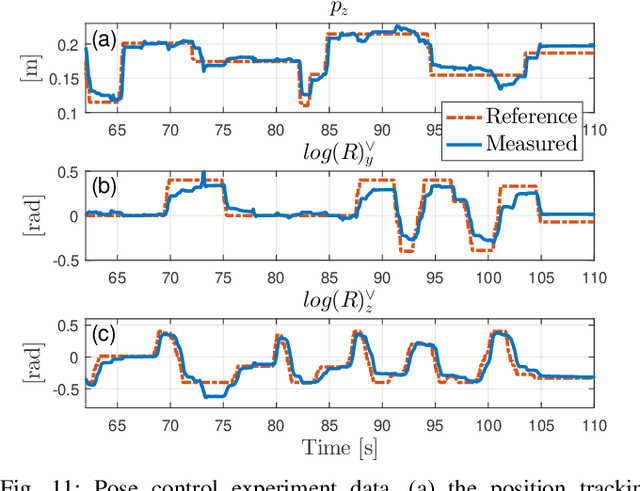

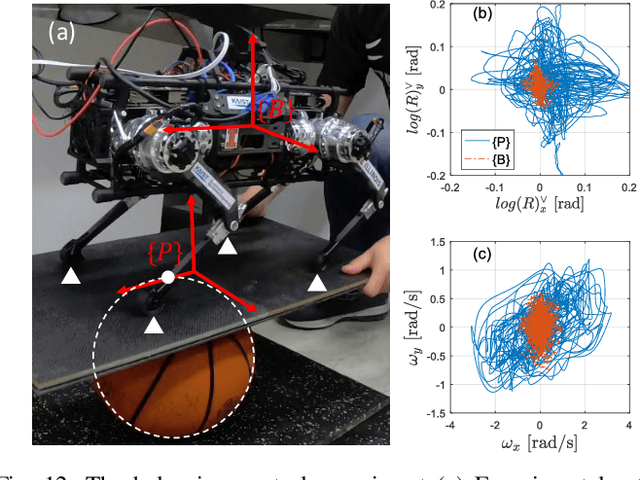

Representation-Free Model Predictive Control for Dynamic Motions in Quadrupeds

Dec 18, 2020

This paper presents a novel Representation-Free Model Predictive Control (RF-MPC) framework for controlling various dynamic motions of a quadrupedal robot in three-dimensional (3D) space. Our formulation directly represents the rotational dynamics using the rotation matrix, which liberates us from the issues associated with the use of Euler angles and quaternions as the orientation representations. With a variation-based linearization scheme and a carefully constructed cost function, the MPC control law is transcribed to the standard Quadratic Program (QP) form. The MPC controller can operate at real-time rates of 250 Hz on a quadruped robot. Experimental results including periodic quadrupedal gaits and a controlled backflip validate that our control strategy can stabilize dynamic motions that involve singularity in 3D maneuvers.
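To show what working directly on the rotation matrix looks like, the sketch below propagates an orientation with the kinematics $\dot{R} = R\,\hat{\omega}$ using the exponential map (Rodrigues' formula). This is a standard integrator chosen for illustration, not the paper's variation-based linearization or its QP; the function names, step size, and body-frame angular velocity are assumptions.

```python
import math

IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]


def hat(w):
    """Skew-symmetric matrix of a 3-vector, so that hat(w) applied to v is cross(w, v)."""
    return [[0.0, -w[2], w[1]],
            [w[2], 0.0, -w[0]],
            [-w[1], w[0], 0.0]]


def matmul(a, b):
    """Product of two 3x3 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def exp_so3(w):
    """Rodrigues' formula: the rotation matrix exp(hat(w))."""
    theta = math.sqrt(sum(x * x for x in w))
    if theta < 1e-12:
        return [row[:] for row in IDENTITY]
    k = hat(w)
    k2 = matmul(k, k)
    a = math.sin(theta) / theta
    b = (1.0 - math.cos(theta)) / theta ** 2
    return [[IDENTITY[i][j] + a * k[i][j] + b * k2[i][j] for j in range(3)]
            for i in range(3)]


def step(rotation, omega_body, dt):
    """One step of R_dot = R hat(omega), with omega held constant over dt."""
    return matmul(rotation, exp_so3([x * dt for x in omega_body]))


# Spin about the z-axis at pi/2 rad/s for 1 s in 100 steps.
rotation = [row[:] for row in IDENTITY]
for _ in range(100):
    rotation = step(rotation, [0.0, 0.0, math.pi / 2], 0.01)
print([[round(x, 6) for x in row] for row in rotation])
```

Because every update multiplies by an exact rotation, the state stays on SO(3) without the gimbal-lock singularity of Euler angles or the sign ambiguity of quaternions.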

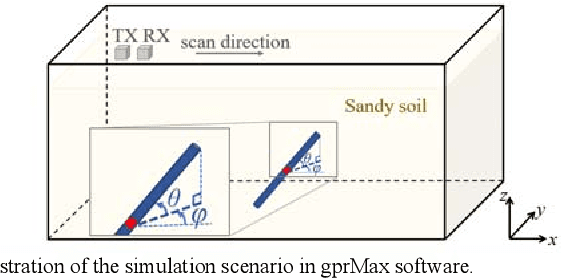

The Orientation Estimation of Elongated Underground Objects via Multi-Polarization Aggregation and Selection Neural Network

Jan 29, 2021

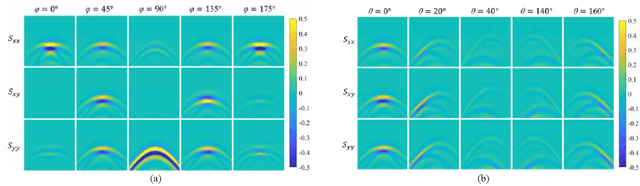

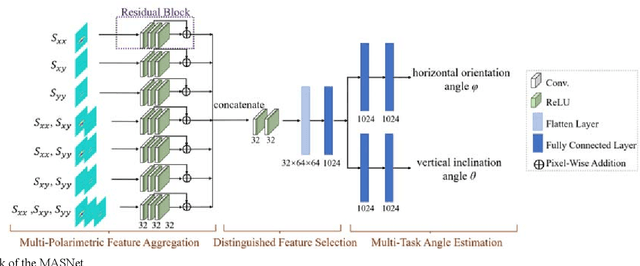

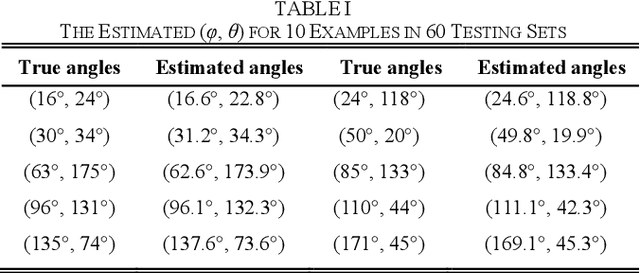

The horizontal orientation angle and vertical inclination angle of an elongated subsurface object are key parameters for object identification and imaging in ground penetrating radar (GPR) applications. Conventional methods can only extract the horizontal orientation angle or estimate both angles in narrow ranges due to limited polarimetric information and detection capability. To address these issues, this letter, for the first time, explores the possibility of leveraging neural networks with multi-polarimetric GPR data to estimate both angles of an elongated subsurface object in the entire spatial range. Based on the polarization-sensitive characteristic of an elongated object, we propose a multi-polarization aggregation and selection neural network (MASNet), which takes the multi-polarimetric radargrams as inputs, integrates their characteristics in the feature space, and selects discriminative features of reflected signal patterns for accurate orientation estimation. Numerical results show that our proposed MASNet achieves high estimation accuracy with an angle estimation error of less than 5°, which outperforms conventional methods by a large margin. The promising results obtained by the proposed method encourage one to think of new solutions for GPR-related tasks by integrating multi-polarization information with deep learning techniques.

Deep correction of breathing-related artifacts in MR-thermometry

Nov 10, 2020

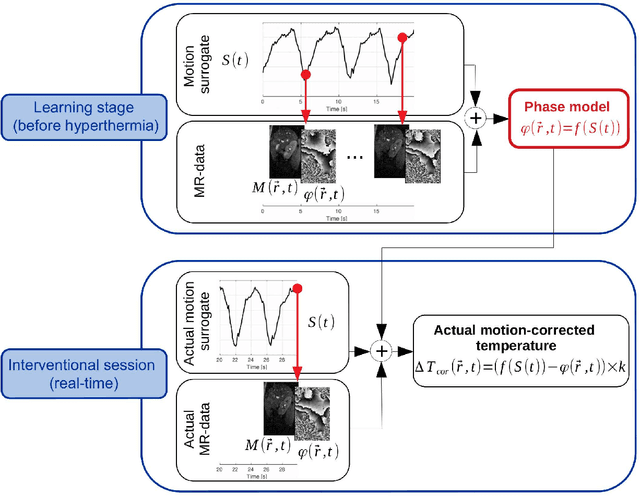

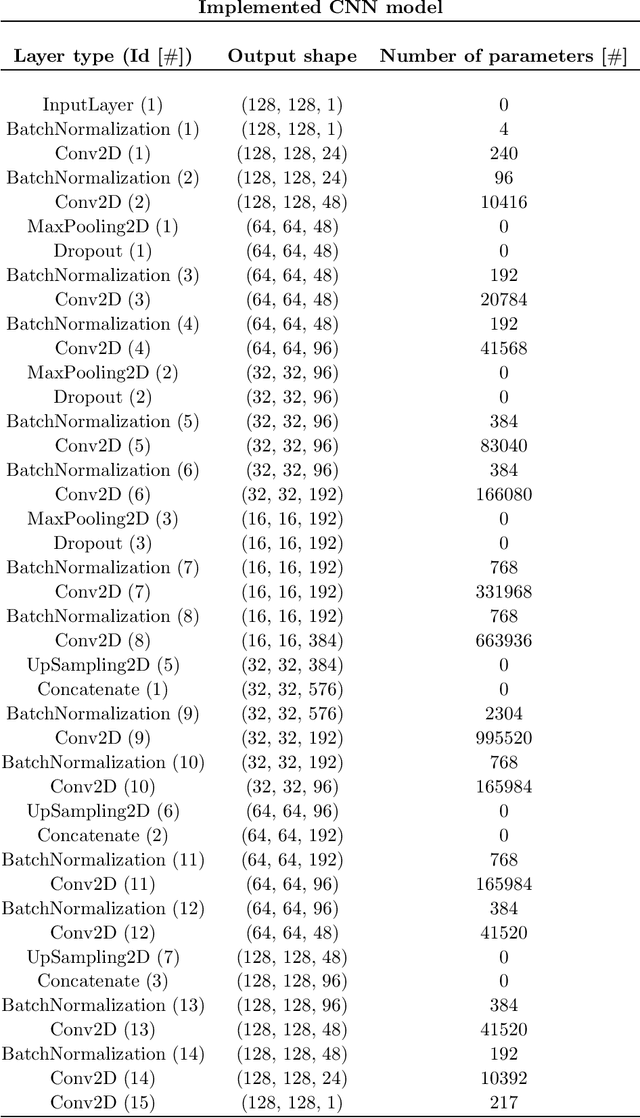

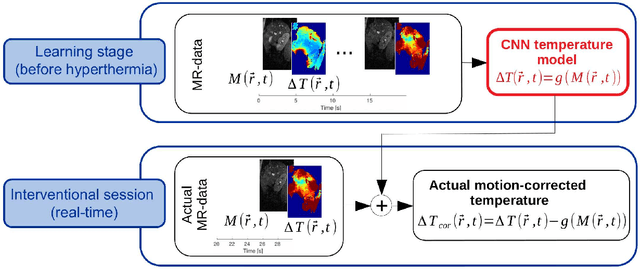

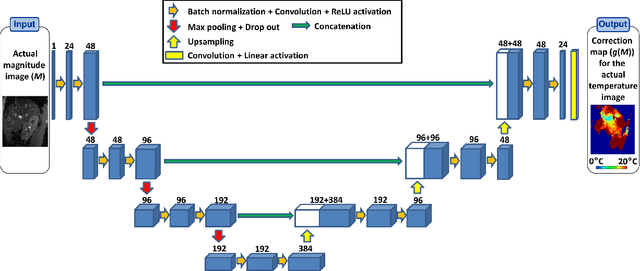

Real-time MR-imaging has been clinically adapted for monitoring thermal therapies since it can provide on-the-fly temperature maps simultaneously with anatomical information. However, proton resonance frequency based thermometry of moving targets remains challenging since temperature artifacts are induced by the respiratory as well as physiological motion. If left uncorrected, these artifacts lead to severe errors in temperature estimates and impair therapy guidance. In this study, we evaluated deep learning for on-line correction of motion-related errors in abdominal MR-thermometry. For this, a convolutional neural network (CNN) was designed to learn the apparent temperature perturbation from images acquired during a preparative learning stage prior to hyperthermia. The input of the designed CNN is the most recent magnitude image, and no surrogate of motion is needed. During the subsequent hyperthermia procedure, the most recent magnitude image is used as an input for the CNN model in order to generate an on-line correction for the current temperature map. The method's artifact suppression performance was evaluated on 12 free-breathing volunteers and was found to be robust and artifact-free in all examined cases. Furthermore, thermometric precision and accuracy were assessed for in vivo ablation using high intensity focused ultrasound. All calculations involved at the different stages of the proposed workflow were designed to be compatible with the clinical time constraints of a therapeutic procedure.
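A rough sketch of the two arithmetic steps implied by this workflow: converting a phase difference to a temperature change with the standard proton resonance frequency relation $\Delta T = \Delta\varphi / (2\pi \gamma \alpha B_0 T_E)$, and subtracting a predicted artifact map from the apparent temperature map. The thermal coefficient of about -0.01 ppm/°C, the scanner settings, and the artifact values are assumptions; in the paper the artifact map would come from the trained CNN, which is not modeled here.

```python
import math

GAMMA_HZ_PER_T = 42.576e6  # proton gyromagnetic ratio divided by 2*pi
ALPHA_PER_C = -0.01e-6     # PRF thermal coefficient, about -0.01 ppm/°C (assumed)


def phase_to_temperature(dphi, b0_tesla, te_seconds):
    """Temperature change (°C) for a phase difference dphi (rad)."""
    scale = 2 * math.pi * GAMMA_HZ_PER_T * ALPHA_PER_C * b0_tesla * te_seconds
    return dphi / scale


def correct_map(apparent, predicted_artifact):
    """Subtract the predicted motion-induced perturbation pixel by pixel."""
    return [[a - p for a, p in zip(row_a, row_p)]
            for row_a, row_p in zip(apparent, predicted_artifact)]


# Hypothetical 1x2 temperature map (°C) and a stand-in for the CNN output.
apparent = [[1.5, 0.2]]
artifact = [[0.5, 0.2]]
print(correct_map(apparent, artifact))  # [[1.0, 0.0]]
```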
