"Time": models, code, and papers

Federated Depression Detection from Multi-Source Mobile Health Data

Feb 06, 2021

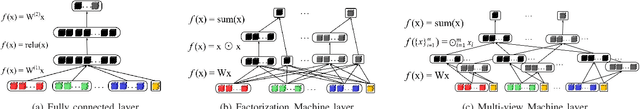

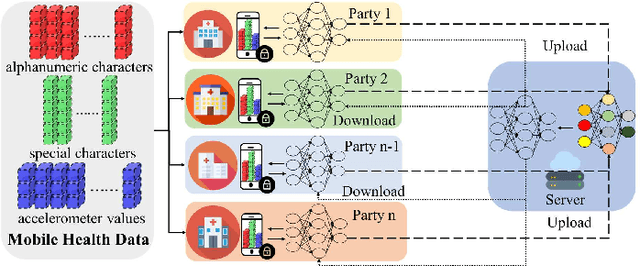

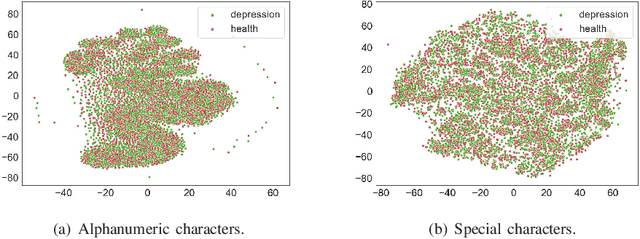

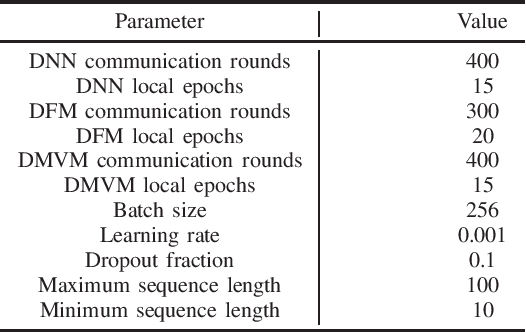

Depression is one of the most common mental illnesses, and the symptoms shown by patients are inconsistent, making it difficult to diagnose in clinical practice and pathological research. Although researchers hope that artificial intelligence can contribute to the diagnosis and treatment of depression, traditional centralized machine learning needs to aggregate patient data, while the data of patients with mental illness must be kept strictly confidential, which hinders the clinical application of machine learning algorithms. To address the privacy of the medical history of patients with depression, we implement federated learning to analyze and diagnose depression. First, we propose a general multi-view federated learning framework using multi-source data, which can extend any traditional machine learning model to support federated learning across different institutions or parties. Second, we adopt late fusion methods to solve the problem of inconsistent time series of multi-view data. Finally, we compare the federated framework with other cooperative learning frameworks in performance and discuss the related results.

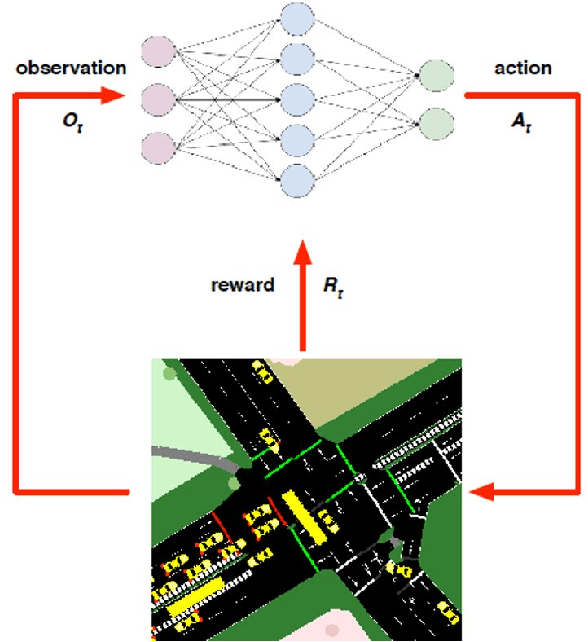

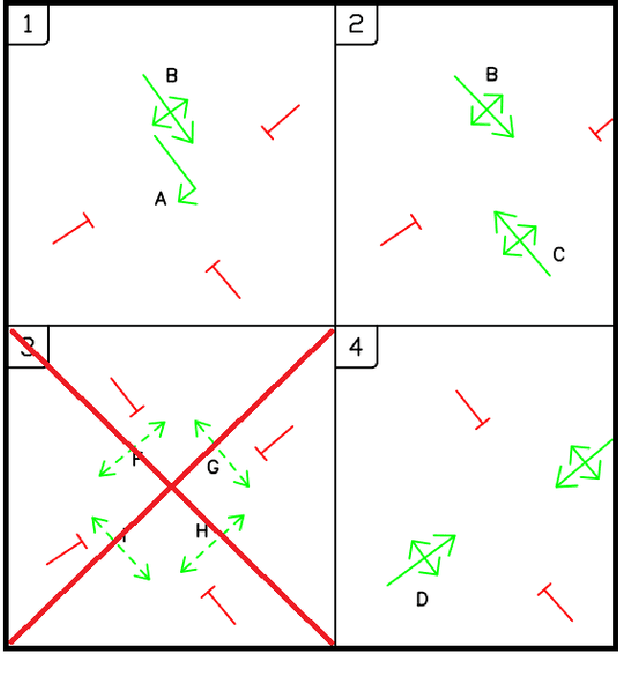

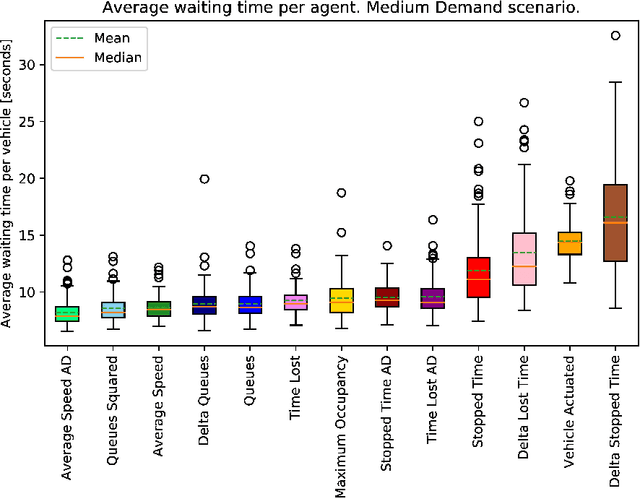

Assessment of Reward Functions for Reinforcement Learning Traffic Signal Control under Real-World Limitations

Aug 26, 2020

Adaptive traffic signal control is one key avenue for mitigating the growing consequences of traffic congestion. Incumbent solutions such as SCOOT and SCATS require regular and time-consuming calibration, can't optimise well for multiple road use modalities, and require the manual curation of many implementation plans. A recent alternative to these approaches is the use of deep reinforcement learning algorithms, in which an agent learns how to take the most appropriate action for a given state of the system. This is guided by neural networks approximating a reward function that provides feedback to the agent regarding the performance of the actions taken, making it sensitive to the specific reward function chosen. Several authors have surveyed the reward functions used in the literature, but attributing outcome differences to reward function choice across works is problematic as there are many uncontrolled differences, as well as different outcome metrics. This paper compares the performance of agents using different reward functions in a simulation of a junction in Greater Manchester, UK, across various demand profiles, subject to real-world constraints: realistic sensor inputs, controllers, calibrated demand, intergreen times and stage sequencing. The reward metrics considered are based on the time spent stopped, lost time, change in lost time, average speed, queue length, junction throughput and variations of these magnitudes. The performance of these reward functions is compared in terms of total waiting time. We find that speed maximisation resulted in the lowest average waiting times across all demand levels, displaying significantly better performance than other rewards previously introduced in the literature.
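To make the comparison above more concrete, here is a minimal sketch of how two of the listed reward signals (average speed and queue length) might be computed from a snapshot of the junction. The `Vehicle` type, the function names, the normalisation by the speed limit, the stop threshold and the empty-junction convention are all assumptions made for illustration; the abstract does not give the paper's exact definitions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Vehicle:
    """Hypothetical per-vehicle observation: current speed in m/s."""
    speed: float


def average_speed_reward(vehicles: List[Vehicle], speed_limit: float) -> float:
    """Mean speed normalised by the speed limit (higher is better)."""
    if not vehicles:
        # Assumption: an empty junction counts as free-flowing traffic.
        return 1.0
    mean_speed = sum(v.speed for v in vehicles) / len(vehicles)
    return mean_speed / speed_limit


def queue_length_reward(vehicles: List[Vehicle], stop_threshold: float = 0.1) -> float:
    """Negative count of vehicles moving slower than stop_threshold (m/s)."""
    return -float(sum(1 for v in vehicles if v.speed < stop_threshold))


snapshot = [Vehicle(0.0), Vehicle(5.0), Vehicle(10.0)]
speed_r = average_speed_reward(snapshot, speed_limit=10.0)
queue_r = queue_length_reward(snapshot)
```

Both functions return a scalar that grows as traffic flows better, so either could be passed to the same agent without changing the learning code, which is the kind of controlled swap the comparison relies on.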

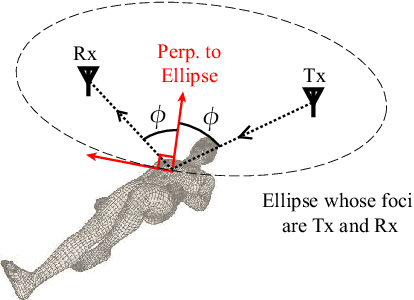

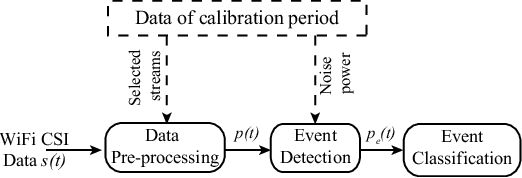

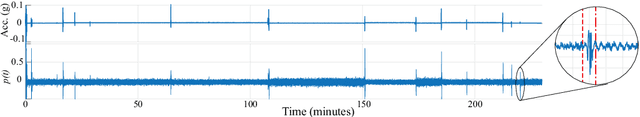

Nocturnal Seizure Detection Using Off-the-Shelf WiFi

Mar 25, 2021

Detection of nocturnal seizures in epilepsy patients is essential, both for the quick management of seizure complications and for the assessment of ongoing seizure treatment. Traditional seizure detection products (e.g., wearables), however, are very costly, uncomfortable, or unreliable. In this paper, we propose to utilize everyday WiFi signals for robust, fast, and non-invasive detection of nocturnal seizures. We first present a new and rigorous mathematical characterization for the spectral content/bandwidth of the WiFi signal, measured on a WiFi device placed near a sleeping patient, during different kinds of sleep motions: seizures, normal movements (e.g., posture adjustments), and breathing. Based on this mathematical modeling, we propose a novel pipeline for processing the received WiFi signals to robustly detect all nocturnal non-breathing movements, and then classify them into normal body movements or seizures. In order to validate this, we carry out extensive experiments in 7 different typical bedroom locations, where a set of 20 actors simulate the state of having seizures (total of 260 instances), as well as normal sleep movements (total of 410 instances). Our proposed system detects 93.85% of the seizures with a mean response time of only 5.69 seconds since the onset of the seizure. Moreover, our proposed system achieves a probability of false alarm of only 0.0097, when classifying normal sleep movements. Overall, our new mathematical modeling and experimental results show the great potential the ubiquitous WiFi signals have for detecting nocturnal seizures, which can provide better support for epilepsy patients and their caregivers.

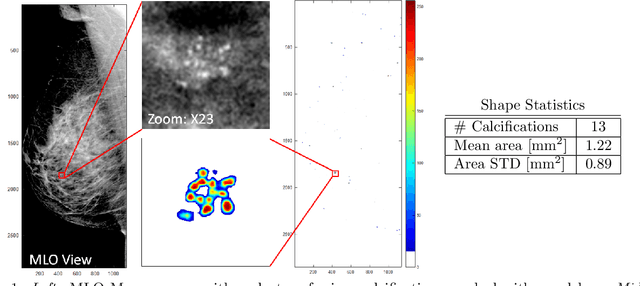

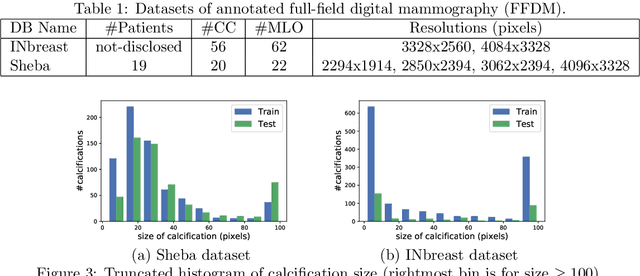

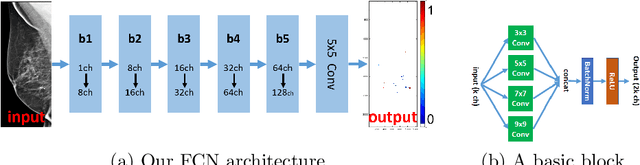

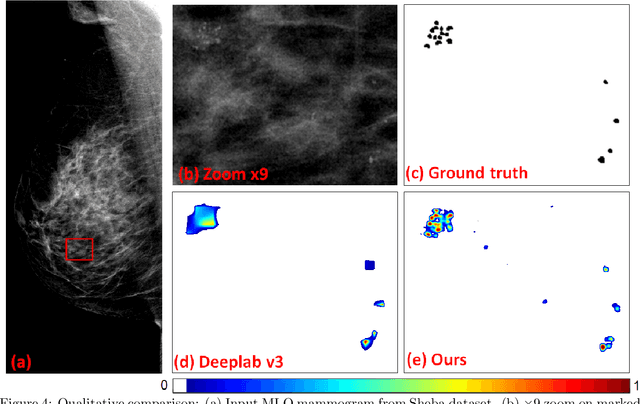

Segmenting Microcalcifications in Mammograms and its Applications

Feb 01, 2021

Microcalcifications are small deposits of calcium that appear in mammograms as bright white specks on the soft tissue background of the breast. Microcalcifications may be a unique indication for Ductal Carcinoma in Situ breast cancer, and therefore their accurate detection is crucial for diagnosis and screening. Manual detection of these tiny calcium residues in mammograms is both time-consuming and error-prone, even for expert radiologists, since these microcalcifications are small and can be easily missed. Existing computerized algorithms for detecting and segmenting microcalcifications tend to suffer from a high false-positive rate, hindering their widespread use. In this paper, we propose an accurate calcification segmentation method using deep learning. We specifically address the challenge of keeping the false-positive rate low by suggesting a strategy for focusing on the hard pixels in the training phase. Furthermore, our accurate segmentation enables extracting meaningful statistics on clusters of microcalcifications.

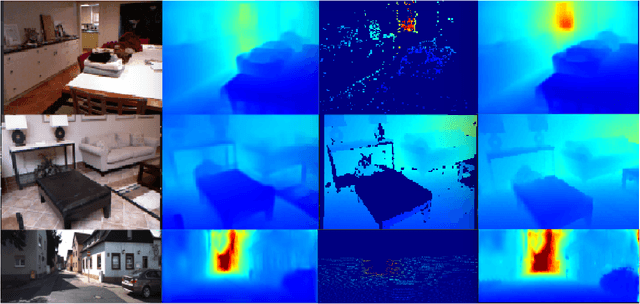

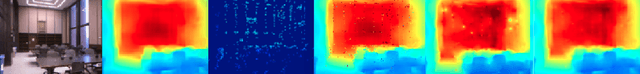

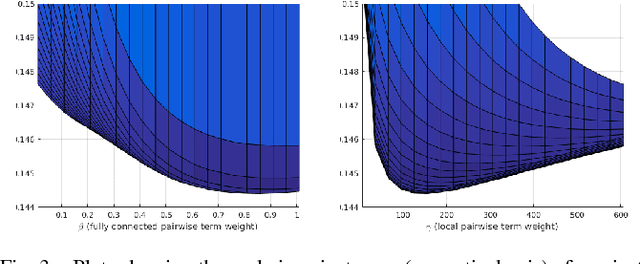

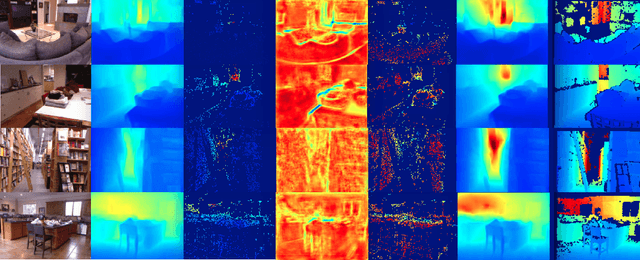

Just-in-Time Reconstruction: Inpainting Sparse Maps using Single View Depth Predictors as Priors

May 11, 2018

We present "just-in-time reconstruction" as real-time image-guided inpainting of a map with arbitrary scale and sparsity to generate a fully dense depth map for the image. In particular, our goal is to inpaint a sparse map, obtained from either a monocular visual SLAM system or a sparse sensor, using a single-view depth prediction network as a virtual depth sensor. We adopt a fairly standard approach to data fusion, to produce a fused depth map by performing inference over a novel fully-connected Conditional Random Field (CRF) which is parameterized by the input depth maps and their pixel-wise confidence weights. Crucially, we obtain the confidence weights that parameterize the CRF model in a data-dependent manner via Convolutional Neural Networks (CNNs) which are trained to model the conditional depth error distributions given each source of input depth map and the associated RGB image. Our CRF model penalises absolute depth error in its nodes and pairwise scale-invariant depth error in its edges, and the confidence-based fusion minimizes the impact of outlier input depth values on the fused result. We demonstrate the flexibility of our method by real-time inpainting of ORB-SLAM, Kinect, and LIDAR depth maps acquired both indoors and outdoors at arbitrary scale and varied amounts of irregular sparsity.

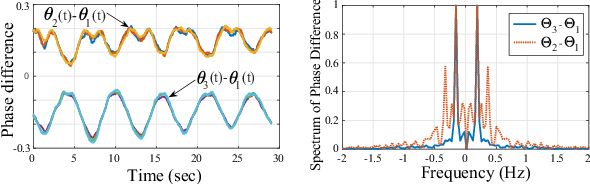

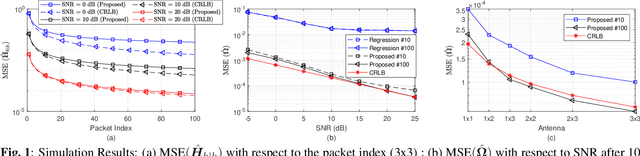

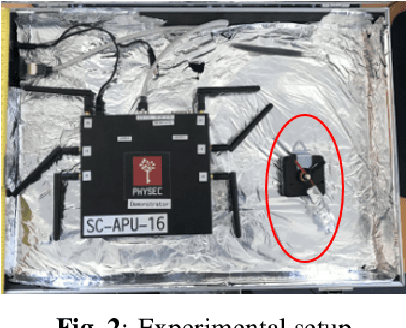

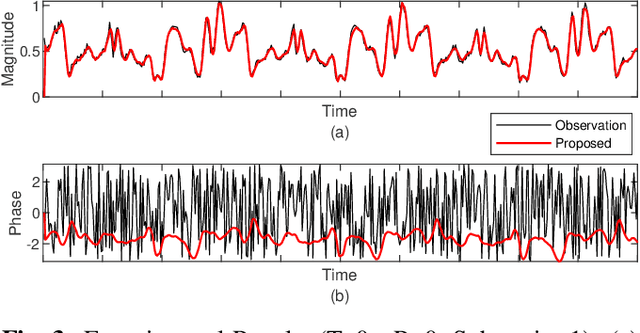

Kalman filter based MIMO CSI phase recovery for COTS WiFi devices

Jan 15, 2021

Recently, channel state information (CSI) measurements from commercial multiple-input multiple-output (MIMO) WiFi systems have been ubiquitously used for different wireless sensing applications. However, the phase of the CSI realizations is usually severely distorted by phase errors due to hardware impairments, which significantly reduce the sensing performance. In this paper, we directly utilize the modeling of the phase distortions caused by the hardware impairments and propose an adaptive CSI estimation approach based on a Kalman filter (KF) with maximum a posteriori (MAP) estimation that considers the CSI from the previous time. The performance of the proposed algorithm is compared against the Cramér-Rao lower bound (CRLB). Simulation and experimental results demonstrate that our approach can track the channel variations while accurately eliminating the phase errors.
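As a rough illustration of the filtering idea only, the sketch below runs a generic scalar Kalman filter over a sequence of noisy phase readings under a random-walk state model. It is not the paper's estimator: the MAP step and the hardware-impairment model are not reproduced, and the function name and the parameters `q`, `r`, `x0` and `p0` are assumptions chosen for this example.

```python
import math
from typing import List, Sequence


def kalman_track_phase(measurements: Sequence[float], q: float, r: float,
                       x0: float = 0.0, p0: float = 1.0) -> List[float]:
    """Track a slowly varying phase (radians) with a scalar Kalman filter.

    q: process noise variance of the assumed random-walk model.
    r: measurement noise variance.
    """
    x, p = x0, p0
    estimates = []
    for z in measurements:
        # Predict: a random walk keeps the state and inflates the variance.
        p = p + q
        # Wrap the innovation into [-pi, pi) so phase jumps of 2*pi are ignored.
        innovation = (z - x + math.pi) % (2.0 * math.pi) - math.pi
        # Update: blend prediction and measurement using the Kalman gain.
        k = p / (p + r)
        x = x + k * innovation
        p = (1.0 - k) * p
        estimates.append(x)
    return estimates


track = kalman_track_phase([1.0] * 50, q=1e-4, r=0.1)
```

With a constant input the estimate moves from the prior `x0` towards the measured value, quickly at first while the prior variance is large and more slowly once the gain settles.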

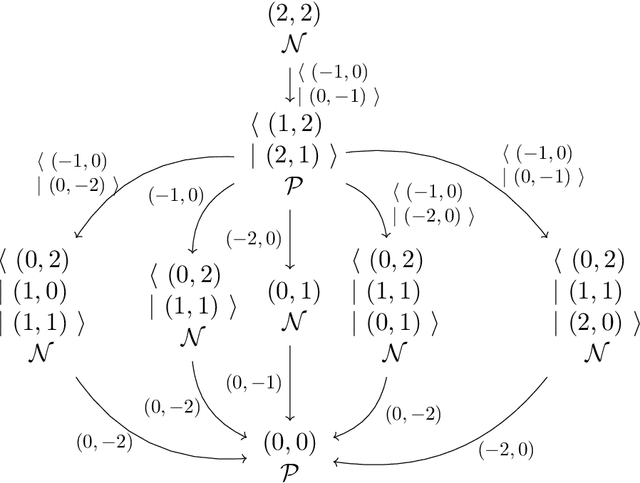

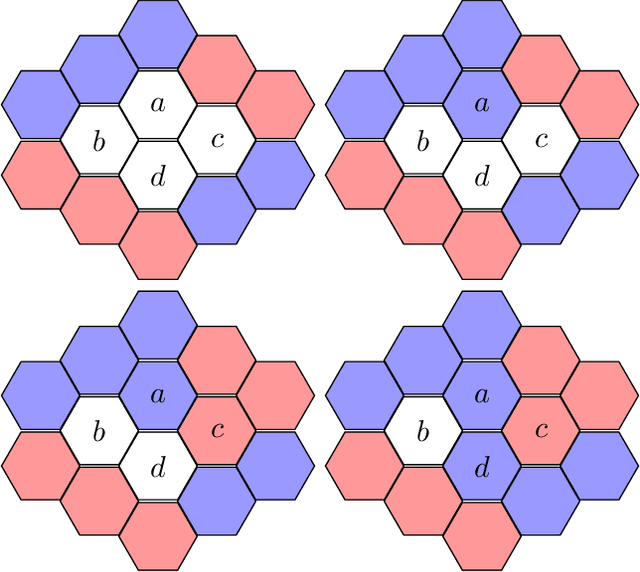

Quantum Combinatorial Games: Structures and Computational Complexity

Nov 07, 2020

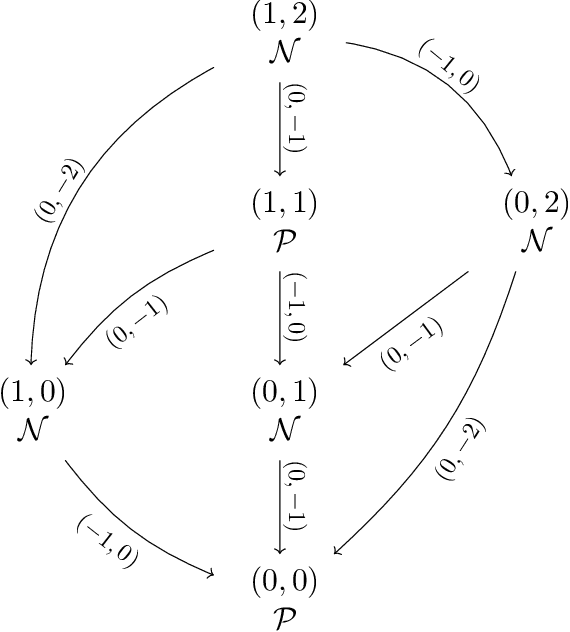

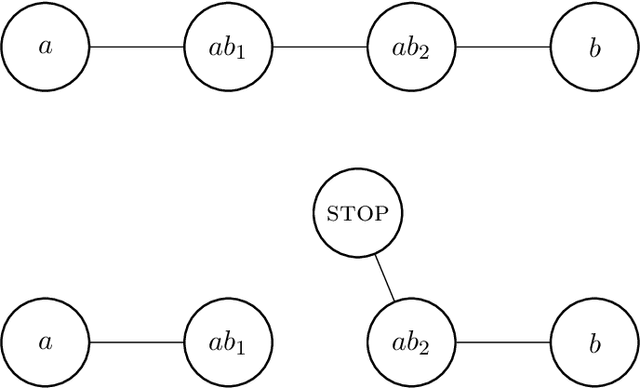

Recently, a standardized framework was proposed for introducing quantum-inspired moves in mathematical games with perfect information and no chance. The beauty of quantum games (succinct in representation, rich in structures, explosive in complexity, dazzling for visualization, and sophisticated for strategic reasoning) has drawn us to play concrete games full of subtleties and to characterize abstract properties pertinent to complexity consequence. Going beyond individual games, we explore the tractability of quantum combinatorial games as a whole, and address fundamental questions including: Quantum Leap in Complexity: Are there polynomial-time solvable games whose quantum extensions are intractable? Quantum Collapses in Complexity: Are there PSPACE-complete games whose quantum extensions fall to the lower levels of the polynomial-time hierarchy? Quantumness Matters: How do outcome classes and strategies change under quantum moves? Under what conditions doesn't quantumness matter? PSPACE Barrier for Quantum Leap: Can quantum moves launch PSPACE games into outer polynomial space? We show that quantum moves not only enrich the game structure, but also impact their computational complexity. In settling some of these basic questions, we characterize both the powers and limitations of quantum moves as well as the superposition of game configurations that they create. Our constructive proofs (both on the leap of complexity in concrete Quantum Nim and Quantum Undirected Geography and on the continuous collapses, in the quantum setting, of complexity in abstract PSPACE-complete games to each level of the polynomial-time hierarchy) illustrate the striking computational landscape over quantum games and highlight surprising turns with unexpected quantum impact. Our studies also enable us to identify several elegant open questions fundamental to quantum combinatorial game theory (QCGT).

Fast Design Space Exploration of Nonlinear Systems: Part I

Apr 05, 2021

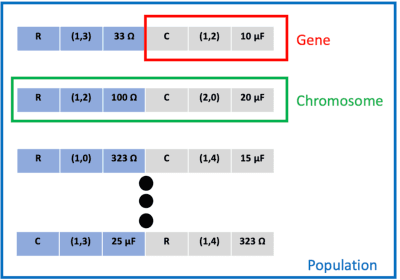

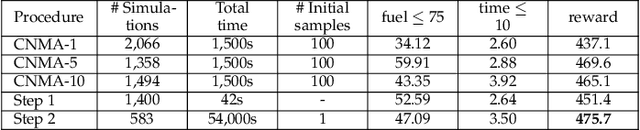

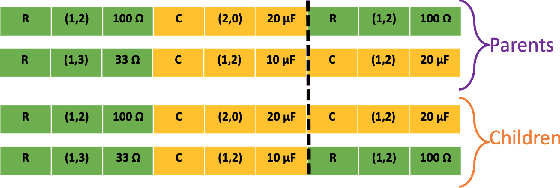

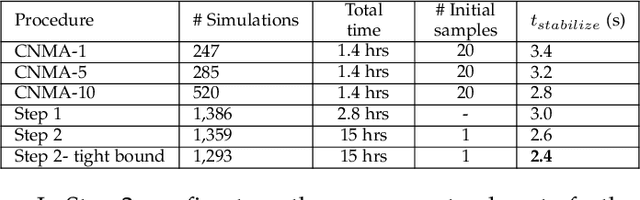

System design tools are often only available as blackboxes with complex nonlinear relationships between inputs and outputs. Blackboxes typically run in the forward direction: for a given design as input they compute an output representing system behavior. Most cannot be run in reverse to produce an input from requirements on output. Thus, finding a design satisfying a requirement is often a trial-and-error process without assurance of optimality. Finding designs concurrently satisfying multiple requirements is harder because designs satisfying individual requirements may conflict with each other. Compounding the difficulty, blackbox evaluations can be expensive and sometimes fail to produce an output due to non-convergence of underlying numerical algorithms. This paper presents CNMA (Constrained optimization with Neural networks, MILP solvers and Active Learning), a new optimization method for blackboxes. It is conservative in the number of blackbox evaluations. Any designs it finds are guaranteed to satisfy all requirements. It is resilient to the failure of blackboxes to compute outputs. It tries to sample only the part of the design space relevant to solving the design problem, leveraging the power of neural networks, MILPs, and a new learning-from-failure feedback loop. The paper also presents parallel CNMA that improves the efficiency and quality of solutions over the sequential version, and tries to steer it away from local optima. CNMA's performance is evaluated for seven nonlinear design problems of 8 (2 problems), 10, 15, 36 and 60 real-valued dimensions and one with 186 binary dimensions. It is shown that CNMA improves the performance of stable, off-the-shelf implementations of Bayesian Optimization, Nelder-Mead and Random Search by 1%-87% for a given fixed time and function evaluation budget. Note that these implementations did not always return solutions.

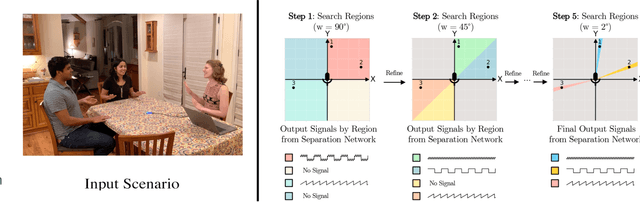

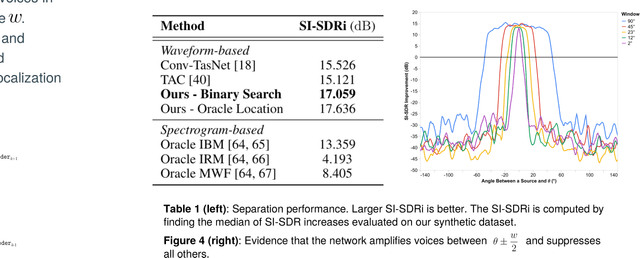

The Cone of Silence: Speech Separation by Localization

Oct 12, 2020

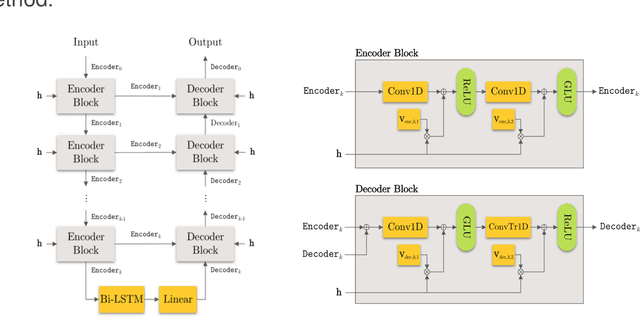

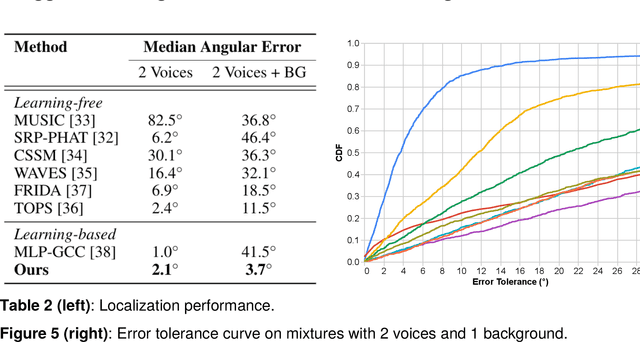

Given a multi-microphone recording of an unknown number of speakers talking concurrently, we simultaneously localize the sources and separate the individual speakers. At the core of our method is a deep network, in the waveform domain, which isolates sources within an angular region $\theta \pm w/2$, given an angle of interest $\theta$ and angular window size $w$. By exponentially decreasing $w$, we can perform a binary search to localize and separate all sources in logarithmic time. Our algorithm allows for an arbitrary number of potentially moving speakers at test time, including more speakers than seen during training. Experiments demonstrate state-of-the-art performance for both source separation and source localization, particularly in high levels of background noise.
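The coarse-to-fine search described above can be sketched as follows. The deep separation network is replaced here by a hypothetical oracle `has_source(theta, w)` that reports whether any source lies within $\theta \pm w/2$; the starting layout of four quadrant-sized windows, the stopping width and all function names are assumptions made for this illustration, not details taken from the paper.

```python
import math
from typing import Callable, List, Sequence, Tuple


def wrap(angle: float) -> float:
    """Wrap an angle in radians into [-pi, pi)."""
    return (angle + math.pi) % (2.0 * math.pi) - math.pi


def make_oracle(sources: Sequence[float]) -> Callable[[float, float], bool]:
    """Toy stand-in for the network: True if a source is within theta +/- w/2."""
    def has_source(theta: float, w: float) -> bool:
        return any(abs(wrap(s - theta)) <= w / 2.0 for s in sources)
    return has_source


def localize(has_source: Callable[[float, float], bool],
             min_width: float = math.radians(2.0)) -> Tuple[List[float], float]:
    """Halve the angular window each round, keeping only active windows."""
    width = math.pi / 2.0
    # Four windows that together cover the full circle.
    candidates = [-math.pi + width / 2.0 + k * width for k in range(4)]
    while True:
        candidates = [t for t in candidates if has_source(t, width)]
        if width <= min_width or not candidates:
            return candidates, width
        # Split every surviving window into its left and right halves.
        half = width / 2.0
        candidates = [wrap(t + s * half / 2.0) for t in candidates for s in (-1, 1)]
        width = half


true_sources = [0.5, -2.0]
estimates, final_width = localize(make_oracle(true_sources))
```

Because empty windows are discarded at every level, the number of oracle calls grows with the number of sources times the number of halvings rather than with the number of fine-grained angular bins, which is where the logarithmic behaviour comes from.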

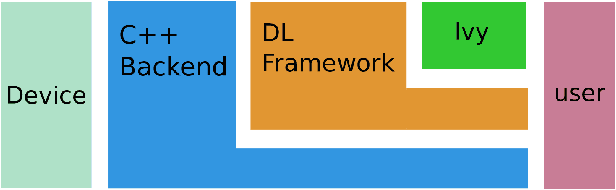

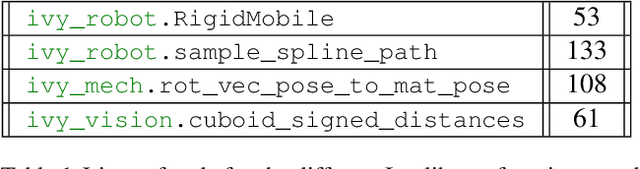

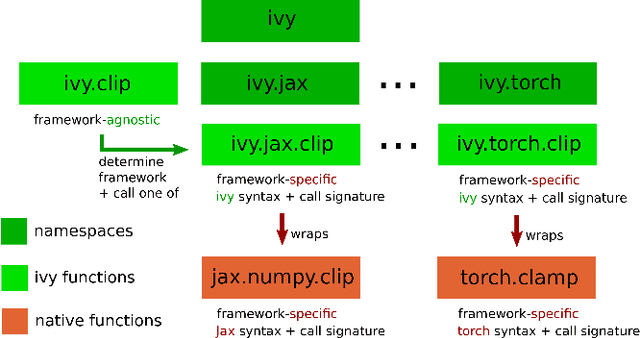

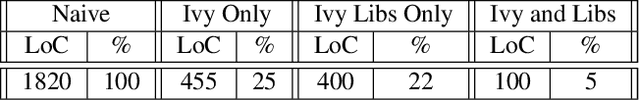

Ivy: Templated Deep Learning for Inter-Framework Portability

Feb 15, 2021

We introduce Ivy, a templated Deep Learning (DL) framework which abstracts existing DL frameworks such that their core functions all exhibit consistent call signatures, syntax and input-output behaviour. Ivy allows high-level framework-agnostic functions to be implemented through the use of framework templates. The framework templates act as placeholders for the specific framework at development time, which are then determined at runtime. The portability of Ivy functions enables their use in projects of any supported framework. Ivy currently supports TensorFlow, PyTorch, MXNet, Jax and NumPy. Alongside Ivy, we release four pure-Ivy libraries for mechanics, 3D vision, robotics, and differentiable environments. Through our evaluations, we show that Ivy can significantly reduce lines of code with a runtime overhead of less than 1% in most cases. We welcome developers to join the Ivy community by writing their own functions, layers and libraries in Ivy, maximizing their audience and helping to accelerate DL research through the creation of lifelong inter-framework codebases. More information can be found at https://ivy-dl.org.
