"Time": models, code, and papers

A Robust Extrinsic Calibration Framework for Vehicles with Unscaled Sensors

Mar 17, 2021

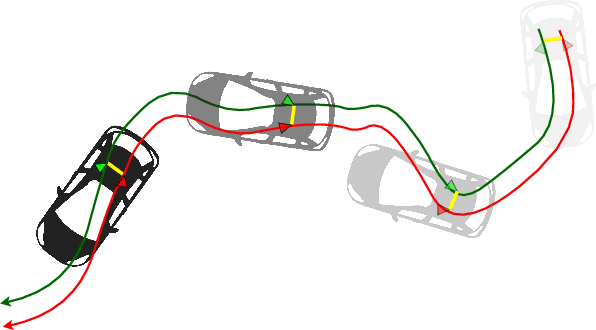

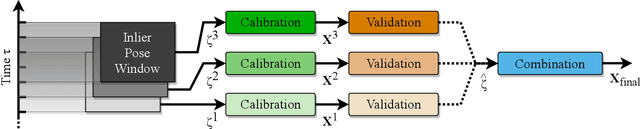

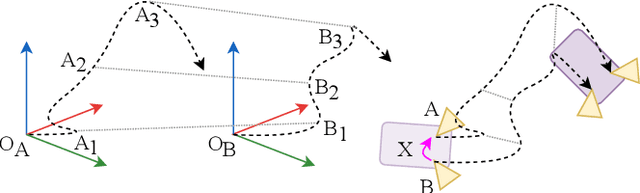

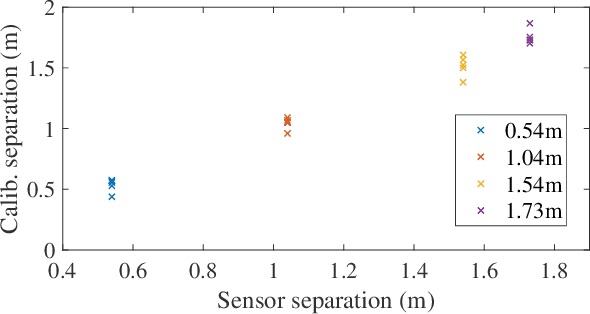

Accurate extrinsic sensor calibration is essential for both autonomous vehicles and robots. Traditionally this is an involved process that requires calibration targets and known fiducial markers, and it is generally performed in a lab. Moreover, even a small change in the sensor layout requires recalibration. With the anticipated arrival of consumer autonomous vehicles, there is demand for a system which can do this automatically, after deployment and without specialist human expertise. To address these limitations, we propose a flexible framework which can estimate extrinsic parameters without an explicit calibration stage, even for sensors with unknown scale. Our first contribution builds upon standard hand-eye calibration by jointly recovering scale. Our second contribution is that our system is made robust to imperfect and degenerate sensor data by collecting independent sets of poses and automatically selecting the most suitable ones. We show that our approach's robustness is essential for the target scenario. Unlike previous approaches, ours runs in real time and constantly estimates the extrinsic transform. For both an ideal experimental setup and a real use case, comparison against these approaches shows that we outperform the state-of-the-art. Furthermore, we demonstrate that the recovered scale may be applied to the full trajectory, circumventing the need for scale estimation via sensor fusion.

Turkey Behavior Identification System with a GUI Using Deep Learning and Video Analytics

Feb 09, 2021

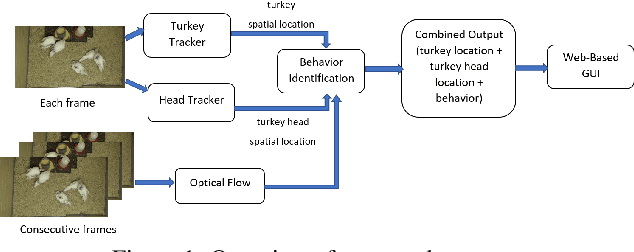

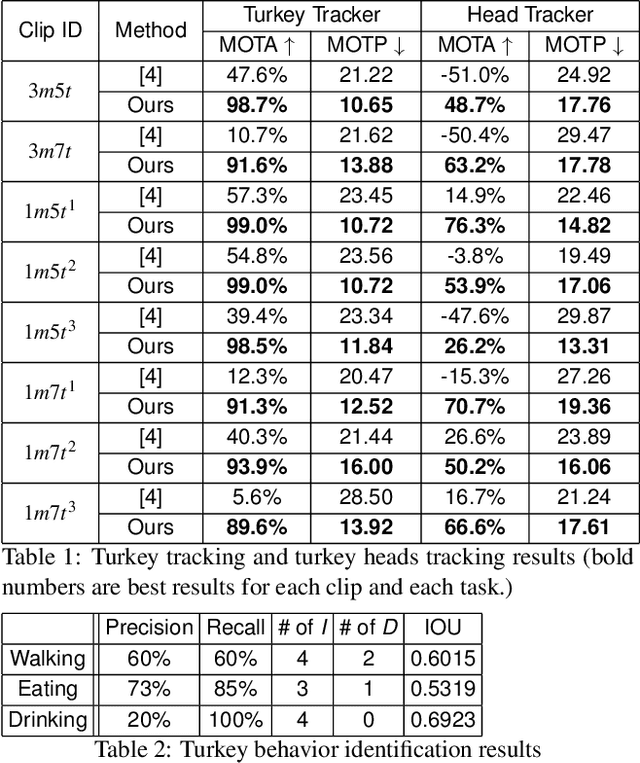

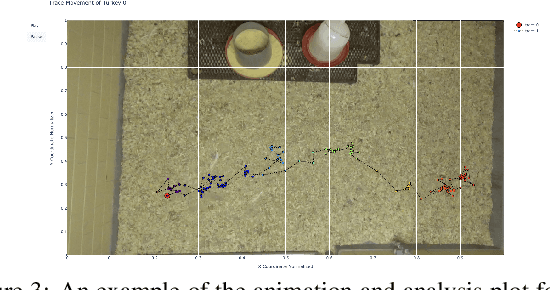

In this paper, we propose a video analytics system to identify the behavior of turkeys. Turkey behavior provides evidence to assess turkey welfare, which can be negatively impacted by uncomfortable ambient temperature and various diseases. In particular, healthy and sick turkeys behave differently in terms of the duration and frequency of activities such as eating, drinking, preening, and aggressive interactions. Our system incorporates recent advances in object detection and tracking to automate the process of identifying and analyzing turkey behavior captured by commercial-grade cameras. We combine deep learning and traditional image processing methods to address challenges in this practical agricultural problem. Our system also includes a web-based user interface to create visualizations of the automated analysis results. Together, we provide an improved tool for turkey researchers to assess turkey welfare without time-consuming and labor-intensive manual inspection.

AI on the Bog: Monitoring and Evaluating Cranberry Crop Risk

Nov 08, 2020

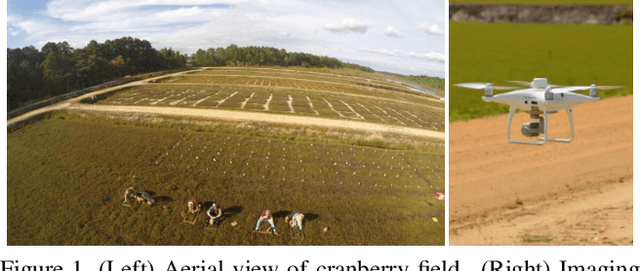

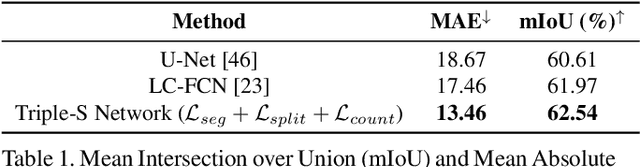

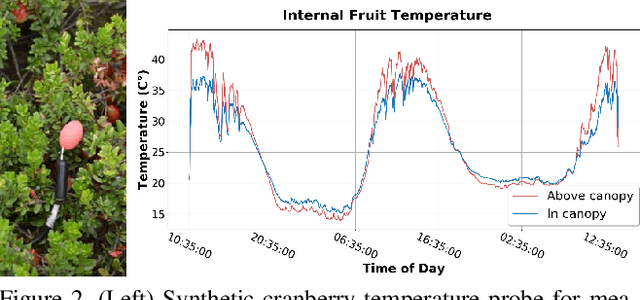

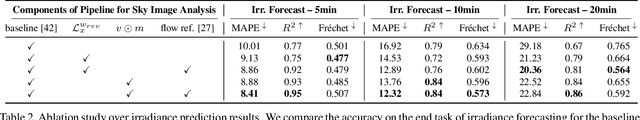

Machine vision for precision agriculture has attracted considerable research interest in recent years. The goal of this paper is to develop an end-to-end cranberry health monitoring system that supports real-time assessment of cranberry overheating, so that growers can make informed decisions that may sustain the economic viability of the farm. Toward this goal, we propose two main deep learning-based modules for: 1) cranberry fruit segmentation to delineate the exact fruit regions in the cranberry field image that are exposed to sun, 2) prediction of cloud coverage conditions and sun irradiance to estimate the inner temperature of exposed cranberries. We develop drone-based field data and ground-based sky data collection systems to collect video imagery at multiple time points for use in crop health analysis. Extensive evaluation on the data set shows that it is possible to predict exposed fruit's inner temperature with high accuracy (0.02% MAPE). The sun irradiance prediction error was found to be 8.41-20.36% MAPE in the 5-20 minute time horizon. With 62.54% mIoU for segmentation and 13.46 MAE for counting in exposed fruit identification, this system is capable of giving informed feedback to growers to take precautionary action (e.g. irrigation) in identified crop field regions with higher risk of sunburn in the near future. Though this novel system is applied to cranberry health monitoring, it represents a pioneering step forward for efficient farming and is useful in precision agriculture beyond the problem of cranberry overheating.
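The abstract reports its results as MAPE, MAE and mIoU. As a reminder of what those figures measure, here is a minimal sketch of the three metrics in plain Python. The function names and the flat-list label format are illustrative assumptions, not taken from the paper's code.

```python
def mape(actual, predicted):
    """Mean absolute percentage error, in percent. Assumes no actual value is zero."""
    if not actual or len(actual) != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")
    total = sum(abs((a - p) / a) for a, p in zip(actual, predicted))
    return 100.0 * total / len(actual)


def mae(actual, predicted):
    """Mean absolute error, in the units of the inputs (e.g. fruit counts)."""
    if not actual or len(actual) != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def mean_iou(true_labels, pred_labels, num_classes):
    """Mean intersection-over-union across classes.

    Labels are flat sequences of per-pixel class ids (hypothetical format).
    Classes absent from both sequences are skipped.
    """
    scores = []
    for c in range(num_classes):
        inter = sum(1 for t, p in zip(true_labels, pred_labels) if t == c and p == c)
        union = sum(1 for t, p in zip(true_labels, pred_labels) if t == c or p == c)
        if union:
            scores.append(inter / union)
    return sum(scores) / len(scores)
```

For instance, predictions of 110 and 180 against true values of 100 and 200 are each off by 10%, so the MAPE is 10%.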

Magnetization Transfer-Mediated MR Fingerprinting

Apr 06, 2021

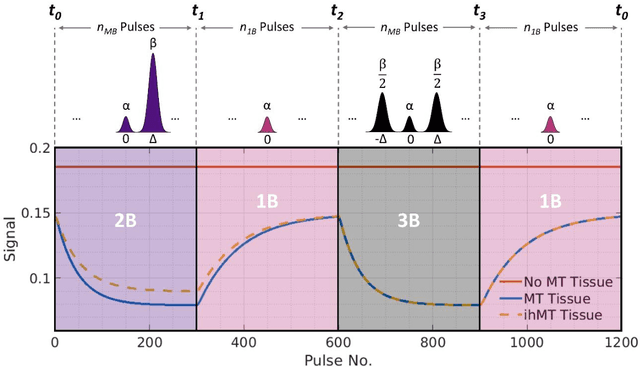

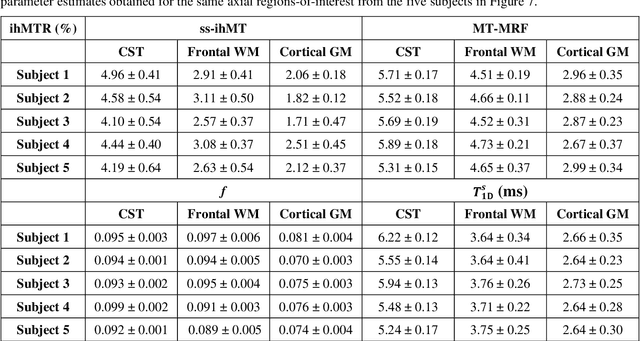

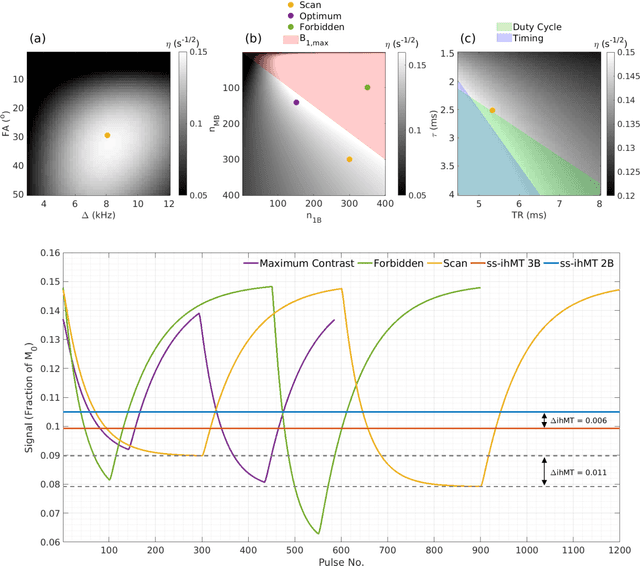

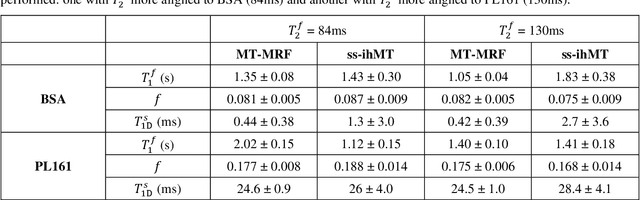

Purpose: Magnetization transfer (MT) and inhomogeneous MT (ihMT) contrasts are used in MRI to provide information about macromolecular tissue content. In particular, MT is sensitive to macromolecules and ihMT appears to be specific to myelinated tissue. This study proposes a technique to characterize MT and ihMT properties from a single acquisition, producing both semiquantitative contrast ratios, and quantitative parameter maps. Theory and Methods: Building upon previous work that uses multiband radiofrequency (RF) pulses to efficiently generate ihMT contrast, we propose a cyclic-steady-state approach that cycles between multiband and single-band pulses to boost the achieved contrast. Resultant time-variable signals are reminiscent of a magnetic resonance fingerprinting (MRF) acquisition, except that the signal fluctuations are entirely mediated by magnetization transfer effects. A dictionary-based low-rank inversion method is used to reconstruct the resulting images and to produce both semiquantitative MT ratio (MTR) and ihMT ratio (ihMTR) maps, as well as quantitative parameter estimates corresponding to an ihMT tissue model. Results: Phantom and in vivo brain data acquired at 1.5T demonstrate the expected contrast trends, with ihMTR maps showing contrast more specific to white matter (WM), as has been reported by others. Quantitative estimation of semisolid fraction and dipolar T1 was also possible and yielded measurements consistent with literature values in the brain. Conclusions: By cycling between multiband and single-band pulses, an entirely magnetization transfer mediated 'fingerprinting' method was demonstrated. This proof-of-concept approach can be used to generate semiquantitative maps and quantitatively estimate some macromolecular specific tissue parameters.

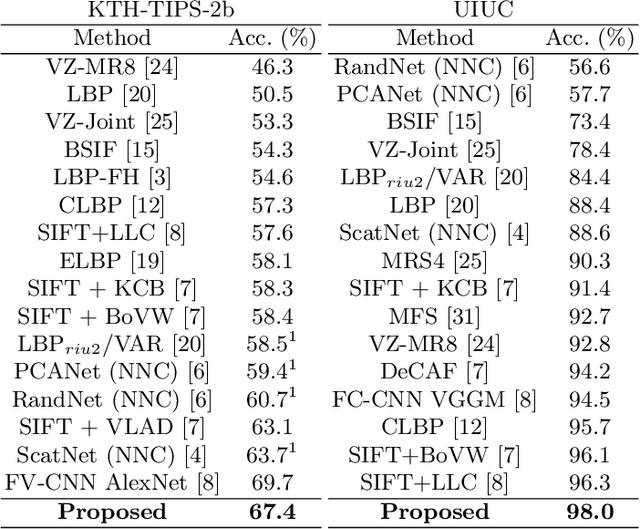

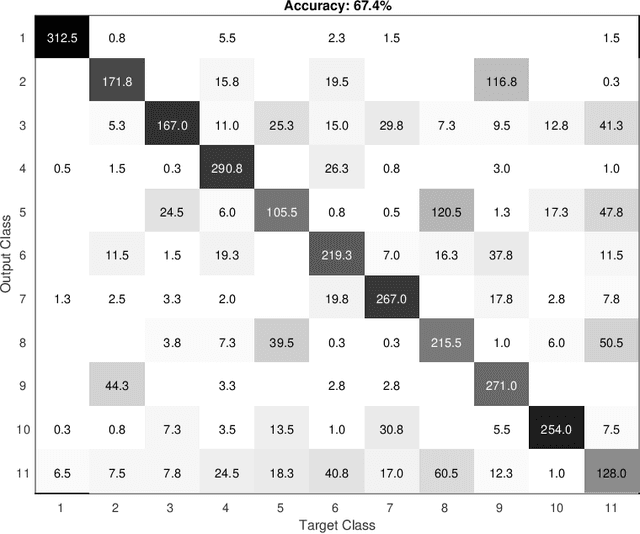

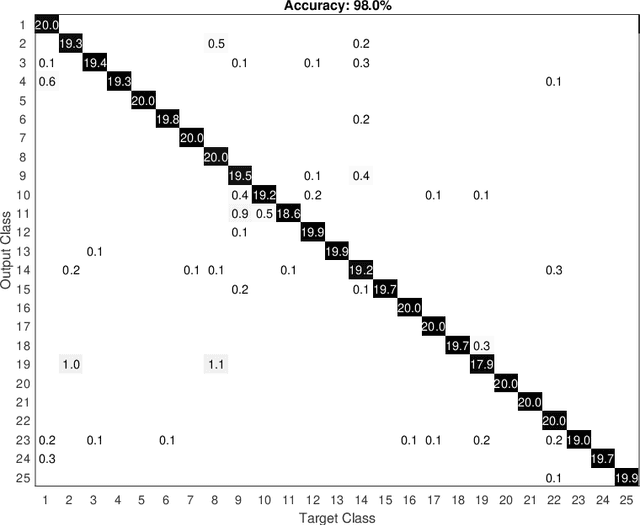

An application of a pseudo-parabolic modeling to texture image recognition

Feb 09, 2021

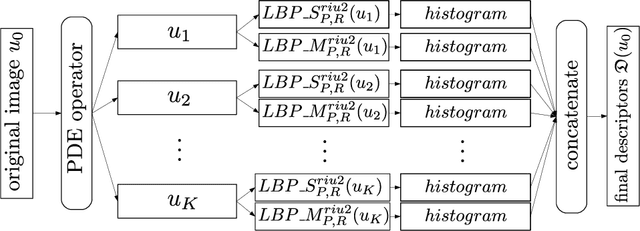

In this work, we present a novel methodology for texture image recognition using partial differential equation modeling. More specifically, we employ the pseudo-parabolic Buckley-Leverett equation to give dynamics to the digital image representation and collect local descriptors from those images as they evolve in time. For the local descriptors we employ the magnitude and signal binary patterns, and a simple histogram of these features was capable of achieving promising results in a classification task. We compare the accuracy over well-established benchmark texture databases, and the results demonstrate competitiveness, even with the most modern deep learning approaches. The achieved results open space for future investigation of this type of modeling for image analysis, especially when there is no large amount of data for training deep learning models and model-based approaches therefore arise as suitable alternatives.

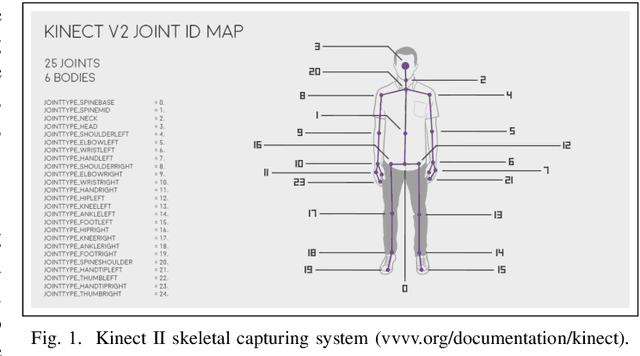

Space-Time Domain Tensor Neural Networks: An Application on Human Pose Recognition

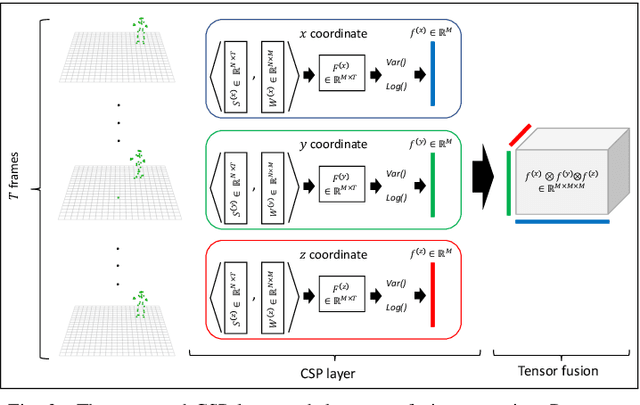

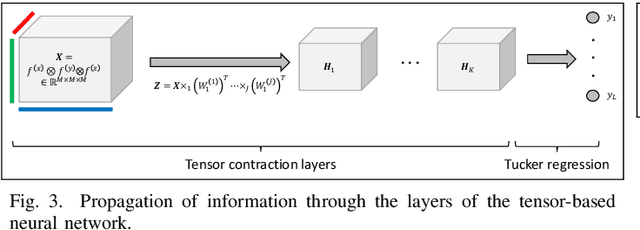

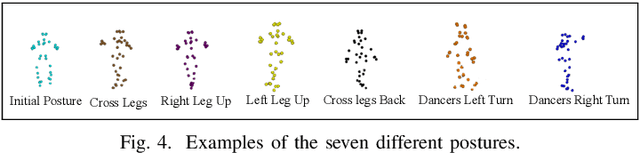

Apr 17, 2020

Recent advances in sensing technologies require the design and development of pattern recognition models capable of processing spatiotemporal data efficiently. In this work, we propose a spatially and temporally aware tensor-based neural network for human pose recognition using three-dimensional skeleton data. Our model employs three novel components: first, an input layer capable of constructing highly discriminative spatiotemporal features; second, a tensor fusion operation that produces compact yet rich representations of the data; and third, a tensor-based neural network that processes data representations in their original tensor form. Our model is end-to-end trainable and characterized by a small number of trainable parameters, making it suitable for problems where the annotated data is limited. Experimental validation of the proposed model indicates that it can achieve state-of-the-art performance. Although in this study we consider the problem of human pose recognition, our methodology is general enough to be applied to any pattern recognition problem involving spatiotemporal data from sensor networks.

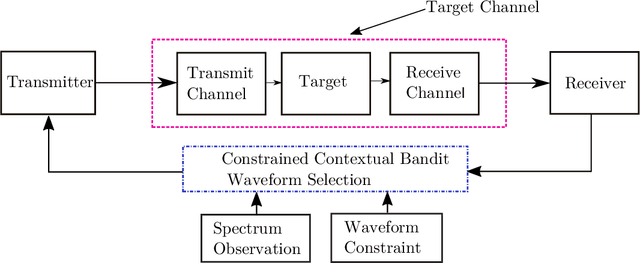

Constrained Contextual Bandit Learning for Adaptive Radar Waveform Selection

Mar 09, 2021

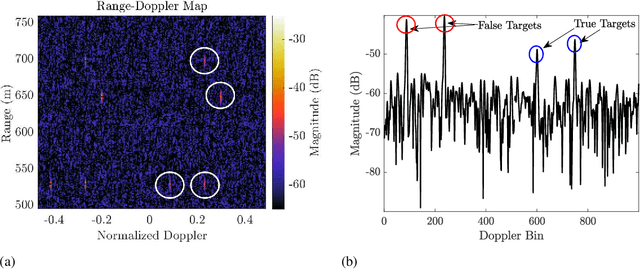

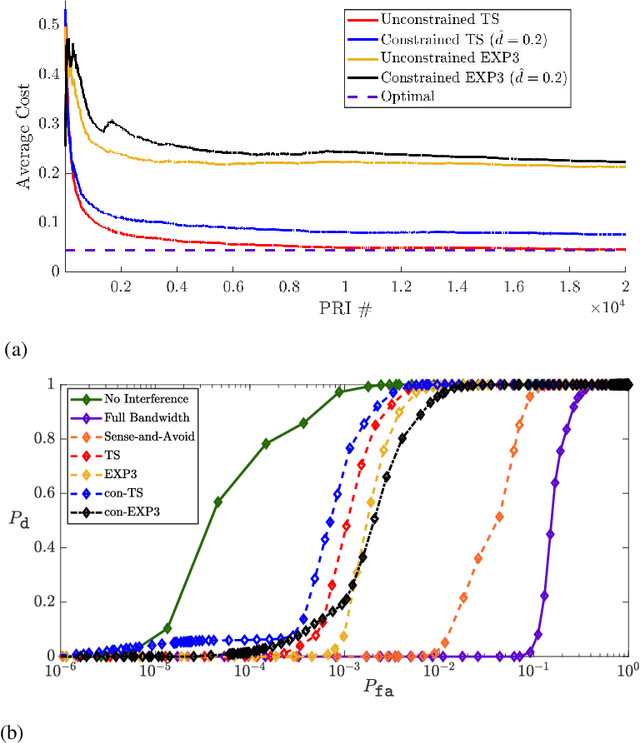

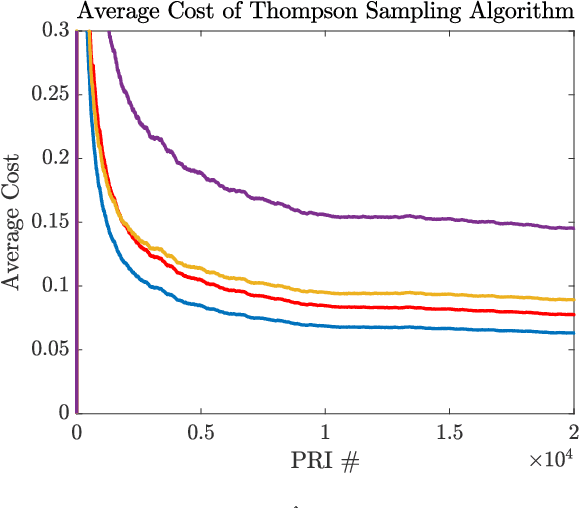

A sequential decision process in which an adaptive radar system repeatedly interacts with a finite-state target channel is studied. The radar is capable of passively sensing the spectrum at regular intervals, which provides side information for the waveform selection process. The radar transmitter uses the sequence of spectrum observations as well as feedback from a collocated receiver to select waveforms which accurately estimate target parameters. It is shown that the waveform selection problem can be effectively addressed using a linear contextual bandit formulation in a manner that is both computationally feasible and sample efficient. Stochastic and adversarial linear contextual bandit models are introduced, allowing the radar to achieve effective performance in broad classes of physical environments. Simulations in a radar-communication coexistence scenario, as well as in an adversarial radar-jammer scenario, demonstrate that the proposed formulation provides a substantial improvement in target detection performance when Thompson Sampling and EXP3 algorithms are used to drive the waveform selection process. Further, it is shown that the harmful impacts of pulse-agile behavior on coherently processed radar data can be mitigated by adopting a time-varying constraint on the radar's waveform catalog.
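The abstract names EXP3 as one of the algorithms driving waveform selection. The sketch below shows the textbook, non-contextual EXP3 update only; it does not use the spectrum observations as context and is not the paper's constrained formulation. The waveform success probabilities in `run_demo` are made-up values for illustration.

```python
import math
import random


class Exp3:
    """Textbook EXP3 for adversarial bandits with rewards in [0, 1]."""

    def __init__(self, n_arms, gamma=0.1, seed=None):
        if not 0.0 < gamma <= 1.0:
            raise ValueError("gamma must be in (0, 1]")
        self.n_arms = n_arms
        self.gamma = gamma
        self.weights = [1.0] * n_arms
        self.rng = random.Random(seed)

    def probabilities(self):
        # Mix the weight-proportional distribution with uniform exploration.
        total = sum(self.weights)
        return [(1.0 - self.gamma) * w / total + self.gamma / self.n_arms
                for w in self.weights]

    def select(self):
        probs = self.probabilities()
        arm = self.rng.choices(range(self.n_arms), weights=probs)[0]
        return arm, probs[arm]

    def update(self, arm, prob, reward):
        # Importance-weighted reward estimate keeps the update unbiased.
        estimate = reward / prob
        self.weights[arm] *= math.exp(self.gamma * estimate / self.n_arms)
        # Rescale so the largest weight is 1; probabilities are unchanged.
        top = max(self.weights)
        self.weights = [w / top for w in self.weights]


def run_demo(success_probs, rounds=5000, gamma=0.1, seed=0):
    """Simulate hypothetical waveforms with Bernoulli detection rewards."""
    env = random.Random(seed)
    policy = Exp3(len(success_probs), gamma=gamma, seed=seed + 1)
    for _ in range(rounds):
        arm, prob = policy.select()
        reward = 1.0 if env.random() < success_probs[arm] else 0.0
        policy.update(arm, prob, reward)
    return policy
```

Calling `run_demo([0.1, 0.2, 0.9]).probabilities()` returns the final selection distribution, which should concentrate on the third waveform while keeping at least `gamma / n_arms` probability on each of the others.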

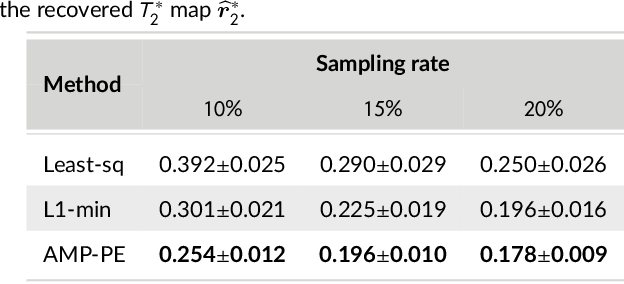

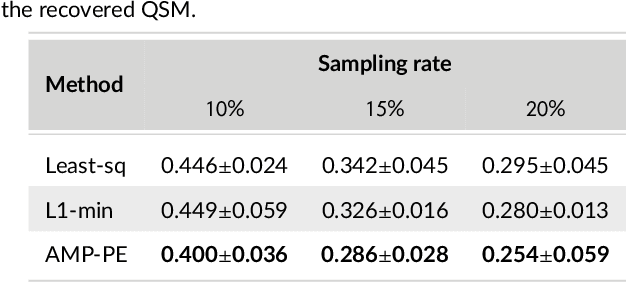

A Bayesian approach for $T_2^*$ Mapping and Quantitative Susceptibility Mapping

Mar 09, 2021

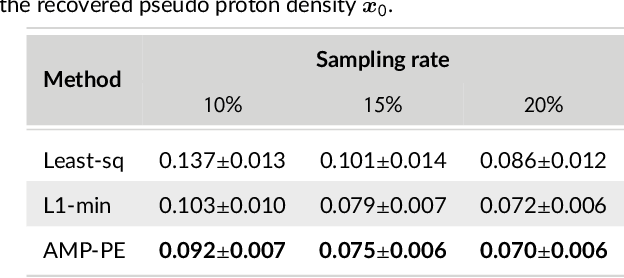

Magnetic resonance $T_2^*$ mapping and quantitative susceptibility mapping (QSM) provide direct and precise mappings of tissue contrasts. They are widely used to study iron deposition, hemorrhage and calcification in various clinical applications. In practice, the measurements can be undersampled in $k$-space to reduce the scan time needed for high-resolution 3D maps, and a sparse prior on the wavelet coefficients of images can be used to fill in the missing information via compressive sensing. To avoid the extensive parameter tuning process of conventional regularization methods, we adopt a Bayesian approach to perform $T_2^*$ mapping and QSM using approximate message passing (AMP): the sparse prior is enforced through probability distributions, and the distribution parameters can be automatically and adaptively estimated. In this paper we propose a new nonlinear AMP framework that incorporates the mono-exponential decay model, and use it to recover the proton density, the $T_2^*$ map and complex multi-echo images. The QSM can subsequently be computed from the multi-echo images. Experimental results show that the proposed approach successfully recovers the $T_2^*$ map and QSM across various sampling rates, and performs much better than the state-of-the-art $l_1$-norm regularization approach.
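The mono-exponential decay model mentioned above is commonly written as $S(TE) = \rho \, e^{-TE/T_2^*}$ for the magnitude at echo time $TE$. The sketch below fits that model to a single fully sampled, noise-free voxel by log-linear least squares. It illustrates the signal model only and is not the AMP framework proposed in the paper; the function names and example values are assumptions.

```python
import math


def decay_signal(rho, t2_star, echo_times):
    """Magnitude signal S(TE) = rho * exp(-TE / T2*) at each echo time."""
    return [rho * math.exp(-te / t2_star) for te in echo_times]


def fit_mono_exponential(echo_times, magnitudes):
    """Estimate (rho, T2*) from multi-echo magnitudes of one voxel.

    Taking logs gives ln S = ln rho - TE / T2*, a straight line in TE,
    which is fitted by ordinary least squares.
    """
    n = len(echo_times)
    if n < 2 or n != len(magnitudes):
        raise ValueError("need at least two echoes and matching lengths")
    if any(m <= 0 for m in magnitudes):
        raise ValueError("magnitudes must be positive to take logs")
    logs = [math.log(m) for m in magnitudes]
    mean_te = sum(echo_times) / n
    mean_log = sum(logs) / n
    sxx = sum((te - mean_te) ** 2 for te in echo_times)
    sxy = sum((te - mean_te) * (lg - mean_log) for te, lg in zip(echo_times, logs))
    slope = sxy / sxx
    if slope >= 0:
        raise ValueError("signal does not decay with echo time")
    intercept = mean_log - slope * mean_te
    return math.exp(intercept), -1.0 / slope
```

With echo times in milliseconds, the returned $T_2^*$ is also in milliseconds. On noisy or undersampled data this simple fit degrades, which is the setting the Bayesian approach in the abstract targets.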

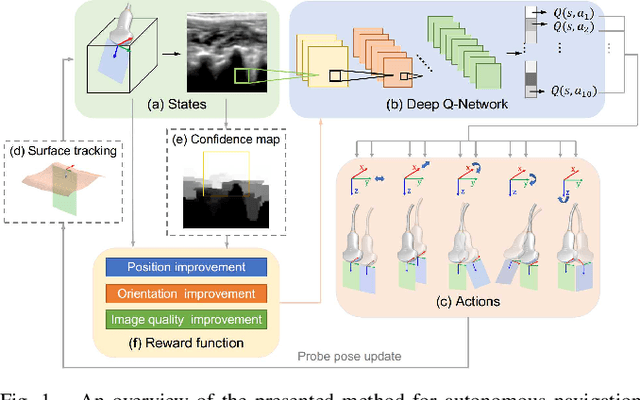

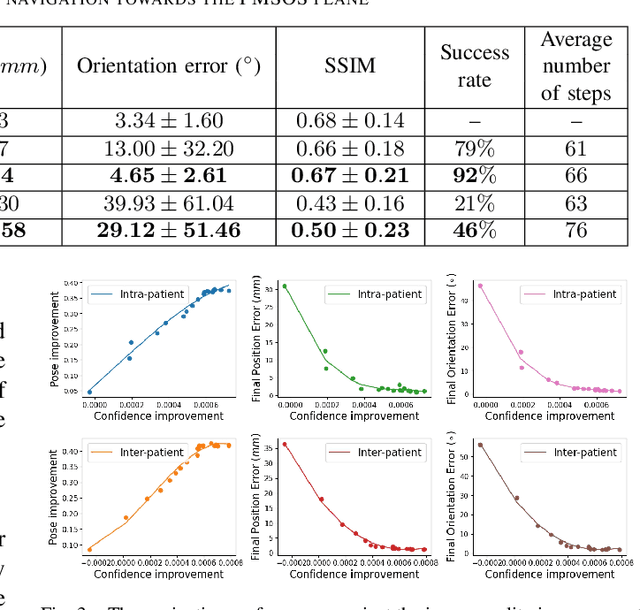

Autonomous Navigation of an Ultrasound Probe Towards Standard Scan Planes with Deep Reinforcement Learning

Mar 01, 2021

Autonomous ultrasound (US) acquisition is an important yet challenging task, as it involves interpretation of the highly complex and variable images and their spatial relationships. In this work, we propose a deep reinforcement learning framework to autonomously control the 6-D pose of a virtual US probe based on real-time image feedback to navigate towards the standard scan planes under the restrictions in real-world US scans. Furthermore, we propose a confidence-based approach to encode the optimization of image quality in the learning process. We validate our method in a simulation environment built with real-world data collected in the US imaging of the spine. Experimental results demonstrate that our method can perform reproducible US probe navigation towards the standard scan plane with an accuracy of $4.91\,\mathrm{mm}/4.65^\circ$ in the intra-patient setting, and accomplish the task in the intra- and inter-patient settings with a success rate of $92\%$ and $46\%$, respectively. The results also show that the introduction of image quality optimization in our method can effectively improve the navigation performance.

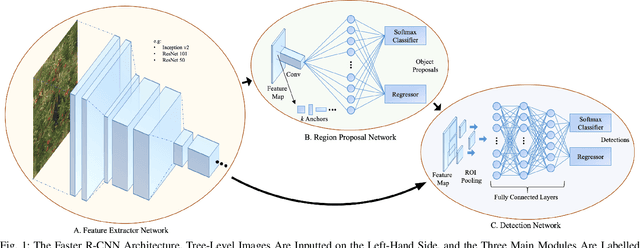

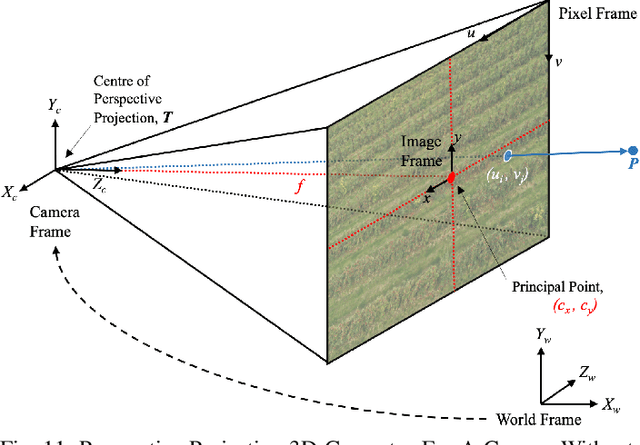

An Artificial Intelligence System for Combined Fruit Detection and Georeferencing, Using RTK-Based Perspective Projection in Drone Imagery

Jan 01, 2021

This work presents an Artificial Intelligence (AI) system, based on the Faster Region-Based Convolutional Neural Network (Faster R-CNN) framework, which detects and counts apples from oblique, aerial drone imagery of large commercial orchards. To reduce computational cost, a novel precursory stage to the network is designed to preprocess raw imagery into cropped images of individual trees. Unique geospatial identifiers are allocated to these using the perspective projection model. This employs Real-Time Kinematic (RTK) data, Digital Terrain and Surface Models (DTM and DSM), as well as internal and external camera parameters. The bulk of the experiments, however, focus on tuning hyperparameters in the detection network itself. Apples which are on trees and apples which are on the ground are treated as separate classes. A mean Average Precision (mAP) metric, calibrated by the size of the two classes, is devised to mitigate spurious results. Anchor box design is of key interest due to the scale of the apples. As such, a k-means clustering approach, never before seen in literature for Faster R-CNN, resulted in the most significant improvements to calibrated mAP. Other experiments showed that the maximum number of box proposals should be 225; the initial learning rate of 0.001 is best applied to the adaptive RMSProp optimiser; and ResNet-101 is the ideal base feature extractor when considering mAP and, to a lesser extent, inference time. The amalgamation of the optimal hyperparameters leads to a model with a calibrated mAP of 0.7627.
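The abstract does not say how its k-means anchor design is set up. One common variant, popularised by YOLOv2, clusters ground-truth box sizes using 1 − IoU as the distance. The sketch below follows that variant as an assumption; the function names, the deterministic initialisation and the example boxes are all illustrative rather than taken from the paper.

```python
def iou_wh(box, anchor):
    """IoU of two (width, height) boxes aligned at a common corner."""
    inter = min(box[0], anchor[0]) * min(box[1], anchor[1])
    return inter / (box[0] * box[1] + anchor[0] * anchor[1] - inter)


def _area(box):
    return box[0] * box[1]


def kmeans_anchors(boxes, k, max_iter=100):
    """Cluster (width, height) pairs into k anchor shapes, sorted by area.

    Each box joins the anchor with the highest IoU (lowest 1 - IoU distance).
    Initial anchors are picked at evenly spaced area quantiles so the result
    is deterministic (an illustrative choice, not from the paper).
    """
    if not 1 <= k <= len(boxes):
        raise ValueError("k must be between 1 and the number of boxes")
    ordered = sorted(boxes, key=_area)
    n = len(ordered)
    anchors = [ordered[(2 * i + 1) * n // (2 * k)] for i in range(k)]
    for _ in range(max_iter):
        clusters = [[] for _ in range(k)]
        for box in boxes:
            best = max(range(k), key=lambda j: iou_wh(box, anchors[j]))
            clusters[best].append(box)
        updated = []
        for j, members in enumerate(clusters):
            if members:
                mean_w = sum(b[0] for b in members) / len(members)
                mean_h = sum(b[1] for b in members) / len(members)
                updated.append((mean_w, mean_h))
            else:
                updated.append(anchors[j])  # keep an empty cluster's anchor
        if updated == anchors:
            break
        anchors = updated
    return sorted(anchors, key=_area)
```

Given pixel sizes such as `[(10, 10), (12, 11), (11, 12), (50, 60), (52, 58), (49, 61)]` and `k=2`, the function returns one small and one large anchor, each the mean of its group. The resulting shapes would then be supplied to the detector's anchor generator in place of hand-picked scales and aspect ratios.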
