"Time": models, code, and papers

Towards Real-time Eyeblink Detection in the Wild: Dataset, Theory and Practices

Feb 21, 2019

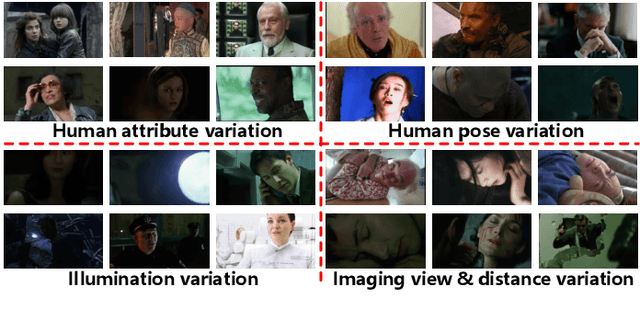

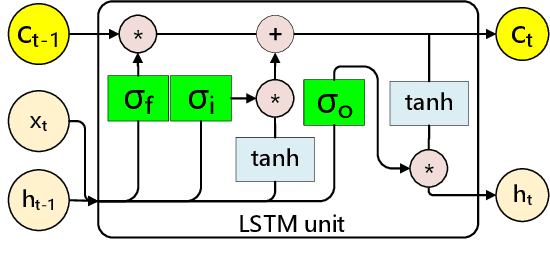

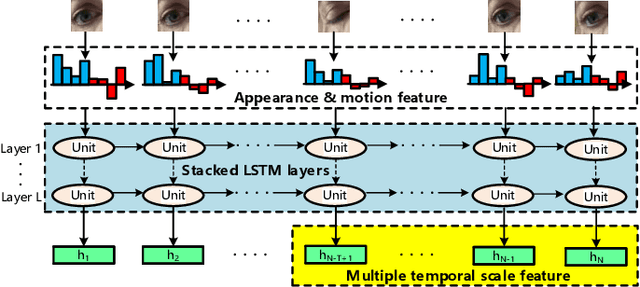

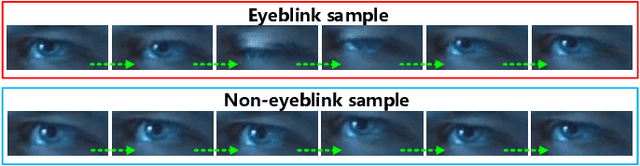

Effective real-time eyeblink detection has a wide range of applications, such as deception detection, driver fatigue detection, and face anti-spoofing. Although numerous efforts have been made, most of them address eyeblink detection under constrained indoor conditions with relatively consistent subject and environment setups. Practical applications, however, require eyeblink detection in the wild, which is more challenging and, to our knowledge, has not been well studied. In this paper, we shed light on this research topic. We first establish a labelled eyeblink-in-the-wild dataset (HUST-LEBW) of 673 eyeblink video samples (381 positives and 292 negatives). These samples are captured from unconstrained movies, with dramatic variation in human attributes, human pose, illumination conditions, imaging configuration, etc. We then formulate eyeblink detection as a spatial-temporal pattern recognition problem. After locating and tracking the human eye using the SeetaFace engine and a KCF tracker respectively, a modified LSTM model able to capture multi-scale temporal information is proposed to perform eyeblink verification. A feature extraction approach that reveals appearance and motion characteristics simultaneously is also proposed. Experiments on HUST-LEBW demonstrate the superiority and efficiency of our approach. They also verify that existing eyeblink detection methods cannot achieve satisfactory performance in the wild.

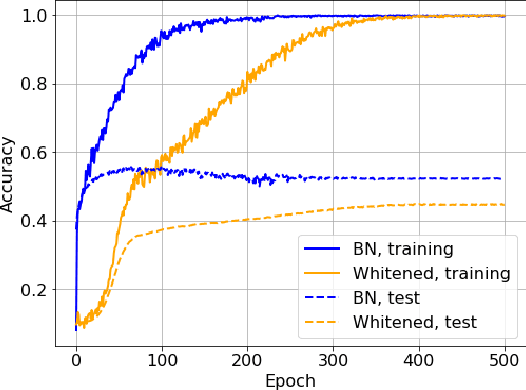

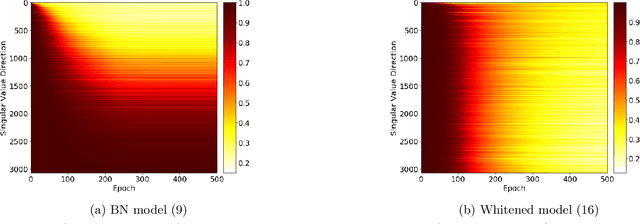

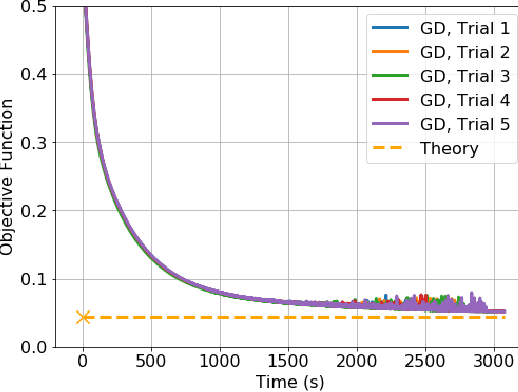

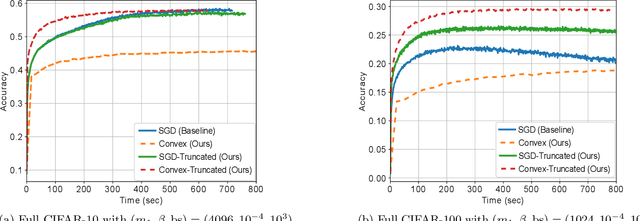

Demystifying Batch Normalization in ReLU Networks: Equivalent Convex Optimization Models and Implicit Regularization

Mar 02, 2021

Batch Normalization (BN) is a commonly used technique to accelerate and stabilize training of deep neural networks. Despite its empirical success, a full theoretical understanding of BN is yet to be developed. In this work, we analyze BN through the lens of convex optimization. We introduce an analytic framework based on convex duality to obtain exact convex representations of weight-decay regularized ReLU networks with BN, which can be trained in polynomial-time. Our analyses also show that optimal layer weights can be obtained as simple closed-form formulas in the high-dimensional and/or overparameterized regimes. Furthermore, we find that Gradient Descent provides an algorithmic bias effect on the standard non-convex BN network, and we design an approach to explicitly encode this implicit regularization into the convex objective. Experiments with CIFAR image classification highlight the effectiveness of this explicit regularization for mimicking and substantially improving the performance of standard BN networks.
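For readers unfamiliar with the operation being analyzed, the following is a minimal sketch of the standard training-mode BN transform followed by a ReLU, applied to a single feature across a mini-batch. It is not the convex reformulation described in the abstract; the function names and the `gamma`, `beta`, and `eps` parameters are illustrative assumptions.

```python
import math

def batch_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature across a mini-batch, then scale and shift.

    gamma, beta, and eps are illustrative defaults, not values from the paper.
    """
    n = len(batch)
    mean = sum(batch) / n
    # Biased (population) variance, as used by BN in training mode.
    var = sum((x - mean) ** 2 for x in batch) / n
    return [gamma * (x - mean) / math.sqrt(var + eps) + beta for x in batch]

def relu(values):
    """Elementwise ReLU: negative activations are clipped to zero."""
    return [max(0.0, v) for v in values]

activations = [1.0, 2.0, 3.0, 4.0]
normalized = batch_norm(activations)
output = relu(normalized)
```

After normalization the batch has approximately zero mean and unit variance, so the ReLU zeroes out the below-average half of the batch.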

Human-in-the-loop Handling of Knowledge Drift

Mar 27, 2021

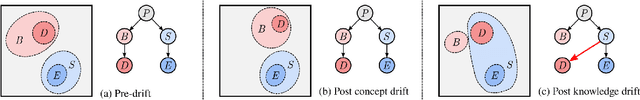

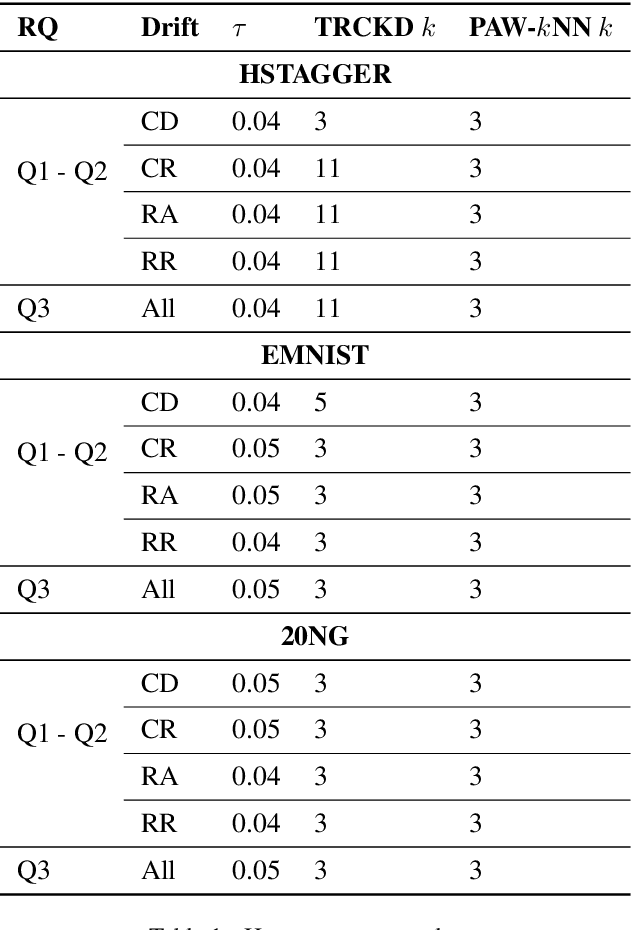

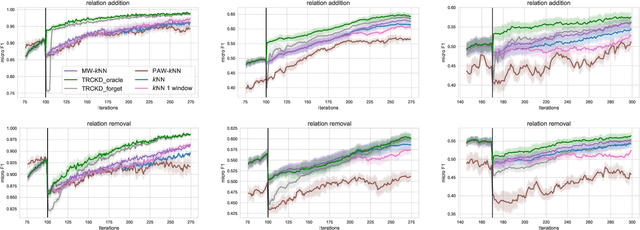

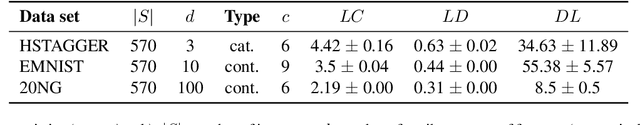

We introduce and study knowledge drift (KD), a complex form of drift that occurs in hierarchical classification. Under KD the vocabulary of concepts, their individual distributions, and the is-a relations between them can all change over time. The main challenge is that, since the ground-truth concept hierarchy is unobserved, it is hard to tell apart different forms of KD. For instance, introducing a new is-a relation between two concepts might be confused with individual changes to those concepts, but it is far from equivalent. Failure to identify the right kind of KD compromises the concept hierarchy used by the classifier, leading to systematic prediction errors. Our key observation is that in many human-in-the-loop applications (like smart personal assistants) the user knows whether and what kind of drift occurred recently. Motivated by this, we introduce TRCKD, a novel approach that combines automated drift detection and adaptation with an interactive stage in which the user is asked to disambiguate between different kinds of KD. In addition, TRCKD implements a simple but effective knowledge-aware adaptation strategy. Our simulations show that often a handful of queries to the user are enough to substantially improve prediction performance on both synthetic and realistic data.

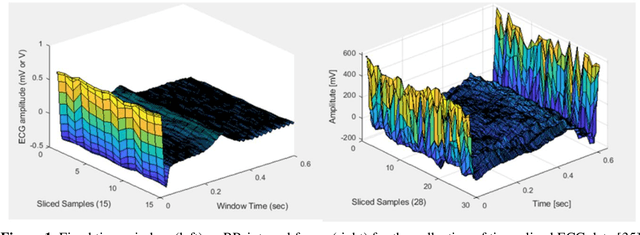

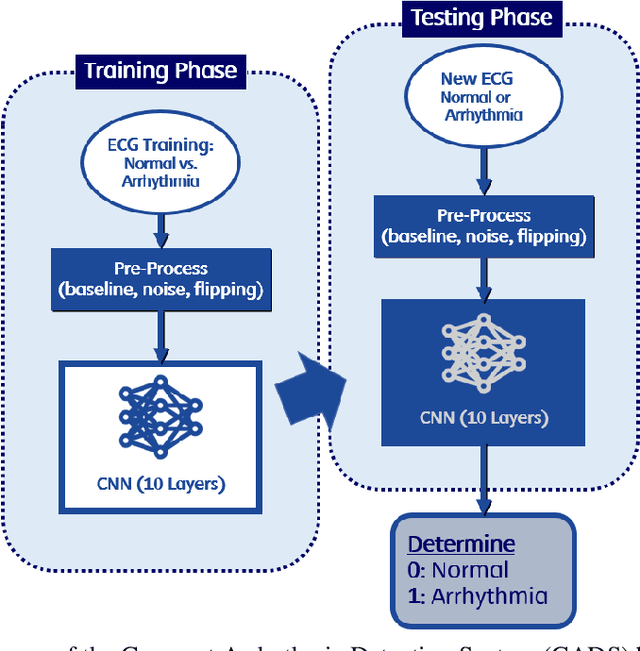

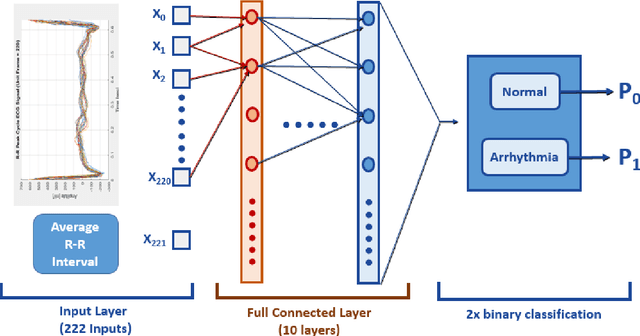

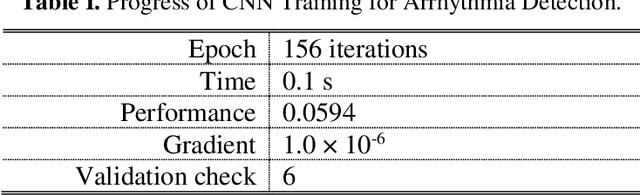

Deep Learning-Based Arrhythmia Detection Using RR-Interval Framed Electrocardiograms

Dec 01, 2020

Deep learning applied to electrocardiogram (ECG) data can be used to achieve personal authentication in biometric security applications, but it has not been widely used to diagnose cardiovascular disorders. We developed a deep learning model for the detection of arrhythmia in which time-sliced ECG data representing the distance between successive R-peaks are used as the input for a convolutional neural network (CNN). The main objective is to develop a compact deep learning-based detection system that makes minimal use of the dataset yet delivers a confident arrhythmia detection accuracy. This compact system can be implemented in wearable devices or real-time monitoring equipment because no feature extraction step is required for complex ECG waveforms; only the R-peak data is needed. In two consecutive test runs, the Compact Arrhythmia Detection System (CADS) matched the performance of conventional systems for the detection of arrhythmia. All features of the CADS are fully implemented and publicly available in MATLAB.
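The idea of slicing an ECG trace at successive R-peaks can be sketched as follows. This is a hedged illustration in Python rather than the authors' MATLAB implementation: the threshold-based peak detector, the function names, and the synthetic signal are all assumptions made for the example.

```python
def detect_r_peaks(signal, threshold, refractory):
    """Return indices of local maxima above `threshold`.

    Peaks closer than `refractory` samples to the previous peak are skipped.
    This naive detector is an assumption, not the method used in the paper.
    """
    peaks = []
    for i in range(1, len(signal) - 1):
        is_local_max = signal[i] > signal[i - 1] and signal[i] >= signal[i + 1]
        if is_local_max and signal[i] > threshold:
            if not peaks or i - peaks[-1] >= refractory:
                peaks.append(i)
    return peaks

def rr_intervals(peaks, sampling_rate_hz):
    """Time in seconds between each pair of successive R-peaks."""
    return [(b - a) / sampling_rate_hz for a, b in zip(peaks, peaks[1:])]

def rr_frames(signal, peaks):
    """Slice the signal into one frame per RR interval."""
    return [signal[a:b] for a, b in zip(peaks, peaks[1:])]

# Synthetic trace: flat baseline with unit spikes standing in for R-peaks.
sampling_rate_hz = 100
signal = [0.0] * 400
for idx in (50, 150, 230, 330):
    signal[idx] = 1.0

peaks = detect_r_peaks(signal, threshold=0.5, refractory=20)
intervals = rr_intervals(peaks, sampling_rate_hz)
frames = rr_frames(signal, peaks)
```

In a pipeline like the one the abstract describes, frames of this kind (resampled to a fixed length) would be the CNN input; that resampling step is omitted here.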

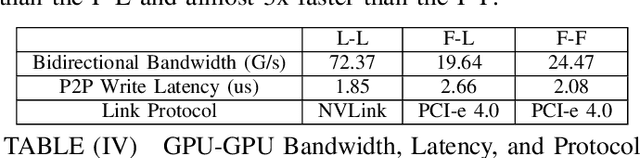

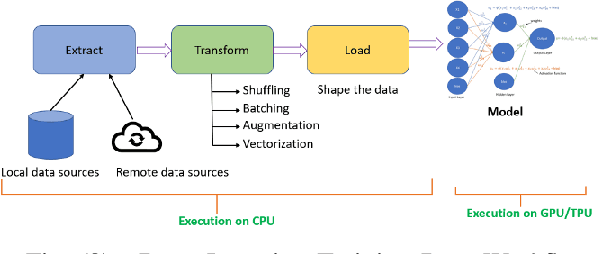

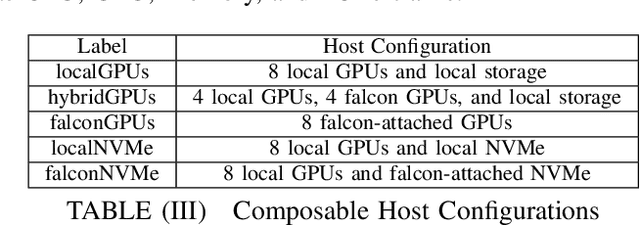

Performance Analysis of Deep Learning Workloads on a Composable System

Mar 19, 2021

A composable infrastructure is defined as resources, such as compute, storage, accelerators and networking, that are shared in a pool and that can be grouped in various configurations to meet application requirements. This freedom to 'mix and match' resources dynamically allows for experimentation early in the design cycle, prior to the final architectural design or hardware implementation of a system. This design provides flexibility to serve a variety of workloads and provides a dynamic co-design platform that allows experiments and measurements in a controlled manner. For instance, key performance bottlenecks can be revealed early in the experimentation phase, thus avoiding costly and time-consuming mistakes. Additionally, various system-level topologies can be evaluated when experimenting with new Systems on Chip (SoCs) and new accelerator types. This paper details the design of an enterprise composable infrastructure that we have implemented and made available to our partners in the IBM Research AI Hardware Center (AIHC). Our experimental evaluations on the composable system give insights into how the system works and evaluate the impact of various resource aggregations and reconfigurations on representative deep learning benchmarks.

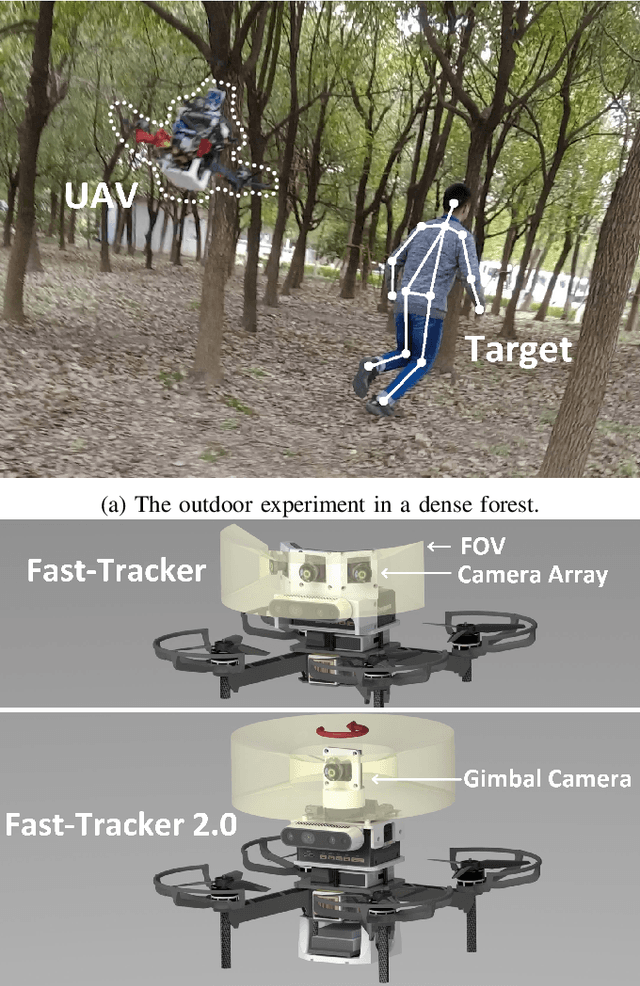

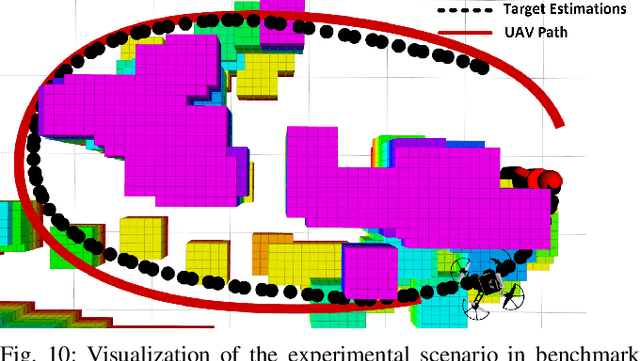

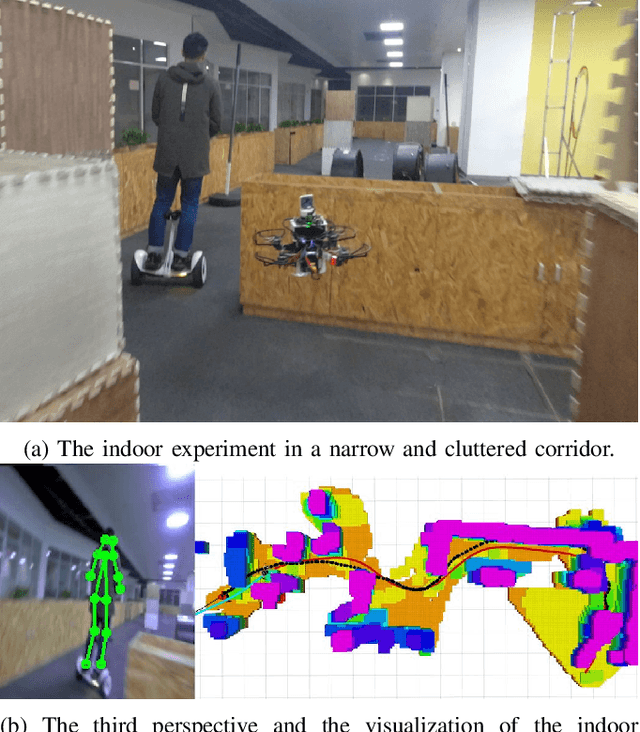

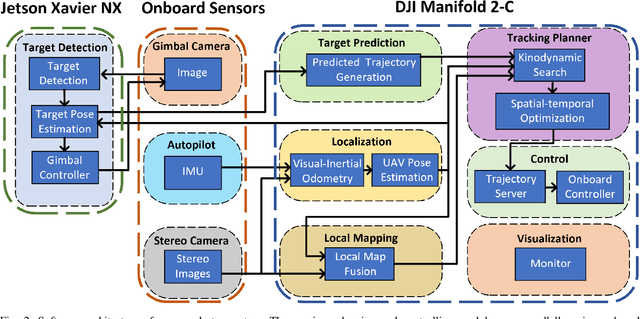

Fast-Tracker 2.0: Improving Autonomy of Aerial Tracking with Active Vision and Human Location Regression

Mar 11, 2021

In recent years, several works have advanced the development of aerial tracking. One representative work is our previous work, Fast-tracker, which is applicable to various challenging tracking scenarios. However, it suffers from two main drawbacks: 1) oversimplified target detection that relies on artificial markers and 2) the conflict between simultaneous target and environment perception with limited onboard vision. In this paper, we upgrade the target detection in Fast-tracker to detect and localize a human target based on deep learning and non-linear regression, which addresses the former problem. For the latter, we equip the quadrotor system with 360-degree active vision on a customized gimbal camera. Furthermore, we improve the tracking trajectory planning in Fast-tracker by incorporating an occlusion-aware mechanism that generates observable tracking trajectories. Comprehensive real-world tests confirm the proposed system's robustness and real-time capability. Benchmark comparisons with Fast-tracker validate that the proposed system presents better tracking performance even when performing more difficult tracking tasks.

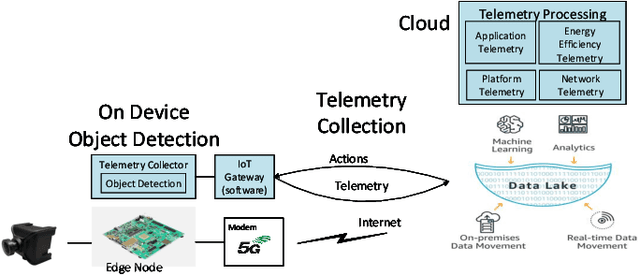

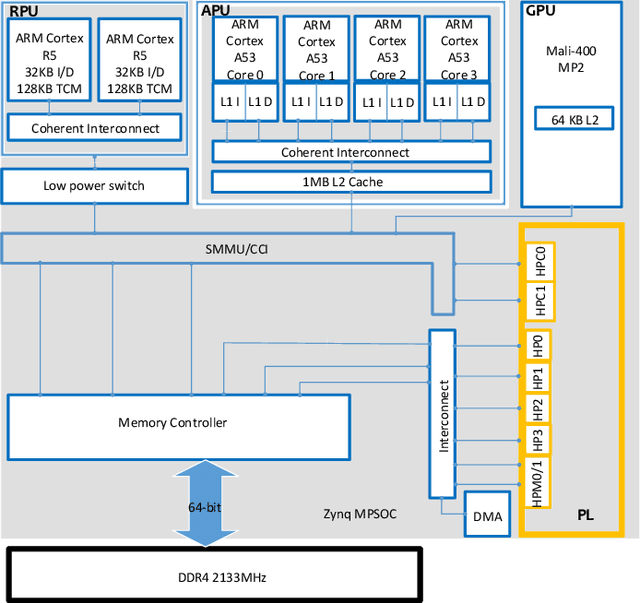

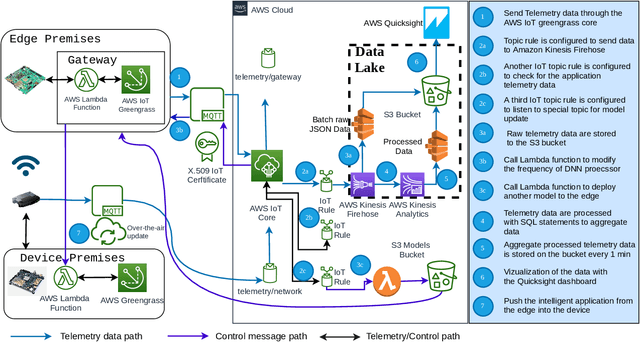

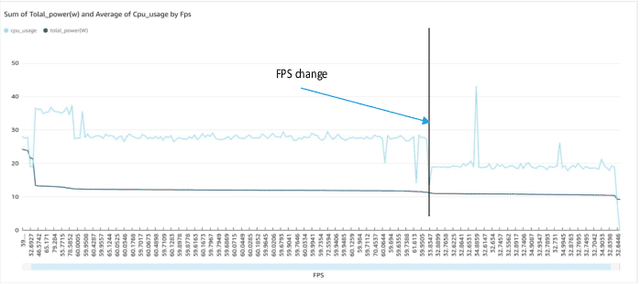

Data Collection and Acceleration Infrastructure for FPGA-based Edge AI Applications

Mar 11, 2021

As the data produced by IoT applications continues to explode, there is a growing need to bring computing power closer to the source of the data to meet the response-time, power-consumption and cost goals of performance-critical applications such as Industrial Internet of Things (IIoT), Automated Driving, Medical Imaging or Surveillance. This paper proposes an FPGA-based data collection and utilization framework that allows runtime platform and application data to be sent to an edge and cloud system via data collection agents running close to the platform. Agents are connected to a cloud system able to train AI models to improve the overall energy efficiency of an AI application executed on an FPGA-based edge platform. In the implementation part we show that it is feasible to collect relevant data from an FPGA platform, transmit the data to a cloud system for processing, and receive feedback actions to execute an edge AI application energy efficiently. As future work we foresee the possibility to train, deploy and continuously improve a base model able to efficiently adapt the execution of edge applications.

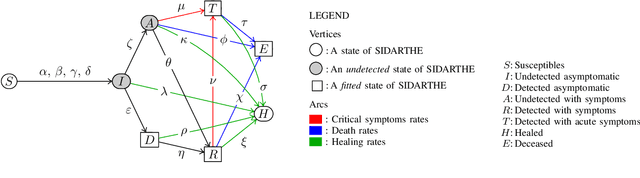

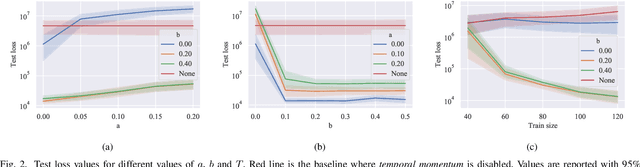

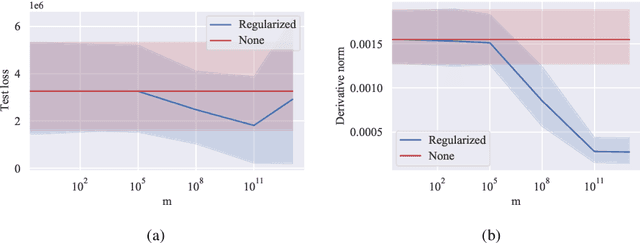

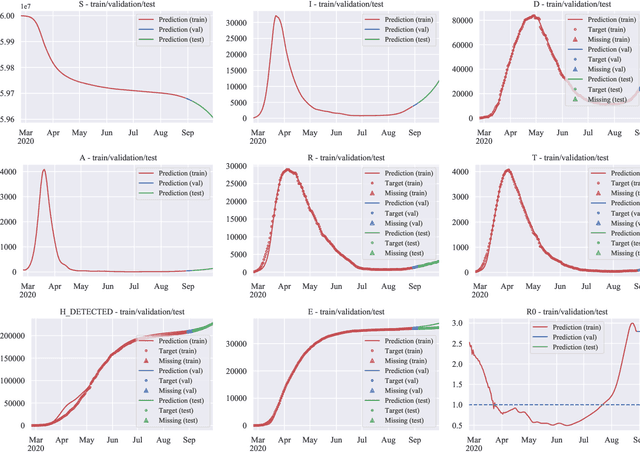

An Optimal Control Approach to Learning in SIDARTHE Epidemic model

Oct 28, 2020

The COVID-19 outbreak has stimulated interest in novel epidemiological models that predict the course of the epidemic and so help plan effective control strategies. In particular, in order to properly interpret the available data, it has become clear that one must go beyond most classic epidemiological models and consider models that, like the recently proposed SIDARTHE, offer a richer description of the stages of infection. The problem of learning the parameters of these models is of crucial importance, especially when assuming that they are time-variant, which further enriches their effectiveness. In this paper we propose a general approach for learning time-variant parameters of dynamic compartmental models from epidemic data. We formulate the problem in terms of a functional risk that depends on the learning variables through the solutions of a dynamic system. The resulting variational problem is then solved by using a gradient flow on a suitable, regularized functional. We forecast the epidemic evolution in Italy and France. Results indicate that the model provides reliable and challenging predictions over all available data, and they highlight the fundamental role of the chosen strategy on the time-variant parameters.
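To make the notion of a compartmental model with time-variant parameters concrete, here is a minimal sketch that uses a three-compartment SIR model as a deliberately simplified stand-in for SIDARTHE. The forward-Euler integrator, the step-function transmission rate, the squared-error risk, and all names and numeric values are assumptions for illustration; the gradient-flow learning procedure from the abstract is not implemented.

```python
def simulate_sir(beta_of_t, gamma, s0, i0, r0, days, dt=0.1):
    """Forward-Euler simulation of an SIR model with a time-varying beta.

    Returns one (S, I, R) tuple per day, starting with the initial state.
    SIR is a simplified stand-in here, not the SIDARTHE model itself.
    """
    s, i, r = s0, i0, r0
    steps_per_day = round(1 / dt)
    trajectory = [(s, i, r)]
    for day in range(days):
        for k in range(steps_per_day):
            t = day + k * dt
            new_infections = beta_of_t(t) * s * i * dt
            new_recoveries = gamma * i * dt
            s -= new_infections
            i += new_infections - new_recoveries
            r += new_recoveries
        trajectory.append((s, i, r))
    return trajectory

def squared_error_risk(trajectory, observed_infected):
    """Sum of squared errors between simulated and observed infected fractions."""
    return sum((state[1] - obs) ** 2
               for state, obs in zip(trajectory, observed_infected))

def beta_with_lockdown(t):
    """Hypothetical transmission rate that drops on day 20."""
    return 0.4 if t < 20 else 0.1

trajectory = simulate_sir(beta_with_lockdown, gamma=0.1,
                          s0=0.99, i0=0.01, r0=0.0, days=60)
```

A learning procedure in the spirit of the abstract would adjust `beta_of_t` to reduce a risk such as `squared_error_risk` against reported case data, with a regularizer that keeps the parameter trajectory smooth.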

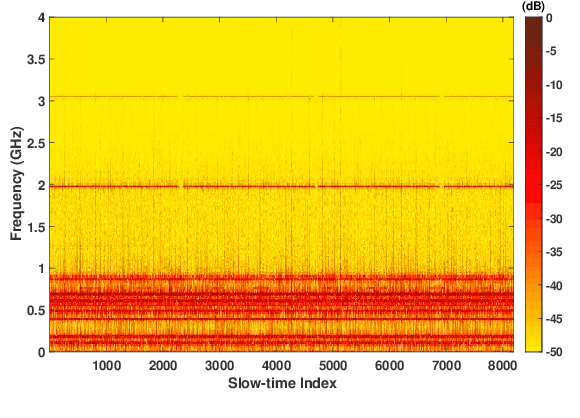

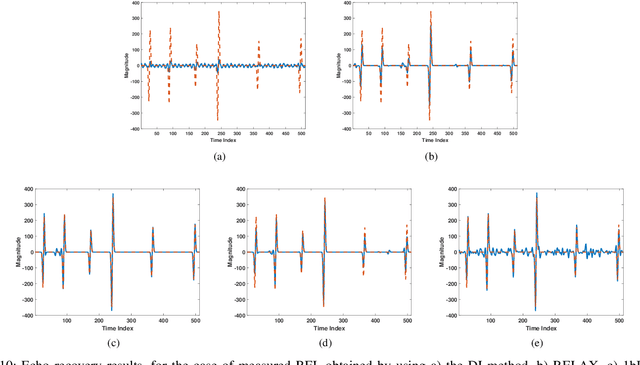

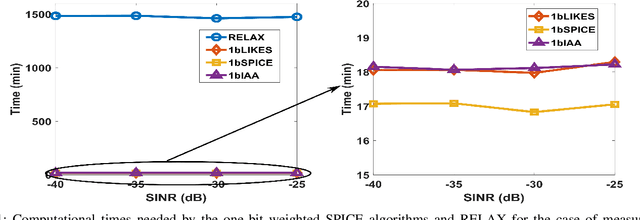

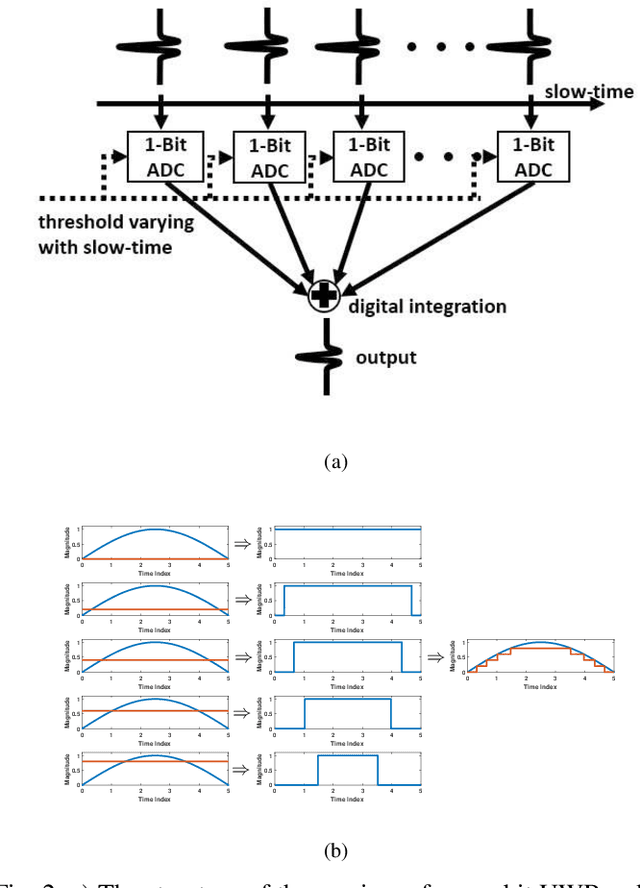

Joint RFI Mitigation and Radar Echo Recovery for One-Bit UWB Radar

Mar 19, 2021

Radio frequency interference (RFI) mitigation and radar echo recovery are critically important for the proper functioning of ultra-wideband (UWB) radar systems using one-bit sampling techniques. We recently introduced a technique for one-bit UWB radar, which first uses a majorization-minimization method for RFI parameter estimation followed by a sparse method for radar echo recovery. However, this technique suffers from high computational complexity due to the need to estimate the parameters of each RFI source separately and iteratively. In this paper, we present a computationally efficient joint RFI mitigation and radar echo recovery framework to greatly reduce the computational cost. Specifically, we exploit the sparsity of RFI in the fast-frequency domain and the sparsity of radar echoes in the fast-time domain to design a one-bit weighted SPICE (SParse Iterative Covariance-based Estimation) based framework for the joint RFI mitigation and radar echo recovery of one-bit UWB radar. Both simulated and experimental results are presented to show that the proposed one-bit weighted SPICE framework can not only reduce the computational cost but also outperform the existing approach for decoupled RFI mitigation and radar echo recovery of one-bit UWB radar.
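As a small illustration of what one-bit sampling means, the sketch below keeps only the sign of each sample relative to a threshold. The use of per-sample thresholds and the example values are assumptions; the SPICE-based recovery framework from the abstract is not shown.

```python
def one_bit_sample(signal, thresholds):
    """Quantize each sample to +1 or -1 by comparing it with its threshold.

    Per-sample thresholds are an assumption made for this illustration.
    """
    return [1 if x >= h else -1 for x, h in zip(signal, thresholds)]

# Hypothetical received samples and comparison thresholds.
signal = [0.3, -0.2, 0.9, -0.7]
thresholds = [0.0, 0.0, 0.5, -1.0]
bits = one_bit_sample(signal, thresholds)
```

Only these signs are available to the receiver, so amplitude information has to be inferred from how the bits relate to the thresholds, which is why sparsity assumptions help in the recovery step.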

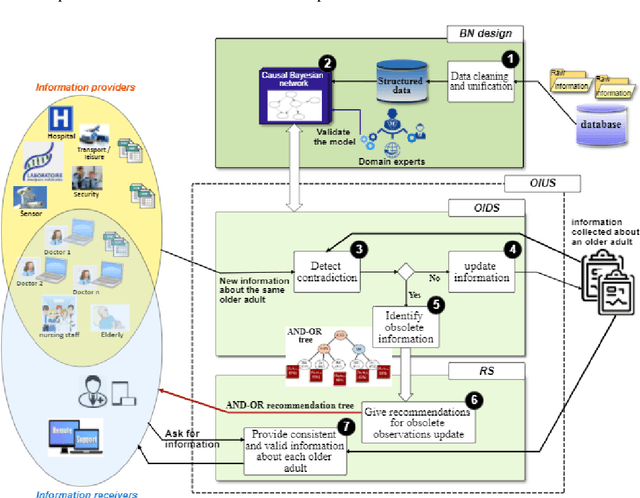

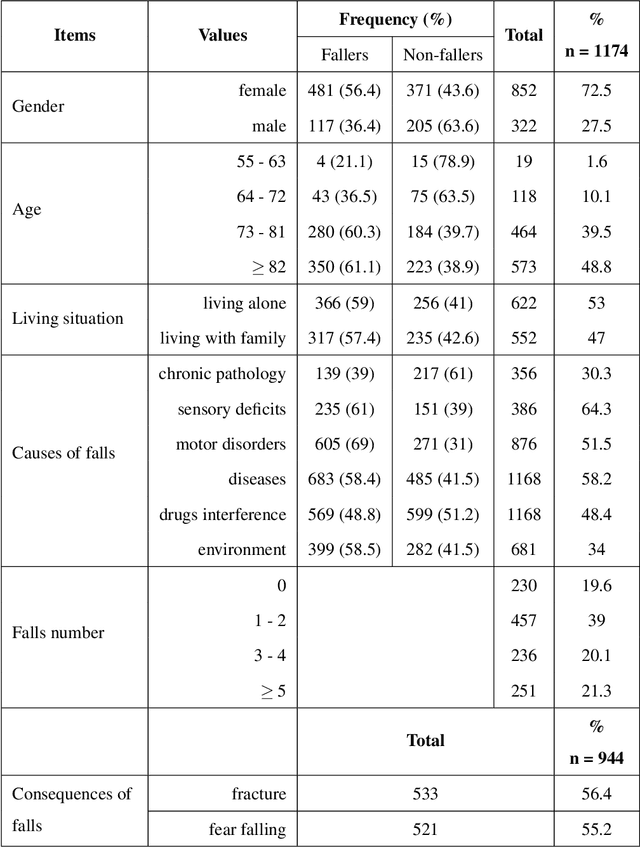

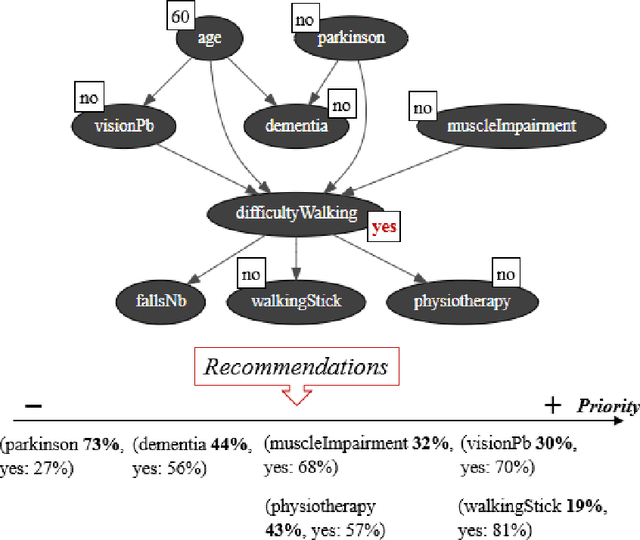

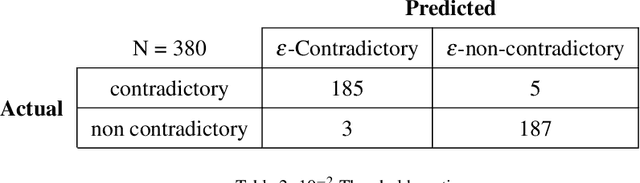

Obsolete Personal Information Update System for the Prevention of Falls among Elderly Patients

Jan 20, 2021

Falls are a common problem affecting older adults and a major public health issue. The Centers for Disease Control and Prevention and the World Health Organization report that one in three adults over the age of 65 and half of the adults over 80 fall each year. In recent years, an ever-increasing range of applications has been developed to help deliver more effective fall prevention interventions. All these applications rely on a huge database of elderly people's personal information collected from hospitals, mutual health organizations, and other organizations caring for the elderly. The information describing an elderly person is continually evolving and may become obsolete at a given moment and contradict what we already know about the same person. It therefore needs to be continuously checked and updated in order to restore database consistency and provide better service. This paper provides an outline of an Obsolete personal Information Update System (OIUS) designed in the context of the elderly-fall prevention project. Our OIUS aims to control and update in real time the information acquired about each older adult, provide consistent information on demand, and supply tailored interventions to caregivers and fall-risk patients. The approach outlined for this purpose is based on a polynomial-time algorithm built on top of a causal Bayesian network representing the elderly data. The result is given as a recommendation tree with some accuracy level. We conduct a thorough empirical study of this model on an elderly personal information base. Experiments confirm the viability and effectiveness of our OIUS.
