"Time": models, code, and papers

A Study of the Mathematics of Deep Learning

Apr 28, 2021

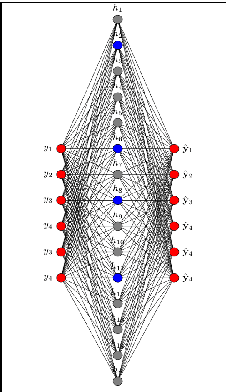

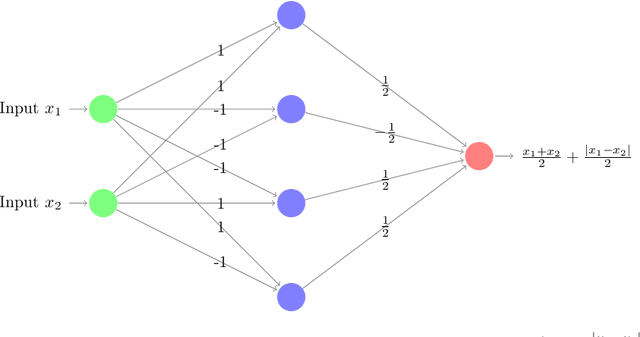

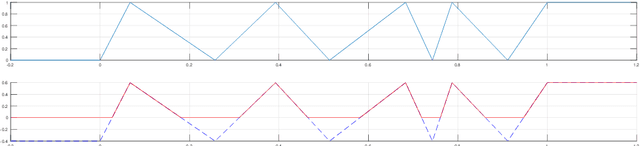

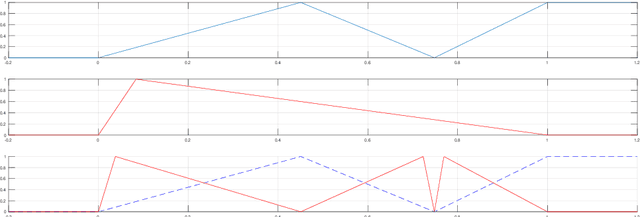

"Deep Learning"/"Deep Neural Nets" is a technological marvel that is now increasingly deployed at the cutting edge of artificial intelligence tasks. The dramatic success of deep learning in the last few years has hinged on an enormous number of heuristics, and rigorously explaining them has turned out to be a serious mathematical challenge. In this thesis, submitted to the Department of Applied Mathematics and Statistics, Johns Hopkins University, we take several steps towards building strong theoretical foundations for these new paradigms of deep learning. In chapter 2 we show new circuit complexity theorems for deep neural functions and prove classification theorems about these function spaces, which in turn lead to exact algorithms for empirical risk minimization for depth 2 ReLU nets. We also motivate a measure of complexity of neural functions to constructively establish the existence of high-complexity neural functions. In chapter 3 we give the first algorithm which can train a ReLU gate in the realizable setting in linear time in an almost distribution-free setup. In chapter 4 we give rigorous proofs towards explaining the phenomenon of autoencoders being able to do sparse coding. In chapter 5 we give the first-of-its-kind proofs of convergence for stochastic and deterministic versions of the widely used adaptive gradient deep-learning algorithms, RMSProp and ADAM. This chapter also includes a detailed empirical study on autoencoders of the hyper-parameter values at which modern algorithms have a significant advantage over classical acceleration-based methods. In the last chapter, chapter 6, we give new and improved PAC-Bayesian bounds for the risk of stochastic neural nets. This chapter also includes an experimental investigation revealing new geometric properties of the paths in weight space that are traced out by the net during training.
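For readers unfamiliar with the adaptive gradient methods named in this abstract, the following is a minimal sketch of the standard ADAM update rule in NumPy. It is not taken from the thesis: the function names `adam_step` and `minimize_quadratic`, the toy objective, and the hyper-parameter values are illustrative assumptions.

```python
import numpy as np

def adam_step(w, grad, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """One step of the standard ADAM update (hypothetical helper name)."""
    # Exponential moving averages of the gradient and its elementwise square.
    m = beta1 * m + (1.0 - beta1) * grad
    v = beta2 * v + (1.0 - beta2) * grad ** 2
    # Bias correction for the zero initialisation of m and v (t starts at 1).
    m_hat = m / (1.0 - beta1 ** t)
    v_hat = v / (1.0 - beta2 ** t)
    # Per-coordinate step scaled by the running root-mean-square gradient.
    w = w - lr * m_hat / (np.sqrt(v_hat) + eps)
    return w, m, v

def minimize_quadratic(steps=2000, lr=0.05):
    """Toy example (an assumption for illustration): minimise f(w) = ||w||^2."""
    w = np.array([5.0, -3.0])
    m = np.zeros_like(w)
    v = np.zeros_like(w)
    for t in range(1, steps + 1):
        grad = 2.0 * w  # exact gradient of ||w||^2
        w, m, v = adam_step(w, grad, m, v, t, lr=lr)
    return w
```

Calling `minimize_quadratic()` should drive the iterate close to the origin. Setting `beta1=0.0` and skipping the bias correction gives an update of the RMSProp type.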

External Dynamic InTerference Estimation and Removal (EDITER) for low field MRI

Apr 18, 2021

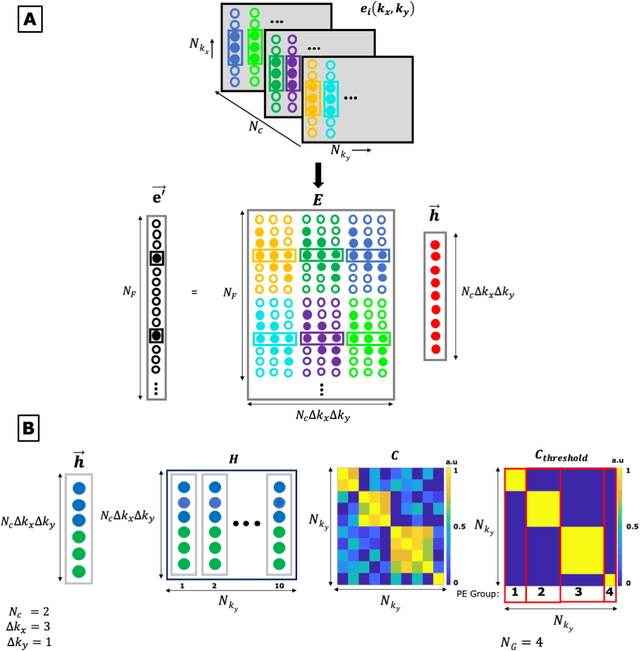

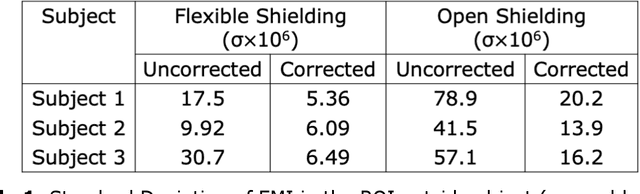

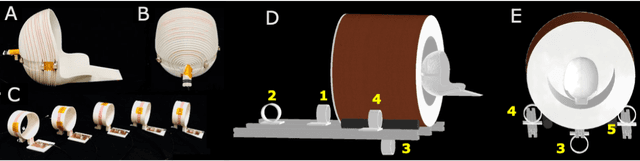

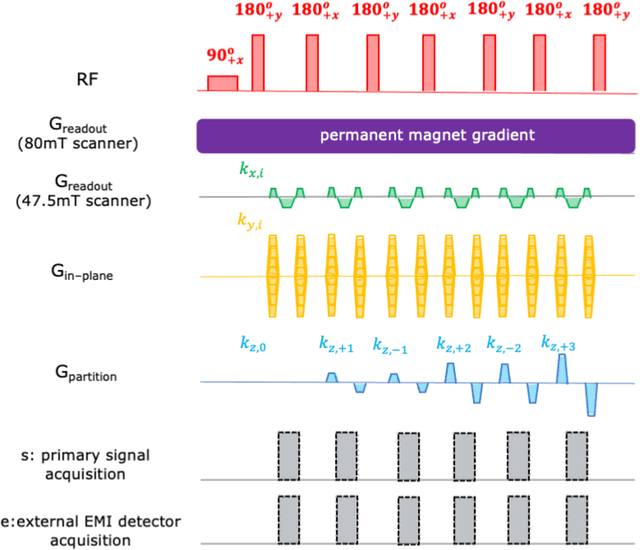

Purpose: Point-of-care MRI requires operation outside of the Faraday-shielded room normally used to block image-degrading electromagnetic interference (EMI). To address this, we introduce the EDITER method, an external sensor-based dynamic EMI estimation and removal method to retrospectively remove time-varying external interference sources. Theory and Methods: The method acquires data from multiple EMI detectors (tuned receive coils and electrodes placed on the body) simultaneously with the primary MR coil during image data acquisition. We dynamically calculate impulse response functions that map the data from the detectors to the artifacts in the k-space data, then remove the transformed detected EMI from the MR data. Performance of the EDITER algorithm was assessed in phantom and in vivo imaging experiments in an 80mT portable brain MRI in a controlled EMI environment and with an open 47.5mT MRI scanner in an uncontrolled EMI setting. Results: In the controlled setting, the effectiveness of the EDITER technique was demonstrated for specific types of introduced EMI sources with up to a 97% reduction of structured EMI and up to 76% reduction of broadband EMI. In the uncontrolled EMI experiments, we demonstrate EMI reductions of 37% with a single pickup coil, 89% with a single electrode, and up to 99% with both. Conclusion: The EDITER technique is a flexible and robust method to improve image quality in portable MRI systems with minimal passive shielding. This could reduce the reliance of MRI on shielded rooms and allow for truly portable MRI with specialized compact POC scanners.
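The estimate-then-subtract idea in this abstract can be sketched with ordinary least squares. This is a strong simplification and not the EDITER algorithm itself: it assumes a single complex gain per detector (a one-tap impulse response) fitted once over the whole acquisition, whereas the abstract describes impulse response functions calculated dynamically. The names `remove_emi` and `demo` and all synthetic values are hypothetical.

```python
import numpy as np

def remove_emi(primary, detectors):
    """Least-squares EMI removal with one complex gain per detector.

    primary:   (n_samples,) complex samples from the primary MR coil
    detectors: (n_samples, n_detectors) complex samples from the EMI detectors
    """
    # Fit the gains that best map detector data onto the primary-coil data.
    gains, *_ = np.linalg.lstsq(detectors, primary, rcond=None)
    # Subtract the transformed detector data from the primary-coil data.
    return primary - detectors @ gains, gains

def demo(seed=0, n=4096):
    """Synthetic check: weak 'MR' signal plus strong coupled interference."""
    rng = np.random.default_rng(seed)
    signal = 0.1 * (rng.standard_normal(n) + 1j * rng.standard_normal(n))
    emi = rng.standard_normal((n, 2)) + 1j * rng.standard_normal((n, 2))
    coupling = np.array([0.8 - 0.3j, -0.5 + 0.2j])  # assumed coupling gains
    primary = signal + emi @ coupling
    cleaned, gains = remove_emi(primary, emi)
    err_before = np.linalg.norm(primary - signal)
    err_after = np.linalg.norm(cleaned - signal)
    return err_before, err_after, gains, coupling
```

In this toy setting the detectors see only interference, so the fitted gains land close to the true coupling and the residual error drops sharply. A fuller treatment would fit multi-tap filters over short temporal windows so the estimate can track time-varying sources.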

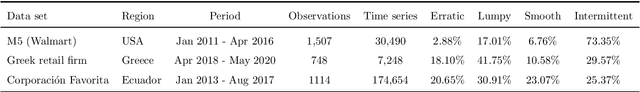

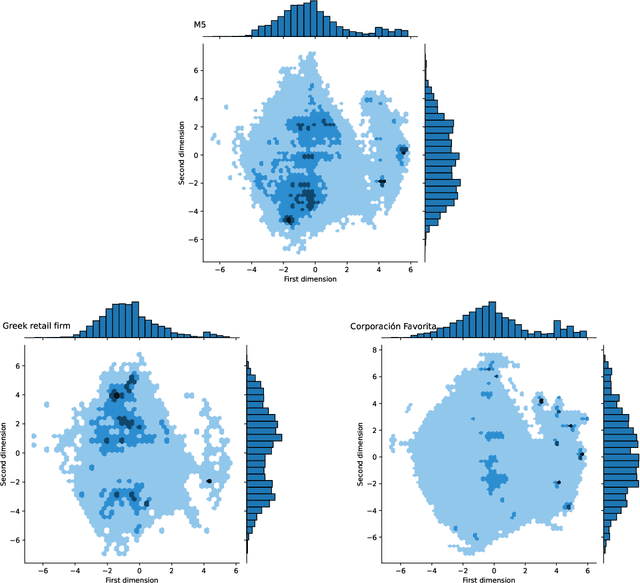

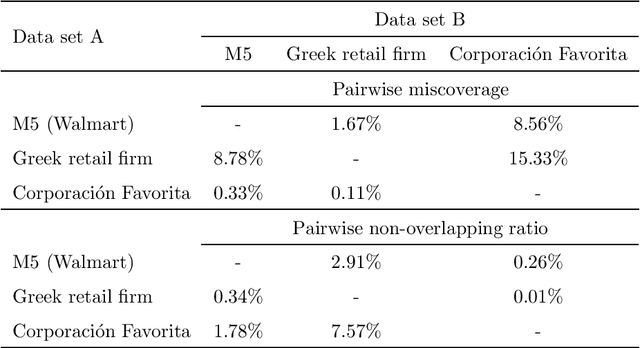

Exploring the representativeness of the M5 competition data

Mar 04, 2021

The main objective of the M5 competition, which focused on forecasting the hierarchical unit sales of Walmart, was to evaluate the accuracy and uncertainty of forecasting methods in the field in order to identify best practices and highlight their practical implications. However, whether the findings of the M5 competition can be generalized and exploited by retail firms to better support their decisions and operations depends on the extent to which the M5 data is representative of reality, i.e., sufficiently represents the unit sales data of retailers that operate in different regions, sell different types of products, and consider different marketing strategies. To answer this question, we analyze the characteristics of the M5 time series and compare them with those of two grocery retailers, namely Corporación Favorita and a major Greek supermarket chain, using feature spaces. Our results suggest that there are only small discrepancies between the examined data sets, supporting the representativeness of the M5 data.

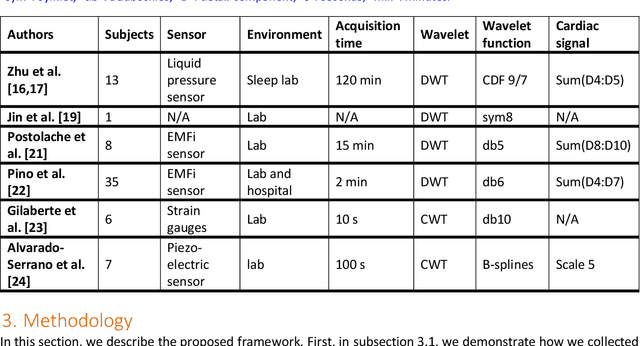

A comparison of three heart rate detection algorithms over ballistocardiogram signals

Jan 22, 2021

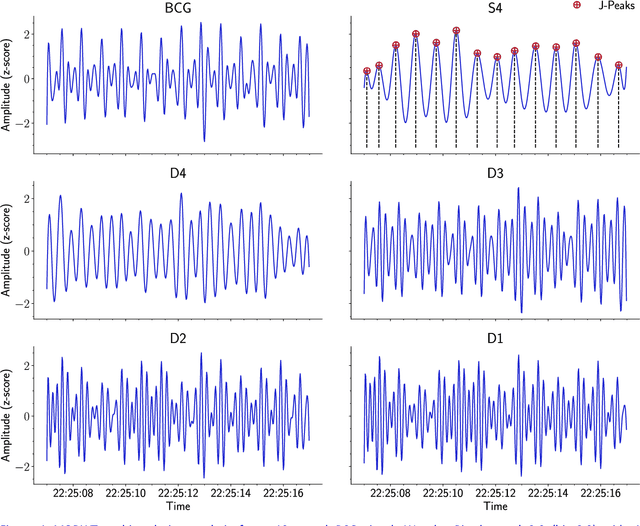

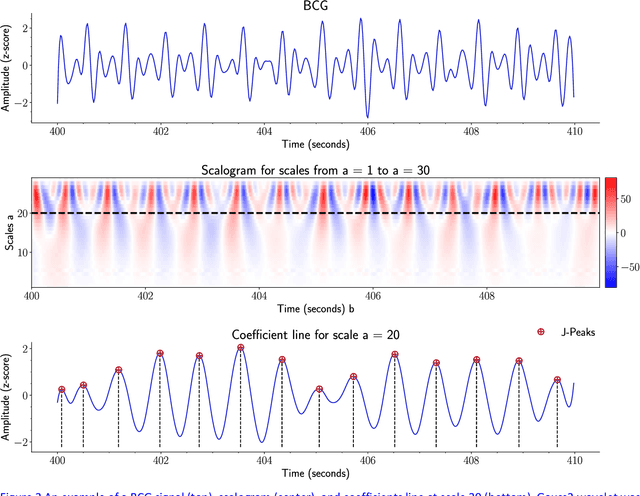

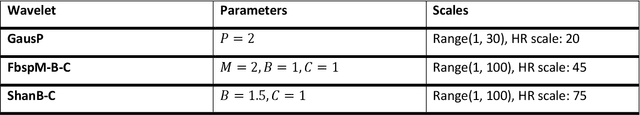

Heart rate (HR) detection from ballistocardiogram (BCG) signals is challenging for various reasons. For example, the morphology of BCG signals can vary between and within subjects. It also differs from one sensor to another. Hence, it is essential to evaluate HR detection algorithms across different datasets and under different experimental setups. This paper investigated the suitability of three algorithms (i.e., MODWT-MRA, CWT, and template matching) for HR detection across three independent BCG datasets. The first two datasets (DataSet1 and DataSet2) were obtained using a microbend fiber optic sensor (MFOS), while the last one (DataSet3) was obtained using a fiber Bragg grating (FBG) sensor. DataSet1 was collected from 10 OSA patients during an in-lab PSG study, DataSet2 was obtained from 50 subjects in a sitting position, and DataSet3 was gathered from 10 subjects in a sleeping position. The CWT with derivative of Gaussian (Gaus2) provided better results than the MODWT-MRA, CWT (frequency B-spline-Fbsp-2-1-1), and CWT (Shannon-Shan1.5-1.0) for DataSet1 and DataSet2. In addition, a BCG template was constructed from DataSet1 and then applied for HR detection across DataSet2. The template matching method achieved slightly better results than CWT-Gaus2 for DataSet2. Furthermore, it proved useful for HR detection across DataSet3 even though the BCG signals were obtained from a different sensor and under different conditions. Overall, the time required to analyze a 30-second BCG signal was on the order of milliseconds for the three proposed methods. The MODWT-MRA had the best runtime performance, with an average time of 4.92 ms.

Robust Cell-Load Learning with a Small Sample Set

Mar 21, 2021

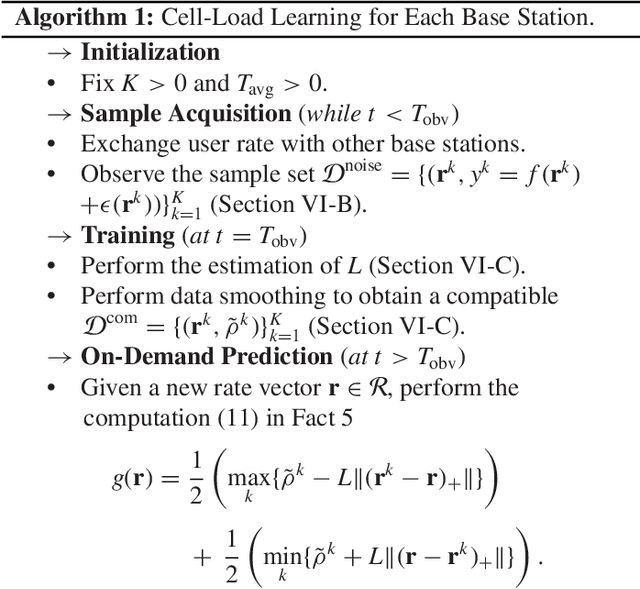

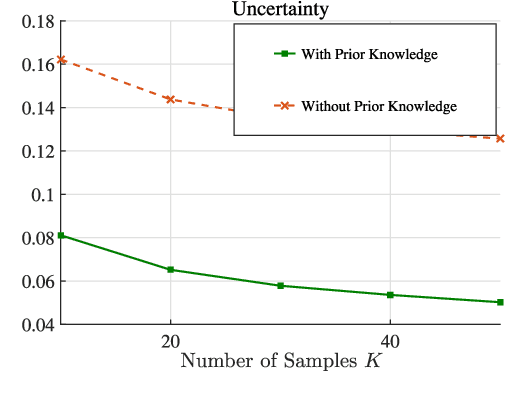

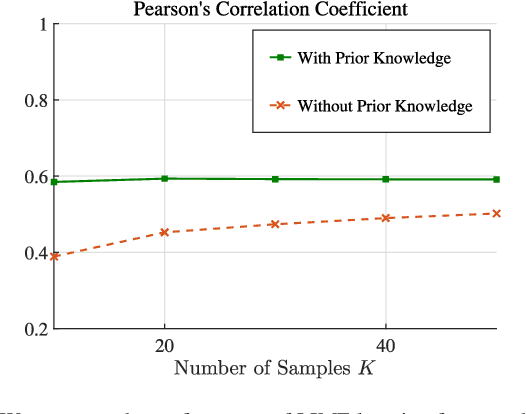

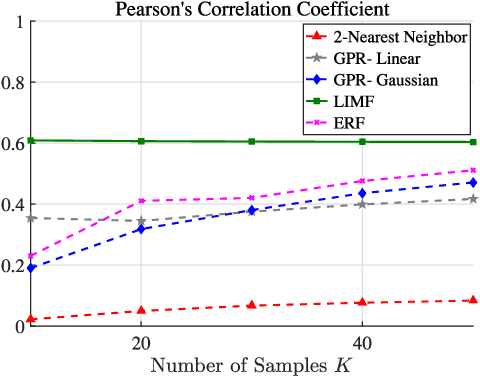

Learning of the cell-load in radio access networks (RANs) has to be performed within a short time period. Therefore, we propose a learning framework that is robust against uncertainties resulting from the need for learning based on a relatively small training sample set. To this end, we incorporate prior knowledge about the cell-load in the learning framework. For example, an inherent property of the cell-load is that it is monotonic in downlink (data) rates. To obtain additional prior knowledge we first study the feasible rate region, i.e., the set of all vectors of user rates that can be supported by the network. We prove that the feasible rate region is compact. Moreover, we show the existence of a Lipschitz function that maps feasible rate vectors to cell-load vectors. With these results in hand, we present a learning technique that guarantees a minimum approximation error in the worst-case scenario by using prior knowledge and a small training sample set. Simulations in the network simulator NS3 demonstrate that the proposed method exhibits better robustness and accuracy than standard multivariate learning techniques, especially for small training sample sets.

* Published in IEEE Transactions on Signal Processing (Volume: 68)
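To illustrate how a Lipschitz prior can bound worst-case error with few samples, here is a generic sketch of midpoint (central) interpolation between Lipschitz envelopes. It is not the technique of the paper above, which also exploits monotonicity and maps rate vectors to cell-load vectors; this version handles a scalar output only. The function name `lipschitz_midpoint_predict` and the assumption that the Lipschitz constant is known are illustrative.

```python
import numpy as np

def lipschitz_midpoint_predict(x_query, x_train, y_train, lipschitz_const):
    """Predict a scalar value under a known Lipschitz constant.

    Any function consistent with the samples and the Lipschitz constant lies
    between the two envelopes computed below, so the midpoint minimises the
    worst-case error at x_query and half the gap bounds that error.
    """
    dists = np.linalg.norm(x_train - x_query, axis=1)
    upper = np.min(y_train + lipschitz_const * dists)  # tightest upper bound
    lower = np.max(y_train - lipschitz_const * dists)  # tightest lower bound
    prediction = 0.5 * (upper + lower)
    worst_case_error = 0.5 * (upper - lower)
    return prediction, worst_case_error
```

For example, with four samples of f(x) = x1 + x2 on the corners of the unit square and a Lipschitz constant of sqrt(2) in the Euclidean norm, the returned bound always covers the true error at any query point, and it shrinks to zero at the training points.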

BayesPerf: Minimizing Performance Monitoring Errors Using Bayesian Statistics

Feb 22, 2021

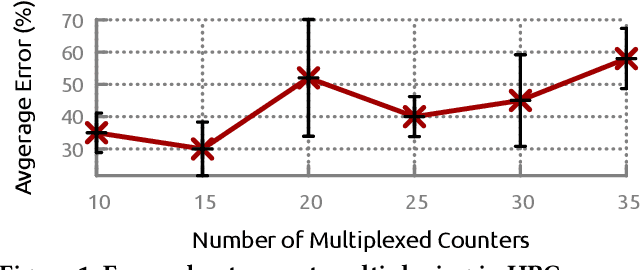

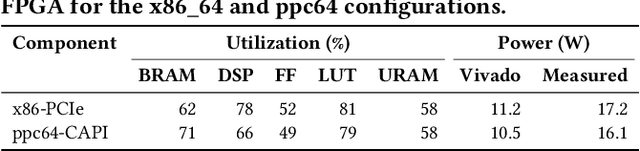

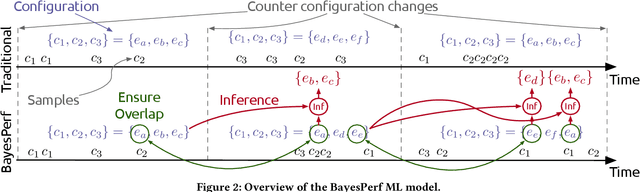

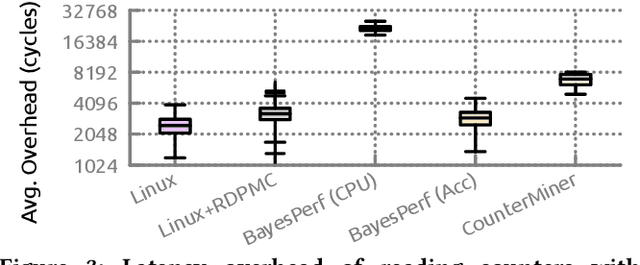

Hardware performance counters (HPCs) that measure low-level architectural and microarchitectural events provide dynamic contextual information about the state of the system. However, HPC measurements are error-prone due to nondeterminism (e.g., undercounting due to event multiplexing, or OS interrupt-handling behaviors). In this paper, we present BayesPerf, a system for quantifying uncertainty in HPC measurements by using a domain-driven Bayesian model that captures microarchitectural relationships between HPCs to jointly infer their values as probability distributions. We provide the design and implementation of an accelerator that allows for low-latency and low-power inference of the BayesPerf model for x86 and ppc64 CPUs. BayesPerf reduces the average error in HPC measurements from 40.1% to 7.6% when events are being multiplexed. The value of BayesPerf in real-time decision-making is illustrated with a simple example of scheduling of PCIe transfers.

Deep Learning for Regularization Prediction in Diffeomorphic Image Registration

Nov 28, 2020

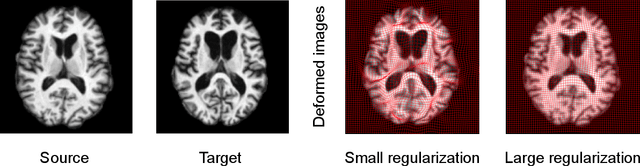

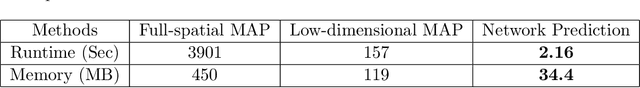

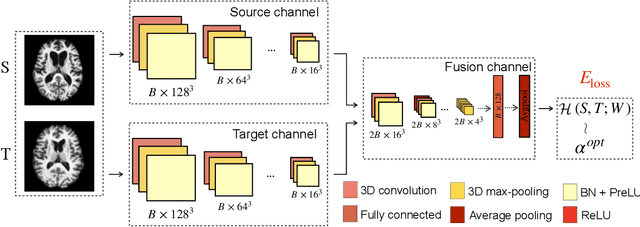

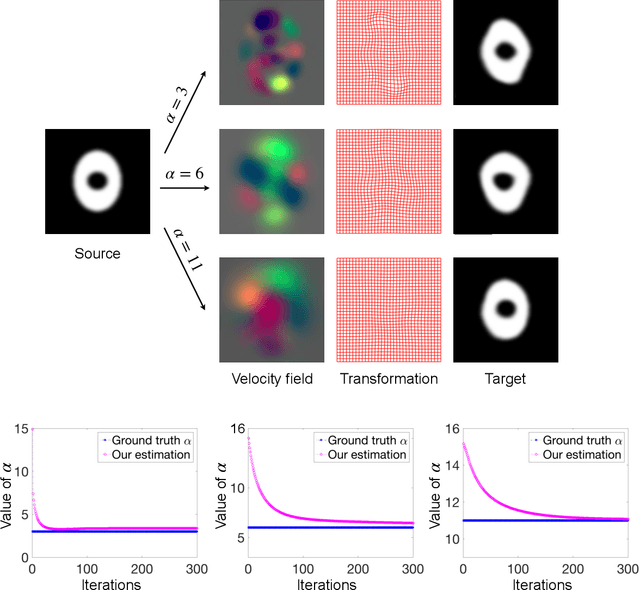

This paper presents a predictive model for estimating regularization parameters of diffeomorphic image registration. We introduce a novel framework that automatically determines the parameters controlling the smoothness of diffeomorphic transformations. Our method significantly reduces the effort of parameter tuning, which is time- and labor-intensive. To achieve this goal, we develop a predictive model based on deep convolutional neural networks (CNNs) that learns the mapping between pairwise images and the regularization parameter of image registration. In contrast to previous methods that estimate such parameters in a high-dimensional image space, our model is built in an efficient bandlimited space with much lower dimensions. We demonstrate the effectiveness of our model on both 2D synthetic data and 3D real brain images. Experimental results show that our model not only predicts appropriate regularization parameters for image registration, but also improves network training in terms of time and memory efficiency.

EC-SAGINs: Edge Computing-enhanced Space-Air-Ground Integrated Networks for Internet of Vehicles

Jan 15, 2021

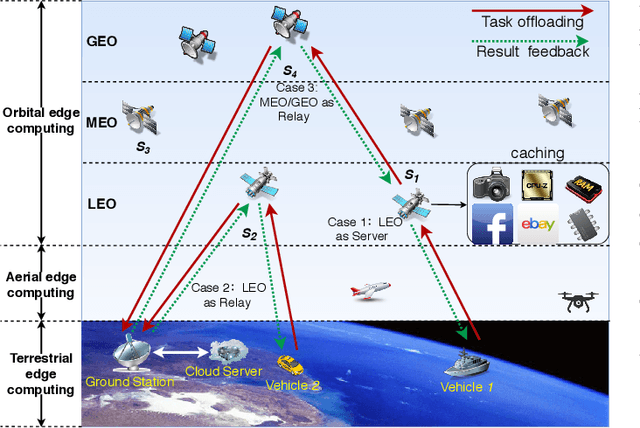

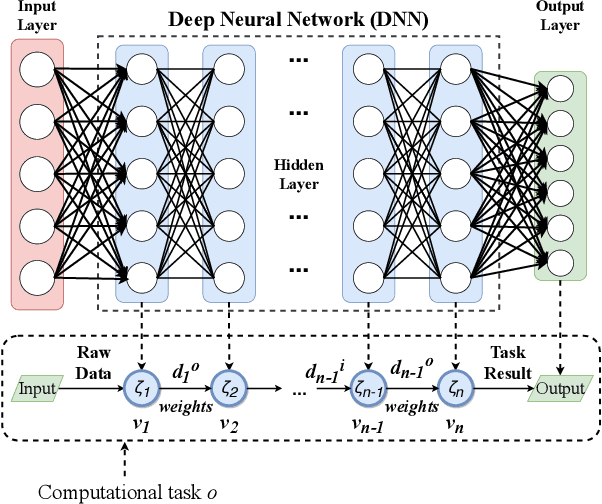

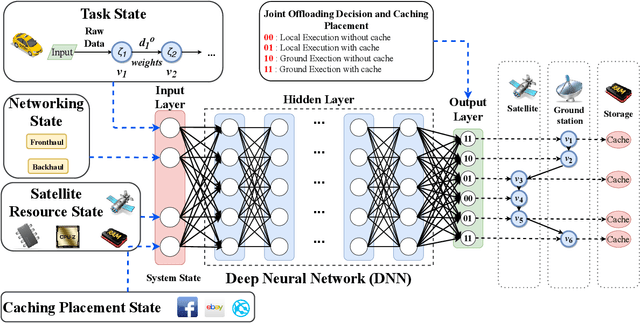

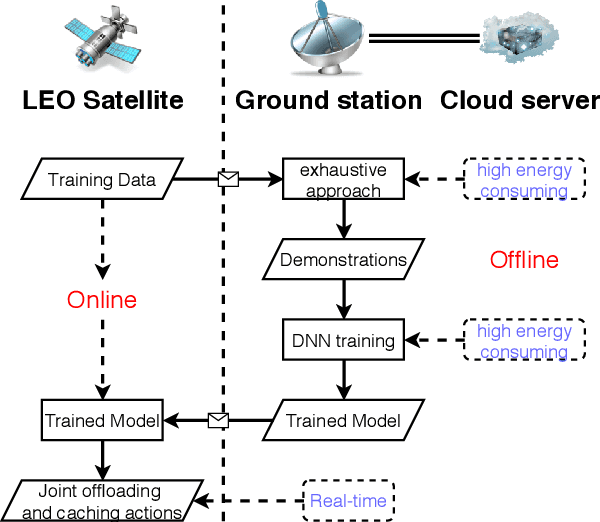

Edge computing-enhanced Internet of Vehicles (EC-IoV) enables ubiquitous data processing and content sharing among vehicles and terrestrial edge computing (TEC) infrastructures (e.g., 5G base stations and roadside units) with little or no human intervention, and plays a key role in intelligent transportation systems. However, EC-IoV is heavily dependent on the connections and interactions between vehicles and TEC infrastructures, and thus will break down in remote areas where TEC infrastructures are unavailable (e.g., deserts, isolated islands, and disaster-stricken areas). Driven by ubiquitous connections and global-area coverage, space-air-ground integrated networks (SAGINs) efficiently support seamless coverage and efficient resource management, and represent the next frontier for edge computing. In light of this, we first review the state-of-the-art edge computing research for SAGINs in this article. After discussing several existing orbital and aerial edge computing architectures, we propose a framework of edge computing-enabled space-air-ground integrated networks (EC-SAGINs) to support various IoV services for vehicles in remote areas. The main objective of the framework is to minimize the task completion time and satellite resource usage. To this end, a pre-classification scheme is presented to reduce the size of the action space, and a deep imitation learning (DIL) driven offloading and caching algorithm is proposed to achieve real-time decision making. Simulation results show the effectiveness of our proposed scheme. Finally, we discuss some technological challenges and future directions.

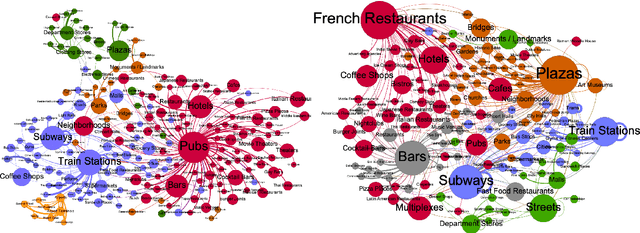

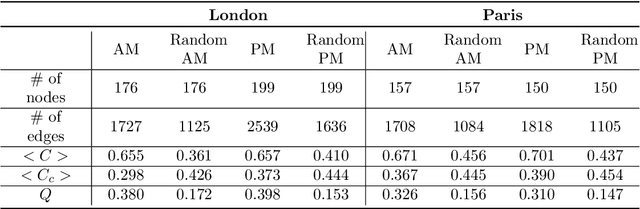

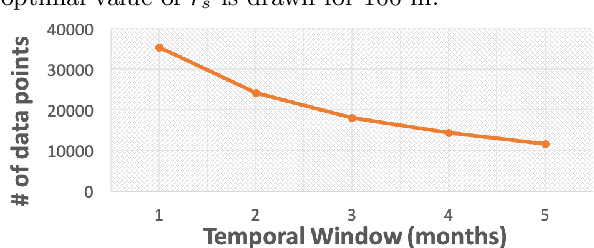

Modelling Cooperation and Competition in Urban Retail Ecosystems with Complex Network Metrics

Apr 28, 2021

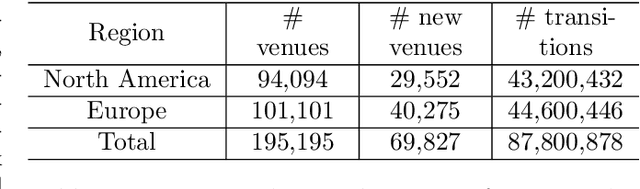

Understanding the impact that a new business has on the local market ecosystem is a challenging task as it is multifaceted in nature. Past work in this space has examined the collaborative or competitive role of homogeneous venue types (e.g., the impact of a new bookstore on existing bookstores). However, these prior works have been limited in their scope and explanatory power. To better measure retail performance in a modern city, a model should consider a number of factors that interact synchronously. This paper is the first to consider the multifaceted types of interactions that occur in cities when examining the impact of new businesses. We first present a modeling framework which examines the role of new businesses in their respective local areas. Using a longitudinal dataset from the location technology platform Foursquare, we model new venue impact across 26 major cities worldwide. Representing cities as connected networks of venues, we quantify their structure and characterise their dynamics over time. We note a strong community structure emerging in these retail networks, an observation that highlights the interplay of cooperative and competitive forces that emerge in local ecosystems of retail establishments. We next devise a data-driven metric that captures the first-order correlation on the impact of a new venue on retailers within its vicinity, accounting for both homogeneous and heterogeneous interactions between venue types. Lastly, we build a supervised machine learning model to predict the impact of a given new venue on its local retail ecosystem. Our approach highlights the power of complex network measures in building machine learning prediction models. These models have numerous applications within the retail sector and can support policymakers, business owners, and urban planners in the development of models to characterize and predict changes in urban settings.

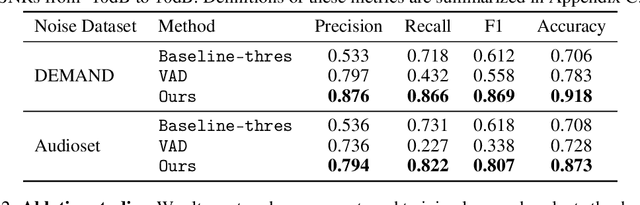

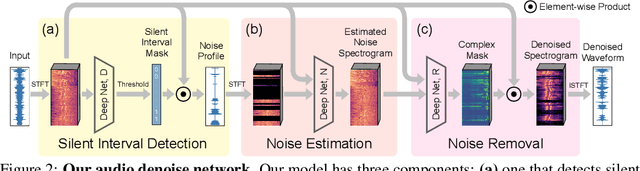

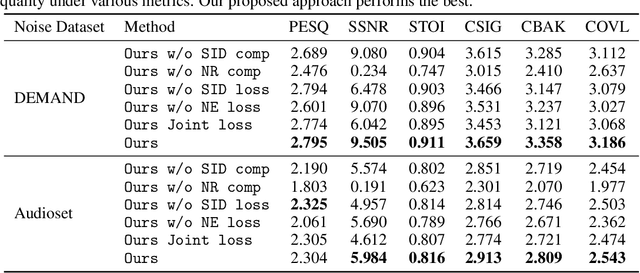

Listening to Sounds of Silence for Speech Denoising

Oct 22, 2020

We introduce a deep learning model for speech denoising, a long-standing challenge in audio analysis arising in numerous applications. Our approach is based on a key observation about human speech: there is often a short pause between each sentence or word. In a recorded speech signal, those pauses introduce a series of time periods during which only noise is present. We leverage these incidental silent intervals to learn a model for automatic speech denoising given only mono-channel audio. Detected silent intervals over time expose not just pure noise but its time-varying features, allowing the model to learn noise dynamics and suppress it from the speech signal. Experiments on multiple datasets confirm the pivotal role of silent interval detection for speech denoising, and our method outperforms several state-of-the-art denoising methods, including those that accept only audio input (like ours) and those that denoise based on audiovisual input (and hence require more information). We also show that our method enjoys excellent generalization properties, such as denoising spoken languages not seen during training.
