"Time": models, code, and papers

Information Theoretic Key Agreement Protocol based on ECG signals

May 14, 2021

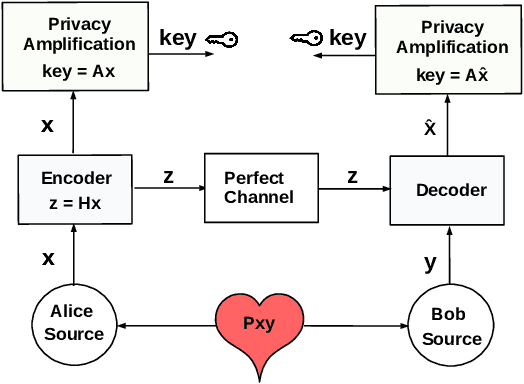

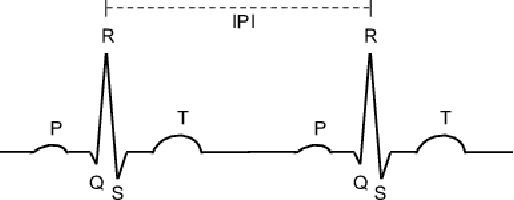

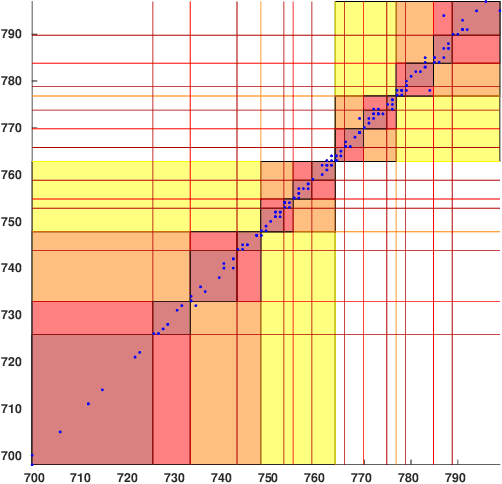

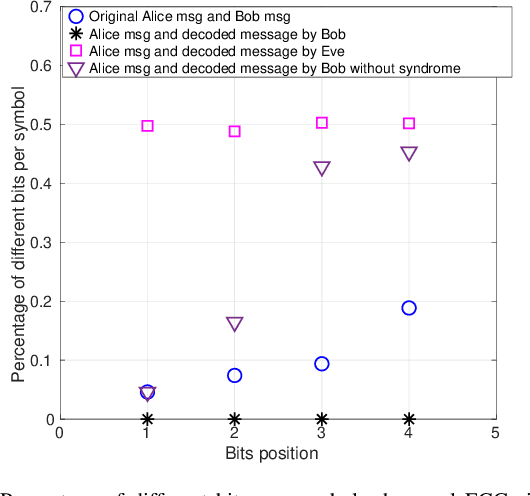

Wireless body area networks (WBANs) are becoming increasingly popular because they allow individuals to continuously monitor their vitals and physiological parameters remotely from the hospital. With the spread of the SARS-CoV-2 pandemic, the market for portable pulse oximeters and wearable heart rate detectors has boomed. At the same time, 2020 saw an unprecedented increase in healthcare breaches, revealing the extreme vulnerability of the current generation of WBANs. Therefore, new security protocols that ensure data protection, authentication, integrity and privacy within WBANs are highly needed. Here, we targeted a WBAN collecting ECG signals from different sensor nodes on the individual's body, we extracted the inter-pulse interval (i.e., R-R interval) sequence from each of them, and we developed a new information theoretic key agreement protocol that exploits the inherent randomness of ECG to ensure authentication between sensor pairs within the WBAN. After proper pre-processing, we provide an analytical solution that ensures robust authentication; we provide a unique information reconciliation matrix, which gives good performance for all ECG sensor pairs; and we show that a relationship between the information reconciliation and privacy amplification matrices can be found. Finally, we show the trade-off between the level of security, in terms of key generation rate, and the complexity of the error correction scheme implemented in the system.

Real-time Pedestrian Detection Approach with an Efficient Data Communication Bandwidth Strategy

Aug 27, 2018

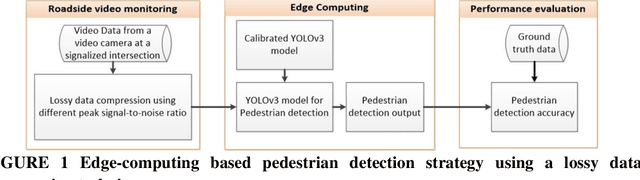

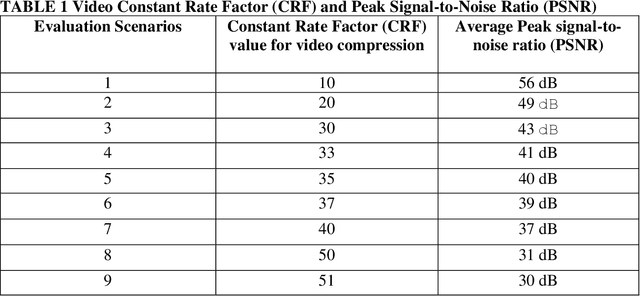

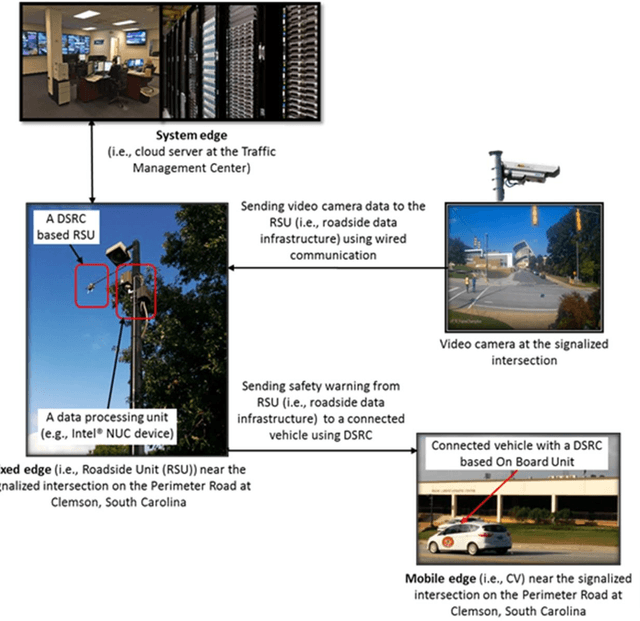

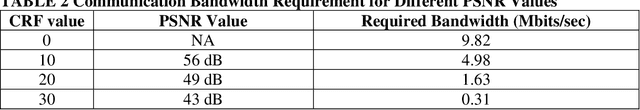

Vehicle-to-Pedestrian (V2P) communication can significantly improve pedestrian safety at a signalized intersection. It is unlikely that pedestrians will carry a low-latency communication-enabled device and keep a pedestrian safety application active on their hand-held device all the time. Because of this limitation, multiple traffic cameras at the signalized intersection can be used to accurately detect and locate pedestrians using deep learning, and to broadcast pedestrian-related safety alerts to connected vehicles around the intersection. However, the unavailability of high-performance computing infrastructure at the roadside and the limited network bandwidth between traffic cameras and the computing infrastructure limit the ability to stream and process data in real time for pedestrian detection. In this paper, we developed an edge-computing-based real-time pedestrian detection strategy that combines a deep learning pedestrian detection algorithm with an efficient data communication approach to reduce bandwidth requirements while maintaining a high object detection accuracy. We utilized a lossy traffic camera data compression technique to determine the tradeoff between the reduction of the communication bandwidth requirements and a defined object detection accuracy. The performance of the pedestrian detection strategy is measured in terms of pedestrian classification accuracy with varying peak signal-to-noise ratios. The analyses reveal that we detect pedestrians while maintaining a defined detection accuracy at a peak signal-to-noise ratio (PSNR) of 43 dB while reducing the communication bandwidth from 9.82 Mbits/sec to 0.31 Mbits/sec.
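The two quantities reported in the abstract above, PSNR and the bandwidth reduction, can be illustrated with a minimal sketch. The function names and the toy pixel values are hypothetical, and the standard PSNR definition for 8-bit images is assumed; the abstract does not say how the authors computed it.

```python
import math

def psnr(reference, degraded, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(reference) != len(degraded) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    # Mean squared error between the original and the compressed pixels.
    mse = sum((r - d) ** 2 for r, d in zip(reference, degraded)) / len(reference)
    if mse == 0:
        return math.inf  # identical inputs: no distortion at all
    return 10.0 * math.log10(max_value ** 2 / mse)

def bandwidth_reduction(before_mbps, after_mbps):
    """Factor by which the required bandwidth shrinks."""
    return before_mbps / after_mbps

# Toy 8-bit pixel values (hypothetical, not taken from the paper).
frame = [52, 55, 61, 66, 70, 61, 64, 73]
compressed = [53, 55, 60, 66, 71, 61, 63, 73]

quality_db = psnr(frame, compressed)
# Bandwidth figures quoted in the abstract: 9.82 -> 0.31 Mbits/sec.
ratio = bandwidth_reduction(9.82, 0.31)
print(f"PSNR = {quality_db:.2f} dB, bandwidth reduced {ratio:.1f}x")
```

With the figures quoted in the abstract, the bandwidth requirement drops by a factor of roughly 32.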

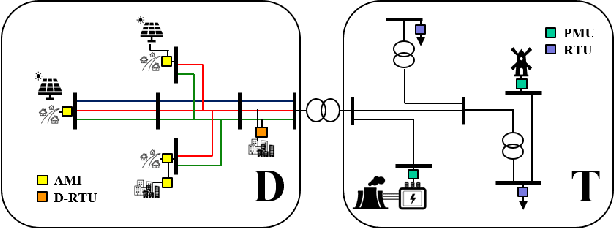

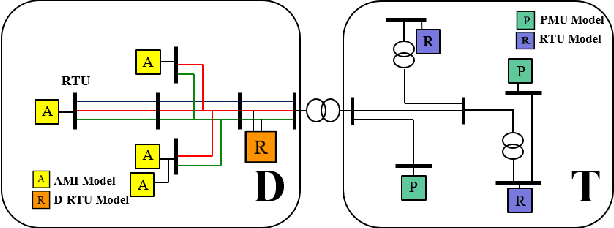

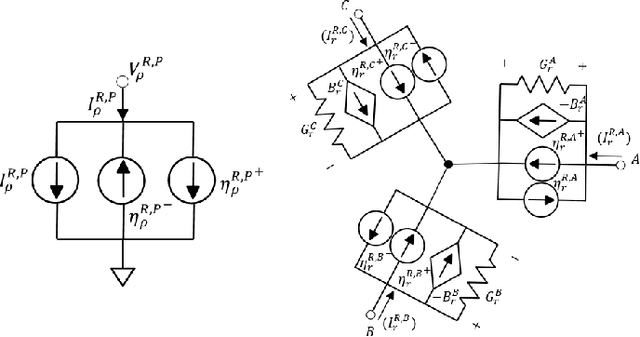

Combined Transmission and Distribution State-Estimation for Future Electric Grids

May 14, 2021

The proliferation of grid resources on the distribution network, along with the inability to forecast them accurately, will render the existing methodology of grid operation and control untenable in the future. Instead, a more distributed yet coordinated approach for grid operation and control will emerge that models and analyzes the grid with a larger footprint and deeper hierarchy to unify control of disparate T&D grid resources under a common framework. Such an approach will require AC state-estimation (ACSE) of joint T&D networks. Today, no practical method for realizing combined T&D ACSE exists. This paper addresses that gap from a circuit-theoretic perspective by realizing a combined T&D ACSE solution methodology that is fast, convex and robust against bad data. To address the daunting challenges of problem size (million+ variables) and data privacy, the approach is distributed both in memory and computing resources. To ensure timely convergence, the approach constructs a distributed circuit model for combined T&D networks and utilizes node-tearing techniques for efficient parallelism. To demonstrate the efficacy of the approach, the combined T&D ACSE algorithm is run on large test networks that comprise multiple T&D feeders. The results reflect the accuracy of the estimates in terms of root mean-square error and algorithm scalability in terms of wall-clock time.

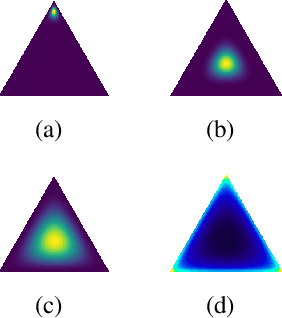

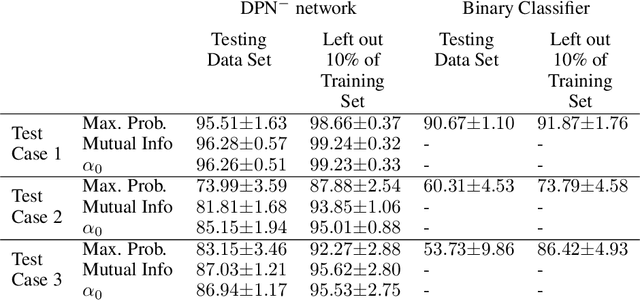

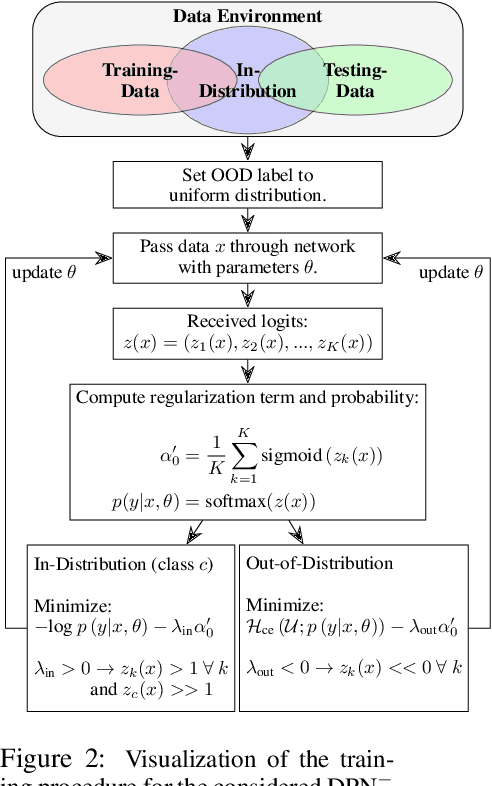

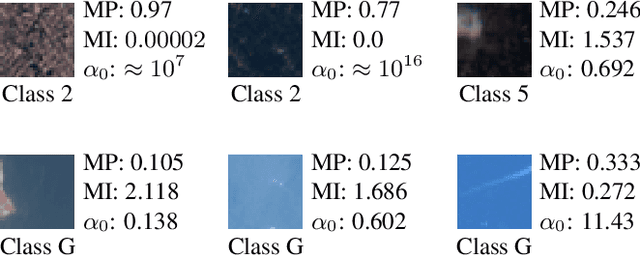

Out-of-distribution detection in satellite image classification

Apr 09, 2021

In satellite image analysis, distributional mismatch between the training and test data may arise for several reasons, including unseen classes in the test data and differences in the geographic area. Deep learning based models may behave in unexpected ways when subjected to test data that has such distributional shifts from the training data, also called out-of-distribution (OOD) examples. Predictive uncertainty analysis is an emerging research topic that has not been explored much in the context of satellite image analysis. To this end, we adopt a Dirichlet Prior Network based model to quantify the distributional uncertainty of deep learning models for remote sensing. The approach seeks to maximize the representation gap between the in-domain and OOD examples for better identification of unknown examples at test time. Experimental results on three exemplary test scenarios show the efficacy of the model in satellite image analysis.
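As a rough illustration of how a Dirichlet output can be scored for distributional uncertainty, the sketch below computes the mutual information between the label and the categorical parameters, a measure commonly used with prior networks. The abstract does not state which score the authors use, so this choice, the function names, and the example concentration vectors are all assumptions. The digamma function is approximated in pure Python to keep the sketch dependency-free.

```python
import math

def digamma(x):
    """Approximate digamma via recurrence plus an asymptotic series (x > 0)."""
    result = 0.0
    while x < 6.0:
        result -= 1.0 / x  # psi(x) = psi(x + 1) - 1/x
        x += 1.0
    inv2 = 1.0 / (x * x)
    series = inv2 * (1.0 / 12 - inv2 * (1.0 / 120 - inv2 / 252))
    return result + math.log(x) - 0.5 / x - series

def distributional_uncertainty(alpha):
    """Mutual information I[y; p] for a Dirichlet with concentrations alpha."""
    alpha0 = sum(alpha)  # precision of the Dirichlet
    mean = [a / alpha0 for a in alpha]
    # Entropy of the expected categorical distribution (total uncertainty).
    total = -sum(m * math.log(m) for m in mean if m > 0)
    # Expected entropy of categoricals drawn from the Dirichlet (data uncertainty).
    expected = -sum(
        m * (digamma(a + 1.0) - digamma(alpha0 + 1.0)) for m, a in zip(mean, alpha)
    )
    return total - expected

# Hypothetical outputs: a flat, low-precision Dirichlet (OOD-like) versus a
# sharp, high-precision one (in-domain-like).
ood_like = distributional_uncertainty([1.0, 1.0, 1.0])
in_domain_like = distributional_uncertainty([100.0, 1.0, 1.0])
print(ood_like, in_domain_like)
```

Under this score, a flat Dirichlet with low precision receives a higher value than a sharply peaked one, which is the kind of gap an OOD detector can threshold on.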

OneVision: Centralized to Distributed Controller Synthesis with Delay Compensation

Apr 14, 2021

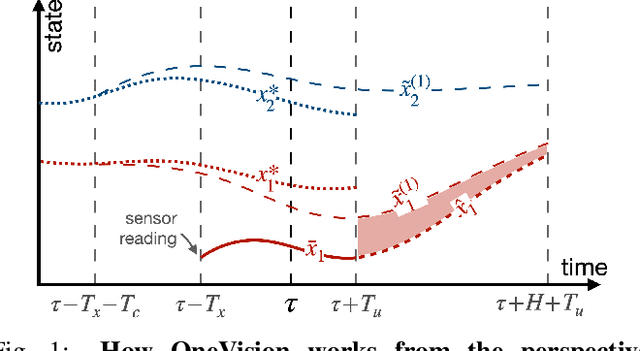

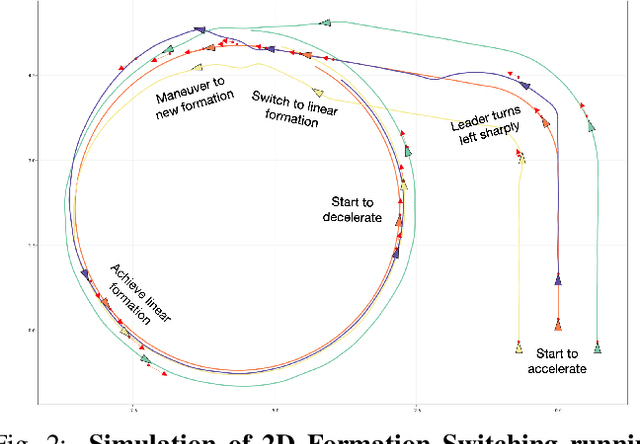

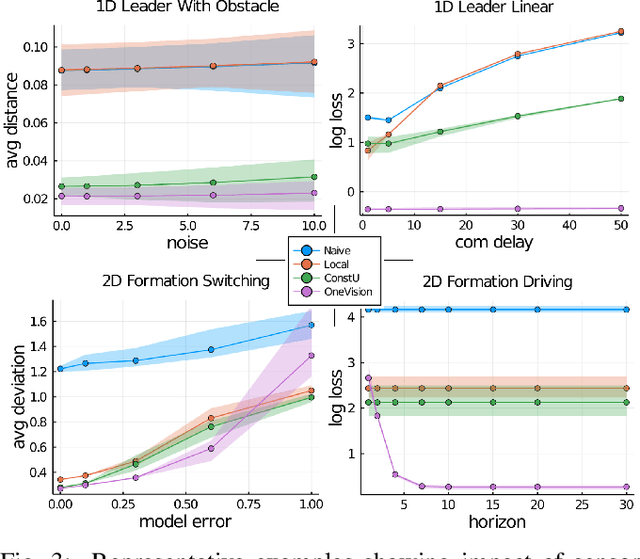

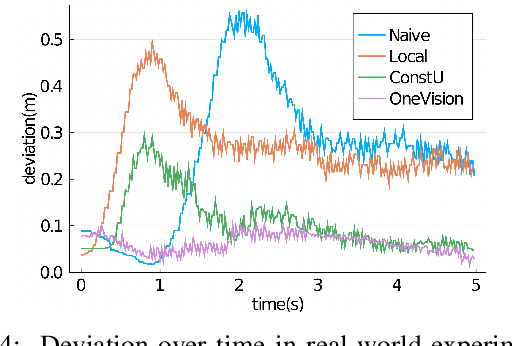

We propose a new algorithm to simplify the controller development for distributed robotic systems subject to external observations, disturbances, and communication delays. Unlike prior approaches that propose specialized solutions to handling communication latency for specific robotic applications, our algorithm uses an arbitrary centralized controller as the specification and automatically generates distributed controllers with communication management and delay compensation. We formulate our goal as nonlinear optimal control -- using a regret minimizing objective that measures how much the distributed agents behave differently from the delay-free centralized response -- and solve for optimal actions w.r.t. local estimations of this objective using gradient-based optimization. We analyze our proposed algorithm's behavior under a linear time-invariant special case and prove that the closed-loop dynamics satisfy a form of input-to-state stability w.r.t. unexpected disturbances and observations. Our experimental results on both simulated and real-world robotic tasks demonstrate the practical usefulness of our approach and show significant improvement over several baseline approaches.

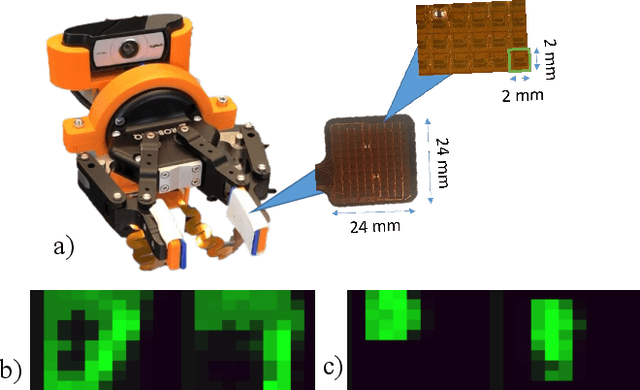

ZoomTouch: Multi-User Remote Robot Control in Zoom by DNN-based Gesture Recognition

Nov 07, 2020

We present ZoomTouch, a breakthrough technology for real-time multi-user control of a robot from Zoom by DNN-based gesture recognition. Users in the digital world can hold a video conference and direct the robot to perform dexterous manipulations of tangible objects. As a scenario, we propose a remote COVID-19 test laboratory that considerably reduces the time to receive the data and replaces the medical assistant working in protective gear in close proximity to infected cells. The proposed technology suggests a new type of reality, where multiple users can jointly interact with a remote object, e.g. create a new building design or cook together in a robotic kitchen, and discuss and modify the results at the same time.

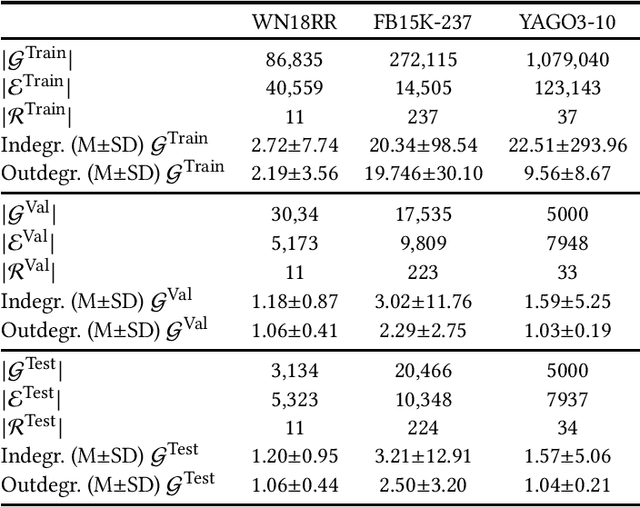

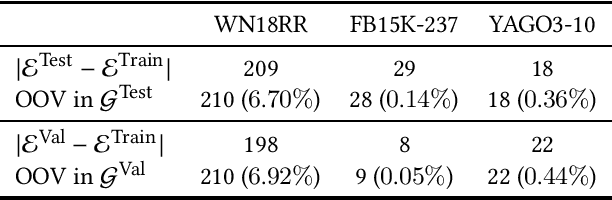

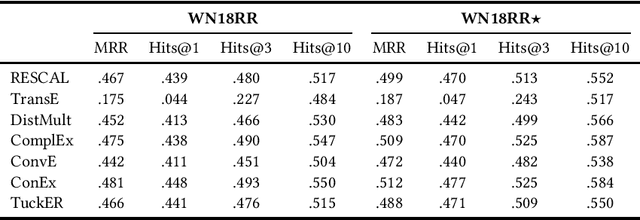

Out-of-Vocabulary Entities in Link Prediction

May 26, 2021

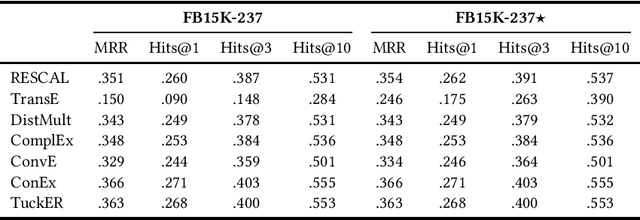

Knowledge graph embedding techniques are key to making knowledge graphs amenable to the plethora of machine learning approaches based on vector representations. Link prediction is often used as a proxy to evaluate the quality of these embeddings. Given that the creation of benchmarks for link prediction is a time-consuming endeavor, most work on the subject matter uses only a few benchmarks. As benchmarks are crucial for the fair comparison of algorithms, ensuring their quality is essential to providing a solid ground for developing better solutions to link prediction and ipso facto embedding knowledge graphs. Early studies of benchmarks pointed to limitations pertaining to information leaking from the development to the test fragments of some benchmark datasets. We spotted a further limitation shared by three of the benchmarks commonly used for evaluating link prediction approaches: out-of-vocabulary entities in the test and validation sets. We provide an implementation of an approach for spotting and removing such entities and provide corrected versions of the datasets WN18RR, FB15K-237, and YAGO3-10. Our experiments on the corrected versions of WN18RR, FB15K-237, and YAGO3-10 suggest that the measured performance of state-of-the-art approaches is altered significantly with p-values <1%, <1.4%, and <1%, respectively. Overall, state-of-the-art approaches gain on average absolute $3.29 \pm 0.24\%$ in all metrics on WN18RR. This means that some of the conclusions reached in previous works might need to be revisited. We provide an open-source implementation of our experiments and corrected datasets at https://github.com/dice-group/OOV-In-Link-Prediction.
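The idea of spotting and removing out-of-vocabulary entities can be sketched as follows. This is a minimal illustration, not the authors' implementation from the repository above: it assumes triples are `(head, relation, tail)` tuples, treats an entity as out-of-vocabulary when it never appears in the training split, and uses hypothetical function names and toy data.

```python
def training_vocabulary(train_triples):
    """Collect every entity that occurs as head or tail in the training split."""
    return {entity for head, _, tail in train_triples for entity in (head, tail)}

def split_out_of_vocabulary(train_triples, eval_triples):
    """Separate evaluation triples into those the model can and cannot score.

    A triple is flagged when its head or tail was never seen during training,
    since no embedding could have been learned for that entity.
    """
    known = training_vocabulary(train_triples)
    kept, removed = [], []
    for head, relation, tail in eval_triples:
        if head in known and tail in known:
            kept.append((head, relation, tail))
        else:
            removed.append((head, relation, tail))
    return kept, removed

# Toy data (hypothetical, not drawn from WN18RR, FB15K-237, or YAGO3-10).
train = [("berlin", "capital_of", "germany"), ("germany", "part_of", "europe")]
test = [
    ("berlin", "located_in", "europe"),   # both entities seen in training
    ("paris", "capital_of", "france"),    # neither entity seen in training
    ("germany", "borders", "poland"),     # tail entity unseen
]

kept, removed = split_out_of_vocabulary(train, test)
print(f"kept {len(kept)} triples, removed {len(removed)} with unseen entities")
```

Applying the same filter to the validation split would produce a corrected benchmark in which every evaluated entity has a trained embedding.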

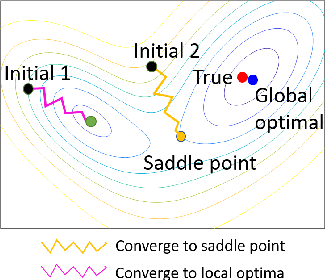

Stochastic Gradient Descent with Large Learning Rate

Dec 17, 2020

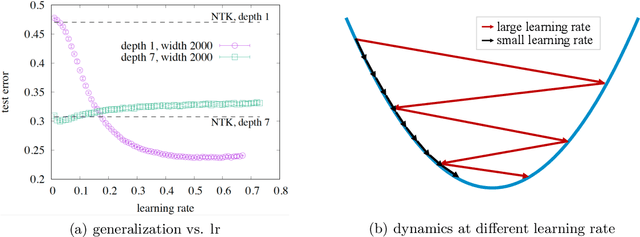

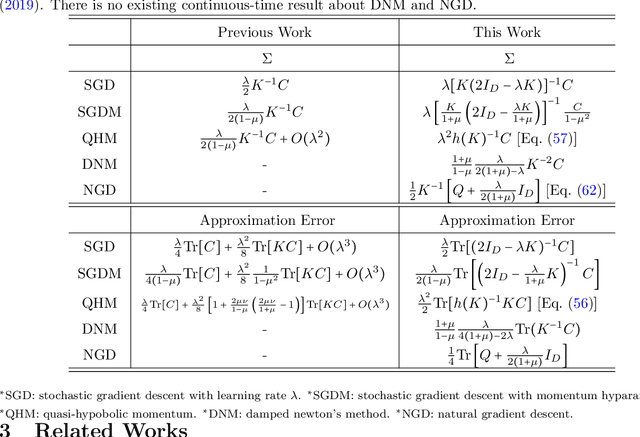

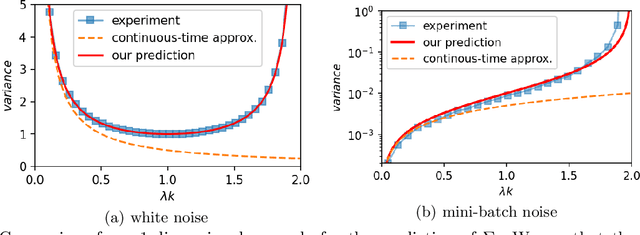

As a simple and efficient optimization method in deep learning, stochastic gradient descent (SGD) has attracted tremendous attention. In the vanishing learning rate regime, SGD is now relatively well understood, and the majority of theoretical approaches to SGD set their assumptions in the continuous-time limit. However, the continuous-time predictions are unlikely to reflect the experimental observations well because practice often runs in the large learning rate regime, where training is faster and the generalization of models is often better. In this paper, we propose to study the basic properties of SGD and its variants in the non-vanishing learning rate regime. The focus is on deriving exactly solvable results and relating them to experimental observations. The main contribution of this work is the derivation of the stable distribution for discrete-time SGD in a quadratic loss function with and without momentum. Examples of applications of the proposed theory considered in this work include the approximation error of variants of SGD, the effect of mini-batch noise, the escape rate from a sharp minimum, and the stationary distribution of a few second order methods.
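The gap between continuous-time and discrete-time predictions can be seen in a deliberately simplified setting, which is an assumption of this sketch and not the paper's general result: a one-dimensional quadratic loss with curvature `a`, and gradient noise that is additive, zero-mean, and of fixed variance `noise_var`. The update is then `w <- (1 - lr * a) * w - lr * noise`, whose variance obeys a simple recursion with a closed-form fixed point. All function names are hypothetical.

```python
def stationary_variance_discrete(lr, curvature, noise_var):
    """Fixed point of var <- (1 - lr*a)^2 * var + lr^2 * noise_var."""
    if not 0.0 < lr * curvature < 2.0:
        raise ValueError("the iteration is stable only for 0 < lr * curvature < 2")
    return lr * noise_var / (curvature * (2.0 - lr * curvature))

def stationary_variance_continuous(lr, curvature, noise_var):
    """Small-learning-rate limit of the expression above."""
    return lr * noise_var / (2.0 * curvature)

def iterate_variance(lr, curvature, noise_var, steps=10_000):
    """Propagate the exact variance recursion of the noisy update from var = 0."""
    contraction = (1.0 - lr * curvature) ** 2
    var = 0.0
    for _ in range(steps):
        var = contraction * var + lr ** 2 * noise_var
    return var

# Compare the two predictions as the learning rate grows (toy values).
for lr in (0.01, 0.5, 1.5):
    exact = stationary_variance_discrete(lr, 1.0, 1.0)
    limit = stationary_variance_continuous(lr, 1.0, 1.0)
    check = iterate_variance(lr, 1.0, 1.0)
    print(f"lr={lr}: discrete={exact:.4f} iterated={check:.4f} continuous={limit:.4f}")
```

For a small learning rate the two expressions nearly coincide, while for `lr * a` approaching 2 the discrete-time variance grows far beyond the continuous-time estimate.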

GATSBI: Generative Agent-centric Spatio-temporal Object Interaction

Apr 09, 2021

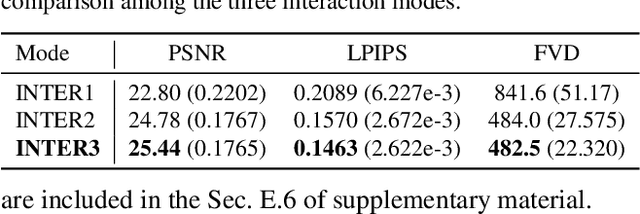

We present GATSBI, a generative model that can transform a sequence of raw observations into a structured latent representation that fully captures the spatio-temporal context of the agent's actions. In vision-based decision-making scenarios, an agent faces complex high-dimensional observations where multiple entities interact with each other. The agent requires a good scene representation of the visual observation that discerns essential components and consistently propagates along the time horizon. Our method, GATSBI, utilizes unsupervised object-centric scene representation learning to separate an active agent, static background, and passive objects. GATSBI then models the interactions reflecting the causal relationships among decomposed entities and predicts physically plausible future states. Our model generalizes to a variety of environments where different types of robots and objects dynamically interact with each other. We show GATSBI achieves superior performance on scene decomposition and video prediction compared to its state-of-the-art counterparts.

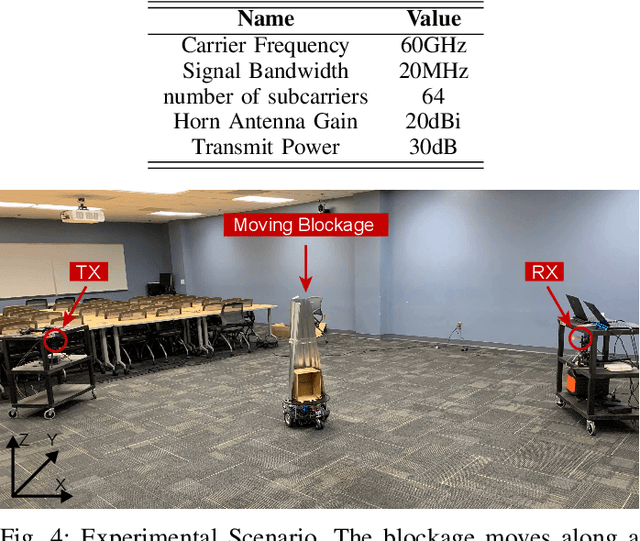

Deep Learning for Moving Blockage Prediction using Real Millimeter Wave Measurements

Jan 18, 2021

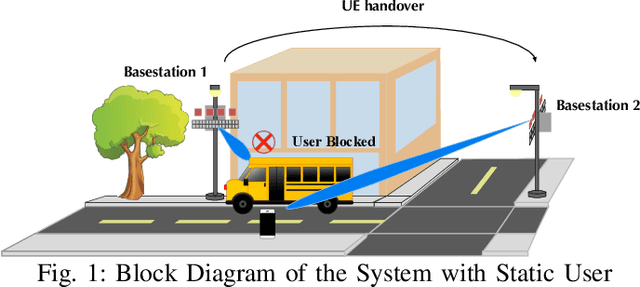

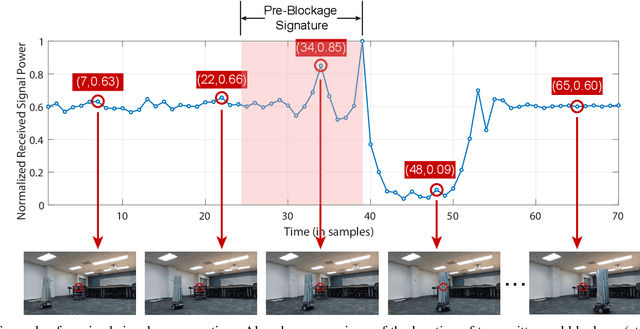

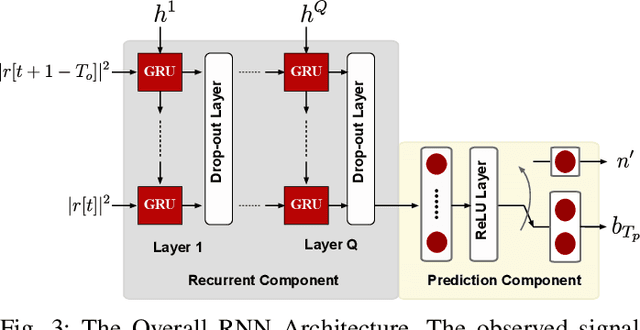

Millimeter wave (mmWave) communication is being seriously considered for next-generation communication systems because of its ability to support high bandwidth and high data rates. Unfortunately, these systems perform poorly in the presence of blockage. A sudden blockage in the line of sight (LOS) link leads to communication disconnection, which causes a reliability problem. Also, searching for alternative base stations (BS) for re-connection results in latency overhead. In this paper, we tackle these problems by predicting the time of blockage occurrence using a machine learning (ML) technique. In our approach, the BS learns to predict that a certain link will experience blockage in the near future using the received signal power. Simulation results on a real dataset show that blockage occurrence can be predicted with 85% accuracy and the exact time instance of blockage occurrence can be obtained with low error. Thus the proposed method reduces communication disconnections in mmWave communication, thereby increasing the reliability and reducing the latency of such systems.
