"Time": models, code, and papers

Hide and Seek tracker: Real-time recovery from target loss

Jun 20, 2018

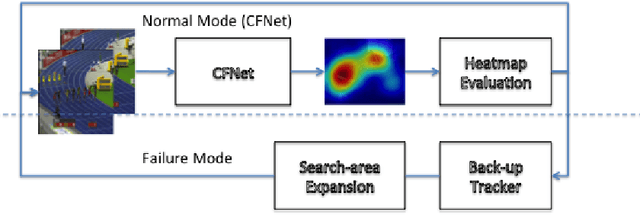

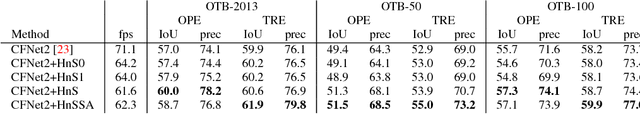

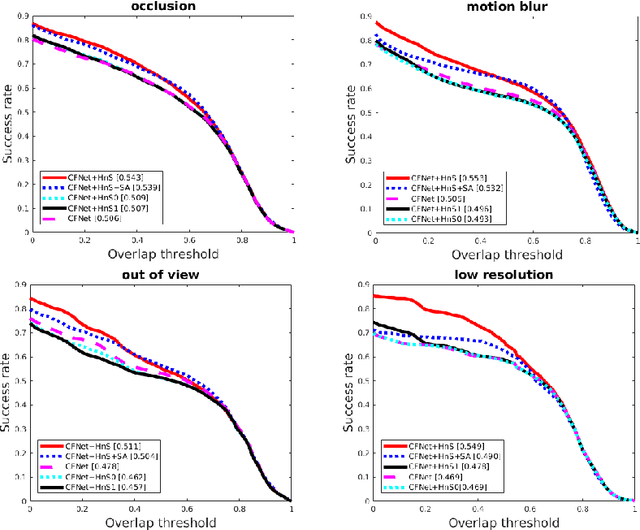

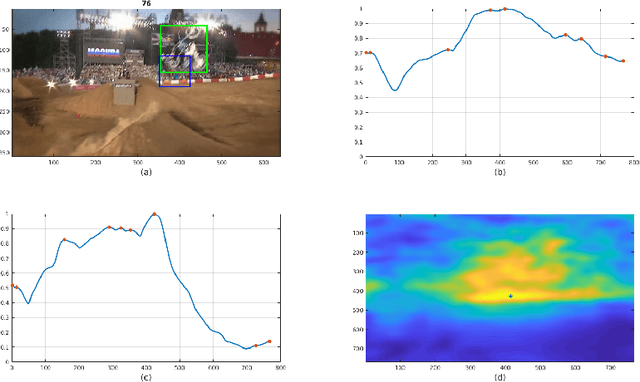

In this paper, we examine the real-time recovery of a video tracker from a target loss, using information that is already available from the original tracker and without a significant computational overhead. More specifically, before using the tracker output to update the target position, we estimate the detection confidence. In the case of low confidence, the position update is rejected and the tracker passes to a single-frame failure mode, during which the low-level visual content of the patch is used to swiftly update the object position, before recovering from the target loss in the next frame. Orthogonally to this improvement, we further enhance the running average method used for creating the query model in tracking-through-similarity. The experimental evidence provided by evaluation on standard tracking datasets (OTB-50, OTB-100 and OTB-2013) validates that target recovery can be successfully achieved without compromising the real-time update of the target position.
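The confidence-gated update described in this abstract can be illustrated with a minimal sketch. The abstract does not say how the confidence is estimated or how the running average is enhanced, so the function name `update_query_model`, the `threshold` and `rate` parameters, and the plain exponential running average below are all assumptions made for illustration, not the authors' method.

```python
def update_query_model(model, patch_features, confidence,
                       threshold=0.5, rate=0.1):
    """Blend new patch features into the query model only when confident.

    Hypothetical sketch: `threshold` and `rate` are illustrative values,
    and the blend is a plain exponential running average.
    Returns (new_model, accepted).
    """
    if confidence < threshold:
        # Low confidence: reject the update and keep the previous model,
        # signalling the caller to enter its failure/recovery mode.
        return list(model), False
    # Running average: new = (1 - rate) * old + rate * observation.
    blended = [(1.0 - rate) * m + rate * p
               for m, p in zip(model, patch_features)]
    return blended, True
```

A caller would check the returned flag each frame and fall back to a recovery step when the update was rejected.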

Predicting Intraoperative Hypoxemia with Joint Sequence Autoencoder Networks

Apr 30, 2021

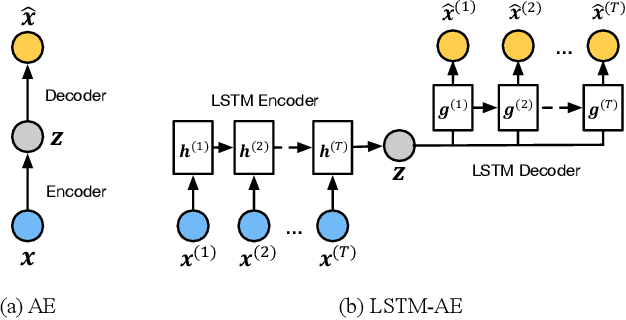

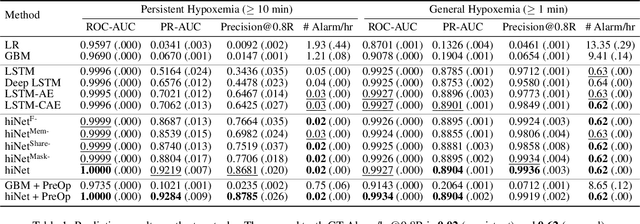

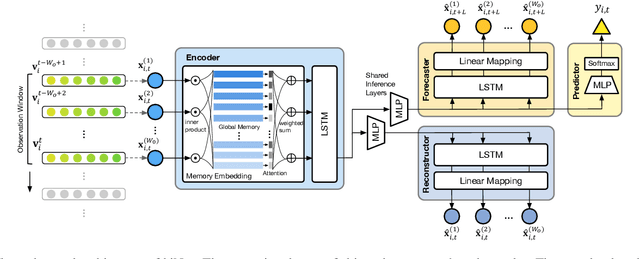

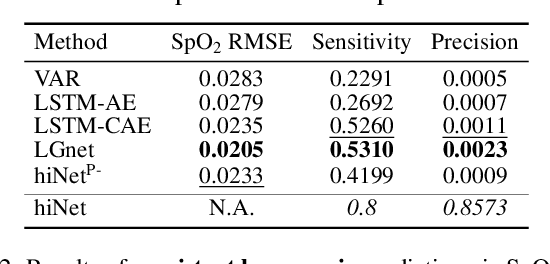

We present an end-to-end model using streaming physiological time series to accurately predict near-term risk for hypoxemia, a rare but life-threatening condition known to cause serious patient harm during surgery. Our proposed model makes inferences about both hypoxemia outcomes and future input sequences, enabled by a joint sequence autoencoder that simultaneously optimizes a discriminative decoder for label prediction and two auxiliary decoders trained for data reconstruction and forecast, which together learn a future-indicative latent representation. All decoders share a memory-based encoder that helps capture the global dynamics of patient data. In a large surgical cohort of 73,536 surgeries at a major academic medical center, our model outperforms all baselines and gives a large performance gain over the state-of-the-art hypoxemia prediction system. With a high sensitivity cutoff at 80%, it presents 99.36% precision in predicting hypoxemia and 86.81% precision in predicting the much more severe and rare hypoxemic condition, persistent hypoxemia. With an exceptionally low rate of false alarms, our proposed model is promising in improving clinical decision making and easing the burden on the health system.

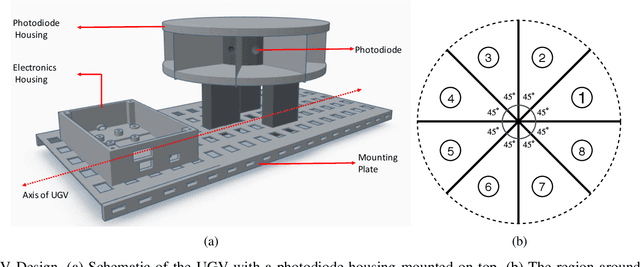

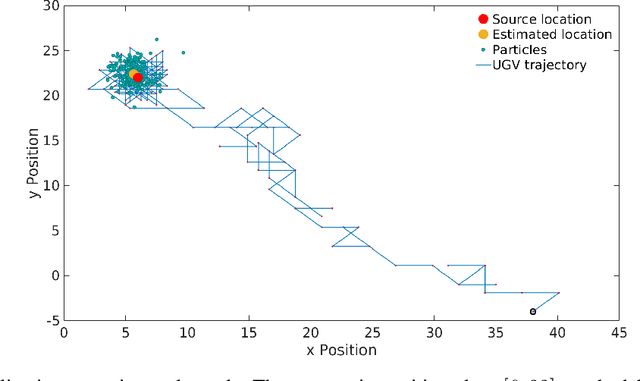

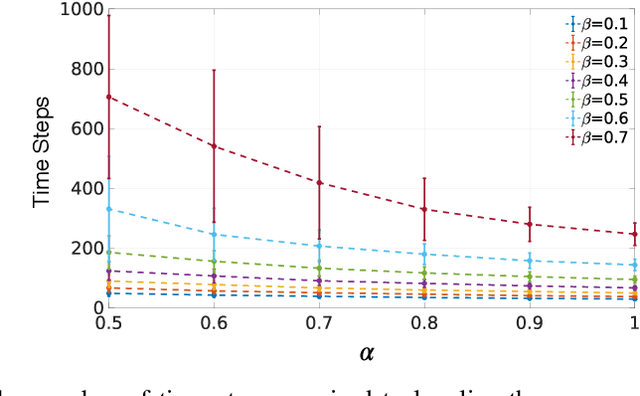

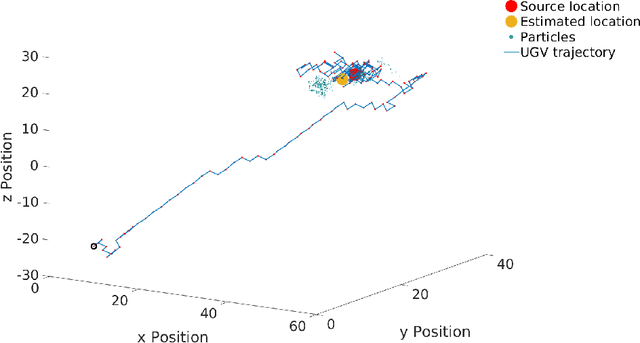

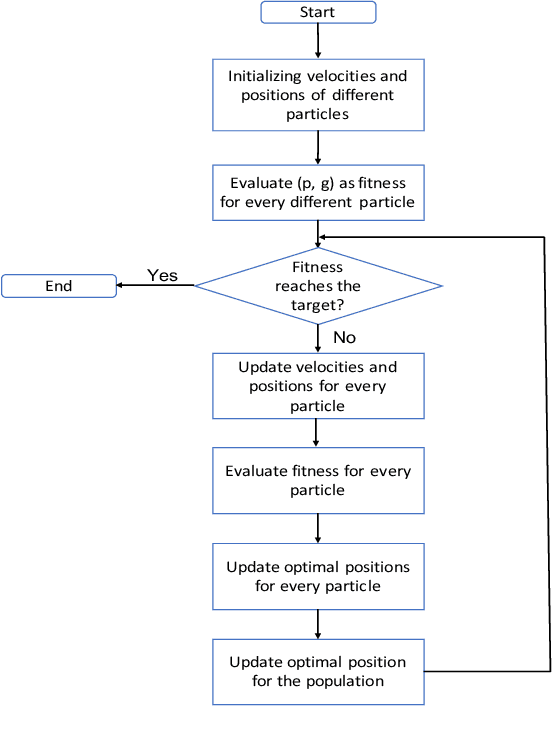

Source localization using particle filtering on FPGA for robotic navigation with imprecise binary measurement

Oct 22, 2020

Particle filtering is a recursive Bayesian estimation technique that has gained popularity recently for tracking and localization applications. It uses Monte Carlo simulation and has proven to be a very reliable technique to model non-Gaussian and non-linear elements of physical systems. Particle filters outperform various other traditional filters like Kalman filters in non-Gaussian and non-linear settings due to their non-analytical and non-parametric nature. However, a significant drawback of particle filters is their computational complexity, which inhibits their use in real-time applications with conventional CPU or DSP based implementation schemes. This paper proposes a modification to the existing particle filter algorithm and presents a high-speed and dedicated hardware architecture. The architecture incorporates pipelining and parallelization in the design to reduce execution time considerably. The design is validated for a source localization problem wherein we estimate the position of a source in real-time using the particle filter algorithm implemented on hardware. The validation setup relies on an Unmanned Ground Vehicle (UGV) with a photodiode housing on top to sense and localize a light source. We have prototyped the design using an Artix-7 field-programmable gate array (FPGA), and resource utilization for the proposed system is presented. Further, we show the execution time and estimation accuracy of the high-speed architecture and observe a significant reduction in computational time. Our implementation of particle filters on FPGA is scalable and modular, with a low execution time of about 5.62 μs for processing 1024 particles, and can be deployed for real-time applications.
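For readers unfamiliar with the technique, here is a minimal software sketch of a generic bootstrap particle filter localizing a static source from noisy binary readings. It is not the modified algorithm or the hardware architecture proposed in the paper. The sensor model (a reading of 1 when the source lies within `radius` of the robot, flipped with probability `1 - p_correct`), the 10 x 10 search area, and all function and parameter names are assumptions chosen for illustration.

```python
import math
import random

def likelihood(z, particle, robot, radius, p_correct):
    """P(z | source at particle) under the assumed binary sensor model."""
    expected = 1 if math.dist(particle, robot) <= radius else 0
    return p_correct if z == expected else 1.0 - p_correct

def resample(particles, weights, rng, jitter):
    """Multinomial resampling, then small Gaussian jitter for diversity."""
    chosen = rng.choices(particles, weights=weights, k=len(particles))
    return [(x + rng.gauss(0.0, jitter), y + rng.gauss(0.0, jitter))
            for x, y in chosen]

def simulate_and_localize(source, robot_path, n=1024, radius=3.0,
                          p_correct=0.9, seed=0):
    """Simulate noisy binary readings along robot_path and estimate source."""
    rng = random.Random(seed)
    # Prior: particles spread uniformly over the assumed 10 x 10 area.
    particles = [(rng.uniform(0, 10), rng.uniform(0, 10)) for _ in range(n)]
    for robot in robot_path:
        # Simulated imprecise binary measurement.
        inside = math.dist(source, robot) <= radius
        z = int(inside) if rng.random() < p_correct else int(not inside)
        # Weight update followed by resampling.
        weights = [likelihood(z, p, robot, radius, p_correct)
                   for p in particles]
        particles = resample(particles, weights, rng, jitter=0.05)
    xs, ys = zip(*particles)
    return sum(xs) / n, sum(ys) / n  # posterior mean estimate
```

The per-particle weight computations are independent of one another, which is the property a pipelined or parallel hardware design can exploit.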

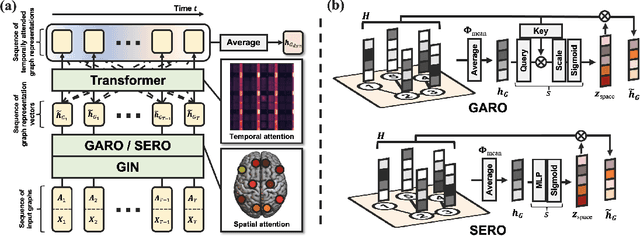

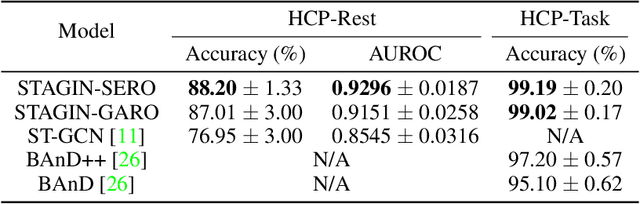

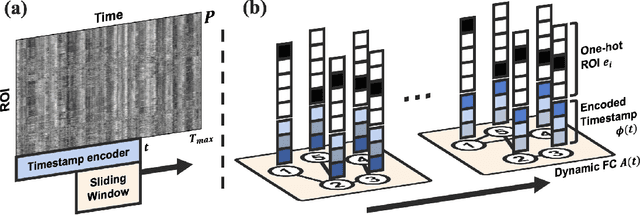

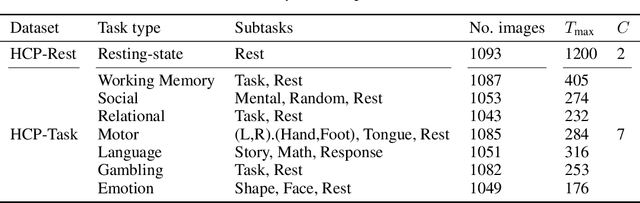

Learning Dynamic Graph Representation of Brain Connectome with Spatio-Temporal Attention

May 27, 2021

Functional connectivity (FC) between regions of the brain can be assessed by the degree of temporal correlation measured with functional neuroimaging modalities. Based on the fact that these connectivities build a network, graph-based approaches for analyzing the brain connectome have provided insights into the functions of the human brain. The development of graph neural networks (GNNs) capable of learning representations from graph-structured data has led to increased interest in learning the graph representation of the brain connectome. Although recent attempts to apply GNNs to the FC network have shown promising results, there is still a common limitation that they usually do not incorporate the dynamic characteristics of the FC network, which fluctuates over time. In addition, a few studies that have attempted to use dynamic FC as an input for the GNN reported a reduction in performance compared to static FC methods, and did not provide temporal explainability. Here, we propose STAGIN, a method for learning dynamic graph representation of the brain connectome with spatio-temporal attention. Specifically, a temporal sequence of brain graphs is input to the STAGIN to obtain the dynamic graph representation, while novel READOUT functions and the Transformer encoder provide spatial and temporal explainability with attention, respectively. Experiments on the HCP-Rest and the HCP-Task datasets demonstrate exceptional performance of our proposed method. Analysis of the spatio-temporal attention also provides concurrent interpretation with the neuroscientific knowledge, which further validates our method. Code is available at https://github.com/egyptdj/stagin

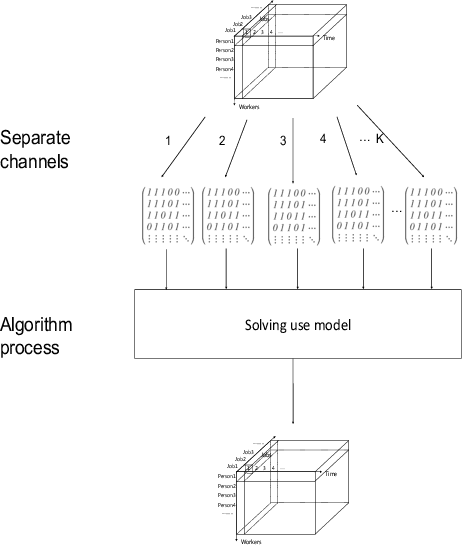

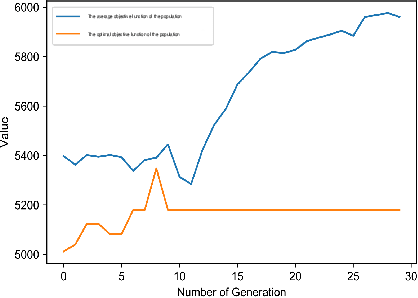

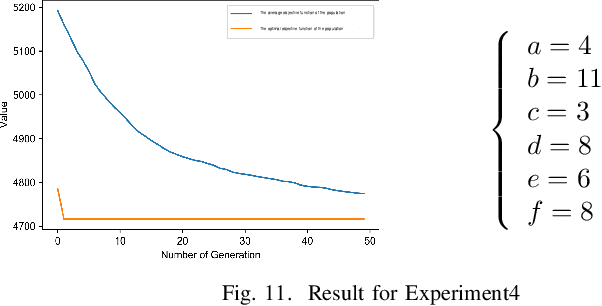

An Intelligent Model for Solving Manpower Scheduling Problems

May 07, 2021

The manpower scheduling problem is a critical research field in the resource management area. Based on the existing studies on scheduling problem solutions, this paper transforms the manpower scheduling problem into a combinatorial optimization problem under multi-constraint conditions from a new perspective. It also uses logical paradigms to build a mathematical model for problem solution and an improved multi-dimensional evolution algorithm for solving the model. Moreover, the constraints discussed in this paper basically cover all the requirements of human resource coordination in modern society and are supported by our experimental results. In the discussion part, we compare our model with other heuristic algorithms or linear programming methods and show that the model proposed in this paper achieves up to a 25.7% increase in efficiency and up to a 17% increase in accuracy. In addition to the numerical solution of the manpower scheduling problem, this paper also studies the algorithm for scheduling task list generation and the method of displaying scheduling results. As a result, we not only provide various modifications of the basic algorithm to solve problems under different conditions but also propose a new algorithm that improves time efficiency by at least 28.91% compared with different baseline models.


Hailstorm: A Statically-Typed, Purely Functional Language for IoT Applications

May 27, 2021

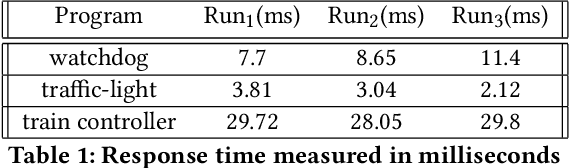

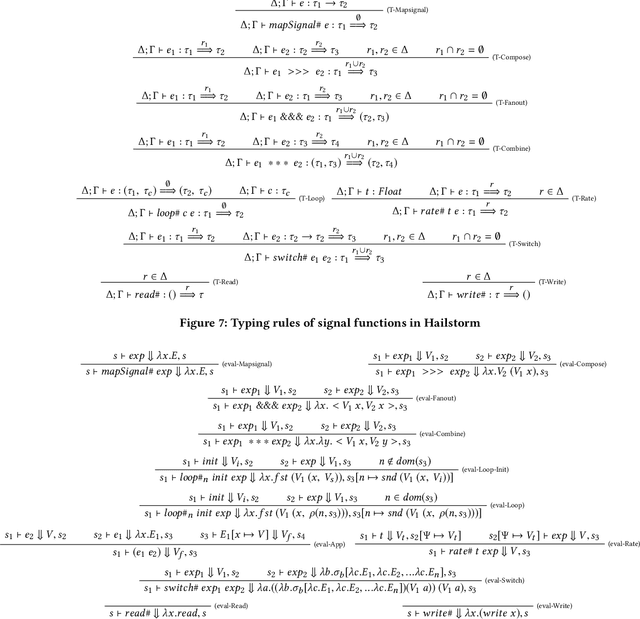

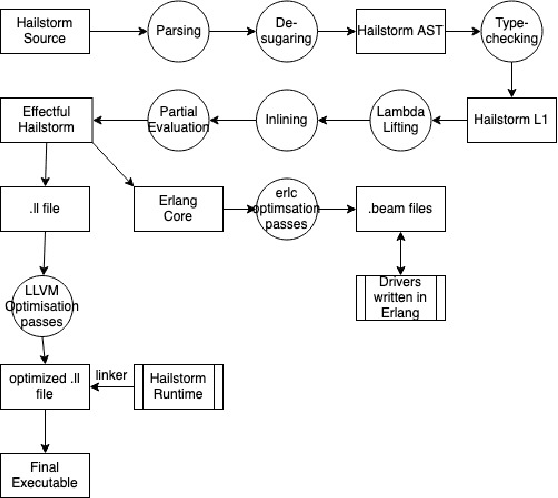

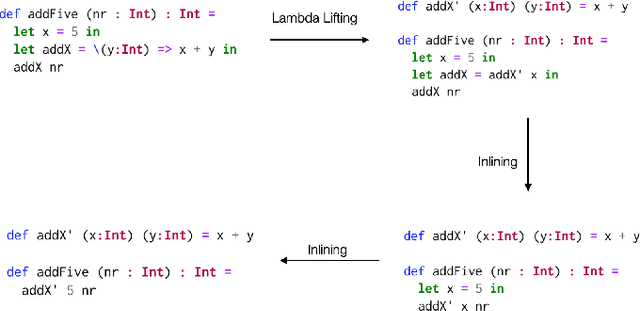

With the growing ubiquity of the Internet of Things (IoT), more complex logic is being programmed on resource-constrained IoT devices, almost exclusively using the C programming language. While C provides low-level control over memory, it lacks a number of high-level programming abstractions such as higher-order functions, polymorphism, strong static typing, memory safety, and automatic memory management. We present Hailstorm, a statically-typed, purely functional programming language that attempts to address the above problem. It is a high-level programming language with a strict typing discipline. It supports features like higher-order functions, tail-recursion, and automatic memory management, to program IoT devices in a declarative manner. Applications running on these devices tend to be heavily dominated by I/O. Hailstorm tracks side effects like I/O in its type system using resource types. This choice allowed us to explore the design of a purely functional standalone language, in an area where it is more common to embed a functional core in an imperative shell. The language borrows the combinators of arrowized FRP, but has discrete-time semantics. The design of the full set of combinators is work in progress, driven by examples. So far, we have evaluated Hailstorm by writing standard examples from the literature (earthquake detection, a railway crossing system and various other clocked systems), and also running examples on the GRiSP embedded systems board, through generation of Erlang.

Multi-stage, multi-swarm PSO for joint optimization of well placement and control

Jun 02, 2021

Evolutionary optimization algorithms, including particle swarm optimization (PSO), have been successfully applied in the oil industry for production planning and control. Such optimization studies are quite challenging due to the large number of decision variables, production scenarios, and subsurface uncertainties. In this work, a multi-stage, multi-swarm PSO (MS2PSO) is proposed to fix certain issues with the canonical PSO algorithm such as premature convergence, excessive influence of the global best solution, and oscillation. Multiple experiments are conducted using the Olympus benchmark to compare the efficacy of the algorithms. Canonical PSO hyperparameters are first tuned to prioritize exploration in the early phase and exploitation in the late phase. Next, a two-stage multi-swarm PSO (2SPSO) is used where multiple swarms of the first stage collapse into a single swarm in the second stage. Finally, MS2PSO with multiple stages and multiple swarms is used in which swarms recursively collapse after each stage. The multiple-swarm strategy ensures that diversity is retained within the population and multiple modes are explored. Staging ensures that local optima found during the initial stage do not lead to premature convergence. The optimization test case comprises 90 control variables and a twenty-year period of flow simulation. It is observed that different algorithm designs have their own benefits and drawbacks. Multiple swarms and stages help the algorithm to move away from local optima, but at the same time they may also necessitate a larger number of iterations for convergence. Both 2SPSO and MS2PSO are found to be helpful for problems with high dimensions and multiple modes where a greater degree of exploration is desired.
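As background for the canonical baseline mentioned in this abstract, the following is a minimal single-swarm PSO sketch with a linearly decreasing inertia weight, one common way to favor exploration early and exploitation late. It is not MS2PSO or 2SPSO, and the abstract does not state which hyperparameter schedule the authors tuned. The function name `pso`, the default coefficients, and the use of a simple test objective in place of a reservoir simulator are assumptions for illustration.

```python
import random

def pso(objective, dim, bounds, n_particles=30, iters=200,
        w_start=0.9, w_end=0.4, c1=1.5, c2=1.5, seed=0):
    """Minimize `objective` with a single-swarm, global-best PSO."""
    rng = random.Random(seed)
    lo, hi = bounds
    pos = [[rng.uniform(lo, hi) for _ in range(dim)]
           for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_val = [objective(p) for p in pos]
    g = min(range(n_particles), key=pbest_val.__getitem__)
    gbest, gbest_val = pbest[g][:], pbest_val[g]
    for t in range(iters):
        # Inertia decays linearly: high early (explore), low late (exploit).
        w = w_start + (w_end - w_start) * t / max(iters - 1, 1)
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                # Move and clip the position to the search bounds.
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)
            val = objective(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val
```

Every particle here is attracted to one shared global best, which is the influence that multi-swarm variants aim to dilute by keeping separate swarms with their own best solutions.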

Eye Know You: Metric Learning for End-to-end Biometric Authentication Using Eye Movements from a Longitudinal Dataset

Apr 21, 2021

While numerous studies have explored eye movement biometrics since the modality's inception in 2004, the permanence of eye movements remains largely unexplored as most studies utilize datasets collected within a short time frame. This paper presents a convolutional neural network for authenticating users using their eye movements. The network is trained with an established metric learning loss function, multi-similarity loss, which seeks to form a well-clustered embedding space and directly enables the enrollment and authentication of out-of-sample users. Performance measures are computed on GazeBase, a task-diverse and publicly-available dataset collected over a 37-month period. This study includes an exhaustive analysis of the effects of training on various tasks and downsampling from 1000 Hz to several lower sampling rates. Our results reveal that reasonable authentication accuracy may be achieved even during a low-cognitive-load task or at low sampling rates. Moreover, we find that eye movements are quite resilient against template aging after 3 years.

Physics-informed neural networks for the shallow-water equations on the sphere

Apr 01, 2021

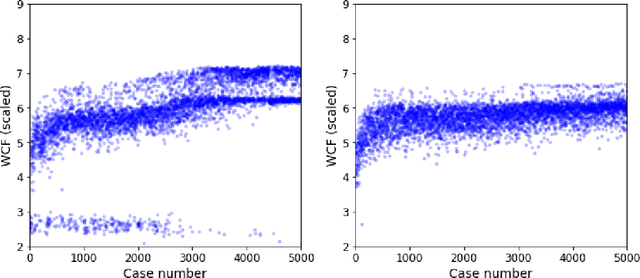

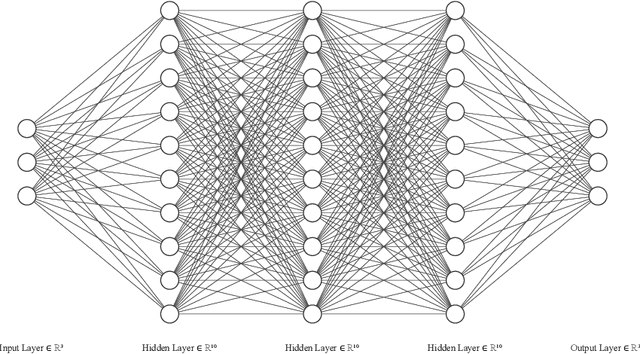

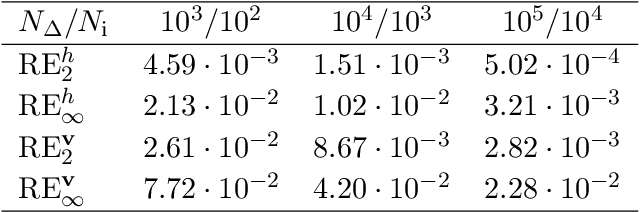

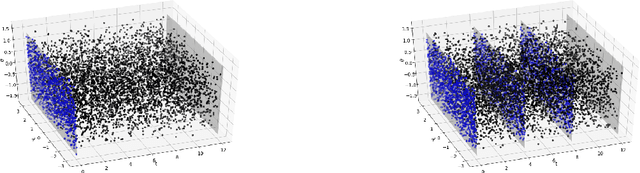

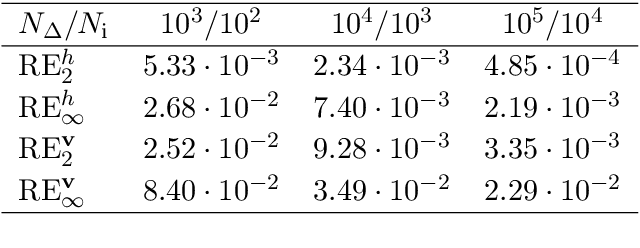

We propose the use of physics-informed neural networks for solving the shallow-water equations on the sphere. Physics-informed neural networks are trained to satisfy the differential equations along with the prescribed initial and boundary data, and thus can be seen as an alternative approach to solving differential equations compared to traditional numerical approaches such as finite difference, finite volume or spectral methods. We discuss the training difficulties of physics-informed neural networks for the shallow-water equations on the sphere and propose a simple multi-model approach to tackle test cases of comparatively long time intervals. We illustrate the abilities of the method by solving the most prominent test cases proposed by Williamson et al. [J. Comput. Phys. 102, 211-224, 1992].

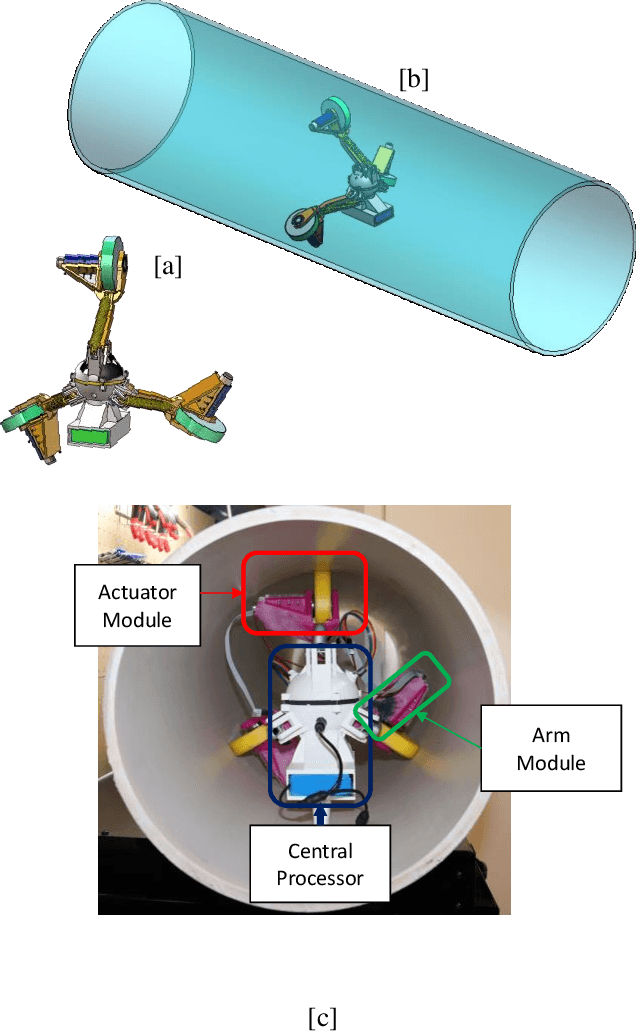

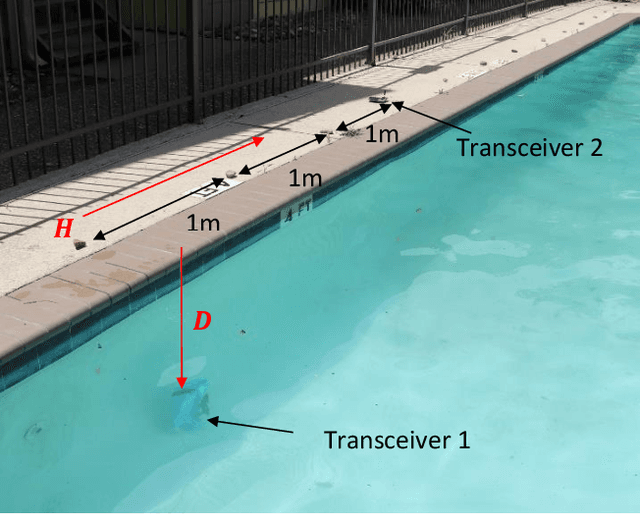

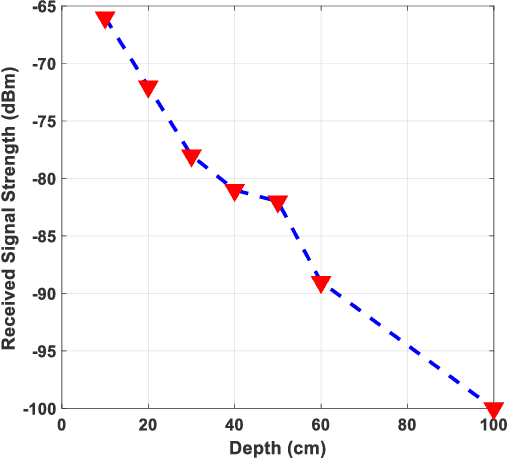

A Localization and Navigation Method for an In-pipe Robot in Water Distribution System through Wireless Control towards Long-Distance Inspection

May 21, 2021

In this paper, we propose an operation procedure for our previously developed in-pipe robotic system that is used for water quality monitoring in water distribution systems (WDS). The proposed operation procedure synchronizes a developed wireless communication system that is suitable for harsh environments of soil, water, and rock with a multi-phase control algorithm. The new wireless control algorithm facilitates smart navigation and near real-time wireless data transmission during operation for our in-pipe robot in WDS. The smart navigation enables the robot to pass through different configurations of the pipeline with long inspection capability using a battery that is mounted on the robot. To this end, we have divided the operation procedure into five steps that assign a specific motion control phase and wireless communication task to the robot. We describe each step and the algorithm associated with that step in this paper. The proposed robotic system defines the configuration type in each pipeline with the pre-programmed pipeline map that is given to the robot before the operation and the wireless communication system. The wireless communication system includes some relay nodes that perform bi-directional communication in the operation procedure. The developed wireless robotic system along with the operation procedure facilitates localization and navigation for the robot toward long-distance inspection in WDS.
