"Time": models, code, and papers

Vehicle Re-identification Method Based on Vehicle Attribute and Mutual Exclusion Between Cameras

Apr 30, 2021

Vehicle re-identification (Re-ID) aims to identify a specific vehicle across time and camera views. With the rapid growth of intelligent transportation systems and smart cities, vehicle Re-ID technology is attracting more and more attention. However, differences in shooting angle and the high similarity of vehicles belonging to the same brand make vehicle Re-ID a great challenge for existing methods. In this paper, we propose a vehicle attribute-guided method to re-rank vehicle Re-ID results. The attributes used include vehicle orientation and vehicle brand. We also exploit camera information and introduce camera mutual exclusion theory to further fine-tune the search results. For feature extraction, we combine multi-resolution data augmentation with a large model ensemble to obtain more robust vehicle features. Our method achieves an mAP of 63.73% and a rank-1 accuracy of 76.61% in the CVPR 2021 AI City Challenge.

Towards Knowledge Organization Ecosystems

May 23, 2021

It is needless to mention the (already established) overarching importance of knowledge organization and its tried-and-tested high-quality schemes in knowledge-based Artificial Intelligence (AI) systems. But equally, it is also hard to ignore that, increasingly, standalone KOSs are becoming functionally ineffective components for such systems, given their inability to capture the continuous facetization and drift of domains. The paper proposes a radical re-conceptualization of KOSs as a first step to solve such an inability, and, accordingly, contributes in the form of the following dimensions: (i) an explicit characterization of Knowledge Organization Ecosystems (KOEs) (possibly for the first time) and their positioning as pivotal components in realizing sustainable knowledge-based AI solutions, (ii) as a consequence of such a novel characterization, a first examination and characterization of KOEs as Socio-Technical Systems (STSs), thus opening up an entirely new stream of research in knowledge-based AI, and (iii) motivating KOEs not to be mere STSs but STSs which are grounded in Ethics and Responsible Artificial Intelligence cardinals from their very genesis. The paper grounds the above contributions in relevant research literature in a distributed fashion throughout the paper, and finally concludes by outlining the future research possibilities.

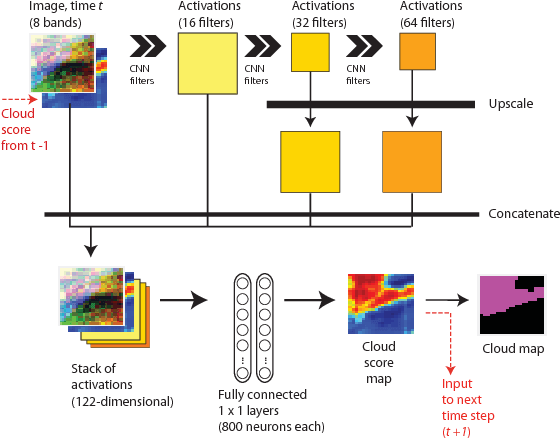

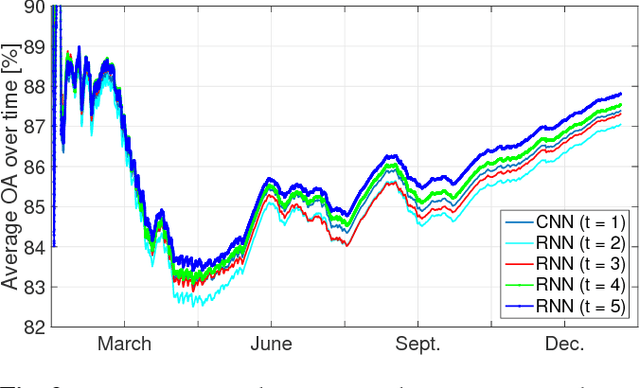

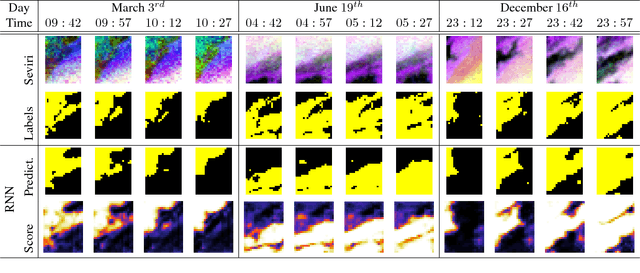

A deep network approach to multitemporal cloud detection

Dec 09, 2020

We present a deep learning model with temporal memory to detect clouds in image time series acquired by the SEVIRI imager mounted on the Meteosat Second Generation (MSG) satellite. The model provides pixel-level cloud maps with related confidence and propagates information in time via a recurrent neural network structure. With a single model, we are able to delineate clouds throughout the year, during both day and night, with high accuracy.

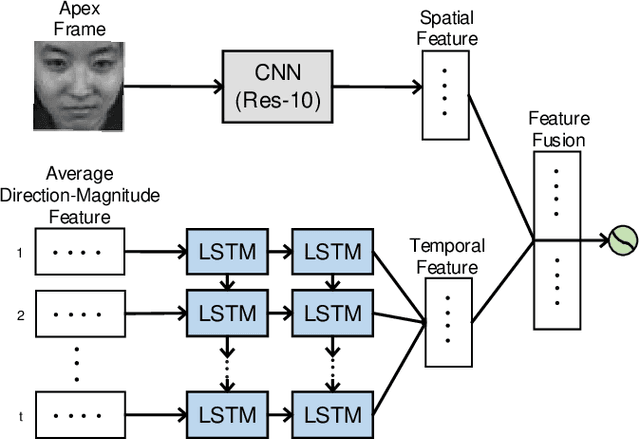

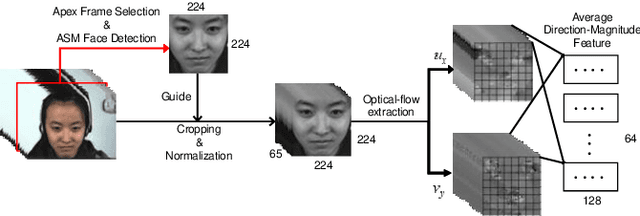

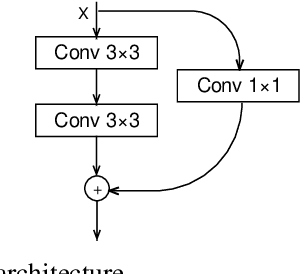

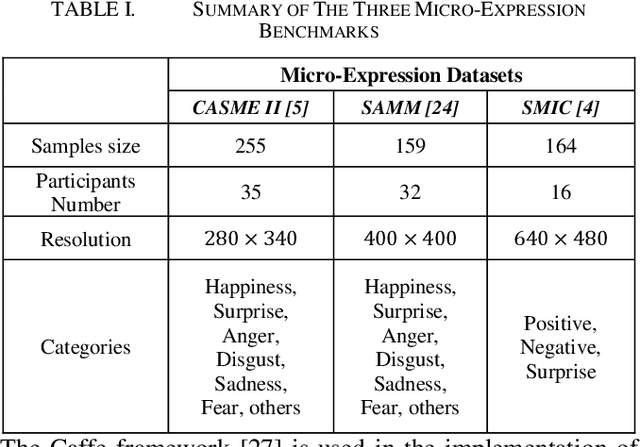

A Novel Apex-Time Network for Cross-Dataset Micro-Expression Recognition

Apr 23, 2019

The automatic recognition of micro-expressions has been boosted ever since the successful introduction of deep learning approaches. As researchers working on this topic increasingly try to learn from the nature of micro-expressions, the practice of using deep learning techniques has evolved from processing the entire video clip of a micro-expression to recognition on the apex frame alone. Using the apex frame removes redundant information, but the temporal evidence of the micro-expression is thereby left out. In this paper, we propose to perform recognition based on the spatial information from the apex frame as well as on the temporal information from its adjacent frames. To this end, a novel Apex-Time Network (ATNet) is proposed. Through extensive experiments on three benchmarks, we demonstrate the improvement achieved by adding the temporal information learned from adjacent frames around the apex frame. In particular, the model with such temporal information is more robust in cross-dataset validations.

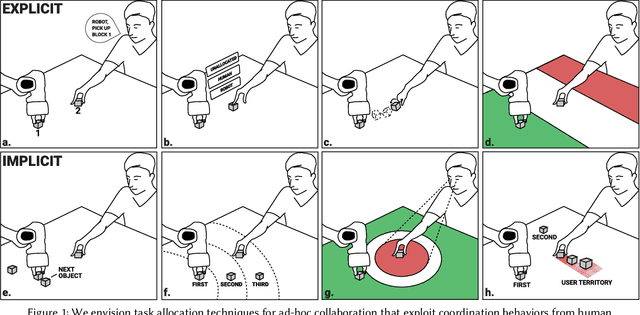

"Grip-that-there": An Investigation of Explicit and Implicit Task Allocation Techniques for Human-Robot Collaboration

Feb 01, 2021

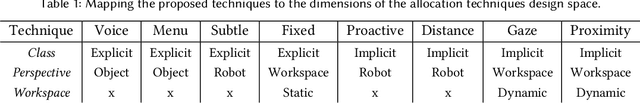

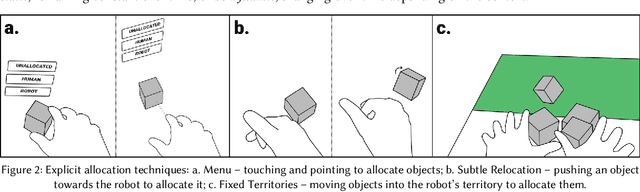

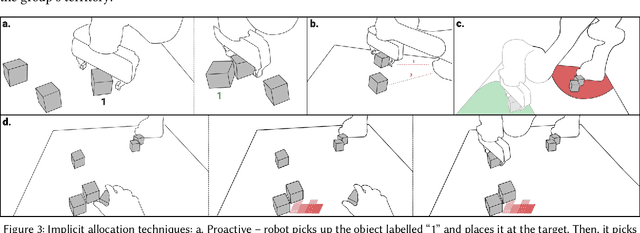

In ad-hoc human-robot collaboration (HRC), humans and robots work on a task without pre-planning the robot's actions prior to execution; instead, task allocation occurs in real-time. However, prior research has largely focused on task allocations that are pre-planned - there has not been a comprehensive exploration or evaluation of techniques where task allocation is adjusted in real-time. Inspired by HCI research on territoriality and proxemics, we propose a design space of novel task allocation techniques including both explicit techniques, where the user maintains agency, and implicit techniques, where the efficiency of automation can be leveraged. The techniques were implemented and evaluated using a tabletop HRC simulation in VR. A 16-participant study, which presented variations of a collaborative block stacking task, showed that implicit techniques enable efficient task completion and task parallelization, and should be augmented with explicit mechanisms to provide users with fine-grained control.

Discovering an Aid Policy to Minimize Student Evasion Using Offline Reinforcement Learning

Apr 20, 2021

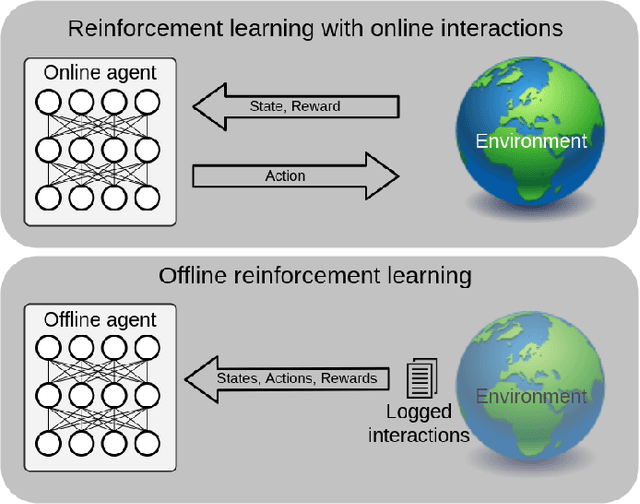

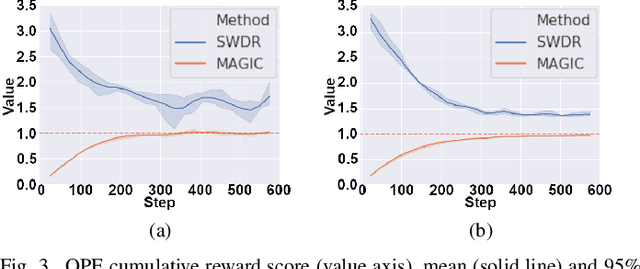

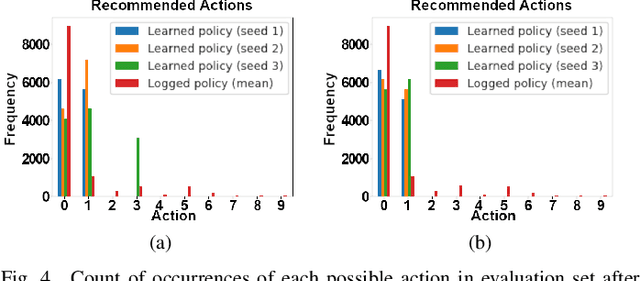

High dropout rates in tertiary education expose a lack of efficiency that causes frustration of expectations and financial waste. Predicting students at risk is not enough to avoid student dropout. Usually, an appropriate aid action must be discovered and applied at the proper time for each student. To tackle this sequential decision-making problem, we propose a decision support method for the selection of aid actions for students, using offline reinforcement learning to help decision-makers effectively avoid student dropout. Additionally, a discretization of the student state space using two different clustering methods is evaluated. Our experiments using logged data of real students show, through off-policy evaluation, that the method should achieve roughly 1.0 to 1.5 times as much cumulative reward as the logged policy. So, it is feasible to help decision-makers apply appropriate aid actions and, possibly, reduce student dropout.
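As a rough illustration of what off-policy evaluation involves, the sketch below estimates the value of a target policy from logged trajectories with ordinary (trajectory-wise) importance sampling. The abstract does not say which estimator the authors used, so this is one common choice and not their method. The state and action names, the toy trajectories, and the `target_prob` function are all hypothetical.

```python
from typing import Callable, List, Tuple

# One logged step: (state, action, reward, probability the logged policy gave that action)
Step = Tuple[str, str, float, float]


def importance_sampling_value(
    trajectories: List[List[Step]],
    target_prob: Callable[[str, str], float],
    gamma: float = 1.0,
) -> float:
    """Ordinary importance-sampling estimate of the target policy's expected return."""
    total = 0.0
    for trajectory in trajectories:
        weight = 1.0    # product of per-step probability ratios
        ret = 0.0       # discounted return of the logged trajectory
        discount = 1.0
        for state, action, reward, behavior_prob in trajectory:
            weight *= target_prob(state, action) / behavior_prob
            ret += discount * reward
            discount *= gamma
        total += weight * ret
    return total / len(trajectories)


# Hypothetical target policy: always offer tutoring to an at-risk student.
def always_tutor(state: str, action: str) -> float:
    return 1.0 if action == "tutoring" else 0.0


# Made-up logged data: the logged policy picked each action with probability 0.5.
logged = [
    [("at_risk", "tutoring", 1.0, 0.5)],
    [("at_risk", "none", 0.0, 0.5)],
]

estimate = importance_sampling_value(logged, always_tutor)
logged_average = sum(step[2] for traj in logged for step in traj) / len(logged)
print(estimate, logged_average)
```

Trajectories that the target policy would never have produced get weight zero, while those it favors are weighted up. This is also why the estimator's variance can grow quickly with trajectory length.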

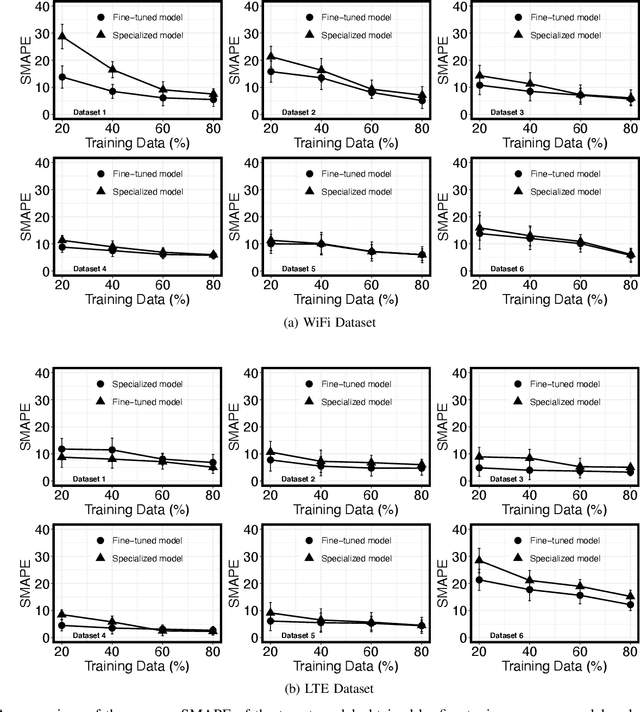

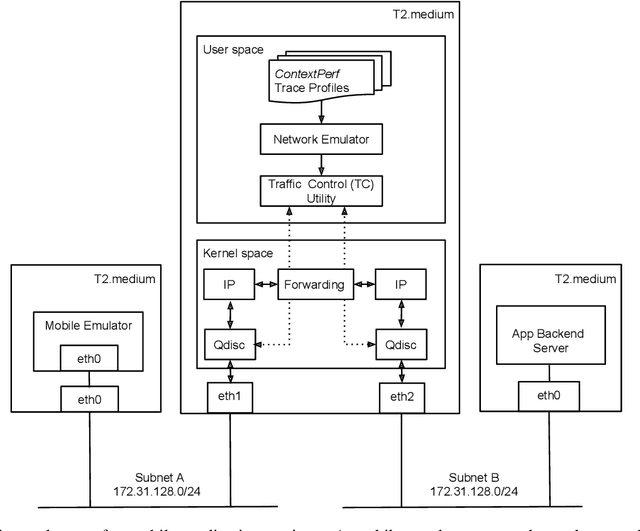

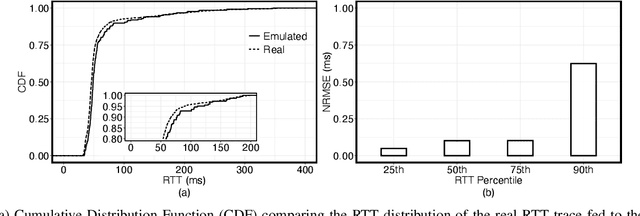

Generation of Realistic Cloud Access Times for Mobile Application Testing using Transfer Learning

Mar 16, 2021

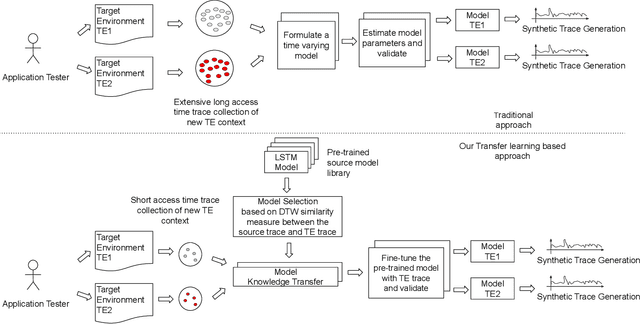

The network Quality of Service (QoS) metrics such as the access time, the bandwidth, and the packet loss play an important role in determining the Quality of Experience (QoE) of mobile applications. Various factors like the Radio Resource Control (RRC) states, the Mobile Network Operator (MNO) specific retransmission configurations, handovers triggered by user mobility, and the network load can cause high variability in these QoS metrics on 4G/LTE and WiFi networks, which can be detrimental to the application QoE. Therefore, exposing a mobile application to realistic network QoS metrics is critical for testers attempting to predict its QoE. A viable approach is testing using synthetic traces. The main challenge in generating realistic synthetic traces is the diversity of environments and the lack of a wide range of real traces to calibrate the generators. In this paper, we describe a measurement-driven methodology based on transfer learning with Long Short Term Memory (LSTM) neural nets to solve this problem. The methodology requires only a relatively short sample of the targeted environment to adapt the presented basic model to new environments, thus simplifying synthetic trace generation. We present this feature for realistic WiFi and LTE cloud access time models adapted for diverse target environments with a trace size of just 6000 samples measured over a few tens of minutes. We demonstrate that synthetic traces generated from these models are capable of accurately reproducing application QoE metric distributions, including their outlier values.

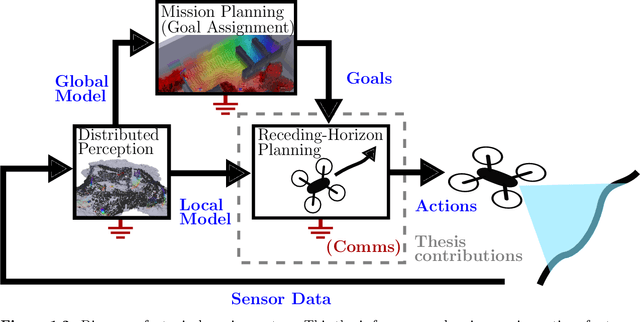

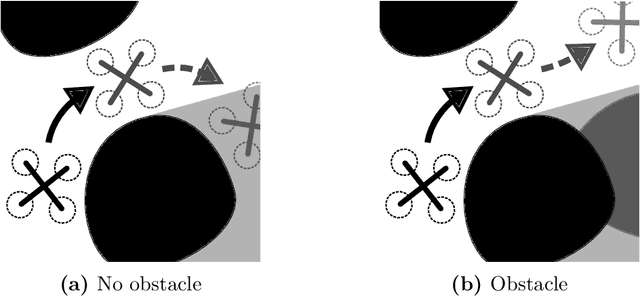

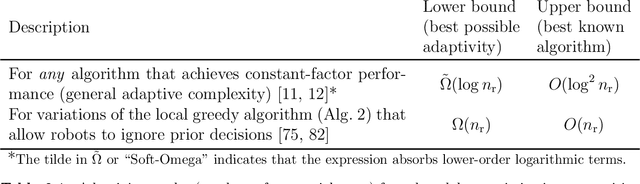

Sensor Planning for Large Numbers of Robots

Feb 08, 2021

*The following abbreviates the abstract. Please refer to the thesis for the full abstract.* After a disaster, locating and extracting victims quickly is critical because mortality rises rapidly after the first two days. To assist search and rescue teams and improve response times, teams of camera-equipped aerial robots can engage in tasks such as mapping buildings and locating victims. These sensing tasks encapsulate difficult (NP-Hard) problems. One way to simplify planning for these tasks is to focus on maximizing sensing performance over a short time horizon. Specifically, consider the problem of how to select motions for a team of robots to maximize a notion of sensing quality (the sensing objective) over the near future, say by maximizing the amount of unknown space in a map that robots will observe over the next several seconds. By repeating this process regularly, the team can react quickly to new observations as they work to complete the sensing task. In technical terms, this planning and control process forms an example of receding-horizon control. Fortunately, common sensing objectives benefit from well-known monotonicity properties (e.g. submodularity), and greedy algorithms can exploit these monotonicity properties to solve the receding-horizon optimization problems that we study near-optimally. However, greedy algorithms typically force robots to make decisions sequentially, so that planning time grows with the number of robots. Further, recent works that investigate sequential greedy planning have demonstrated that reducing the number of sequential steps while retaining suboptimality guarantees can be hard or impossible. We demonstrate that halting growth in planning time is sometimes possible. To do so, we introduce novel greedy algorithms involving fixed numbers of sequential steps.
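The sequential greedy baseline that the abstract contrasts against can be sketched as follows. This is not the thesis's fixed-step algorithm. It is a minimal illustration assuming a simple coverage objective (the number of distinct map cells observed), which is monotone and submodular. The robot motions and cell sets are hypothetical.

```python
from typing import Dict, List, Set, Tuple


def sequential_greedy(
    candidate_views: List[Dict[str, Set[int]]],
) -> Tuple[List[str], Set[int]]:
    """Pick one motion per robot, in order, maximizing newly observed cells.

    candidate_views[i] maps each motion available to robot i to the set of
    map cells that motion would observe (an assumed, simplified sensor model).
    """
    covered: Set[int] = set()
    plan: List[str] = []
    for views in candidate_views:
        # Marginal gain: cells this motion adds beyond what earlier robots cover.
        best = max(views, key=lambda motion: len(views[motion] - covered))
        plan.append(best)
        covered |= views[best]
    return plan, covered


# Hypothetical three-robot team; robots 0 and 1 have identical options.
robots = [
    {"left": {1, 2, 3}, "right": {4, 5}},
    {"left": {1, 2, 3}, "right": {4, 5}},
    {"stay": {3, 4}, "forward": {6}},
]

plan, covered = sequential_greedy(robots)
print(plan)     # ['left', 'right', 'forward']
print(covered)  # {1, 2, 3, 4, 5, 6}
```

Each robot's choice depends on the cells already claimed by the robots before it, so the loop runs one step per robot. This is the growth in planning time with team size that the abstract describes.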

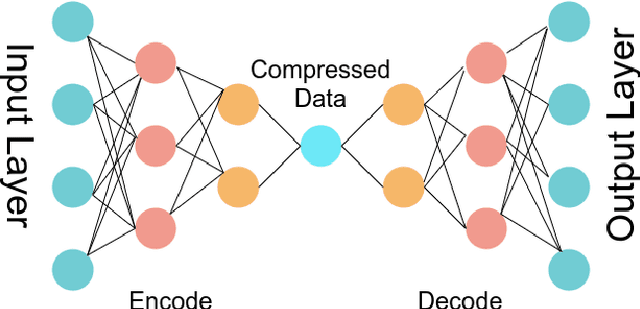

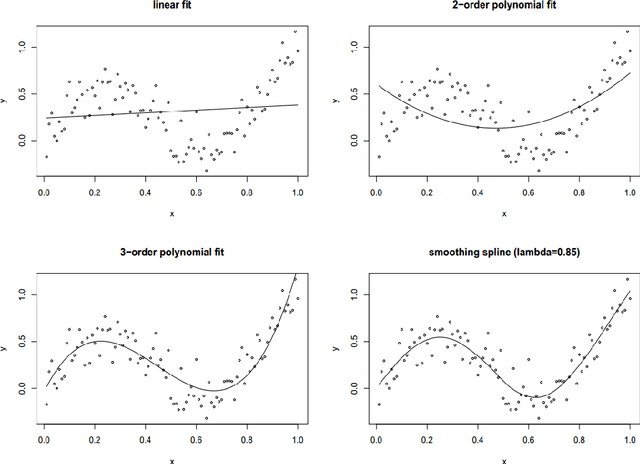

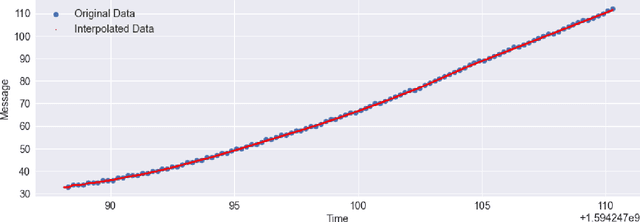

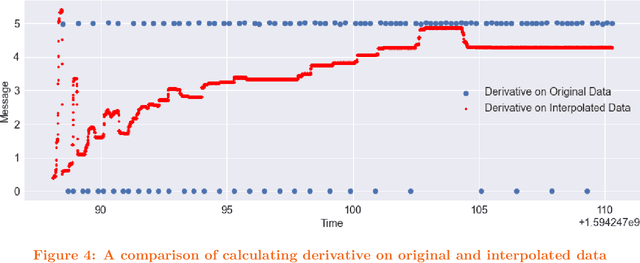

AutoEncoder for Interpolation

Jan 06, 2021

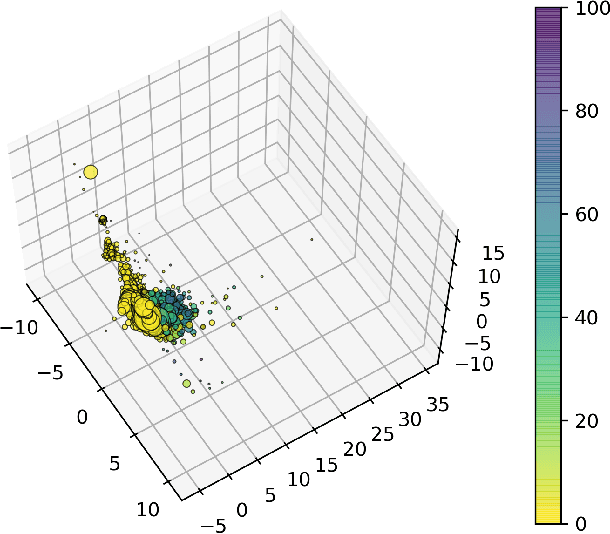

In physical science, sensor data are collected over time to produce time-series data. However, depending on real-world conditions and the underlying physics of the sensor, the data might be noisy. Moreover, the limited sampling rate of sensors may not allow data to be collected at all timepoints, so some form of interpolation may be required. Interpolation may not be smooth enough and may fail to denoise the data, and derivative operations on noisy sensor data may be too poor to reveal any high-order dynamics. In this article, we propose using an AutoEncoder to perform interpolation while simultaneously denoising the data. A brief example using real-world data is also provided.

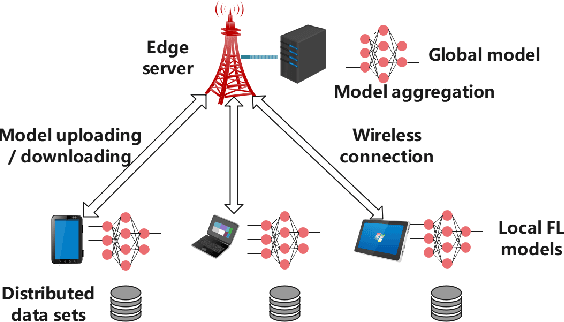

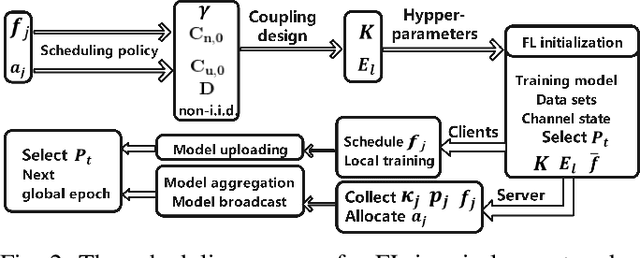

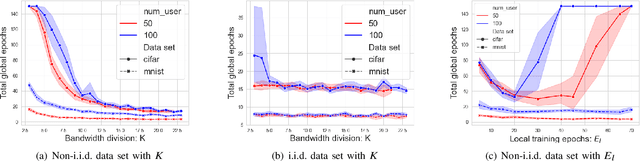

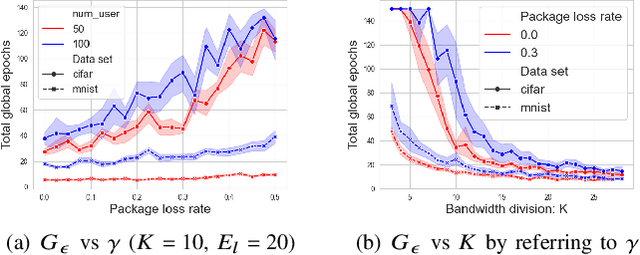

Convergence Analysis and System Design for Federated Learning over Wireless Networks

Apr 30, 2021

Federated learning (FL) has recently emerged as an important and promising learning scheme in IoT, enabling devices to jointly learn a model without sharing their raw data sets. However, as the training data in FL is not collected and stored centrally, FL training requires frequent model exchange, which is largely affected by the wireless communication network. Therein, limited bandwidth and random packet loss restrict interactions in training. Meanwhile, insufficient message synchronization among distributed clients could also affect FL convergence. In this paper, we analyze the convergence rate of FL training considering the joint impact of communication network and training settings. Further, by considering the training costs in terms of time and power, the optimal scheduling problems for communication networks are formulated. The developed theoretical results can be used to assist system parameter selection and to explain how the wireless communication system could influence the distributed training process and network scheduling.
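To make the effect of packet loss on a training round concrete, here is a minimal sketch of one aggregation step. It assumes FedAvg-style averaging weighted by client data size and independent packet drops with a fixed probability. The abstract does not specify either choice, and the function and parameter names are hypothetical.

```python
import random
from typing import List


def federated_round(
    global_model: List[float],
    client_updates: List[List[float]],
    client_sizes: List[int],
    loss_prob: float,
    rng: random.Random,
) -> List[float]:
    """Average the client models that survive transmission, weighted by data size."""
    # Each upload is dropped independently with probability loss_prob (assumed model).
    received = [
        (update, size)
        for update, size in zip(client_updates, client_sizes)
        if rng.random() >= loss_prob
    ]
    if not received:
        # Nothing arrived this round: keep the previous global model.
        return list(global_model)
    total = sum(size for _, size in received)
    return [
        sum(update[i] * size for update, size in received) / total
        for i in range(len(global_model))
    ]


rng = random.Random(0)
updates = [[1.0, 2.0], [3.0, 4.0]]
sizes = [1, 3]

no_loss = federated_round([0.0, 0.0], updates, sizes, loss_prob=0.0, rng=rng)
all_lost = federated_round([0.0, 0.0], updates, sizes, loss_prob=1.0, rng=rng)
print(no_loss)   # [2.5, 3.5]
print(all_lost)  # [0.0, 0.0]
```

With intermediate loss probabilities, the aggregate is computed from a random subset of clients each round. This is one way the communication network can influence convergence.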
