"Time": models, code, and papers

Identifying Water Stress in Chickpea Plant by Analyzing Progressive Changes in Shoot Images using Deep Learning

Apr 16, 2021

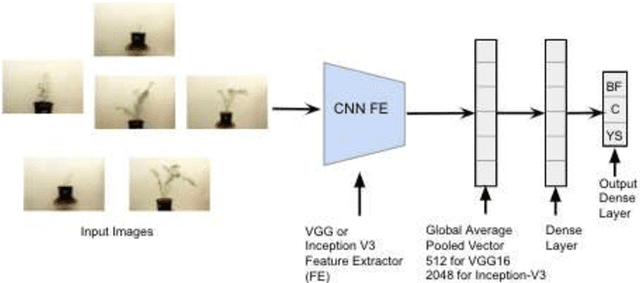

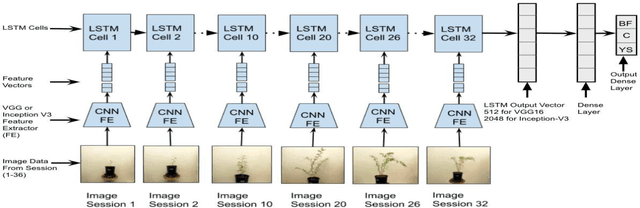

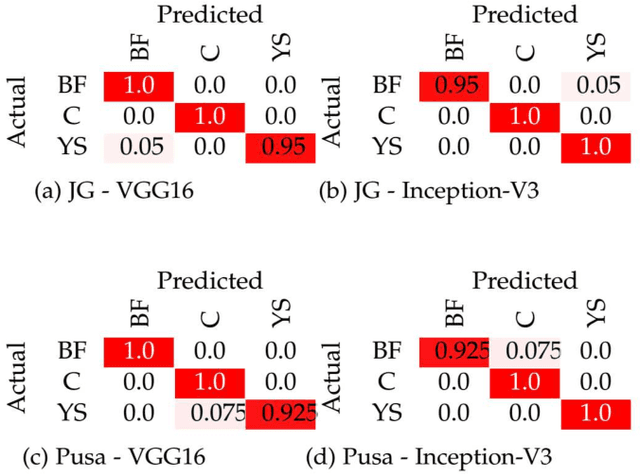

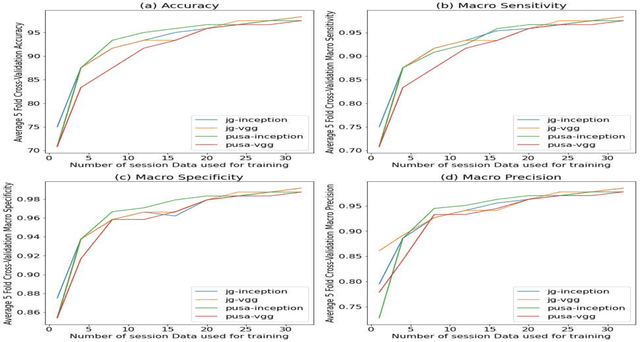

To meet the needs of a growing world population, we need to increase global agricultural yields by employing modern, precision, and automated farming methods. In the recent decade, high-throughput plant phenotyping techniques, which combine non-invasive image analysis and machine learning, have been successfully applied to identify and quantify plant health and diseases. However, these image-based machine learning approaches usually do not consider the progressive or temporal nature of plant stress. This time-invariant approach also requires images showing severe signs of stress to ensure high-confidence detections, thereby reducing its feasibility for early detection and recovery of plants under stress. To overcome this problem, we propose a temporal analysis of the visual changes induced in the plant due to stress and apply it to the specific case of water stress identification in chickpea plant shoot images. For this, we have considered an image dataset of two chickpea varieties, JG-62 and Pusa-372, under three water stress conditions (control, young seedling, and before flowering), captured over five months. We then develop an LSTM-CNN architecture to learn visual-temporal patterns from this dataset and predict the water stress category with high confidence. To establish a baseline context, we also conduct a comparative analysis of the CNN architecture used in the proposed model with the other CNN techniques used for the time-invariant classification of water stress. The results reveal that our proposed LSTM-CNN model achieves ceiling-level classification performance of 98.52% on JG-62 and 97.78% on Pusa-372 chickpea plant data. Lastly, we perform an ablation study to determine the LSTM-CNN model's performance as the amount of temporal session data used for training decreases.

Simulating Continuum Mechanics with Multi-Scale Graph Neural Networks

Jun 09, 2021

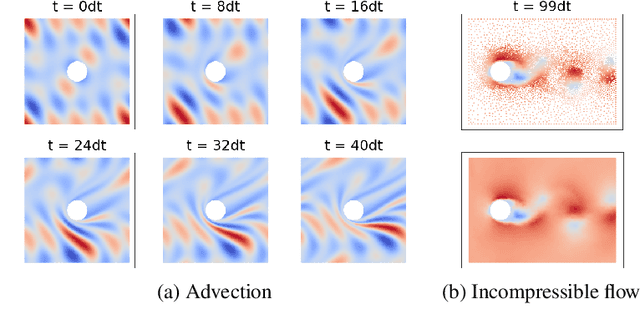

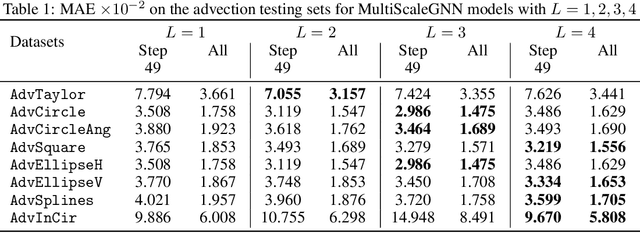

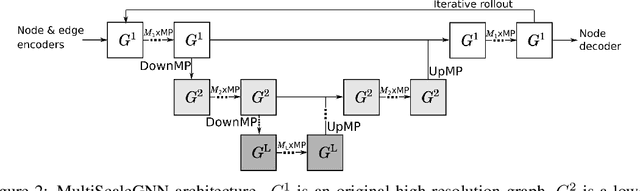

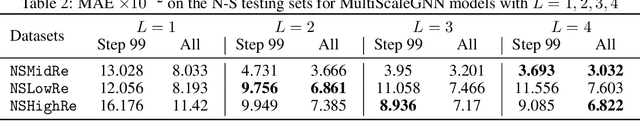

Continuum mechanics simulators, numerically solving one or more partial differential equations, are essential tools in many areas of science and engineering, but their performance often limits application in practice. Recent machine learning approaches have demonstrated their ability to accelerate spatio-temporal predictions, although with only moderate accuracy in comparison. Here we introduce MultiScaleGNN, a novel multi-scale graph neural network model for learning to infer unsteady continuum mechanics. MultiScaleGNN represents the physical domain as an unstructured set of nodes, and it constructs one or more graphs, each of them encoding a different scale of spatial resolution. Successive learnt message passing between these graphs improves the ability of GNNs to capture and forecast the system state in problems encompassing a range of length scales. Using graph representations, MultiScaleGNN can impose periodic boundary conditions as an inductive bias on the edges in the graphs, and achieve independence from the nodes' positions. We demonstrate this method on advection problems and incompressible fluid dynamics. Our results show that the proposed model can generalise from uniform advection fields to high-gradient fields on complex domains at test time and infer long-term Navier-Stokes solutions within a range of Reynolds numbers. Simulations obtained with MultiScaleGNN are between two and four orders of magnitude faster than the ones on which it was trained.
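One common way to encode periodic boundaries on graph edges is to store relative node positions under the minimum-image convention. The sketch below illustrates that idea only; it is not taken from MultiScaleGNN, and the function name, argument names, and box size are assumptions made for illustration.

```python
def periodic_edge_vector(xi, xj, box):
    """Relative position from node i to node j in a periodic box.

    xi, xj: coordinate tuples of the two nodes (hypothetical inputs).
    box:    domain length along each axis (hypothetical input).
    """
    rel = []
    for a, b, length in zip(xi, xj, box):
        d = b - a
        # Wrap the difference into [-length/2, length/2] so that nodes on
        # opposite sides of a periodic boundary are treated as neighbours.
        d -= length * round(d / length)
        rel.append(d)
    return tuple(rel)


# Two nodes near opposite edges of a unit square are 0.2 apart, not 0.8.
edge = periodic_edge_vector((0.1, 0.5), (0.9, 0.5), (1.0, 1.0))
print(edge)
```

Because the edge feature depends only on the wrapped difference, shifting both nodes by the same offset leaves it unchanged, which is one way to obtain independence from absolute node positions.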

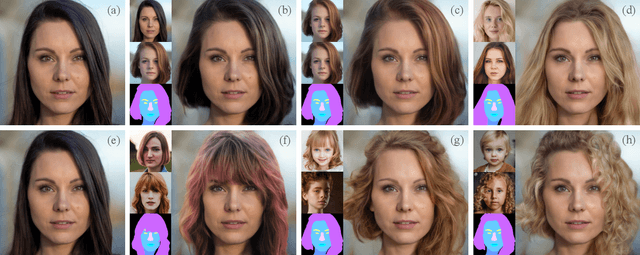

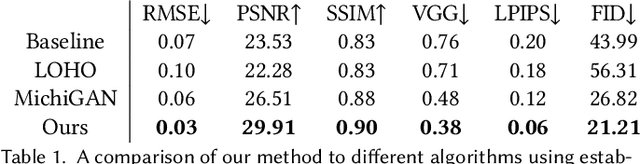

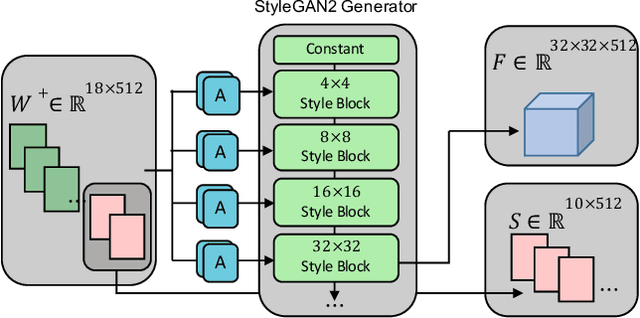

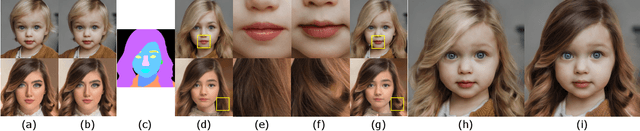

Barbershop: GAN-based Image Compositing using Segmentation Masks

Jun 02, 2021

Seamlessly blending features from multiple images is extremely challenging because of complex relationships in lighting, geometry, and partial occlusion which cause coupling between different parts of the image. Even though recent work on GANs enables synthesis of realistic hair or faces, it remains difficult to combine them into a single, coherent, and plausible image rather than a disjointed set of image patches. We present a novel solution to image blending, particularly for the problem of hairstyle transfer, based on GAN-inversion. We propose a novel latent space for image blending which is better at preserving detail and encoding spatial information, and propose a new GAN-embedding algorithm which is able to slightly modify images to conform to a common segmentation mask. Our novel representation enables the transfer of the visual properties from multiple reference images including specific details such as moles and wrinkles, and because we do image blending in a latent-space we are able to synthesize images that are coherent. Our approach avoids blending artifacts present in other approaches and finds a globally consistent image. Our results demonstrate a significant improvement over the current state of the art in a user study, with users preferring our blending solution over 95 percent of the time.

Temporal graph-based approach for behavioural entity classification

May 11, 2021

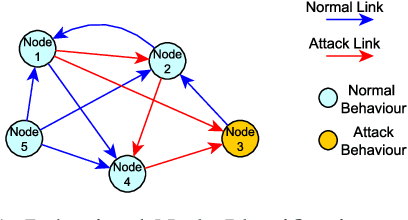

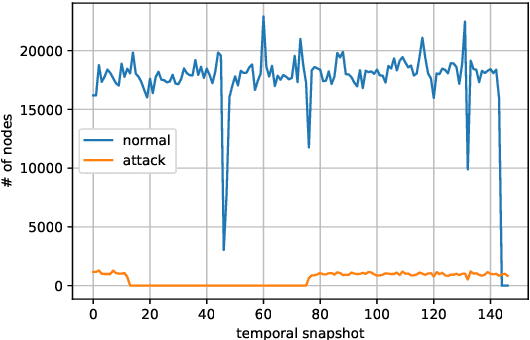

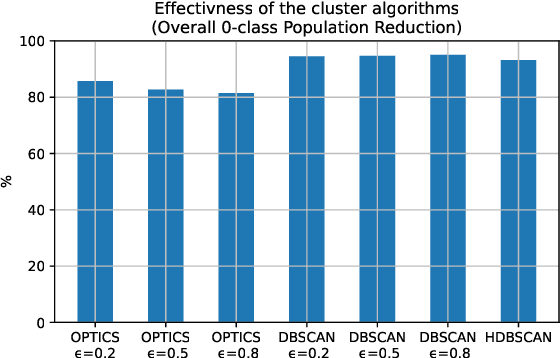

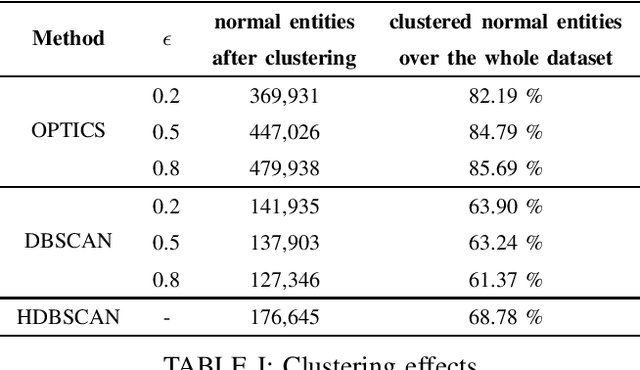

Graph-based analyses have gained a lot of relevance in the past years due to their high potential in describing complex systems by detailing the actors involved, their relations and their behaviours. Nevertheless, in scenarios where these aspects are evolving over time, it is not easy to extract valuable information or to characterize correctly all the actors. In this study, a two-phase approach for exploiting the potential of graph structures in the cybersecurity domain is presented. The main idea is to convert a network classification problem into a graph-based behavioural one. We extract graph structures that can represent the evolution of both normal and attack entities and apply a temporal dissection approach in order to highlight their micro-dynamics. Further, three clustering techniques are applied to the normal entities in order to aggregate similar behaviours, mitigate the imbalance problem and reduce noisy data. Our approach suggests the implementation of two promising deep learning paradigms for entity classification based on Graph Convolutional Networks.

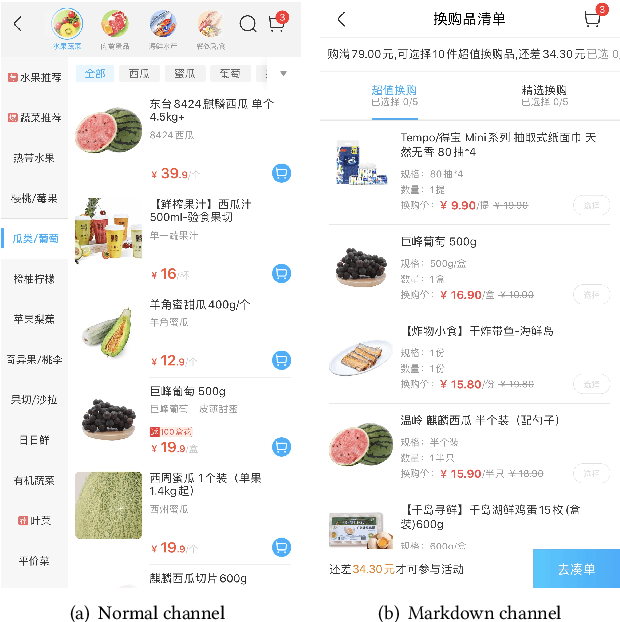

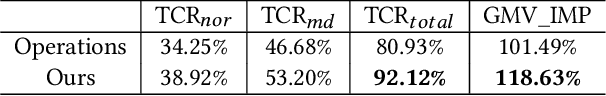

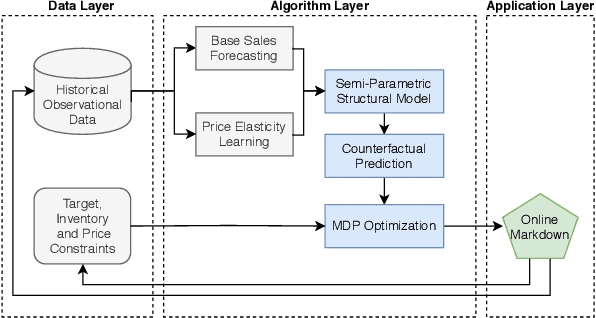

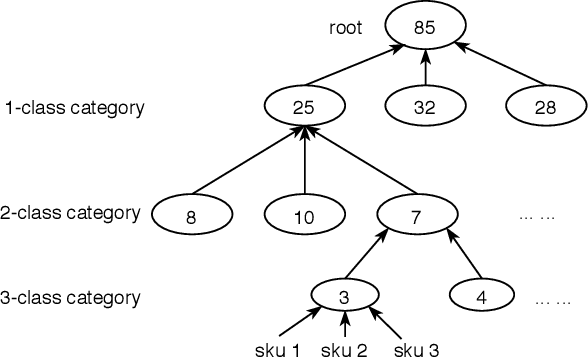

Markdowns in E-Commerce Fresh Retail: A Counterfactual Prediction and Multi-Period Optimization Approach

May 19, 2021

In this paper, by leveraging abundant observational transaction data, we propose a novel data-driven and interpretable pricing approach for markdowns, consisting of counterfactual prediction and multi-period price optimization. Firstly, we build a semi-parametric structural model to learn individual price elasticity and predict counterfactual demand. This semi-parametric model takes advantage of both the predictive power of nonparametric machine learning models and the interpretability of economic models. Secondly, we propose a multi-period dynamic pricing algorithm to maximize the overall profit of a perishable product over its finite selling horizon. Unlike traditional approaches that use deterministic demand, we model the uncertainty of counterfactual demand, since the prediction process is inevitably random. Based on the stochastic model, we derive a sequential pricing strategy using a Markov decision process, and design a two-stage algorithm to solve it. The proposed algorithm is very efficient: it reduces the time complexity from exponential to polynomial. Experimental results show the advantages of our pricing algorithm, and the proposed framework has been successfully deployed in the well-known e-commerce fresh retail scenario Freshippo.
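To make the Markov decision process view concrete, here is a minimal sketch of textbook backward induction for a finite-horizon markdown problem with stochastic demand. It is not the paper's two-stage algorithm: the function name, the toy demand distributions, and the use of revenue instead of profit are all assumptions made for illustration.

```python
def solve_markdown(horizon, stock, demand_by_price):
    """Backward induction over (period, remaining stock).

    demand_by_price maps each candidate price to a list of
    (demand, probability) outcomes (hypothetical toy data).
    """
    # value[t][s]: best expected revenue from period t onward with s units left.
    value = [[0.0] * (stock + 1) for _ in range(horizon + 1)]
    policy = [[None] * (stock + 1) for _ in range(horizon)]
    for t in range(horizon - 1, -1, -1):
        for s in range(stock + 1):
            best_price, best_value = None, float("-inf")
            for price, outcomes in demand_by_price.items():
                expected = 0.0
                for demand, prob in outcomes:
                    sold = min(demand, s)  # cannot sell more than is in stock
                    expected += prob * (price * sold + value[t + 1][s - sold])
                if expected > best_value:
                    best_price, best_value = price, expected
            value[t][s] = best_value
            policy[t][s] = best_price
    return value, policy


# Toy example: a high price sells slowly, a marked-down price sells faster.
demand = {
    10.0: [(0, 0.5), (1, 0.5)],
    6.0: [(1, 0.5), (2, 0.5)],
}
value, policy = solve_markdown(horizon=2, stock=2, demand_by_price=demand)
print(policy[0][2], policy[1][2], value[0][2])
```

The loops visit every (period, stock, price, demand outcome) combination once, so the cost of this sketch grows polynomially in those sizes rather than enumerating every price sequence.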

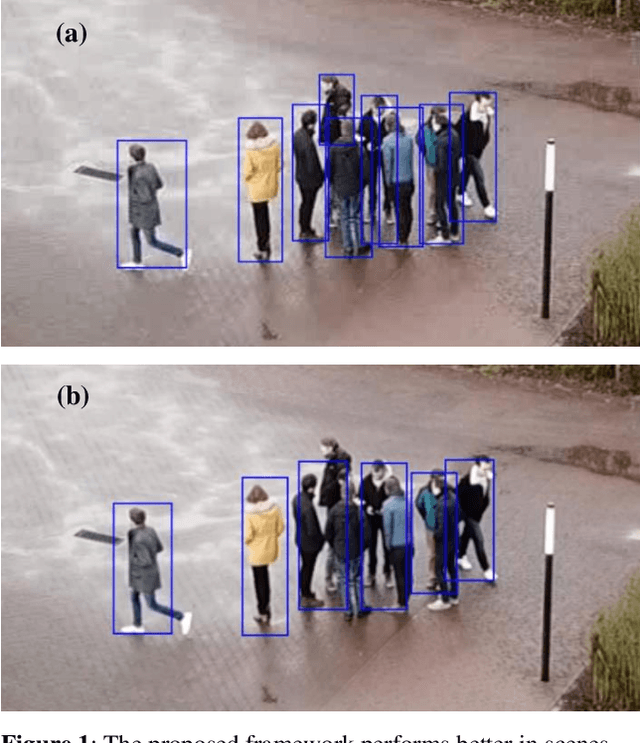

Robust Real-time Pedestrian Detection in Aerial Imagery on Jetson TX2

May 16, 2019

Detection of pedestrians in aerial imagery captured by drones has many applications including intersection monitoring, patrolling, and surveillance, to name a few. However, the problem is challenging due to the continuously changing camera viewpoint and object appearance, as well as the need for lightweight algorithms that run on on-board embedded systems. To address this issue, the paper proposes a framework for pedestrian detection in videos based on the YOLO object detection network [6] while achieving a throughput of more than 5 FPS on the Jetson TX2 embedded board. The framework exploits deep learning for robust operation and uses a pre-trained model without the need for any additional training, which makes it flexible to apply to different setups with a minimum amount of tuning. The method achieves ~81 mAP when applied to a sample video from the Embedded Real-Time Inference (ERTI) Challenge, where pedestrians are monitored by a UAV.

A Hybrid Recommender System for Recommending Smartphones to Prospective Customers

May 26, 2021

Recommender Systems are a subclass of machine learning systems that employ sophisticated information filtering strategies to reduce the search time and suggest the most relevant items to any particular user. Hybrid recommender systems combine multiple recommendation strategies in different ways to benefit from their complementary advantages. Some hybrid recommender systems have combined collaborative filtering and content-based approaches to build systems that are more robust. In this paper, we propose a hybrid recommender system, which combines Alternating Least Squares (ALS) based collaborative filtering with deep learning to enhance recommendation performance as well as overcome the limitations associated with the collaborative filtering approach, especially concerning its cold start problem. In essence, we use the outputs from ALS (collaborative filtering) to influence the recommendations from a Deep Neural Network (DNN), which combines characteristic, contextual, structural and sequential information, in a big data processing framework. We have conducted several experiments to test the efficacy of the proposed hybrid architecture in recommending smartphones to prospective customers and compared its performance with other open-source recommenders. The results show that the proposed system outperforms several existing hybrid recommender systems.
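For readers unfamiliar with the collaborative-filtering half of such a hybrid, the following is a minimal sketch of alternating least squares for a rank-1 factorization, where each rating is approximated by a user factor times an item factor. It is a toy illustration, not the paper's pipeline; the function names, the regularization value, and the ratings are assumptions.

```python
def als_rank1(ratings, n_users, n_items, reg=0.01, iters=30):
    """Rank-1 ALS on observed ratings given as {(user, item): rating}."""
    p = [1.0] * n_users  # user factors
    q = [1.0] * n_items  # item factors
    for _ in range(iters):
        # Fix item factors; each user factor has a closed-form ridge solution.
        for u in range(n_users):
            obs = [(i, r) for (uu, i), r in ratings.items() if uu == u]
            p[u] = sum(r * q[i] for i, r in obs) / (reg + sum(q[i] ** 2 for i, _ in obs))
        # Fix user factors; solve each item factor the same way.
        for i in range(n_items):
            obs = [(u, r) for (u, ii), r in ratings.items() if ii == i]
            q[i] = sum(r * p[u] for u, r in obs) / (reg + sum(p[u] ** 2 for u, _ in obs))
    return p, q


def regularised_loss(ratings, p, q, reg=0.01):
    """Squared error on observed ratings plus an L2 penalty on the factors."""
    fit = sum((r - p[u] * q[i]) ** 2 for (u, i), r in ratings.items())
    return fit + reg * (sum(x * x for x in p) + sum(x * x for x in q))


# Hypothetical ratings; user 1 has not rated item 2.
ratings = {(0, 0): 1.0, (0, 1): 2.0, (0, 2): 3.0, (1, 0): 2.0, (1, 1): 4.0}
p, q = als_rank1(ratings, n_users=2, n_items=3)
print(p[1] * q[2])  # predicted rating for the unobserved pair
```

A user or item with no observed ratings ends up with a factor of zero in this sketch, which is a small-scale picture of the cold start limitation the abstract mentions.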

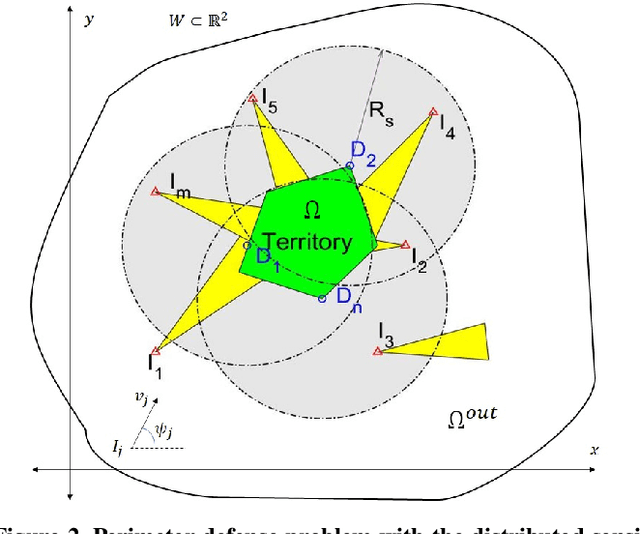

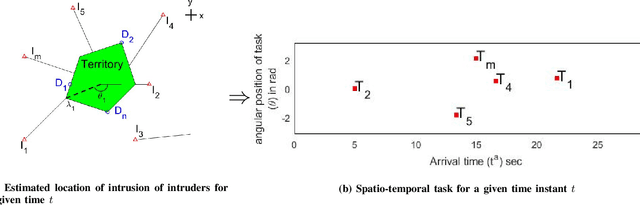

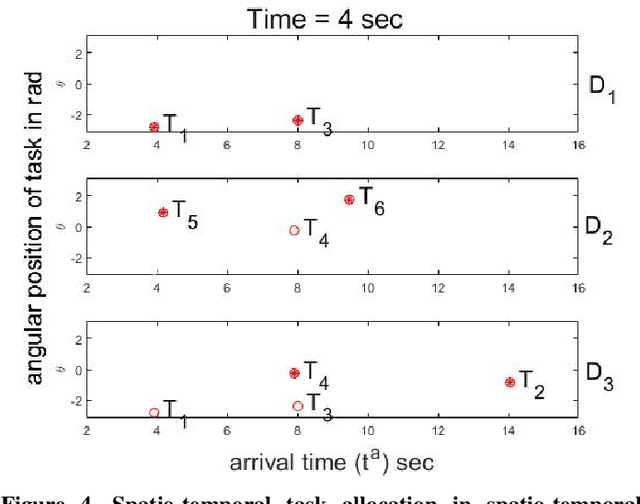

A Decentralized Multi-UAV Spatio-Temporal Multi-Task Allocation Approach for Perimeter Defense

Feb 15, 2021

This paper provides a new solution approach to a multi-player perimeter defense game, in which a team of intruders tries to enter the territory, and a team of defenders protects the territory by capturing intruders on its perimeter. The objective of the defenders is to detect and capture the intruders before they enter the territory. Each defender independently senses the intruders and computes its trajectory to capture the assigned intruders in a cooperative fashion. Each intruder is estimated to reach a specific location on the perimeter at a specific time, so it is viewed as a spatio-temporal task, and the defenders are assigned to execute these spatio-temporal tasks. At any given time, the perimeter defense problem is converted into a Decentralized Multi-UAV Spatio-Temporal Multi-Task Allocation (DMUST-MTA) problem. The cost of executing a task for a trajectory is defined by a composite cost function of both the spatial and temporal components. In this paper, a decentralized consensus-based bundle algorithm has been modified to solve the spatio-temporal multi-task allocation problem, and the performance of the proposed approach is evaluated using Monte-Carlo simulations. The simulation results show the effectiveness of the proposed approach in solving the perimeter defense game under different scenarios. A performance comparison with a state-of-the-art centralized approach with full observability indicates that DMUST-MTA achieves similar performance in a decentralized way under partial observability, with lower computational time and easier scaling.

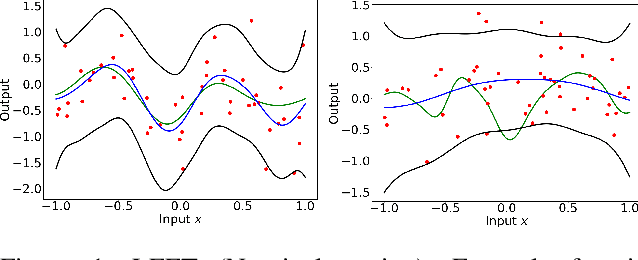

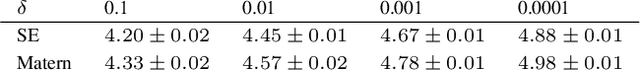

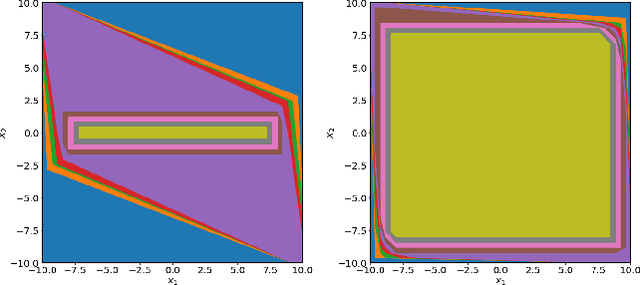

Practical and Rigorous Uncertainty Bounds for Gaussian Process Regression

May 06, 2021

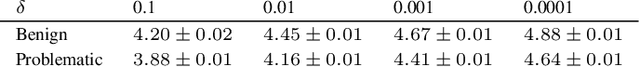

Gaussian Process Regression is a popular nonparametric regression method based on Bayesian principles that provides uncertainty estimates for its predictions. However, these estimates are of a Bayesian nature, whereas for some important applications, like learning-based control with safety guarantees, frequentist uncertainty bounds are required. Although such rigorous bounds are available for Gaussian Processes, they are too conservative to be useful in applications. This often leads practitioners to replace these bounds with heuristics, thus breaking all theoretical guarantees. To address this problem, we introduce new uncertainty bounds that are rigorous, yet practically useful at the same time. In particular, the bounds can be explicitly evaluated and are much less conservative than state-of-the-art results. Furthermore, we show that certain model misspecifications lead to only graceful degradation. We demonstrate these advantages and the usefulness of our results for learning-based control with numerical examples.
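As background for the quantities these bounds are built on, here is a minimal sketch of the standard Gaussian Process posterior mean and variance at a single test point, using only the Python standard library. It shows the usual Bayesian estimates, not the frequentist bounds proposed in the paper; the squared-exponential kernel, the noise variance, and the data are assumptions.

```python
import math


def rbf(a, b, lengthscale=1.0):
    """Squared-exponential kernel for scalar inputs (assumed kernel choice)."""
    return math.exp(-0.5 * ((a - b) / lengthscale) ** 2)


def cholesky(A):
    """Lower-triangular L with L L^T = A for a symmetric positive definite A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L


def solve_lower(L, b):
    """Forward substitution for L y = b."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y


def solve_upper_t(L, y):
    """Back substitution for L^T x = y."""
    n = len(y)
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def gp_posterior(xs, ys, x_star, noise_var=1e-2):
    """Posterior mean and variance of the latent function at x_star."""
    n = len(xs)
    K = [[rbf(xs[i], xs[j]) + (noise_var if i == j else 0.0) for j in range(n)]
         for i in range(n)]
    L = cholesky(K)
    alpha = solve_upper_t(L, solve_lower(L, ys))  # (K + noise_var * I)^{-1} y
    k_star = [rbf(x, x_star) for x in xs]
    mean = sum(k * a for k, a in zip(k_star, alpha))
    v = solve_lower(L, k_star)
    var = rbf(x_star, x_star) - sum(vi * vi for vi in v)
    return mean, max(var, 0.0)  # clamp tiny negative values from round-off


xs, ys = [0.0, 1.0, 2.0], [0.0, 1.0, 0.0]  # hypothetical training data
print(gp_posterior(xs, ys, 1.0))   # near the data: small variance
print(gp_posterior(xs, ys, 10.0))  # far from the data: variance near the prior
```

Uncertainty bands are typically formed by scaling the posterior standard deviation by some factor; choosing that factor heuristically is the practice the abstract warns about.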

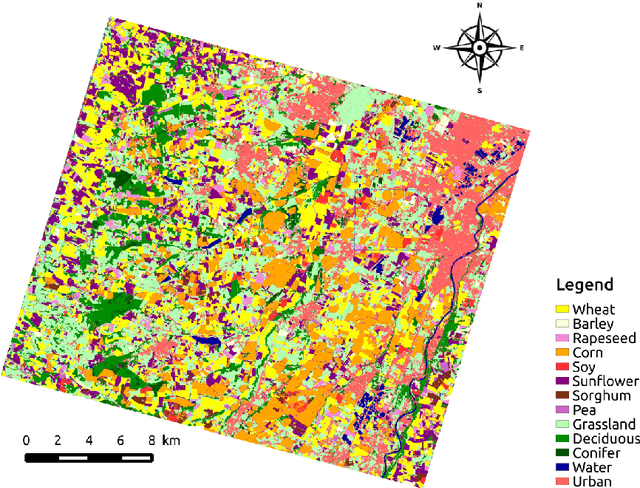

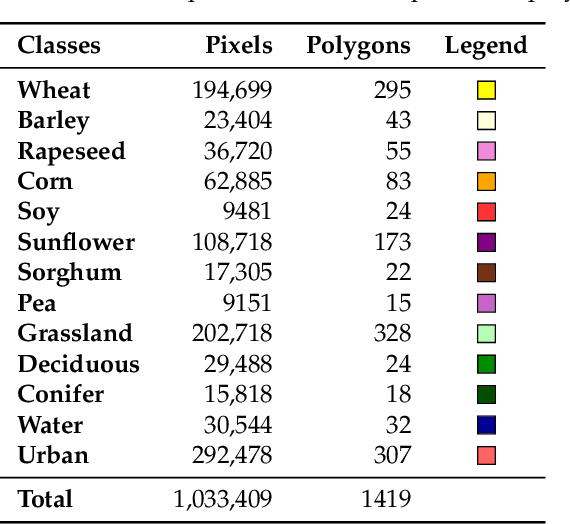

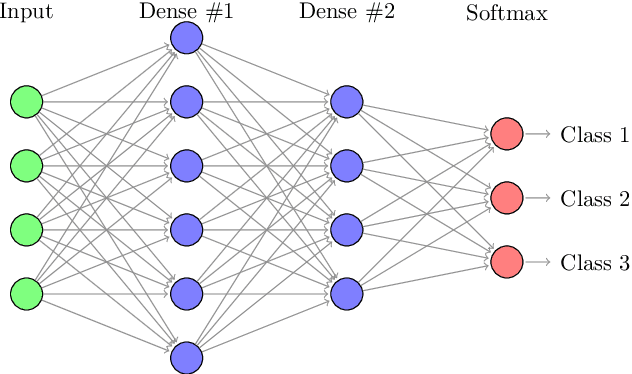

Temporal Convolutional Neural Network for the Classification of Satellite Image Time Series

Nov 26, 2018

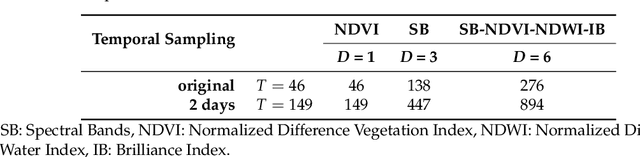

New remote sensing sensors now acquire Satellite Image Time Series (SITS) of the world with high spatial and spectral resolution. These series of images are a key component of any classification framework to obtain up-to-date and accurate land cover maps of the Earth's surface. More specifically, the combination of the temporal, spectral and spatial resolutions of new SITS enables the monitoring of vegetation dynamics. Although some traditional classification algorithms, such as Random Forest (RF), have been successfully applied to SITS classification, these algorithms do not take full advantage of the temporal domain. Conversely, deep-learning-based methods have been successfully used to make the most of sequential data such as text and audio data. For the first time, this paper explores the use of Convolutional Neural Networks (CNNs) with convolutions applied in the temporal dimension for SITS classification. The goal is to quantitatively and qualitatively evaluate the contribution of temporal CNNs to SITS classification. More precisely, this paper proposes a set of experiments performed on a million Formosat-2 time series. The experimental results show that temporal CNNs are 2 to 3% more accurate than RF. The experiments also highlight some counter-intuitive results on pooling layers: contrary to image classification, their use decreases accuracy. Moreover, we provide some general guidelines on the network architecture, common regularization mechanisms, and hyper-parameter values such as the batch size. Finally, the visual quality of the land cover maps produced by the temporal CNN is assessed.
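The core operation, a convolution applied along the time axis of a per-pixel series, can be sketched in a few lines. This is a single hand-written filter with a ReLU, not the paper's network; the function name, the kernel weights, and the example series are assumptions.

```python
def temporal_conv(series, kernel, bias=0.0):
    """Valid 1D convolution over time followed by a ReLU.

    series: list of T time steps, each a list of C band values.
    kernel: list of K time offsets, each a list of C weights.
    """
    k = len(kernel)
    out = []
    for t in range(len(series) - k + 1):
        acc = bias
        for dt in range(k):
            for c, w in enumerate(kernel[dt]):
                acc += w * series[t + dt][c]
        out.append(max(acc, 0.0))  # ReLU keeps only positive responses
    return out


# Hypothetical single-band vegetation-index series over six acquisition dates.
series = [[0.1], [0.2], [0.6], [0.8], [0.7], [0.3]]
# A hand-picked filter that responds to an increase across three dates.
rise_filter = [[-1.0], [0.0], [1.0]]
print(temporal_conv(series, rise_filter))
```

In a trained temporal CNN the filter weights are learned and many filters are stacked; the sketch applies no pooling, in line with the abstract's observation that pooling layers decrease accuracy for this task.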
