"Time": models, code, and papers

The Limit Order Book Recreation Model (LOBRM): An Extended Analysis

Jul 01, 2021

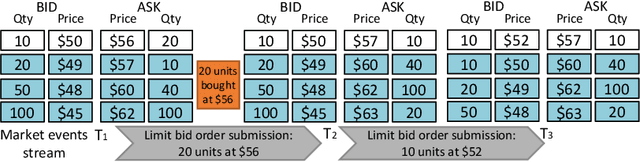

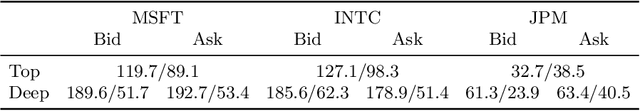

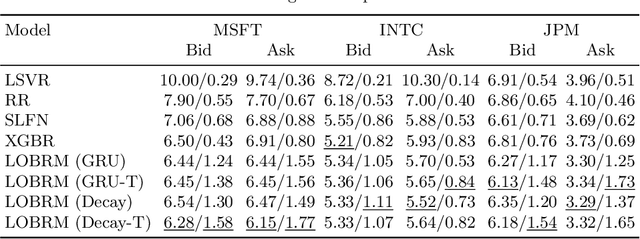

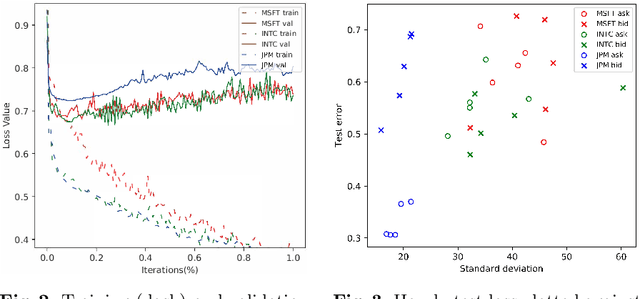

The limit order book (LOB) depicts the fine-grained demand and supply relationship for financial assets and is widely used in market microstructure studies. Nevertheless, the limited availability and high cost of LOB data restrict its wider application. The LOB recreation model (LOBRM) was recently proposed to bridge this gap by synthesizing the LOB from trades and quotes (TAQ) data. However, in the original LOBRM study, there were two limitations: (1) experiments were conducted on a relatively small dataset containing only one day of LOB data; and (2) the training and testing were performed in a non-chronological fashion, which essentially re-frames the task as interpolation and potentially introduces lookahead bias. In this study, we extend the research on LOBRM and further validate its use in real-world application scenarios. We first advance the workflow of LOBRM by (1) adding a time-weighted z-score standardization for the LOB and (2) substituting the ordinary differential equation kernel with an exponential decay kernel to lower computational complexity. Experiments are conducted on the extended LOBSTER dataset in a chronological fashion, as it would be used in a real-world application. We find that (1) LOBRM with decay kernel is superior to traditional non-linear models, and module ensembling is effective; (2) prediction accuracy is negatively related to the volatility of order volumes resting in the LOB; (3) the proposed sparse encoding method for TAQ exhibits good generalization ability and can facilitate manifold tasks; and (4) the influence of stochastic drift on prediction accuracy can be alleviated by increasing historical samples.
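The abstract does not define the time-weighted z-score, so the following is only a minimal sketch under one plausible reading: each LOB snapshot is weighted by how long it remained in force before the next update. The function name `time_weighted_zscore` and its parameters are hypothetical and are not taken from the paper.

```python
import numpy as np

def time_weighted_zscore(values, timestamps, end_time):
    """Standardize values, weighting each by the time it stayed in force.

    Assumption (not from the paper): snapshot i is valid from timestamps[i]
    until timestamps[i + 1], and the last snapshot is valid until end_time.
    Returns (z_scores, weighted_mean, weighted_std).
    """
    values = np.asarray(values, dtype=float)
    timestamps = np.asarray(timestamps, dtype=float)

    # Duration of each snapshot: time until the next update (or end_time).
    durations = np.diff(np.append(timestamps, end_time))
    if np.any(durations < 0) or durations.sum() <= 0:
        raise ValueError("timestamps must be non-decreasing and span a positive interval")

    weights = durations / durations.sum()
    mean = float(np.sum(weights * values))
    std = float(np.sqrt(np.sum(weights * (values - mean) ** 2)))

    if std == 0.0:
        # A constant series has no spread; return zeros instead of dividing by zero.
        return np.zeros_like(values), mean, std
    return (values - mean) / std, mean, std


# Volume 10 rests for 3 seconds, volume 20 for 1 second.
z, mu, sigma = time_weighted_zscore([10.0, 20.0], [0.0, 3.0], end_time=4.0)
```

Under this weighting, a short-lived volume spike contributes less to the mean and standard deviation than a level that rests in the book for a long time, which an unweighted z-score would not capture.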

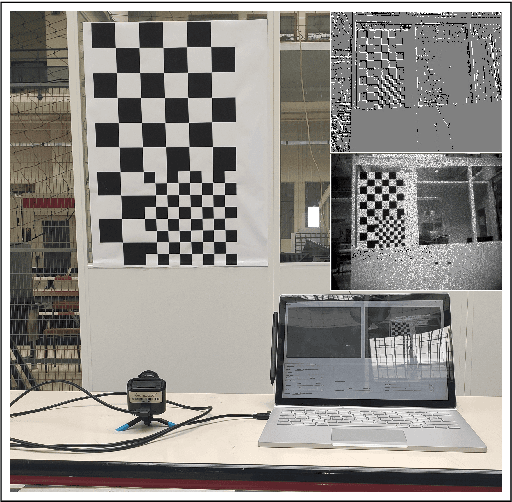

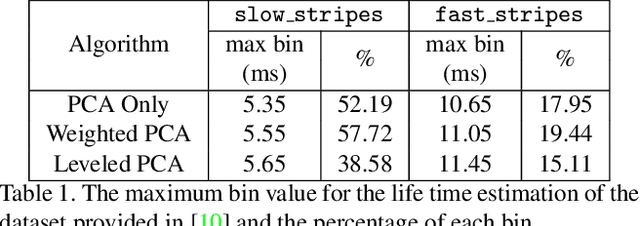

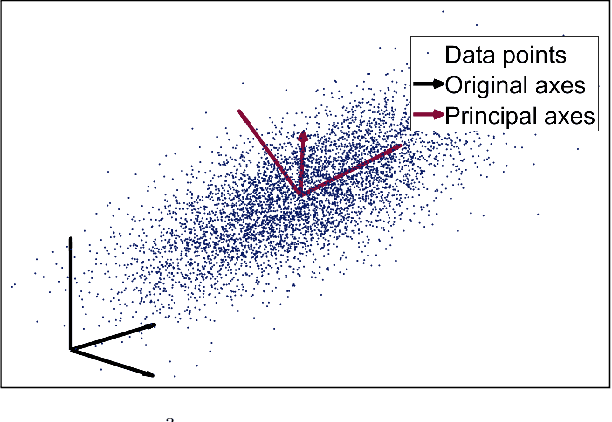

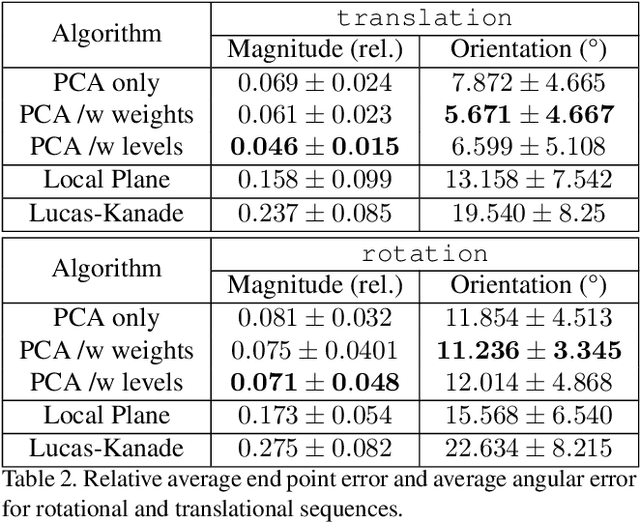

PCA Event-Based Optical Flow for Visual Odometry

May 08, 2021

With the advent of neuromorphic vision sensors such as event-based cameras, a paradigm shift is required for most computer vision algorithms. Among these algorithms, optical flow estimation is a prime candidate for this process considering that it is linked to a neuromorphic vision approach. Usage of optical flow is widespread in robotics applications due to its richness and accuracy. We present a Principal Component Analysis (PCA) approach to the problem of event-based optical flow estimation. In this approach, we examine different regularization methods which efficiently enhance the estimation of the optical flow. We show that the best variant of our proposed method, dedicated to the real-time context of visual odometry, is about twice as fast as state-of-the-art implementations while significantly improving optical flow accuracy.
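The abstract does not spell out the PCA formulation or its regularizers. As a rough illustration of the general idea, the sketch below fits a local plane to a cloud of events (x, y, t) by taking the principal direction of least variance as the plane normal, then converts the plane's slope into a normal-flow velocity. The function name `plane_fit_flow` and the thresholds are hypothetical, and no regularization is included.

```python
import numpy as np

def plane_fit_flow(events):
    """Estimate normal flow from a local neighborhood of events.

    events: array of shape (n, 3) with columns (x, y, t).
    Returns (vx, vy) in pixels per time unit.
    Assumption: the events lie close to a plane t = a*x + b*y + c.
    """
    pts = np.asarray(events, dtype=float)
    centered = pts - pts.mean(axis=0)
    cov = centered.T @ centered / len(pts)

    # eigh returns eigenvalues in ascending order, so column 0 is the
    # direction of least variance, i.e. the plane normal.
    _, eigvecs = np.linalg.eigh(cov)
    nx, ny, nt = eigvecs[:, 0]
    if abs(nt) < 1e-9:
        raise ValueError("plane is parallel to the time axis; flow is undefined")

    # Gradient of the time surface t(x, y); the sign ambiguity of the
    # normal cancels in these ratios.
    gx, gy = -nx / nt, -ny / nt
    g2 = gx * gx + gy * gy
    if g2 < 1e-12:
        raise ValueError("time surface is flat; no motion can be recovered")

    # Velocity along the gradient direction has magnitude 1 / |gradient|.
    return gx / g2, gy / g2


# Synthetic edge moving at 2 px per time unit along x: t = x / 2.
xs, ys = np.meshgrid(np.arange(5.0), np.arange(5.0))
events = np.column_stack([xs.ravel(), ys.ravel(), xs.ravel() / 2.0])
vx, vy = plane_fit_flow(events)
```

Only the component of motion normal to the edge is recoverable from a single local plane, which is one reason additional regularization of the kind the abstract mentions is typically needed.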

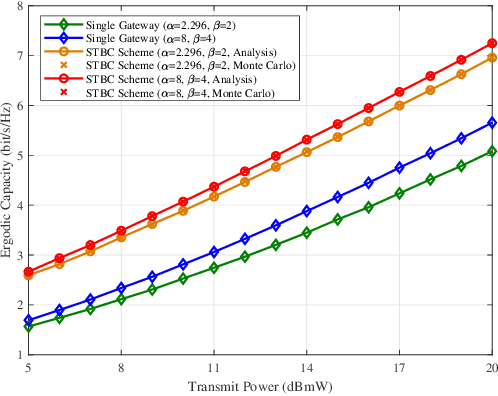

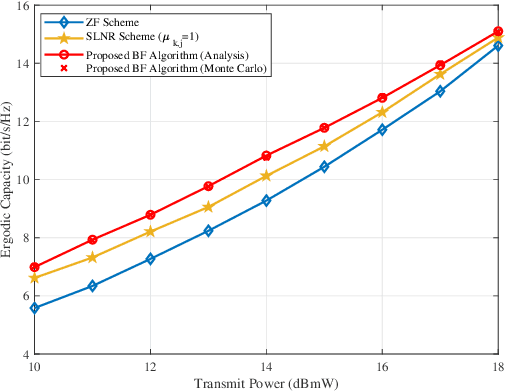

Ergodic Capacity of High Throughput Satellite Systems With Mixed FSO-RF Transmission

May 13, 2021

We study a high throughput satellite system, where the feeder link uses free-space optical (FSO) and the user link uses radio frequency (RF) communication. In particular, we first propose a transmit diversity scheme using Alamouti space-time block coding to mitigate the atmospheric turbulence in the feeder link. Then, based on the concept of average virtual signal-to-interference-plus-noise ratio and one-bit feedback, we propose a beamforming algorithm for the user link to maximize the ergodic capacity (EC). Moreover, by assuming that the FSO links follow the Malaga distribution whereas RF links undergo the shadowed-Rician fading, we derive a closed-form EC expression of the considered system. Finally, numerical simulations validate the accuracy of our theoretical analysis, and show that the proposed schemes can achieve higher capacity compared with the reference schemes.
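For readers unfamiliar with Alamouti coding, the sketch below shows the classic two-transmitter, one-receiver scheme in complex baseband and checks that linear combining recovers both symbols in the noise-free case. This is the textbook form; the optical variant and channel model used in the paper may differ. The function names and the example channel gains are hypothetical.

```python
import numpy as np

def alamouti_encode(s1, s2):
    """Return the 2x2 code matrix: rows are time slots, columns are transmitters."""
    return np.array([[s1, s2],
                     [-np.conj(s2), np.conj(s1)]])

def alamouti_combine(r1, r2, h1, h2):
    """Recover (s1, s2) from the two received samples.

    Assumption: the channel gains h1 and h2 are known at the receiver
    and stay constant over both time slots.
    """
    gain = abs(h1) ** 2 + abs(h2) ** 2
    s1_hat = (np.conj(h1) * r1 + h2 * np.conj(r2)) / gain
    s2_hat = (np.conj(h2) * r1 - h1 * np.conj(r2)) / gain
    return s1_hat, s2_hat


# Noise-free example with two QPSK-like symbols and arbitrary channel gains.
s1, s2 = 1 + 1j, -1 + 1j
h = np.array([0.8 - 0.3j, 0.2 + 0.5j])
r1, r2 = alamouti_encode(s1, s2) @ h   # received samples in slots 1 and 2
s1_hat, s2_hat = alamouti_combine(r1, r2, h[0], h[1])
```

Each recovered symbol is scaled by the sum of both channel power gains, so a deep fade on one path alone does not erase the symbol; that is the diversity benefit the abstract relies on to counter turbulence.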

How effective are Graph Neural Networks in Fraud Detection for Network Data?

May 30, 2021

Graph-based Neural Networks (GNNs) are recent models created for learning representations of nodes (and graphs), which have achieved promising results when detecting patterns that occur in large-scale data relating different entities. Among these patterns, financial fraud stands out for its socioeconomic relevance and for presenting particular challenges, such as the extreme imbalance between the positive (fraud) and negative (legitimate transactions) classes, and the concept drift (i.e., statistical properties of the data change over time). Since GNNs are based on message propagation, the representation of a node is strongly impacted by its neighbors and by the network's hubs, amplifying the imbalance effects. Recent works attempt to adapt undersampling and oversampling strategies for GNNs in order to mitigate this effect without, however, accounting for concept drift. In this work, we conduct experiments to evaluate existing techniques for detecting network fraud, considering the two previous challenges. For this, we use real data sets, complemented by synthetic data created from a new methodology introduced here. Based on this analysis, we propose a series of improvement points that should be investigated in future research.

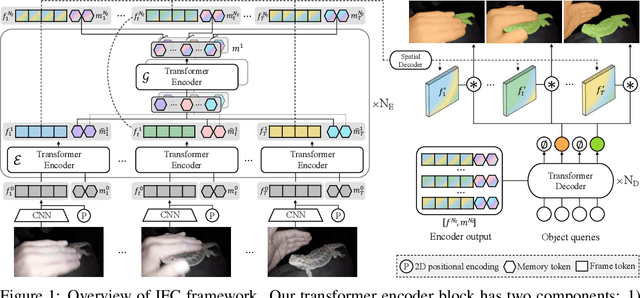

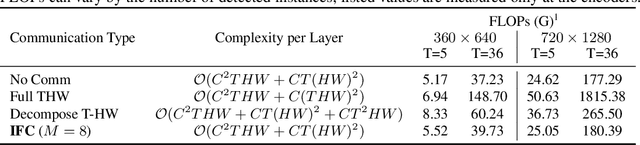

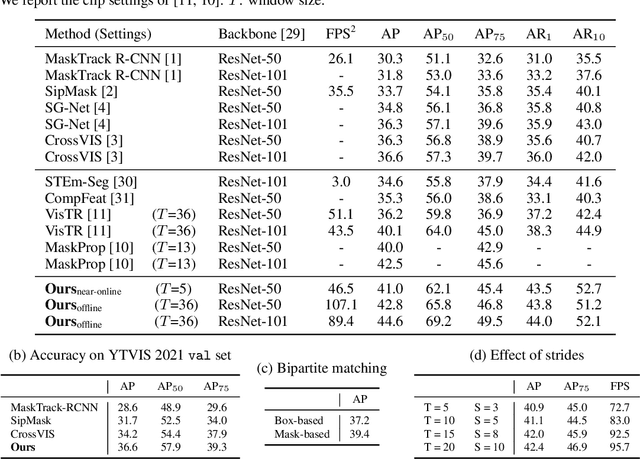

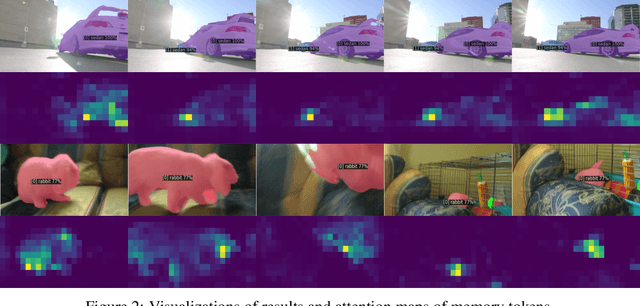

Video Instance Segmentation using Inter-Frame Communication Transformers

Jun 07, 2021

We propose a novel end-to-end solution for video instance segmentation (VIS) based on transformers. Recently, the per-clip pipeline has shown superior performance over per-frame methods by leveraging richer information from multiple frames. However, previous per-clip models require heavy computation and memory usage to achieve frame-to-frame communications, limiting practicality. In this work, we propose Inter-frame Communication Transformers (IFC), which significantly reduce the overhead for information-passing between frames by efficiently encoding the context within the input clip. Specifically, we propose to utilize concise memory tokens as a means of conveying information as well as summarizing each frame scene. The features of each frame are enriched and correlated with other frames through exchange of information between the precisely encoded memory tokens. We validate our method on the latest benchmark sets and achieve state-of-the-art performance (AP 44.6 on YouTube-VIS 2019 val set using the offline inference) while having a considerably fast runtime (89.4 FPS). Our method can also be applied to near-online inference for processing a video in real-time with only a small delay. The code will be made available.

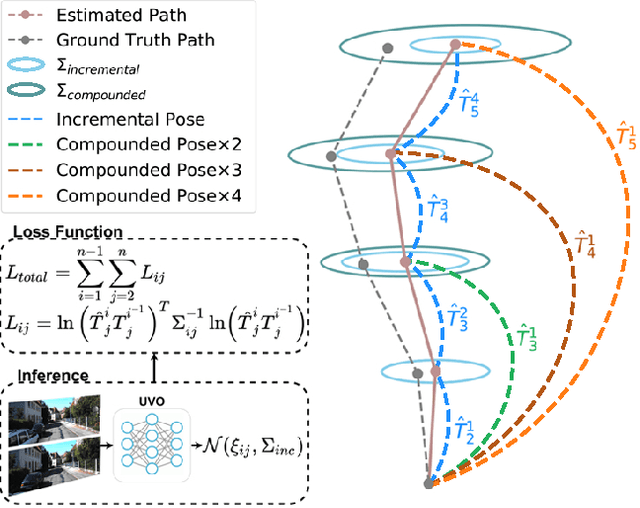

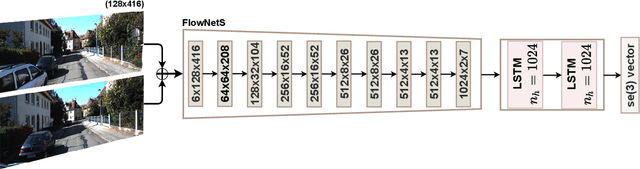

A Consistency-Based Loss for Deep Odometry Through Uncertainty Propagation

Jul 01, 2021

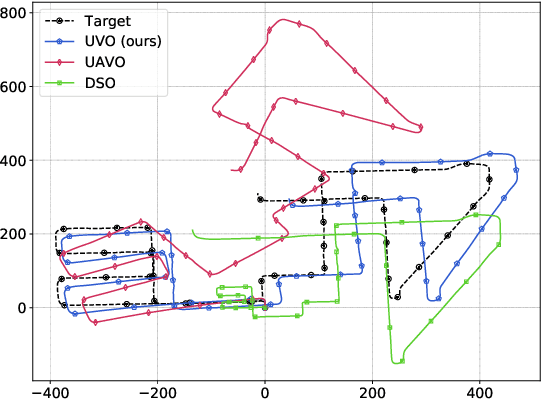

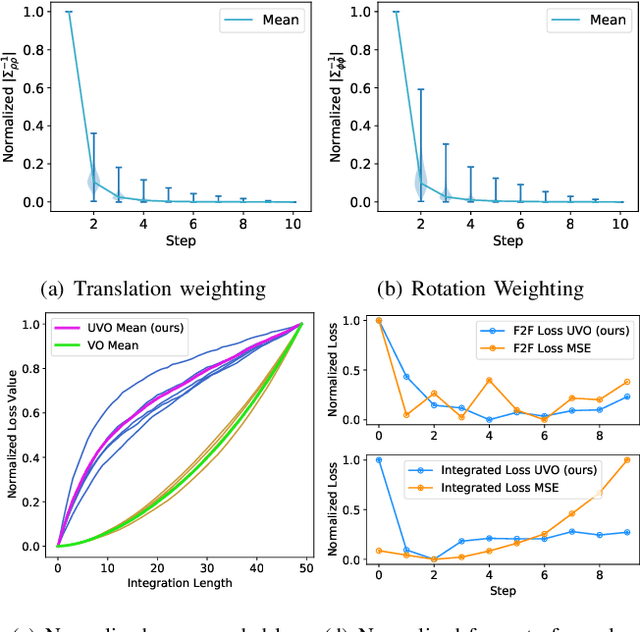

The incremental poses computed through odometry can be integrated over time to calculate the pose of a device with respect to an initial location. The resulting global pose may be used to formulate a second, consistency based, loss term in a deep odometry setting. In such cases where multiple losses are imposed on a network, the uncertainty over each output can be derived to weigh the different loss terms in a maximum likelihood setting. However, when imposing a constraint on the integrated transformation, due to how only odometry is estimated at each iteration of the algorithm, there is no information about the uncertainty associated with the global pose to weigh the global loss term. In this paper, we associate uncertainties with the output poses of a deep odometry network and propagate the uncertainties through each iteration. Our goal is to use the estimated covariance matrix at each incremental step to weigh the loss at the corresponding step while weighting the global loss term using the compounded uncertainty. This formulation provides an adaptive method to weigh the incremental and integrated loss terms against each other, noting the increase in uncertainty as new estimates arrive. We provide quantitative and qualitative analysis of pose estimates and show that our method surpasses the accuracy of the state-of-the-art Visual Odometry approaches. Then, uncertainty estimates are evaluated and comparisons against fixed baselines are provided. Finally, the uncertainty values are used in a realistic example to show the effectiveness of uncertainty quantification for localization.
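To make the idea of propagating uncertainty through integrated odometry concrete, the following sketch compounds planar poses (x, y, theta) and their covariances with first-order (Jacobian-based) propagation. It is a simplified 2D illustration that assumes independent errors between steps; the paper's network outputs, pose parameterization, and loss weighting are not reproduced here, and the function name `compound` is hypothetical.

```python
import numpy as np

def compound(pose_a, cov_a, pose_b, cov_b):
    """Compose global pose A with incremental pose B and propagate covariance.

    Poses are (x, y, theta); B is expressed in the frame of A.
    Assumption: the errors of A and B are independent, so the cross terms vanish.
    """
    xa, ya, ta = pose_a
    xb, yb, tb = pose_b
    c, s = np.cos(ta), np.sin(ta)

    pose = np.array([xa + c * xb - s * yb,
                     ya + s * xb + c * yb,
                     ta + tb])

    # Jacobian of the composed pose with respect to A.
    J_a = np.array([[1.0, 0.0, -s * xb - c * yb],
                    [0.0, 1.0,  c * xb - s * yb],
                    [0.0, 0.0,  1.0]])
    # Jacobian of the composed pose with respect to B.
    J_b = np.array([[c,  -s,  0.0],
                    [s,   c,  0.0],
                    [0.0, 0.0, 1.0]])

    cov = J_a @ cov_a @ J_a.T + J_b @ cov_b @ J_b.T
    return pose, cov


# Integrate three identical forward steps starting from a perfectly known origin.
step = np.array([1.0, 0.0, 0.0])
step_cov = np.diag([0.01, 0.01, 0.001])
pose, cov = np.zeros(3), np.zeros((3, 3))
traces = []
for _ in range(3):
    pose, cov = compound(pose, cov, step, step_cov)
    traces.append(float(np.trace(cov)))
```

The trace of the compounded covariance grows with every step, which matches the abstract's remark that uncertainty in the integrated pose increases as new estimates arrive; a global loss term weighted by the inverse of this covariance would therefore count for less over longer horizons.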

Tails: Chasing Comets with the Zwicky Transient Facility and Deep Learning

Feb 26, 2021

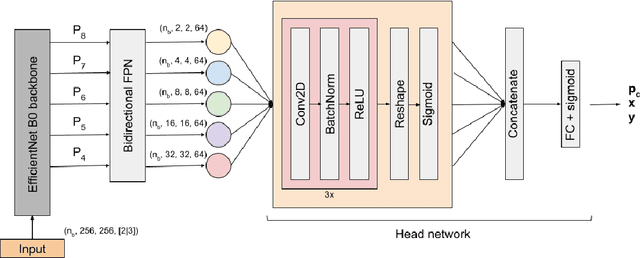

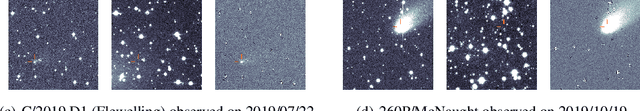

We present Tails, an open-source deep-learning framework for the identification and localization of comets in the image data of the Zwicky Transient Facility (ZTF), a robotic optical time-domain survey currently in operation at the Palomar Observatory in California, USA. Tails employs a custom EfficientDet-based architecture and is capable of finding comets in single images in near real time, rather than requiring multiple epochs as with traditional methods. The system achieves state-of-the-art performance with 99% recall, 0.01% false positive rate, and 1-2 pixel root mean square error in the predicted position. We report the initial results of the Tails efficiency evaluation in a production setting on the data of the ZTF Twilight survey, including the first AI-assisted discovery of a comet (C/2020 T2) and the recovery of a comet (P/2016 J3 = P/2021 A3).

Synchronization of Tree Parity Machines using non-binary input vectors

Apr 22, 2021

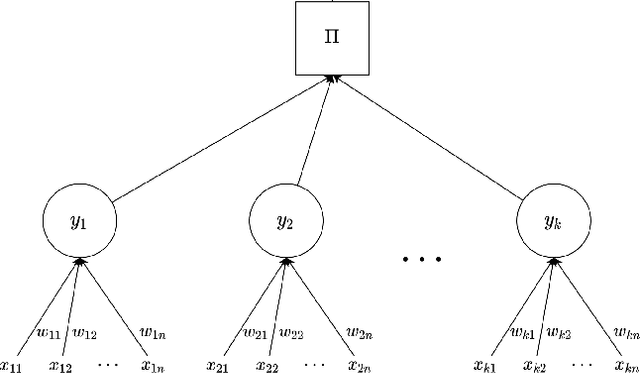

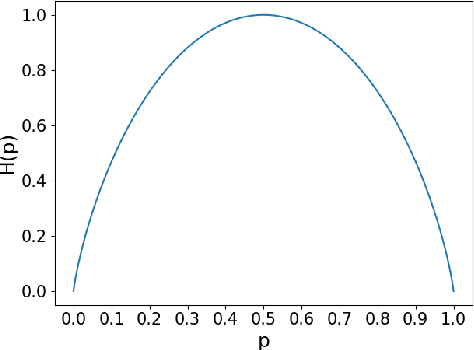

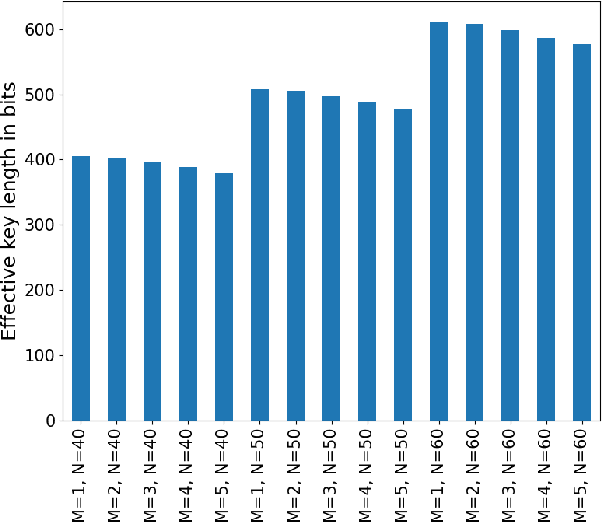

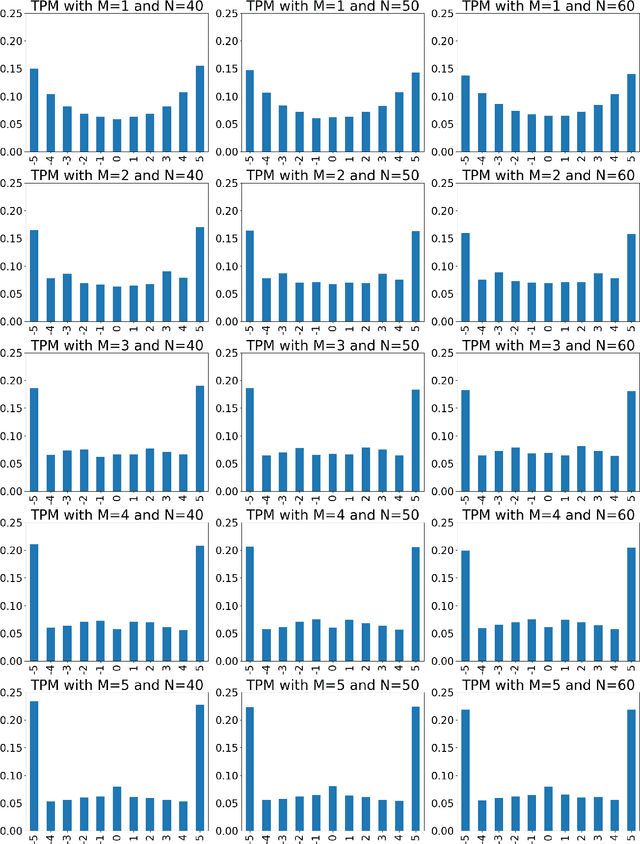

Neural cryptography is the application of artificial neural networks in the subject of cryptography. The functionality of this solution is based on a tree parity machine. It uses artificial neural networks to perform secure key exchange between network entities. This article proposes improvements to the synchronization of two tree parity machines. The improvement is based on training the artificial neural network using input vectors which have a wider range of values than binary ones. As a result, the duration of the synchronization process is reduced. Therefore, tree parity machines achieve common weights in a shorter time due to the reduction of necessary bit exchanges. This approach improves the security of neural cryptography.
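The synchronization procedure described above can be sketched as follows. This is a minimal tree parity machine with the standard Hebbian learning rule, where inputs are drawn from {-M, ..., -1, 1, ..., M} instead of {-1, 1}. The exclusion of zero, the parameter values, and all names (`TreeParityMachine`, `random_input`, `synchronize`) are assumptions for illustration, not details confirmed by the abstract.

```python
import numpy as np

class TreeParityMachine:
    """K hidden units, N inputs per unit, integer weights bounded by [-L, L]."""

    def __init__(self, k, n, l, rng):
        self.k, self.n, self.l = k, n, l
        self.w = rng.integers(-l, l + 1, size=(k, n))

    def output(self, x):
        # Hidden unit outputs: sign of the local field, with 0 mapped to -1.
        fields = np.sum(self.w * x, axis=1)
        self.sigma = np.where(fields > 0, 1, -1)
        self.tau = int(np.prod(self.sigma))
        return self.tau

    def update(self, x, tau_other):
        # Hebbian rule: learn only when both machines agree, and only in
        # hidden units whose output matches the overall output.
        if self.tau != tau_other:
            return
        for i in range(self.k):
            if self.sigma[i] == self.tau:
                self.w[i] = np.clip(self.w[i] + x[i] * self.tau, -self.l, self.l)

def random_input(k, n, m, rng):
    """Draw inputs from {-m, ..., -1, 1, ..., m} (zero excluded by assumption)."""
    values = np.concatenate([np.arange(-m, 0), np.arange(1, m + 1)])
    return rng.choice(values, size=(k, n))

def synchronize(k=3, n=10, l=3, m=2, max_steps=100_000, seed=0):
    """Run mutual learning; return the step at which weights match, or None."""
    rng = np.random.default_rng(seed)
    a = TreeParityMachine(k, n, l, rng)
    b = TreeParityMachine(k, n, l, rng)
    for step in range(1, max_steps + 1):
        x = random_input(k, n, m, rng)
        tau_a, tau_b = a.output(x), b.output(x)
        a.update(x, tau_b)
        b.update(x, tau_a)
        if np.array_equal(a.w, b.w):
            return step
    return None
```

Setting `m=1` reduces `random_input` to the usual binary case, so the same code can be used to compare synchronization times for binary and non-binary inputs. Only the single output bit of each machine is exchanged per step, which is why fewer steps translates into fewer exchanged bits.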

Preventing Unauthorized Use of Proprietary Data: Poisoning for Secure Dataset Release

Feb 16, 2021

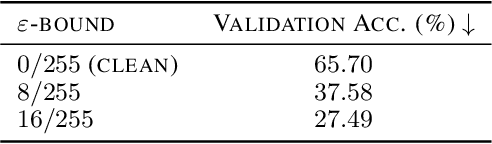

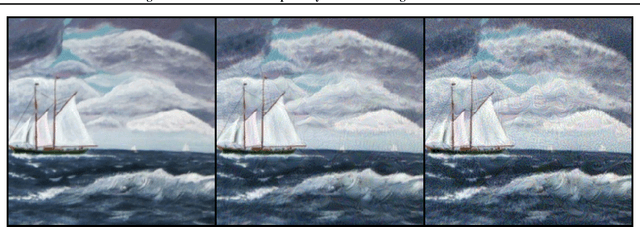

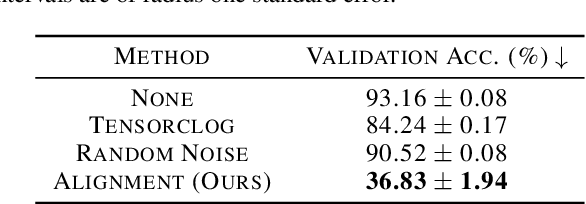

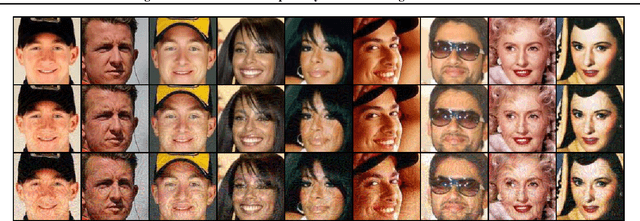

Large organizations such as social media companies continually release data, for example user images. At the same time, these organizations leverage their massive corpora of released data to train proprietary models that give them an edge over their competitors. These two behaviors can be in conflict as an organization wants to prevent competitors from using their own data to replicate the performance of their proprietary models. We solve this problem by developing a data poisoning method by which publicly released data can be minimally modified to prevent others from training models on it. Moreover, our method can be used in an online fashion so that companies can protect their data in real time as they release it. We demonstrate the success of our approach on ImageNet classification and on facial recognition.

A Hybrid APM-CPGSO Approach for Constraint Satisfaction Problem Solving: Application to Remote Sensing

Jun 06, 2021

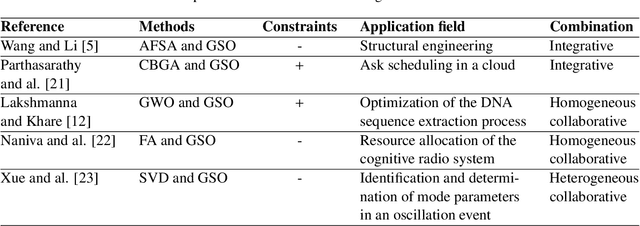

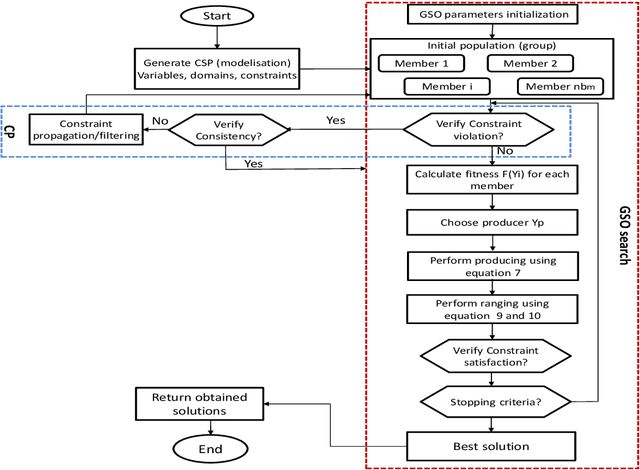

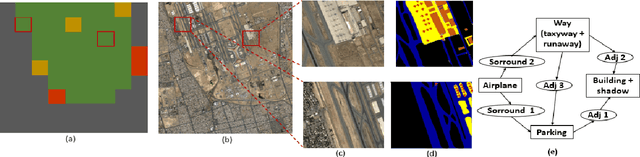

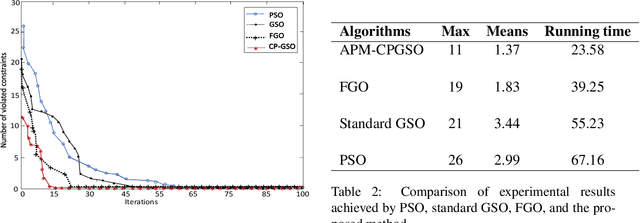

Constraint satisfaction problem (CSP) has been actively used for modeling and solving a wide range of complex real-world problems. However, it has been proven that developing efficient methods for solving CSP, especially for large problems, is very difficult and challenging. Existing complete methods for problem-solving are in most cases unsuitable. Therefore, proposing hybrid CSP-based methods for problem-solving has been of increasing interest in the last decades. This paper aims at proposing a novel approach that combines incomplete and complete CSP methods for problem-solving. The proposed approach takes advantage of the group search algorithm (GSO) and the constraint propagation (CP) methods to solve problems related to the remote sensing field. To the best of our knowledge, this paper represents the first study that proposes a hybridization between an improved version of GSO and CP in the resolution of complex constraint-based problems. Experiments have been conducted for the resolution of object recognition problems in satellite images. Results show good performances in terms of convergence and running time of the proposed CSP-based method compared to existing state-of-the-art methods.
