"Time": models, code, and papers

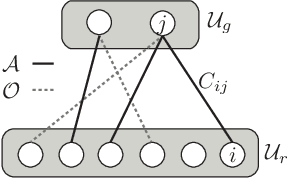

Medical Concept Embedding with Time-Aware Attention

Jun 06, 2018

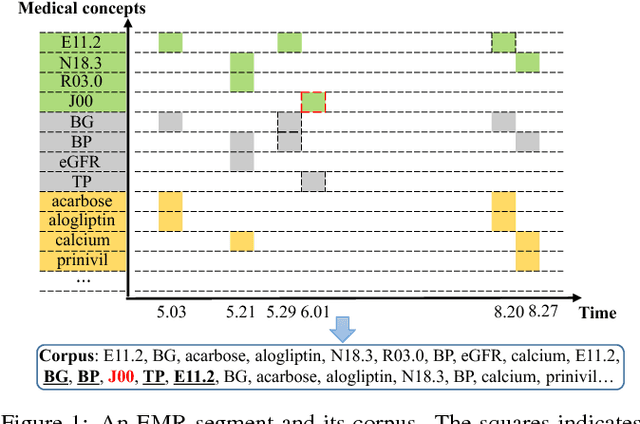

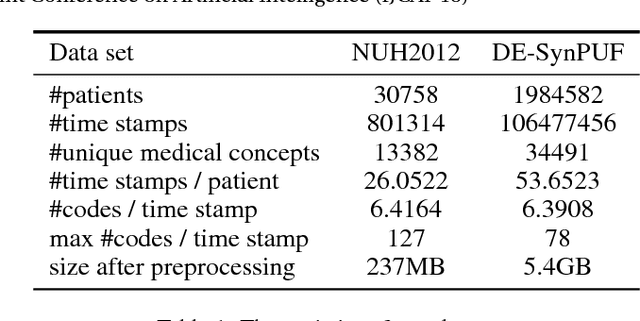

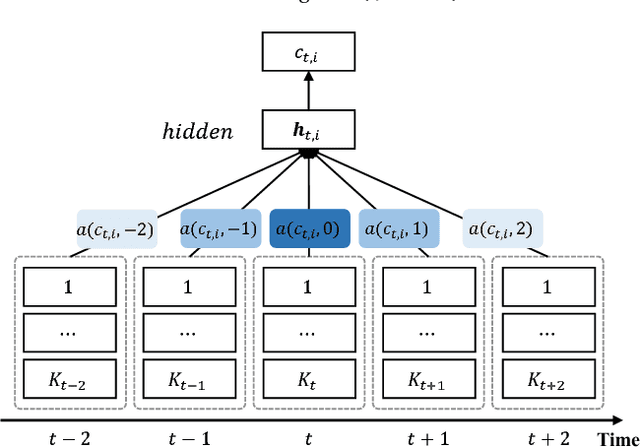

Embeddings of medical concepts such as medication, procedure and diagnosis codes in Electronic Medical Records (EMRs) are central to healthcare analytics. Previous work on medical concept embedding treats medical concepts and EMRs as words and documents, respectively. However, such models miss the temporal nature of EMR data. On the one hand, two consecutive medical concepts are not necessarily close in time, and the time gap between them can reveal how they are correlated. On the other hand, the temporal scopes of medical concepts often vary greatly (e.g., *common cold* and *diabetes*). In this paper, we propose to incorporate temporal information into the embedding of medical codes. Based on the Continuous Bag-of-Words (CBOW) model, we employ an attention mechanism to learn a "soft" time-aware context window for each medical concept. Experiments on public and proprietary datasets through clustering and nearest-neighbour search tasks demonstrate the effectiveness of our model, which outperforms five state-of-the-art baselines.
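
As a rough illustration of a "soft" time-aware context window, the sketch below computes an attention-weighted CBOW context vector. It assumes that time gaps are bucketed into discrete bins and that each target concept has one learned score per bin; the bucket edges, the function names (`gap_bin`, `time_aware_context`, `predict_probs`) and the toy embeddings are hypothetical, not the paper's parametrization, and training is omitted (only the forward pass is shown).

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def gap_bin(days, edges=(1, 7, 30, 365)):
    """Map a time gap in days to a bucket index (the bucket edges are assumptions)."""
    for i, edge in enumerate(edges):
        if days < edge:
            return i
    return len(edges)

def time_aware_context(target, context, in_emb, gap_scores):
    """Attention-weighted CBOW context vector for one target concept.

    context: list of (code, gap_in_days) pairs around the target.
    in_emb: code -> input embedding (list of floats).
    gap_scores: target code -> one score per gap bucket (learned in a real model).
    """
    scores = [gap_scores[target][gap_bin(gap)] for _, gap in context]
    weights = softmax(scores)  # soft window: weights over context items sum to 1
    dim = len(next(iter(in_emb.values())))
    vec = [0.0] * dim
    for w, (code, _) in zip(weights, context):
        for k in range(dim):
            vec[k] += w * in_emb[code][k]
    return vec, weights

def predict_probs(context_vec, out_emb):
    """CBOW output layer: softmax over dot products with the output embeddings."""
    codes = sorted(out_emb)
    logits = [sum(a * b for a, b in zip(context_vec, out_emb[c])) for c in codes]
    return dict(zip(codes, softmax(logits)))
```

With hypothetical scores that favour long gaps for a chronic concept such as diabetes, a code recorded 400 days away can receive more weight than one recorded half a day away, which a fixed-size CBOW window cannot express.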

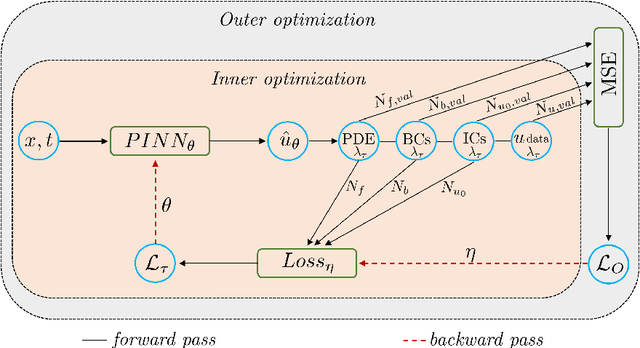

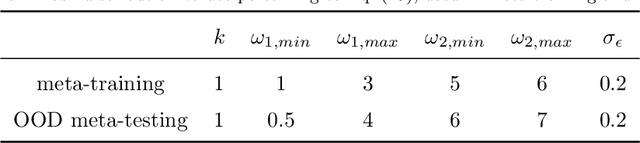

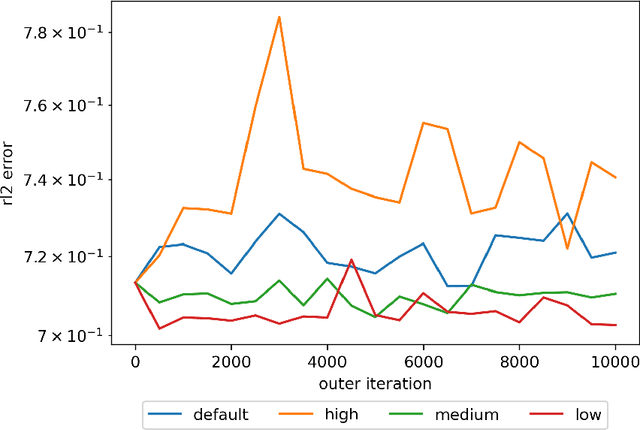

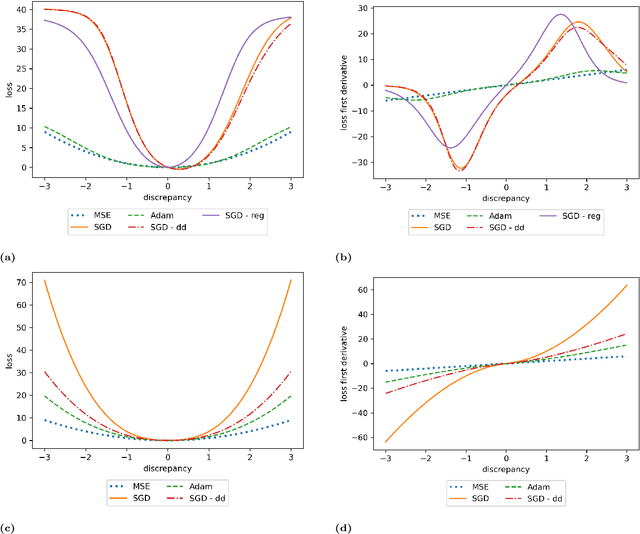

Meta-learning PINN loss functions

Jul 12, 2021

We propose a meta-learning technique for offline discovery of physics-informed neural network (PINN) loss functions. We extend earlier work on meta-learning and develop a gradient-based meta-learning algorithm for addressing diverse task distributions based on parametrized partial differential equations (PDEs) that are solved with PINNs. Furthermore, based on new theory, we identify two desirable properties of meta-learned losses in PINN problems, which we enforce by proposing a new regularization method or by using a specific parametrization of the loss function. In the computational examples, the meta-learned losses are employed at test time for addressing regression and PDE task distributions. Our results indicate that significant performance improvement can be achieved by using an offline-learned loss function shared among tasks, even for out-of-distribution meta-testing. In this setting, we solve test tasks that do not belong to the task distribution used in meta-training, and we also employ PINN architectures that differ from the one used in meta-training. To better understand the capabilities and limitations of the proposed method, we consider various parametrizations of the loss function and describe different algorithm design options and how they may affect meta-learning performance.
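
The abstract mentions regression task distributions. As a toy analogue only (not a PINN, and not the paper's algorithm or loss parametrization), the sketch below meta-learns a single loss parameter `theta` of a hypothetical loss `exp(theta) * MSE`, shared across linear-regression tasks y = a*x. The inner loop takes one plain gradient step, and the meta-gradient is estimated by central finite differences rather than by differentiating through the inner loop; all names and constants are assumptions.

```python
import math

XS = [-1.0, -0.5, 0.5, 1.0]  # shared inputs for every toy task
ALPHA = 0.1                  # fixed inner-loop learning rate

def inner_step(theta, a, w=0.0):
    """One gradient step on the parametrized loss exp(theta) * mean((w*x - a*x)^2)."""
    grad = math.exp(theta) * sum(2 * (w * x - a * x) * x for x in XS) / len(XS)
    return w - ALPHA * grad

def task_error(w, a):
    """Plain MSE, used as the meta-objective (the 'true' performance measure)."""
    return sum((w * x - a * x) ** 2 for x in XS) / len(XS)

def meta_loss(theta, tasks):
    """Average post-adaptation error over a batch of tasks (slopes a)."""
    return sum(task_error(inner_step(theta, a), a) for a in tasks) / len(tasks)

def meta_train(tasks, theta=0.0, lr=0.5, steps=500, h=1e-5):
    """Gradient descent on theta with a central finite-difference meta-gradient."""
    for _ in range(steps):
        g = (meta_loss(theta + h, tasks) - meta_loss(theta - h, tasks)) / (2 * h)
        theta -= lr * g
    return theta
```

Meta-training on slopes `[0.5, 1.0, 1.5, 2.0]` drives `theta` toward `log(8)`, at which point the single inner step solves any task in this family exactly, so a meta-test slope outside the training range (for example `a = 3.0`) is still recovered. Real PINN losses would not behave this simply; the example only shows the structure of an offline-learned loss shared among tasks.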

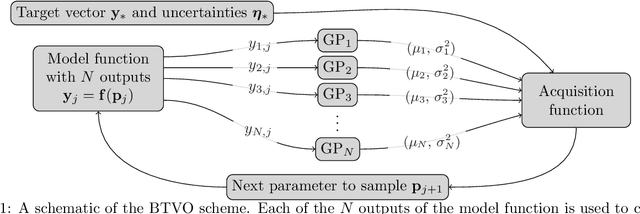

Recent advances in Bayesian optimization with applications to parameter reconstruction in optical nano-metrology

Jul 12, 2021

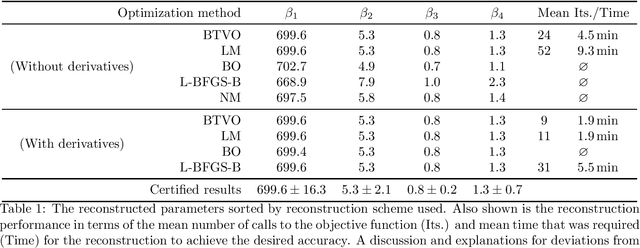

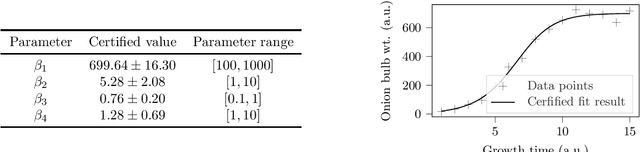

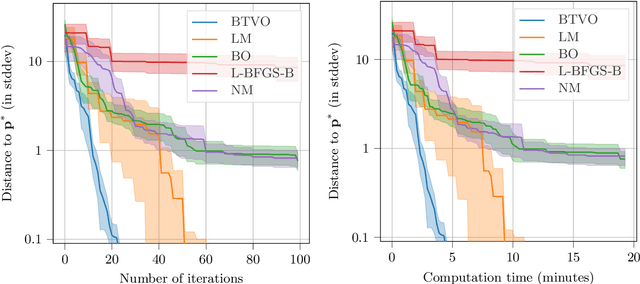

Parameter reconstruction is a common problem in optical nano-metrology. It generally involves a set of measurements to which one attempts to fit a numerical model of the measurement process. Evaluating the model typically involves solving Maxwell's equations and is thus time-consuming, which makes the reconstruction computationally demanding. Several methods exist for fitting the model to the measurements. On the one hand, Bayesian optimization methods for expensive black-box optimization enable an efficient reconstruction by training a machine learning model of the sum of squared deviations. On the other hand, curve-fitting algorithms, such as the Levenberg-Marquardt method, take into account the deviations between all model outputs and the corresponding measurement values, which enables fast local convergence. In this paper, we present a Bayesian Target Vector Optimization scheme that combines these two approaches. We compare the performance of the presented method against a standard Levenberg-Marquardt-like algorithm, a conventional Bayesian optimization scheme, and the L-BFGS-B and Nelder-Mead simplex algorithms. As a stand-in for problems from nano-metrology, we employ a nonlinear least-squares problem from the NIST Standard Reference Database. We find that the presented method generally requires fewer evaluations of the model function than any of the competing schemes to achieve similar reconstruction performance.

* Proceedings article, SPIE conference "Modeling Aspects in Optical Metrology VIII"
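
For readers unfamiliar with the curve-fitting baseline, the sketch below is a minimal Levenberg-Marquardt loop that, like the method described above, uses the full vector of residuals rather than only their squared sum. The two-parameter model `a * exp(-b * x)`, the damping schedule and all names are illustrative assumptions; this is neither the NIST benchmark problem nor the paper's Bayesian Target Vector Optimization scheme.

```python
import math

def model(p, x):
    a, b = p
    return a * math.exp(-b * x)

def jacobian_row(p, x):
    """Partial derivatives of the model with respect to (a, b) at one x."""
    a, b = p
    e = math.exp(-b * x)
    return (e, -a * x * e)

def levenberg_marquardt(xs, ys, p, lam=1e-3, iters=100, tol=1e-12):
    def sse(q):
        return sum((model(q, x) - y) ** 2 for x, y in zip(xs, ys))

    cost = sse(p)
    for _ in range(iters):
        # Accumulate J^T J (symmetric 2x2) and J^T r for residuals r = y - f(p).
        a11 = a12 = a22 = g1 = g2 = 0.0
        for x, y in zip(xs, ys):
            j1, j2 = jacobian_row(p, x)
            r = y - model(p, x)
            a11 += j1 * j1
            a12 += j1 * j2
            a22 += j2 * j2
            g1 += j1 * r
            g2 += j2 * r
        # Solve the damped normal equations (J^T J + lam * diag(J^T J)) delta = J^T r.
        m11, m22 = a11 * (1 + lam), a22 * (1 + lam)
        det = m11 * m22 - a12 * a12
        d1 = (m22 * g1 - a12 * g2) / det
        d2 = (m11 * g2 - a12 * g1) / det
        trial = (p[0] + d1, p[1] + d2)
        new_cost = sse(trial)
        if new_cost < cost:
            # Accept the step and move toward Gauss-Newton behaviour.
            converged = cost - new_cost < tol
            p, cost, lam = trial, new_cost, lam / 10
            if converged:
                break
        else:
            # Reject the step and move toward (scaled) gradient descent.
            lam *= 10
    return p, cost
```

Each iteration costs one Jacobian and one trial evaluation of the model, which is why the number of model calls, rather than wall-clock time of the optimizer itself, is the natural comparison metric when every model call means solving Maxwell's equations.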

Co-evolutionary multi-task learning for dynamic time series prediction

Jun 13, 2018

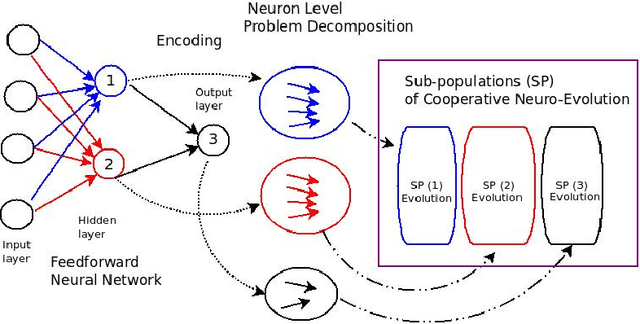

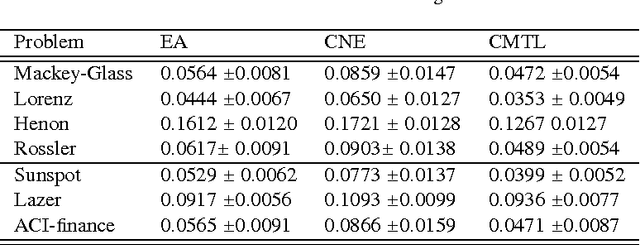

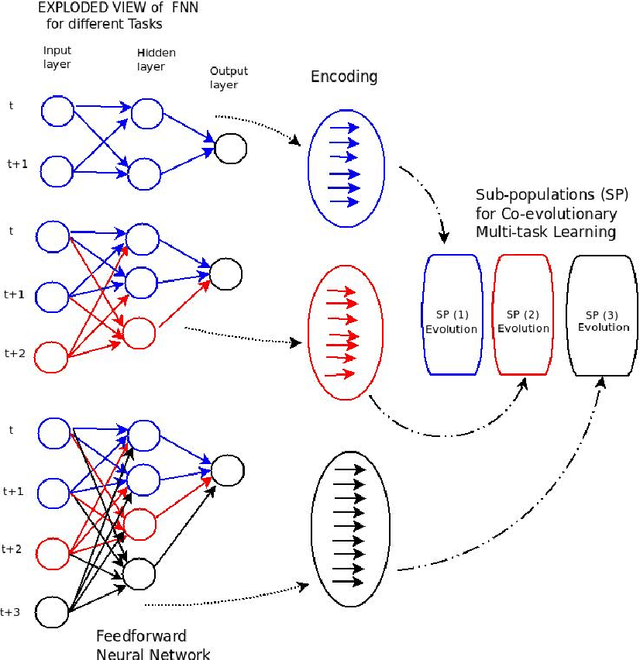

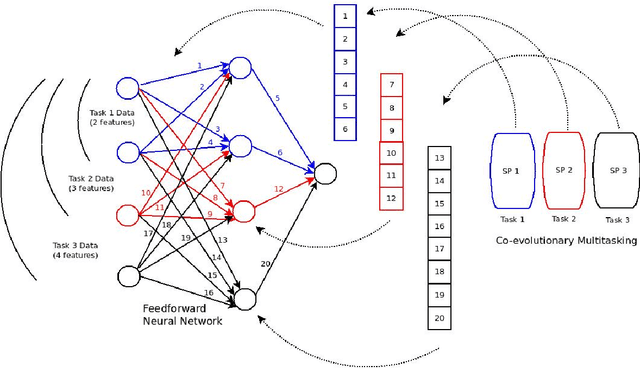

Time series prediction typically begins with a data reconstruction phase in which the time series is broken into overlapping windows, each covering a span of past values known as the timespan. The size of the timespan determines how much past information is available for an effective prediction. In certain applications, such as predicting the wind intensity of storms and cyclones, prediction models need to be dynamic and accommodate different values of the timespan. These applications require robust predictions as soon as the event takes place. We identify a new category of problem, called dynamic time series prediction, that requires a model to make predictions when presented with timespans of varying length. In this paper, we propose a co-evolutionary multi-task learning method that provides a synergy between multi-task learning and co-evolutionary algorithms to address dynamic time series prediction. The method makes effective use of building blocks of knowledge inspired by dynamic programming and multi-task learning. It enables neural networks to retain modularity during training, so that they can make decisions even when certain inputs are missing. The effectiveness of the method is demonstrated on one-step-ahead prediction of chaotic time series and of tropical cyclone wind intensity.
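
The data reconstruction phase described above can be sketched as a sliding-window embedding. The helper below builds one (window, target) dataset per timespan, for example one per subtask in a multi-task setup, and a hypothetical `pad_left` shows one simple way a fixed-size model could accept a shorter window by filling the missing inputs with a constant. All names and the zero-fill choice are assumptions, not the paper's implementation.

```python
def embed(series, timespan, horizon=1):
    """Split a series into overlapping (window, target) pairs.

    Each window holds `timespan` consecutive past values; the target is the
    value `horizon` steps after the window ends (one-step-ahead by default).
    """
    pairs = []
    for start in range(len(series) - timespan - horizon + 1):
        window = series[start:start + timespan]
        target = series[start + timespan + horizon - 1]
        pairs.append((window, target))
    return pairs

def multi_timespan_datasets(series, timespans):
    """One dataset per timespan, e.g. one per subtask in a multi-task setup."""
    return {d: embed(series, d) for d in timespans}

def pad_left(window, max_timespan, fill=0.0):
    """Hypothetical: left-pad a short window so a fixed-size model can accept it."""
    return [fill] * (max_timespan - len(window)) + list(window)
```

For example, `embed([1, 2, 3, 4, 5], 3)` yields `[([1, 2, 3], 4), ([2, 3, 4], 5)]`; shorter timespans produce more (but less informative) training pairs from the same series.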

Supermodular Optimization for Redundant Robot Assignment under Travel-Time Uncertainty

Apr 13, 2018

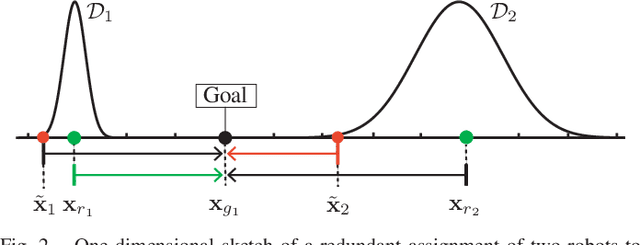

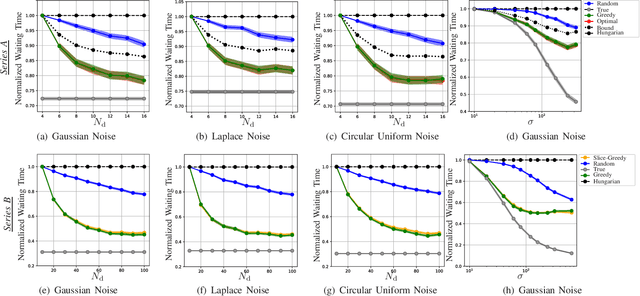

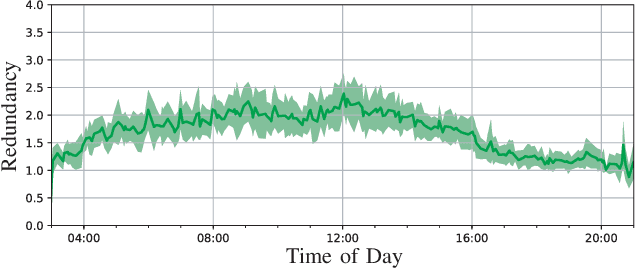

This paper considers the assignment of multiple mobile robots to goal locations under uncertain travel time estimates. Our aim is to produce optimal assignments such that the average waiting time at destinations is minimized. Our premise is that time is the most valuable asset in the system. Hence, we make use of redundant robots to counter the effect of uncertainty. Since solving the redundant assignment problem is strongly NP-hard, we exploit structural properties of our problem to propose a polynomial-time, near-optimal solution. We demonstrate that our problem can be reduced to minimizing a supermodular cost function subject to a matroid constraint. This allows us to develop a greedy algorithm, for which we derive sub-optimality bounds. A comparison with the baseline non-redundant assignment shows that redundant assignment reduces the waiting time at goals, and that this performance gap increases as noise increases. Finally, we evaluate our method on a mobility dataset (specifying vehicle availability and passenger requests) recorded in Manhattan, New York. Our algorithm runs in real time and reduces passenger waiting times when travel times are uncertain.
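
To make the greedy idea concrete, the sketch below applies a generic greedy rule under a partition-matroid constraint (each robot serves at most one goal). The cost is the average, over sampled travel-time scenarios, of the summed waiting times, where each goal waits for the earliest of its assigned robots; this min-based cost has the diminishing-returns structure of a supermodular function. The scenario sampling, the `penalty` for an unserved goal and all names are hypothetical, and the sketch does not reproduce the paper's algorithm details or its sub-optimality bounds.

```python
def waiting_cost(assignment, samples, goals, penalty):
    """Average total waiting time over sampled travel-time scenarios.

    assignment: set of (robot, goal) pairs.
    samples: list of dicts mapping (robot, goal) -> travel time in one scenario.
    A goal with no robot incurs `penalty` (a hypothetical large constant);
    otherwise it waits for the earliest-arriving robot assigned to it.
    """
    total = 0.0
    for scenario in samples:
        for g in goals:
            times = [scenario[(r, gg)] for (r, gg) in assignment if gg == g]
            total += min(times, default=penalty)
    return total / len(samples)

def greedy_redundant_assignment(robots, goals, samples, penalty=1e6):
    """Greedy under a partition matroid: each robot is assigned to at most one goal."""
    assignment, free = set(), set(robots)
    cost = waiting_cost(assignment, samples, goals, penalty)
    while free:
        best = None
        for r in sorted(free):
            for g in goals:
                c = waiting_cost(assignment | {(r, g)}, samples, goals, penalty)
                if best is None or c < best[0]:
                    best = (c, r, g)
        c, r, g = best
        if c >= cost:
            break  # no remaining (robot, goal) pair lowers the expected waiting time
        assignment.add((r, g))
        free.discard(r)
        cost = c
    return assignment, cost
```

In a toy case where two robots each reach goal `g1` quickly in one scenario and slowly in the other, the greedy rule assigns both of them to `g1`: the redundant robot is worth adding because it covers the scenario in which the first one is late.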

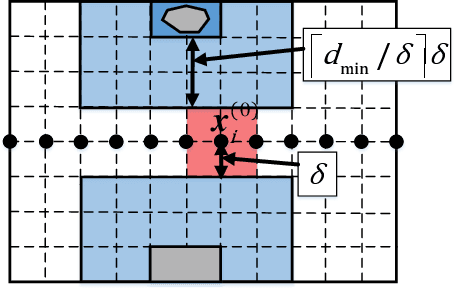

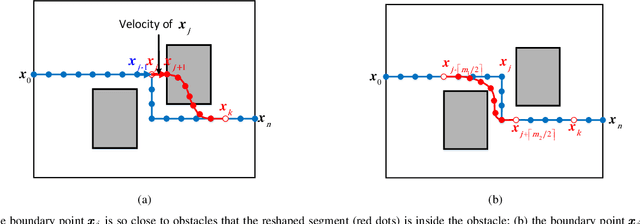

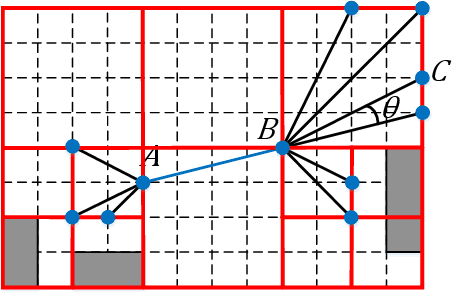

A Roadmap-Path Reshaping Algorithm for Real-Time Motion Planning

Feb 28, 2019

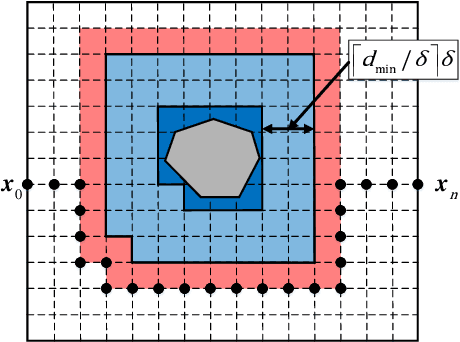

Real-time motion planning is a vital function of robotic systems. Existing roadmap algorithms first determine the free space and then determine a collision-free path. In contrast, researchers have recently proposed several convex-relaxation-based smoothing algorithms, which first select an initial path linking the start and goal configurations and then use convex relaxation to reshape this path to meet other requirements (e.g., collision-free conditions). However, these smoothing algorithms often fail to give a satisfactory path, since the initial paths are selected randomly. Moreover, existing convex-relaxation-based smoothing algorithms do not consider curvature constraints. In this paper, we first grid the whole configuration space to pick a shortest candidate path and then reshape this path to meet our goal. The new algorithm inherits the merits of both roadmap algorithms and the convex feasible set algorithm. We further discuss how to meet curvature constraints by using the Beamlet algorithm to select a better initial path and an iterative optimization algorithm to adjust the curvature of the path. Theoretical analysis and numerical tests show that the algorithm almost surely finds a feasible path and requires much less time than the recently proposed convex feasible set algorithm.
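
The first stage described above (grid the configuration space, then pick a shortest candidate path) can be sketched as a breadth-first search on a 4-connected occupancy grid. This is an assumption-laden illustration: the grid encoding (`1` = obstacle) and the function name are hypothetical, and the reshaping stage (convex feasible set, Beamlet selection, curvature adjustment) is deliberately omitted.

```python
from collections import deque

def grid_shortest_path(grid, start, goal):
    """Breadth-first search on a 4-connected occupancy grid (1 = obstacle).

    Returns the list of cells from start to goal, or None if the goal is unreachable.
    With unit step costs, BFS returns a path with the fewest cells.
    """
    rows, cols = len(grid), len(grid[0])
    parent = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0 and (nr, nc) not in parent:
                parent[(nr, nc)] = cell
                queue.append((nr, nc))
    return None
```

A path obtained this way is collision-free on the grid but piecewise axis-aligned, which is why a subsequent reshaping step is needed to smooth it and satisfy curvature constraints.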

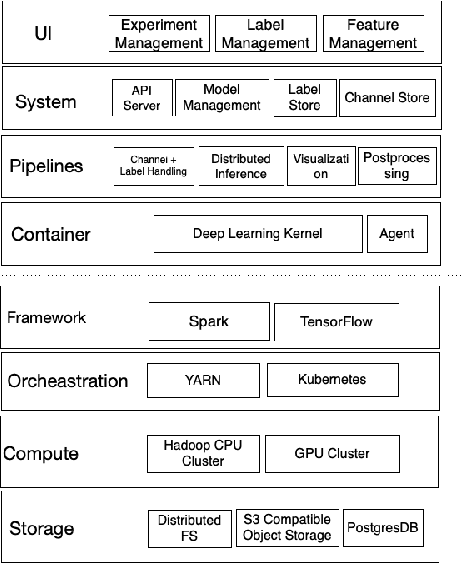

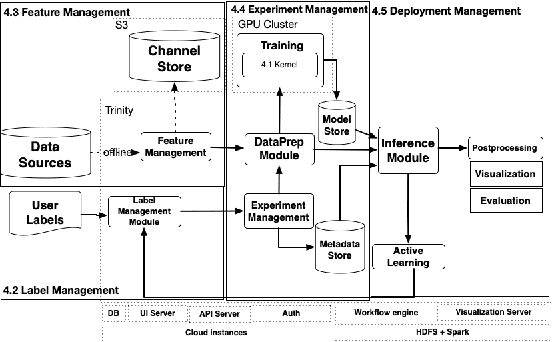

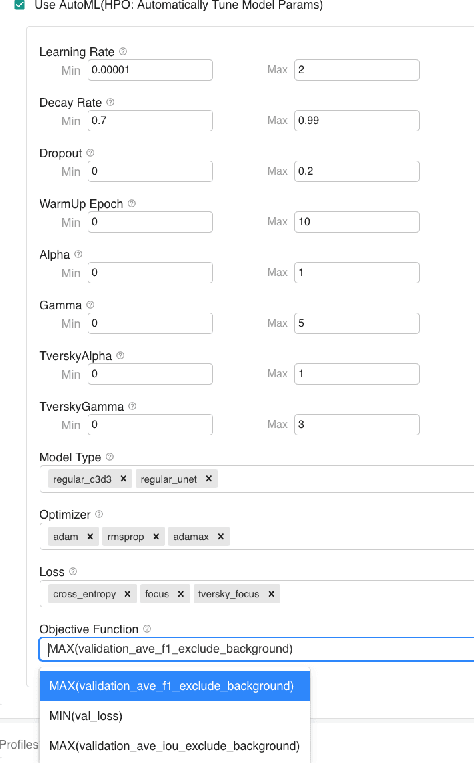

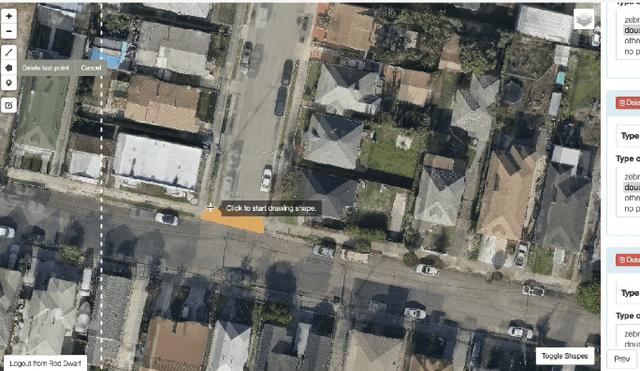

Trinity: A No-Code AI platform for complex spatial datasets

Jun 28, 2021

We present a no-code Artificial Intelligence (AI) platform called Trinity, whose main design goal is to enable both machine learning researchers and non-technical geospatial domain experts to experiment with domain-specific signals and datasets and solve a variety of complex problems on their own. This versatility is achieved by transforming complex spatio-temporal datasets so that they can be consumed by standard deep learning models, in this case Convolutional Neural Networks (CNNs), and by making it possible to formulate disparate problems in a standard way, e.g., as semantic segmentation. With an intuitive user interface, a feature store that hosts derivatives of complex feature engineering, a deep learning kernel, and a scalable data processing mechanism, Trinity provides a powerful platform for domain experts to share the stage with scientists and engineers in solving business-critical problems. It enables quick prototyping and rapid experimentation, and it reduces the time to production by standardizing model building and deployment. In this paper, we present our motivation behind Trinity and its design, and we showcase sample applications to motivate the idea of lowering the bar to using AI.
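
One common way to make point-based geospatial data consumable by a CNN is to rasterize it into image-like channels. The sketch below accumulates `(lon, lat, value)` points into a 2-D grid for a bounding box; it is a generic illustration under assumed conventions (row 0 at the northern edge, summed values per cell), not Trinity's actual transformation pipeline, and the function name is hypothetical. Stacking one such grid per time step or per signal would give a multi-channel input for a segmentation model.

```python
def rasterize(points, bounds, shape):
    """Accumulate (lon, lat, value) points into a 2-D grid channel.

    bounds = (min_lon, min_lat, max_lon, max_lat); shape = (rows, cols).
    Row 0 is the northern edge, following the usual image convention.
    """
    min_lon, min_lat, max_lon, max_lat = bounds
    rows, cols = shape
    grid = [[0.0] * cols for _ in range(rows)]
    for lon, lat, value in points:
        if not (min_lon <= lon <= max_lon and min_lat <= lat <= max_lat):
            continue  # ignore points outside the tile
        col = min(int((lon - min_lon) / (max_lon - min_lon) * cols), cols - 1)
        row = min(int((max_lat - lat) / (max_lat - min_lat) * rows), rows - 1)
        grid[row][col] += value
    return grid
```

Summing is only one aggregation choice; counts, means or maxima per cell are equally plausible depending on the signal.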

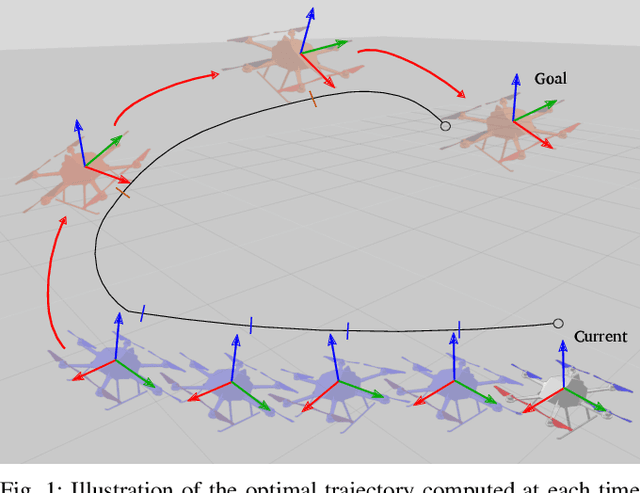

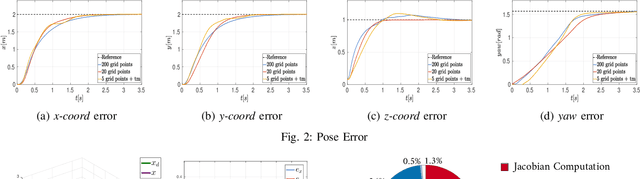

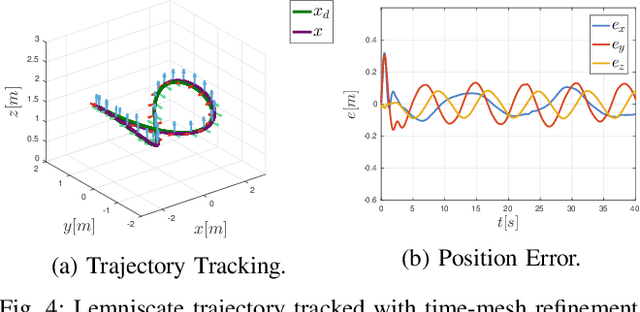

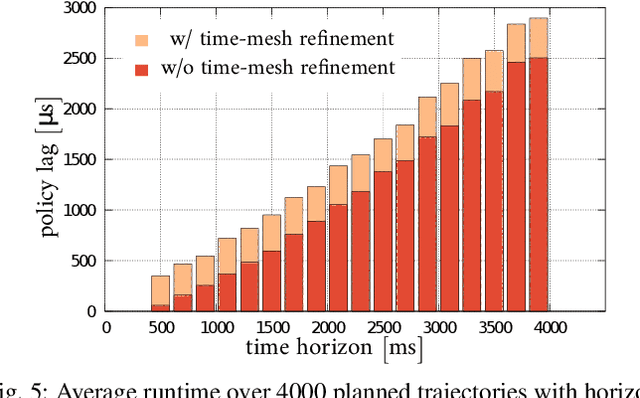

Non-Linear Model Predictive Control with Adaptive Time-Mesh Refinement

Mar 28, 2018

In this paper, we present a novel solution for real-time Non-Linear Model Predictive Control (NMPC) that exploits a time-mesh refinement strategy. The proposed controller formulates the Optimal Control Problem (OCP) in terms of flat outputs over an adaptive lattice. In common approximate OCP solutions, the number of discretization points composing the lattice is a critical limiting factor for real-time applications. The proposed NMPC-based technique refines the initially uniform time horizon by adding time steps according to a sampling criterion that aims to reduce the discretization error. This enables higher accuracy in the initial part of the receding horizon, which is more relevant to NMPC, while keeping the number of discretization points bounded. By combining this feature with an efficient least-squares formulation, our solver is also extremely time-efficient, generating trajectories several seconds long within only a few milliseconds. The performance of the proposed approach has been validated in a high-fidelity simulation environment using a UAV platform. We have also released our implementation as open-source C++ code.
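
The refinement idea (start from a uniform horizon, add time steps where the discretization error is largest, and stop at a fixed point budget) can be sketched as greedy interval bisection. The error estimate used here (deviation of the midpoint value from linear interpolation) and the optional `weight` favouring early times are assumptions for illustration; the paper's sampling criterion and its flat-output OCP formulation are not reproduced.

```python
import math

def refine_mesh(f, t_end, n_uniform, max_points, weight=lambda t: 1.0):
    """Greedy time-mesh refinement under a fixed point budget.

    Starts from a uniform grid on [0, t_end] and repeatedly bisects the interval
    whose midpoint linear-interpolation error, times a (hypothetical) weight, is
    largest, until the mesh holds `max_points` points.
    """
    mesh = [t_end * i / (n_uniform - 1) for i in range(n_uniform)]
    while len(mesh) < max_points:
        best_err, best_i = -1.0, None
        for i in range(len(mesh) - 1):
            a, b = mesh[i], mesh[i + 1]
            mid = 0.5 * (a + b)
            err = abs(f(mid) - 0.5 * (f(a) + f(b))) * weight(mid)
            if err > best_err:
                best_err, best_i = err, i
        a, b = mesh[best_i], mesh[best_i + 1]
        mesh.insert(best_i + 1, 0.5 * (a + b))  # keeps the mesh sorted
    return mesh
```

For a reference that changes quickly at the start, such as `math.exp(-5 * t)` on `[0, 2]`, most of the added points land near `t = 0`, while the total number of points stays at the chosen budget.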

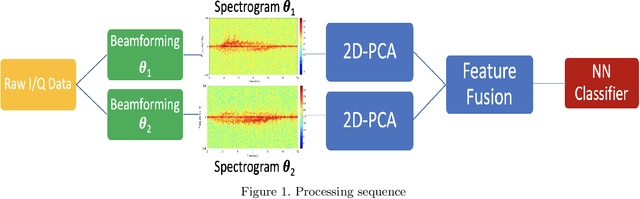

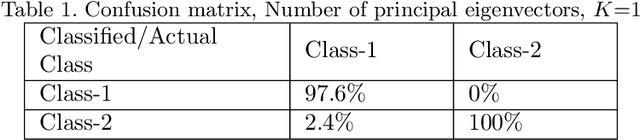

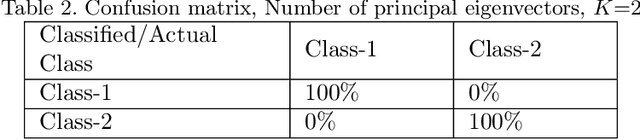

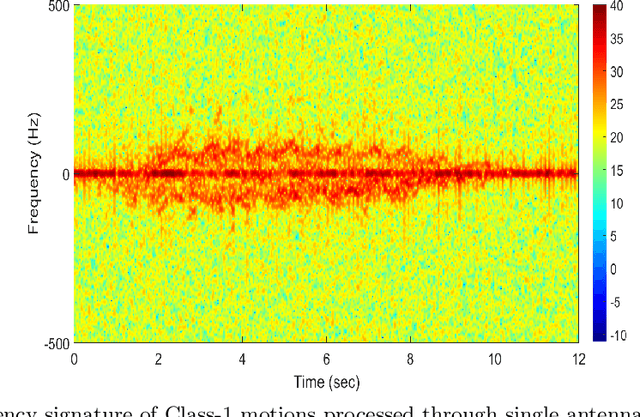

Radar Human Motion Classification Using Multi-Antenna System

Apr 01, 2021

This paper considers human activity classification for an indoor radar system. Human motions generate nonstationary radar returns that contain Doppler and micro-Doppler signals. Time-frequency (TF) analysis of micro-Doppler signals can discern subtle variations in the motion by precisely revealing the velocity components of various moving body parts. We consider radar for activity monitoring using a TF-based machine learning approach that exploits both temporal and spatial degrees of freedom. The proposed approach captures different human motion representations more vividly in joint-variable data domains, achieved through beamforming at the receiver. The radar data are collected from real-time measurements at 77 GHz with four receive antennas, and the micro-Doppler signatures are then analyzed by a machine learning algorithm to classify human walking motions. We present the performance of the proposed multi-antenna approach in separating and classifying two persons walking close to each other in opposite directions.
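
The two signal-processing ingredients named above, receive beamforming and time-frequency analysis, can be sketched as follows. The sketch assumes a four-element uniform linear array with half-wavelength spacing and a conventional delay-and-sum beamformer, and computes a rectangular-window spectrogram with a direct DFT (adequate for short demos only). The array geometry, window choice and function names are assumptions; the paper's processing chain and classifier are not shown.

```python
import cmath
import math

def steering_vector(theta_deg, n_antennas=4, spacing=0.5):
    """ULA steering vector; `spacing` is in wavelengths (half-wavelength assumed)."""
    phase = 2 * math.pi * spacing * math.sin(math.radians(theta_deg))
    return [cmath.exp(1j * phase * k) for k in range(n_antennas)]

def beamform(snapshot, theta_deg):
    """Delay-and-sum: multiply by the conjugate steering phases and average."""
    a = steering_vector(theta_deg, len(snapshot))
    return sum(x * w.conjugate() for x, w in zip(snapshot, a)) / len(snapshot)

def angle_scan(snapshot, angles):
    """Beamformer output magnitude for each candidate angle (degrees)."""
    return {ang: abs(beamform(snapshot, ang)) for ang in angles}

def spectrogram(signal, window, hop):
    """Magnitude STFT with a rectangular window, computed by a direct DFT per frame."""
    frames = []
    for start in range(0, len(signal) - window + 1, hop):
        seg = signal[start:start + window]
        frames.append([
            abs(sum(seg[n] * cmath.exp(-2j * math.pi * k * n / window) for n in range(window)))
            for k in range(window)
        ])
    return frames
```

Steering the array toward one walker before computing the spectrogram attenuates returns from other directions, which is the intuition behind separating two people who walk close to each other. A real micro-Doppler pipeline would use a tapered window and an FFT instead of the direct DFT shown here.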

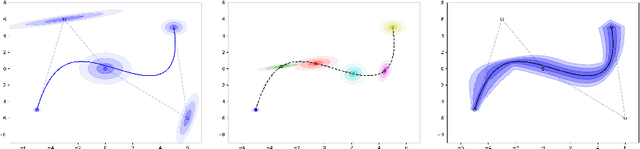

Modeling continuous-time stochastic processes using $\mathcal{N}$-Curve mixtures

Aug 21, 2019

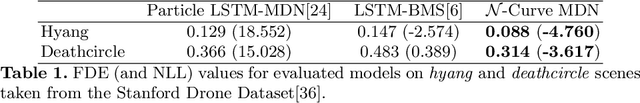

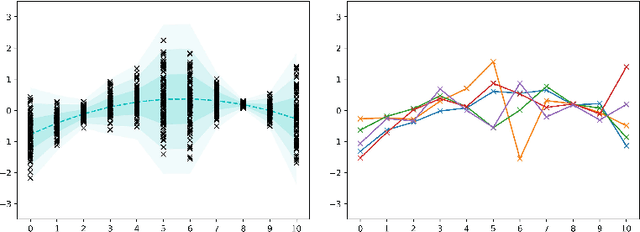

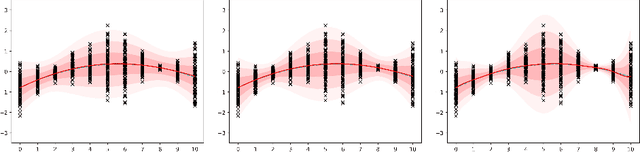

Representations of sequential data are commonly based on the assumption that observed sequences are realizations of an unknown underlying stochastic process, where the learning problem includes determining the model parameters. In this context, the model must be able to capture the multi-modal nature of the data without blurring between modes. This property is essential for applications such as trajectory prediction or human motion modeling. Towards this end, a neural network model for continuous-time stochastic processes usable for sequence prediction is proposed. The model is based on Mixture Density Networks using Bézier curves with Gaussian random variables as control points (abbrev.: $\mathcal{N}$-Curves). Key advantages of the model include the ability to generate smooth multi-mode predictions in a single inference step, which reduces the need for the Monte Carlo simulation required by many multi-step prediction models based on state-of-the-art neural networks. Essential properties of the proposed approach are illustrated by several toy examples and the task of multi-step sequence prediction. Further, the model performance is evaluated on two real-world use cases, i.e., human trajectory prediction and human motion modeling, where it outperforms several state-of-the-art models.
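
Why a single inference step suffices can be seen from a standard property of Bézier curves: if the control points are independent Gaussians with means $\mu_i$ and covariances $\Sigma_i$, then the curve point at parameter $t$ is Gaussian with mean $\sum_i b_{i,n}(t)\,\mu_i$ and covariance $\sum_i b_{i,n}(t)^2\,\Sigma_i$, where $b_{i,n}$ are the Bernstein polynomials. The sketch below evaluates this closed form and a mixture of such curves; the independence assumption, the mixture representation and all names are illustrative assumptions, not the paper's network.

```python
from math import comb

def bernstein(n, i, t):
    """Bernstein basis polynomial b_{i,n}(t)."""
    return comb(n, i) * t ** i * (1 - t) ** (n - i)

def n_curve_point(means, covs, t):
    """Mean and covariance of an N-Curve at parameter t in [0, 1].

    means: list of control-point means (each a list of length d).
    covs: list of d x d covariance matrices, one per control point.
    Assumes mutually independent Gaussian control points, so the curve point is
    Gaussian with mean sum_i b_i(t) mu_i and covariance sum_i b_i(t)^2 Sigma_i.
    """
    n = len(means) - 1
    d = len(means[0])
    mu = [0.0] * d
    sigma = [[0.0] * d for _ in range(d)]
    for i, (m, c) in enumerate(zip(means, covs)):
        b = bernstein(n, i, t)
        for r in range(d):
            mu[r] += b * m[r]
            for s in range(d):
                sigma[r][s] += b * b * c[r][s]
    return mu, sigma

def mixture_at(components, t):
    """Evaluate a mixture of N-Curves at t: one (weight, mean, cov) per component."""
    return [(w, *n_curve_point(means, covs, t)) for w, means, covs in components]
```

Evaluating `mixture_at` over a grid of `t` values yields a Gaussian mixture per time step for the whole prediction horizon, with no sampling or recursive rollout.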