"Time": models, code, and papers

Linear-Time Constituency Parsing with RNNs and Dynamic Programming

May 21, 2018

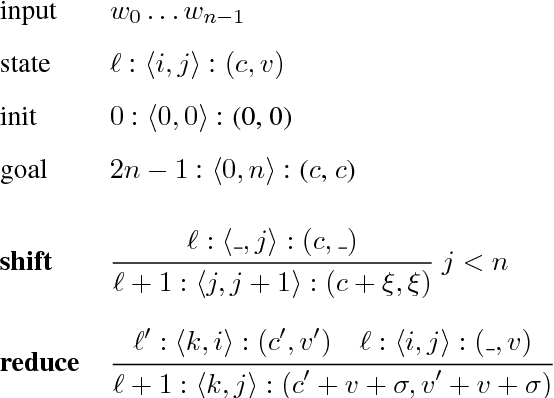

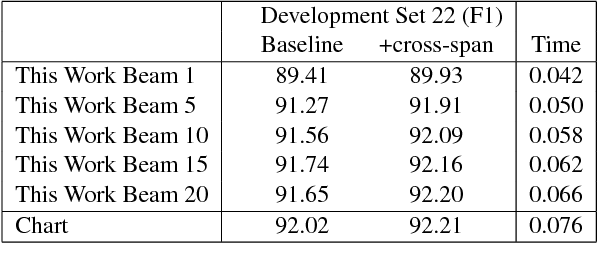

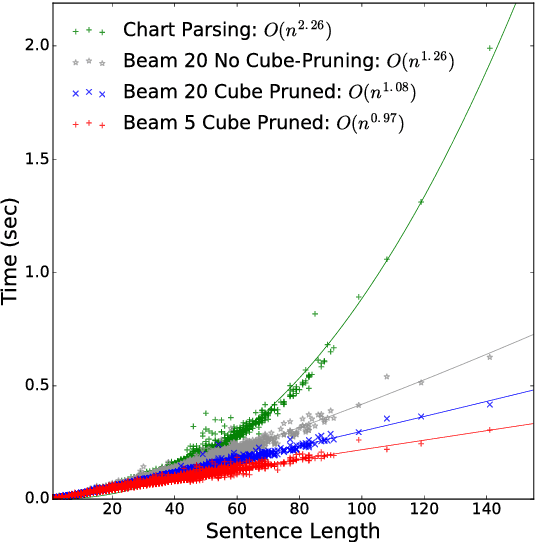

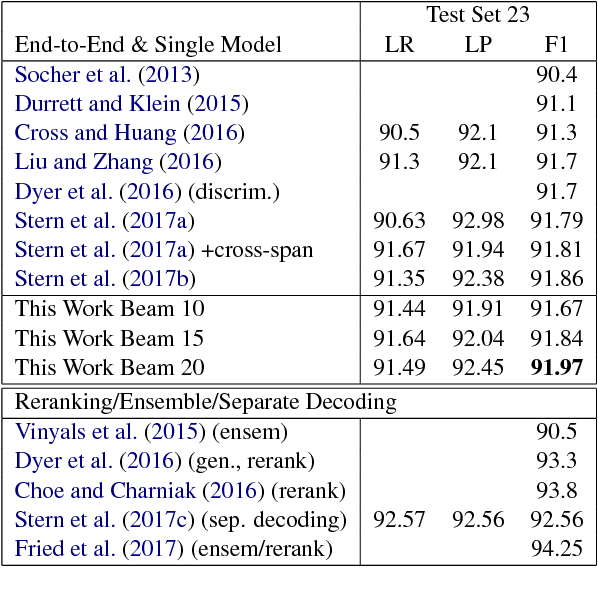

Recently, span-based constituency parsing has achieved competitive accuracies with extremely simple models by using bidirectional RNNs to model "spans". However, the minimal span parser of Stern et al. (2017a), which holds the current state-of-the-art accuracy, is a chart parser running in cubic time, $O(n^3)$, which is too slow for longer sentences and for applications beyond sentence boundaries such as end-to-end discourse parsing and joint sentence boundary detection and parsing. We propose a linear-time constituency parser with RNNs and dynamic programming using a graph-structured stack and beam search, which runs in time $O(n b^2)$, where $b$ is the beam size. We further speed this up to $O(n b\log b)$ by integrating cube pruning. Compared with chart parsing baselines, this linear-time parser is substantially faster for long sentences on the Penn Treebank and orders of magnitude faster for discourse parsing, and achieves the highest F1 accuracy on the Penn Treebank among single-model end-to-end systems.
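To make the complexity claims concrete, the following sketch compares idealized step counts for the three bounds quoted in the abstract. It is only an illustration: constant factors are ignored, the function names are hypothetical, and the beam size of 20 is an assumed value, not one taken from the paper.

```python
import math

def chart_steps(n: int) -> int:
    """Idealized step count for a cubic-time chart parser, O(n^3)."""
    return n ** 3

def beam_steps(n: int, b: int) -> int:
    """Idealized step count for beam search with beam size b, O(n b^2)."""
    return n * b * b

def cube_pruned_steps(n: int, b: int) -> float:
    """Idealized step count with cube pruning, O(n b log b)."""
    return n * b * math.log2(b)

if __name__ == "__main__":
    b = 20  # assumed beam size, for illustration only
    for n in (20, 100, 1000):
        print(n, chart_steps(n), beam_steps(n, b), round(cube_pruned_steps(n, b)))
```

Under these idealized counts, $n^3$ exceeds $n b^2$ exactly when $n > b$, so the gap widens with input length, which is consistent with the abstract's observation that the benefit is largest for long sentences and discourse-level inputs.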

Learning to Confuse: Generating Training Time Adversarial Data with Auto-Encoder

May 22, 2019

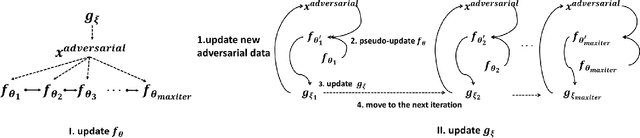

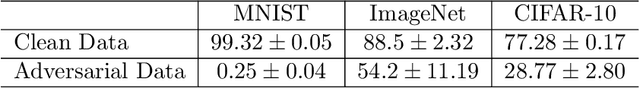

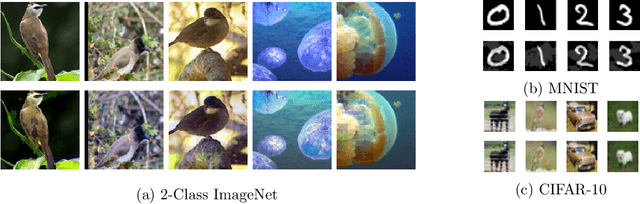

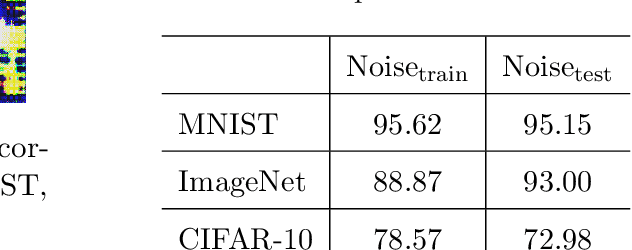

In this work, we consider a challenging training-time attack that modifies training data with bounded perturbations, aiming to manipulate the behavior (either targeted or non-targeted) of any classifier trained on that data when it faces clean samples at test time. To achieve this, we propose to use an auto-encoder-like network to generate the perturbation on the training data, paired with a differentiable system acting as the imaginary victim classifier. The perturbation generator learns to update its weights by watching the training procedure of the imaginary classifier in order to produce the most harmful and imperceptible noise, which in turn leads to the lowest generalization power for the victim classifier. This can be formulated as a non-linear equality constrained optimization problem. Unlike with GANs, solving such a problem is computationally challenging, so we propose a simple yet effective procedure that decouples the alternating updates of the two networks for stability. The method proposed in this paper can be easily extended to the label-specific setting, where the attacker can manipulate the predictions of the victim classifiers according to some predefined rules rather than only making wrong predictions. Experiments on various datasets, including CIFAR-10 and a reduced version of ImageNet, confirm the effectiveness of the proposed method, and empirical results show that such bounded perturbations have good transferability on image data regardless of which classifier the victim is actually using.
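As a rough illustration of what "bounded perturbation" can mean in practice, the sketch below clips per-pixel noise to a fixed budget before adding it to an image. The abstract does not state which norm or budget is used, so the L-infinity bound, the `eps` parameter, the [0, 1] pixel range, and the function name are all assumptions made for this example, not the authors' implementation.

```python
def apply_bounded_perturbation(pixels, noise, eps):
    """Add noise to pixels with each noise value clipped to [-eps, eps].

    Assumes pixel values in [0, 1] and an L-infinity budget `eps`
    (both are assumptions of this sketch).
    """
    perturbed = []
    for x, d in zip(pixels, noise):
        d = max(-eps, min(eps, d))                    # project noise into the budget
        perturbed.append(max(0.0, min(1.0, x + d)))   # keep a valid pixel value
    return perturbed

if __name__ == "__main__":
    clean = [0.5, 0.0, 1.0]
    raw_noise = [0.2, -0.3, 0.01]  # stand-in for a generator's output
    print(apply_bounded_perturbation(clean, raw_noise, eps=0.1))
```

In the setting the abstract describes, the noise would come from the trained generator network rather than a fixed list; the clipping step is what would keep it small enough to be hard to notice.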

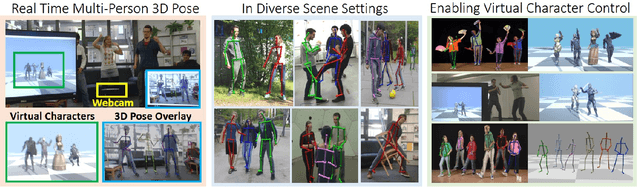

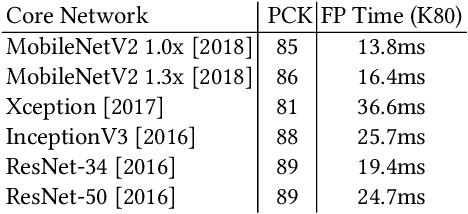

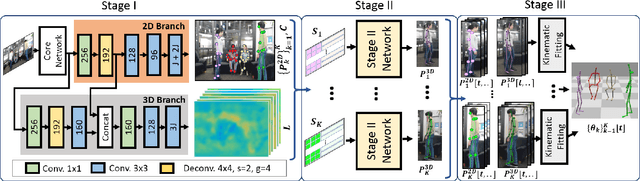

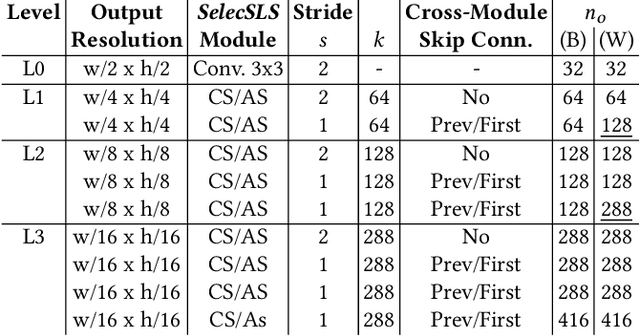

XNect: Real-time Multi-person 3D Human Pose Estimation with a Single RGB Camera

Jul 01, 2019

We present a real-time approach for multi-person 3D motion capture at over 30 fps using a single RGB camera. It operates in generic scenes and is robust to difficult occlusions both by other people and objects. Our method operates in subsequent stages. The first stage is a convolutional neural network (CNN) that estimates 2D and 3D pose features along with identity assignments for all visible joints of all individuals. We contribute a new architecture for this CNN, called SelecSLS Net, that uses novel selective long and short range skip connections to improve the information flow allowing for a drastically faster network without compromising accuracy. In the second stage, a fully-connected neural network turns the possibly partial (on account of occlusion) 2D pose and 3D pose features for each subject into a complete 3D pose estimate per individual. The third stage applies space-time skeletal model fitting to the predicted 2D and 3D pose per subject to further reconcile the 2D and 3D pose, and enforce temporal coherence. Our method returns the full skeletal pose in joint angles for each subject. This is a further key distinction from previous work that neither extracted global body positions nor joint angle results of a coherent skeleton in real time for multi-person scenes. The proposed system runs on consumer hardware at a previously unseen speed of more than 30 fps given 512x320 images as input while achieving state-of-the-art accuracy, which we will demonstrate on a range of challenging real-world scenes.

Text-Aware Predictive Monitoring of Business Processes

Apr 20, 2021

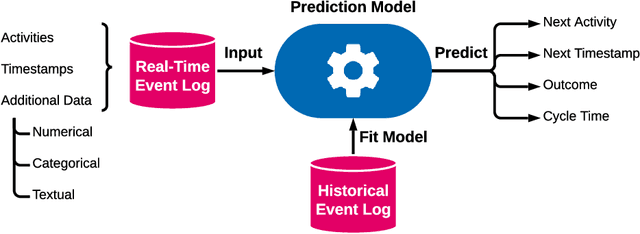

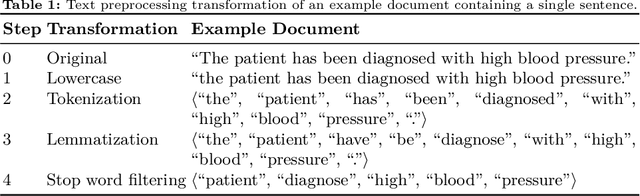

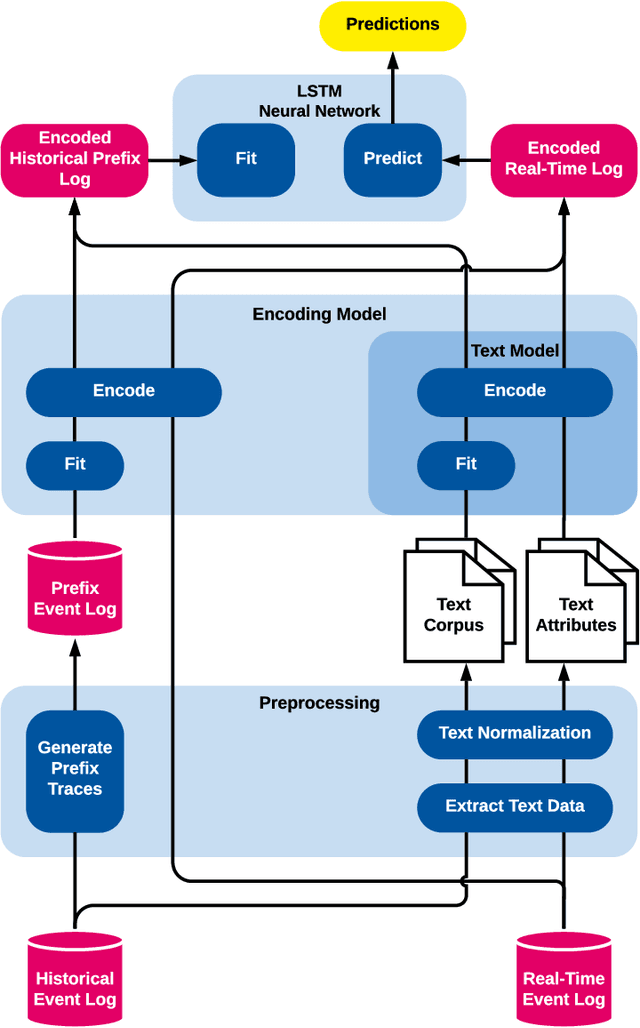

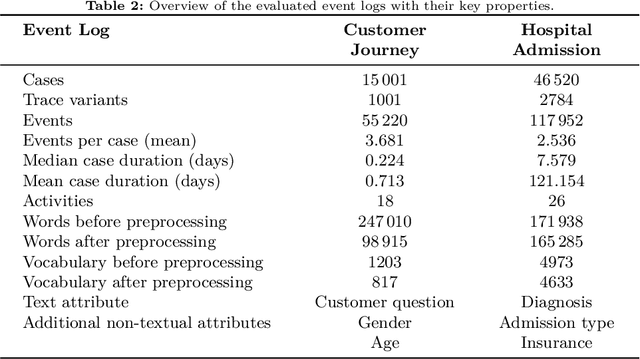

The real-time prediction of business processes using historical event data is an important capability of modern business process monitoring systems. Existing process prediction methods are able to also exploit the data perspective of recorded events, in addition to the control-flow perspective. However, while well-structured numerical or categorical attributes are considered in many prediction techniques, almost no technique is able to utilize text documents written in natural language, which can hold information critical to the prediction task. In this paper, we illustrate the design, implementation, and evaluation of a novel text-aware process prediction model based on Long Short-Term Memory (LSTM) neural networks and natural language models. The proposed model can take categorical, numerical and textual attributes in event data into account to predict the activity and timestamp of the next event, the outcome, and the cycle time of a running process instance. Experiments show that the text-aware model is able to outperform state-of-the-art process prediction methods on simulated and real-world event logs containing textual data.

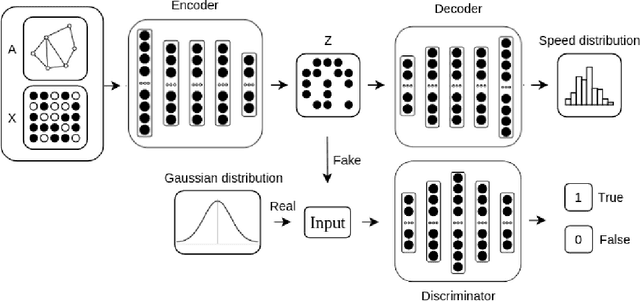

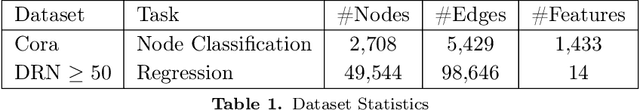

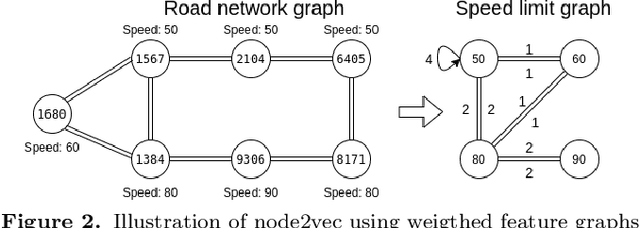

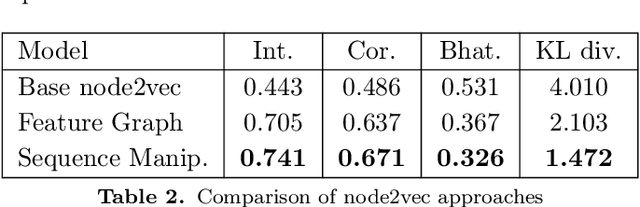

Partitioned Graph Convolution Using Adversarial and Regression Networks for Road Travel Speed Prediction

Feb 26, 2021

Access to quality travel time information for roads in a road network has become increasingly important with the rising demand for real-time travel time estimation for paths within road networks. In the context of the Danish road network (DRN) dataset used in this paper, the data coverage is sparse and skewed towards arterial roads, with a coverage of 23.88% across 850,980 road segments, which makes travel time estimation difficult. Existing solutions for graph-based data processing often neglect the size of the graph, which is an apparent problem for road networks with a large number of connected road segments. To this end, we propose a framework for predicting road segment travel speed histograms for dataless edges, based on a latent representation generated by an adversarially regularized convolutional network. We apply a partitioning algorithm to divide the graph into dense subgraphs, and then train a model for each subgraph to predict speed histograms for the nodes. The framework achieves an accuracy of 71.5% intersection and 78.5% correlation on predicting travel speed histograms using the DRN dataset. Furthermore, experiments show that partitioning the dataset into clusters increases the performance of the framework. Specifically, partitioning the road network dataset into 100 clusters, with approximately 500 road segments in each cluster, achieves better performance than using 10 or 20 clusters.
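The abstract reports "intersection" and "correlation" scores between predicted and observed speed histograms without defining them. The sketch below uses two common definitions, histogram intersection over normalized bins and Pearson correlation across bins; these definitions and the example bin counts are assumptions, and the paper may compute its metrics differently.

```python
import math

def normalize(hist):
    """Scale bin counts so they sum to 1."""
    total = sum(hist)
    return [h / total for h in hist]

def histogram_intersection(p, q):
    """Sum of bin-wise minima; 1.0 means identical normalized histograms."""
    return sum(min(a, b) for a, b in zip(p, q))

def histogram_correlation(p, q):
    """Pearson correlation across bins (undefined if either histogram is flat)."""
    mean_p, mean_q = sum(p) / len(p), sum(q) / len(q)
    cov = sum((a - mean_p) * (b - mean_q) for a, b in zip(p, q))
    var_p = sum((a - mean_p) ** 2 for a in p)
    var_q = sum((b - mean_q) ** 2 for b in q)
    return cov / math.sqrt(var_p * var_q)

if __name__ == "__main__":
    predicted = normalize([1, 2, 5, 2])  # hypothetical speed-bin counts
    observed = normalize([2, 2, 4, 2])
    print(histogram_intersection(predicted, observed))
    print(histogram_correlation(predicted, observed))
```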

Fine-Grained Classroom Activity Detection from Audio with Neural Networks

Jul 29, 2021

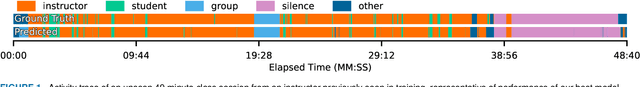

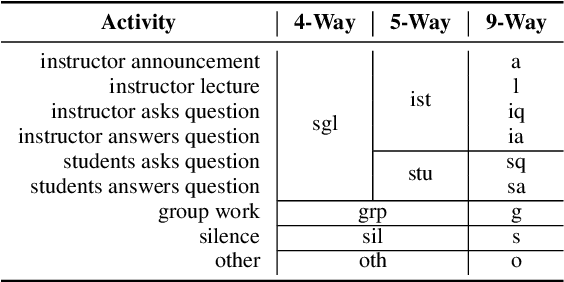

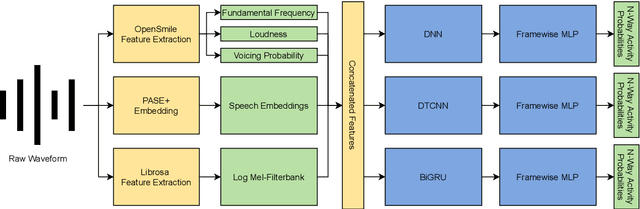

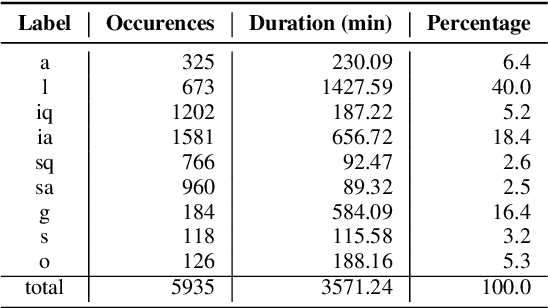

Instructors are increasingly incorporating student-centered learning techniques in their classrooms to improve learning outcomes. In addition to lecture, these class sessions involve forms of individual and group work, and greater rates of student-instructor interaction. Quantifying classroom activity is a key element of accelerating the evaluation and refinement of innovative teaching practices, but manual annotation does not scale. In this manuscript, we present advances to the young application area of automatic classroom activity detection from audio. Using a university classroom corpus with nine activity labels (e.g., "lecture," "group work," "student question"), we propose and evaluate deep fully connected, convolutional, and recurrent neural network architectures, comparing the performance of mel-filterbank, OpenSmile, and self-supervised acoustic features. We compare 9-way classification performance with 5-way and 4-way simplifications of the task and assess two types of generalization: (1) new class sessions from previously seen instructors, and (2) previously unseen instructors. We obtain strong results on the new fine-grained task and state-of-the-art on the 4-way task: our best model obtains frame-level error rates of 6.2%, 7.7% and 28.0% when generalizing to unseen instructors for the 4-way, 5-way, and 9-way classification tasks, respectively (relative reductions of 35.4%, 48.3% and 21.6% over a strong baseline). When estimating the aggregate time spent on classroom activities, our average root mean squared error is 1.64 minutes per class session, a 54.9% relative reduction over the baseline.
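The relative reductions quoted above imply approximate baseline values, which the abstract does not state directly. The sketch below back-computes them from the reported figures, assuming relative reduction is defined as (baseline - ours) / baseline; because the inputs are rounded, the results are estimates only.

```python
def implied_baseline(value: float, relative_reduction: float) -> float:
    """Baseline value implied by a reported value and its relative reduction.

    Assumes relative_reduction = (baseline - value) / baseline.
    """
    return value / (1.0 - relative_reduction)

# (reported value, relative reduction) pairs taken from the abstract
reported = {
    "4-way error (%)": (6.2, 0.354),
    "5-way error (%)": (7.7, 0.483),
    "9-way error (%)": (28.0, 0.216),
    "RMSE (minutes)": (1.64, 0.549),
}

if __name__ == "__main__":
    for name, (value, reduction) in reported.items():
        print(f"{name}: ours={value}, implied baseline={implied_baseline(value, reduction):.2f}")
```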

A Database for Research on Detection and Enhancement of Speech Transmitted over HF links

Jun 04, 2021

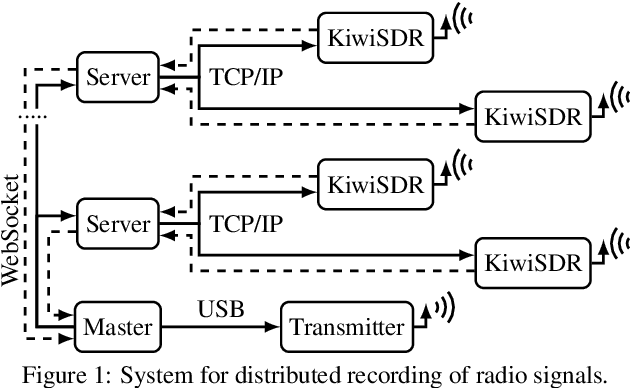

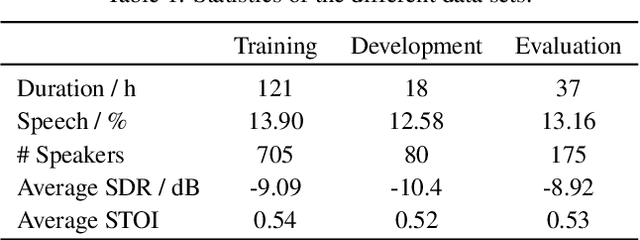

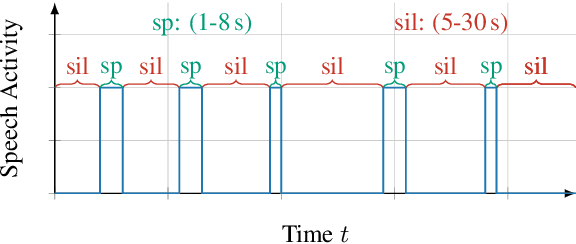

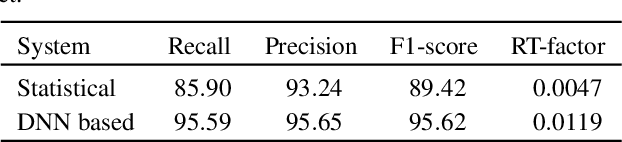

In this paper we present an open database for the development of detection and enhancement algorithms of speech transmitted over HF radio channels. It consists of audio samples recorded by various receivers at different locations across Europe, all monitoring the same single-sideband modulated transmission from a base station in Paderborn, Germany. Transmitted and received speech signals are precisely time aligned to offer parallel data for supervised training of deep learning based detection and enhancement algorithms. For the task of speech activity detection two exemplary baseline systems are presented, one based on statistical methods employing a multi-stage Wiener filter with minimum statistics noise floor estimation, and the other relying on a deep learning approach.

Going Deeper into Semi-supervised Person Re-identification

Jul 24, 2021

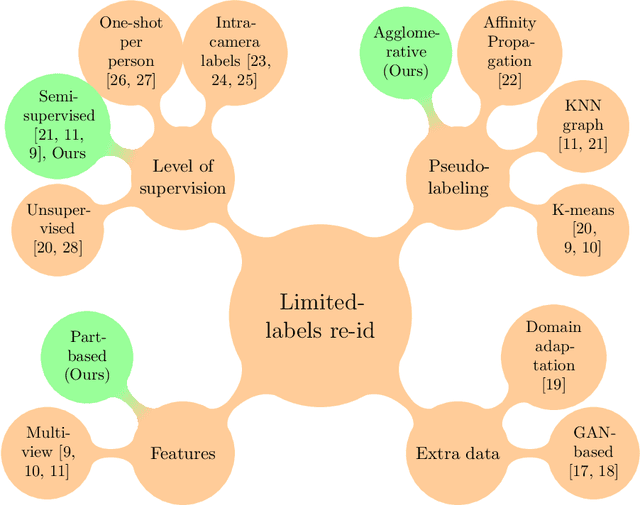

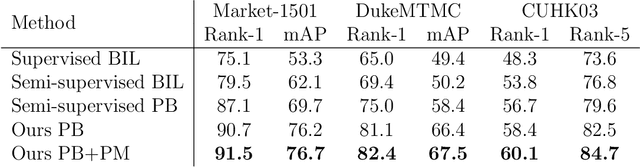

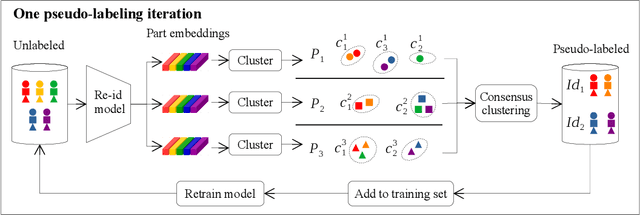

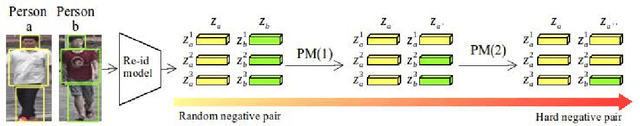

Person re-identification is the challenging task of identifying a person across different camera views. Training a convolutional neural network (CNN) for this task requires annotating a large dataset, and hence, it involves the time-consuming manual matching of people across cameras. To reduce the need for labeled data, we focus on a semi-supervised approach that requires only a subset of the training data to be labeled. We conduct a comprehensive survey in the area of person re-identification with limited labels. Existing works in this realm are limited in the sense that they utilize features from multiple CNNs and require the number of identities in the unlabeled data to be known. To overcome these limitations, we propose to employ part-based features from a single CNN without requiring the knowledge of the label space (i.e., the number of identities). This makes our approach more suitable for practical scenarios, and it significantly reduces the need for computational resources. We also propose a PartMixUp loss that improves the discriminative ability of learned part-based features for pseudo-labeling in semi-supervised settings. Our method outperforms the state-of-the-art results on three large-scale person re-id datasets and achieves the same level of performance as fully supervised methods with only one-third of labeled identities.

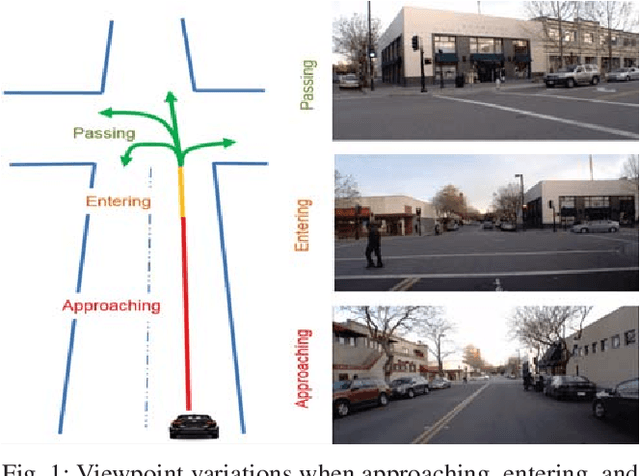

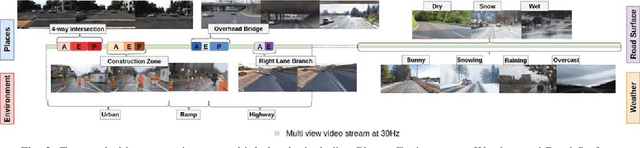

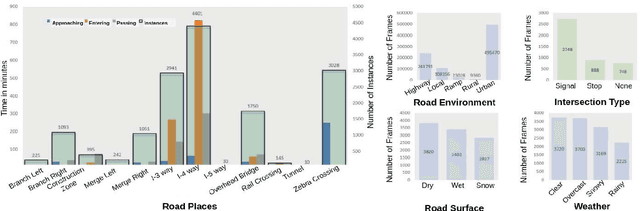

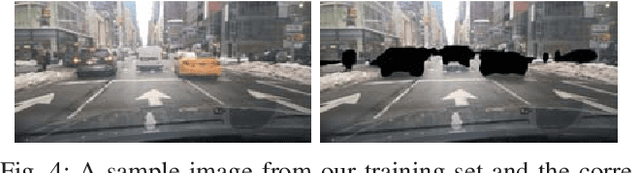

Dynamic Traffic Scene Classification with Space-Time Coherence

May 29, 2019

This paper examines the problem of dynamic traffic scene classification under space-time variations in viewpoint that arise from video captured on-board a moving vehicle. Solutions to this problem are important for realization of effective driving assistance technologies required to interpret or predict road user behavior. Currently, dynamic traffic scene classification has not been adequately addressed due to a lack of benchmark datasets that consider spatiotemporal evolution of traffic scenes resulting from a vehicle's ego-motion. This paper has three main contributions. First, an annotated dataset is released to enable dynamic scene classification that includes 80 hours of diverse high quality driving video data clips collected in the San Francisco Bay area. The dataset includes temporal annotations for road places, road types, weather, and road surface conditions. Second, we introduce novel and baseline algorithms that utilize semantic context and temporal nature of the dataset for dynamic classification of road scenes. Finally, we showcase algorithms and experimental results that highlight how extracted features from scene classification serve as strong priors and help with tactical driver behavior understanding. The results show significant improvement from previously reported driving behavior detection baselines in the literature.

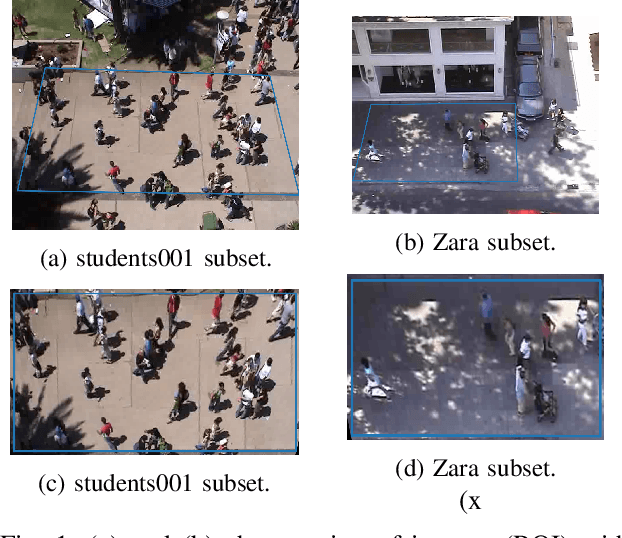

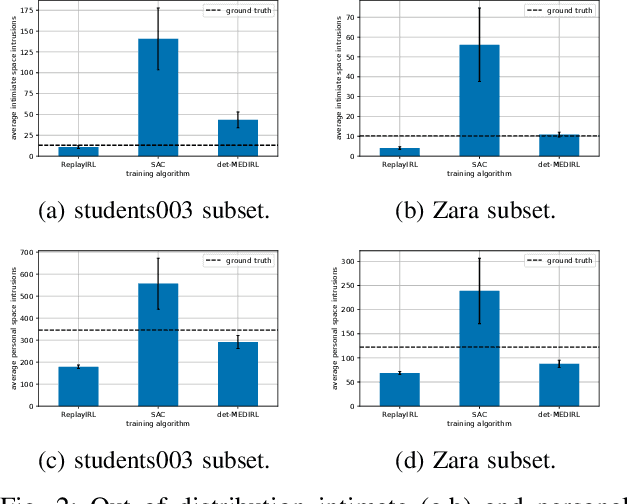

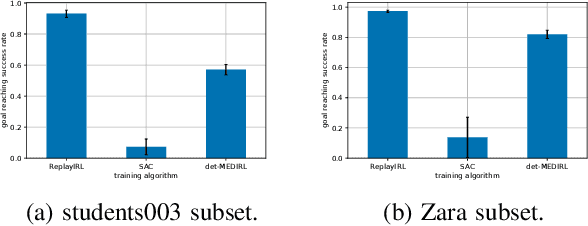

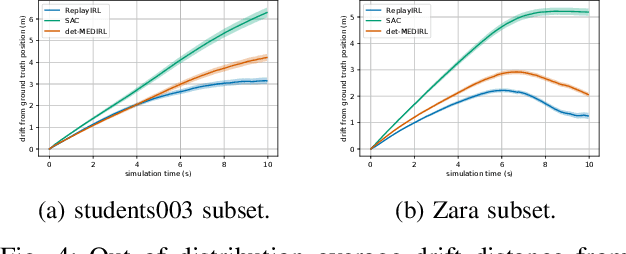

Sample Efficient Social Navigation Using Inverse Reinforcement Learning

Jun 18, 2021

In this paper, we present an algorithm to efficiently learn socially-compliant navigation policies from observations of human trajectories. As mobile robots come to inhabit and traffic social spaces, they must account for social cues and behave in a socially compliant manner. We focus on learning such cues from examples. We describe an inverse reinforcement learning based algorithm which learns from human trajectory observations without knowing their specific actions. We increase the sample-efficiency of our approach over alternative methods by leveraging the notion of a replay buffer (found in many off-policy reinforcement learning methods) to eliminate the additional sample complexity associated with inverse reinforcement learning. We evaluate our method by training agents using publicly available pedestrian motion data sets and compare it to related methods. We show that our approach yields better performance while also decreasing training time and sample complexity.
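For readers unfamiliar with the replay buffer mentioned above, the following is a minimal generic sketch of the data structure as it appears in many off-policy reinforcement learning methods. It is not the authors' implementation; the class name, the fixed capacity, and uniform sampling are assumptions of this example.

```python
import random
from collections import deque

class ReplayBuffer:
    """Fixed-capacity store of past transitions with uniform random sampling."""

    def __init__(self, capacity: int):
        # A deque with maxlen discards the oldest item once capacity is reached.
        self.buffer = deque(maxlen=capacity)

    def add(self, transition) -> None:
        self.buffer.append(transition)

    def sample(self, batch_size: int):
        # Sample without replacement, capped at the number of stored items.
        k = min(batch_size, len(self.buffer))
        return random.sample(list(self.buffer), k)

    def __len__(self) -> int:
        return len(self.buffer)

if __name__ == "__main__":
    buf = ReplayBuffer(capacity=3)
    for step in range(5):
        buf.add(("state", step))  # placeholder transitions
    print(len(buf), buf.sample(2))
```

Reusing stored transitions across updates is what lets such methods learn from fewer fresh environment interactions, which matches the sample-efficiency motivation given in the abstract.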
